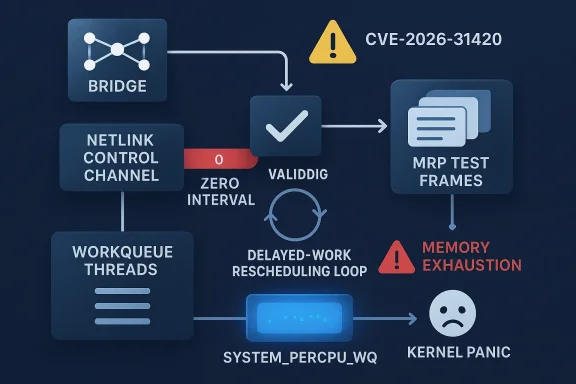

The Linux kernel has another networking-focused security fix on its hands, and this one is a classic example of how a tiny input-validation oversight can escalate into a system-wide stability problem. CVE-2026-31420 affects the bridge MRP path, where a zero test interval supplied through netlink can drive delayed work into a tight rescheduling loop, rapidly allocating and transmitting MRP test frames until memory is exhausted and the kernel panics. The upstream fix is straightforward: reject zero at the attribute-parsing layer with NLA_POLICY_MIN(NLA_U32, 1) so the invalid value never reaches the workqueue logic in the first place. That makes the bug look simple on the surface, but the operational consequences are anything but simple, especially on hosts that rely on bridge-heavy networking configurations.

Overview

CVE-2026-31420 sits squarely in the long-running category of Linux kernel vulnerabilities where the issue is not an exotic exploit primitive but a boundary failure: the kernel trusted a user-supplied value that should have been rejected earlier. In this case, the affected functions br_mrp_start_test() and br_mrp_start_in_test() accept interval values from netlink without validating that they are nonzero. Once a zero interval slips through, usecs_to_jiffies(0) returns zero, and the delayed work routines keep rescheduling themselves with no delay, creating a continuous loop on system_percpu_wq. The result is a flood of MRP test traffic, memory pressure, and, eventually, an out-of-memory panic.

That pattern is important because it shows how kernel bugs often begin as validation mistakes and end as availability failures. The kernel is not failing because the MRP logic is conceptually broken; it is failing because a control-plane parameter crosses a trust boundary without enough guardrails. In production, that distinction matters. Administrators do not care whether the code path is elegant if the machine can be pushed into a panic by an invalid configuration. They care that the bad state is prevented, not merely detected after the fact.

The fix is also notable because it lands at the same layer where the bug enters: netlink parsing. By using NLA_POLICY_MIN(NLA_U32, 1) for both IFLA_BRIDGE_MRP_START_TEST_INTERVAL and IFLA_BRIDGE_MRP_START_IN_TEST_INTERVAL, the kernel rejects zero before any workqueue scheduling happens. That is the right kind of fix for this class of problem because it removes ambiguity from the API contract itself. A value of zero was never a meaningful interval in this context, so the safest move is to make it invalid everywhere rather than try to compensate later in the execution path.

This is also consistent with a broader design pattern in Linux networking code. When the kernel already has examples of range-constrained netlink attributes in adjacent bridge subsystems, the safest choice is to keep the validation rules aligned. That reduces surprise for userspace tooling and makes the subsystem easier to reason about during review. In other words, the fix is not only a security patch; it is also a cleanup of the interface contract.
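
The idea of a minimum-value policy applied at parse time can be illustrated with a small userspace model. This is a rough sketch in Python, not kernel code: the two attribute names come from the advisory, while U32MinPolicy, POLICY, and parse_attrs are hypothetical names invented for the illustration, and the real netlink policy machinery is considerably richer.

```python
from __future__ import annotations

from dataclasses import dataclass

# Attribute names are taken from the advisory; everything else is a toy model.
ATTR_TEST_INTERVAL = "IFLA_BRIDGE_MRP_START_TEST_INTERVAL"
ATTR_IN_TEST_INTERVAL = "IFLA_BRIDGE_MRP_START_IN_TEST_INTERVAL"

U32_MAX = 2**32 - 1


@dataclass(frozen=True)
class U32MinPolicy:
    """Hypothetical stand-in for the idea behind NLA_POLICY_MIN(NLA_U32, 1)."""

    minimum: int

    def validate(self, value: int) -> None:
        if not 0 <= value <= U32_MAX:
            raise ValueError(f"{value} does not fit in a u32")
        if value < self.minimum:
            raise ValueError(f"{value} is below the minimum of {self.minimum}")


# One policy entry per attribute, so the rule lives next to the attribute name.
POLICY = {
    ATTR_TEST_INTERVAL: U32MinPolicy(minimum=1),
    ATTR_IN_TEST_INTERVAL: U32MinPolicy(minimum=1),
}


def parse_attrs(attrs: dict[str, int]) -> dict[str, int]:
    """Validate every known attribute before any handler sees the values."""
    for name, value in attrs.items():
        policy = POLICY.get(name)
        if policy is not None:
            policy.validate(value)
    return attrs
```

In this model, parse_attrs({ATTR_TEST_INTERVAL: 0}) raises before any scheduling logic could run, which mirrors the point that the rejection happens at the border and not inside the work function.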

Why this bug matters

The vulnerability is serious because it attacks the kernel’s ability to pace its own background work. A tight rescheduling loop in delayed work is not just inefficient; it is a resource-amplification mechanism. Once the workqueue begins spinning at maximum rate, the system can spend itself into the ground through its own housekeeping path, which is exactly the kind of failure operators dread because it can look like a random panic until the root cause is traced.

That is especially true in network control paths, where a misconfiguration can be made by automation, orchestration, or an otherwise routine administrative action. The bug is not asking for packet crafting or local privilege escalation. It is asking for a malformed configuration value, which means the exposure can be accidental, scripted, or triggered by management tooling. In practical terms, that broadens the risk surface well beyond a narrow exploit scenario.

What the fix actually changes

The technical change is small, but the effect is significant. By enforcing a minimum value of one on the interval attributes, the kernel ensures that the delayed work never gets a zero-delay schedule. That closes the loop before it can spin, and it does so without changing how valid MRP intervals are handled. In kernel maintenance, that is the sweet spot: prevent the crash, preserve valid behavior, and keep the patch narrow enough to backport cleanly.

Background

Media Redundancy Protocol, or MRP, is used in network topologies where resiliency matters. On bridge-based systems, MRP-related test traffic helps validate that connectivity and failover behavior remain healthy. That means the code sits in an area of the kernel where correctness and timing both matter. If the timing logic goes wrong, the system can end up generating the very traffic it is supposed to regulate in a controlled way. That makes the bug more than a theoretical oddity; it touches the operational core of bridged networking.

The Linux kernel has a long history of turning what looks like a modest validation problem into a CVE because the kernel is the last line of defense between user input and hardware-facing behavior. Netlink is particularly sensitive because it is a user-visible control channel into the kernel. If a malformed attribute can reach a subsystem that schedules work, spawns traffic, or manages state transitions, then a tiny missing check can become a serious availability defect.

It is also worth noting that the CVE record was still awaiting NVD enrichment at the time of publication. That means defenders do not yet have the comfort of a finalized CVSS score from NIST, which is common early in the disclosure cycle for kernel bugs. In practice, that puts more weight on the technical description and the fix itself. When the patch is precise and the failure mode is obvious, the absence of a published score should not be mistaken for lack of urgency.

Kernel CVEs often feel deceptively mundane because they are described in terms of specific functions, attribute names, and helper calls. But that language matters. It tells operators where the trust boundary is, what path is reachable from userspace, and which part of the subsystem has been hardened. In this case, the boundary is the netlink parser, the failure point is delayed work scheduling, and the correction is to reject invalid input earlier. That is a coherent, understandable security story, which is exactly what makes the issue actionable for administrators.

Netlink as the control plane

Netlink is one of those kernel interfaces that rarely gets attention until something goes wrong. It is the control plane for a lot of Linux networking activity, which means bugs there can affect tools, daemons, scripts, and orchestration systems. When a malformed attribute enters that path, the damage is often indirect: a crash, a hang, a memory leak, or a flood of work that the kernel never intended to create.

Why zero is dangerous here

A zero interval is not merely a small value. In this code path, it is effectively a no-delay trigger. Once usecs_to_jiffies(0) collapses to zero, the delayed work mechanism no longer throttles itself, and the reschedule loop becomes effectively self-sustaining. That is why the fix is a minimum-value policy rather than a later check in the work function. The safest place to reject an impossible state is at the point of entry.

How the Failure Unfolds

The failure chain is easy to follow once the code path is described. A userspace process submits a bridge MRP test interval over netlink. The kernel accepts the value, passes it into the test-start logic, and then converts the interval to jiffies. If the value is zero, the resulting delay is zero as well. The delayed work then keeps queueing itself immediately, which creates a rapid-fire execution pattern on system_percpu_wq.

That rapid loop is the real danger. It does not just wake up frequently; it allocates and transmits MRP test frames at maximum rate. Once those frames are being generated continuously, memory consumption rises sharply. Eventually, the machine runs out of memory and the kernel can deadlock in the out-of-memory path, which turns a logic error into a full system panic. The exact wording of the advisory matters here because it highlights that this is not a cosmetic glitch but a catastrophic resource exhaustion condition.
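
The conversion step is where zero stops being "a small interval" and becomes "no delay at all". The following Python sketch models that rounding in userspace. It is a simplification made for illustration: it assumes a tick rate that divides one second evenly (the default of 250 here is an assumption, not a value from the advisory) and it ignores the clamping the real usecs_to_jiffies() applies to very large inputs.

```python
USEC_PER_SEC = 1_000_000


def usecs_to_jiffies(usecs: int, hz: int = 250) -> int:
    """Round a microsecond interval up to whole timer ticks (simplified model).

    Assumes hz divides USEC_PER_SEC evenly; hz=250 is an illustrative choice.
    """
    usecs_per_jiffy = USEC_PER_SEC // hz
    # Ceiling division: any nonzero interval becomes at least one tick.
    return (usecs + usecs_per_jiffy - 1) // usecs_per_jiffy
```

Under these assumptions, usecs_to_jiffies(1) is 1 and usecs_to_jiffies(20_000) is 5, so every nonzero interval yields a real delay. Only usecs_to_jiffies(0) yields 0, which is why a minimum of one at the parser is enough to keep the work item paced.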

The workqueue loop

Delayed work is meant to defer execution by a defined amount of time. A zero-delay reschedule defeats that purpose. In many kernel subsystems, a zero delay can be harmless if the logic expects it, but here it is pathological because it creates a tight feedback loop. The kernel keeps doing the same work as fast as it can, and the work itself creates more pressure on the system. That is how a configuration bug becomes a self-inflicted denial of service.

Why OOM panic is so severe

An out-of-memory panic in kernel space is more than a failed allocation. It means the system has exhausted the resources it needs to keep operating safely. On a bridge-heavy host, that can take down virtualization traffic, storage paths, management links, or any service riding on top of the machine. Even if the system recovers after reboot, the operational cost is measured in downtime, lost state, and potential failover cascades.

- The bug is triggered by a zero interval supplied over netlink.

- The zero value becomes a zero-delay reschedule in the delayed work path.

- The loop generates MRP frames at maximum rate.

- The result is memory exhaustion and possible kernel panic.

- The fix rejects the value before scheduling can begin.
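
The chain summarized above can be made concrete with a toy simulation of a self-rescheduling work item on a virtual clock. This is a hedged sketch, not a model of the real workqueue: run_test_work and its parameters are hypothetical, one loop iteration stands for "send one test frame, then re-queue", and the max_runs cap exists only so the zero-delay case terminates in the simulation.

```python
from __future__ import annotations


def run_test_work(
    delay_jiffies: int, window_jiffies: int, max_runs: int = 100_000
) -> tuple[int, int]:
    """Run a self-rescheduling work item on a virtual clock.

    Returns (frames_sent, virtual_time_reached).
    """
    now = 0
    frames = 0
    while now < window_jiffies and frames < max_runs:
        frames += 1           # the work item allocates and sends one test frame
        now += delay_jiffies  # then re-queues itself this many ticks later
    return frames, now
```

With a delay of 5 ticks over a 1000-tick window, the model sends 200 frames and stops when the window ends. With a delay of 0, the virtual clock never advances, so the only thing that stops the loop is the artificial cap. On a real system there is no such cap, and the limit becomes available memory.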

Why the Patch Is the Right Shape

One of the better signs in a kernel fix is when the patch addresses the root cause instead of dressing up the symptoms. That is what happens here. The problem is not that the workqueue code is misbehaving on its own; it is that the input contract allows an impossible value. By enforcing NLA_POLICY_MIN(NLA_U32, 1), the kernel stops the bad value at the border. That is cleaner than adding ad hoc checks deeper in the subsystem, and it is far easier to audit later.

This approach also matches a general Linux security preference: validate as early as possible and avoid letting invalid state leak into asynchronous code. Once a bad input reaches a delayed work item, timers, queues, and worker threads all become part of the problem. Preventing that chain is better than trying to unwind it later. In a high-performance kernel, every extra corrective branch is a potential maintenance burden, so early rejection is usually the strongest option.

Validation at the parser layer

Parser-layer validation is valuable because it makes the rule explicit to every caller. Userland tools do not need to guess whether zero is “kind of okay” or “undefined but tolerated.” They receive a clear answer: it is invalid. That clarity reduces the risk of future regressions and makes testing easier because the failure can be caught immediately at configuration time.

Consistency with other bridge checks

The advisory notes that similar bridge subsystems such as br_fdb and br_mst already enforce range constraints on netlink attributes. That is a useful clue because it shows the patch is not inventing a new policy style; it is bringing the MRP code into line with established practice. Consistency across subsystems is not just aesthetic. It lowers the chances that one feature becomes the odd one out and accumulates unsafe assumptions.

- Early rejection is safer than downstream recovery.

- A parser check avoids contaminating asynchronous work.

- The fix preserves the existing valid behavior.

- Consistency with other bridge code improves maintainability.

- Range enforcement makes testing and documentation cleaner.

Enterprise Impact

For enterprises, the key question is not whether the bug is clever. It is whether it can take down a production host. In this case, the answer is yes. Any environment that uses Linux bridge MRP features for redundancy or network resilience should treat the issue as an availability concern first and a technical curiosity second. A kernel panic on a network node can ripple outward into application downtime, storage interruptions, and failover events.

The enterprise risk is amplified by automation. Modern infrastructure often configures networking through orchestration systems, templates, or scripts rather than manual clicks. That makes a bad interval value more plausible, not less, because the same invalid parameter can be replicated across many hosts. A single misconfigured playbook or management profile can turn a local bug into a fleet-wide outage.

Why availability matters more than score

Even though the CVE has not yet received a finalized NVD score, that should not lull defenders into waiting. Availability bugs in privileged kernel paths are high-value operational problems, especially in infrastructure roles. A box that panics under load is not merely “unstable”; it is a liability in the network path. That makes patch adoption a practical necessity rather than a theoretical best practice.

Where exposure is most likely

The most exposed systems are likely to be those using bridge configurations that include MRP features, especially when they are managed centrally. Edge appliances, industrial networks, gateways, and any host that relies on bridging for redundancy should be reviewed first. Desktop systems are less likely to encounter the path, but that does not mean consumer-adjacent Linux devices are immune. Embedded infrastructure often hides in places that are easy to overlook.

- Gateways and bridges are the highest-priority systems.

- Automation can reproduce the bad state across many hosts.

- The issue is an availability risk, not just a crash note.

- Vendor backports matter more than upstream commit visibility.

- Long-lived infrastructure kernels may lag behind the fix.
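
Because automation can replicate one bad value across a fleet, a pre-flight check in the tooling itself is a cheap complement to the kernel fix. The sketch below is purely illustrative: the inventory layout, the host names, and the "_interval_us" key suffix are assumptions made up for this example and do not correspond to any particular orchestration product.

```python
from __future__ import annotations


def find_bad_intervals(
    inventory: dict[str, dict[str, object]],
) -> list[tuple[str, str, object]]:
    """Return (host, key, value) for each interval that is not a positive integer.

    The inventory shape and the "_interval_us" suffix are hypothetical.
    """
    bad = []
    for host, settings in sorted(inventory.items()):
        for key, value in sorted(settings.items()):
            if not key.endswith("_interval_us"):
                continue
            # bool is a subclass of int in Python, so exclude it explicitly.
            is_int = isinstance(value, int) and not isinstance(value, bool)
            if not is_int or value < 1:
                bad.append((host, key, value))
    return bad


# Example with a hypothetical two-host inventory.
inventory = {
    "gw-a": {"mrp_test_interval_us": 20_000},
    "gw-b": {"mrp_test_interval_us": 0, "mrp_in_test_interval_us": 50_000},
}
offenders = find_bad_intervals(inventory)
```

Here offenders contains the single entry for gw-b with the zero test interval. Failing a deployment on a non-empty result keeps the invalid value from ever reaching a host, whether or not that host's kernel already carries the fix.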

Consumer and Edge Device Impact

Consumer desktop users may never touch the affected code path, which means the issue is likely to be lower priority on ordinary laptops and workstations. That said, the boundary between consumer and infrastructure Linux has blurred. Home labs, small-office gateways, container hosts, and appliance-style devices often run the same kernel networking stack in a more exposed configuration. In those environments, the bug can absolutely matter.

The bigger risk for non-enterprise users is not direct exploitation but accidental exposure through advanced networking features. A user experimenting with bridging, redundant links, or embedded network appliances may turn on the exact functionality needed to hit the bug. That is why kernel networking issues often surprise people: they are not always visible until someone uses a feature that seems routine in a lab but rare on a desktop.

Home labs are not harmless

Home labs often run production-like networking experiments with fewer safeguards than enterprise environments. That makes them a perfect place for invalid configuration values to slip through unnoticed. If a test interval is set incorrectly in a script or UI, the machine can deny service to itself just as effectively as a data-center host. The difference is usually scale, not mechanism.

Embedded systems deserve attention

Linux-based appliances can be especially sensitive because they often run with limited memory and fewer observability tools. That means the same zero-delay loop can push a smaller system over the edge even faster. In edge deployments, where rebooting may require physical access or remote hands, a panic is not just inconvenient; it can be operationally expensive.

What This Says About Kernel Hardening

CVE-2026-31420 is a reminder that kernel hardening in 2026 is often about preventing invalid states from propagating into complex subsystems. The kernel has become exceptionally good at handling many classes of memory corruption, but there is still plenty of room for failures rooted in logic, timing, and resource handling. This bug is a clean example: the problem is not memory corruption in the traditional sense, but the effect can still be catastrophic.

The lesson is also about how kernel teams think about security. They do not wait for exploit chains to become obvious before hardening edge cases. Instead, they fix the unsafe input contract and reduce the surface where future bugs might hide. That style of engineering is one reason Linux networking remains robust despite its complexity. It is not because every edge case is avoided; it is because the boundary conditions are increasingly being made explicit.

Input validation as security policy

The best security checks are often the boring ones. A minimum-value policy is not flashy, but it is highly effective because it encodes the feature’s actual operating range. Once that policy exists, userspace cannot accidentally wander into undefined behavior. That is especially important in a subsystem like bridge MRP, where timing and state transitions interact with resource consumption.

Why early rejection scales better

Rejecting invalid input at parse time scales better than trying to sanitize later because it short-circuits the entire cascade. There is no delayed work item to cancel, no frame storm to suppress, and no memory pressure to unwind. This is one of those cases where the simplest solution is also the most durable one. It reduces both technical debt and operational risk.

- Input validation is part of the security boundary.

- Early rejection reduces async complexity.

- Small policy checks prevent large failure cascades.

- The fix is both minimal and future-proof.

- Kernel hardening often means making bad states unrepresentable.

Strengths and Opportunities

The strongest feature of this fix is that it is precise. It does not modify MRP behavior for valid intervals, and it does not introduce a complicated workaround that might be difficult to backport. That makes the patch attractive to stable-tree maintainers and less risky for distributors shipping long-term kernel lines. The fix also improves the user-facing contract by making invalid configuration fail fast instead of failing catastrophically later.

There is also a broader opportunity here for network administrators and distribution maintainers to audit similar netlink-controlled subsystems for missing range checks. Bugs like this tend to appear where validation was assumed but not explicitly enforced. A review of adjacent bridge and networking paths can often catch the next problem before it becomes a CVE.

- Surgical fix with low regression risk.

- Better alignment with existing bridge policies.

- Easier stable backporting for vendors.

- Clearer error handling for userspace tools.

- Reduced chance of future zero-value bugs.

- Improved operational predictability.

- Stronger protection against resource-exhaustion loops.

Risks and Concerns

The biggest concern is patch lag. Even when the fix is simple, downstream kernels can take time to absorb it, especially in vendor-supported branches and appliance firmware. That means the public disclosure may outpace real-world remediation by weeks or months, leaving some systems exposed longer than operators expect. In environments with mixed kernel fleets, that lag can be easy to miss.

Another concern is underestimation. Because the bug starts as an invalid configuration and ends as an out-of-memory panic, some teams may wrongly classify it as a minor administrative mistake rather than a security-relevant availability issue. That would be a mistake. In infrastructure, a reliable panic path is serious even when it does not involve code execution or data theft.

- Vendor backports may arrive later than expected.

- Automation can reintroduce the bad state repeatedly.

- Smaller systems may crash faster under the same loop.

- Teams may underestimate availability-only CVEs.

- Legacy configurations can survive in templates and scripts.

- Scanner output may not reflect actual kernel backport status.

- Bridge features are often poorly inventoried in large fleets.

Looking Ahead

The next thing to watch is backport coverage. Upstream fixes are only part of the story; the real question is how quickly distribution kernels and appliance vendors absorb the change. For many organizations, the shipped kernel matters far more than the mainline commit, which is why patch verification should focus on vendor advisories and package metadata rather than just upstream status.

It will also be worth watching whether similar validation gaps surface in adjacent bridge control paths. When one subsystem accepts a zero or out-of-range value and another nearby subsystem already enforces the limit, that often signals a broader review opportunity. Kernel hardening is frequently iterative, and the first bug discovered in a family of controls is often a map to the next one.

What administrators should check

- Confirm whether your kernel includes the bridge MRP fix for CVE-2026-31420.

- Review whether any automation can set MRP test intervals to zero.

- Inventory bridge-based deployments that depend on MRP for resiliency.

- Verify whether vendor backports have landed in your supported kernel branch.

- Test whether management tools fail cleanly when invalid values are submitted.
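
As a starting point for the inventory items above, a host can at least be checked for whether bridge MRP support is compiled into its kernel. The sketch below is an assumption-laden helper, not a fix detector: it assumes the build option is named CONFIG_BRIDGE_MRP, that the build config is available at /boot/config-<release> or /proc/config.gz, and it says nothing about whether the CVE-2026-31420 patch has been backported. That question still has to be answered from vendor advisories and package metadata.

```python
import gzip
import platform
from pathlib import Path
from typing import Optional


def bridge_mrp_enabled(config_text: str) -> bool:
    """True if the kernel config text enables CONFIG_BRIDGE_MRP (assumed name)."""
    for line in config_text.splitlines():
        line = line.strip()
        if line.startswith("CONFIG_BRIDGE_MRP="):
            # Accept "m" as well as "y" so a differently built tree is not missed.
            return line.split("=", 1)[1] in ("y", "m")
    # Disabled options appear as "# CONFIG_... is not set" or are absent.
    return False


def read_kernel_config() -> Optional[str]:
    """Return the running kernel's build config as text, or None if not found."""
    plain = Path(f"/boot/config-{platform.release()}")
    if plain.is_file():
        return plain.read_text()
    packed = Path("/proc/config.gz")  # present only with CONFIG_IKCONFIG_PROC
    if packed.is_file():
        with gzip.open(packed, "rt") as handle:
            return handle.read()
    return None
```

A typical use is to call read_kernel_config() and, when it returns text, pass that text to bridge_mrp_enabled(). A False result suggests the MRP code path is not built on that host; a True result means the host belongs on the list of systems whose kernel package version needs to be checked against the vendor's fix.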

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center
