The Linux kernel has a new security issue on the radar, and this one is a reminder that even highly specialized storage and virtualization paths can leak sensitive state when a single bounds check is missed. CVE-2026-31464 affects the ibmvfc SCSI driver, where a malicious or compromised VIO server can send a

The immediate technical story is straightforward, but the operational implications are broader. ibmvfc is part of the Linux stack used in IBM Power environments, and it interacts with virtual I/O infrastructure rather than a local desktop-style device path. That matters because vulnerabilities in virtualization-adjacent code often sit in the trust boundary between the guest kernel and the management plane. When that boundary is weak, a compromised host-side component can turn what looks like a guest bug into a channel for kernel memory disclosure.

The kernel description says the discover-targets MAD response can return a

This is the kind of bug that kernel maintainers tend to treat seriously even when the public description sounds narrow. The kernel project has long recognized that memory-safety flaws in driver code may not always look dramatic at first glance, but they can still become meaningful security issues if a remote or privileged counterpart can shape the data path. In this case, the attacker model is not “internet random,” but rather a VIO server that is malicious, compromised, or otherwise able to return malformed discovery metadata. That makes the trust relationship itself the weak point.

The CVE record also tells us something about modern disclosure flow. The issue was published on April 22, 2026, with references to multiple stable kernel commits already attached, while NVD’s CVSS enrichment had not yet been completed at the time of publication. That combination is increasingly common for kernel issues: the patch exists, the upstream reasoning is clear, and the public severity score may lag behind the technical record. For operators, that means the practical response should not wait on a formal score if the affected code is in their fleet.

Because the memory in question comes from a DMA-coherent allocation, the contents may be deterministic enough for an attacker to exploit the leak repeatedly. That does not automatically imply full compromise, but it can absolutely help with bypassing mitigations, mapping kernel layout, or extracting sensitive runtime state. In security analysis, information disclosure bugs are often underestimated because they do not always produce an instant crash. Yet they can be highly valuable primitives when paired with other weaknesses.

The patch approach is deliberately narrow: clamp

That pattern is common in driver bugs. The code often assumes a negotiated or protocol-derived field is already sane, then uses it to shape allocation, indexing, or iteration. If the field is larger than expected, the error may not become obvious until much later, when a different routine references memory under the assumption that the original count was accurate. That lag between cause and effect is what makes these bugs especially expensive to audit.

The fact that the data path is mediated through SCSI and virtualization metadata may make the issue sound niche, but niche is not the same as low value. Infrastructure vulnerabilities often have limited reach and high consequence. A flaw that affects only a specific platform can still matter a great deal if that platform hosts production workloads, storage, or consolidation layers.

In that context, CVE-2026-31464 is not a curiosity. It is a signal that guest-side validation in platform-specific drivers remains a critical security control. Even when the attack surface is relatively specialized, the underlying lesson is broad: never let protocol metadata drive memory access without a hard upper bound. That principle applies far beyond IBM Power environments.

The broader point is that kernel vulnerabilities increasingly travel through layered systems. A workstation user may never know that a virtualization or storage driver had a bug, but the service they rely on may still be exposed through a hosted environment. That makes backport visibility and vendor response speed just as important as the original technical fix.

It also keeps the repair easy to backport. Kernel maintainers generally favor fixes that are small enough to be cherry-picked into stable branches without dragging in unrelated behavior changes. The stable references linked in the CVE record suggest that this patch follows that pattern, which should help downstream vendors absorb it with relatively low friction.

The patch also reflects a common kernel security maxim: don’t trust externally controlled sizes, even if they came from an expected protocol exchange. Protocol correctness and memory safety are not the same thing. The first says the peer followed the format; the second says the peer’s values are safe for your local buffers. CVE-2026-31464 is a reminder that those are separate checks, not interchangeable ones.

Teams running mixed fleets should also pay attention because these are exactly the kinds of issues that can slip through centralized vulnerability management. A generic scanner may know the CVE exists, but it may not know whether the specific kernel build in use has already incorporated the backport. That is why version verification against vendor advisories still matters.

That is why security teams should think in layers. A vulnerability in guest-side driver code may not be the first thing on a list of top-tier remote threats, but it can still become important if the surrounding infrastructure is already under pressure. The right response is to understand the dependency, validate patch status, and treat the bug as a real hardening issue rather than a theoretical edge case.

There is also a categorization problem. Teams that prioritize only high-score or crash-oriented issues may be tempted to defer this one because it looks narrow. That would be a mistake. Small leaks matter when they occur in privileged paths, especially when the output goes directly to a remote management endpoint.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

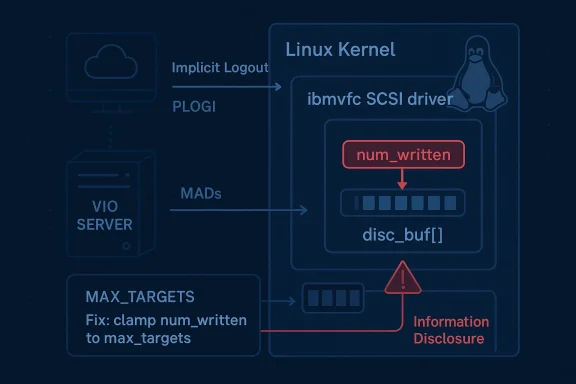

num_written value larger than max_targets, leading the kernel to walk beyond the allocated disc_buf[] array and expose kernel memory back to the server in follow-on MAD traffic. The fix is simple but important: clamp the reported target count before storing it, preventing the out-of-bounds access at the source. Microsoft’s vulnerability guidance points to the same kernel-originated description and the upstream stable fixes already linked from kernel.org.

Background

The immediate technical story is straightforward, but the operational implications are broader. ibmvfc is part of the Linux stack used in IBM Power environments, and it interacts with virtual I/O infrastructure rather than a local desktop-style device path. That matters because vulnerabilities in virtualization-adjacent code often sit in the trust boundary between the guest kernel and the management plane. When that boundary is weak, a compromised host-side component can turn what looks like a guest bug into a channel for kernel memory disclosure.

The kernel description says the discover-targets MAD response can return a num_written field that exceeds max_targets. That value is then copied into vhost->num_targets without validation and later used as a loop bound in ibmvfc_alloc_targets(), which indexes into disc_buf[]. Since disc_buf[] is only allocated for max_targets entries, the loop can step beyond the DMA-coherent allocation and read adjacent memory. The leaked data is then embedded into Implicit Logout and PLOGI MADs sent back to the VIO server, turning a bounds bug into an information disclosure path.

This is the kind of bug that kernel maintainers tend to treat seriously even when the public description sounds narrow. The kernel project has long recognized that memory-safety flaws in driver code may not always look dramatic at first glance, but they can still become meaningful security issues if a remote or privileged counterpart can shape the data path. In this case, the attacker model is not “internet random,” but rather a VIO server that is malicious, compromised, or otherwise able to return malformed discovery metadata. That makes the trust relationship itself the weak point.

The CVE record also tells us something about modern disclosure flow. The issue was published on April 22, 2026, with references to multiple stable kernel commits already attached, while NVD’s CVSS enrichment had not yet been completed at the time of publication. That combination is increasingly common for kernel issues: the patch exists, the upstream reasoning is clear, and the public severity score may lag behind the technical record. For operators, that means the practical response should not wait on a formal score if the affected code is in their fleet.

What the Vulnerability Does

At the heart of CVE-2026-31464 is a classic out-of-bounds read caused by trusting an externally supplied count too early. The driver receives a num_written field, stores it directly, and later uses that stored value to decide how many targets to allocate and process. If the count is inflated beyond the buffer’s true capacity, the kernel reads memory that was never meant to be part of the discover-targets dataset. That is a correctness problem first, but it becomes a security issue because the read data is later repackaged and transmitted back to the remote side.

Why the leak matters

The important detail is not just that the kernel reads too far; it is that the read data is subsequently reflected in outbound management packets. That makes the bug more than a local crash candidate. Instead of merely risking a panic or a bad state machine transition, the driver may leak fragments of kernel memory that could include stale pointers, internal structures, or other data not intended for disclosure. In kernel security terms, that is a very different class of failure.

Because the memory in question comes from a DMA-coherent allocation, the contents may be deterministic enough for an attacker to exploit the leak repeatedly. That does not automatically imply full compromise, but it can help with bypassing mitigations, mapping kernel layout, or extracting sensitive runtime state. In security analysis, information disclosure bugs are often underestimated because they do not always produce an instant crash. Yet they can be highly valuable primitives when paired with other weaknesses.

The trust boundary problem

This CVE is also a reminder that virtualization trust boundaries are only as strong as the validation on each side. A guest kernel may assume the management server is well-behaved because the environment is controlled, but control is not the same thing as immunity. If the VIO server is compromised, misconfigured, or malicious, then the guest’s assumptions become liabilities. That is why even infrastructure code that seems “internal” can have a serious security profile.

The patch approach is deliberately narrow: clamp num_written to max_targets before storing it. That is good engineering because it constrains the bad input as close to the source as possible and avoids propagating invalid state through later functions. Kernel fixes of this style are often preferred because they reduce regression risk and preserve the original behavior for valid inputs while closing off the unsafe edge case.

How the Bug Likely Emerged

The driver path in question is a good example of how kernel bugs often arise not from one obvious mistake, but from a series of individually reasonable assumptions that fail when chained together. A discovery response returns a count, the count is copied into state, later code uses that state as a loop bound, and the loop assumes the backing array matches the count. Each step may look harmless in isolation. Together, they create the exploit path.

The danger of “trusted” metadata

In many kernel subsystems, a returned count feels safe because it came from another component in the same platform stack. That can lull developers into treating the field as a fact rather than as an input. But once the count crosses a component boundary, it should be treated like any other external value. The CVE description makes clear that this was not done here, and the lack of validation directly enabled the out-of-bounds read.

That pattern is common in driver bugs. The code often assumes a negotiated or protocol-derived field is already sane, then uses it to shape allocation, indexing, or iteration. If the field is larger than expected, the error may not become obvious until much later, when a different routine references memory under the assumption that the original count was accurate. That lag between cause and effect is what makes these bugs especially expensive to audit.
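The chain described in this section can be modelled in a few lines of userspace C. This is a minimal sketch, not the driver source: the struct and function names (`model_host`, `store_count_unchecked`, `entries_past_end`) are hypothetical, and only the field names num_written, max_targets, and num_targets come from the CVE description. It computes how far a later loop would overrun the buffer instead of performing the overrun.

```c
#include <stdint.h>

/* Hypothetical userspace model; NOT the ibmvfc data structures. */
struct model_host {
    uint32_t max_targets;  /* entries actually allocated in disc_buf[] */
    uint32_t num_targets;  /* loop bound consumed by a later pass */
};

/* Flawed pattern: the peer-reported count is stored without validation. */
void store_count_unchecked(struct model_host *h, uint32_t num_written)
{
    h->num_targets = num_written;
}

/* Number of loop iterations that would index past the end of disc_buf[]. */
uint32_t entries_past_end(const struct model_host *h)
{
    if (h->num_targets > h->max_targets)
        return h->num_targets - h->max_targets;
    return 0;
}
```

With a capacity of 64 and a reported count of 80, `entries_past_end()` returns 16: sixteen iterations of the later loop would read memory that was never part of the discovery buffer. The store and the read happen in different functions, which is the lag between cause and effect noted above.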

Why the issue is security-relevant

It is tempting to call this “just” an access bug, but the disclosure channel changes that assessment. The out-of-bounds memory is not simply accessed and discarded; it is copied into management messages that return to the VIO server. That means the remote side can potentially observe data that should have remained inside the guest kernel. In practical terms, the bug crosses from a plain out-of-bounds read into kernel information exposure.

The fact that the data path is mediated through SCSI and virtualization metadata may make the issue sound niche, but niche is not the same as low value. Infrastructure vulnerabilities often have limited reach and high consequence. A flaw that affects only a specific platform can still matter a great deal if that platform hosts production workloads, storage, or consolidation layers.

What Makes ibmvfc Important

The ibmvfc driver is not one of the most commonly discussed Linux subsystems, but that does not make it unimportant. Kernel security is full of code paths that only a subset of the installed base ever uses, yet those paths still support real systems that may be critical to enterprises. The blast radius of a bug is defined not just by how many users are affected, but by who those users are and what they rely on the code for.

Enterprise environments care most

This is especially true in enterprise and virtualization-heavy estates. A vulnerability in a storage or virtual I/O component can affect clustered servers, consolidated workloads, or high-availability environments where even a limited leak matters. Enterprises often prioritize uptime and data integrity over novelty, which means a bug that undermines either of those values is likely to be taken seriously by platform teams.

In that context, CVE-2026-31464 is not a curiosity. It is a signal that guest-side validation in platform-specific drivers remains a critical security control. Even when the attack surface is relatively specialized, the underlying lesson is broad: never let protocol metadata drive memory access without a hard upper bound. That principle applies far beyond IBM Power environments.

Consumer relevance is limited, but not irrelevant

Typical consumer desktops are unlikely to encounter this code path, so the direct impact is probably low outside affected infrastructure. Still, many users interact with Linux indirectly through cloud instances, appliances, and managed services. A vulnerability in a backend kernel path can still influence user-facing availability or trust if it sits inside the infrastructure layer. That is why “not my laptop” is not a sufficient risk assessment for kernel CVEs.

The broader point is that kernel vulnerabilities increasingly travel through layered systems. A workstation user may never know that a virtualization or storage driver had a bug, but the service they rely on may still be exposed through a hosted environment. That makes backport visibility and vendor response speed just as important as the original technical fix.

The Fix and Why It Is Good Practice

The fix for CVE-2026-31464 is exactly the sort of response kernel developers prefer: minimal, direct, and local. By clamping num_written to max_targets before storing it in vhost->num_targets, the driver prevents an invalid count from flowing into later logic. That avoids the out-of-bounds loop without changing the intended behavior for valid responses.

Clamp early, fail safely

This matters because clamping early reduces the number of places where assumptions can be violated. Once the invalid count is allowed into shared state, every downstream consumer must remember to defend itself. That is a fragile pattern. Stopping the bad value at the boundary is cleaner and less error-prone.

It also keeps the repair easy to backport. Kernel maintainers generally favor fixes that are small enough to be cherry-picked into stable branches without dragging in unrelated behavior changes. The stable references linked in the CVE record suggest that this patch follows that pattern, which should help downstream vendors absorb it with relatively low friction.
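To make the clamp-at-the-boundary idea concrete, here is a small userspace sketch. It is an illustration under stated assumptions, not the upstream patch: `clamp_target_count` and `sum_targets` are hypothetical names, and the buffer of 64-bit values merely stands in for disc_buf[]. The point it shows is that once the reported count is bounded by the local capacity, every later index is in range by construction.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical helper: bound a peer-reported count by the local capacity. */
uint32_t clamp_target_count(uint32_t num_written, uint32_t max_targets)
{
    return num_written < max_targets ? num_written : max_targets;
}

/*
 * Walk a discovery buffer using the clamped count as the loop bound.
 * `capacity` is the number of entries really allocated; `reported`
 * is the untrusted count supplied by the peer.
 */
uint64_t sum_targets(const uint64_t *disc_buf, uint32_t capacity,
                     uint32_t reported)
{
    uint32_t n = clamp_target_count(reported, capacity);
    uint64_t sum = 0;

    for (uint32_t i = 0; i < n; i++) {
        assert(i < capacity);  /* invariant guaranteed by the clamp */
        sum += disc_buf[i];
    }
    return sum;
}
```

A four-entry buffer with a reported count of 100 is processed as four entries, while a reported count of 2 is processed as two, so valid responses behave exactly as before. Clamping rather than rejecting the response is a design choice: it keeps discovery working with a truncated list, at the cost of silently tolerating a misbehaving peer.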

Why this kind of patch scales well

A narrowly scoped fix is valuable because it lowers the chance of introducing a second bug while repairing the first. That is especially important in driver code that interacts with hardware or virtualization management planes, where the runtime consequences of regression can be severe. In security terms, this is a surgical remediation rather than a redesign, and that is usually the right answer for a discrete bounds issue.

The patch also reflects a common kernel security maxim: don’t trust externally controlled sizes, even if they came from an expected protocol exchange. Protocol correctness and memory safety are not the same thing. The first says the peer followed the format; the second says the peer’s values are safe for your local buffers. CVE-2026-31464 is a reminder that those are separate checks, not interchangeable ones.

Who Is Most Exposed

The most exposed users are the operators who run Linux guests or systems that rely on the ibmvfc stack in IBM Power virtualization environments. Those are the systems where the trust relationship between guest and VIO server is most relevant and where the affected code path is actually exercised. If the deployment includes a potentially compromised or untrusted VIO server, the risk rises significantly.

Infrastructure teams should care first

For infrastructure teams, the concern is not only data leakage but also the possibility of hidden state exposure that could assist follow-on attacks. Even if the leak is limited, information disclosure can help an attacker understand memory layout, internal object state, or live kernel metadata. In a complex environment, that can be enough to move from a minor bug to a meaningful foothold.

Teams running mixed fleets should also pay attention because these are exactly the kinds of issues that can slip through centralized vulnerability management. A generic scanner may know the CVE exists, but it may not know whether the specific kernel build in use has already incorporated the backport. That is why version verification against vendor advisories still matters.

Why the threat model is unusual

This CVE is not an internet-facing RCE story. The attacker needs influence over the VIO server side of the interaction, which narrows the practical threat model. But narrow does not mean harmless. In many enterprise environments, the management plane is already a high-value target, and once an attacker gets there, leaks from guest kernels can become highly useful.

That is why security teams should think in layers. A vulnerability in guest-side driver code may not be the first thing on a list of top-tier remote threats, but it can still become important if the surrounding infrastructure is already under pressure. The right response is to understand the dependency, validate patch status, and treat the bug as a real hardening issue rather than a theoretical edge case.

Strengths and Opportunities

The strongest aspect of this disclosure is that the problem is clearly described and the fix is easy to understand. That gives operators and maintainers a clean path to remediation, and it gives vendors a straightforward backport target. The issue also reinforces a best practice that is easy to explain and hard to argue with: validate count fields before using them to size iteration or memory access.

- The bug has a clear root cause and a simple mitigation.

- The patch is small enough to backport cleanly.

- The affected code path is specific and identifiable in fleet inventories.

- The leak mechanism is explicit, which helps defenders reason about exposure.

- The fix improves input validation discipline in a virtualization-adjacent driver.

- The CVE record already links stable commits, which should help downstream adoption.

- The issue is a useful reminder that specialized kernel paths still deserve full security review.

Risks and Concerns

The most obvious risk is that the bug can leak kernel memory rather than merely fail closed. Information disclosure in kernel space is often dismissed until it becomes clear how much it can assist attacker reconnaissance or chaining. Because the leaked data is repackaged into outbound MADs, the disclosure channel is built into the bug’s normal control flow, which makes it more concerning than a silent internal read.

- The bug can expose kernel memory outside the intended buffer.

- A malicious or compromised VIO server is a plausible threat actor.

- The issue may be underestimated because it is not a crash or RCE.

- Patch lag in vendor kernels could leave production systems exposed.

- Scanner output may not accurately reflect whether a backport is present.

- Operators may overlook the issue if they do not track IBM Power virtualization paths closely.

- The leak could potentially aid follow-on attacks even if the initial disclosure is limited.

There is also a categorization problem. Teams that prioritize only high-score or crash-oriented issues may be tempted to defer this one because it looks narrow. That would be a mistake. Small leaks matter when they occur in privileged paths, especially when the output goes directly to a remote management endpoint.

Looking Ahead

The immediate question is how quickly the fixed commits propagate into downstream kernels, especially enterprise distributions and vendor-maintained Power images. Because the public record already includes upstream stable references, the practical challenge should be less about identifying the fix and more about confirming its presence in every branch that matters. That is where most real-world exposure will be decided.

What administrators should verify

- Confirm whether your kernel build includes the published ibmvfc clamp fix.

- Check vendor advisories rather than relying only on upstream version numbers.

- Audit IBM Power and VIO-dependent systems separately from generic Linux fleets.

- Treat the issue as an information disclosure risk, not just a correctness bug.

- Verify that patch management tools recognize backported kernel changes correctly.
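As a first triage step for the audit items above, it can help to identify which hosts have the driver loaded at all. The sketch below is one possible approach, assuming ibmvfc is built as a loadable module: it scans a /proc/modules-style file, where each line begins with the module name followed by a space. The helper names `line_names_module` and `module_listed` are hypothetical. A driver compiled directly into the kernel image will not appear in that file, and the check says nothing about whether the fix is present; patch status still has to come from the vendor advisory for the running kernel build.

```c
#include <stdio.h>
#include <string.h>

/* Returns 1 if `line` starts with `name` followed by a space, else 0. */
int line_names_module(const char *line, const char *name)
{
    size_t len = strlen(name);

    return strncmp(line, name, len) == 0 && line[len] == ' ';
}

/*
 * Scan a /proc/modules-style file for a module name.
 * Returns 1 if found, 0 if not found, -1 if the file cannot be opened.
 */
int module_listed(const char *path, const char *name)
{
    char line[1024];
    int found = 0;
    FILE *fp = fopen(path, "r");

    if (fp == NULL)
        return -1;
    while (!found && fgets(line, sizeof line, fp) != NULL)
        found = line_names_module(line, name);
    fclose(fp);
    return found;
}
```

Calling `module_listed("/proc/modules", "ibmvfc")` on a guest returns 1 when the module is loaded. Matching on the name plus the trailing space avoids false positives from lines where ibmvfc only appears in another module's dependency list.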

What to watch next

- Vendor backports for long-term-support branches.

- Any follow-up hardening patches in adjacent SCSI or virtualization paths.

- Updated advisories from downstream Linux distributors.

- Potential severity guidance once NVD enrichment completes.

- Fleet reports that show whether the fix has been absorbed into production kernels.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center