Microsoft’s CVE-2026-33109 is a remote code execution vulnerability in Azure Managed Instance for Apache Cassandra, listed by MSRC for Azure customers on May 8, 2026, and framed around confidence in the vulnerability’s existence rather than public exploit mechanics. That distinction matters. In cloud security, the most dangerous line is often not between “patched” and “unpatched,” but between “understood” and “known only enough to act.” CVE-2026-33109 sits squarely in that uneasy middle ground where defenders must move before the public technical narrative catches up.

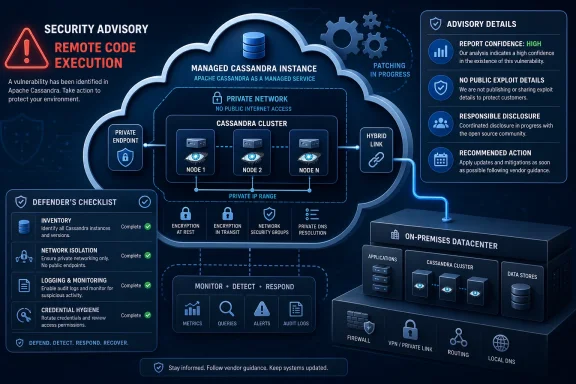

There may be no MSI to install. There may be no KB package to push through WSUS or Configuration Manager. There may be no server you can RDP into and inspect. The remediation path may run through Azure Service Health, Microsoft support, resource configuration, network policy, and waiting for the provider’s backend rollout.

That requires a different operational culture. Cloud security is less about owning every patch and more about owning every dependency. If a managed Cassandra cluster supports a line-of-business application, it belongs in the same risk conversation as domain controllers, SQL servers, storage accounts, and identity providers.

The Windows admin skill set still matters. Inventory, change management, least privilege, segmentation, logging, and incident response are timeless. What changes is where those disciplines are applied.

That sounds basic because it is. It is also where many enterprises fail. Cloud resources are easy to create, easy to forget, and easy to bury under subscription sprawl. A managed database that began as a migration test can become production infrastructure through the slow magic of convenience.

Once inventory exists, prioritization becomes possible. Production clusters with sensitive data and broad network reachability deserve immediate attention. Isolated development clusters still matter, but they do not deserve the same response tempo. Hybrid clusters deserve a separate review because their risk depends partly on systems outside Azure’s managed boundary.
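The prioritization described above can be sketched as a small rule set. This is only an illustration: the `Cluster` fields, the tier names, and the middle "expedited" tier are assumptions introduced here, not anything defined by Microsoft or the advisory.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    # Hypothetical inventory record; field names are illustrative only.
    name: str
    production: bool
    sensitive_data: bool
    broad_reachability: bool
    hybrid: bool


def response_tier(cluster):
    """Return (tier, needs_hybrid_review) for one inventory entry."""
    if cluster.production and cluster.sensitive_data and cluster.broad_reachability:
        tier = "immediate"
    elif cluster.production:
        # Assumed middle tier: production, but missing one aggravating factor.
        tier = "expedited"
    else:
        # Isolated development clusters still matter, at a slower tempo.
        tier = "standard"
    # Hybrid rings get a separate review regardless of tier, because part of
    # their risk sits outside the managed boundary.
    return tier, cluster.hybrid


if __name__ == "__main__":
    inventory = [
        Cluster("orders-prod", True, True, True, False),
        Cluster("telemetry-hybrid", True, False, False, True),
        Cluster("migration-test", False, False, False, False),
    ]
    for c in inventory:
        print(c.name, response_tier(c))
```

The point of writing the rules down is less the code than the argument it forces: every cluster must have an answer for each field before it can be ranked.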

This is also the moment to check whether monitoring is real or aspirational. Azure Monitor integration, diagnostic logging, audit logs, and application-level telemetry should be enabled before an incident, not negotiated during one. If a team cannot answer who connected to Cassandra last week, it is not ready to evaluate a cloud RCE calmly.
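As a rough test of that readiness, the "who connected last week" question can be reduced to a few lines once connection records are exported somewhere queryable. The sketch below assumes a hypothetical record shape (`timestamp`, `client_ip`, `user`); it is not the schema of Azure Monitor or of Cassandra audit logs, and real exports would need to be mapped into it.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone


def clients_in_window(records, now, days=7):
    """Count connections per (client_ip, user) within the last `days` days.

    `records` is an iterable of dicts with hypothetical keys:
    'timestamp' (ISO 8601 with a UTC offset), 'client_ip', and 'user'.
    """
    cutoff = now - timedelta(days=days)
    counts = Counter()
    for rec in records:
        ts = datetime.fromisoformat(rec["timestamp"])
        if cutoff <= ts <= now:
            counts[(rec["client_ip"], rec["user"])] += 1
    return counts


if __name__ == "__main__":
    now = datetime(2026, 5, 15, tzinfo=timezone.utc)
    sample = [
        {"timestamp": "2026-05-14T09:30:00+00:00", "client_ip": "10.0.1.4", "user": "app_orders"},
        {"timestamp": "2026-05-13T22:10:00+00:00", "client_ip": "10.0.1.4", "user": "app_orders"},
        {"timestamp": "2026-05-01T08:00:00+00:00", "client_ip": "10.0.9.9", "user": "dba_tmp"},
    ]
    for key, count in clients_in_window(sample, now).items():
        print(key, count)
```

If producing the input for a function like this takes a support ticket and a week of waiting, the logging is aspirational.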

For Cassandra, that means reviewing virtual network access, private endpoint assumptions, peering relationships, route tables, network security groups, firewall appliances, and the application identities that can reach the service. It means ensuring that only the systems that genuinely need Cassandra access have it. It means checking that operational tools, jump hosts, and automation accounts have not become broad, permanent bridges into the data tier.

Authentication deserves the same scrutiny. If applications use long-lived secrets, rotate them. If access is overbroad, narrow it. If secrets live in configuration files or old deployment systems, move them into managed secret stores and improve rotation discipline.

None of that depends on knowing the root cause of CVE-2026-33109. That is the point. Good containment work is useful whether the vulnerability turns out to be easy, hard, narrow, or embarrassing.

Report confidence tells defenders that the vulnerability is credible. It does not tell them how long they have before attacker knowledge improves. That uncertainty is precisely why cloud RCE advisories deserve early action even when the public details are thin.

This is especially true for services tied to valuable data. Cassandra clusters often sit behind high-ingest, high-scale applications: telemetry, personalization, IoT, retail, messaging, and operational analytics. Those workloads may not look like traditional “domain admin” targets, but the data they hold can be commercially and operationally sensitive.

The organization that waits for exploit maturity to rise before acting is choosing to compete with attackers on speed. That is rarely a good bargain.

Those caveats matter because vulnerability coverage often drifts into speculation. Cloud advisories are particularly vulnerable to this because the underlying implementation is opaque. A flaw in “Azure Managed Instance for Apache Cassandra” could involve service-specific orchestration, a bundled component, a management plane path, a configuration boundary, or something closer to Cassandra proper. Without vendor detail, responsible analysis should not pretend otherwise.

But caution is not complacency. A vendor-confirmed remote code execution vulnerability in a managed database service is enough to justify immediate review. The correct posture is neither panic nor dismissal. It is controlled urgency.

That means customers should verify exposure, watch Microsoft’s advisory for revisions, check Azure Service Health and support communications, confirm whether remediation has been applied, reduce unnecessary reachability, and preserve logs. Those are boring actions. Boring actions are what keep vulnerability stories from becoming incident reports.

Source: MSRC Security Update Guide - Microsoft Security Response Center

Microsoft’s Cassandra Problem Is Really a Managed-Service Trust Problem

The obvious story is that Azure Managed Instance for Apache Cassandra has a remote code execution flaw. The more important story is that a managed database service has reminded customers that “managed” does not mean “riskless.” It means the operational burden has moved, not vanished.

Azure Managed Instance for Apache Cassandra exists for organizations that want native Apache Cassandra behavior without building and babysitting the full stack themselves. Microsoft handles much of the machinery: deployment, node health, operating system patching, Cassandra patching, scaling orchestration, backups, certificate rotation, and other routine infrastructure work. That is the sales pitch, and for many workloads it is a good one.

But remote code execution changes the tone of the conversation. RCE is the vulnerability class that collapses abstractions. A bug that lets an attacker run code in the wrong place can turn a managed service from a neatly packaged platform into a question about tenant isolation, control-plane boundaries, data-plane exposure, and the limits of customer visibility.

That does not mean CVE-2026-33109 is being exploited, nor does it mean every Cassandra workload in Azure is in immediate danger. The public record available from Microsoft’s advisory does not give defenders a neat exploit chain to diagram on a whiteboard. It gives them something less satisfying and more realistic: a vendor-confirmed vulnerability class, an affected Azure service, and enough severity language to demand attention.

The Report Confidence Signal Is Doing More Work Than Usual

The user-facing metric text attached to the advisory explains report confidence: how certain the industry is that a vulnerability exists and how credible the available technical details are. This is not a decorative CVSS footnote. In this case, it is one of the most revealing parts of the advisory because it tells defenders how much they can trust the existence of the bug even when they cannot see the full internals.

Security teams sometimes treat CVSS fields as a bureaucratic afterthought, something vulnerability scanners ingest and dashboards flatten into red, orange, and yellow squares. That habit is dangerous. Report confidence is one of the few metrics that tries to separate rumor from confirmation, and confirmation from exploit-ready detail.

A vulnerability can be publicly whispered about before the vendor knows the root cause. It can be independently researched but not fully proven. It can be acknowledged by the author or vendor, which sharply raises the confidence that defenders should treat it as real. CVE-2026-33109 belongs in the last of those three buckets: not because we have public exploit code, but because Microsoft has published it through MSRC as an Azure service vulnerability.

That creates an asymmetry. Defenders may not have the technical detail they want, but attackers do not necessarily need the advisory to hand them a complete recipe. In many modern vulnerability races, the title, affected product, impact type, and patch timing are enough to start targeted research.

Remote Code Execution Still Means the Same Thing in the Cloud

Cloud platforms have made a generation of administrators more comfortable with outsourcing the noisy parts of infrastructure. They have not repealed the meaning of remote code execution. If anything, cloud RCE is more politically and operationally sensitive because the blast radius is harder for customers to independently verify.

In a traditional self-hosted Cassandra deployment, an RCE bug points the investigation toward specific nodes, JVM behavior, exposed interfaces, authentication paths, and network reachability. In a managed service, many of those details sit behind the provider’s curtain. The customer can see configuration, logs, metrics, access paths, and application behavior, but not always the underlying patch state or every layer of service implementation.

That is the bargain of managed services. Customers gain speed, reduced maintenance, and vendor-operated patching. They lose some forensic intimacy. When a vulnerability lands, they are asked to trust the provider’s remediation story more than their own ability to inspect every moving part.

Microsoft is hardly unique here. AWS, Google Cloud, Oracle, and every other serious cloud provider operate on the same fundamental premise: the provider owns parts of the stack that customers cannot directly audit. The issue with CVE-2026-33109 is not that this premise is unusual. It is that RCE forces everyone to remember the premise exists.

Cassandra’s Native Compatibility Is Both the Feature and the Risk

Azure Managed Instance for Apache Cassandra is not the same proposition as a compatibility API that merely imitates Cassandra at the edge. It is designed for customers who want the behavior of open-source Cassandra clusters, including lift-and-shift migrations and hybrid topologies. That native fidelity is the product’s appeal.

It is also why vulnerability management gets complicated. The closer a managed service stays to an upstream technology, the more it inherits the operational personality of that technology. Cassandra’s strengths (distributed writes, tunable consistency, horizontal scale, and survivability across nodes) come with a rich surface area of protocols, configuration, repair operations, compaction behavior, authentication choices, and cluster membership mechanics.

Microsoft’s managed offering tries to wrap that complexity in Azure automation. It deploys datacenters into virtual networks, supports hybrid rings, automates patching, and lets customers retain more Cassandra-like control than they would get from a more abstract database service. That flexibility is useful for real enterprises with real migration constraints.

But flexibility always leaves seams. Hybrid connectivity, existing rings, custom configurations, DBA commands, client-to-node encryption choices, and workload-specific tuning all create places where the shared responsibility model becomes more than a slide in a compliance deck. CVE-2026-33109 should push customers to ask which parts of their Cassandra estate are truly Microsoft-managed and which parts remain their own problem.

The Absence of Public Exploit Detail Is Not Comfort

There is a reflex in some IT shops to downgrade urgency when an advisory lacks exploit code, proof-of-concept steps, or a breathless threat-intelligence blog post. That reflex is understandable. Security teams are drowning in vulnerabilities, and triage demands some method of deciding what can wait.

But the absence of public technical detail cuts both ways. It may mean the bug is difficult to exploit, tightly constrained, or already mitigated within Microsoft’s service fabric. It may also mean the public is behind the private timeline. Vendors, researchers, cloud incident responders, and attackers rarely move at the same speed.

The advisory language around confidence reminds us that vulnerability knowledge matures. First comes existence. Then come root-cause clues. Then comes corroborating research. Then come weaponized techniques, scanner checks, and exploit modules. The window between vendor disclosure and broad technical understanding is often when disciplined defenders can get ahead.

For Azure customers, the right question is not “Can I reproduce CVE-2026-33109 in a lab today?” The right question is “What would I regret not having checked if more detail appears tomorrow?” That is a less satisfying question, but it is the one mature operations teams ask.

Managed Patching Narrows the Work, Not the Accountability

Microsoft’s documentation for Azure Managed Instance for Apache Cassandra emphasizes automatic patching. Operating system patches are handled on a regular cadence, and Cassandra software-level patches are applied when security vulnerabilities are identified. The service is explicitly pitched as eliminating the customer’s need to manage and patch servers directly.

That is good news for CVE-2026-33109, but it is not a permission slip to ignore it. Managed patching shifts the remediation workflow from “download and install this update” to “verify the provider’s mitigation applies to my environment, understand the timing, and monitor for signs of exposure.” That is a different kind of work, not no work.

Customers should expect Microsoft to handle the vulnerable service components under its control. They should not assume Microsoft can automatically fix every risky topology, every weak application credential, every overbroad network rule, or every hybrid deployment pattern. The shared responsibility boundary becomes especially relevant for customers connecting Azure-managed Cassandra datacenters to on-premises or third-party hosted Cassandra rings.

In other words, the patch may be Microsoft’s job, but the risk register still belongs to the customer. If a business-critical workload depends on the service, security teams need to record the exposure, confirm mitigation status through Azure channels, and coordinate with application owners. “It is managed by Microsoft” is not an incident response plan.

Network Isolation Is the Quiet Hero, Until It Isn’t

Azure Managed Instance for Apache Cassandra is designed without public IP exposure for service resources, with instances injected into customer virtual networks using private IPs. That architecture matters. A remote code execution vulnerability in a service reachable only through private network paths is a very different operational problem from one exposed directly to the public internet.

But private does not mean unreachable. Enterprise virtual networks are full of VPNs, ExpressRoute connections, peered networks, jump boxes, service principals, automation accounts, integration runtimes, and application subnets. The attacker model for cloud databases increasingly assumes that the first foothold may already exist somewhere adjacent.

This is where defenders should resist simplistic thinking. If CVE-2026-33109 requires network access to a Cassandra endpoint, then segmentation, private DNS hygiene, firewall rules, and identity controls become materially important. If exploitation requires authentication, credential hygiene and least privilege become more important. If exploitation is constrained to certain configurations, configuration inventory becomes the difference between panic and precision.

The advisory’s public detail may be limited, but the defensive implication is not. Treat reachability as a first-class control. The fewer systems that can talk to Cassandra, the fewer systems can become the launchpad for whatever exploit path eventually becomes public.
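One way to make reachability concrete is to flag allow rules that expose the Cassandra client port to wide address ranges. The following is a minimal sketch over a deliberately simplified, hypothetical rule format (`name`, `action`, `source`, `port_range`); it is not the Azure network security group schema, and it ignores rule priority and deny rules, so it can only nominate candidates for human review. Port 9042 is Cassandra's default native transport (CQL) port; the `/24` threshold is an arbitrary assumption.

```python
import ipaddress

CQL_PORT = 9042  # Cassandra's default native transport (CQL) port


def broad_allow_rules(rules, port=CQL_PORT, min_prefix=24):
    """Return names of allow rules that open `port` to a wide source range.

    Each rule is a dict in a simplified, hypothetical shape:
    {'name': str, 'action': 'allow' | 'deny',
     'source': CIDR string, 'port_range': (low, high)}.
    A source network wider than /`min_prefix` is treated as broad.
    """
    flagged = []
    for rule in rules:
        if rule["action"] != "allow":
            continue
        low, high = rule["port_range"]
        if not low <= port <= high:
            continue
        network = ipaddress.ip_network(rule["source"], strict=False)
        if network.prefixlen < min_prefix:
            flagged.append(rule["name"])
    return flagged


if __name__ == "__main__":
    sample_rules = [
        {"name": "app-subnet-to-cql", "action": "allow",
         "source": "10.1.2.0/24", "port_range": (9042, 9042)},
        {"name": "corp-wide-any", "action": "allow",
         "source": "10.0.0.0/8", "port_range": (1, 65535)},
        {"name": "legacy-deny", "action": "deny",
         "source": "0.0.0.0/0", "port_range": (9042, 9042)},
    ]
    print(broad_allow_rules(sample_rules))
```

A rule like the second one in the sample is the kind of convenience that quietly turns every machine on a corporate network into a potential launchpad.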

The Hybrid Cluster Is Where the Clean Cloud Story Gets Messy

The most interesting deployments of Azure Managed Instance for Apache Cassandra are not always the cleanest ones. The service supports hybrid scenarios where Azure-managed datacenters join existing Cassandra rings running on-premises or in other environments. That is useful for migration, latency, resilience, and incremental modernization.

It is also where vulnerability response becomes harder. A pure Azure deployment has a relatively clear provider boundary. A hybrid Cassandra ring can stretch trust across network links, operational teams, legacy nodes, old configuration assumptions, and inconsistent patch practices. When a managed datacenter participates in a wider ring, the customer has to think about the whole system, not merely the Azure resource.

This does not mean hybrid customers are necessarily more vulnerable to CVE-2026-33109. It means their investigation surface is larger. They need to understand which clients connect where, how authentication is enforced, whether client-to-node encryption is consistently enabled, how replication is configured, and which nodes remain outside Microsoft’s management envelope.

Hybrid cloud often looks tidy in architecture diagrams. During a vulnerability event, it looks like a chain of custody problem. Every link matters, and the links outside the managed service may be the ones least prepared for scrutiny.

CVSS Can Rank the Fire, but It Cannot Run the Drill

CVSS is useful because it gives organizations a common vocabulary. It is also dangerously flattening. A remote code execution bug in a managed Azure database service cannot be understood only through a numeric score, especially when customers may not control the underlying patch mechanism.

The base metrics tell part of the story: attack vector, complexity, privileges required, user interaction, scope, and impacts to confidentiality, integrity, and availability. Temporal metrics, including report confidence, tell another part: how mature the exploit environment is, whether remediation exists, and how reliable the vulnerability information appears. Environmental context tells the part that matters most to the business: whether this particular Cassandra cluster holds crown-jewel data or a disposable test dataset.
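To see how the temporal metrics move a score, here is a short sketch of the CVSS v3.1 temporal calculation, assuming the advisory is scored under v3.1. The weights and the rounding routine follow the published FIRST specification; the base score of 8.8 used in the example is purely hypothetical and is not the score Microsoft assigned to CVE-2026-33109.

```python
import math

# CVSS v3.1 temporal metric weights from the FIRST specification.
EXPLOIT_MATURITY = {"not_defined": 1.0, "high": 1.0, "functional": 0.97,
                    "proof_of_concept": 0.94, "unproven": 0.91}
REMEDIATION_LEVEL = {"not_defined": 1.0, "unavailable": 1.0, "workaround": 0.97,
                     "temporary_fix": 0.96, "official_fix": 0.95}
REPORT_CONFIDENCE = {"not_defined": 1.0, "confirmed": 1.0,
                     "reasonable": 0.96, "unknown": 0.92}


def roundup(value):
    """Round up to one decimal place using the v3.1 integer-based method."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def temporal_score(base, exploit_maturity, remediation_level, report_confidence):
    """Temporal = Roundup(Base x E x RL x RC)."""
    return roundup(base
                   * EXPLOIT_MATURITY[exploit_maturity]
                   * REMEDIATION_LEVEL[remediation_level]
                   * REPORT_CONFIDENCE[report_confidence])


if __name__ == "__main__":
    hypothetical_base = 8.8  # illustrative only, not the advisory's score
    # Vendor-confirmed bug, official fix, no known exploit code:
    print(temporal_score(hypothetical_base, "unproven", "official_fix", "confirmed"))  # 7.7
    # Same bug if its existence were only rumored:
    print(temporal_score(hypothetical_base, "unproven", "official_fix", "unknown"))    # 7.0
```

The arithmetic shows that report confidence can only hold a score steady or pull it down: a confirmed report leaves the weight at 1.0, while an unconfirmed one discounts it. Vendor confirmation removes that discount, which is what the metric is there to signal.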

Security programs that live by scanner severity alone will struggle with cloud vulnerabilities like this. A scanner may not see the managed layer. A configuration inventory may not know which internal applications use Cassandra. A CMDB may list the Azure resource but miss the dependency chain that makes it mission-critical.

The better approach is to use CVSS as a starting point and then ask operational questions. Is the service in production? Does it hold regulated or sensitive data? Is it reachable from broad internal networks? Is it part of a hybrid ring? Are logs enabled and retained? Has Microsoft indicated remediation or mitigation through Azure Service Health, support channels, or the Security Update Guide?

The Attackers Read Advisory Metadata Too

One uncomfortable reality of modern vulnerability disclosure is that metadata is intelligence. Product name, impact, release date, severity, and metric language all help attackers prioritize research. A cloud RCE in a managed database service is not just another line item; it is a signpost toward a high-value class of targets.

Attackers do not need a public proof of concept to begin. They can compare service behavior before and after patches, inspect open-source Cassandra components for likely bug classes, look for recent dependency changes, analyze client and management interfaces, and test assumptions in their own tenants where possible. The more popular and business-critical the service, the more worthwhile that work becomes.

This is why “no known exploitation” should never be translated as “no urgency.” It means only that exploitation has not been publicly confirmed or disclosed through the channels being watched. There is always a period when exploitation is possible but not yet visible, and another period when it is visible to someone but not yet broadly reported.

For defenders, the answer is not paranoia. It is disciplined reduction of exposure. If the attack surface is small, logs are useful, privileges are constrained, and provider mitigation is confirmed, then the organization can absorb uncertainty without spiraling.

Microsoft’s Disclosure Style Leaves Customers Wanting More

MSRC advisories are built to be machine-readable, consistent, and safe. They are not built to satisfy every operator’s hunger for root-cause detail. That tradeoff is especially visible with cloud services, where Microsoft may avoid publishing details that could accelerate exploit development against customers still awaiting mitigation.

There is a reasonable defense of that restraint. Publishing too much too quickly can arm attackers. In managed services, the provider may be able to remediate centrally without requiring every customer to perform a manual patch, making detailed exploit notes less necessary for broad protection.

But there is also a cost. Customers need enough information to scope their own risk. They need to know whether the vulnerability affects data-plane access, management operations, specific Cassandra versions, certain configurations, hybrid deployments, authentication states, or underlying infrastructure components. Without that, every customer has to build a cautious worst-case model.

The best cloud advisories are the ones that disclose progressively. Start with the urgent facts, then add clarifying detail as mitigation coverage improves. Customers do not need a weaponized exploit narrative. They do need enough specificity to avoid either underreacting or shutting down business unnecessarily.

For WindowsForum Readers, the Lesson Is Bigger Than Cassandra

WindowsForum’s audience includes people who have lived through decades of Patch Tuesday muscle memory. A Windows Server vulnerability usually implies a familiar workflow: identify affected builds, test updates, deploy patches, watch for regressions, and verify compliance. Azure service vulnerabilities break that rhythm.

There may be no MSI to install. There may be no KB package to push through WSUS or Configuration Manager. There may be no server you can RDP into and inspect. The remediation path may run through Azure Service Health, Microsoft support, resource configuration, network policy, and waiting for the provider’s backend rollout.

That requires a different operational culture. Cloud security is less about owning every patch and more about owning every dependency. If a managed Cassandra cluster supports a line-of-business application, it belongs in the same risk conversation as domain controllers, SQL servers, storage accounts, and identity providers.

The Windows admin skill set still matters. Inventory, change management, least privilege, segmentation, logging, and incident response are timeless. What changes is where those disciplines are applied.

The Cassandra Estate Needs an Inventory Before It Needs a Panic Button

The first practical response to CVE-2026-33109 is not drama. It is inventory. Organizations should determine whether they use Azure Managed Instance for Apache Cassandra at all, which subscriptions contain it, which regions host it, which applications depend on it, and whether any deployments are hybrid.

That sounds basic because it is. It is also where many enterprises fail. Cloud resources are easy to create, easy to forget, and easy to bury under subscription sprawl. A managed database that began as a migration test can become production infrastructure through the slow magic of convenience.

Once inventory exists, prioritization becomes possible. Production clusters with sensitive data and broad network reachability deserve immediate attention. Isolated development clusters still matter, but they do not deserve the same response tempo. Hybrid clusters deserve a separate review because their risk depends partly on systems outside Azure’s managed boundary.
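As a rough illustration of the inventory step, the sketch below filters an exported resource list down to managed Cassandra clusters and groups them by subscription and region. It assumes the records were already exported by whatever inventory tooling the team uses, that each record carries `type`, `subscriptionId`, `location`, and `name` fields, and that the service's resource type is `Microsoft.DocumentDB/cassandraClusters`. Verify all of those against your own export before relying on the result.

```python
from collections import defaultdict

# Assumed resource type for the service; confirm it against your own export.
CASSANDRA_TYPE = "microsoft.documentdb/cassandraclusters"


def cassandra_inventory(resources):
    """Group matching cluster names by (subscription, region)."""
    grouped = defaultdict(list)
    for res in resources:
        if res.get("type", "").lower() == CASSANDRA_TYPE:
            key = (res.get("subscriptionId", "unknown"),
                   res.get("location", "unknown"))
            grouped[key].append(res.get("name", "unnamed"))
    return dict(grouped)


# Hypothetical export records, for illustration only.
export = [
    {"type": "Microsoft.DocumentDB/cassandraClusters", "subscriptionId": "sub-a",
     "location": "westeurope", "name": "telemetry-prod"},
    {"type": "Microsoft.Storage/storageAccounts", "subscriptionId": "sub-a",
     "location": "westeurope", "name": "logsarchive"},
    {"type": "Microsoft.DocumentDB/cassandraClusters", "subscriptionId": "sub-b",
     "location": "eastus", "name": "migration-test"},
]

for (subscription, region), names in cassandra_inventory(export).items():
    print(subscription, region, names)
```

The forgotten "migration-test" cluster in a second subscription is exactly the kind of entry this pass is meant to surface.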

This is also the moment to check whether monitoring is real or aspirational. Azure Monitor integration, diagnostic logging, audit logs, and application-level telemetry should be enabled before an incident, not negotiated during one. If a team cannot answer who connected to Cassandra last week, it is not ready to evaluate a cloud RCE calmly.
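The "who connected last week" test can be rehearsed before an incident. The sketch below summarizes connection events by source and user over a seven-day window. The record shape (`timestamp`, `action`, `source_ip`, `user`) is a hypothetical normalized format, not the schema of any specific Azure log; real audit or diagnostic logs would need to be mapped into it first.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone


def connections_by_source(events, now, days=7):
    """Count connect events per (source_ip, user) within the last `days` days."""
    cutoff = now - timedelta(days=days)
    counts = Counter()
    for event in events:
        ts = datetime.fromisoformat(event["timestamp"])
        if ts >= cutoff and event.get("action") == "connect":
            counts[(event["source_ip"], event["user"])] += 1
    return counts.most_common()


# Hypothetical, already-normalized log records.
now = datetime(2026, 5, 8, 12, 0, tzinfo=timezone.utc)
events = [
    {"timestamp": "2026-05-07T09:15:00+00:00", "action": "connect",
     "source_ip": "10.1.2.14", "user": "orders-app"},
    {"timestamp": "2026-05-06T22:40:00+00:00", "action": "connect",
     "source_ip": "10.1.2.14", "user": "orders-app"},
    {"timestamp": "2026-05-05T03:02:00+00:00", "action": "connect",
     "source_ip": "10.9.0.7", "user": "ops-admin"},
    {"timestamp": "2026-04-20T11:00:00+00:00", "action": "connect",
     "source_ip": "10.1.2.14", "user": "orders-app"},
]

for (source, user), count in connections_by_source(events, now):
    print(source, user, count)
```

If producing the input for a function like this takes days of negotiation, that is the gap to close first.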

A Narrower Blast Radius Is the Best Patch Companion

Security teams should resist the temptation to treat provider patching as the only control that matters. Patching closes the known hole. Architecture determines how much damage the hole could have done.

For Cassandra, that means reviewing virtual network access, private endpoint assumptions, peering relationships, route tables, network security groups, firewall appliances, and the application identities that can reach the service. It means ensuring that only the systems that genuinely need Cassandra access have it. It means checking that operational tools, jump hosts, and automation accounts have not become broad, permanent bridges into the data tier.
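One way to make that review mechanical is to compare the source ranges currently allowed to reach Cassandra against the subnets that are supposed to need it. The sketch below uses Python's standard `ipaddress` module; the rule format and the subnet list are hypothetical, and real network security group or firewall rules would have to be exported into this shape.

```python
import ipaddress


def unexpected_sources(allowed_rules, expected_subnets):
    """Return names of allow rules whose source is not inside an expected subnet."""
    expected = [ipaddress.ip_network(s) for s in expected_subnets]
    flagged = []
    for rule in allowed_rules:
        source = ipaddress.ip_network(rule["source"], strict=False)
        covered = any(
            source.version == net.version and source.subnet_of(net)
            for net in expected
        )
        if not covered:
            flagged.append(rule["name"])
    return flagged


# Hypothetical allow rules for the Cassandra client port.
rules = [
    {"name": "app-tier", "source": "10.1.2.0/24"},
    {"name": "legacy-jump", "source": "10.0.0.0/8"},
    {"name": "batch-worker", "source": "10.1.3.17/32"},
]
expected = ["10.1.2.0/24", "10.1.3.0/24"]

print(unexpected_sources(rules, expected))
```

Here the broad `10.0.0.0/8` rule is flagged because it is wider than any subnet that is meant to have access, which is the "permanent bridge" pattern described above.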

Authentication deserves the same scrutiny. If applications use long-lived secrets, rotate them. If access is overbroad, narrow it. If secrets live in configuration files or old deployment systems, move them into managed secret stores and improve rotation discipline.
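Rotation discipline is easier to enforce when secret age is measured rather than remembered. A minimal sketch, assuming a hypothetical mapping of secret names to ISO creation dates and an illustrative 90-day threshold:

```python
from datetime import date


def stale_secrets(secrets, today, max_age_days=90):
    """Return names of secrets older than max_age_days, sorted alphabetically."""
    return sorted(
        name for name, created in secrets.items()
        if (today - date.fromisoformat(created)).days > max_age_days
    )


# Hypothetical secret metadata; the 90-day limit is an assumed policy, not a standard.
secrets = {"orders-app-cassandra": "2026-01-10", "batch-worker-cassandra": "2026-04-20"}
print(stale_secrets(secrets, date(2026, 5, 8)))
```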

None of that depends on knowing the root cause of CVE-2026-33109. That is the point. Good containment work is useful whether the vulnerability turns out to be easy, hard, narrow, or embarrassing.

The Real Deadline Is Before Exploit Maturity Changes

The most important clock in a vulnerability like this is not the publication date. It is the exploit maturity curve. Today’s vendor-confirmed advisory can become tomorrow’s reverse-engineered technique and next week’s commodity scanner check.

Report confidence tells defenders that the vulnerability is credible. It does not tell them how long they have before attacker knowledge improves. That uncertainty is precisely why cloud RCE advisories deserve early action even when the public details are thin.

This is especially true for services tied to valuable data. Cassandra clusters often sit behind high-ingest, high-scale applications: telemetry, personalization, IoT, retail, messaging, and operational analytics. Those workloads may not look like traditional “domain admin” targets, but the data they hold can be commercially and operationally sensitive.

The organization that waits for exploit maturity to rise before acting is choosing to compete with attackers on speed. That is rarely a good bargain.

The Practical Reading of CVE-2026-33109 Is Caution Without Theater

For all the gravity of the RCE label, there is no need to invent facts that Microsoft has not published. The advisory does not, from the public information provided, prove active exploitation. It does not publicly describe a working exploit chain. It does not establish that every Azure customer is affected. It does not tell us that Cassandra itself, as an upstream open-source project, necessarily carries the same flaw in self-hosted form.

Those caveats matter because vulnerability coverage often drifts into speculation. Cloud advisories are particularly vulnerable to this because the underlying implementation is opaque. A flaw in “Azure Managed Instance for Apache Cassandra” could involve service-specific orchestration, a bundled component, a management plane path, a configuration boundary, or something closer to Cassandra proper. Without vendor detail, responsible analysis should not pretend otherwise.

But caution is not complacency. A vendor-confirmed remote code execution vulnerability in a managed database service is enough to justify immediate review. The correct posture is neither panic nor dismissal. It is controlled urgency.

That means customers should verify exposure, watch Microsoft’s advisory for revisions, check Azure Service Health and support communications, confirm whether remediation has been applied, reduce unnecessary reachability, and preserve logs. Those are boring actions. Boring actions are what keep vulnerability stories from becoming incident reports.

The Checklist Is Short Because the Work Is Not

CVE-2026-33109 does not need a theatrical response; it needs a disciplined one. The concrete work is familiar to anyone who has handled cloud risk seriously, but the managed-service context changes who does what and where proof comes from.

- Identify every Azure Managed Instance for Apache Cassandra deployment across all subscriptions, tenants, and regions before assuming the organization is unaffected.

- Confirm Microsoft’s remediation or mitigation status through official Azure and MSRC channels, especially for production and hybrid deployments.

- Review network reachability so that only required application hosts, administrative paths, and trusted services can connect to Cassandra endpoints.

- Treat hybrid Cassandra rings as higher-complexity environments because part of the exposure may live outside Microsoft’s managed boundary.

- Preserve and review audit logs, diagnostic logs, Azure Monitor data, and application telemetry for unusual access patterns around the advisory window.

- Rotate credentials and reduce overbroad application permissions where Cassandra access depends on long-lived secrets or legacy deployment practices.

Source: MSRC Security Update Guide - Microsoft Security Response Center