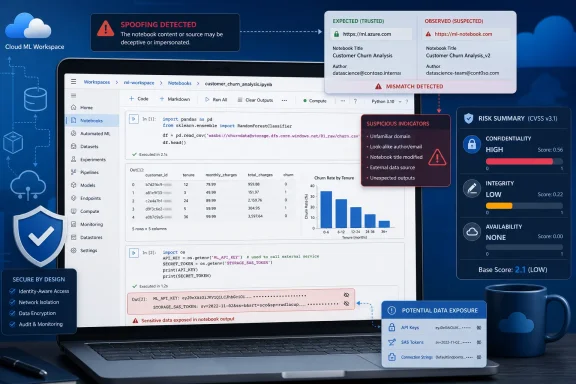

Microsoft’s May 2026 security guidance describes CVE-2026-33833 as an Azure Machine Learning Notebook spoofing vulnerability in which successful exploitation could expose sensitive information and permit limited modification of disclosed information, while not directly disrupting service availability. That CVSS split matters because it tells defenders this is not a “crash the service” bug; it is a trust, data, and workflow integrity bug. In a notebook environment, that distinction is more important than it first appears, because the most valuable thing in the room is often not uptime but the data, credentials, model context, and human confidence attached to an interactive session.

Source: MSRC Security Update Guide - Microsoft Security Response Center

The Score Is Saying “Data Exposure,” Not “System Outage”

The CVSS impact line for CVE-2026-33833 reads like a compact risk memo: confidentiality is high, integrity is low, and availability is none. In plain English, Microsoft is saying that an attacker who successfully exploits the flaw could see information they should not see and could make some limited changes to information that becomes exposed through the attack path, but should not be able to knock the Azure Machine Learning Notebook service offline simply by exploiting this vulnerability.

That is a different class of pain from the vulnerabilities that dominate patch headlines. There is no immediate promise of ransomware-style encryption, resource exhaustion, kernel panic, or cloud service outage in the metric Microsoft provided. The danger is quieter: a poisoned or deceptive notebook experience that can reveal sensitive material and slightly alter what the victim sees or handles.

For ordinary endpoint vulnerabilities, “no availability impact” can sound reassuring. In a machine learning notebook, it should not. Notebooks are where exploratory code, credentials, sample datasets, evaluation outputs, internal documentation, and model development assumptions tend to accumulate in one browser-accessible workbench. A vulnerability that preserves uptime while compromising that workbench can still be a serious incident.
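One way to make that reading concrete is a small parser for the three impact metrics. The sketch below is illustrative only: the fragment `C:H/I:L/A:N` is assembled from the ratings described above and is not the complete vector string from the advisory, and the helper names and the triage labels are assumptions made for this example.

```python
# Illustrative sketch: interpret only the CVSS impact metrics discussed above.
# The fragment "C:H/I:L/A:N" is assembled from the ratings in the article;
# it is not the complete vector string from the advisory.

LEVELS = {"H": "high", "L": "low", "N": "none"}
NAMES = {"C": "confidentiality", "I": "integrity", "A": "availability"}


def read_impact(fragment: str) -> dict:
    """Return a mapping such as {'confidentiality': 'high', ...}."""
    impact = {}
    for part in fragment.split("/"):
        key, _, value = part.partition(":")
        if key in NAMES and value in LEVELS:
            impact[NAMES[key]] = LEVELS[value]
    return impact


def triage_focus(impact: dict) -> str:
    """Hypothetical helper: name the area that deserves attention first."""
    if impact.get("confidentiality") == "high":
        return "data exposure"
    if impact.get("availability") == "high":
        return "service resilience"
    return "general review"


impact = read_impact("C:H/I:L/A:N")
print(impact)
print(triage_focus(impact))
```

The point of the helper is the ordering: a high confidentiality rating is checked first, so the absence of an availability impact never lowers the result.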

Spoofing Is the Wrong Word If You Hear “Cosmetic”

Microsoft labels this a spoofing vulnerability, and that word often undersells the risk. To many administrators, spoofing still evokes fake login pages, misleading links, or UI trickery that lives somewhere below “real compromise.” In modern cloud consoles, that boundary is less comfortable.

Azure Machine Learning notebooks are not static web pages. They are interactive development environments tied to compute instances, workspace storage, identities, kernels, files, outputs, and often downstream services. If a flaw lets an attacker convincingly alter what a user sees or interacts with, the attacker may not need remote code execution to cause damage. They need the victim to trust the wrong thing at the wrong moment.

That is why the confidentiality score is the key signal. A high confidentiality impact means sensitive information could be disclosed at scale or with serious consequence. In this context, “sensitive information” could mean notebook contents, displayed results, workspace metadata, embedded secrets, pasted tokens, data previews, model artifacts, or other information exposed in the affected interaction. The CVSS metric does not enumerate those items, but it tells us the potential disclosure is not trivial.

The low integrity rating narrows the claim. Microsoft is not saying the attacker can freely rewrite the whole environment or arbitrarily corrupt the workspace. It is saying some modification is possible, but limited. That may mean changes to displayed information, user-facing content, or data involved in the spoofed interaction rather than broad write access across the service.

Notebooks Turn Small Trust Failures Into Big Operational Risks

The notebook is the least boring part of the ML stack because it is where human intent and machine execution meet. A data scientist can inspect a dataset, run a cell, authenticate to a service, pull from storage, evaluate model behavior, and write results back to a repository or workspace from the same session. That convenience is exactly why a spoofing bug in this surface deserves attention.

In many organizations, notebooks are also informal by design. They are used for experiments, debugging, demos, incident analysis, model prototyping, and one-off transformations that never become polished production code. That informality produces security blind spots: secrets end up in cells, outputs include more data than intended, and shared notebooks become semi-official records of how something works.

The result is a strange inversion of traditional severity thinking. A vulnerability that cannot bring down the platform can still undermine the chain of trust that leads people to make production decisions. If a notebook view can be spoofed or its displayed content manipulated, a user may copy a value, run a command, approve a result, trust a dataset preview, or share an output that has been shaped by an attacker.

That is why CVE-2026-33833 should be triaged as a data-handling risk, not merely a web UI blemish. The “availability: none” metric means your notebook service may keep humming while the wrong information leaves the building.

The Confidentiality Rating Is the Loudest Part of the Advisory

CVSS impact metrics are blunt instruments, but they are useful when read in order. Here, C:H is the headline. It means the expected disclosure impact is high enough that defenders should assume valuable information could be exposed if exploitation succeeds.

In Azure Machine Learning, valuable information rarely sits in one neat place. A notebook may contain code that references storage accounts, paths to training data, experiment names, model registry details, internal APIs, package feeds, environment variables, and snippets of operational logic. Even when secrets are not hard-coded, the notebook can reveal how the environment is wired together.

The attacker’s prize may be context rather than a password. Knowing which datasets exist, how models are trained, where outputs are stored, what naming conventions identify sensitive projects, or which users operate a workspace can all help an attacker move from opportunistic probing to targeted intrusion. High confidentiality impact says defenders should not reduce the issue to “someone may see a screen they should not.”

There is also a compliance dimension. ML notebooks frequently touch regulated or commercially sensitive data, including customer samples, telemetry, financial records, medical-derived datasets, intellectual property, or evaluation results that reveal product plans. If the vulnerable path exposes notebook content or outputs, the incident may become a data exposure review even without service downtime.

Low Integrity Still Means the Attacker Can Bend Reality

The integrity metric is I:L, not I:N. That small letter matters. Microsoft’s wording indicates that an attacker could make some changes to disclosed information, but not enough to justify a high integrity rating.

Low integrity impact often means the attacker’s ability to alter data is constrained in scope, duration, target, or consequence. In a spoofing scenario, that could involve changing what a user sees rather than permanently changing backend records. But for notebooks, even transient deception can matter because users act on displayed information.

Imagine a notebook output that appears to show a successful validation, a benign dataset sample, a safe endpoint, or a trusted instruction. If the user relies on that output, the attacker has influenced a workflow without needing durable write access. The platform’s authoritative data may remain intact, but the human decision loop has been compromised.

This is the unpleasant truth about integrity in browser-based developer tools: not every consequential modification is a database write. Some of the most damaging changes are changes to perception. A spoofed interface can redirect attention, hide warning signs, or make malicious content appear native to the environment.

No Availability Impact Is Not a Free Pass

Availability is the easiest CVSS category to understand and the easiest to overvalue. A:N means successful exploitation is not expected to reduce the availability of the affected component. The service does not crash, lock, or become unusable as a direct consequence of the exploit.

That is good news for operations teams worried about downtime. It suggests this vulnerability is unlikely to become a mass outage story by itself. There is no indication from the provided metric that attackers can use CVE-2026-33833 to stop compute instances, break notebook kernels, or deny access to Azure Machine Learning.

But security teams should resist the temptation to translate “no outage” into “low priority.” Cloud compromises increasingly preserve availability because stealth is useful. An attacker interested in data exposure generally wants the service to remain normal enough that users keep using it.

For defenders, the right mental model is closer to phishing inside a trusted workbench than to a denial-of-service event. The user experience may continue to function, but the trust boundary around what is being displayed, disclosed, or modified has weakened.

Azure ML’s Browser Surface Is Now Part of the Security Boundary

The modern Microsoft cloud admin experience is deeply browser-mediated. Azure portals, Entra workflows, Microsoft 365 consoles, Defender dashboards, GitHub integrations, and Azure Machine Learning Studio all ask users to trust complex web applications with powerful capabilities. That makes web application bugs in cloud consoles more important than their old “website bug” reputation suggests.

Azure Machine Learning notebooks sit especially close to privileged activity. They are not just pages displaying information; they are tools for running code against cloud-connected data and compute. Microsoft’s documentation describes notebooks as part of the workspace authoring experience, with compute instances used to run notebook cells and Python scripts. That means the notebook UI is an operational interface, not a brochure.

The affected vulnerability’s spoofing classification should therefore be read through a cloud-console lens. If a user can be tricked inside a trusted Microsoft-hosted environment, the attacker benefits from the credibility of the platform. Users are more likely to trust prompts, outputs, links, paths, and visible data when they appear inside the tool they use every day.

This is where administrators need to update old instincts. In the desktop era, spoofing might have meant a fake dialog. In the cloud development era, spoofing can mean manipulating the interface through which users handle data, credentials, model logic, and business decisions.

User Interaction Is Often the Hidden Cost

The provided summary does not spell out every CVSS base metric, but spoofing vulnerabilities in browser-delivered notebook experiences commonly involve some kind of user interaction. That does not make them harmless. It makes them social-technical vulnerabilities: part bug, part workflow trap.

Attackers do not need every user to fall for a crafted interaction. They need the right user: a data scientist with access to a sensitive workspace, an engineer debugging production data, an analyst reviewing model outputs, or an administrator validating a shared notebook. The target’s role determines the blast radius more than the vulnerability label does.

This is particularly relevant for Azure Machine Learning because collaboration is normal. Teams share notebooks, clone samples, inspect outputs, and move between Studio, VS Code, Jupyter, storage, and source control. Any vulnerability that rides along those collaboration patterns has a more plausible path to real-world exploitation than one that requires users to do something bizarre.

The most dangerous attacks often look like ordinary work. A shared link, a notebook preview, a generated output, or a familiar workspace page can become the delivery mechanism. If the flaw is patched service-side, customers may not see a traditional update package, but they still need to examine how trust moves through their environment.

Patch Management Looks Different When the Product Is a Cloud Service

For Windows admins, vulnerability response usually starts with a patching calendar. WSUS, Intune, Configuration Manager, servicing rings, reboot windows, and rollback plans all shape the muscle memory. Azure service vulnerabilities complicate that rhythm because remediation may be deployed by Microsoft rather than installed by the customer.

That does not mean customers have nothing to do. It means the customer action shifts from “install this binary” to “verify exposure, review access, reduce risky workflows, and watch for suspicious activity.” If Microsoft has already rolled out a fix or mitigation in the cloud service, the remaining work is governance and detection.

The first practical question is whether the organization uses Azure Machine Learning notebooks at all. Many tenants have Azure subscriptions but no meaningful ML workspace usage. Others have experimental workspaces created by research teams or business units outside the central IT view. The risk conversation starts with inventory.
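As a rough illustration of that inventory step, the sketch below filters an exported resource list for Azure Machine Learning workspaces. It assumes a JSON export shaped like the output of `az resource list --output json`, with `name`, `type`, and `resourceGroup` fields on each entry; the sample entries and the function name are invented for the example.

```python
import json

# ARM resource type for Azure Machine Learning workspaces, lowercased so the
# comparison does not depend on the casing used in the export.
WORKSPACE_TYPE = "microsoft.machinelearningservices/workspaces"


def find_ml_workspaces(resources: list) -> list:
    """Return sorted (resource group, name) pairs for Azure ML workspaces."""
    found = []
    for res in resources:
        if str(res.get("type", "")).lower() == WORKSPACE_TYPE:
            found.append((res.get("resourceGroup", ""), res.get("name", "")))
    return sorted(found)


# Invented sample standing in for an exported resource list.
sample = json.loads("""
[
  {"name": "research-ws",
   "type": "Microsoft.MachineLearningServices/workspaces",
   "resourceGroup": "rg-research"},
  {"name": "stdata01",
   "type": "Microsoft.Storage/storageAccounts",
   "resourceGroup": "rg-research"},
  {"name": "fraud-ws",
   "type": "Microsoft.MachineLearningServices/workspaces",
   "resourceGroup": "rg-fraud"}
]
""")

for group, name in find_ml_workspaces(sample):
    print(f"{group}/{name}")
```

Running the same filter across every subscription in a tenant is what surfaces the experimental workspaces that sit outside the central IT view.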

The second question is who can access those workspaces and what data they can reach. If a notebook vulnerability can expose sensitive information, role assignments, workspace membership, storage permissions, and private endpoint configuration become more than hygiene items. They become compensating controls.

The third question is whether users have been treating notebooks as secure scratchpads for secrets. If the answer is yes, this vulnerability should trigger a cleanup even if exploitation is not known. Secret material belongs in managed secret stores and identity-based flows, not in notebook cells or outputs that may be exposed through UI flaws.
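A cleanup of that kind can begin with a simple scan of notebook files. The following is a minimal sketch, assuming notebooks in the standard Jupyter `.ipynb` JSON layout (a `cells` list with `source` and `outputs` fields). The two regular expressions are illustrative and far from exhaustive, and the sample notebook and its values are made up.

```python
import re

# Illustrative patterns only; a real scanner would use a maintained rule set.
PATTERNS = {
    "storage account key": re.compile(r"AccountKey=[A-Za-z0-9+/=]{20,}"),
    "assigned secret": re.compile(
        r"(?i)\b(password|secret|token|api_key)\s*=\s*['\"][^'\"]{8,}['\"]"
    ),
}


def _as_text(value) -> str:
    """Notebook fields may be a string, a list of strings, or missing."""
    return "".join(value) if isinstance(value, list) else str(value or "")


def scan_notebook(nb: dict) -> list:
    """Return (cell index, location, pattern name) for each suspected secret."""
    findings = []
    for index, cell in enumerate(nb.get("cells", [])):
        texts = [("source", _as_text(cell.get("source")))]
        for output in cell.get("outputs", []):
            texts.append(("output", _as_text(output.get("text"))))
            plain = output.get("data", {}).get("text/plain")
            texts.append(("output", _as_text(plain)))
        for location, text in texts:
            for name, pattern in PATTERNS.items():
                if pattern.search(text):
                    findings.append((index, location, name))
    return findings


# Made-up notebook content; none of these values are real credentials.
sample = {
    "cells": [
        {"cell_type": "markdown", "source": ["# Fraud model prep"]},
        {
            "cell_type": "code",
            "source": ['password = "example-not-real-123"\n', "print(conn)"],
            "outputs": [
                {"output_type": "stream",
                 "text": ["AccountKey=" + "A" * 24 + ";EndpointSuffix=example"]}
            ],
        },
    ]
}

for finding in scan_notebook(sample):
    print(finding)
```

The sketch checks cell outputs as well as cell source because a secret that was never typed into a cell can still be printed into the saved notebook.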

Enterprise Risk Depends on the Notebook’s Contents

CVE entries necessarily generalize. Enterprises cannot. The same vulnerability can be a moderate nuisance in a sandbox workspace and a serious incident in a regulated production ML environment.

A training notebook built on public sample data may not carry much confidentiality risk. A fraud model notebook connected to customer transactions is different. A notebook used for internal red-team analytics, product telemetry, financial forecasting, or healthcare data preparation sits in a different category again.

That is why severity should be locally recalculated in business terms. Microsoft’s C:H tells you the vulnerability class can expose important information. Your environment determines whether the exposed information is mildly embarrassing, contractually sensitive, regulated, or strategically valuable.

The low integrity metric should also be interpreted locally. If the affected workflow only displays disposable experiment notes, limited modification may be tolerable. If users rely on notebook outputs for production decisions, audit evidence, model promotion, or compliance reporting, even modest manipulation becomes more serious.
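One way to picture that local recalculation is a small rubric that combines data sensitivity with how much people rely on notebook outputs. Everything in this sketch is a hypothetical assumption for illustration: the categories, weights, and thresholds are not part of CVSS or of Microsoft’s guidance.

```python
from dataclasses import dataclass

# Hypothetical local rubric; categories and weights are assumptions for
# illustration and are not part of CVSS or of Microsoft's guidance.
DATA_WEIGHT = {"public": 0, "internal": 1, "contractual": 2, "regulated": 3}


@dataclass
class Workspace:
    name: str
    data_class: str                # one of the DATA_WEIGHT keys
    outputs_drive_decisions: bool  # e.g. model promotion, audit evidence
    broadly_shared: bool


def local_priority(ws: Workspace) -> str:
    """Translate the C:H / I:L profile into a local triage label."""
    score = DATA_WEIGHT[ws.data_class]               # confidentiality side
    score += 1 if ws.outputs_drive_decisions else 0  # integrity side
    score += 1 if ws.broadly_shared else 0           # collaboration exposure
    if score >= 4:
        return "urgent"
    if score >= 2:
        return "elevated"
    return "routine"


sandbox = Workspace("samples", "public", False, True)
fraud = Workspace("fraud-model", "regulated", True, False)
print(sandbox.name, local_priority(sandbox))
print(fraud.name, local_priority(fraud))
```

The exact numbers matter less than the shape: the same CVE produces different priorities once the notebook’s contents and the decisions that depend on it are taken into account.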

Defenders Should Look for Misplaced Trust, Not Just Indicators

The hardest part of responding to a spoofing vulnerability is that traditional indicators may be thin. There may be no crashed host, no malware binary, no failed service, and no obvious persistence mechanism. The event may look like a user interacted with a page, viewed content, or copied information.

That pushes defenders toward behavioral review. Which users accessed sensitive notebooks around the exposure window? Were unusual notebooks opened, shared, cloned, or modified? Did users report strange UI behavior, unexpected prompts, altered outputs, or suspicious links? Were secrets rotated after possible exposure?

Logs from Azure, Entra ID, storage accounts, workspaces, and related services may matter more than notebook logs alone. If the confidentiality impact is high, defenders should think beyond the vulnerable component and ask what a successful attacker would do next with the information. That may include probing storage, attempting token reuse, targeting users, or crafting follow-on attacks using internal project knowledge.
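The behavioral review described in this section can be sketched as a filter over activity records. The example below is a simplified illustration: the event fields, the `fraud/` naming convention, and the window dates are all assumptions, and the records do not mirror any specific Azure or Entra ID log schema.

```python
from datetime import datetime, timezone

# Hypothetical, simplified event records; the field names are assumptions and
# do not mirror any specific Azure or Entra ID log schema.
events = [
    {"time": "2026-05-10T09:15:00+00:00", "user": "alice",
     "action": "open", "notebook": "fraud/train.ipynb"},
    {"time": "2026-05-12T14:02:00+00:00", "user": "bob",
     "action": "clone", "notebook": "fraud/train.ipynb"},
    {"time": "2026-05-12T14:30:00+00:00", "user": "bob",
     "action": "open", "notebook": "samples/demo.ipynb"},
    {"time": "2026-06-01T08:00:00+00:00", "user": "carol",
     "action": "share", "notebook": "fraud/train.ipynb"},
]

SENSITIVE_PREFIXES = ("fraud/",)  # assumed naming convention
REVIEW_ACTIONS = {"open", "share", "clone", "modify"}


def review_candidates(events, start, end):
    """Return events on sensitive notebooks inside the exposure window."""
    hits = []
    for event in events:
        when = datetime.fromisoformat(event["time"])
        if not (start <= when <= end):
            continue
        if (event["action"] in REVIEW_ACTIONS
                and event["notebook"].startswith(SENSITIVE_PREFIXES)):
            hits.append(event)
    return hits


# The window dates are placeholders, not dates taken from the advisory.
start = datetime(2026, 5, 1, tzinfo=timezone.utc)
end = datetime(2026, 5, 31, tzinfo=timezone.utc)
for event in review_candidates(events, start, end):
    print(event["user"], event["action"], event["notebook"])
```

The resulting list is a starting point for interviews and secret rotation decisions rather than evidence of compromise on its own.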

This is also a moment to test whether ML workspaces are covered by the same security monitoring as more traditional infrastructure. In many companies, they are not. Data science environments can sit awkwardly between IT, security, research, and platform engineering, which makes ownership unclear precisely when a vulnerability arrives.

Microsoft’s Cloud Security Story Keeps Moving Toward Shared Discipline

This vulnerability lands in a broader reality Microsoft customers already know: cloud services reduce some patching burdens but do not eliminate security work. Microsoft can fix server-side code, harden its platform, and publish CVEs through MSRC. Customers still decide who has access, where data lives, how notebooks are shared, and whether secrets are handled correctly.

That shared responsibility model is easy to recite and hard to operationalize. Azure Machine Learning is a good example because it combines cloud identity, web UI, compute, storage, source-controlled code, package dependencies, and human experimentation. A single CVE can touch all of those layers indirectly.

For IT pros, the lesson is not that Azure ML notebooks are uniquely unsafe. It is that browser-based developer surfaces are now Tier 1 administrative and data-access surfaces. They deserve the same attention given to VPN portals, identity providers, CI/CD systems, and privileged management consoles.

The old perimeter did not disappear; it became a chain of sessions, tokens, web apps, and delegated permissions. Spoofing bugs attack that chain where humans make judgments. CVE-2026-33833 is a reminder that ML security is not only about adversarial models or poisoned datasets. Sometimes it is about whether the notebook interface itself can be trusted.

The Practical Reading for WindowsForum Admins

For WindowsForum readers managing Microsoft-heavy estates, the immediate value of this CVE is not panic but translation. The metric combination tells you where to spend time: data exposure, limited content manipulation, and user trust. It does not point to emergency capacity planning or service resilience work.

Administrators should confirm whether Azure Machine Learning is in use, identify workspaces that contain sensitive data, and verify that Microsoft’s remediation guidance has been applied or that the service-side fix is in place. Security teams should brief notebook users to report suspicious interface behavior, especially if they handle sensitive datasets or credentials in browser-based workflows.

This is also a good excuse to tighten old habits. Remove secrets from notebooks. Prefer managed identities and key vault references. Restrict workspace roles. Keep sensitive projects behind private networking where appropriate. Treat shared notebook links and externally sourced notebook content with the same skepticism you would apply to documents containing macros.

The most important change may be cultural. Data science teams often move fast because they are supposed to explore. Security teams often arrive late because notebooks look like productivity tools rather than privileged interfaces. CVE-2026-33833 shows why that split is increasingly artificial.

The Notebook Bug Is a Small Window Into a Larger Cloud Risk

CVE-2026-33833 should be understood as a high-confidentiality, low-integrity, no-availability-impact spoofing issue in Azure Machine Learning Notebook rather than as a traditional outage or remote-code-execution crisis. That profile makes it especially relevant to organizations whose notebooks contain sensitive data, operational logic, or credentials.

- Successful exploitation could allow an attacker to view sensitive information exposed through the vulnerable notebook interaction.

- The integrity impact is limited, but not zero, meaning some disclosed information or user-facing content could be altered in a constrained way.

- The vulnerability is not expected to directly affect availability, so defenders should not wait for service disruption as a signal of compromise.

- The highest-risk environments are those where Azure Machine Learning notebooks are connected to sensitive datasets, production-adjacent workflows, or broadly shared collaboration patterns.

- The best customer-side response is to verify Azure ML usage, review access controls, remove secrets from notebooks, and monitor for suspicious user or workspace activity.
