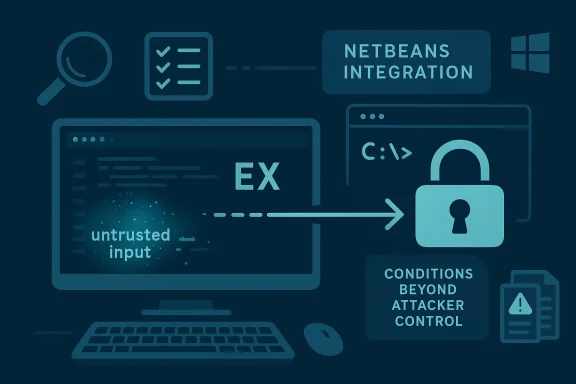

Microsoft’s description of CVE-2026-39881 points to a Vim Ex command injection issue in the editor’s NetBeans integration, but the key nuance is that exploitation is not described as purely opportunistic. Instead, Microsoft says a successful attack depends on conditions beyond the attacker’s direct control, meaning the adversary may need to know the environment, shape the target state, or even position themselves to observe or alter traffic before the bug becomes usable.

That wording matters because it usually signals a vulnerability that is real but not always trivially mass-exploitable. In practical terms, it suggests a scenario where attackers may need preparation and patience rather than a one-shot trigger. Microsoft’s own vulnerability guidance language also makes clear that these descriptions are intended to capture attack complexity, privileges, and environmental factors in a more precise way than a simple severity label can.

Overview

Vim is one of those rare tools that outlived multiple computing eras without losing its relevance. It remains deeply embedded in developer workflows, sysadmin sessions, build systems, container images, and remote shell habits, which is exactly why security issues in Vim matter even when they appear niche. A bug in a core feature can ripple outward through scripts, plugins, and habits that assume the editor is safe enough to trust by default.

The NetBeans integration is especially interesting because it is not the part of Vim most casual users think about. It was built for richer editor-to-IDE workflows, and the upstream Vim site still documents NetBeans interface use cases, including debugging-oriented integration. That sort of feature is powerful, but powerful features tend to enlarge the trust boundary.

What makes CVE-2026-39881 notable is the combination of command injection and exploit preconditions. Command injection in an editor often implies that attacker-controlled data can be turned into an Ex command or equivalent shell action, but Microsoft’s wording suggests the path is mediated by context rather than universally exploitable on sight. That is a classic recipe for targeted abuse: lower noise, more reconnaissance, and a higher chance that the attacker will focus on specific environments rather than broad scans.

There is also a broader pattern here. Modern security advisories increasingly frame vulnerabilities in terms of how an attack actually unfolds, not just whether code can be executed. Microsoft has spent years refining the Security Update Guide’s vulnerability descriptions to better reflect attack complexity and preconditions, because that nuance helps defenders prioritize. In other words, the wording itself is a signal that the exploit path is likely context-sensitive, not universal.

What Microsoft’s wording really implies

The phrase describing “conditions beyond the attacker’s control” is not just bureaucratic padding. It usually means the attacker cannot simply fire a payload at the target and expect reliable success. Instead, the exploit may require a particular user action, a specific configuration, a network vantage point, or an understanding of how the vulnerable component is deployed.

That is especially important for a tool like Vim, where the real attack surface is often shaped by how the editor is embedded into a larger workflow. If NetBeans integration is enabled, the environment may already be mixing editor behavior with project metadata, external messages, or remote-controlled interactions. In that kind of setup, the attacker’s best move is often to discover the exact path a target uses, then tailor input to that path.

Why this is not “low risk” by default

A vulnerability that requires preparation is still dangerous. It simply means the attacker has to invest more effort before they can expect success, which often filters out casual criminals but not well-resourced ones. Less automatable is not the same thing as less serious.

In fact, targeted exploitation sometimes becomes more attractive when a bug is harder to mass weaponize. Attackers who can spend time on reconnaissance and environment shaping may view that as a tradeoff worth making, especially if the target is a developer workstation, build server, or other high-value asset. Those machines often have broad access to source code, credentials, or internal systems, which can turn a narrow editor flaw into a much broader compromise.

- Attack complexity appears elevated, not absent.

- Environmental knowledge may be necessary for reliable exploitation.

- Network position could matter if traffic interception or modification is part of the path.

- Targeted attacks are more plausible than random spray-and-pray abuse.

- Developer systems can turn a local editor weakness into a supply-chain risk.
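
For triage tooling, the attack-complexity signal discussed above can be read straight from a CVSS vector string. Microsoft’s phrasing closely resembles the CVSS v3.1 definition of Attack Complexity: High, but the source does not quote a vector for this CVE, so the vector below is a hypothetical placeholder and the helper names are illustrative. This is a minimal sketch, not a full CVSS parser.

```python
def parse_cvss_vector(vector):
    """Split a CVSS v3.x vector string into a metric -> value mapping."""
    parts = vector.split("/")
    if not parts[0].startswith("CVSS:3."):
        raise ValueError(f"not a CVSS v3.x vector: {vector!r}")
    metrics = {}
    for part in parts[1:]:
        name, sep, value = part.partition(":")
        if not sep or not name or not value:
            raise ValueError(f"malformed metric: {part!r}")
        metrics[name] = value
    return metrics


def is_high_complexity(vector):
    """True when the vector marks Attack Complexity as High (AC:H)."""
    return parse_cvss_vector(vector).get("AC") == "H"


# Hypothetical vector for illustration only; NOT taken from the advisory.
EXAMPLE_VECTOR = "CVSS:3.1/AV:N/AC:H/PR:N/UI:R/S:U/C:H/I:H/A:H"

if __name__ == "__main__":
    print(parse_cvss_vector(EXAMPLE_VECTOR))
    print("high complexity:", is_high_complexity(EXAMPLE_VECTOR))
```

A flag like this is best used to route a finding toward targeted-threat review rather than to lower its priority, which is the point the list above makes.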

Why NetBeans integration is a meaningful attack surface

NetBeans integration is not a decorative feature. It is a bridge between the editor and external tooling, which means it can be asked to interpret structured data, commands, or event flows that originate outside Vim’s immediate control. When an editor starts acting as a protocol endpoint, a parser, or a command dispatcher, the security stakes rise quickly.

That is why command-injection bugs in integrations tend to be more dangerous than they first appear. The weakness is not merely that a string is malformed; it is that a string may be parsed in a privileged context and converted into an action the user never intended. In a development workflow, that action can include code navigation, file access, or shell-like behavior depending on how the integration is implemented. Trusted plumbing becomes the risk.

The trust boundary problem

The most important security question is not “Can Vim do this?” but “What is Vim trusting when it does this?” NetBeans integration implies some external participant can influence editor behavior, and any time that participant is less trusted than the editor process, the design must be defensive. If command data is not rigorously sanitized, an attacker may be able to turn a benign-looking message into an Ex command.

That is why command injection has remained such a persistent class of issue across software. The bug often lives in the glue code, not the core feature, and glue code is exactly where assumptions accumulate. Developers tend to optimize for convenience in integration layers, while attackers optimize for ambiguity.

- Integration features often expand the trusted input surface.

- Command parsing is risky when the input source is not fully trusted.

- Editor plugins can become action translators for attacker-supplied data.

- Security depends on whether commands are validated, escaped, or rejected outright.
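
The last point can be made concrete with a toy model. The sketch below is not Vim’s NetBeans code and does not describe the actual vulnerable path, which the advisory does not detail. It only illustrates the general pattern: untrusted text interpolated into a command string, where `|` or a newline can start a second Ex command, versus the same builder with an allowlist check. The command template, function names, and allowlist pattern are all assumptions made for illustration.

```python
import re

# Assumption: legitimate names in this toy protocol are short identifiers.
_SAFE_NAME = re.compile(r"[A-Za-z0-9_.-]{1,64}")


def build_command_naive(name):
    """UNSAFE: drops untrusted text straight into a command string."""
    return f"highlight {name} guifg=red"


def build_command_checked(name):
    """Reject anything outside the allowlist instead of trying to escape it."""
    if not _SAFE_NAME.fullmatch(name):
        raise ValueError(f"rejected untrusted name: {name!r}")
    return f"highlight {name} guifg=red"


def split_ex_commands(line):
    """Toy model of a dispatcher: '|' and newline separate commands."""
    return [cmd.strip() for cmd in re.split(r"[|\n]", line) if cmd.strip()]


if __name__ == "__main__":
    hostile = "Foo | source /tmp/x.vim"
    # The naive builder yields two commands where one was intended.
    print(split_ex_commands(build_command_naive(hostile)))
    try:
        build_command_checked(hostile)
    except ValueError as exc:
        print(exc)
```

Rejecting on an allowlist is used here rather than escaping because escaping rules differ between Ex commands, which makes a single escaping routine easy to get subtly wrong.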

How Ex command injection becomes dangerous

Ex commands are one of Vim’s most powerful mechanisms. That power is part of the editor’s identity, but it also means any path that can synthesize or influence Ex commands deserves careful scrutiny. If an attacker can inject a command string into the flow, they may be able to trigger file operations, editor state changes, or, in some configurations, actions that reach outside the editor’s intended boundaries.

The key issue is that command injection often bypasses the safe assumptions users make about text editing. A user expects a buffer to contain text, not instructions. Once a parser treats text as commands, the attacker’s job is to smuggle a control sequence through a trusted channel. That is why even localized injection bugs can have outsized consequences in tools that are treated as part of the operating environment.

Practical consequences for defenders

In enterprise environments, the dangerous part is often not the first command but the second-stage effect. A malicious Ex command may be used to rewrite configuration, load scripts, tamper with buffers, or pivot the user into opening additional resources. For developers, the bigger threat is that the editor may sit near secrets, source repositories, build credentials, and deployment tooling.

That means the blast radius is not limited to the editor window. On a workstation used for software development, an attacker who achieves command execution-like behavior through a trusted editing session may be able to steal source code, plant backdoors, or modify build artifacts. In that sense, the issue is not just an editor bug; it is a workflow integrity bug.

- Ex commands are high-privilege actions inside the editor.

- Command injection can bypass normal user intent.

- Developer workstations magnify the impact because of nearby secrets and tooling.

- The impact may include persistence, tampering, or lateral movement.

Why the attack likely needs preparation

Microsoft’s wording strongly suggests that the attacker may need to gather details about the environment before attempting exploitation. That can mean identifying whether NetBeans integration is enabled, how the target handles incoming data, or whether the vulnerable path is reachable through a particular workflow. In other words, the exploit may depend as much on reconnaissance as on payload construction.

This kind of prerequisite-heavy vulnerability is common in software that mixes automation with trust. Once a feature assumes friendly inputs, adversaries start asking what happens when inputs are only mostly friendly, or when the surrounding environment can be nudged into a predictable state. A successful attacker often tests variants until one lands in the right parser, on the right line, with the right delimiters or control characters.

What “attack preparation” can look like

There are several ways an attacker might increase success probability without directly controlling the victim. They might probe the environment, wait for a workflow trigger, manipulate input timing, or set up a man-in-the-middle position if the vulnerable integration depends on network traffic. The examples Microsoft’s guidance gives for attack complexity explicitly include knowledge of the environment, steps that improve exploit reliability, and interception or modification of traffic.

That does not make exploitation easy. It makes it more deliberate. For defenders, deliberate attacks are often more dangerous than opportunistic ones because they can target specific users, specific repositories, or specific workstations with far more precision. The quieter the attack, the harder it is to detect early.

- Reconnaissance may be required before exploitation.

- The attacker may need a specific operational context.

- Interception or modification of traffic could be relevant in some deployments.

- Targeted exploitation is more likely than mass exploitation.

Why this matters to enterprise defenders

For enterprises, the security impact is broader than “one editor on one machine.” Vim often appears in automation scripts, remote administration workflows, Linux jump hosts, container images, and CI/CD-adjacent tasks. If a vulnerability affects a version shipped broadly across those environments, the exposure can be surprisingly widespread even when the feature seems obscure.

The enterprise risk is also asymmetric. A consumer who edits local text files may face inconvenience, but an engineer with repository access, admin keys, or deployment rights can turn a compromised editor session into a much larger incident. That is why security teams should treat editor vulnerabilities as part of the workstation hardening problem, not as merely application-layer annoyances.

Separate impact for developers and operators

Developers are especially exposed because they routinely trust input from projects, plugins, issue trackers, and build artifacts. Operators, meanwhile, may use Vim in privileged shells where the editor can reach configuration files or operational scripts. In both cases, a command injection flaw creates a bridge between untrusted content and trusted execution.

There is also a supply-chain dimension. If a workstation is compromised through an editor path, the attacker may use that foothold to alter code before it reaches review or deployment. That means even a vulnerability that looks local can become a source of downstream compromise in a modern software delivery pipeline.

- Developer endpoints may expose source code and credentials.

- Admin shells increase the chance of high-impact misuse.

- CI/CD-adjacent workflows magnify the downstream blast radius.

- Trust in plugins and integrations often exceeds their actual security posture.

Consumer impact is narrower, but still real

For typical home users, the risk profile may be lower simply because most people do not use Vim’s NetBeans integration. Still, that does not mean the issue can be dismissed. Power users, hobbyist developers, and anyone who opens untrusted project files or uses advanced editor integrations could be exposed in ways that standard consumer security tools may not anticipate.

The consumer story is really a reminder that security bugs often hide in features users never learn they are using. If a package manager, IDE bridge, or editor plugin enables the vulnerable path by default, the average user may have no idea that an “extra” capability is active at all. That makes documentation and secure defaults crucial. Silent features can become silent liabilities.

What consumer users should understand

Even if the attack requires multiple conditions, a user does not need to be a high-value target to matter. A compromised workstation can be used for credential theft, phishing, or lateral movement into personal cloud accounts and developer services. In the modern ecosystem, home versus enterprise is often a false boundary.

The practical lesson is simple: if you do not need a feature, do not enable it. And if a tool integrates deeply with external systems, keep it updated with the same seriousness you would apply to browsers, email clients, or password managers. Security debt accumulates fastest in tooling people assume is “just an editor.”

- Casual users are less likely to be direct targets.

- Advanced users may unknowingly expose the vulnerable path.

- A compromised workstation can still support credential theft and phishing.

- Security updates matter even for niche integrations.

The bigger pattern: editor bugs are supply-chain bugs in disguise

Modern editors are no longer isolated text tools. They are extensible runtimes that parse files, interpret project metadata, execute hooks, and interoperate with external services. Once an editor becomes a platform, its vulnerabilities start behaving like platform vulnerabilities. That is why security problems in Vim often deserve the same operational response as flaws in build tools or dependency managers.

The history of Vim security issues over the last few release cycles also reinforces this point. Recent upstream releases have included fixes for multiple vulnerabilities and crashes, reminding users that even a mature codebase can accumulate security debt in distinct subsystems. When a project ships repeated security fixes, the right response is not alarmism; it is disciplined patch management.

Why maturity does not equal immunity

Long-lived software often carries compatibility constraints that make aggressive rewrites difficult. That means old assumptions can survive for years, especially in parser-heavy or integration-heavy code paths. The danger is not negligence so much as accumulated complexity. Complexity is where trust boundaries blur.

The lesson for defenders is to treat “core utilities” as part of the attack surface, not as passive tools. In a world where editors talk to IDEs, terminals, and automation frameworks, a command injection bug in a feature like NetBeans integration can matter just as much as a bug in a web app.

- Mature software still accumulates vulnerabilities.

- Integration layers are often where the highest-risk assumptions live.

- Security debt in tooling can create downstream supply-chain exposure.

- Patch discipline matters across the entire workstation stack.

Strengths and Opportunities

Microsoft’s phrasing gives defenders a useful head start by signaling that exploitation is not a purely opportunistic, one-click affair. That can help security teams prioritize exposure review, identify likely attack paths, and focus on the users or systems most likely to matter operationally. It also encourages a more realistic view of risk: harder to exploit does not mean safe to ignore.

- The advisory language suggests context-aware triage is possible.

- Teams can focus on machines where NetBeans integration is actually used.

- The issue reinforces the value of least privilege on developer endpoints.

- It is a chance to audit editor plugins and integrations more broadly.

- Security teams can improve detection around unusual editor behavior.

- The bug may drive stronger defaults for integration features.

- It highlights the need for patch hygiene in development toolchains.
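
One way to find machines where NetBeans integration is actually used is to look at how Vim gets launched. Vim’s documentation describes starting the NetBeans interface with the `-nb`, `-nb={fname}`, or `-nb:{hostname}:{addr}:{password}` arguments, so a process or audit-log scan can flag those. The sketch below assumes the command lines have already been collected as argument lists; the function name and sample values are illustrative.

```python
def uses_netbeans_flag(argv):
    """True if a Vim command line asks for the NetBeans interface via -nb."""
    for arg in argv[1:]:
        if arg == "--":
            break  # arguments after "--" are file names, not options
        if arg == "-nb" or arg.startswith("-nb:") or arg.startswith("-nb="):
            return True
    return False


if __name__ == "__main__":
    # Sample command lines for illustration; real input would come from
    # process listings or endpoint audit logs.
    samples = [
        ["vim", "notes.txt"],
        ["gvim", "-nb"],
        ["vim", "-nb:localhost:3219:secret", "main.c"],
    ]
    for argv in samples:
        print(uses_netbeans_flag(argv), " ".join(argv))
```

This only catches launch-time use. A session that enables the interface later with the `:nbstart` command would not show up in a command-line scan, so it complements a build-feature inventory rather than replacing one.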

Risks and Concerns

The biggest concern is that a vulnerability in a niche integration can still hit high-value systems if the attack path is carefully prepared. Developers and administrators are exactly the people most likely to run powerful editor features with broad access, which means the impact could be severe even if exploitation is not trivial. There is also a real risk that teams underestimate the issue because the feature is obscure.

- The attack may be targeted and patient, which is harder to spot.

- Developer systems can expose code, secrets, and deployment access.

- Command injection often leads to broader workflow compromise.

- Unpatched long-tail installations can remain vulnerable for a long time.

- Security teams may overlook editor plugins during asset inventory.

- If traffic manipulation is relevant, network trust assumptions become fragile.

- Obscure integrations often escape standard hardening baselines.

Looking Ahead

The most important next step is not speculation about payload mechanics; it is disciplined exposure assessment. Organizations should identify whether Vim is deployed with NetBeans integration enabled, whether the affected build is present anywhere in the estate, and whether any workflows allow untrusted data to reach the command path. If the answer to any of those questions is yes, the issue deserves real prioritization, not a background ticket.

Security teams should also use this moment to reassess how much trust they place in editor extensions and bridge features. Modern development environments are layered, and each layer can become a choke point for attacker-controlled input. The safe assumption is not that a text editor is harmless; it is that any tool able to interpret structured input can become a security boundary.

- Inventory Vim versions and plugin usage across the fleet.

- Review whether NetBeans integration is enabled anywhere.

- Look for unusual editor-driven command behavior.

- Harden developer workstations and remote admin shells.

- Treat editor integrations as part of the secure coding toolchain.
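
The first two items can be partly automated. `vim --version` prints the version, the included patch range, and a feature list in which `+netbeans_intg` means the NetBeans interface was compiled in and `-netbeans_intg` means it was not. The following is a minimal sketch of an inventory helper that parses that output. The function names and report layout are assumptions, and the source does not name the fixed patch level, so the result should be compared against the version given in the vendor advisory.

```python
import re
import shutil
import subprocess


def parse_vim_version(output):
    """Extract version, patch range, and NetBeans support from `vim --version`."""
    version = re.search(r"VIM - Vi IMproved (\d+\.\d+)", output)
    patches = re.search(r"Included patches: ([\d, -]+)", output)
    has_netbeans = re.search(r"(?<!\S)\+netbeans_intg\b", output) is not None
    return {
        "version": version.group(1) if version else None,
        "patches": patches.group(1).strip() if patches else None,
        "netbeans_intg": has_netbeans,
    }


def local_vim_report(binary="vim"):
    """Run `<binary> --version` if the binary is on PATH; otherwise return None."""
    path = shutil.which(binary)
    if path is None:
        return None
    result = subprocess.run(
        [path, "--version"],
        capture_output=True, text=True, timeout=10, check=False,
    )
    return parse_vim_version(result.stdout)


if __name__ == "__main__":
    print(local_vim_report())
```

A compiled-in feature is not the same as an active one, so a host reporting `netbeans_intg: True` is a candidate for closer review, not proof of exposure.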

Source: MSRC Security Update Guide - Microsoft Security Response Center
