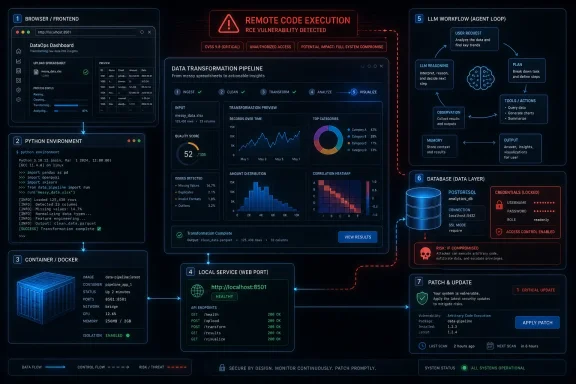

On May 12, 2026, Microsoft listed CVE-2026-41094 in its Security Update Guide as a remote code execution vulnerability in Microsoft Data Formulator, a product that turns data into AI-assisted visualizations and exploratory analysis. The advisory matters not because Data Formulator is a household Windows component (it is not) but because it sits at the increasingly dangerous junction of uploaded data, generated code, local services, databases, and large-language-model workflows. Microsoft’s public wording is still thin, but the classification alone should make administrators ask a sharper question: where have “experimental” AI tools quietly become production-adjacent software?

That is the real story behind CVE-2026-41094. A remote code execution bug in an AI data-analysis tool is not just another line item in the monthly vulnerability ledger; it is a warning about how quickly research prototypes, GitHub repositories, Python packages, Codespaces demos, and internal analyst utilities can become part of an organization’s attack surface without passing through the same gates as Exchange, SQL Server, or Windows Server.

Microsoft’s AI Data Lab Has Become an Attack Surface

Data Formulator began life as the kind of Microsoft Research project that feels almost deliberately far from traditional enterprise security drama. It is an AI-powered visualization and data exploration tool designed to help users convert messy inputs into charts, reports, and insights through a mix of natural language, interface controls, and generated transformations. In plain English, it lets analysts ask for a view of their data and lets software do much of the munging and chart-building work that previously required SQL, Python, Power Query, or a patient colleague.

That pitch is attractive for exactly the same reason it is risky. Data-analysis tools sit close to the raw material of business: spreadsheets, exports, screenshots, database tables, customer records, telemetry, and operational logs. When those tools add AI agents and code generation, they become more than passive viewers. They begin to act on data, transform it, connect to stores, and sometimes execute generated logic to satisfy a user’s request.

Remote code execution is the nightmare class of bug in that setting. It means an attacker may be able to make software run code of the attacker’s choosing, often by feeding it crafted input or by reaching a vulnerable service path. The exact exploit path for CVE-2026-41094 was not fully spelled out in the public advisory material available at publication time, but the product category gives defenders enough context to worry about practical exposure.

Data Formulator is not a built-in Windows shell component. It is not the sort of vulnerability that will, by itself, send every consumer PC owner diving for Windows Update. But for labs, data teams, internal AI pilots, university groups, consulting shops, and developers who installed it locally or deployed it in shared environments, the relevant question is not “Do we run Microsoft Windows?” It is “Did someone stand up Data Formulator because it was useful?”

The Advisory Is Sparse, and That Is Part of the Signal

The most frustrating thing about many modern vulnerability disclosures is not that vendors say too much. It is that they often say just enough to trigger remediation work and not enough to help defenders understand exposure. CVE-2026-41094 lands in that familiar zone: the name tells us the affected Microsoft technology and the impact category, while the public details remain constrained.

That does not mean the vulnerability is imaginary or unimportant. A CVE in Microsoft’s Security Update Guide is a vendor acknowledgement, and vendor acknowledgement is one of the strongest signals defenders get in the vulnerability-confidence chain. It tells security teams that the issue has crossed the threshold from rumor, forum post, proof-of-concept chatter, or third-party guesswork into an official response process.

The CVSS Report Confidence metric gets to the heart of this. Confidence is not the same as exploitability, and exploitability is not the same as business impact. A bug can be real but hard to weaponize; a bug can be easy to weaponize but limited to a small population; a bug can be devastating only when deployed in a specific architecture. The point of confidence scoring is to separate “we suspect something might be wrong” from “the affected technology’s owner has confirmed a vulnerability exists.”
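To make the distinction concrete: in a CVSS v3.x vector, Report Confidence is the temporal metric `RC`, and `RC:C` means “Confirmed.” The sketch below pulls that one field out of a vector string. The vector it uses is hypothetical, not the published score for CVE-2026-41094, and the helper names are illustrative. Notice that nothing in the parsed field says anything about reachability or business impact.

```python
# Parse a CVSS v3.x vector and read the Report Confidence (RC) temporal metric.
# The example vector is made up for illustration; it is NOT CVE-2026-41094's score.

RC_LABELS = {
    "X": "Not Defined",
    "U": "Unknown",
    "R": "Reasonable",
    "C": "Confirmed",
}


def parse_cvss_vector(vector: str) -> dict:
    """Split 'CVSS:3.1/AV:N/...' into {'AV': 'N', ...}, dropping the version prefix."""
    parts = vector.strip().split("/")
    if not parts or not parts[0].startswith("CVSS:3"):
        raise ValueError(f"not a CVSS v3.x vector: {vector!r}")
    metrics = {}
    for part in parts[1:]:
        key, sep, value = part.partition(":")
        if not sep or not key or not value:
            raise ValueError(f"malformed metric {part!r}")
        metrics[key] = value
    return metrics


def report_confidence(vector: str) -> str:
    """Human-readable RC value; a vector without RC is treated as 'Not Defined'."""
    rc = parse_cvss_vector(vector).get("RC", "X")
    return RC_LABELS.get(rc, f"unrecognized ({rc})")


if __name__ == "__main__":
    example = "CVSS:3.1/AV:N/AC:L/PR:N/UI:R/S:U/C:H/I:H/A:H/E:U/RL:O/RC:C"  # hypothetical
    print(report_confidence(example))  # → Confirmed
```

A “Confirmed” result tells you the vendor stands behind the bug’s existence. Exploit maturity (`E`) and the base metrics are separate fields, and your own deployment topology appears in none of them.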

For CVE-2026-41094, the existence side of that ledger is strong. The unanswered questions are operational: which versions are affected, what exact component is vulnerable, whether authentication is required, whether malicious files or prompts are involved, whether the issue affects the local web server, whether database connectors alter the risk profile, and whether hosted demos or self-hosted deployments are in scope.

The Old RCE Mental Model Does Not Fit AI Tooling

Classic Windows remote code execution stories tend to have a familiar shape. A network service listens on a port. A parser mishandles input. An attacker sends a packet or file. Code runs. Administrators patch, block traffic, or apply a mitigation until the maintenance window arrives.

AI-assisted data tools scramble that model. The boundary between “input” and “instruction” is blurrier. A CSV file may be data, but it may also contain formula payloads, malformed fields, embedded markup, or strings that influence downstream code. A natural-language prompt may be harmless user intent, or it may become part of a chain that shapes generated Python, JavaScript, SQL, Vega-Lite, or database queries. A screenshot may be an image, but a modern extraction pipeline may convert it into structured text that flows into an agent loop.
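To illustrate how a “data” file can carry instructions, here is a minimal sketch that flags CSV cells starting with characters that spreadsheet applications may interpret as a formula. The trigger set follows the commonly cited OWASP CSV-injection list. This is one well-known class of input risk and is **not** a description of CVE-2026-41094’s mechanism, which Microsoft has not detailed publicly. `flag_formula_cells` is a hypothetical helper name.

```python
import csv
import io

# Leading characters that spreadsheet software may treat as the start of a formula
# (equals, plus, minus, at-sign, tab, carriage return), per common CSV-injection guidance.
FORMULA_TRIGGERS = ("=", "+", "-", "@", "\t", "\r")


def flag_formula_cells(csv_text: str) -> list:
    """Return (row, column, value) for each cell that begins with a formula trigger.

    Rows and columns are 0-based. This is a heuristic: a negative number such as
    '-5' is flagged too, so treat hits as items to review, not proof of an attack.
    """
    hits = []
    reader = csv.reader(io.StringIO(csv_text, newline=""))
    for r, row in enumerate(reader):
        for c, cell in enumerate(row):
            if cell.startswith(FORMULA_TRIGGERS):
                hits.append((r, c, cell))
    return hits


if __name__ == "__main__":
    sample = "region,revenue,note\nwest,100,ok\neast,-5,=SUM(A1:A9)\n"
    for hit in flag_formula_cells(sample):
        print(hit)
```

On the sample, both the `-5` cell and the `=SUM(A1:A9)` cell in the third row are reported, which shows why a pure character check produces false positives and needs human review.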

That does not mean every AI tool is uniquely doomed. It does mean security teams need to stop treating them as glorified UI wrappers. If a tool can load arbitrary files, talk to a model, generate transformations, connect to databases, run a local web service, and produce executable artifacts, it belongs in the same risk conversation as notebooks, BI gateways, ETL tools, and developer workstations.

Data Formulator is especially interesting because its value proposition depends on turning user intent into data transformations. The safer design pattern is to isolate generated logic, constrain file access, sanitize inputs, and make dangerous operations explicit. The unsafe pattern is to let convenience quietly accumulate privileges until a charting assistant becomes a code execution broker.
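One way to picture “isolate generated logic” is to run each generated snippet in a separate interpreter that cannot see the parent’s secrets. The sketch below assumes the generated transformations are Python source strings. `run_generated_snippet` is a hypothetical helper, not part of Data Formulator. It reduces exposure by using a fresh process, Python’s isolated mode (`-I`), an empty scratch working directory, a near-empty environment so inherited API keys are not visible, and a timeout. It is **not** a sandbox: the child can still read files and open network connections. Real containment needs an OS-level boundary such as a container or VM with no credentials mounted.

```python
import os
import subprocess
import sys
import tempfile
from typing import Optional, Tuple


def run_generated_snippet(code: str, timeout_s: float = 5.0) -> Tuple[Optional[int], str]:
    """Run generated Python in a separate interpreter with reduced exposure.

    Returns (exit_code, stdout), or (None, "timed out") if the time limit is hit.
    Limits inherited environment variables and runtime only; it does NOT restrict
    file-system, network, or system-call access.
    """
    # Pass through only what the interpreter may need to start on Windows.
    env = {k: os.environ[k] for k in ("SYSTEMROOT",) if k in os.environ}
    with tempfile.TemporaryDirectory() as scratch:
        try:
            proc = subprocess.run(
                [sys.executable, "-I", "-c", code],  # -I: ignore PYTHON* vars and user site-packages
                cwd=scratch,
                env=env,
                capture_output=True,
                text=True,
                timeout=timeout_s,
            )
        except subprocess.TimeoutExpired:
            return None, "timed out"
    return proc.returncode, proc.stdout
```

The design choice worth noting is that secrets are kept out by default (an allow-list of environment variables) rather than stripped selectively. A generated snippet that tries to read a cloud or model API key from its environment simply finds nothing.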

Open Source Distribution Makes the Patch Story Messier

Microsoft’s traditional patching empire is built around managed distribution. Windows Update, Microsoft Update, WSUS, Intune, Configuration Manager, and Defender Vulnerability Management all assume that the affected product is known, inventoried, and tied into an update channel. Open source research tools do not always live in that world.

Data Formulator is available through a public GitHub repository and has been installable as a Python package. That is good for experimentation and community adoption, but it complicates enterprise response. A developer may have installed it in a virtual environment months ago. A data scientist may have put it in a Codespace. A research group may have cloned the repository and modified it. An internal team may have containerized it for a pilot and then forgotten the pilot became useful.

This is how “shadow AI” becomes a security problem. The issue is not merely that employees use unsanctioned tools. It is that sanctioned curiosity often produces unsanctioned persistence. A proof of concept becomes a demo, a demo becomes a dashboard, and a dashboard becomes something executives expect to work every Monday morning.

For administrators, the first response to CVE-2026-41094 should be inventory, not panic. Search package indexes, developer machines, containers, internal Git repositories, notebooks, lab servers, and cloud workspaces for Data Formulator. Look for the Python package, cloned repositories, exposed local services, reverse proxies, and shared demo instances. The most exposed installations are not necessarily the most official ones; they are the ones somebody made reachable because collaboration was easier that way.
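For file-system discovery on a single machine or mounted volume, a directory walk goes a long way. The sketch assumes that the PyPI distribution is named `data_formulator` (hyphen or underscore), so a wheel install leaves a `data_formulator-<version>.dist-info` folder. It also uses a rough heuristic for git clones: a `.git` folder inside a directory whose name contains “formulator.” Both assumptions should be checked against your environment. Containers, Codespaces, and remote lab servers need their own enumeration; `find_installations` is a hypothetical helper.

```python
import os
import re
import sys
from datetime import datetime, timezone

# Assumption: installed wheels leave "data_formulator-<version>.dist-info" (or a hyphenated variant).
DIST_INFO = re.compile(r"^data[-_]formulator-(?P<version>[^-]+)\.dist-info$", re.IGNORECASE)


def find_installations(root: str) -> list:
    """Walk `root` and report Data Formulator package metadata folders and likely git clones."""
    found = []
    for dirpath, dirnames, _files in os.walk(root):
        for name in dirnames:
            match = DIST_INFO.match(name)
            if match:
                path = os.path.join(dirpath, name)
                found.append({
                    "kind": "python-package",
                    "path": path,
                    "version": match.group("version"),
                    # The folder's modification time only roughly approximates the install time.
                    "modified": datetime.fromtimestamp(os.stat(path).st_mtime, timezone.utc).isoformat(),
                })
        # Heuristic: a git working copy whose folder name mentions the project.
        if ".git" in dirnames and "formulator" in os.path.basename(dirpath).lower():
            found.append({"kind": "git-clone", "path": dirpath, "version": None, "modified": None})
        # Don't descend into git internals.
        if ".git" in dirnames:
            dirnames.remove(".git")
    return found


if __name__ == "__main__":
    for item in find_installations(sys.argv[1] if len(sys.argv) > 1 else "."):
        print(item)
```

The version and timestamp fields are there so the same output can seed a patch list and, later, an investigation timeline.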

“Remote” Does Not Always Mean Internet-Facing

The phrase remote code execution has a way of flattening nuance. Many readers hear “remote” and immediately imagine a wormable service listening on the public internet. That is one possible shape, but it is not the only one, and sparse advisories make it dangerous to assume either the worst or the best.

In the context of a data-analysis application, “remote” could involve a network-accessible web interface. It could involve a crafted dataset delivered to a user. It could involve a malicious database response. It could involve a prompt or uploaded file that reaches a vulnerable backend path. It could also be limited by authentication, user interaction, default binding behavior, or deployment choices.

That nuance matters for prioritization. An internet-exposed instance used by multiple analysts deserves immediate attention. A local-only virtual environment on a developer laptop is still worth patching, but it carries a different operational risk. A hosted internal service connected to production databases may be more sensitive than a public demo with no real data because code execution near credentials and data stores can turn a contained bug into a breach path.

Security teams should therefore avoid the performative binary: either “critical panic” or “ignore because niche.” CVE-2026-41094 lives in the middle ground where many real enterprise incidents begin. The population may be smaller than mainstream Windows components, but the installations that exist may be unusually privileged.

Data Tools Are Credential Magnets

The uncomfortable truth about analytics software is that it often sits next to credentials. To make analysis useful, teams connect tools to databases, warehouses, APIs, storage buckets, and internal services. They store API keys in environment files, connection strings in configuration panels, tokens in developer shells, and secrets in places that were meant to be temporary.

Data Formulator’s own ecosystem has included support for loading structured data and connecting to data sources. That is not inherently a flaw; it is what makes the tool useful. But it changes the blast radius of any code execution vulnerability. An attacker who can execute code inside an analytics tool may not need domain administrator privileges to cause harm. Access to a database token, a local file path, a cached model key, or an internal network route can be enough.
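Environment files are a good first place to measure that blast radius. The sketch below walks a directory tree, finds `.env`-style files, and reports only the *names* of variables that look like credentials, never their values, so the output can go into a ticket safely. The naming pattern is a hypothetical heuristic: it will miss secrets with unusual names and flag some harmless ones.

```python
import os
import re

# Heuristic: variable names that commonly hold credentials.
SECRET_NAME = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD|PASSWD|CONN(ECTION)?_?STR(ING)?|DSN)", re.IGNORECASE)
# Matches "NAME=..." or "export NAME=..." and captures NAME only.
ENV_LINE = re.compile(r"^\s*(?:export\s+)?([A-Za-z_][A-Za-z0-9_]*)\s*=")


def secret_names_in_env_files(root: str) -> dict:
    """Map each .env-style file under `root` to the credential-like variable names it defines."""
    report = {}
    for dirpath, dirnames, filenames in os.walk(root):
        dirnames[:] = [d for d in dirnames if d not in (".git", "node_modules")]
        for name in filenames:
            if name == ".env" or name.startswith(".env.") or name.endswith(".env"):
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="replace") as fh:
                    matches = (ENV_LINE.match(line) for line in fh)
                    names = [m.group(1) for m in matches if m and SECRET_NAME.search(m.group(1))]
                if names:
                    report[path] = names
    return report
```

Any name this turns up next to a vulnerable instance is a candidate for rotation, not just review.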

This is where AI-era tooling collides with old-fashioned security hygiene. Least privilege remains boring and undefeated. If an exploratory visualization tool has read access to an entire production warehouse, then a compromise of that tool becomes a production data incident. If it runs with a developer’s broad cloud credentials, the vulnerability inherits that developer’s reach.

The right defensive lens is not “What can Data Formulator do?” It is “What can the account, container, VM, notebook, or user session running Data Formulator do?” That is the difference between a lab nuisance and an incident-response bridge call.

Microsoft’s Transparency Push Meets the Reality of Thin CVEs

Microsoft has spent the past few years trying to make its vulnerability disclosures more machine-readable and more aligned with industry standards. The company has moved toward publishing Common Weakness Enumeration data for CVEs and has discussed broader use of machine-readable advisory formats. Those are useful changes, especially for large organizations that triage hundreds of vulnerabilities across scanners, ticketing systems, and asset inventories.

But CVE-2026-41094 shows the limit of format improvements. A well-structured advisory can still be operationally thin. Machines can ingest severity, product names, CVSS vectors, affected versions, and update metadata, but defenders still need the story: what is reachable, what is plausible, what is default, what is mitigated by configuration, and what attacker behavior would look like.

The industry sometimes pretends that vulnerability management is a spreadsheet problem. It is not. It is an interpretation problem disguised as a spreadsheet problem. Scores help, but they do not replace product knowledge. CVSS metrics help, but they do not tell you whether the only exposed instance in your environment belongs to a team that connected it to sensitive data.

That is why confidence metrics are valuable but incomplete. Knowing that the vulnerability exists with vendor confirmation increases urgency. It does not answer how to rank the bug against everything else released on the same patch day. The answer depends on whether Data Formulator exists in your estate and how it is deployed.

Research Prototypes Need Production Guardrails Before Production Finds Them

Microsoft Research projects often arrive with the energy of possibility. They show what a future workflow could feel like before the enterprise controls have caught up. Data Formulator fits that mold: a tool that rethinks data visualization through AI agents, blended interaction, and iterative exploration. It is easy to see why analysts would want it.

The problem is that enterprise adoption no longer waits for enterprise hardening. GitHub stars, package installs, viral demos, internal champions, and executive fascination with AI can push a prototype into real use long before procurement, security review, or architecture boards have named it. That is not a Microsoft-only problem. It is the operating model of modern software.

For vendors, the lesson is that “research” and “open source” labels do not absolve projects from secure defaults. If a tool opens a browser-accessible service, handles untrusted files, invokes generated code, or connects to databases, it should ship with conservative binding, clear authentication guidance, sandboxing assumptions, and aggressive dependency maintenance. Users should not need to reverse-engineer the threat model from a README.

For customers, the lesson is harsher. If your organization permits AI tooling experiments, it needs a lightweight path to register them. Heavy governance will simply drive pilots underground. The goal should be to make the safe path easier than the unsafe one: approved containers, secret handling, network templates, update monitoring, and clear rules for when a demo may touch real data.

The Patch Is Only the First Control

Assuming Microsoft has provided or will provide updated Data Formulator bits through the project’s normal distribution channels, updating is the obvious first step. But treating the patch as the whole response misses the broader risk. Remote code execution bugs are often symptoms of an architecture that deserves review.

Start with exposure. If Data Formulator is running as a local service, confirm whether it binds only to localhost or is reachable from other machines. If it is shared through a tunnel, reverse proxy, Codespace, lab server, or container platform, decide whether that exposure is still necessary. Temporary sharing links and development ports have a nasty habit of becoming semi-permanent infrastructure.
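“Binds only to localhost” has a precise meaning, and it can be checked two ways: by reading the bind address from the launch command or configuration, and by probing the port from somewhere else. The sketch below does both with the standard library. The helper names are hypothetical, and you will need to supply the port your instance actually uses; do not assume a default.

```python
import ipaddress
import socket


def classify_bind_address(addr: str) -> str:
    """Describe what a server's bind address implies about who can reach it."""
    ip = ipaddress.ip_address(addr)
    if ip.is_loopback:
        return "loopback-only"        # 127.0.0.1 or ::1: same machine only
    if ip.is_unspecified:
        return "all-interfaces"       # 0.0.0.0 or ::: every network interface
    return "specific-interface"       # reachable from whatever that interface's network can see


def port_answers(host: str, port: int, timeout: float = 1.0) -> bool:
    """True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False
```

Run `port_answers` from a *different* machine against the host’s LAN address. If it returns `True`, the service is not loopback-only, whatever the configuration says. A firewall or reverse proxy may be the real exposure point, which is why the probe is worth doing alongside reading the configuration.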

Then examine privilege. Run the tool as a non-admin user. Avoid mounting broad filesystem paths into containers. Do not run it on the same host that stores irreplaceable data. Separate experimentation from production credentials. If database access is required, use narrowly scoped read-only accounts and rotate any secrets that may have been exposed to a vulnerable instance.
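“Narrowly scoped read-only” is easiest to see in miniature. Purely for illustration, the sketch below uses a SQLite file as a stand-in for a real data source and opens it with SQLite’s `mode=ro` URI parameter, so any write fails in the driver. In a production warehouse the equivalent control is a dedicated database role granted only `SELECT` on the tables the analysis needs, enforced by the server rather than the client. `open_read_only` is a hypothetical helper.

```python
import sqlite3
from pathlib import Path


def open_read_only(db_path: str) -> sqlite3.Connection:
    """Open an existing SQLite file so that any write attempt raises an error.

    mode=ro is a SQLite URI parameter; uri=True tells Python's sqlite3 module to
    parse the string as a URI. Path.as_uri() builds an absolute, percent-encoded
    file: URI that works on both POSIX and Windows paths.
    """
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    return sqlite3.connect(uri, uri=True)
```

Reads through this connection work normally, while an `INSERT` or `UPDATE` raises `sqlite3.OperationalError`. That is the property you want from any credential a visualization tool holds: code running inside it, including an attacker’s, inherits no write path.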

Finally, preserve enough telemetry to investigate. Web access logs, process execution traces, dependency versions, package installation times, and shell history can help determine whether a vulnerable deployment was merely present or actually abused. In smaller teams, that may feel excessive. In a post-RCE investigation, it is the difference between confidence and guesswork.
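A quick first pass over web access logs is to ask who, other than the local machine, ever talked to the service. The sketch assumes a CLF-like line layout (client address, two placeholder fields, a bracketed timestamp, a quoted request line, and a status code), which is what Werkzeug’s development server prints. Confirm your deployment’s actual log format before relying on it. `remote_clients` is a hypothetical helper, and a non-empty result is a lead for investigation, not evidence of exploitation.

```python
import ipaddress
import re
from collections import Counter

# Assumed layout: 10.0.0.7 - - [12/May/2026 10:01:00] "GET / HTTP/1.1" 200 -
LOG_LINE = re.compile(
    r'^(?P<client>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3})'
)


def remote_clients(log_lines) -> Counter:
    """Count requests per client address, skipping loopback clients and unparseable lines."""
    counts = Counter()
    for line in log_lines:
        m = LOG_LINE.match(line)
        if not m:
            continue
        try:
            if ipaddress.ip_address(m.group("client")).is_loopback:
                continue
        except ValueError:
            pass  # a hostname rather than an IP literal: keep it, it deserves a look
        counts[m.group("client")] += 1
    return counts
```

For an instance that was supposed to be local-only, any entry in the result is worth explaining. For a shared instance, compare the addresses against the people who were meant to use it.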

Windows Admins Should Care Even When Windows Is Not the Target

WindowsForum readers are used to Microsoft vulnerabilities meaning Windows vulnerabilities. This one is different. Data Formulator may run in Python environments, developer workspaces, containers, and browsers, and the immediate patch action may not involve the Windows servicing stack at all. Still, Windows administrators should pay attention because their estates increasingly include exactly this kind of cross-platform, developer-led Microsoft software.

The modern Windows environment is no longer just endpoints, domain controllers, file shares, and Office. It is Windows laptops running WSL, Python packages, VS Code extensions, containers, local web apps, AI assistants, data tools, and cloud CLIs. Vulnerability management that stops at installed MSI packages will miss a growing share of the real attack surface.

That shift makes asset discovery more political as well as technical. Developers and analysts may not think of a local AI visualization tool as “installed software.” They may see it as a repository, a virtual environment, or a notebook dependency. Security teams must meet that reality with better discovery methods rather than scolding people for not filing tickets every time they test a promising tool.

CVE-2026-41094 is therefore a useful forcing function. It asks whether organizations can find a Microsoft Research-derived AI tool in their own environments. If the answer is no, the vulnerability is only part of the problem.

The Defender’s Job Is to Rank the Unknowns

Every patch cycle brings too many vulnerabilities and too little time. The instinct is to lean on severity labels, exploitability indexes, and whether a bug is known to be exploited in the wild. Those signals matter, but they can under-rank niche software with high-value access.

For CVE-2026-41094, the triage formula should be simple. If you do not run Data Formulator, document that and move on. If you run it only in isolated local experiments with no sensitive data and no network exposure, patch it and tighten defaults. If you run it as a shared service, connect it to databases, or expose it beyond a single trusted workstation, treat it as urgent.
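The formula is simple enough to encode directly, which helps when applying it to a long inventory. The record fields below are hypothetical and should map to whatever your asset inventory actually tracks. One case falls outside the three rules above: a local, unexposed instance that still touches sensitive data. This sketch escalates that case as its own conservative choice.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical inventory record for one Data Formulator instance."""
    installed: bool
    network_exposed: bool = False        # reachable beyond a single trusted workstation
    shared_service: bool = False         # used by more than one person
    connects_to_databases: bool = False
    sensitive_data: bool = False


def triage(d: Deployment) -> str:
    """Apply the three-way triage rule to a single deployment record."""
    if not d.installed:
        return "document absence and move on"
    if d.network_exposed or d.shared_service or d.connects_to_databases:
        return "urgent"
    if not d.sensitive_data:
        return "patch and tighten defaults"
    # Not covered by the stated rule: local and unexposed, but holding sensitive data.
    # Escalating it is an assumption of this sketch, not part of the advisory.
    return "urgent"
```

Keeping the rule as code rather than as a judgment made per ticket means the uncovered case is visible and gets decided once.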

The dangerous cases are the ones nobody owns. A lab VM created for a demo. A shared data-science box with broad credentials. A container launched by a team that has since reorganized. A cloud development environment reachable by collaborators. These are the environments where a “niche” RCE becomes an incident because the software’s social lifecycle outlasted its technical review.

Vendor advisories cannot know your topology. They can tell you a product is vulnerable. They cannot tell you whether a forgotten pilot is sitting one hop from crown-jewel data.

The Practical Reading of CVE-2026-41094 Is Narrow but Serious

CVE-2026-41094 should not be inflated into a Windows-wide emergency. It should also not be dismissed because Data Formulator is not a default enterprise fixture. The right reading is narrower and more serious: Microsoft has acknowledged a remote code execution vulnerability in an AI-powered data visualization tool that some organizations may have adopted faster than they inventoried it.

For administrators and security teams, the concrete response is refreshingly direct:

- Find every Data Formulator installation, including Python virtual environments, cloned GitHub repositories, containers, Codespaces, lab servers, and shared analyst workstations.

- Update affected installations through the official project or package channel as soon as fixed versions are available.

- Remove or firewall any Data Formulator instance that is reachable beyond the user or team that actually needs it.

- Review database credentials, API keys, environment files, and mounted directories available to any vulnerable deployment.

- Treat shared AI data-analysis tools as application servers, not as harmless desktop utilities.

- Record the ownership of any remaining deployment so the next advisory does not start with a scavenger hunt.
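The last item can be as lightweight as a shared JSON file, provided something checks it. The sketch below flags register entries that are missing an owner or other required fields, and entries that have not been reviewed recently. The field names and the 90-day window are hypothetical choices, not a standard.

```python
import json
from datetime import date

# Hypothetical minimal register schema: one JSON object per deployment.
REQUIRED = ("host", "path", "version", "owner", "exposure", "last_reviewed")


def register_gaps(register_json: str, today: date, max_age_days: int = 90) -> list:
    """List deployments with missing required fields or a stale last_reviewed date (ISO format)."""
    problems = []
    for entry in json.loads(register_json):
        label = f'{entry.get("host", "?")}:{entry.get("path", "?")}'
        missing = [field for field in REQUIRED if not entry.get(field)]
        if missing:
            problems.append(f"{label} missing {', '.join(missing)}")
            continue
        age = (today - date.fromisoformat(entry["last_reviewed"])).days
        if age > max_age_days:
            problems.append(f"{label} last reviewed {age} days ago")
    return problems
```

An entry with an empty `owner` is exactly the “nobody owns it” case described earlier, so it is reported before any staleness check.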

Source: MSRC Security Update Guide - Microsoft Security Response Center