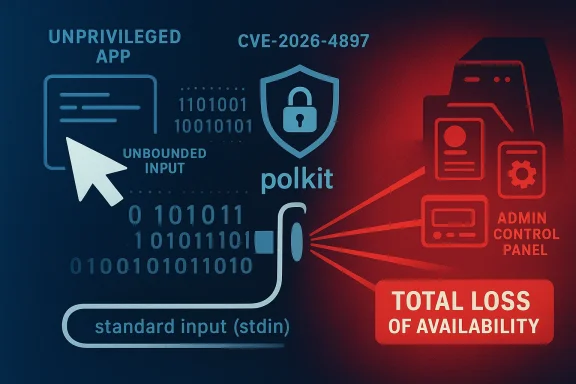

CVE-2026-4897 in polkit is a reminder that not every serious security issue is about code execution or privilege escalation; sometimes, the simplest attack is still the most disruptive. Microsoft’s update guide characterizes the flaw as a denial of service via unbounded input processing through standard input, with the potential for a total loss of availability in the impacted component. That means an attacker may not need to break into the system at all to cause real harm: if they can trigger the vulnerable path repeatedly, they may be able to keep the affected service down or make recovery difficult enough to matter operationally.

The description also signals why this matters beyond a narrow Linux-packaging bug. polkit is one of those low-level, quietly essential components that many desktop and server environments depend on for authorization workflows, service control, and integration with system management tools. When a component like that becomes unavailable, the effects can ripple outward into logins, administrative actions, device management, and automated infrastructure tasks.

Background

PolicyKit, or polkit, sits in the middle layer between unprivileged applications and privileged actions. It is not usually the thing users think about first, but it often decides whether a desktop app may mount a drive, whether a service manager may perform a control operation, or whether a system administration request should proceed. That makes it both highly leveraged and highly sensitive to reliability issues.

This is not the first time polkit has been associated with high-impact security problems. The project has a long history of vulnerabilities ranging from authentication bypasses to local privilege escalation and service instability. In practice, that means operators have learned to treat polkit updates as important even when the headline looks “only” like a DoS issue, because a crash or hang in the authorization stack can still take core functions offline.

Microsoft’s own vulnerability taxonomy underscores why availability bugs can be severe. In its MSRC guidance, Microsoft describes denial of service as potentially causing a total loss of availability or a serious partial loss that has direct operational consequences. That framing is useful here because the CVE-2026-4897 description is not simply “a crash”; it is an availability failure that can become sustained or persistent if the attacker can keep feeding the trigger condition.

The specific wording about unbounded input processing through standard input is important. It suggests the vulnerable code path likely consumes attacker-controlled or attacker-influenced input without enforcing a practical upper limit, which is a classic recipe for resource exhaustion, runaway parsing, or a crash loop. The fact that standard input is involved also points to a component that may be reachable in less obvious ways than a network service, which can make the incident response picture more complicated than a typical remote exploit.

In the broader Linux ecosystem, availability bugs in authorization and system-management components tend to have outsized consequences because they often sit on critical paths. Even if a desktop remains technically booted, users may be blocked from managing devices, mounting media, or invoking privileged operations. On servers, especially those using polkit-based tooling, the impact can be more operationally serious because orchestration and automation may depend on the affected component.

Overview

At a high level, the new CVE is a classic example of why input validation is never just a code-quality issue. If a process accepts unbounded data and does expensive work on it, the attacker’s job becomes one of amplification: supply input that drives the parser, allocator, or state machine into pathological behavior until the service no longer responds normally. In a component like polkit, that can translate into a denial of administrative capability, not just a simple application crash.

The wording used by Microsoft also hints at a likely local or low-privilege attack surface rather than a broad internet-facing one. That does not make the issue trivial. Local denial-of-service vulnerabilities can still be serious in multi-user environments, shared workstations, container hosts, and enterprise fleets where the goal is often to preserve service continuity more than to protect a single daemon.

Why availability bugs in system services matter

A vulnerable service can fail in several distinct ways. It might exit cleanly, leak memory until it is killed, enter a busy loop, or become wedged in a state where requests queue up but never complete. From an operator’s point of view, all of those are bad, because the practical result is the same: users and dependent services cannot get work done.

Availability failures are especially dangerous when they affect components that are called often or relied upon implicitly. If polkit is unavailable, nearby tools may not necessarily print a neat, actionable error; some will hang, retry, or degrade in ways that are hard to diagnose quickly. That increases mean time to recovery, which is exactly what attackers often want.

The MSRC definition of denial of service also matters because it explicitly includes cases where the attacker does not need to take the whole machine down to create serious impact. A service that blocks new authorization requests, for example, can be just as disruptive as one that crashes entirely if it is the gatekeeper for admin workflows.
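The difference between a service that has failed outright and one that is wedged can be made concrete with a small probe. The sketch below is illustrative only: `probe`, its outcome labels, and the timeout value are hypothetical choices, and the health-check command is whatever an operator decides to run. Nothing here is part of polkit itself.

```python
import subprocess
import sys


def probe(cmd, timeout_seconds=5.0):
    """Run a health-check command and classify the outcome.

    Returns "ok", "failed", "hung", or "unavailable". The labels are
    hypothetical names chosen for this sketch.
    """
    try:
        result = subprocess.run(
            cmd,
            stdin=subprocess.DEVNULL,
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
            timeout=timeout_seconds,
        )
    except subprocess.TimeoutExpired:
        # The command never finished: the "wedged" case, which callers
        # without a timeout would experience as an indefinite hang.
        return "hung"
    except OSError:
        # The command could not be started at all (for example, not installed).
        return "unavailable"
    return "ok" if result.returncode == 0 else "failed"


if __name__ == "__main__":
    # Stand-in command so the sketch runs anywhere; replace with a real check.
    print(probe([sys.executable, "-c", "pass"]))
```

One possible real-world command is `systemctl is-active --quiet polkit.service`, assuming that is the unit name on your distribution. Note that such a check only reports whether the unit is active; a daemon that is running but no longer answering requests would need a probe that exercises an actual authorization request.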

How the Vulnerability Likely Works

The phrase unbounded input processing through standard input is a strong clue about the bug class, even though Microsoft’s page does not expose implementation details. In many real-world cases, this points to a parser or helper routine that keeps consuming bytes without a cap, perhaps building internal structures or repeatedly reallocating memory until the process becomes unstable. The result may be exhaustion, unexpected recursion depth, or failure in a downstream dependency.

Because the vulnerable path is said to be through standard input, one plausible pattern is that some helper or service mode reads from stdin as part of normal operation or a fallback workflow. If the code assumes that input will be small, trusted, or well-formed, an attacker can sometimes force the process into a worst-case path simply by supplying an unusually large or specially shaped payload.

The standard input angle

Standard input issues can be deceptively broad. A daemon, a helper binary, or a test-oriented subcommand may all accept stdin in ways that are not obvious from the outside. If those paths are reachable by a local user or by another service, the vulnerability may be more accessible than the surface description suggests.

This also means defenders should avoid assuming that “it’s not network-facing” equals “it’s not urgent.” Attackers regularly chain low-privilege local actions with resource exhaustion to knock over high-value components. On shared systems, a determined user may not need elevated rights to make life difficult for everyone else.

Unbounded processing as a failure mode

“Unbounded” is the operative word here. Security bugs often arise when code trusts that data will arrive in manageable chunks, but attackers deliberately violate that assumption. Once a parser or reader keeps iterating without limits, the process can chew through CPU, memory, file descriptors, or stack space until it fails.

In the worst case, the vulnerability can become persistent if the process restarts into the same condition or if every new attempt to initialize the component immediately encounters the same malicious input. That turns an otherwise transient crash into an operational incident that requires deliberate cleanup, patching, or configuration changes.
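To illustrate the general defensive pattern (not the actual polkit code or its fix, neither of which is described in the advisory text), here is a minimal Python sketch of a reader that enforces an upper limit on input. The names `read_bounded` and `InputTooLarge` and the 64 KiB default are assumptions made for this example.

```python
import io
import sys


class InputTooLarge(Exception):
    """Raised when a stream exceeds the configured byte limit."""


def read_bounded(stream, max_bytes=64 * 1024, chunk_size=4096):
    """Read a binary stream to EOF, refusing to buffer more than max_bytes.

    The limit and chunk size are illustrative defaults, not values taken
    from any real component.
    """
    chunks = []
    total = 0
    while True:
        # Request at most one byte beyond the remaining budget, so an
        # oversized input is detected without buffering all of it.
        chunk = stream.read(min(chunk_size, max_bytes - total + 1))
        if not chunk:
            break
        total += len(chunk)
        if total > max_bytes:
            raise InputTooLarge(f"input exceeds {max_bytes} bytes")
        chunks.append(chunk)
    return b"".join(chunks)


if __name__ == "__main__":
    try:
        data = read_bounded(sys.stdin.buffer)
        print(f"accepted {len(data)} bytes")
    except InputTooLarge as exc:
        # Fail fast and cleanly instead of exhausting memory.
        sys.exit(f"rejected: {exc}")
```

The design choice is to fail early with a clear error rather than keep allocating. A byte cap addresses memory growth only; a parser that does expensive work per byte would also need limits on nesting depth or processing time.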

Impact on Linux Deployments

For desktop Linux environments, polkit issues frequently surface as problems users can see immediately. Authentication prompts may fail, device operations may stall, and normal desktop tasks can become unexpectedly blocked. Even when the system is not fully dead, the user experience can feel like a partial outage.

For servers and headless systems, the consequences can be harsher because polkit often acts as a policy broker for administrative tooling. If a management stack depends on it, failures can interfere with service configuration, storage operations, or remote administration workflows. In an enterprise, that kind of disruption can quickly become expensive.

Consumer versus enterprise exposure

Consumer systems are usually at lower aggregate risk, but the issue can still be highly visible. A desktop user may only experience a failed operation or a frozen control panel, yet that can still be enough to undermine trust in the platform.

Enterprise systems are a different story. In managed fleets, even a narrow availability bug can cascade through orchestration tools, package management, or device policy enforcement. When a central control component goes down, everything that depends on it starts to slow down too.

The real enterprise concern is not only the immediate outage but also the operational drag. Help desks field more tickets, remote management becomes less reliable, and administrators may waste time distinguishing a vulnerability-triggered outage from unrelated service instability. That is why DoS advisories in core infrastructure software deserve serious treatment.

Severity and Risk Framing

The text supplied by Microsoft is unusually blunt for a denial-of-service issue: it describes total loss of availability in the impacted component. That phrasing tells security teams not to dismiss the CVE as a minor nuisance. In operational terms, a control-plane outage can be as damaging as a breach if it halts business-critical activity.

Still, severity is contextual. A local DoS bug in polkit may be less dramatic than a remotely exploitable privilege escalation, but it is often easier to trigger repeatedly and harder to ignore in production. A malicious insider, a compromised local account, or an untrusted workload on a shared host may all be enough to turn the bug into a real incident.

What “total loss of availability” means in practice

A total outage does not always mean the entire machine is unreachable. It can also mean the affected component cannot serve requests, cannot restart cleanly, or cannot complete the tasks it was built to perform. In a component as central as polkit, that distinction may be academic to the person trying to administer the system.

The more important question is whether the loss persists after the attack stops. Microsoft’s language explicitly covers both sustained and persistent availability loss, which is significant because persistent conditions are often more damaging than a simple crash. Recovery requires not just ending the attack, but also restoring state, clearing malformed inputs, or applying a patch.

Why operators should care even without RCE

Some teams still mentally rank vulnerabilities by whether they lead to code execution. That is an outdated habit. A well-timed denial-of-service attack against authorization infrastructure can disrupt patching, orchestration, and incident response, making it much harder to contain other threats.

In practical terms, this means security and operations teams should treat CVE-2026-4897 as a maintenance priority, not a theoretical edge case. Reliability bugs become security bugs very quickly when they hit privileged system plumbing.

Patch and Mitigation Strategy

The first recommendation is straightforward: apply the vendor fix as soon as it is available for your distribution. For a component like polkit, downstream vendors often backport fixes rather than shipping the exact upstream version, so the relevant action is not necessarily “upgrade to the latest upstream release” but “install the patched package from your platform vendor.”

Where immediate patching is not possible, teams should reduce exposure by limiting who can reach the vulnerable input path and by monitoring for repeated crashes or abnormal resource use. Because the issue involves unbounded processing, even small test probes may show up as disproportionate CPU or memory spikes before a full outage occurs.

Practical defensive steps

- Identify which hosts actually use polkit in a meaningful way, rather than assuming every Linux machine is equally exposed.

- Check whether the affected package is present in desktop, server, container-host, or appliance images.

- Prioritize systems that support multiple users or remote administration.

- Watch logs for repeated daemon failures, restarts, or abnormal stdin-related errors.

- Roll out the patch in a staged manner if your fleet is large, but do not delay remediation unnecessarily.

- Verify that your configuration management and monitoring systems can detect polkit health degradation quickly.
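The prioritization steps above can be sketched as a simple ordering over inventory data. This is a hypothetical illustration: the `Host` fields and the scoring rule are assumptions invented for this example, and a real fleet would draw these attributes from its own asset inventory.

```python
from dataclasses import dataclass


@dataclass
class Host:
    # Hypothetical inventory fields, assumed for this sketch.
    name: str
    has_polkit: bool
    multi_user: bool
    remote_admin: bool


def patch_order(hosts):
    """Return hosts that ship polkit, most exposed first.

    Exposure is scored as one point each for multi-user access and
    remote administration; ties keep their original inventory order.
    """
    exposed = [h for h in hosts if h.has_polkit]
    return sorted(
        exposed,
        key=lambda h: int(h.multi_user) + int(h.remote_admin),
        reverse=True,
    )


if __name__ == "__main__":
    fleet = [
        Host("kiosk-01", has_polkit=True, multi_user=False, remote_admin=False),
        Host("shared-ws", has_polkit=True, multi_user=True, remote_admin=True),
        Host("build-box", has_polkit=False, multi_user=True, remote_admin=True),
        Host("admin-srv", has_polkit=True, multi_user=False, remote_admin=True),
    ]
    for host in patch_order(fleet):
        print(host.name)
```

Hosts without the package drop out entirely, which reflects the first step in the list: not every Linux machine is equally exposed.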

Monitoring and detection

Because this is an availability issue, detection may be more about symptoms than signatures. Repeated restarts, sudden CPU spikes, abnormal memory growth, and authorization failures are all useful clues. If a local actor is intentionally probing the bug, you may also see a pattern of failed actions followed by service instability.

Security teams should also remember that some DoS attacks are intentionally noisy while others are designed to look like flaky infrastructure. That makes correlated telemetry valuable. A single crash is a nuisance; a crash pattern is a clue.
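The "crash pattern" idea can be expressed as a sliding-window check over restart timestamps. The sketch below is a minimal illustration under stated assumptions: the function name, the threshold of three restarts, and the ten-minute window are arbitrary example values, and the timestamps are assumed to come from whatever log or monitoring source a team already has.

```python
from collections import deque


def has_crash_pattern(restart_times, threshold=3, window_seconds=600):
    """Return True if at least `threshold` restarts occur within any
    span of `window_seconds`.

    `restart_times` is an iterable of timestamps in seconds. The default
    threshold and window are illustrative, not recommended values.
    """
    recent = deque()
    for t in sorted(restart_times):
        recent.append(t)
        # Drop restarts that have fallen out of the window ending at t.
        while t - recent[0] > window_seconds:
            recent.popleft()
        if len(recent) >= threshold:
            return True
    return False


if __name__ == "__main__":
    print(has_crash_pattern([0]))                      # one isolated crash
    print(has_crash_pattern([0, 5000, 5100, 5200]))    # a cluster of restarts
```

Tuning matters here: a threshold that is too low turns routine restarts into alerts, while a window that is too short can miss a slow, deliberately quiet probe.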

Historical Context

The wider history of polkit vulnerabilities explains why this announcement should not be treated as an isolated oddity. Polkit has repeatedly been at the center of issues involving local privilege escalation, authentication bypass, and service destabilization. That does not mean the project is uniquely flawed; it means the component is central enough that small mistakes can have large consequences.

Microsoft’s own security writing on denial-of-service bugs provides a useful lens here. In a 2011 MSRC post about a DNS vulnerability, Microsoft explained that a flaw that could theoretically enable code execution was far more likely to produce a denial-of-service condition because of the nature of the bug and platform mitigations. That is a reminder that exploitability and impact do not always line up neatly, and that availability loss can be the dominant real-world outcome even when the headline sounds less dramatic. (msrc.microsoft.com)

Why polkit keeps showing up in advisories

The answer is partly architectural. polkit sits at the junction of policy, privilege, and inter-process communication, which means it must handle untrusted requests while making decisions that affect the entire system. That is an inherently risky role.

It is also partly social: bugs in central infrastructure tend to be noticed quickly because many products and distributions depend on them. That visibility is good for defenders, but it also means security teams should expect periodic polkit advisories and build update workflows accordingly.

Lessons from prior availability issues

Past incidents show that service availability bugs can be operationally severe even when they do not compromise data. In real deployments, administrators often care most about whether a system remains controllable. If a security issue breaks the control path, it can turn a minor technical defect into a major business interruption.

That is the practical lesson for CVE-2026-4897. The absence of confidentiality or integrity impact does not reduce the urgency if the affected service is deeply woven into system administration.

Enterprise Response Planning

Enterprises should respond to CVE-2026-4897 as part of a broader resilience exercise, not just a patch ticket. If polkit is used across desktop fleets, server images, kiosks, or embedded Linux systems, teams need to know which environments rely on it most heavily and which ones can tolerate a brief service interruption. That inventory work is often the difference between a calm remediation and a messy one.

Operations teams should also make sure their incident playbooks distinguish between a local daemon fault and a wider system compromise. If polkit is crashing or hanging, responders need to know whether the cause is configuration drift, malformed input, resource exhaustion, or a malicious local user. Clear ownership between endpoint, infrastructure, and security teams matters more than usual in cases like this.

Segmentation and least privilege

One of the best defenses against local DoS abuse is to reduce the number of untrusted actors sharing the same privileged environment. Strong segmentation, minimal shell access, and restricted interactive logins can all lower exposure. That will not eliminate the bug, but it can make exploitation much less convenient.

Another useful practice is to keep system management paths separate from general-purpose user workloads. If untrusted code can run in the same trust boundary as polkit-dependent services, a denial-of-service bug becomes much easier to weaponize. Isolation is not just a cloud best practice; it is a security control for local system components too.

Operational readiness checklist

- Confirm whether your Linux estate uses a distribution package that includes the fix.

- Test the updated package on a representative subset of systems.

- Validate that polkit-dependent workflows still function after patching.

- Add alerting for repeated daemon restarts or authorization failures.

- Review which automation tools depend on the component.

- Update runbooks so responders know the expected recovery steps.

- Document any temporary mitigations used before patch deployment.

Strengths and Opportunities

The good news is that this class of vulnerability is usually among the most straightforward to remediate once it is identified. A targeted patch can often remove the unbounded processing path without requiring architectural change, and vendors are typically quick to backport such fixes. There is also an opportunity for organizations to tighten their monitoring around a component that is often treated as background plumbing until something goes wrong.

- Patchability is usually strong because the defect is likely localized to one input-processing path.

- Detection can be improved with simple health and restart monitoring.

- Enterprise hardening can reduce who is able to trigger the vulnerable flow.

- Incident response maturity can improve if teams add polkit to their critical-service watchlists.

- Package management hygiene benefits because this is the kind of issue that rewards disciplined update cycles.

- Isolation practices become more valuable once a central service is known to have local DoS exposure.

- User awareness can improve as admins stop assuming only RCE bugs are operationally important.

Risks and Concerns

The main concern is that the vulnerability’s apparent simplicity may encourage underestimation. A local DoS in a core authorization component can still create wide outage conditions, especially on shared or enterprise-managed systems. Another concern is that unbounded input bugs often hide in less visible code paths, which means patch coverage may be incomplete if administrators only update the most obvious packages.

- Availability impact may be broader than expected on systems with many dependent services.

- Local attackers could exploit shared environments where untrusted users have shell or workload access.

- Recovery may be harder if the component enters a repeat-crash state.

- Package fragmentation across distributions could leave some fleets unpatched longer than expected.

- Monitoring gaps may prevent teams from recognizing polkit as the source of the outage.

- Operational blind spots can emerge if automation relies on the affected path.

- False reassurance from “non-RCE” labeling may slow remediation unnecessarily.

Looking Ahead

The broader lesson from CVE-2026-4897 is that system software is still vulnerable to old-fashioned resource exhaustion bugs, even in mature components that have been audited for years. As distributions continue to centralize policy, authorization, and session management, the blast radius of a single input-handling mistake remains high. That makes defensive engineering, code review, and fast patch distribution more important, not less.

For defenders, the immediate priority is simple: identify exposure, install the fix, and verify that service behavior returns to normal. The longer-term lesson is to treat availability as a first-class security property, especially for system services that sit on the critical path of administrative control. If a local user can take down the control plane, the organization has a security problem even if no data is stolen.

- Track vendor advisories for patched polkit builds across your supported distributions.

- Audit which endpoints and servers actually rely on the component for day-to-day operations.

- Expand crash and restart monitoring to include authorization services.

- Test business-critical workflows after remediation, not just service startup.

- Reassess whether untrusted workloads share the same trust boundary as system management tools.

Source: MSRC Security Update Guide - Microsoft Security Response Center
