The AI era is already changing who we hire, how we train, and what work is worth—and the choice facing business leaders and policymakers is not whether to adopt AI, but how to design that adoption so it sustains human workforces rather than hollowing them out.

Source: chinadailyasia.com https://www.chinadailyasia.com/article/628641/

Background

AI-driven productivity gains are real, rapid, and unevenly distributed. Corporations and capital markets have placed enormous bets on AI: hardware, models, and applications are concentrated in a handful of firms even as startups and national champions race to commercialize agentic AI systems that can coordinate multi-step workflows. These shifts show up in equity rallies for AI‑native stocks and in municipal strategies that embed AI into city planning and public services. But technology alone does not determine outcomes in labour markets. Empirical analyses and modelling from major research institutions indicate that a very large share of work hours could be automated or altered by AI within a decade — creating both opportunities to boost productivity and real risks of displacement for millions of workers. The policy and corporate question is how to convert productivity into broadly shared gains rather than concentrated returns and social dislocation.

Why this moment matters: scope and speed of change

Automation potential — the numbers that shape strategy

Recent, widely cited analyses suggest that the potential for automation with current and near‑term AI tools is substantial. McKinsey’s modelling finds that, under a faster adoption scenario, up to about 30% of hours worked in advanced economies could be automated by 2030, a change that would imply millions of occupational transitions and large-scale task redesign. The same body of research estimates that millions of workers will need to change occupations or significantly reskill to match new demand.

An independent, more recent labour‑simulation exercise (Project Iceberg from MIT and partners) found that current AI systems are already cost‑competitive with tasks equal to roughly 11.7% of U.S. payroll (about $1.2 trillion in wages). That figure measures capability; it is not a deterministic forecast of job losses. Both types of studies agree on the central point: capability does not equal inevitability, but capability dramatically reshapes employer choices and policy.

Agentic AI vs. narrow automation

This wave differs from past automation in two critical ways:

- Agentic AI can perform multi‑step tasks end to end (not just one narrow step), collapsing workflows that previously required multiple people. This accelerates the rate at which certain job definitions become obsolete.

- AI expands automation beyond manual and routine tasks into cognitive, creative, and managerial areas — raising exposure for high‑skill white‑collar roles as well as routine roles. OECD analysis and other international work show the geographic and occupational distribution is uneven: urban, highly connected regions and many high‑skill roles are now significantly exposed.

The human cost if we get design wrong

If AI adoption is left solely to firms, three systemic harms are likely:

- Mass displacement of routine and mid‑level roles without adequate transitions, eroding livelihoods and local economies dependent on particular employers or industries. Empirical trackers and consultancy reports indicate that layoffs and reorganizations in the tech sector and beyond increasingly cite automation and AI as proximate reasons.

- Erosion of apprenticeship pathways. When entry‑level and training roles are automated away, firms lose the mechanisms that produce future managers and domain experts. That creates long‑term talent pipeline damage that is expensive and slow to repair.

- Concentration of risk and vendor lock‑in. Heavy reliance on a few hyperscalers for models and runtime concentrates operational and systemic risk—outages, supply constraints, and vendor disputes have outsized labor market consequences.

What responsible adoption looks like: three pillars

To sustain human workforces, the strategy must be multi‑dimensional. Successful organizations and jurisdictions combine technology, governance, and human capital investment.

1) Task‑level workforce design and protected learning pathways

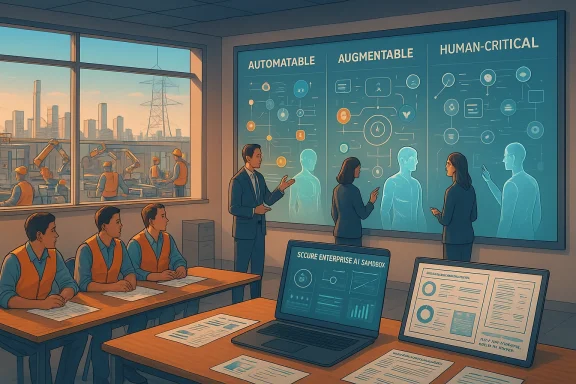

- Map work at the task level, not only at the role level. Label tasks as automatable, augmentable, or human‑critical and preserve training‑rich tasks for junior staff. This granular mapping reduces false positives (declaring whole jobs obsolete) and preserves pathways for skill accumulation.

- Create paid apprenticeship and rotational programs that replace the tacit learning opportunities lost when routine tasks are automated. Germany’s dual‑education model and industrial apprenticeship programs show stronger on‑the‑job readiness through structured rotations; scaled versions for AI‑era skills are already being piloted.

- Tie microcredentials and learning achievements to promotion and compensation so that reskilling is not merely cosmetic but career‑relevant. Private‑sector consortia and major vendors are committing to scale credentialing; policy should ensure portability and quality assurance.
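The task‑level mapping described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the `Task` record, the `training_rich` flag, and the sample role are all hypothetical names and data introduced here to show how labelling tasks (rather than whole roles) makes the automatable share and the training‑rich tasks visible.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class TaskLabel(Enum):
    AUTOMATABLE = "automatable"
    AUGMENTABLE = "augmentable"
    HUMAN_CRITICAL = "human-critical"


@dataclass(frozen=True)
class Task:
    name: str
    label: TaskLabel
    weekly_hours: float
    training_rich: bool = False  # hypothetical flag: the task builds junior skills


def summarize_role(tasks):
    """Return the share of weekly hours that falls under each label."""
    total = sum(t.weekly_hours for t in tasks)
    if total == 0:
        return {label.value: 0.0 for label in TaskLabel}
    hours = Counter()
    for t in tasks:
        hours[t.label] += t.weekly_hours
    return {label.value: hours[label] / total for label in TaskLabel}


def tasks_to_protect(tasks):
    """List training-rich tasks, which stay with junior staff even if automatable."""
    return [t.name for t in tasks if t.training_rich]


# Illustrative data only; not taken from any real role audit.
junior_analyst = [
    Task("reconcile invoices", TaskLabel.AUTOMATABLE, 10, training_rich=True),
    Task("draft client summaries", TaskLabel.AUGMENTABLE, 20),
    Task("present findings to clients", TaskLabel.HUMAN_CRITICAL, 10),
]

shares = summarize_role(junior_analyst)
protected = tasks_to_protect(junior_analyst)
print(shares)
print(protected)
```

In this sample role only a quarter of the hours are labelled automatable, and the one automatable task is also flagged as training‑rich, which is the kind of case where declaring the whole job obsolete would be a false positive.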

2) Governance, procurement and human‑in‑the‑loop controls

- Build AI sandboxes and enterprise instances to prevent accidental data leakage and to let employees learn safely without exposing corporate IP to consumer models. Mandate audit trails and human sign‑off thresholds for high‑impact decisions (hiring, promotions, clinical decisions, contract signings).

- Negotiate vendor contracts that require exportable logs, SLAs for incident response, and explicit clauses on model provenance and retraining. These procurement terms protect operational continuity and clarify who is accountable when automation fails.

- Design human‑in‑the‑loop checkpoints proportionate to risk. Low‑risk summarization can be fully automated; high‑risk clinical or legal outputs must have mandatory human verification. This differentiated approach reduces both costs and catastrophic error risk.
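A risk‑proportionate checkpoint with an audit trail might look like the following sketch. The risk table, the `Decision` record, and the `release` function are assumptions made for illustration; a real deployment would draw its tiers from its own policy and write the log to durable storage rather than an in‑memory list.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Risk(IntEnum):
    LOW = 1
    HIGH = 3


# Hypothetical policy table mapping decision types to risk tiers.
RISK_BY_DECISION = {
    "summarization": Risk.LOW,
    "hiring": Risk.HIGH,
    "clinical": Risk.HIGH,
    "contract_signing": Risk.HIGH,
}


@dataclass
class Decision:
    kind: str
    ai_output: str
    approved_by: Optional[str] = None  # name of the human reviewer, if any


def requires_sign_off(decision):
    # Unknown decision types default to HIGH so that policy gaps fail safe.
    return RISK_BY_DECISION.get(decision.kind, Risk.HIGH) >= Risk.HIGH


def release(decision, audit_log):
    """Release the output only if policy allows it; always record the attempt."""
    needs_human = requires_sign_off(decision)
    released = (not needs_human) or decision.approved_by is not None
    audit_log.append(
        {
            "kind": decision.kind,
            "needs_human": needs_human,
            "approved_by": decision.approved_by,
            "released": released,
        }
    )
    return released


audit_log = []
print(release(Decision("summarization", "meeting notes"), audit_log))
print(release(Decision("hiring", "shortlist"), audit_log))
print(release(Decision("hiring", "shortlist", approved_by="reviewer"), audit_log))
```

The design choice worth noting is the default: a decision type missing from the table is treated as high risk, so a forgotten entry blocks release instead of silently bypassing review.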

3) Public policy levers that create shared incentives

- Tie a portion of productivity windfalls to workforce transition funds. Whether through targeted levies, an updated corporate tax structure, or an AI/compute tax as proposed in various policy debates, public revenues can underwrite reskilling, transitional income support, and local economic regeneration. China Daily’s piece argues for similar redistributive mechanisms in the absence of voluntary corporate commitments.

- Scale public–private partnerships to create recognized, transferable microcredentials and apprenticeship placements that align employer demand with curriculum design. Industry coalitions (including major cloud and software firms) have committed to massive upskilling initiatives; governments should standardize quality and portability.

- Mandate transparency for AI‑related workforce reductions. When firms restructure citing automation, public disclosure of retraining, redeployment, and severance commitments creates accountability and reduces the social shock to communities. Early indicators, like the number of job families with AI exposure audits and the placement rate from reskilling programs, should be reportable.

Corporate playbook: practical steps for IT, HR and leaders

Organizations that have already begun responsible transitions follow a clear playbook. This is not a distant theory; many firms are running pilot programs today.

- Map tasks, not only roles. Identify which activities are safe to automate and which are essential for institutional memory.

- Fund structured learning time and micro‑credentials tied to promotion criteria.

- Create internal mobility windows: paid, guaranteed trial rotations into AI‑adjacent roles.

- Build secure enterprise AI sandboxes and prohibit uncontrolled use of consumer models for sensitive data.

- Embed governance: audit trails, human sign‑offs for critical outputs, and continuous bias testing.

- Protect apprenticeship and mentorship sequences during transitions, ensuring senior‑junior pairing continues even as tools change.

What governments should prioritize now

Policymakers need to move beyond framing debates and toward operational levers that preserve mobility and opportunity.

- Fund dual‑education and apprenticeship expansions, with guaranteed workplace rotations and employer placement commitments.

- Create standards and certifications for AI oversight roles (model auditor, data steward, agent manager) so employers can identify qualified professionals and workers carry portable credentials.

- Support regional transitions through infrastructure, because nearshoring or new AI facilities are only valuable if energy, grid reliability, and connectivity exist.

- Require disclosure of automation‑related workforce changes and set minimum redeployment or retraining commitments for employers above threshold sizes.

Who wins and who faces the greatest risk

- At risk (near term): routine back‑office functions, scripted contact‑centre roles, mid‑level repeatable technical tasks, and many entry‑level positions that historically served as apprenticeship pathways.

- Likely to grow: roles that design, audit, deploy and govern AI — MLOps, model productisation engineers, AI ethics/audit teams, solution engineers and AI supervisors. These roles require both technical skill and domain knowledge.

- Structural risk: apprenticeship erosion, compounded if reskilling programs are uneven or unpaid, which concentrates opportunities among workers who can access private training. International analyses warn of widening urban‑rural divides and gendered exposure to automation.

Evidence from pilots and early programs

Early public–private pilots provide instructive signals:

- Corporate microcredentials and partnerships with accreditation bodies compress training time and give employers verifiable skills evidence for hiring. Large tech and industrial firms have announced training targets that reach millions of workers over the coming decade. Governments should scale what works and ensure programs reach underrepresented groups. (investor.cisco.com)

- Firms that adopt Agent System of Record patterns (centralized registries for AI agents plus human‑in‑the‑loop governance) lower operational risk while enabling broader agent usage across teams. These enterprise patterns are emerging as best practice.

- Programs that measure outcomes, not adoption, deliver better accountability. Track quality metrics (error rates, rework), promotion rates of reskilled workers, and local placement statistics to ensure that training translates into employment.

Hard trade‑offs and real uncertainties

No single policy or corporate action guarantees success. Several claims circulating in public discourse deserve cautious treatment:

- Projections about exact job loss counts or precise percentages of job automation vary widely by methodology. Studies measure exposure, technical feasibility, or economic cost‑competitiveness, which are distinct metrics that produce different headlines. Treat headline numbers as directional and check underlying models before building policy on them.

- Some high‑profile predictions (including individual commentators forecasting mass displacement within a short time frame) are speculative; they should be presented alongside modelling assumptions and alternative scenarios. Where claims cannot be independently verified, label them as such.

- Energy, infrastructure and vendor concentration are non‑trivial constraints. Local grid capacity and data‑centre siting are practical prerequisites for large AI deployments; neglecting these can turn workforce investment into stranded capital.

A practical, phased 12‑month roadmap for organisations and governments

To avoid reactive, headline‑driven policy, measure what matters:

- Percentage of job families with completed AI exposure audits (goal: 100% for mission‑critical departments).

- Number of funded apprenticeships and placement rate within six months (goal: >80% placement for apprenticeships tied to AI‑exposed functions).

- Adoption rate of portable microcredentials for oversight roles (goal: create registries and employer signatories).

- Turnover and promotion rates for entry cohorts pre‑ and post‑reskilling (goal: maintain or improve historical promotion rates for juniors).

- Share of recruitment and HR decision models audited for fairness and data privacy (goal: annual independent audit).
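Several of the metrics above reduce to simple ratios checked against a target. The sketch below shows one way to compute such a scorecard; the `TransitionMetrics` fields and the sample figures are hypothetical, and only the thresholds (100% audit coverage, placement above 80%, promotion rates maintained or improved) come from the goals listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransitionMetrics:
    audited_job_families: int
    total_job_families: int
    apprentices_placed_6mo: int  # placed within six months of completion
    apprentices_completed: int
    junior_promotion_rate_before: float
    junior_promotion_rate_after: float


def ratio(numerator, denominator):
    """Divide with an explicit guard so an empty cohort is reported, not hidden."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return numerator / denominator


def scorecard(m):
    """Compare each metric with the goal stated in the list above."""
    return {
        "audit_coverage_complete": ratio(m.audited_job_families, m.total_job_families) >= 1.0,
        "placement_above_80pct": ratio(m.apprentices_placed_6mo, m.apprentices_completed) > 0.80,
        "junior_promotion_maintained": m.junior_promotion_rate_after >= m.junior_promotion_rate_before,
    }


# Illustrative figures only; not drawn from any reported program.
example = TransitionMetrics(
    audited_job_families=12,
    total_job_families=12,
    apprentices_placed_6mo=41,
    apprentices_completed=50,
    junior_promotion_rate_before=0.18,
    junior_promotion_rate_after=0.20,
)
result = scorecard(example)
print(result)
```

Reporting pass or fail per goal, rather than a single blended score, keeps a strong placement rate from masking an incomplete audit.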

What individuals should do now

Workers and professionals have agency. The market will reward those who combine domain expertise with AI‑adjacent skills:

- Inventory transferable skills and map them to adjacent AI roles (domain expert + MLOps, prompt engineering + product design).

- Build demonstrable projects that show workflow automation, model evaluation, or cloud inference pipelines.

- Demand employer‑funded training and insist that reskilling is tied to career progression and protected time.

- Engage with industry consortia, local workforce boards, and community colleges to access validated, portable credentials.

Final analysis — a design problem, not an inevitability

AI’s potential to increase productivity is enormous and real; the economic upside is measurable, whether in enterprise returns or national GDP projections. But how those gains translate into broad prosperity or concentrated dislocation will depend on design choices made now by firms, governments and civil society. The practical path that sustains human workforces is clear in outline:

- Treat workforce transition as a design problem, not an accounting exercise.

- Invest in task‑level redesign and paid learning pathways that preserve apprenticeship value.

- Build procurement and governance guardrails so automation does not create brittle operational dependencies.

- Tie public policy and corporate practice to measurable outcomes that preserve mobility, inclusion and market dynamism.

Conclusion

AI will reshape the work we do, but it need not erase the human center of the economy. With clear metrics, funded transitions, governance that keeps humans in control of high‑stakes decisions, and a commitment to portable learning pathways, societies can steer AI adoption toward a future in which machines amplify human capacity rather than replace the social scaffolding that makes work a pathway to dignity and shared prosperity.
