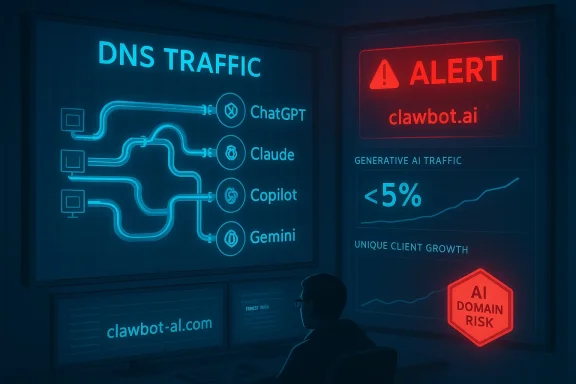

Cisco’s DNS snapshot from the Cisco Live EMEA show floor in Amsterdam showed what many security teams already suspected: generative AI is woven into everyday workflows, and DNS telemetry is one of the most reliable early-warning signals for both adoption trends and emerging risk. The incident that kicked off the investigation (two hosts resolving clawbot[.]ai, a domain flagged as malicious by threat intelligence) is a textbook example of how typo-squatting and agentic AI tooling combine into enterprise risk, and why network teams should treat AI-related domain resolutions as high-fidelity indicators of intent and exposure.

Background / Overview

DNS is the plumbing of the internet: before any client can reach a cloud-hosted AI service (whether ChatGPT, Claude, Copilot, Gemini, or a lesser-known agent runtime) it must first ask the network “where is that service?” That simple fact makes DNS telemetry an attractive, low-cost source of truth for analyzing AI usage patterns without decrypting traffic or breaking end-user privacy guarantees.

At Cisco Live EMEA, analysts used two complementary methods to quantify AI usage from DNS logs: (1) query DNS events categorized by a “Generative AI” classification to surface long-tail and emerging services, and (2) apply a curated lookup table mapping known AI domains to vendor/platform names and then aggregate counts and unique clients. The combination produced both breadth (discovering new, potentially suspicious domains) and depth (measuring per-platform adoption). Those methods, DNS enrichment plus curated lookups, are reproducible and scale to very large telemetry sets.

What the telemetry showed: adoption patterns and market share signals

ChatGPT remains dominant, but diversity is growing

Across the conference snapshot, DNS counts and unique client metrics indicated that ChatGPT dominated, with an order-of-magnitude lead in unique clients over its nearest competitors. Anthropic’s Claude and Microsoft Copilot were the second and third most-queried services, while Google Gemini and specialized or newer players such as xAI Grok, Mistral, and DeepSeek were visible in smaller volumes.

Multiple independent industry datasets and community telemetry point to a similar pattern: ChatGPT retains a large share of usage and mindshare, while competitors are closing gaps through product integrations and targeted enterprise features. Those independent observations accord with the conference DNS snapshot and illustrate the dual reality facing security teams: one dominant public platform, and an expanding constellation of second-tier vendors and specialist services.

Generative AI formed a small but meaningful slice of DNS traffic

The Cisco team estimated that DNS events categorized as Generative AI were under 5% of total DNS queries during the conference window. That number sounds small, but the real meaning is in the trend and context: even at a few percent of DNS activity, AI queries represent sensitive, high-value interactions (search, content creation, code assistance, and credential exchange) where risk is concentrated.

Because nearly every new connection begins with a DNS lookup, being a small fraction of total queries does not equate to low risk. A single malicious domain used by a piece of agentic malware or a typo-squat aimed at credential capture can easily become a high-value foothold. The telemetry snapshot therefore functions less as a volume argument and more as a prioritization signal for security teams.

The Claw/Clawbot incident: why typo‑squatting and agent runtimes are dangerous

The investigation began with a defensive alert: two endpoints resolved clawbot[.]ai, which threat intelligence marked malicious. The name pattern resembles legitimate AI agent tooling and suggests a likely typo-squat or impersonation aimed at users curious about agent runtimes. What makes this more than a curious footnote is the agentic model’s capability surface: many agent frameworks (including some community agent runtimes) are designed to download “skills,” interact with external systems, and, in some cases, use persistent credentials stored locally or in the browser.

Security advisories and public commentary have raised alarms about self-hosted agent runtimes that can run arbitrary community plugins or skills with little isolation. In real incidents, that capability has been abused to exfiltrate data, compromise API keys, or escalate access, which is precisely the scenario DNS monitoring is well suited to catch early. The security community has already started treating OpenClaw-style agent environments as untrusted execution contexts when run on general-purpose endpoints.

Methodology matters: how Cisco derived these insights (and how you can replicate them)

Two complementary approaches: classification + curated lookups

- Generative AI classification: use DNS enrichment that tags domains as “Generative AI” or equivalent category. This helps discover new domains and long‑tail services without an exhaustive maintained list.

- Curated lookup table: maintain a CSV of known vendor domains (chatgpt.com, chat.openai.com, api.openai.com, claude.ai, copilot.microsoft.com, gemini.google.com, etc.) and perform fast joins against DNS logs to collate platform-level metrics such as total requests and unique client hosts.

- Classification reveals emergent threats and unknown domains.

- Lookups provide fast aggregation and consistent platform naming for dashboards.
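The two approaches can be combined in a few lines. The following is a minimal Python sketch, not Cisco's implementation: the event field names (`query`, `client_ip`, `category`), the in-memory lookup table, and the parent-domain matching rule are all assumptions made for illustration.

```python
from collections import defaultdict

# Hypothetical lookup table: domain -> platform name (would normally be loaded from a CSV).
AI_DOMAIN_LOOKUP = {
    "openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
}


def platform_for(domain, lookup=AI_DOMAIN_LOOKUP):
    """Return the platform whose lookup entry equals the domain or is a parent of it."""
    labels = domain.lower().rstrip(".").split(".")
    # Try the full name first, then progressively shorter parent domains
    # (the bare top-level label is never tried on its own).
    for i in range(len(labels) - 1):
        candidate = ".".join(labels[i:])
        if candidate in lookup:
            return lookup[candidate]
    return None


def summarize(events, lookup=AI_DOMAIN_LOOKUP):
    """Aggregate per-platform metrics and collect long-tail Generative AI domains."""
    requests = defaultdict(int)
    clients = defaultdict(set)
    long_tail = set()
    for ev in events:
        platform = platform_for(ev["query"], lookup)
        if platform is not None:
            requests[platform] += 1
            clients[platform].add(ev["client_ip"])
        elif ev.get("category") == "Generative AI":
            # Classified as AI by enrichment, but absent from the curated table.
            long_tail.add(ev["query"].lower().rstrip("."))
    per_platform = {
        p: {"requests": requests[p], "unique_clients": len(clients[p])}
        for p in requests
    }
    return per_platform, long_tail
```

Calling `summarize(events)` on a list of event dictionaries returns the per-platform metrics for dashboards together with the set of unknown domains to review, which mirrors the breadth-versus-depth split described above.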

Practical Splunk patterns (conceptual)

While the exact production SPL used in Cisco’s environment wasn’t published in the snippet, the conceptual steps are straightforward and portable:

- Ingest DNS telemetry that includes at minimum: query name, timestamp, client IP, action/response, and any enrichment fields (security scores, content category).

- Run a daily stats aggregation:

  - stats count as dns_events, dc(client_ip) as unique_clients by dest_domain

- Join with a maintained lookup table mapping dest_domain to platform/vendor.

- Create timecharts to show platform trends, and compute AI-category events as a percentage of total DNS to reproduce ratios like the “<5%” snapshot.

- Flag domains that:

  - Are categorized as Generative AI but not in your lookup table (long tail)

  - Show spikes in unique client counts or repeated failed resolutions

  - Are flagged by threat intel feeds as malicious or suspicious
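For readers without a Splunk environment, the same steps can be approximated in plain Python. This is a sketch under stated assumptions: the field names `dest_domain`, `client_ip`, `category`, and `threat_intel` are hypothetical stand-ins for whatever your DNS enrichment emits, and the flagging covers only the long-tail and threat-intel conditions.

```python
from collections import Counter, defaultdict


def stats_by_domain(events):
    """Rough equivalent of: stats count as dns_events, dc(client_ip) as unique_clients by dest_domain."""
    counts = Counter()
    clients = defaultdict(set)
    for ev in events:
        domain = ev["dest_domain"]
        counts[domain] += 1
        clients[domain].add(ev["client_ip"])
    return {
        d: {"dns_events": counts[d], "unique_clients": len(clients[d])}
        for d in counts
    }


def ai_share_percent(events, ai_category="Generative AI"):
    """Percentage of all DNS events that carry the AI category tag."""
    if not events:
        return 0.0
    ai_events = sum(1 for ev in events if ev.get("category") == ai_category)
    return 100.0 * ai_events / len(events)


def flag_domains(events, known_domains, ai_category="Generative AI"):
    """Return {domain: [reasons]} for long-tail AI domains and threat-intel hits."""
    flags = defaultdict(set)
    for ev in events:
        domain = ev["dest_domain"]
        if ev.get("category") == ai_category and domain not in known_domains:
            flags[domain].add("long_tail")
        if ev.get("threat_intel") == "malicious":
            flags[domain].add("threat_intel")
    return {d: sorted(reasons) for d, reasons in flags.items()}
```

Running `ai_share_percent` over a day of events gives the kind of ratio behind the “<5%” figure, while `flag_domains` produces a review queue; spike detection on unique clients would need a time dimension that this sketch leaves out.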

Strengths of DNS‑based AI visibility

- High signal‑to‑noise for intent: DNS captures what a client tried to reach without decrypting content, which preserves privacy while revealing intent.

- Low operational cost: DNS logs are smaller and less sensitive than full packet captures or decrypted traffic, making long‑term retention easier.

- Early detection of suspicious domains: domains used for credential capture, command-and-control, or typo‑squatting appear in DNS before any successful data exfiltration.

- Platform‑agnostic: works across browsers, native apps, CLI tools, and SDKs — everything that resolves a hostname emits DNS.

Important limitations and sources of error (what DNS misses)

- Lack of payload visibility: DNS shows intent but not the content of what was sent or received. It can’t directly prove data exfiltration.

- Shared infrastructure and CNAME masking: Many AI vendors use CDN or cloud fronting; services can share upstream CNAMEs making attribution by domain tricky.

- Background telemetry and SDKs: Some AI SDKs perform frequent, automated resolution that inflates counts. Distinguish human‑driven queries from background SDK heartbeats.

- Private endpoints and IP-only connections: hosts that connect directly by IP address or through local proxies may never appear in the monitored DNS logs, producing false negatives.

- Time‑bounded snapshots: conference telemetry is useful for a snapshot but may not generalize to normal enterprise usage.

Risks unique to agentic and self‑hosted AI tooling

Agent runtimes that can download and execute community-contributed “skills” pose several compounded risks:

- Credential use and persistence: an agent running on an endpoint may use cached credentials (browser sessions, local tokens, or cloud SDK credentials) to act on behalf of the user with persistent access.

- Supply‑chain of skills: community skills are frequently unvetted code that may include exfiltration logic, cryptomining, or privileged operations.

- Escalation via automation: combined with scripting and local OS access, agents can automate lateral movement and manipulation of cloud resources.

- Impersonation / typo‑squatting: domains that mimic names of popular vendors can harvest credentials or trick users into installing malicious agent packages.
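The impersonation risk lends itself to a simple lookalike check on resolved names. The sketch below uses Python's standard `difflib.SequenceMatcher`; the list of protected names, the use of the second-level label, and the 0.5 threshold are assumptions chosen for illustration and would need tuning against real traffic to keep false positives manageable.

```python
from difflib import SequenceMatcher

# Hypothetical list of product names worth protecting against lookalikes.
KNOWN_NAMES = ["openclaw", "chatgpt", "claude", "copilot", "gemini"]


def second_level_label(domain):
    """Naive heuristic: take the label just left of the top-level domain."""
    labels = domain.lower().rstrip(".").split(".")
    return labels[-2] if len(labels) >= 2 else labels[0]


def lookalikes(domain, known=KNOWN_NAMES, threshold=0.5):
    """Return (known_name, similarity) pairs that resemble the domain's label without matching it exactly."""
    label = second_level_label(domain)
    hits = []
    for name in known:
        if label == name:
            continue  # exact matches are the real thing, not lookalikes
        ratio = SequenceMatcher(None, label, name).ratio()
        if ratio >= threshold:
            hits.append((name, round(ratio, 2)))
    return sorted(hits, key=lambda hit: -hit[1])
```

A check like this is only a triage aid: a hit means the name resembles a protected brand, not that the domain is malicious, so it is best combined with threat intelligence and domain age before anyone acts on it.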

Actionable recommendations for enterprise security teams

Below is a prioritized lifecycle for defending against AI-related network risk, grounded in DNS telemetry best practices and zero-trust principles.

1. Inventory and classify AI-related domains and clients

- Maintain a curated, versioned lookup table of known AI domains and update it weekly.

- Use DNS enrichment to classify “Generative AI” queries and detect unknowns.

- Map unique client counts to business units — understand who is using which tools.

2. Baseline and detect anomalies

- Compute daily and weekly baselines of AI‑category DNS events.

- Alert on spikes in unique clients resolving an unknown or newly classified domain.

- Use statistical anomaly detection (z‑score, EWMA) to reduce noise.

3. Harden endpoints and limit exposure

- Apply application allow‑listing and limit which user groups can run self‑hosted agent runtimes on corporate devices.

- Treat agent runtimes as high-risk workloads that must be sandboxed; isolate them via virtualization or dedicated VMs when possible.

- Enforce least privilege for local service accounts and remove persistent credentials from standard user profiles.

4. Network controls and policy enforcement

- Enforce egress policies (SASE / SSE) that block known‑malicious AI domains and flag long‑tail services for review.

- Use DNS recursive filtering with threat intelligence and content categorization for pre‑emptive blocking.

- Implement split DNS for internal services and consistent CNAME inspection to reduce attribution ambiguity.

5. Detection and response playbooks

- Create XDR playbooks for “AI domain resolved + outbound TLS to suspicious host + abnormal process spawn” sequences.

- Investigate early-stage DNS hits before content is exfiltrated — the DNS hit is often the first observable event.

- Correlate DNS logs with process telemetry to identify which binary or browser initiated the resolution.

6. Governance and user education

- Define clear Acceptable Use and Shadow AI policies that specify approved platforms and how to request new integrations.

- Educate developers and analysts about the risks of installing community skills or agent runtimes on corporate devices.

- Require secure development practices for any in‑house agent code, including code review, signing, and runtime confinement.

7. Cloud and API key hygiene

- Treat API keys as high-value secrets: rotate them regularly, scope their permissions, and never hard-code them in local agents.

- Monitor cloud audit logs for unusual API key use patterns tied to endpoints that also resolved suspicious domains.
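The baselining advice in step 2 can be made concrete with a trailing-window z-score and an exponentially weighted moving average (EWMA). This is a minimal sketch assuming one count of AI-category DNS events per day; the window length, threshold, smoothing factor, and the `min_sigma` floor are illustrative defaults, not recommended values.

```python
from statistics import mean, pstdev


def zscore_alerts(daily_counts, window=7, threshold=3.0, min_sigma=1.0):
    """Return indexes of days whose count exceeds the trailing mean by more than `threshold` deviations."""
    alerts = []
    for i in range(window, len(daily_counts)):
        history = daily_counts[i - window:i]
        mu = mean(history)
        # Floor the deviation so a perfectly flat baseline does not divide by zero.
        sigma = max(pstdev(history), min_sigma)
        z = (daily_counts[i] - mu) / sigma
        if z > threshold:
            alerts.append(i)
    return alerts


def ewma(values, alpha=0.3):
    """Exponentially weighted moving average; higher alpha reacts faster to change."""
    smoothed = []
    current = None
    for v in values:
        current = v if current is None else alpha * v + (1 - alpha) * current
        smoothed.append(current)
    return smoothed
```

The z-score catches sudden spikes against recent history, while the EWMA gives a smoothed trend line for dashboards; alerting on both tends to reduce noise from single-day blips.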

Detection recipes and engineering tips

- Use a staged lookup approach: first join DNS logs to a static AI domain lookup, then run a detection query for any Generative AI categorized domain absent from the lookup table.

- Track unique client IPs over a sliding window (24–72 hours) to spot rapid onboarding of devices to a new AI endpoint.

- Correlate DNS event outcomes (NXDOMAIN, SERVFAIL, success) — repeated failed resolutions followed by a successful resolution can indicate automated retries or fallback behavior that merits investigation.

- Watch for frequent TLS SNI changes to the same IP — this often indicates shared hosting/CDN scenarios and requires mapping CNAMEs to vendor context.
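Two of these recipes, the sliding-window client count and the failed-then-successful resolution pattern, are sketched below in Python. The event shape (`time`, `client_ip`, `query`, `rcode`) and the `min_failures` threshold are assumptions for illustration; real logs will need field mapping first.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def unique_clients_in_window(events, domain, end, hours=24):
    """Count distinct clients that resolved `domain` in the `hours` leading up to `end`."""
    start = end - timedelta(hours=hours)
    return len({
        ev["client_ip"]
        for ev in events
        if ev["query"] == domain and start < ev["time"] <= end
    })


def failed_then_resolved(events, min_failures=3):
    """Return (client_ip, domain) pairs with repeated failures followed by a success."""
    failures = defaultdict(int)
    hits = set()
    for ev in sorted(events, key=lambda e: e["time"]):
        key = (ev["client_ip"], ev["query"])
        if ev["rcode"] in ("NXDOMAIN", "SERVFAIL"):
            failures[key] += 1
        elif ev["rcode"] == "NOERROR":
            if failures[key] >= min_failures:
                hits.add(key)
            failures[key] = 0  # reset the streak after any success
    return hits
```

Comparing `unique_clients_in_window` at 24 and 72 hours for the same domain gives a quick sense of how fast a new endpoint is spreading across devices.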

Critical analysis: strengths, weaknesses, and the path forward

What Cisco’s approach does well

- Combines high-fidelity telemetry with practical observability methods: DNS classification plus curated lookups scales well and supports discovery of previously unknown domains.

- Prioritizes safety without invasive inspection, preserving user privacy while enabling security operations.

- Recognizes the long‑tail risk of new AI domains and agent runtimes, which is essential for proactive defense.

What the approach misses or underestimates

- Attribution complexity: shared CDN and cloud fronting can obscure which vendor a domain truly represents, leading to misclassification.

- Context of use: DNS alone can’t tell whether a ChatGPT call came from an approved tool or a risky browser extension or agent plugin.

- Scale and representativeness: conference snapshots are useful but may overstate or understate normal enterprise behavior; continuous monitoring is necessary.

Where teams should invest next

- Invest in linking DNS telemetry to endpoint process data and cloud audit trails so that a DNS hit becomes an actionable investigation with rich context.

- Build an AI‑tooling risk register that maps platforms to business use cases, data sensitivity, and approved integration controls.

- Prioritize runtime isolation tooling for agent environments and enforce least‑privilege access to cloud resources.
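Linking DNS hits to endpoint process data can start as a simple time-window join. The Python sketch below assumes two hypothetical event feeds keyed by `host` and `time`, with the process feed carrying a `process` name; many EDR products record the resolving process directly, in which case no join is needed.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def correlate(dns_events, process_events, window=timedelta(seconds=5)):
    """Pair each DNS event with processes seen on the same host within `window` of it."""
    by_host = defaultdict(list)
    for proc in process_events:
        by_host[proc["host"]].append(proc)

    matches = []
    for dns in dns_events:
        for proc in by_host.get(dns["host"], []):
            if abs(proc["time"] - dns["time"]) <= window:
                matches.append({
                    "query": dns["query"],
                    "host": dns["host"],
                    "process": proc["process"],
                })
    return matches
```

A time-window join is approximate by design: it narrows the candidate processes for an analyst rather than proving which binary issued the lookup, so a tight window and a short list of suspicious domains keep the output usable.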

Concluding assessment

The Cisco Live EMEA DNS analysis provides a practical, replicable model for security teams to measure and manage AI usage across the enterprise. The headline findings (strong ChatGPT adoption, meaningful presence of Claude/Copilot/Gemini, and low-volume but high-impact long-tail AI DNS activity) align with independent telemetry and industry reporting. But the real operational imperative is not market share; it is risk management.

AI tools accelerate productivity and transform work, but they also change the attack surface. DNS telemetry gives defenders an early, privacy-preserving view into that new surface. By combining curated domain lookups, classification, endpoint correlation, and a well-rehearsed incident playbook, security teams can balance innovation and protection: enabling legitimate AI adoption while quickly containing impersonation, malware, and untrusted agent runtimes that threaten data and identity.

The takeaway for network and security teams is clear: treat AI domain resolution as a first‑class telemetry signal. Detect early, investigate in context, and contain decisively — because the next malicious AI domain may appear in DNS long before anyone notices its damage.

Source: Cisco Blogs, “How popular are AI tools like OpenClaw? Understanding AI usage across the network”