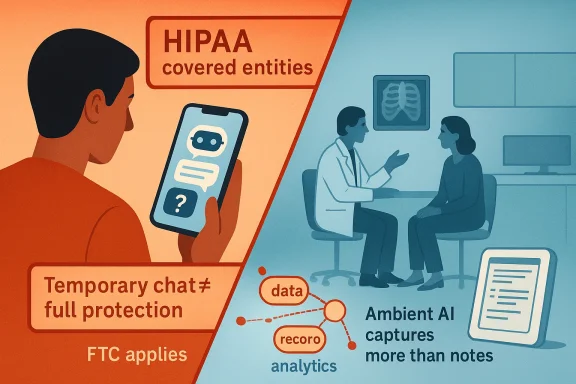

We are also likely to see stronger pressure for clearer separation between training, retention, personalization, and deletion. Those terms are already being used by vendors, but not in ways most users can easily compare. The companies that win trust will be the ones that make those choices visible, auditable, and simple enough to explain to a patient, not just a compliance officer.

Source: AOL.com Opinion - Beware Dr. Chatbot: Privacy laws don’t protect health care data from AI

Americans are already using AI chatbots for questions that used to feel private enough to reserve for a doctor’s office, and that shift is happening faster than the law is evolving around it. The result is a dangerous mismatch: people believe they are entering protected health information into a confidential environment, while in many cases they are simply sharing sensitive data with a consumer platform. Meanwhile, the industry is moving health AI deeper into both consumer apps and clinical workflows, making the privacy problem bigger, not smaller. The lesson is uncomfortable but simple: privacy expectations have outrun privacy law, and health data is now flowing through systems that were never built around HIPAA’s old assumptions.

The central warning in the AOL opinion piece is that Americans often assume health-related conversations are protected because they sound medical, not because they are actually covered by the law. That is an understandable assumption, but it is also an increasingly outdated one. HIPAA applies to covered entities and their business associates, not to every app, chatbot, or consumer-facing AI service that can receive a symptom description, a lab result, or a medication list. HHS says plainly that if an entity does not meet the definition of a covered entity or business associate, it does not have to comply with the HIPAA Rules.

That gap matters because the modern consumer AI experience encourages disclosure. OpenAI says more than 230 million people globally ask health and wellness questions on ChatGPT every week, and its January 2026 materials say health is one of the most common ways people use the product. The company also says users can now connect records and wellness apps in ChatGPT Health, turning the chatbot from a general-purpose assistant into a deeply contextual health interface. In other words, the consumer conversation is no longer limited to “What does this symptom mean?” It can now include medical history, test results, insurance questions, and behavioral context.

That scale is why the privacy issue is not a niche legal debate anymore. OpenAI’s own reporting says one in four regular users submits a prompt about healthcare every week, and the company frames that use as part of the product’s everyday utility. The same dynamic makes health-related chat especially sensitive: people tend to ask their most vulnerable questions in the least formal environment, and AI systems are designed to reward that trust with speed, empathy, and follow-up memory. The more conversational the interface becomes, the easier it is for users to overshare without realizing what they have done.

At the same time, the health system itself is being re-engineered around AI capture. Suki describes its ambient documentation tools as listening to patient-provider conversations, transcribing them, and turning them into structured clinical notes. That may sound like a productivity feature, and in many respects it is, but it also means that whole conversations are becoming machine-readable records. The record is no longer just a physician’s summary; it is increasingly the output of a third-party system that can be searched, retained, analyzed, and integrated downstream.

What makes this moment different from the early internet is the density of the data. A search query is brief; a chatbot conversation can be long, emotional, and full of context. A clinician’s note is selective; an ambient scribe can capture the entire encounter. That makes the present moment a privacy inflection point, because the industry is building a new health-data supply chain that spans consumer prompts, wearable integrations, clinical recordings, and analytics systems.

Why HIPAA Does Not Cover Most AI Chats

The most important legal fact in the AOL piece is also the simplest: HIPAA is not a general privacy shield for anything related to health. HHS says the HIPAA Rules apply only to covered entities and business associates, and an app developer that merely makes a product available in the app store is not automatically covered. That distinction is crucial because many users assume that if a conversation is about medicine, the law automatically follows the conversation. It does not.

The covered-entity problem

Under HIPAA, the protected zone is the healthcare system itself: health plans, providers, and business associates working on their behalf. HHS has also made clear that an app facilitating access to records at the individual’s request does not automatically create a business associate relationship. That means the legal perimeter depends on who operates the system, who receives the data, and whose function the data serves. The conversational nature of AI does not change that structure; it only makes the boundary harder for ordinary users to see.

This is where consumer AI becomes legally awkward. If a patient copies a lab result into a chatbot, the health content may still be highly sensitive, but the platform may not be a HIPAA-regulated actor. The result is not a vacuum of law, but a patchwork. FTC guidance says many non-HIPAA health apps and connected devices can still fall under the Health Breach Notification Rule, and the FTC also warns that health apps can be subject to the FTC Act when data practices are deceptive or misleading. So the answer is not that such services are unregulated; the answer is that they are often regulated under a different, less intuitive regime.

Why consumer AI feels “medical” even when it isn’t

People trust the tone of the product. A chatbot that says “I’m sorry you’re dealing with this” and asks follow-up questions can feel more private than a web form, even though the legal status of the interaction may be far weaker. That is a dangerous UX illusion because the interface creates emotional confidence without supplying legal protection. The machine sounds like a clinician, but the law does not treat it like one.

The policy problem is not hypothetical. OpenAI’s new health-focused materials show that vendors increasingly want to connect medical records and wellness data directly into AI experiences. That can be convenient, but it also creates a direct path from intimate health details into a consumer technology stack. Once that data lands outside the provider-payer ecosystem, the privacy regime becomes less standardized and more dependent on vendor policy, product design, and state or federal consumer protection law.

- HIPAA is not universal.

- App-store availability does not equal legal coverage.

- Consumer AI can still be sensitive without being HIPAA-protected.

- FTC rules can apply even when HIPAA does not.

- Tone and trust are not substitutes for legal status.
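The coverage logic described in this section can be sketched as a small decision function. This is a deliberately simplified illustration, not a legal test: the `Service` class, its field names, and the example services are hypothetical, and real determinations turn on facts that three booleans cannot capture.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    """Hypothetical description of a product that receives health data."""
    name: str
    is_covered_entity: bool = False         # e.g. a health plan or provider
    is_business_associate: bool = False     # works on behalf of a covered entity
    is_phr_vendor_or_related: bool = False  # personal health record vendor,
                                            # related entity, or its service provider


def likely_regimes(service: Service) -> list[str]:
    """Return the regimes the article says may be in play (simplified)."""
    regimes = []
    if service.is_covered_entity or service.is_business_associate:
        regimes.append("HIPAA Rules")
    elif service.is_phr_vendor_or_related:
        regimes.append("FTC Health Breach Notification Rule")
    # Deceptive or misleading data practices can raise FTC Act issues
    # whether or not HIPAA applies.
    regimes.append("FTC Act")
    return regimes


clinic_scribe = Service("ambient scribe under a BAA", is_business_associate=True)
consumer_chatbot = Service("general-purpose chatbot")
records_app = Service("records-connected health app", is_phr_vendor_or_related=True)

for svc in (clinic_scribe, consumer_chatbot, records_app):
    print(f"{svc.name}: {', '.join(likely_regimes(svc))}")
```

The point of the sketch is that the inputs describe the operator and its relationships, never the content of the conversation: nothing in the function asks whether the text being shared is medical.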

The New Health Data Supply Chain

The AOL essay is strongest when it stops treating AI chat as a standalone issue and instead describes a broader data ecosystem. That ecosystem begins with the prompt, but it does not end there. Once a user reveals symptoms, diagnoses, medications, or emotional stress in a chatbot, the platform can potentially process, retain, route, or combine that content with other account signals. The same is true in clinical settings, where ambient tools can record conversations and feed them into documentation and billing workflows.

From prompt to profile

Health data is uniquely valuable because it is predictive. It can reveal current conditions, but it can also imply future risk, treatment cost, and longevity. That makes it attractive not just to healthcare companies, but to broader analytics and monetization systems. The article’s warning that a conversation can become a “queryable record” is more than rhetoric; it reflects how AI systems transform informal speech into structured data.

OpenAI’s health materials underscore how rapidly the space is moving. The company now talks about connecting medical records and wellness apps, and it says it designed ChatGPT Health to support, not replace, clinician care. That framing is important, but it also reveals the strategic direction of the market: AI wants to become the front door to personal health management. Once a platform occupies that role, it is no longer just answering questions; it is mediating access to the user’s health identity.

What ambient scribes change inside the exam room

Ambient documentation tools like Suki’s are often promoted as burnout relief, and that part of the story is real. Suki says the technology listens to patient-provider conversations and produces structured notes, which can reduce typing and improve attention in the exam room. But the technology also changes the data gravity of the encounter. Instead of a clinician deciding what matters most, a system captures the raw conversation first and interprets it later.

That shift has practical consequences. When the full conversation is captured, the data can be reused for transcription, coding, analytics, and workflow automation. That may improve efficiency, but it also increases the number of parties and systems that touch the information. Every additional handoff is another privacy decision, whether the patient knows it or not.

- Consumer AI turns dialogue into data.

- Ambient AI turns the visit into a record.

- Records can be reused beyond the original purpose.

- The more context captured, the harder selective disclosure becomes.

- Data supply chains matter as much as the model itself.

The OpenAI Health Pivot

OpenAI’s January 2026 health rollout is a major inflection point because it shows the consumer AI business moving from general-purpose advice toward health-specific utility. The company says ChatGPT Health can help users understand test results, prepare for appointments, and connect records and wellness apps. It also says it has built protective features like temporary chats, deletion within 30 days, and model behavior that avoids retaining personal information from user chats. That is a meaningful improvement in controls, but it is not the same thing as a universal privacy solution.

Why health is a strategic category

Health is a sticky use case because it creates repeat engagement. Users do not ask about a rash once and move on; they ask follow-up questions, review test results, compare options, and often return with more detail. That makes health one of the strongest pathways to retention, but it also makes the privacy stakes much higher than in casual productivity use. A chatbot that remembers your preferences may be convenient, but a chatbot that remembers your anxiety, medication, or diagnosis history creates a different kind of trust burden.

OpenAI’s own materials reinforce that tension. On one hand, the company says health is already one of the most common uses of ChatGPT. On the other hand, it is building product-specific health pathways and safety features around those conversations. That means the market is now admitting, explicitly, that health questions are not an edge case. They are core to the product’s future.

The promise and the limits of “privacy by design”

Temporary chats and 30-day deletion windows are useful, but they should not be mistaken for a complete privacy architecture. They help reduce retention and surface area, yet they do not answer every question about onward transfer, debugging, abuse monitoring, or connected apps. OpenAI says health data can be securely connected to medical records and wellness apps, which means the user’s privacy now depends on multiple systems working together. If any one of those systems is misconfigured or too permissive, the burden falls on the user.

This is why the AOL article’s policy critique lands. The problem is not that companies are doing nothing; the problem is that they are solving privacy one product layer at a time while users experience the entire stack as a single conversation. That mismatch is what makes the situation feel safe when it is not.

- Health is becoming a core AI use case.

- Temporary chat helps, but it is not a full legal shield.

- Connected apps create new privacy dependencies.

- Memory and retention are different controls.

- Trust now depends on product architecture, not just policy language.
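One way to see why a single deletion window is not a complete answer is to treat each system that holds a copy as having its own retention period. The sketch below is illustrative only: the function name and the example figures are assumptions, and only the 30-day value echoes the controls described above.

```python
from typing import Optional


def effective_retention_days(windows: dict[str, Optional[int]]) -> Optional[int]:
    """Longest retention window across every system holding a copy.

    A value of None means that system states no limit, which makes the
    overall exposure unbounded as well.
    """
    if not windows:
        raise ValueError("at least one system is required")
    finite = [days for days in windows.values() if days is not None]
    if len(finite) < len(windows):
        return None  # at least one copy has no stated deletion deadline
    return max(finite)


# Hypothetical figures for illustration; not taken from any vendor's policy.
copies = {
    "temporary chat": 30,
    "connected wellness app": 365,
    "abuse-monitoring logs": None,
}

print(effective_retention_days({"temporary chat": 30}))
print(effective_retention_days(copies))
```

Under these assumptions the chat alone has a 30-day window, but once connected apps and logs are counted the longest-lived copy defines the user's real exposure.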

Ambient AI in Clinical Care

The exam room is the second half of the privacy story, and arguably the more consequential one for regulated healthcare. Suki’s ambient documentation tools show how quickly AI is moving from a consumer helper to an embedded clinical infrastructure layer. The company says its system listens to patient-provider conversations and generates clinical notes, which means the conversation itself becomes machine-readable documentation. That is efficient, but it also turns spoken care into a data asset. (developer.suki.ai/documentation/ambient-documentation)

Documentation becomes extraction

Traditional charting is selective by design. A physician summarizes the important parts of an encounter and leaves out the rest. Ambient systems change that by capturing everything first and filtering later. That can improve completeness, but it can also create a broader informational footprint than patients expect. In a privacy-sensitive environment, the difference between “summarized” and “captured” is enormous.

There is also an organizational effect. Once ambient notes feed into coding, billing, and analytics, the record stops being merely clinical and becomes financial. The AOL piece warns that companies like Optum are linking encounter data to revenue-cycle workflows, and that is exactly the sort of integration that worries privacy advocates. What was once a conversation becomes part of a broader actuarial pipeline.

The business case is real, which is why the risk is too

Physicians are under enormous documentation pressure, and ambient AI can help reduce administrative burden. That is the strongest argument for the technology, and it should not be dismissed. But the more the system is used to streamline care, the more attractive it becomes as a source of reusable clinical signal. That creates pressure to expand access, improve model performance, and connect the notes to other internal systems. Efficiency is not free; it often arrives as a new privacy obligation.

This is where the consumer and enterprise stories converge. In consumer AI, the user chooses to overshare with a chatbot. In ambient clinical AI, the patient may not even know how much is being captured. Both systems create data, but only one of them looks obviously like data collection. That asymmetry is a major reason policy lags practice.

- Ambient AI captures more than a clinician might later note.

- Documentation can become extraction.

- Billing and analytics expand the downstream value of the record.

- Operational efficiency can normalize deeper collection.

- Patients may not realize how much is being recorded.

The Legal and Regulatory Patchwork

The article’s broader thesis is that we are not dealing with a single privacy failure. We are dealing with multiple legal systems that were built for different eras and now overlap imperfectly. HIPAA governs certain healthcare actors. The FTC can police deceptive practices and require breach notice in certain non-HIPAA contexts. State privacy laws may add their own obligations. But none of those regimes neatly maps onto a chatbot conversation that feels medical yet originates in a consumer AI product.

Why the FTC matters more than most readers realize

The FTC has been unusually clear that health apps and connected devices not covered by HIPAA can still face obligations, including under the Health Breach Notification Rule. The agency says those rules can apply to vendors of personal health records, related entities, and third-party service providers. It also warns that companies should be transparent about the health data they collect and how it is used. In practice, that makes the FTC one of the most important backstops for health data outside the traditional healthcare system.

The problem is that the FTC framework is still not the same as HIPAA. HIPAA is designed around the healthcare system’s duty relationships and administrative safeguards. FTC enforcement is broader, but less tailored to the day-to-day expectations patients have when they think they are talking to something “medical.” That is why so many people get this wrong: the law is real, but it is split across agencies and categories the public does not naturally track.

Consumer consent is not always meaningful consent

The AOL piece also correctly points to the weakness of disclosure-based consent. If the permission language is buried in a long policy, the user may technically agree without understanding the consequences. FTC guidance repeatedly emphasizes that disclosures should be clear and that consumers should be told about sensitive or unexpected data collection in advance. That is a good principle, but the reality of consumer software is still far from ideal.

A meaningful privacy framework for AI health data would need to answer a few questions more directly: who can see the data, how long it is kept, whether it can be used for product improvement, whether humans review it, and whether it can be tied to identity outside the original purpose. Until those questions are answered in plain language, users will continue to mistake product friendliness for legal protection.

- HIPAA is only one part of the story.

- FTC enforcement fills some of the gaps.

- Consent can be technically valid and practically meaningless.

- Privacy notices often fail the comprehension test.

- Users need simpler, not just longer, disclosures.

Consumer Versus Enterprise Impact

One of the strongest implications of the AOL piece is that consumer and enterprise privacy risks are related but not identical. Consumers worry about embarrassment, diagnosis sensitivity, or a private health conversation becoming visible to a company. Enterprises worry about regulated data, workflow contamination, retention controls, and whether employees are sending company information into tools that blur personal and professional boundaries. Both problems are real, but the governance burden is much heavier in the enterprise.

What consumers need most

Consumers need clarity. They need to know whether a chatbot retains their prompts, whether health-related conversations can be used for training, and whether connected records change the privacy posture. They also need easy deletion and visible settings, not buried menus and contradictory wording. The current market is too fragmented to assume users will self-manage this well.

Consumer users also need to understand that “private” does not mean “invisible.” Even if a platform does not train on the conversation, it may still retain logs for safety, debugging, or abuse prevention. That is not necessarily sinister, but it is materially different from the average user’s expectation of a private medical exchange. That gap is the whole problem in miniature.

What enterprises need most

Enterprise buyers need auditable controls. They need to know where data flows, how long it stays, and whether assistant tools can touch records that fall under contractual or regulatory obligations. They also need policy enforcement, because user-level preferences are not enough when the organization owns the devices, the identity stack, or the productivity suite. That is especially true in healthcare, where ambient documentation, EHR integrations, and billing systems can all intersect.

The enterprise lesson is that AI privacy is now part of security architecture. It is not just a legal memo or a consumer settings page. If a hospital, clinic, or insurer allows AI tools into the workflow, it has to think about vendor classification, business associate agreements, retention windows, and internal access boundaries. That is a lot more complicated than turning off chat history.

- Consumers need simplicity and visible controls.

- Enterprises need governance and auditability.

- User preference does not equal organizational compliance.

- Healthcare systems must track vendor roles carefully.

- Policy is now an IT problem as much as a legal one.
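For IT teams, the four enterprise questions named above (vendor classification, business associate agreements, retention windows, and internal access boundaries) can be tracked as a simple intake record. The following is a minimal sketch under stated assumptions: `VendorReview`, its fields, and the vendor name are hypothetical and do not reflect any real product or compliance tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VendorReview:
    """Hypothetical intake record for an AI tool entering a clinical workflow."""
    vendor: str
    classification: Optional[str] = None      # e.g. "business associate"
    baa_signed: Optional[bool] = None         # business associate agreement in place?
    retention_days: Optional[int] = None      # documented retention window
    access_roles: Optional[list[str]] = None  # who inside the org can read the output


def open_items(review: VendorReview) -> list[str]:
    """List the governance questions that are still unanswered."""
    items = []
    if review.classification is None:
        items.append("vendor classification not determined")
    if review.classification == "business associate" and not review.baa_signed:
        items.append("business associate agreement missing")
    if review.retention_days is None:
        items.append("retention window not documented")
    if not review.access_roles:
        items.append("internal access boundaries not defined")
    return items


review = VendorReview(
    vendor="ExampleScribe",  # made-up vendor name
    classification="business associate",
    retention_days=90,
)
for item in open_items(review):
    print(f"{review.vendor}: {item}")
```

The design choice here is that a missing answer is treated as a finding in its own right, which mirrors the argument that user-level preferences are not a substitute for organizational controls.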

Why the Policy Lag Matters

The AOL essay is ultimately a warning about time. Technology has moved quickly enough that people now behave as though AI chat is a new kind of doctor’s office, but the legal framework still treats most of these systems as ordinary consumer services unless a specific regulatory hook applies. That lag is not just inconvenient; it creates a false sense of confidentiality at the exact moment users are disclosing the most sensitive details of their lives.

The boundary problem

The boundary between health advice, health administration, and general-purpose assistance is increasingly blurry. A chatbot can help a user understand a lab result, draft a message to a doctor, compare insurance options, and track wellness goals all in one thread. Yet each of those actions may fall into a different legal bucket depending on the platform and its relationships. That complexity is invisible to most users, which is why a simple “you are not protected” warning, while directionally correct, still understates the structural issue. (openai.com/index/introducing-chatgpt-health/)

If policy is going to catch up, it probably has to move in three directions at once. First, definitions of health data need to be broader. Second, disclosure and consent need to be clearer and more standardized. Third, vendors need more accountable limits on retention and reuse. Without those changes, the burden remains on the least informed user to understand a system that even many clinicians and administrators do not fully track.

Why “catch policy up” is the right frame

The piece’s final argument is not anti-AI. It is pro-governance. That distinction matters because there is a real upside to health AI: easier access, lower friction, and more help for people who are far from care or struggling to navigate the system. OpenAI’s own health materials emphasize that users are already relying on ChatGPT for exactly those kinds of problems. The challenge is to preserve that utility without turning every helpful exchange into a long-lived data exhaust trail.

This is the correct policy lens for WindowsForum readers too, because the same pattern is emerging across the broader digital stack. Consumer tools are becoming intimate. Enterprise tools are becoming conversational. And the line between convenience and surveillance is increasingly a matter of default settings, not user intent. That is why the privacy story is no longer about one app; it is about the whole ecosystem.

- The legal framework is lagging the product stack.

- Health AI crosses multiple regulatory categories.

- Broader definitions of health data are overdue.

- Clearer retention limits would reduce user harm.

- Governance, not fear, is the realistic path forward.

Strengths and Opportunities

The strongest part of the AOL opinion is that it identifies a real disconnect between user expectation and legal reality, then connects that gap to a broader shift in how AI systems collect health data. It is also right to separate consumer chatbot use from clinical ambient documentation, because those are two sides of the same privacy transformation. The opportunity now is to turn that warning into better product design, better disclosure, and better regulation. The market is clearly moving toward deeper health integration, so the question is whether privacy will be bolted on afterward or built in from the start.

- It captures the core privacy illusion clearly.

- It links consumer AI and clinical AI in one framework.

- It correctly highlights the limits of HIPAA.

- It points toward a real policy gap, not a theoretical one.

- It reflects how fast health AI is becoming mainstream.

- It gives consumers a reason to be more careful without sounding alarmist.

- It underscores the need for better consent design.

Risks and Concerns

The biggest risk is that users will continue to overshare because the interface feels intimate and helpful. A chatbot can make a sensitive question feel like a private conversation even when the underlying system is a general-purpose platform with retention rules the user does not understand. A second risk is that ambient clinical tools normalize far more collection than patients realize, especially when the data flows into coding and billing systems. A third is that privacy controls will keep fragmenting across products, leaving ordinary users with too many settings and too little clarity.

- Users may confuse conversational tone with legal confidentiality.

- Hidden retention windows can undermine expectations.

- Ambient documentation can capture more than patients expect.

- Downstream billing and analytics can expand data use.

- Fragmented controls make real consent hard.

- Default settings will keep favoring collection over caution.

- The more useful the system becomes, the more data it will want.

Looking Ahead

The next phase of this issue will be decided less by rhetoric than by product defaults. If AI vendors keep pushing deeper into health records, wellness apps, and ambient clinical capture, then privacy settings will need to become much more centralized and much easier to understand. If that does not happen, the public will keep treating health AI like a private service even when it functions more like a data platform. That is the mismatch regulators should focus on, because it is where harm becomes routine.

We are also likely to see stronger pressure for clearer separation between training, retention, personalization, and deletion. Those terms are already being used by vendors, but not in ways most users can easily compare. The companies that win trust will be the ones that make those choices visible, auditable, and simple enough to explain to a patient, not just a compliance officer.

- Watch for tighter FTC scrutiny of consumer health apps.

- Watch for more explicit health-specific AI product lines.

- Watch for stronger retention and deletion promises.

- Watch for enterprise controls in ambient documentation platforms.

- Watch for pressure to standardize consent language.

Source: AOL.com Opinion - Beware Dr. Chatbot: Privacy laws don’t protect health care data from AI