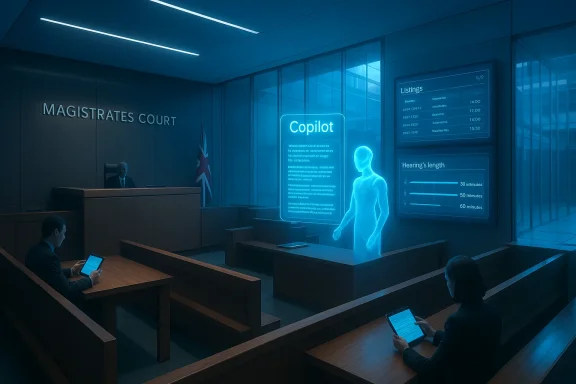

The government’s plan to roll Microsoft’s Copilot and other AI tools deeper into the courts of England and Wales marks a decisive shift. Routine tasks, from transcribing hearings to summarising judgments and scheduling cases, will increasingly be handed to algorithms, as ministers promise faster justice and more than £12 million in additional funding for the Ministry of Justice’s in‑house Justice AI Unit.

Background

The Ministry of Justice has framed this push as part of a wider package of funding, reform and modernisation intended to tackle a chronic backlog and strained court estate. Crown Court waiting lists have grown markedly in recent years, prompting ministers to promise unprecedented multi‑year funding settlements, more sitting days and targeted measures such as so‑called “blitz courts” that concentrate similar case types in short bursts to speed outcomes. Alongside these operational measures, ministers say AI will be pressed into service as an efficiency lever, used to transcribe routine audio, anonymise documents, draft preliminary notes, summarise judgments and support national case‑listing. The department has also announced an expansion of its Justice AI Unit with more than £12 million of additional funding for the next financial year and plans to launch a Justice AI academy to build in‑house expertise.

Those headline commitments come from a speech delivered at a Microsoft AI event on 24 February, where the Justice Secretary and Deputy Prime Minister outlined a vision of a more digital, less paper‑bound court system and cited early pilots. The Probation Service pilot, branded internally as “Justice Transcribe,” is presented as a proof of concept: ministers report that more than 150,000 meetings were transcribed and that staff time savings of roughly 25,000 hours were realised because officers no longer had to type up notes by hand. The Ministry says the same underlying technology is now being trialled in tribunals and magistrates’ courts, and that some judges in immigration and asylum chambers are already using AI tools to draft notes and preliminary remarks.

But this agenda lands amid renewed scrutiny of generative AI — including recent episodes where AI outputs were shown to be unreliable and where law officers and police forces inadvertently used hallucinated material. Those examples have focused attention on the trade‑offs between operational gains and the legal, ethical and technical risks of embedding AI inside processes that determine liberty, welfare or livelihoods.

What ministers are proposing — the concrete changes

Transcription and note‑taking

- Expand automated speech‑to‑text in frontline settings, following the Probation Service pilot that converted meetings into searchable transcripts.

- Trial courtroom transcription for hearings that do not currently have live stenography, particularly in magistrates’ courts and some tribunal chambers.

- Use machine summaries to accelerate case progression and reduce administrative bottlenecks.

Summarisation and drafting aids

- Deploy summarisation tools to produce concise digests of judgments and case papers, freeing lawyers and judicial support staff from repetitive drafting.

- Offer AI‑assisted drafting for legal advisers and magistrates’ clerks to speed the preparation of notes and standard documents.

AI‑assisted listing and case scheduling

- Pilot a national AI‑assisted listing assistant to help allocate hearing dates, estimate hearing lengths, and flag cases that require urgent action — a digital alternative to currently manual, inconsistent local practices.

Governance, skills and investment

- Expand the Justice AI Unit with a multi‑million‑pound uplift, create an AI academy and fellowship programme to bring AI engineers into the justice system, and trial systems alongside procurement relationships with major suppliers, notably Microsoft.

Why transcription is tempting — and where it trips up

Automated speech recognition (ASR) promises clear operational wins. Court and probation staff spend large amounts of time turning spoken words into written records; automation can cut that burden and make content searchable and auditable. Pilot figures cited by the Ministry (more than 150,000 meetings and roughly 25,000 hours saved, which works out at about ten minutes per meeting on average) show the scale of potential administrative savings when transcription replaces manual note‑taking for routine, structured interviews.

But courtroom audio is one of the hardest environments for ASR:

- Sound quality varies widely (old hearing rooms, distant microphones, mobile devices).

- Multiple speakers talk over each other; accents, rapid speech, whispering and legal jargon reduce accuracy.

- Names, legal citations, statute references and case law are domain‑specific and highly sensitive to mis‑recognition.

- Speaker attribution (who said what) matters legally; mis‑diarisation can materially affect evidential record.

Technical mitigations exist — better microphones, courtroom audio standards, fine‑tuning models on legal audio, speaker diarisation algorithms — but none remove the need for robust human‑in‑the‑loop checks. The evidence disclosed so far does not include public, independently audited accuracy rates for the Probation Service pilot, the nature and cost of human corrections, or the failure modes that appear when models mis‑recognise legal terms or sensitive statements. Those gaps matter: without transparent metrics for word‑error rates, correction effort and post‑processing, the headline time‑savings numbers are necessary but insufficient to judge net benefit or risk.
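The word‑error rate mentioned above is conventionally computed as the word‑level edit distance (substitutions, deletions and insertions) between a trusted reference transcript and the machine output, divided by the number of words in the reference. The following is a minimal Python sketch of that calculation, not anything drawn from the Ministry's pilot: the function name, the sample sentences and the 5% review threshold are all illustrative assumptions.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance between the two transcripts, divided by reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,                      # deletion: reference word missing from output
                curr[j - 1] + 1,                  # insertion: extra word in output
                prev[j - 1] + substitution_cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical threshold: above this, a transcript goes to a human for correction.
REVIEW_THRESHOLD = 0.05

reference = "the defendant was remanded in custody"
machine_output = "the defendant was demanded in custody"

wer = word_error_rate(reference, machine_output)
print(f"WER: {wer:.1%}")                               # WER: 16.7%
print("needs human review:", wer > REVIEW_THRESHOLD)   # needs human review: True
```

Note that the single substitution in this example ("remanded" heard as "demanded") counts the same as any other one‑word error, even though it changes the legal meaning of the sentence. That is one reason an aggregate word‑error rate would need to be published alongside failure‑mode analysis rather than instead of it.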

The ghost in the machine: hallucinations, fabrication and legal integrity

One of the most corrosive risks of generative systems is “hallucination”: confidently produced content that is false or fabricated. In legal contexts the danger has already surfaced in documented cases where AI‑generated citations or facts were included in filings and later found to be non‑existent. Judges in other jurisdictions have rejected material or required refiling where submissions relied on AI that produced fabricated case law. Separate public reporting has shown police forces using outputs from Copilot that included fictitious details, a high‑profile episode that led to political and operational scrutiny.

When a court relies on a tool to produce legal research, citations or even factual summaries, the potential for incorrect legal authority or invented precedent is not an abstract risk; it has concrete consequences for fairness and the integrity of judgments. That is why many judges and regulatory bodies stress that AI can only be an assistive tool, and that legal professionals remain solely responsible for verifying every fact and citation placed before the court.

Data protection, confidentiality and vendor dependence

Introducing cloud‑hosted AI assistants into a justice system raises immediate data governance and confidentiality questions:

- Court proceedings and probation meetings include highly sensitive personal data (criminal records, medical information, immigration status), which is protected under UK data‑protection law and the common law duty of confidentiality.

- The legal ground for processing, retention policies, and minimisation strategies must be explicit: transcription and summarisation processes must satisfy Data Protection Impact Assessments and comply with the UK GDPR.

- Enterprise AI products increasingly promise that organisational content will not be used to further train public foundation models. Yet telemetry, diagnostic logs and metadata may still be processed for service maintenance, and contractual protections vary across vendors.

- Vendor contracts must address compelled disclosure to foreign law enforcement, data residency, access controls and indemnities for intellectual property or confidentiality breaches.

Procedural and constitutional questions

Beyond technical and privacy concerns, the proposals raise deeper procedural and constitutional questions:

- Evidence and admissibility: Will machine transcripts be treated as reliable evidence? If so, on what basis, and how will courts handle disputes about transcription errors?

- Disclosure and fairness: Automated summaries could speed case management, but defence representatives must be satisfied that summaries are accurate and that underlying data are preserved and accessible for challenge.

- Transparency and audit: Courts demand reasons. AI outputs that influence listing decisions, scheduling or the framing of issues must be auditable, reproducible and explainable — not black‑box outputs that judges cannot interrogate.

- Access to justice: Digitalisation can improve access for some users, but it can also create new barriers for those without digital literacy or reliable connections. Video hearings and AI‑driven triage must not compound inequality.

- Jury trials and human judgment: The move to expand judge‑only trials for many offences (a political proposal also under discussion) changes the human composition of decision‑making; pairing this with AI decision‑support shifts the balance of procedural risk and public confidence.

Governance and mitigation: what a responsible rollout must include

To reconcile the operational benefits with structural risk, a credible implementation must include several parallel pillars:

- Clear legal framework and DPIAs

  - Completed, published Data Protection Impact Assessments for every AI deployment.

  - Explicit legal bases for processing, retention schedules and redaction policies.

- Technical safeguards

  - Courtroom audio standards (microphone quality, channel separation).

  - Model‑specific accuracy targets, and mandatory human verification thresholds before outputs are used in decisions.

  - Full audit logs, immutable chaining of outputs to inputs, and version‑controlled model records to allow post‑hoc review.

- Procurement and vendor accountability

  - Contract clauses that prevent the use of court data to train public models.

  - Defined data residency and law enforcement disclosure handling.

  - Third‑party independent audits and red‑team tests of hallucination risk and bias.

- Human‑in‑the‑loop workflows

  - Require human sign‑off for any AI‑produced legal research, citations or final transcripts used in formal filings.

  - Provide training and competence thresholds for staff using AI tools; create an internal accreditation pathway via the Justice AI academy.

- Transparency and public trust

  - Publish evaluation metrics: word‑error rates, correction time, failure modes, and a clear escalation pathway for disputed transcripts or summaries.

  - Create independent oversight, involving judiciary representatives and civil‑society voices, to review pilot results and governance.

- Contingency and remediation

  - Protocols for swift remediation if AI outputs contribute to procedural unfairness, including a mechanism for reopening or reviewing affected cases if necessary.
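One common way to get the "immutable chaining of outputs to inputs" listed under technical safeguards is a hash‑chained audit log, in which each record stores a digest of the input, a digest of the output, the model version and the hash of the previous record. The sketch below is an illustration of that general technique in Python, assuming SHA‑256 and an in‑memory list; the field names, `append_entry`, `verify_chain` and the `asr-v1` version label are hypothetical and do not describe any system the Ministry has announced.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(payload: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    encoded = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log: list, input_sha256: str, output_text: str, model_version: str) -> dict:
    """Append a record that ties one AI output to its input and to the previous record."""
    body = {
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS_HASH,
        "input_sha256": input_sha256,
        "output_sha256": hashlib.sha256(output_text.encode("utf-8")).hexdigest(),
        "model_version": model_version,
    }
    entry = dict(body, entry_hash=_digest(body))
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited, removed or reordered record breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "entry_hash"}
        if body.get("prev_hash") != prev_hash or _digest(body) != entry.get("entry_hash"):
            return False
        prev_hash = entry["entry_hash"]
    return True


# Usage: log two transcripts, then simulate tampering with the first record.
audit_log = []
append_entry(audit_log, hashlib.sha256(b"hearing-audio-1").hexdigest(), "transcript one", "asr-v1")
append_entry(audit_log, hashlib.sha256(b"hearing-audio-2").hexdigest(), "transcript two", "asr-v1")
print(verify_chain(audit_log))   # True

audit_log[0]["output_sha256"] = "f" * 64
print(verify_chain(audit_log))   # False
```

A chain like this only makes tampering detectable; it does not prevent it. In practice the latest hash would also need to be anchored somewhere the log's operator cannot rewrite, which is the kind of detail an independent audit would be expected to check.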

Where the gains could be real

Despite the risks, the potential efficiency benefits are tangible if the rollout is cautious and well governed:

- Administrative relief: Transcription and summarisation can free judicial and administrative staff from repetitive tasks, allowing resources to be redirected to case analysis and engagement that only humans can deliver.

- Searchability and records: Digitised, searchable records can speed case preparation and disclosure, and improve inter‑agency sharing when combined with appropriate redaction and anonymisation.

- Intelligent listing: A well‑designed listing assistant could reduce wasted court time by better estimating hearing lengths, matching specialist judges with complex cases, and flagging at‑risk files before they stall.

- Scalability: Where staff shortages and estate constraints limit capacity, technology is one lever that — combined with funding for more sittings and targeted “blitz” approaches — could materially reduce waiting times for victims and defendants.

Political and cultural hurdles

The policy sits at the intersection of technology, politics and professional practice. There will be opposition on several fronts:

- Backbench MPs and civil liberties groups will press on jury rights and the scope of judge‑only trials.

- Legal professionals will insist on strict competence and professional responsibility requirements for any AI‑aided filing.

- Civil‑society and privacy advocates will demand public reporting and independent oversight of system performance and data handling.

- The police Copilot episode has created scepticism among the public and parliamentarians alike; ministers will have to rebuild trust and demonstrate robust governance to justify broader adoption.

Bottom line: a cautious, measured path forward

The MoJ’s plan to scale AI in courts is bold and operationally attractive: automated transcription, summaries and an AI‑assisted listing assistant promise real efficiency gains in a system strained by backlog and resource limits. The Probation Service numbers show what automation can deliver in routine settings; the funding boost for the Justice AI Unit indicates political will to build capacity.

But courts are not just another administrative service. They adjudicate rights, liberty and entitlement. That difference elevates the standard of evidence, transparency and governance required for any AI tool that touches the process. The empirical record of AI hallucinations and fabricated citations, together with high‑profile misuses of Copilot in policing, shows that speed without rigour is dangerous.

If ministers are to justify embedding Copilot‑style assistants into the machinery of justice they must do three things in plain sight: publish rigorous evaluation data (accuracy, human correction time, failure modes), lock down data governance with enforceable contractual safeguards and independent audits, and adopt strong human‑in‑the‑loop policies that preserve legal responsibility and judicial oversight. Absent those safeguards, the very efficiencies promised could become sources of error, unfairness and erosion of public confidence — outcomes no justice system can afford.

Source: theregister.com Britain's creaking courts to use Copilot for transcriptions