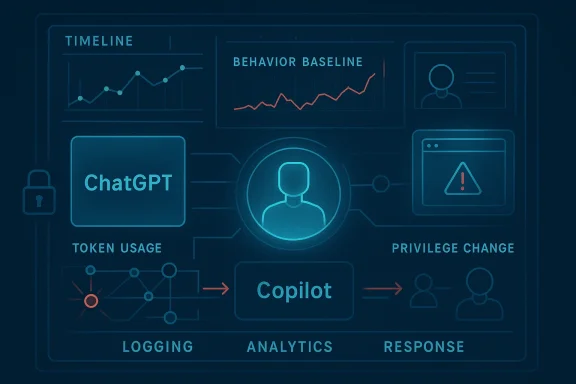

Exabeam’s push to watch ChatGPT, Microsoft Copilot, and Google Gemini is more than another product update. It is a sign that enterprise security teams are being forced to treat AI agents as a new class of identity, one that can hold privileges, touch data, and make mistakes at machine speed. The company’s latest expansion of Agent Behavior Analytics aims to detect anomalous agent activity, catch prompt injection, verify access rights, and close the visibility gap that has made “shadow AI” such a fast-growing risk. (exabeam.com)

Background

The security industry has spent the last several years learning an uncomfortable lesson: once an AI system can take actions, it stops behaving like a chatbot and starts behaving like an employee with credentials. That shift matters because traditional security tools were built around users, endpoints, and network traffic, not around autonomous software that can read email, call APIs, query databases, and chain tasks together. Exabeam is now trying to turn that conceptual gap into a measurable security category. (exabeam.com)

The company first framed Agent Behavior Analytics in January 2026 as part of its New-Scale launch, positioning it as behavioral analytics for non-human workers. In that release, Exabeam described scenarios where an agent could be tricked into copying finance files to an unauthorized endpoint or deleting security logs after hours, emphasizing that legacy SIEM and XDR products may miss those patterns if they only search for predeclared indicators. (exabeam.com)

Since then, Exabeam has broadened the idea beyond generic automation and into the specific ecosystems enterprises are using most. Its own product pages now list support for ChatGPT, Microsoft Copilot, and Google Gemini, which is significant because those tools sit inside the daily workflow of office users rather than inside an isolated lab. That makes the attack surface both larger and harder to see, especially when employees adopt AI tools without a formal rollout or security review. (exabeam.com)

The timing is no accident. Microsoft’s own documentation says Microsoft 365 Copilot operates within the user’s identity and access context, while also relying on multi-layered defenses against prompt injection. Google likewise documents protections for Gemini users against malicious content and prompt injection. OpenAI has also repeatedly acknowledged prompt injection as a serious frontier-security problem. Together, those vendor disclosures show that the risk is real, persistent, and not confined to one platform.

What makes Exabeam’s message credible is that it aligns with broader security thinking rather than inventing a brand-new fear. OWASP’s current Agentic Skills Top 10 says the intermediate behavior layer of agentic systems is under-protected, and it recommends inventorying skills, restricting permissions, and monitoring runtime behavior. In other words, the industry is converging on the idea that visibility is the first control, not the last. (owasp.org)

Why AI Agents Change the Security Model

AI agents are attractive because they compress work. They can gather context, make decisions, and execute multi-step tasks with far less human intervention than a traditional workflow engine. That efficiency is also what makes them dangerous: once the agent is allowed to act on behalf of a person or department, any compromise of the agent can become a compromise of the process itself. (exabeam.com)

The core problem is delegated trust. A human user may be trained to ignore suspicious email, but an agent may ingest that same content as operational input and then faithfully obey malicious instructions hidden inside it. If the agent has access to calendars, files, tickets, source repositories, or cloud apps, the blast radius becomes a function of permissions, not intent.

The hidden employee problem

Exabeam’s framing of AI agents as “digital employees” is useful because it captures how organizations actually deploy them. They authenticate, use tools, and carry out business processes, which means they occupy a strange middle ground between software and staff. That is precisely why security teams need to know not just what the agent can do, but what it actually does under pressure. (exabeam.com)

The phrase shadow AI matters here because many organizations will never get a formal asset inventory before users begin experimenting with AI assistants. Once that happens, the security team is already behind. Visibility tools that can identify AI activity, normalize it, and compare it to expected behavior may become as important as identity governance in the previous era of SaaS sprawl. (exabeam.com)

- AI agents can inherit user privileges

- Prompt injection can redirect legitimate actions

- Over-permissioned tools multiply the blast radius

- Untracked adoption makes response slower

- Behavioral baselines can reveal compromise faster

What Exabeam Says It Is Adding

Exabeam says the expansion now covers the major agentic AI risk areas through five new features. The company’s own product language highlights AI behavior baselining, prompt and model abuse detection, identity and privilege monitoring, agent lifecycle monitoring, and coverage for the OWASP Top 10 for Agentic AI. Those controls are meant to work together rather than operate as isolated alerting rules.

The most practical of those features is behavior baselining. Exabeam says it builds dynamic profiles for users and their AI agents and then tracks request volume, token usage, tool calls, and outbound traffic for deviations. That matters because many compromises will not look like malware; they will look like a bot that suddenly starts behaving outside its normal scope. (exabeam.com)

Behavioral baselining as the core control

This approach is strongest when an organization already knows what “normal” looks like for a given AI workflow. A finance assistant that normally drafts summaries should not suddenly start scraping large volumes of records or calling external endpoints at odd hours. The value is not just in detecting wrongdoing, but in making the difference between normal automation and suspicious automation visible. (exabeam.com)

The same logic applies to prompt and model abuse detection. Exabeam says it is looking for prompt injection, model manipulation, and tool exploitation, and it says its detection library is now five times larger than before. That is a meaningful detail because prompt-injection defense tends to fail when defenders rely on a small number of fixed signatures. (exabeam.com)

- Request volume spikes can indicate abuse

- Token usage may reveal abnormal task scope

- Unexpected tool calls can expose hijacked workflows

- Outbound traffic can show exfiltration attempts

- Larger detection libraries improve coverage breadth
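To make the baselining idea concrete, here is a minimal sketch of how deviations in request volume, token usage, tool calls, and outbound traffic might be flagged. It is not Exabeam's method: the z-score approach, the metric field names, the sample numbers, and the threshold of 3.0 are all assumptions chosen for illustration.

```python
from statistics import mean, stdev

# Hypothetical per-day metrics for one agent; the field names are illustrative.
METRICS = ("requests", "tokens", "tool_calls", "outbound_bytes")

def build_baseline(history):
    """Return {metric: (mean, stdev)} computed from a list of daily metric dicts."""
    baseline = {}
    for metric in METRICS:
        values = [day[metric] for day in history]
        baseline[metric] = (mean(values), stdev(values))
    return baseline

def deviations(baseline, today, threshold=3.0):
    """Return the metrics whose z-score against the baseline exceeds the threshold."""
    flagged = {}
    for metric, (mu, sigma) in baseline.items():
        if sigma == 0:
            # A perfectly constant history: treat any change as a deviation.
            z = float("inf") if today[metric] != mu else 0.0
        else:
            z = (today[metric] - mu) / sigma
        if abs(z) > threshold:
            flagged[metric] = round(z, 1)
    return flagged

# Made-up history for a finance assistant that normally drafts summaries.
history = [
    {"requests": 100, "tokens": 20000, "tool_calls": 10, "outbound_bytes": 5000},
    {"requests": 110, "tokens": 21000, "tool_calls": 12, "outbound_bytes": 5200},
    {"requests": 95, "tokens": 19500, "tool_calls": 9, "outbound_bytes": 4800},
    {"requests": 105, "tokens": 20500, "tool_calls": 11, "outbound_bytes": 5100},
]
# Request volume looks ordinary, but outbound traffic has jumped sharply.
today = {"requests": 108, "tokens": 20800, "tool_calls": 11, "outbound_bytes": 900000}

flagged = deviations(build_baseline(history), today)
print(flagged)
```

In this made-up data only the outbound traffic metric is flagged, which mirrors the point above: the agent's request count looks normal while its behavior has moved outside its usual scope. A production system would presumably use richer, per-workflow profiles than a single mean and standard deviation.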

Identity, privilege, and lifecycle tracking

Identity and privilege monitoring are just as important as anomaly detection. If an agent is granted access it does not need, the organization is effectively turning a software workflow into an over-privileged insider. Exabeam’s lifecycle monitoring is meant to show where the agent came from, how it was provisioned, and where it may have drifted over time. (exabeam.com)

That lifecycle lens is especially important for enterprises with many custom agents. A deployment may start as a narrow pilot, then accumulate permissions and dependencies over months. The result is a system that no one fully owns, even though many teams rely on it. That is the classic recipe for a security blind spot. (exabeam.com)
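The privilege drift described above can be pictured as a comparison between what an agent has been granted and what it actually uses. The sketch below assumes a hypothetical log format in which each tool call records the scope it exercised; the scope names and helper functions are invented for illustration and do not reflect any vendor's schema.

```python
def unused_privileges(granted, observed_calls):
    """Scopes the agent holds but never exercised in the observation window."""
    used = {call["scope"] for call in observed_calls}
    return sorted(set(granted) - used)

def ungranted_use(granted, observed_calls):
    """Calls that touched a scope outside the agent's declared grant."""
    allowed = set(granted)
    return [call for call in observed_calls if call["scope"] not in allowed]

# Hypothetical grant list and call log for a single agent.
granted = ["mail.read", "files.read", "files.write", "hr.read"]
calls = [
    {"tool": "summarize_inbox", "scope": "mail.read"},
    {"tool": "open_report", "scope": "files.read"},
    {"tool": "delete_logs", "scope": "logs.delete"},
]

stale = unused_privileges(granted, calls)   # candidates for removal
violations = ungranted_use(granted, calls)  # activity outside the grant
print(stale)
print(violations)
```

Unused scopes are candidates for a least-privilege review, while activity outside the grant is the kind of signal that would normally warrant an investigation rather than a cleanup ticket.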

ChatGPT, Copilot, and Gemini as Security Targets

It is notable that Exabeam is not treating these as interchangeable AI tools. ChatGPT, Microsoft Copilot, and Google Gemini each sit in different enterprise contexts, but they all share the same basic risk pattern: they are deeply connected to user identity, organizational content, and action-taking tools. Once those systems are connected, the security problem becomes behavioral, not merely technical. (exabeam.com)

Microsoft says Copilot works in the user’s identity and access context, which is helpful for containment but also means the agent inherits the user’s reach. Google’s admin guidance explicitly discusses how Gemini protections may block suspicious prompts or content references. OpenAI has published multiple posts on hardening ChatGPT against prompt injection, underscoring that even the most advanced vendors are still treating this as an active frontier.

Why platform-specific support matters

Exabeam’s support for these platforms is strategically important because security teams do not buy AI in the abstract. They buy the exact tools their employees are using. If a monitoring stack cannot ingest the logs, behaviors, and identity signals from the dominant platforms, then it will miss the real risk and simply generate generic AI alerts.

That is also where vendor-native security controls and third-party visibility should be complementary, not competing. Microsoft and Google can block certain classes of malicious behavior inside their own platforms, but enterprises still need an independent layer that can correlate cross-app activity, user context, and downstream consequences. Relying on one vendor’s internal protections is a brittle strategy.

- ChatGPT introduces browser and task autonomy risks

- Copilot inherits Microsoft 365 identity context

- Gemini sits close to email, documents, and workspace data

- Each platform exposes a different operational threat path

- A unified analytics layer reduces tool-by-tool blindness

The OWASP Angle and the Rise of Agentic Taxonomies

Exabeam’s decision to map its coverage to the OWASP Top 10 for Agentic AI is a smart move because security buyers increasingly want controls that align with public frameworks. OWASP’s agentic guidance describes a class of risks around malicious skills, over-privileged skills, unsafe isolation, weak governance, and cross-platform reuse. Those are not theoretical concerns; they mirror the exact sorts of mistakes enterprises make when they operationalize automation too quickly. (owasp.org)

That framework also helps explain why agentic security is different from classic LLM security. The model may be the brain, but the skills, tools, and runtime environment are what turn inference into action. If the model is manipulated, the outcome is no longer just a bad answer; it may be an unauthorized action in the real world. (owasp.org)

Why frameworks matter to buyers

Security leaders do not just need detection; they need a vocabulary to explain risk to auditors, executives, and cloud architects. Frameworks like OWASP’s allow them to translate “AI agent did something odd” into categories such as excessive privilege, governance failure, or unsafe isolation. That makes investment decisions easier and budget conversations less speculative. (owasp.org)

Exabeam’s inclusion of OWASP coverage therefore serves a second purpose: it makes the platform easier to justify as a control layer rather than a point solution. In a crowded market, alignment with a recognized security taxonomy can be nearly as valuable as a technical feature because it shortens the path from demo to policy adoption. That is especially true in regulated industries. (owasp.org)

- OWASP helps standardize agentic risk language

- Behavioral controls map well to governance categories

- Cross-platform controls are easier to defend with frameworks

- Taxonomy alignment can speed board-level approval

- Security teams need explainability, not just telemetry

The SIEM Angle: New-Scale and LogRhythm

The other half of this story is that Exabeam is not only selling AI agent security. It is also reinforcing the value of its New-Scale and LogRhythm platforms by tying the new capabilities into broader SIEM and SOC workflows. That is a classic strategic move: if AI agents become a new attack surface, then the vendor that can correlate those signals inside existing operations tools gets to claim relevance across the whole security stack.

For analysts, the promise is reduced alert fatigue. Exabeam says the new features can correlate and sequence agent activity automatically, generate machine-built timelines, and produce summaries that shorten investigation time. In practical terms, that means the platform is trying to convert AI activity from a flood of raw logs into a small number of higher-confidence cases. (exabeam.com)

Why SOC teams care

SOC teams are overwhelmed precisely because modern environments generate too many low-quality alerts. If AI agents create another stream of ambiguous events, the obvious failure mode is that analysts simply start ignoring them. Exabeam’s answer is to inject agent telemetry into existing investigative workflows so that non-human behavior becomes part of the same case narrative as user and endpoint activity.

That integration strategy is commercially shrewd. Many enterprises are not ready to rip out their SIEM, but they are willing to add capabilities that improve detection without forcing a platform migration. Exabeam’s messaging makes clear that the company wants to modernize the SOC without making customers rebuild it from scratch.

- Timelines turn raw agent activity into cases

- Correlation reduces manual log stitching

- Summaries help overwhelmed analysts move faster

- SIEM integration lowers adoption friction

- Existing SOC workflows are easier to preserve
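As a rough illustration of what turning raw agent activity into cases can mean, the sketch below sorts events per agent and splits them into bursts wherever the agent goes idle. The event fields, the 15-minute gap, and the sample data are assumptions made for this example; the source does not describe how Exabeam actually sequences activity.

```python
from datetime import datetime, timedelta
from itertools import groupby

def build_timelines(events, gap=timedelta(minutes=15)):
    """Group events per agent into time-ordered bursts separated by idle gaps."""
    timelines = []
    ordered = sorted(events, key=lambda e: (e["agent"], e["ts"]))
    for agent, group in groupby(ordered, key=lambda e: e["agent"]):
        current = []
        for event in group:
            # Start a new burst when the agent has been idle longer than `gap`.
            if current and event["ts"] - current[-1]["ts"] > gap:
                timelines.append({"agent": agent, "events": current})
                current = []
            current.append(event)
        timelines.append({"agent": agent, "events": current})
    return timelines

# Hypothetical raw events, deliberately out of order.
t0 = datetime(2026, 1, 5, 23, 0)
events = [
    {"agent": "finance-bot", "ts": t0 + timedelta(minutes=2), "action": "read finance files"},
    {"agent": "finance-bot", "ts": t0, "action": "received email"},
    {"agent": "finance-bot", "ts": t0 + timedelta(minutes=3), "action": "upload to external endpoint"},
    {"agent": "finance-bot", "ts": t0 + timedelta(hours=5), "action": "draft summary"},
    {"agent": "hr-bot", "ts": t0 + timedelta(minutes=1), "action": "lookup policy"},
]

cases = build_timelines(events)
for case in cases:
    print(case["agent"], [e["action"] for e in case["events"]])
```

Five log lines collapse into three ordered sequences, and the after-hours sequence of email, file read, and external upload becomes readable as one narrative instead of three unrelated entries.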

Competitive Implications

Exabeam’s move puts pressure on both established security vendors and AI platform providers. For security vendors, the question is whether they can add agent visibility fast enough to remain relevant as AI workflows become routine. For AI vendors, the question is whether native controls are enough, or whether enterprises will expect a parallel monitoring layer that sits above the model itself. (exabeam.com)

The competitive edge here is not just detection. It is the combination of behavior analytics, identity-aware visibility, and SOC workflow integration. If Exabeam can consistently show that AI agent anomalies resemble familiar insider-risk patterns, it can position itself as a bridge between legacy UEBA and the new world of agentic AI. (exabeam.com)

What rivals will need to answer

Vendors competing in this space will need to show more than simple prompt filtering. They will need to answer questions about role-based access, runtime containment, behavioral drift, and whether their products can correlate agent actions with business processes rather than just with security logs. That is a much harder standard, but it is the one the market is drifting toward. (owasp.org)

There is also a subtle positioning battle under way. If AI agents are treated as a new identity class, then security vendors that already live in the identity, SIEM, or UEBA layers get a major advantage. If they are treated as application features, then AI platform providers may keep the control plane in-house. Exabeam is betting that enterprises will prefer an independent behavioral layer. (exabeam.com)

- Security vendors must support non-human identities

- AI vendors must prove native defenses are sufficient

- Behavioral analytics creates differentiation

- Integration depth matters more than branding

- Independent oversight may win enterprise trust

Enterprise vs. Consumer Impact

For consumers, the immediate effect is limited. Most of Exabeam’s value lands in enterprise environments where AI agents are tied to corporate data, privileged workflows, and regulated processes. Consumers may still benefit indirectly as employers get better at protecting the tools they use, but the bigger story is squarely about business risk. (exabeam.com)

For enterprises, the stakes are much higher because agent compromise can lead to data exposure, unauthorized actions, and compliance failures. If an AI assistant has access to HR, finance, customer service, or engineering systems, then a single misbehaving agent can create incidents that look more like insider abuse than classic malware. That is why visibility and policy enforcement have to be coordinated. (exabeam.com)

Different maturity levels, different priorities

Large enterprises will likely focus first on inventory, logging, and privilege review. Mid-market organizations may care more about turnkey detection and whether the platform can map agent events into existing SIEM workflows without a large services project. Smaller companies may simply want to know which AI tools are being used before they decide whether to monitor them at all. (exabeam.com)

There is also a governance dimension. Board members and auditors are increasingly asking how organizations are managing AI risk, not just whether they have adopted AI. Exabeam’s emphasis on outcomes, benchmarks, and coverage scoring suggests it wants to make agentic security legible to non-technical stakeholders as well as to analysts. That may prove to be one of the most important features of all. (exabeam.com)

- Consumers mostly feel the impact indirectly

- Enterprises face direct data and compliance risk

- Large firms need governance and reporting

- Mid-market buyers want fast integration

- Small firms need basic visibility before policy maturity

Strengths and Opportunities

Exabeam’s announcement lands well because it connects a real market problem to a mature security category. It is not trying to convince buyers that AI agents are scary in the abstract; it is arguing that existing behavioral analytics can be extended to a new class of identities. That is a compelling story, especially for organizations already invested in SIEM and UEBA. (exabeam.com)

The broader opportunity is that AI agents may become the next major workload category to require dedicated observability. If that happens, the vendors that can normalize agent telemetry into security operations will gain an important early foothold. Exabeam seems to understand that the winner will be the platform that makes invisible behavior visible before the incident, not just after it. (exabeam.com)

- Strong alignment with a real enterprise pain point

- Behavioral analytics maps naturally to agent risk

- Framework support improves buyer confidence

- SIEM integration lowers adoption barriers

- Board-ready reporting strengthens governance

- Cross-platform support improves market reach

- Early mover advantage in a new security niche

Risks and Concerns

The biggest risk is overpromising. Agentic AI security is still an emerging field, and no vendor is likely to detect every prompt injection, privilege abuse, or malicious workflow in real time. Exabeam’s feature set may reduce exposure substantially, but it should not be mistaken for a complete answer to a problem that the entire industry is still trying to define.

There is also the danger of alert inflation. If the platform flags too many benign deviations, analysts will lose trust and the whole point of behavioral monitoring weakens. The success of these controls will depend on tuning quality, context awareness, and whether Exabeam can keep false positives low enough for busy SOCs to act on the signals. (exabeam.com)

- No platform can promise perfect prompt-injection defense

- False positives could erode analyst trust

- Overly broad baselines may create noise

- Customers may misread coverage as completeness

- Shadow AI remains hard to inventory

- Native vendor controls may duplicate third-party tooling

- The market could fragment around incompatible taxonomies

Governance and implementation challenges

A second concern is organizational readiness. Many companies still do not know which AI agents are in production, which teams own them, or what permissions they currently have. Without that inventory, even a strong monitoring platform only helps after the fact. The harder work is still policy, ownership, and lifecycle discipline. (owasp.org)

There is also a subtle cultural risk. If enterprises treat AI agents as harmless productivity aids, they may underfund the controls needed to secure them. If they treat them as fully trusted coworkers, they may miss the fact that an agent’s trust can be manipulated faster than a human’s judgment. That mismatch is where many incidents will start. (exabeam.com)

Looking Ahead

The next phase of this market will likely be defined by three questions: which AI platforms get the most enterprise traction, how quickly agentic workflows expand, and whether security tools can keep pace with the complexity of those workflows. Exabeam’s latest update suggests the company believes the market is already moving from experimentation to operational dependence. (exabeam.com)

If that proves true, then AI agent monitoring will stop looking like a niche feature and start looking like table stakes. The winners will be the vendors that can combine identity, behavior, and governance into one coherent control plane without overwhelming analysts. The losers will be the ones still treating agent activity like a side issue. (owasp.org)

- Expect more vendor support for agent visibility

- Watch for tighter integration with identity tools

- Look for better agent lifecycle governance

- Expect more framework-based security language

- Monitor whether false positives stay manageable

Source: Techzine Global Exabeam now monitors AI agents in ChatGPT, Copilot, and Gemini