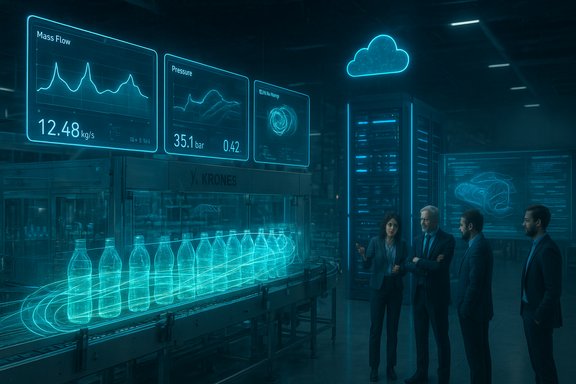

Synopsys’ Microsoft Ignite demonstration promised a step-change for factory-floor decisioning: a cloud-native, GPU-accelerated digital-twin framework that stitches together Ansys Fluent, NVIDIA Omniverse/OpenUSD, Synopsys’ accelerated physics orchestration, and Microsoft Azure to cut conventional CFD turnaround from hours into minutes — and to deliver near–real-time scenario optimization for a full bottling assembly line first deployed by Krones.


Source: dqindia.com Synopsys demos framework for optimizing manufacturing processes with Digital Twins @ Microsoft Ignite

Background / Overview


High-fidelity computational fluid dynamics (CFD) has been indispensable to engineering design and process optimization for decades, but its traditional time-to-solution, measured in hours or even days for production-grade, multiphysics problems, has limited its use as an operational, on-shift tool. The Synopsys framework demonstrated at Microsoft Ignite reframes that constraint by combining GPU-native solver acceleration, real-time scene interoperability via OpenUSD/Omniverse, and cloud orchestration on Azure so teams can run broad parameter sweeps and inspect results inside a factory-scale digital twin. The publicly broadcast claims are ambitious: Synopsys’ announcement and multiple syndicated press pieces state that the stack enabled a reduction in CFD runtimes “from 3–4 hours to less than 5 minutes” for the Krones filling-line demo. At the same time, a partner case study from SoftServe, one of the systems integrators on the project, documents a fielded implementation that produced simulation cycles of about 30 minutes after a two-month delivery: a materially different but still very meaningful outcome. Both figures appear in the public record and must be read together.

What Synopsys and partners built

The integration pattern (high level)

- Solver layer: Ansys Fluent (GPU-enabled) provides the CFD backbone for multiphase and single-phase fluid dynamics runs.

- Acceleration and orchestration: Synopsys contributes an accelerated physics layer and cloud-native orchestration to schedule jobs, manage multi-GPU runs, and reduce orchestration overhead.

- Interoperability and visualization: NVIDIA Omniverse libraries and the OpenUSD scene model are used as the canonical scene/visualization and collaboration layer so CAD, CAE, telemetry and simulation overlays live in the same twin.

- Cloud platform: Microsoft Azure supplies elastic GPU infrastructure and the Ansys Access on Azure service to host preconfigured Ansys/Fluent environments.

- Systems integration / solver tuning: CADFEM customized Fluent settings; SoftServe implemented integration, delivery automation and the operator-facing dashboard.

The Krones use case — what was demoed

Krones’ bottling/filling line was modeled as a physically accurate digital twin. The demonstration allowed parameterized sweeps of variables such as:

- bottle geometry and neck shape

- liquid viscosity and surface tension proxies

- fill levels and valve timing

- conveyor speed and alignments
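A parameterized sweep of this kind can be pictured as a full-factorial grid over the variables listed above. The following is a minimal sketch only: the axis names and values are hypothetical placeholders, not settings from the Krones demo, and a real pipeline would hand each scenario to the solver rather than print it.

```python
from itertools import product

# Hypothetical sweep axes; names and values are illustrative placeholders,
# not parameters published for the Krones demo.
sweep_axes = {
    "neck_shape": ["standard", "narrow"],
    "viscosity_mpa_s": [1.0, 5.0, 20.0],
    "fill_level_ml": [330, 500],
    "valve_open_ms": [80, 120],
    "conveyor_speed_m_s": [0.5, 1.0],
}


def build_scenarios(axes):
    """Return one dict per combination of axis values (full-factorial sweep)."""
    names = list(axes)
    value_lists = [axes[name] for name in names]
    return [dict(zip(names, combo)) for combo in product(*value_lists)]


scenarios = build_scenarios(sweep_axes)
print(len(scenarios))   # number of simulation runs the sweep would request
print(scenarios[0])     # first scenario in the grid
```

Even this small grid produces 48 scenarios. Run one after another, that is 24 hours of compute at 30 minutes per run and 4 hours at 5 minutes per run, which is why per-run latency and parallel GPU capacity both matter for same-shift use.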

Verifiable claims and where the record diverges

The headline: “3–4 hours down to less than 5 minutes”

Synopsys’ corporate announcement and many syndicated press reports list a headline reduction from typical 3–4 hour CFD runs to under five minutes on the Krones demo. That is presented as the aspirational, lab-condition capability of the integrated stack when solver pathways, mesh density, GPU count and surrogate techniques are tuned to aggressive latency goals.

The fielded outcome: SoftServe’s 30-minute cycle

The detailed case study from SoftServe, the integrator responsible for delivering the Krones pilot, explicitly reports simulation cycles of about 30 minutes per run after the two-month deployment. It frames that outcome as a dramatic reduction from prior hours-long runtimes and a practical move into the same shift cadence as plant operations. This case-study outcome is a real, reproduced field result and carries operational credibility.

Why both numbers can be true (and why they are not interchangeable)

The divergence between “under 5 minutes” and “~30 minutes” is not necessarily a sign of bad faith; it reflects different points on the accuracy vs. latency curve. Key technical levers that drive run-time include:

- Mesh density and cell counts: run time scales nonlinearly with total cell count; production-grade meshes are far larger than benchmark meshes.

- Physics fidelity: multiphase flows, free-surface tracking and complex turbulence or particle-tracking models dramatically increase solver cost.

- Solver configuration and numerical schemes: reduced-order models (ROMs), implicit vs. explicit time-stepping, and solver tolerances impact both accuracy and wall clock time.

- Hardware topology: sub-5-minute responses typically require large, high-memory server GPUs and multi-GPU parallelism (for example, NVIDIA H100/A100 or equivalent accelerators) plus NVMe/PCIe throughput that minimizes I/O bottlenecks.

- Use of surrogates or ML-assisted inference: trading small amounts of fidelity for orders-of-magnitude speedups is a common path to real-time performance, but it requires validation and error budgeting.
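To make the gap between the two public figures concrete, the implied speedup factors can be worked out directly from the numbers quoted above. This is plain arithmetic on the reported runtimes, not a benchmark; the function name is just a label chosen for this sketch.

```python
def speedup(baseline_minutes, accelerated_minutes):
    """Ratio of baseline runtime to accelerated runtime."""
    return baseline_minutes / accelerated_minutes


# "3-4 hours" baseline from the announcement, expressed in minutes.
baseline_range_min = (3 * 60, 4 * 60)

# 5 minutes is the upper bound of the headline claim ("less than 5 minutes");
# 30 minutes is the approximate fielded cycle from the SoftServe case study.
for label, minutes in [("headline (<5 min)", 5), ("fielded (~30 min)", 30)]:
    low, high = (speedup(b, minutes) for b in baseline_range_min)
    print(f"{label}: {low:.0f}x to {high:.0f}x")
```

The headline claim therefore implies at least a 36x to 48x reduction, while the fielded result corresponds to roughly 6x to 8x. Both are large, but they describe very different operating points on the levers listed above.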

Why OpenUSD / Omniverse matters here

OpenUSD (Universal Scene Description) serves as the neutral scene model so geometry from CAD, meshes from CAE, and telemetry from MES/PLC systems can be composed into a single digital twin. NVIDIA Omniverse provides the real-time runtime, rendering primitives and multi-user collaboration features to make simulation outputs consumable across engineering, operations and management teams. This is a pragmatic choice to reduce friction between specialized toolchains and to give non-CAE stakeholders a visual, contextual way to interpret simulation outputs. The interoperability wins are real and materially lower the barrier to cross-functional adoption.

Technical evaluation: what to validate in any pilot

Manufacturers evaluating Synopsys’ framework, or any similar GPU-accelerated digital-twin pipeline, should insist on explicit, auditable benchmarks and acceptance criteria. A rigorous evaluation should include:

- A reproducible runbook that lists hardware (GPU model, count, memory), solver versions, mesh sizes, numerical schemes, and all solver flags used.

- A fidelity error budget that relates reduced-order or surrogate predictions to a validated high-fidelity baseline (e.g., integrated error statistics for pressure, mass flow, and free-surface position).

- Measured end‑to‑end latency that includes orchestration overhead: data staging, container spin-up, multi‑GPU synchronization, and visualization streaming.

- Cost-per-run FinOps guidance: cloud GPU minutes, storage I/O, and data egress allowance for expected production cadence.

- Operational constraints such as determinism, reproducibility between runs, and the ability to reproduce results in on-prem or hybrid environments if cloud connectivity degrades.
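One way to make the fidelity error budget from this checklist testable is to compare a fast-mode or surrogate result against the high-fidelity baseline, quantity by quantity. The sketch below uses a relative RMS error as the metric; that choice, the quantity names, the tolerances, and the sample values are all assumptions made for illustration, not figures from any vendor.

```python
import math


def relative_rms_error(baseline, candidate):
    """Relative RMS error of candidate samples against baseline samples."""
    if not baseline or len(baseline) != len(candidate):
        raise ValueError("baseline and candidate must be non-empty and equal length")
    numerator = sum((c - b) ** 2 for b, c in zip(baseline, candidate))
    denominator = sum(b ** 2 for b in baseline)
    if denominator == 0:
        raise ValueError("baseline is all zeros; relative error is undefined")
    return math.sqrt(numerator / denominator)


def check_error_budget(baseline, candidate, budget):
    """Return {quantity: (error, within_budget)} for each budgeted quantity."""
    report = {}
    for quantity, tolerance in budget.items():
        error = relative_rms_error(baseline[quantity], candidate[quantity])
        report[quantity] = (error, error <= tolerance)
    return report


# Made-up sample values at two probe points, for illustration only.
baseline_run = {"pressure_pa": [100.0, 200.0], "mass_flow_kg_s": [2.0, 4.0]}
fast_run = {"pressure_pa": [101.0, 198.0], "mass_flow_kg_s": [2.2, 4.4]}

# Hypothetical tolerances: 2% on pressure, 5% on mass flow.
budget = {"pressure_pa": 0.02, "mass_flow_kg_s": 0.05}

report = check_error_budget(baseline_run, fast_run, budget)
for quantity, (error, ok) in report.items():
    print(f"{quantity}: {error:.3f} {'within' if ok else 'over'} budget")
```

With these made-up numbers the pressure field stays inside its budget while mass flow does not, which is exactly the kind of result an acceptance test should surface before a fast mode is trusted for production decisions.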

Operational and commercial risks

1) Fidelity vs. latency trade-off

The central trade-off is non-negotiable: faster runs usually require either higher hardware investment (more/faster GPUs) or lower numerical fidelity. Both options create downstream consequences: either higher operational costs or the risk of suboptimal, fidelity-compromised advisories on the line.

2) Hidden costs and FinOps

Elastic GPU clusters deliver speed but can be costly if adopted without guardrails. Spot instances, auto-scaling policies, job backpressure controls and efficient data tiering are mandatory to avoid runaway cloud bills. Also factor in licensing models for Ansys Fluent on GPU and any per-GPU or per-minute add-ons from partners.

3) Vendor and platform lock-in

Deep integration with Omniverse/OpenUSD, Ansys Access on Azure and Synopsys’ orchestration layer can accelerate deployment but also increase coupling to specific cloud services and runtime libraries. Buyers should insist on portability tests and data-export guarantees.

4) Validation, certification and safety

When simulation output influences on-line decisions (valve timing, fill control), it becomes part of the safety and QA chain. Regulatory compliance, traceability, and verifiable test cases must be part of any operational rollout. Simulations used in production control loops should carry the same audit and validation disciplines as any software that directly affects manufacturing quality.

5) Data movement and latency

Factory telemetry and twin updates must flow with low jitter to prevent stale decisioning. In hybrid deployments, round-trip latency between edge sensors and cloud simulation engines can be a limiting factor. Strategies such as lightweight edge inference or partial on-prem acceleration should be explored where latency is critical.

Strategic benefits that are credible today

Despite legitimate caveats, the Synopsys-led stack demonstrates several practical, credible wins for manufacturers adopting digital twins intelligently:

- Faster design-to-ops loop: even the 30-minute fielded cycle SoftServe reports enables same-shift what-if analyses, dramatically accelerating engineering/operations collaboration.

- Reduced waste and improved yield: validated scenario sweeps can identify valve and timing settings that materially reduce overfill or spillage.

- Broadened access to simulation insights: Omniverse/OpenUSD visualization surfaces complex CAE results to non-specialists, improving cross-team decision quality.

- Foundations for higher-value use cases: once a production-grade twin and orchestration layer exist, teams can extend the platform to predictive maintenance, layout optimization, and operator training.

Practical guidance for WindowsForum readers and manufacturing IT teams

- Start with a small, representative pilot: choose a single machine or line with known failure modes and measurable KPIs (waste rate, line speed variance).

- Require reproducible benchmarks with explicit runbooks from any vendor claiming sub‑5‑minute latency.

- Assess hardware strategy: evaluate cloud-only vs hybrid (on-prem GPU) and identify the target GPU family (H100/A100/MI300) and corresponding procurement / cloud instance types.

- Define fidelity bands: require vendors to publish error tolerances at each fidelity tier (e.g., “fast mode” vs “high‑fidelity mode”) and accept only modes that meet your QA thresholds for production decisions.

- Embed cost controls in contracts: capped GPU hours, pre-approved autoscaling policies, and expense alerts.

- Validate operational workflows: dashboards, operator UIs and the handoff between simulation advisory and PLC/control actions must be tested end-to-end.

- Insist on data portability and exported URIs for USD/scene artifacts so you own the digital twin, not just the runtime.
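The "fidelity bands" item above can be turned into a simple acceptance rule: among the modes a vendor publishes, use the fastest one whose stated error bound meets the plant's QA threshold. The sketch below assumes a hypothetical tier table; the latencies echo the public figures discussed in this article, but the error bounds are invented placeholders that a real vendor would need to publish and justify.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FidelityTier:
    name: str
    latency_min: float      # expected wall-clock time per run, in minutes
    max_rel_error: float    # vendor-published error bound vs. validated baseline


def pick_tier(tiers, qa_error_threshold):
    """Return the fastest tier whose error bound meets the QA threshold, or None."""
    eligible = [t for t in tiers if t.max_rel_error <= qa_error_threshold]
    if not eligible:
        return None
    return min(eligible, key=lambda t: t.latency_min)


# Hypothetical tier table; error bounds are placeholders, not published data.
tiers = [
    FidelityTier("fast", latency_min=5, max_rel_error=0.08),
    FidelityTier("balanced", latency_min=30, max_rel_error=0.03),
    FidelityTier("high-fidelity", latency_min=210, max_rel_error=0.01),
]

chosen = pick_tier(tiers, qa_error_threshold=0.05)
print(chosen.name if chosen else "no tier meets the QA threshold")
```

Under these assumed numbers a 5% QA threshold rules out the fast mode and selects the balanced one. If no tier qualifies, the function returns None, which should be treated as "do not use the simulation for this decision" rather than falling back silently to a faster mode.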

Quotes and partner perspective

Synopsys framed the announcement as a milestone for scalable, simulation-driven applications; NVIDIA highlighted Omniverse and GPU acceleration as the enabler of factory-scale simulation; and Microsoft emphasized Azure’s cloud foundation for performance and governance. Synopsys’ materials include statements from industry executives endorsing the collaborative approach, but the most operationally useful evidence is the integrator’s field case that documents a 30-minute production cadence.

Where this fits in the wider industrial digital-twin landscape

The Synopsys + Ansys + NVIDIA + Microsoft + integrator approach is representative of a broader industry trend: moving from single-asset twins and offline CAE to multi-physics, factory-scale twins that close the loop into operations. Several platform vendors are now integrating real-time visualization, GPU acceleration and cloud HPC orchestration to enable use cases that were previously impractical outside laboratory settings. The differentiator will be repeatable performance at production fidelity and predictable run costs.

Final assessment: strengths and caveats

- Strengths

  - Modular, pragmatic architecture that leverages established CAE and cloud components.

  - Actionable field evidence: SoftServe’s 30-minute Krones deployment demonstrates real operational benefit.

  - Interoperability via OpenUSD, which materially lowers integration friction across CAD/CAE/MES stacks.

- Caveats and risks

  - Performance claims require audit: the “under 5 minutes” number is plausible under tightly controlled conditions but is not yet a broadly reproducible production metric without published runbooks.

  - Fidelity trade-offs must be explicit and contractually enforced; fast answers that mislead operations are worse than slow, accurate ones.

  - Cloud cost and vendor lock-in can be real long-term burdens unless portability is engineered from the start.

Recommended next steps for manufacturers

- Run a short pilot with three explicit goals: (a) reproduce vendor claims on your topology, (b) measure fidelity against a validated baseline, (c) estimate per-run cloud costs.

- Require a vendor-supplied runbook for any headline latency claim that includes GPU family, mesh size, solver flags, surrogate usage, and error metrics.

- Build governance that treats simulation advisories as advisory first — require human-in-the-loop confirmations until the twin’s fidelity is proven in production.

- Negotiate FinOps protections: budget caps, reserved instance options and autoscale thresholds.

- Keep an exportable copy of your USD scenes and numeric outputs under your control so the twin remains an asset you can host or migrate.
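For pilot goal (c) and the budget caps mentioned above, a back-of-envelope cost model is often enough to start the FinOps conversation. The sketch below is a simplification: the GPU count, hourly rate, and per-run overhead are placeholder assumptions, not Azure or Ansys pricing, and real bills also include licensing, storage and egress.

```python
def cost_per_run(gpu_count, runtime_min, gpu_hourly_rate, fixed_overhead=0.0):
    """Estimated cost of one simulation run: GPU-hours times rate, plus overhead."""
    gpu_hours = gpu_count * (runtime_min / 60)
    return gpu_hours * gpu_hourly_rate + fixed_overhead


def runs_within_budget(monthly_budget, per_run_cost):
    """Whole number of runs that fit under a monthly budget cap."""
    return int(monthly_budget // per_run_cost)


# Placeholder inputs for illustration only (not actual cloud pricing).
per_run = cost_per_run(
    gpu_count=8,
    runtime_min=30,         # the fielded cycle time reported by SoftServe
    gpu_hourly_rate=4.00,   # assumed price per GPU-hour
    fixed_overhead=2.00,    # assumed storage/egress/orchestration cost per run
)
print(f"per run: {per_run:.2f}")
print(f"runs under a 5000 cap: {runs_within_budget(5000, per_run)}")
```

With these assumed inputs a run costs 18.00 and a 5000 monthly cap allows 277 runs. Swapping in measured runtimes and contracted rates from the pilot turns this into the per-run estimate to negotiate against.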

Synopsys’ Microsoft Ignite demo frames a credible path toward bringing high‑fidelity simulation into the operational tempo of the shop floor. The demonstrable field outcome reported by SoftServe — reducing cycle times to roughly 30 minutes — already changes how engineering and operations can collaborate in a shift. The headline promise of sub‑5‑minute responses is an important technical aspiration that signals where the industry is heading, but it should be treated as an optimized-case metric until vendors publish reproducible runbooks and auditors validate fidelity trade-offs. For manufacturers, the prudent approach is to pilot with clarity of metrics, require auditable benchmarks, and treat the digital twin as both a technical platform and an operational governance challenge.
