Finastra’s plan to embed an agentic AI assistant into its Mortgagebot loan‑origination suite — with a public target of launching “by yearend” — is the clearest signal yet that mainstream lending platforms are moving from generative helpers to systems that can act inside origination workflows on behalf of lenders and loan officers. (finainews.com)

Background

Finastra is a long‑standing vendor in the banking and lending software market, operating a portfolio of solutions that includes Mortgagebot, an end‑to‑end cloud mortgage origination platform used by banks, credit unions and mortgage lenders. The company has steadily expanded its AI feature set across trade finance, retail banking and lending products over the last two years.

“Agentic AI” denotes a class of systems that go beyond generating text or recommendations: they plan, call services or tools, take multi‑step actions, and verify outcomes. Over 2025–2026 the industry has accelerated efforts to standardize and govern these capabilities, including the formation of the Agentic AI Foundation under the Linux Foundation to steward open protocols and runtime practices, because production agentic systems raise operational, auditability and security questions that generative assistants do not.

What FinAi News reported — the core announcement

- FinAi News published a March 16, 2026, piece reporting that Finastra plans to add an agentic AI tool to its Mortgagebot loan origination system, with a launch target of “by yearend” (which, given the article date, implies the company is aiming for deployment by December 31, 2026). The piece says the tool will support originators across the application lifecycle, including document processing and customer engagement, and that Microsoft Azure will provide the AI capabilities for the mortgage process. (finainews.com)

- The story quotes Andrew Bateman, Finastra’s Executive Vice President for Lending, describing the integration as a way to increase accuracy and efficiency for mortgage originations — identifying abnormalities, assisting with document handling, and improving the borrower experience. The article gives a short, practical description rather than deep technical detail. (finainews.com)

Why this matters: the practical case for an agentic mortgage assistant

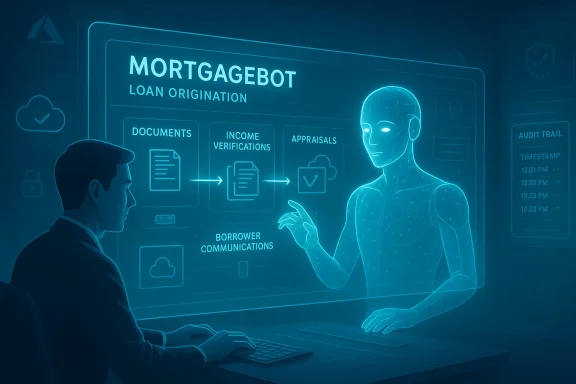

Lending workflows, especially mortgage origination, are document‑heavy, rules‑driven, and highly exception‑prone. A loan that fails to close on time often does so because of missing documents, mismatched data, or manual bottlenecks between channels and back‑office systems.

An agentic assistant embedded in Mortgagebot can, in principle:

- Read and extract data from uploaded documents, and reconcile those values against the application and third‑party feeds (e.g., income verifications, credit bureau returns).

- Create and submit requests for missing items, draft borrower communications, and update case statuses inside the LOS (Loan Origination System).

- Flag anomalies or policy breaches to underwriters with prebuilt rationale and evidence, reducing triage time.

- Orchestrate cross‑system tasks (e.g., order an appraisal, schedule an underwriter review, trigger compliance checks) and close the loop with timestamped audit trails.
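
The reconciliation step in the first bullet can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Finastra's implementation: the `reconcile` function, the `Mismatch` type, the field names, and the 1% tolerance are all hypothetical, and nothing here reflects a real Mortgagebot API.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Mismatch:
    field: str
    application_value: Decimal
    document_value: Decimal


def reconcile(application: dict[str, Decimal],
              extracted: dict[str, Decimal],
              tolerance: Decimal = Decimal("0.01")) -> list[Mismatch]:
    """Compare values extracted from documents with the application.

    Returns one Mismatch per shared field whose relative difference
    exceeds `tolerance` (1% by default, an assumed threshold). Fields
    missing from either side are skipped here; a real system would
    report them separately.
    """
    mismatches = []
    for field in sorted(application.keys() & extracted.keys()):
        stated, found = application[field], extracted[field]
        baseline = max(abs(stated), abs(found))
        if baseline == 0:
            continue  # both values are zero, nothing to compare
        if abs(stated - found) / baseline > tolerance:
            mismatches.append(Mismatch(field, stated, found))
    return mismatches


# Hypothetical values: the application states 85,000 in annual income,
# while the pay-stub extraction yields 78,500.
application = {"annual_income": Decimal("85000"), "loan_amount": Decimal("320000")}
extracted = {"annual_income": Decimal("78500"), "loan_amount": Decimal("320000")}

for m in reconcile(application, extracted):
    print(f"{m.field}: application={m.application_value} document={m.document_value}")
# prints: annual_income: application=85000 document=78500
```

In a design like this, the mismatch list is what the agent would hand to an underwriter as evidence, rather than something it resolves on its own.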

How credible is the timeline and the technical stack?

- Timeline credibility: FinAi News’ “by yearend” statement is a vendor‑sourced roadmap fragment rather than a formal product release. Finastra’s public history shows a pattern of staged feature rollouts (for example, generative features for Filogix earlier in 2025), so a yearend target is plausible for a phased availability program, but it should be treated as aspirational until Finastra issues an official product release or publishes a firm availability schedule.

- Cloud and model stack: FinAi News explicitly says Microsoft Azure will be used for the mortgage AI capabilities. That aligns with Finastra’s prior Azure collaborations — notably for Assist.AI in trade finance, which uses Microsoft Azure OpenAI Service — and with Finastra’s enterprise‑grade preference for major cloud providers. It’s reasonable to expect Azure as the runtime and model hosting environment, though precise model choices (e.g., which LLM(s), whether fine‑tuned or proprietary layers) are not specified in the public reporting. That detail remains unverifiable without a Finastra spec sheet or partner statement. (finainews.com)

- Integration approach: Finastra’s architectural pattern tends to be “open platform + partner integrations.” Mortgagebot already ships with workflow automation and third‑party connectors, which is consistent with deploying an agent as a workflow engine layer that orchestrates existing services rather than a monolithic autonomous product. Expect the agent to operate with clearly defined system roles and API contracts inside the LOS.

Technical design expectations and what’s missing from public reporting

What we can reasonably expect

- A hybrid architecture: agentic orchestration layer + cloud LLMs for reasoning + classical ML/OCR for document parsing. This is the dominant pattern used by lenders moving from prototypes to production. It balances explainability and control with advanced reasoning.

- Role‑based access and audit logs: any responsible deployment for mortgage origination must include detailed auditable traces for actions the agent takes (documents read, API calls, messages sent), both for compliance and for later remediation. Expect this to be a formal requirement for lenders adopting an agentic tool.

- Human‑in‑the‑loop gates: early deployments will likely preserve human decision authority for any underwriting decision or policy exception. Agents will automate tasks and prepare analyses, but sign‑off points will remain with loan officers or underwriters for the foreseeable future. Finastra’s own public posture emphasizes augmentation over replacement.
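
One way to picture a human‑in‑the‑loop gate is as a deny‑by‑default lookup against an autonomy matrix. The sketch below is purely illustrative: the action names, the three autonomy levels, and the `authorize` function are assumptions made for this example, since the public reporting does not describe how Finastra will define or enforce such boundaries.

```python
from enum import Enum
from typing import Optional


class Autonomy(Enum):
    AUTONOMOUS = "autonomous"          # agent may act without review
    NEEDS_APPROVAL = "needs_approval"  # a human must sign off first
    FORBIDDEN = "forbidden"            # agent may never perform this


# Hypothetical autonomy matrix; action names are illustrative only.
AUTONOMY_MATRIX = {
    "request_missing_document": Autonomy.AUTONOMOUS,
    "draft_borrower_message": Autonomy.AUTONOMOUS,
    "order_appraisal": Autonomy.NEEDS_APPROVAL,
    "approve_policy_exception": Autonomy.FORBIDDEN,
}


def authorize(action: str, approved_by: Optional[str] = None) -> bool:
    """Return True if the agent may execute `action` right now.

    Actions missing from the matrix are treated as forbidden, so a
    new capability stays blocked until someone classifies it.
    """
    level = AUTONOMY_MATRIX.get(action, Autonomy.FORBIDDEN)
    if level is Autonomy.AUTONOMOUS:
        return True
    if level is Autonomy.NEEDS_APPROVAL:
        return approved_by is not None
    return False


print(authorize("request_missing_document"))                     # True
print(authorize("order_appraisal"))                              # False
print(authorize("order_appraisal", approved_by="underwriter_17"))  # True
print(authorize("approve_policy_exception", approved_by="vp_1"))  # False
```

Keeping the matrix as plain data rather than logic scattered through the agent means it can be reviewed by compliance staff and attached to a procurement contract as written.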

What the public reporting does not disclose (and must be verified)

- Exact autonomy boundaries: how many and which actions the agent can take without human review? The FinAi News item describes assistance across document processing and borrower engagement but does not list hard autonomy limits. That is a central safety and regulatory question and is currently underdocumented. (finainews.com)

- Data residency, model governance, and training sources: mortgage data is sensitive and regulated. There is no public specification in the report about whether models are trained on customer data, how customer data is protected, or whether models operate in a tenant‑isolated manner. Finastra’s whitepapers emphasize governance, but the details must be confirmed before any lender adopts agentic actions in production.

- Fail‑safe mechanisms: what happens if the agent misclassifies a document or accidentally triggers an escalation? Public reporting is silent — vendors need to publish incident response, rollbacks, and observability playbooks for enterprise buyers.

Governance, regulation and safety — the non‑negotiables

Agentic tools are quickly becoming subject to scrutiny from multiple perspectives: internal risk teams, external regulators, and open‑standards groups. The industry is already creating the governance scaffolding that will shape production deployments:

- Standards and interoperability: the Agentic AI Foundation (AAIF) and projects such as the Model Context Protocol and AGENTS.md aim to provide neutral protocols and tooling to make agentic systems more auditable and composable. Lenders and vendors will be pressured to adopt these standards or equivalent controls to manage third‑party risk.

- Security and adversarial risk: agentic systems that can call services open new attack surfaces, including prompt injection, unauthorized action execution, and supply‑chain attacks. Academic work and vendor whitepapers are already proposing runtime defenses, behavior certificates, and authenticated workflow models; these must be part of any banking deployment.

- Regulatory expectations: financial regulators (national and regional) will demand demonstrable controls around decision explainability, data handling, and escalation paths. These are not just theoretical: the compliance obligations that surround mortgage disclosures and anti‑money‑laundering checks mean vendor certifications and independent audits will be a gating requirement for many banks. Finastra’s public materials stress compliance and auditability as product pillars — but lenders should demand detailed, testable evidence in procurement.

Competitive landscape: who else is moving and why it matters

Finastra is not alone. A growing cohort of specialist vendors, system integrators and platforms are building agentic modules for lending:

- Niche LOS vendors and originator platforms are experimenting with “Broker Assist” and similar agents to reduce broker friction and accelerate decisioning. Recent partner announcements in the UK and Canada show regional pilots.

- System integrators and consultancies are packaging agentic hubs to industrialize deployment across KYC, underwriting, and servicing; Sutherland’s FinAI Hub is a recent example targeting banking and FS use cases. This signals the move from pilots to productized solutions.

- Major platform vendors (including cloud providers and ERP/CRM players) are embedding agentic features into enterprise product lines. That amplifies both adoption velocity and the push for open governance standards.

Business impact: operational wins and new cost centers

Agentic assistants promise measurable short‑term benefits, but they also create new operational responsibilities.

Expected near‑term benefits

- Faster turn times: automating document triage and evidence assembly can reduce cycle times and speed closings.

- Reduced rework: consistent checks and automated reconciliation reduce human error and rework costs.

- Better borrower experience: proactive communications and faster response times reduce dropouts.

New cost centers

- Model ops and governance teams: lenders will need in‑house or outsourced MLOps to manage models, monitor drift and run audits.

- Incident response and remediation: if an agent takes a misstep, lenders need playbooks for corrective action and remediation.

- Vendor risk management: integrating an agent alters vendor risk profiles; procurement and legal teams must expand SLAs, audit rights and data protection clauses.

Procurement priorities

- Clear autonomy matrix (which actions the agent can take autonomously).

- Audit trail and explainability guarantees.

- Data residency and encryption commitments.

- Incident response SLAs and test evidence.

- Third‑party security certifications and independent evaluations.

Developer and IT operations implications

For IT teams inside banks, integrating an agent into Mortgagebot will feel like a typical integration project, with extra emphasis on safety and observability.

Key technical workstreams

- Integration testing across LOS APIs, appraisal ordering, credit feeds, and document repositories.

- Instrumentation for tracing agent decisions end‑to‑end (who/what changed what, and why).

- Access controls and identity mapping between agent identities and human roles.

- Replay and audit capabilities so regulators and internal auditors can reconstruct any automated decision.
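
The tracing and replay workstreams above usually come down to an append‑only log that auditors can trust has not been edited after the fact. A common general technique for this is hash chaining, sketched below. It is an assumption for illustration rather than anything the reporting attributes to Finastra; the function names, actor identifiers, and entry fields are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical (sorted-key) JSON encoding of the entry."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, actor: str, action: str, detail: dict) -> dict:
    """Append an entry whose hash covers its content and the previous hash."""
    body = {
        "seq": len(log),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "detail": detail,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; False if any entry was altered or reordered."""
    prev_hash = GENESIS_HASH
    for seq, entry in enumerate(log):
        body = {k: v for k, v in entry.items() if k != "hash"}
        if (entry["seq"] != seq
                or entry["prev_hash"] != prev_hash
                or entry["hash"] != _digest(body)):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical actors and actions, for illustration only.
log = []
append_entry(log, "agent:doc-intake", "document_read", {"doc_id": "paystub-001"})
append_entry(log, "human:underwriter_17", "approval_granted", {"action": "order_appraisal"})
print(verify_chain(log))                     # True
log[0]["detail"]["doc_id"] = "paystub-999"   # simulate after-the-fact tampering
print(verify_chain(log))                     # False
```

Recording both agent and human actors in the same chain also covers the identity‑mapping workstream: a reviewer can see which approvals preceded which automated actions. A production system would additionally need the log stored somewhere the agent itself cannot rewrite.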

Where the gaps and risks remain — a cautionary appraisal

- Unverified autonomy limits: public statements do not yet enumerate the precise actions the Finastra agent will take without a human signer. That lack of specificity increases procurement risk and should be an immediate question for prospective buyers. (finainews.com)

- Data governance ambiguity: mortgage files contain PII, income data, and other regulated attributes. Vendors must disclose whether inference or fine‑tuning will use customer data, and how they guarantee tenant isolation. If a model is trained on pooled or third‑party corpora without strict separation, the vendor‑hosted model becomes a regulatory liability. The FinAi News article references Azure as the platform, but not model training policies — that needs a clear, auditable statement. (finainews.com)

- Adversarial exposure: agents that accept uploaded documents and call services are a new target for financially motivated adversaries. Prompt injection, manipulated documents, and supply‑chain compromise are real threats; the academic community and industry groups recommend runtime enforcement, authenticated prompts, and behavior certificates to mitigate these. Procurement teams should require independent testing against adversarial scenarios.

- Human capital impacts: while vendors promise efficiency gains, lenders must plan reskilling and role redesign for loan officers, processors and underwriters. Early adopters who fail to invest in change management will create internal friction and undercut the projected ROI.

A short checklist for lenders considering Finastra’s agentic offering

- Demand concrete timelines and staged availability milestones with acceptance criteria tied to compliance tests.

- Require an autonomy specification that lists every agent capability and the exact point where human sign‑off is enforced.

- Insist on tenant‑isolated model hosting, explicit non‑use or explicit consent for training on customer data, and strong data residency guarantees.

- Ask for third‑party security and safety evaluations including adversarial testing, and request sample audit logs and replay capability.

- Negotiate contractual indemnities or remediation commitments related to agent‑driven errors that affect regulatory filings or borrower disclosures.

Where this fits in the larger market shift

Finastra’s announcement (as reported by FinAi News) sits inside a broader industry movement: large platform vendors and integrators are packaging agentic capabilities for finance, standards bodies are forming to govern agent behavior, and specialist vendors are building narrow, risk‑focused agents for mortgage workflows. This is not a single‑vendor trend; it’s the start of a structural technology shift for operational finance. (finainews.com)

That said, the technical and governance practices that will determine long‑term success are still emerging. Standards projects and academic safety work are racing to produce testable, auditable norms for agentic behavior; production success will hinge on whether vendors build credible safety controls, traceability, and compliance features into general availability releases.

Conclusion: pragmatic optimism — but require proof

Finastra’s move to add an agentic AI assistant to Mortgagebot is an important and expected step for modern lending platforms. The use case is obvious: mortgage origination benefits enormously from automation that can reason across documents, services and workflows. The FinAi News report is credible in light of Finastra’s prior Azure‑backed AI work and public statements about agentic strategies. (finainews.com)

However, the difference between a promising pilot and safe, compliant production is documentation, governance, and independent verification. Lenders that move quickly should insist on explicit autonomy definitions, tenant isolation for models, auditable decision trails, and third‑party safety testing before enabling any agentic actions that materially affect borrower outcomes or regulatory disclosures. The industry is maturing fast, and standards bodies and vendor roadmaps are following, but for now the correct posture is pragmatic optimism with a strict procurement checklist.

Additional reading and context (vendor and industry materials cited in this report)

- FinAi News’ reporting on the Mortgagebot agentic launch. (finainews.com)

- Finastra’s Assist.AI announcement and their Azure OpenAI collaboration (trade finance example).

- Finastra product pages and viewpoint pieces on Mortgagebot and agentic AI strategy.

- Filogix generative AI press release (Finastra mortgage business).

- Industry moves and platform builds (Sutherland FinAI Hub).

- Neutral governance efforts and standards coordination (Agentic AI Foundation / Linux Foundation).

- Academic and technical analysis of agentic execution risk and auditability (FinVault and related research).

Finastra’s public statements and the FinAi News coverage make it clear that agentic AI in lending is no longer theoretical; it is being productized. The real story over the next nine months will be whether Finastra (and its peers) can deliver measurable operational gains while meeting the non‑negotiable regulatory, security, and audit requirements that lenders — and their regulators — will insist upon.

Source: FinAi News Finastra to launch agentic AI lending tool by yearend