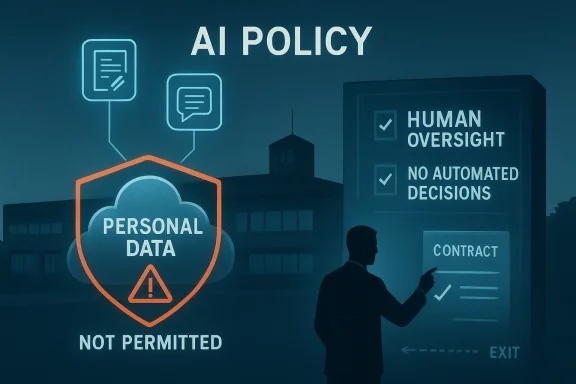

Flintshire County Council’s move toward a formal AI policy is a telling sign of how local government is trying to catch up with a technology that is already seeping into day-to-day public services. The council’s Corporate Resources Overview and Scrutiny Committee has recommended Cabinet adopt rules that would keep residents’ personal data out of AI systems, require human oversight for decisions, and give the authority the ability to exit agreements if serious breaches arise. The message is clear: Flintshire wants the productivity gains of AI, but not at the expense of privacy, accountability, or public trust.

Overview

The debate in Flintshire is not really about whether AI exists; that battle has already been lost. It is about how a council uses AI without drifting into risky, unregulated territory where staff experiment with consumer tools, data protection rules blur, and residents’ information becomes collateral damage. In that sense, the policy is as much about governance as technology.

The council’s draft framework sets a narrow perimeter. It allows AI for research, drafting, summarising, transcription, presentations, and data analysis, but blocks the use of residents’ personal information and rejects automated decision-making at every level. That distinction matters because many of the highest-risk public sector uses of AI are not flashy chatbots, but mundane back-office shortcuts where sensitive data can be copied into tools without proper scrutiny.

Officers were careful to say the policy is not about cutting staff numbers. That point is politically important. In local government, AI often triggers suspicion that efficiency is a euphemism for headcount reduction, and that residents will be told they are getting better service while the workforce is quietly thinned out. Flintshire’s managers appear keen to present AI as an assistive layer, not a replacement strategy.

The timing is also notable. The policy was on the committee’s agenda for 19 March 2026, with Cabinet due to consider it on 24 March 2026. That places Flintshire squarely among councils trying to formalise AI use now, rather than waiting until an incident forces the issue. In local government, that is often the difference between controlled adoption and crisis-driven policy making.

Why this matters now

AI tools are increasingly built into email systems, office suites, transcription apps, document assistants, and analytics products, often switched on by default. Even when an authority thinks it has “banned AI,” staff can still encounter it inside everyday software, which makes blanket prohibition hard to enforce. Flintshire’s approach is more realistic because it tries to regulate use rather than pretend the technology can simply be wished away.

- The policy is designed to manage real-world staff behaviour, not idealised compliance.

- It prioritises data protection over novelty.

- It creates a formal approval route for future AI adoption.

- It signals that automation must remain accountable to humans.

Background

Flintshire has already shown signs that it wants to be a digitally active council rather than a passive consumer of vendor promises. Its wider digital strategy and service transformation agenda suggest an organisation that sees technology as a route to better public services, improved efficiency, and more resilient operations. That broader context helps explain why AI is being considered now rather than treated as a fringe topic.

At the same time, the council operates in the same constrained environment as many UK local authorities: rising demand, tight budgets, legacy systems, and strong obligations around information governance. AI can help with some of those pressures, but only if the authority has a clear framework for risk, procurement, and accountability. Without that, the promise of speed can quickly become the reality of exposure.

This is not Flintshire’s first brush with AI-related debate. In September 2025, the council considered Notice of Motion 6 on artificial intelligence in council services. That motion noted that AI use had already begun and argued for a defined regulatory framework based on values such as data security, accountability, ethics, informed consent, transparency, and contingency planning. In other words, the policy now before the council has been shaped by roughly six months of discussion about the gap between technical enthusiasm and institutional caution. (committeemeetings.flintshire.gov.uk)

The motion also shows how broad the concerns became. It did not focus only on chatbots or productivity tools, but on whether AI systems could access council information, whether vendors might monitor interactions, and whether residents should have meaningful opt-outs where data is shared with AI. That is a strong hint that Flintshire’s policy is not just about today’s tools; it is also about anticipating the next wave of AI-enabled procurement. (committeemeetings.flintshire.gov.uk)

The policy backdrop

Local government across Wales has been moving toward more formal AI governance. Flintshire’s own committee papers show an AI policy on the forward plan for March 2026, with the decision due first at the Corporate Resources Overview & Scrutiny Committee and then at Cabinet. That sequencing is important because it suggests AI is being treated as a corporate governance issue rather than a niche IT matter. (committeemeetings.flintshire.gov.uk)

Wales is also developing a broader policy environment around responsible AI use, with emphasis on ethics, transparency, human oversight, and digital skills. That wider direction helps frame Flintshire’s policy as part of a public-sector trend rather than an isolated local decision. The local authority is effectively trying to align innovation with public-sector caution, which is exactly where a council ought to be.

- Local government AI policy is now moving from theory to procedure.

- Governance and audit processes are central to adoption.

- Vendor risk is becoming as important as technical capability.

- Councils are under pressure to modernise without weakening safeguards.

What the committee is actually proposing

The core of the proposal is simple: AI can be used, but only within strict boundaries. According to the reporting, Flintshire’s draft policy allows AI for research, information gathering, drafting text or images, summarising emails and documents, transcribing meetings, and data analysis. What it explicitly does not allow is the input of residents’ personal data into AI systems. That is an important line in the sand.

The policy also rejects automated decision-making. That may sound obvious, but in practice it is a crucial statement. Many modern systems quietly move from “assistive” to “decision-shaping” by offering a recommended action, ranking cases, or generating a near-complete response for human sign-off. Flintshire appears to be insisting that a person remains responsible for the decision, not merely the final click.

Another important feature is the idea of individual responsibility. Officers and members would be personally accountable for ensuring AI is used appropriately, and the authority would retain the ability to end agreements if serious data breaches occur. That means the policy is not only aimed at software vendors; it is also about staff discipline and procurement leverage.

The most interesting part is that the council seems to accept the inevitability of AI use while trying to corral it. That is a more mature stance than a blanket ban, because a ban often just drives usage underground. A controlled policy, by contrast, gives the council a chance to monitor, train, and enforce.

Permitted uses and prohibited uses

The policy’s permitted uses are telling because they focus on efficiency, not authority. AI can help draft a document, summarise a meeting, or organise information, but it cannot be used to decide a resident’s fate, process personal data casually, or operate without human accountability. That is a sensible line for a public body.

- Permitted: drafting and editing content.

- Permitted: summarising large volumes of text.

- Permitted: transcription and meeting notes.

- Permitted: analysis of non-sensitive data.

- Prohibited: inputting residents’ personal information.

- Prohibited: automated decision-making without oversight.
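To make the split concrete, the permitted and prohibited lists above can be read as a default-deny rule: a request is allowed only if every declared use is on the permitted list. The Python sketch below illustrates that reading. The `Use` categories, the `check_request` function, and the idea that staff declare a use up front are all hypothetical and are not drawn from Flintshire’s draft policy.

```python
from enum import Enum


class Use(Enum):
    """Hypothetical use-case categories mirroring the article's lists."""
    DRAFTING = "drafting and editing content"
    SUMMARISING = "summarising large volumes of text"
    TRANSCRIPTION = "transcription and meeting notes"
    NON_SENSITIVE_ANALYSIS = "analysis of non-sensitive data"
    RESIDENT_PERSONAL_DATA = "inputting residents' personal information"
    AUTOMATED_DECISION = "automated decision-making"


# Default-deny: only these categories are allowed; everything else is refused.
PERMITTED = frozenset({
    Use.DRAFTING,
    Use.SUMMARISING,
    Use.TRANSCRIPTION,
    Use.NON_SENSITIVE_ANALYSIS,
})


def check_request(uses: set[Use]) -> tuple[bool, list[str]]:
    """Return (allowed, reasons); allowed only if every declared use is permitted."""
    if not uses:
        return False, ["no use declared"]
    refused = sorted(u.value for u in uses if u not in PERMITTED)
    return not refused, refused


allowed, reasons = check_request({Use.DRAFTING, Use.RESIDENT_PERSONAL_DATA})
print(allowed, reasons)
```

The design choice worth noting is default-deny: a use nobody has classified, or a request with no declared use at all, is refused rather than waved through, which fits the idea of a formal approval route for future AI adoption.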

Data protection at the centre

The strongest theme running through Flintshire’s policy is not innovation but data protection. That is unsurprising, because councils handle highly sensitive information: social care records, housing data, benefits queries, educational needs, and correspondence from vulnerable residents. Once that data enters a third-party AI model, the authority may lose visibility over storage, reuse, training, and access controls.

The policy’s decision to prohibit residents’ personal data from being entered into AI is therefore the key safeguard. It is a blunt instrument, but blunt instruments can be useful when the technology landscape is still shifting fast. In a setting where not every staff member will understand model retention, prompt logging, or vendor training policies, a bright-line rule is often more enforceable than a nuanced exception process.
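A bright-line rule is easier to back up with a technical check. The sketch below assumes a hypothetical pre-submission screen that scans text for a few obvious UK identifiers (email addresses, postcodes, National Insurance numbers, phone numbers) before it is sent to an AI tool. The patterns are deliberately simplified and the whole screen is an illustration, not anything Flintshire has said it uses.

```python
import re

# Hypothetical, deliberately simplified patterns for a few UK identifiers.
# Real identifiers follow more rules (and appear in more formats) than these capture.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "uk_postcode": re.compile(r"\b[A-Z]{1,2}\d[A-Z\d]?\s*\d[A-Z]{2}\b"),
    "ni_number": re.compile(r"\b[A-Z]{2}\s?\d{2}\s?\d{2}\s?\d{2}\s?[A-D]\b"),
    "uk_phone": re.compile(r"(?:\+44|\b0)(?:\s?\d){9,10}\b"),
}


def screen_prompt(text: str) -> list[str]:
    """Return the names of patterns found in text; an empty list means no obvious match."""
    return [name for name, pattern in PATTERNS.items() if pattern.search(text)]


def safe_to_submit(text: str) -> bool:
    """Block submission if anything looks like personal data (a backstop, not a guarantee)."""
    return not screen_prompt(text)
```

A screen like this can only catch patterns. For `"Please call Mrs Jones on 01632 960123"` it flags the phone number, but the name passes through unflagged, and a free-text description of a resident’s circumstances would not be caught at all. That is why a technical check can support, but not replace, the rule that staff keep residents’ personal data out of AI tools.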

There is also a procurement angle here. The council wants safeguards that would allow it to exit arrangements if serious breaches arise. That matters because the real risk is not only accidental misuse by staff, but contractual lock-in with vendors whose terms are too vague, too broad, or too permissive about data use. Once AI gets embedded in a workflow, changing provider can become both technically and financially difficult.

Flintshire’s approach reflects a growing local-government recognition that information governance is now product governance. It is no longer enough to ask whether software works; councils also have to ask where the data goes, who can see it, whether it can train a model, and what happens if the service disappears or changes hands.

What makes this risk different from older software

Traditional software generally performs a fixed task. AI systems, by contrast, are often probabilistic, vendor-managed, and updated continually. That means the privacy and safety profile can change after procurement, even if the original pilot looked safe.

- AI systems can retain or route prompts in ways staff may not expect.

- Vendor terms can shift after deployment.

- Outputs can be persuasive even when wrong.

- Privacy risks may be hidden behind productivity gains.

- Data handling can be hard to audit retrospectively.

Human oversight and accountability

Perhaps the most reassuring element of the proposal is its rejection of machine-led decisions. Councillor and officer language alike emphasised that AI should not replace staff roles such as social work or customer-facing decision-making. The idea is that AI can support service delivery, not become service delivery.

That distinction is central to legitimacy. Residents are far more likely to trust an authority when a named human remains responsible for advice, triage, and final decisions. If a system simply spits out a recommendation and the human becomes a rubber stamp, accountability has already been diluted. Flintshire seems to understand that and wants to preserve the human chain of responsibility.
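One way to express “a named human remains responsible” in software is to make AI output inert until a named officer approves it. The Python sketch below assumes a hypothetical `DraftResponse` record and `issue` function; it illustrates the principle and does not describe any system Flintshire uses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DraftResponse:
    """Hypothetical record for an AI-assisted draft; it has no effect until approved."""
    text: str
    approved_by: Optional[str] = None  # named officer who takes responsibility

    def approve(self, officer: str, edited_text: Optional[str] = None) -> None:
        """Record a named approver, optionally replacing the AI draft with edited text."""
        if not officer.strip():
            raise ValueError("approval requires a named officer")
        if edited_text is not None:
            self.text = edited_text
        self.approved_by = officer


def issue(draft: DraftResponse) -> str:
    """Refuse to send anything without a human approver, and name that person on the output."""
    if draft.approved_by is None:
        raise PermissionError("draft has not been approved by a named officer")
    return f"{draft.text}\n\nResponsible officer: {draft.approved_by}"


draft = DraftResponse("Thank you for your enquiry.")
draft.approve("A. Officer", edited_text="Thank you for your enquiry. I have checked the details.")
print(issue(draft))
```

Code can enforce that a named person signed off; it cannot enforce that they read the draft properly. Guarding against the rubber-stamp problem still depends on training, workload, and management.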

The policy also places responsibility on individual users. In practice, that means staff can’t hide behind the tool if something goes wrong. If a worker uses a personal AI account to draft a resident response, or pastes sensitive content into an unauthorised system, the responsibility would sit with that individual, and the conduct could be treated as misconduct. That is a strong signal that the authority intends to police behaviour, not merely issue guidance and hope for the best.

The practical enforcement challenge

This kind of policy only works if staff understand it and managers enforce it. Otherwise, it risks becoming a nice document that is read once and forgotten. Training, software controls, and periodic checks will matter as much as the wording.

- Staff must know what is permitted.

- Managers must know how to spot misuse.

- IT teams must identify embedded AI features in existing software.

- Procurement teams must check vendor terms carefully.

- Governance officers must review exceptions regularly.

Ethics, bias, and public trust

One of the more interesting aspects of Flintshire’s discussion is the ethical framing. The earlier Notice of Motion went beyond generic caution and argued that AI systems must not reproduce discrimination or bias, and that equality considerations should be central. That is particularly relevant in local government, where even small biases can have major consequences for service access, eligibility, or triage. (committeemeetings.flintshire.gov.uk)

Ethics is not just about offensive content. It is also about whether a model encodes unfair assumptions in its training data, whether it behaves differently for different groups, and whether the authority can actually test that behaviour. For a council, the bar should be high because public services are meant to be equitable by design. A tool that is “usually accurate” but fails systematically for certain groups is not acceptable in a civic context.

Transparency is another thread. The September 2025 motion said the council should publish a list of AI tools used, the purposes they serve, and assessments made under the principles. That is important because transparency is how public bodies earn trust when using systems that can feel invisible or abstract. Residents may not care which model produced a draft letter, but they absolutely care if the council is using their data in ways they were never told about. (committeemeetings.flintshire.gov.uk)
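A published register does not need to be elaborate. The sketch below assumes a hypothetical record holding the three elements the motion mentions (the tool, its purpose, and the assessment made under the principles) and exports the list as CSV using Python’s standard `csv` module. The field names and the example entry are illustrative only.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class RegisterEntry:
    """Hypothetical entry in a public AI register."""
    tool: str         # which AI tool is in use
    purpose: str      # what the council uses it for
    assessment: str   # summary of the assessment made under the agreed principles


def register_to_csv(entries: list[RegisterEntry]) -> str:
    """Serialise the register to CSV text suitable for publishing."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(RegisterEntry)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buf.getvalue()


register = [
    RegisterEntry(
        tool="Meeting transcription service (example)",
        purpose="Drafting notes of internal meetings",
        assessment="Assessed for data security, accountability and transparency",
    ),
]
print(register_to_csv(register))
```

Plain CSV is a deliberately modest choice: it can be opened in a spreadsheet, published on a web page, and compared between review cycles without special tooling.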

Ethics is operational, not decorative

There is a temptation to treat ethics as a branding exercise. In reality, it needs to be embedded into procurement, monitoring, and use-case approval. If not, “ethical AI” becomes a phrase that appears in policy but never reaches practice.

- Ethics must be tested before deployment.

- Bias checks must be repeated, not assumed.

- Transparency must be public, not internal-only.

- Equality impacts must be documented.

- Complaints and incidents must feed back into policy.

Services, productivity, and the workforce question

Flintshire’s officers were explicit that AI should improve service delivery rather than reduce staff numbers. That is a politically careful line, but it is also economically plausible. The first gains from AI in local government are usually administrative: faster drafting, summarisation, transcription, and triage. Those uses can free up staff time, but they do not automatically translate into fewer jobs. More often, they allow stretched teams to cope with growing caseloads.

That matters because local government is not a factory line. Service demand is variable, human needs are complex, and many tasks require judgement, empathy, and context. A social worker, a housing officer, or a benefits advisor does not just process information; they interpret circumstances. AI can help with the paperwork, but it cannot absorb the human element that residents expect from a council.

There is also an internal morale issue. If AI is presented as a surveillance tool or a stealth redundancy programme, staff will resist it. If it is positioned as a way to reduce repetitive work and improve response times, adoption is more likely. Flintshire’s messaging suggests it wants the latter framing, which is wise.

Likely productivity gains

The best near-term gains are probably low-risk and repetitive. That includes summarising long email trails, drafting routine correspondence, converting meetings into notes, and helping staff search across internal documents. Those are the kinds of tasks where AI can be genuinely helpful without directly touching sensitive decisions.

- Faster first drafts.

- Better meeting capture.

- Easier document summarisation.

- Improved internal knowledge retrieval.

- Reduced admin burden on frontline teams.

Procurement, contracts, and vendor lock-in

A council AI policy is not only a staff handbook. It is also a procurement tool. Once an authority begins buying AI-enabled software, it needs clear questions about data processing, sub-processing, training rights, retention, auditability, and exit terms. Flintshire’s draft appears to recognise that by demanding safeguards and by acknowledging the difficulty of disaggregating an embedded tool later on.

This is an especially important issue because modern software procurement increasingly bundles AI into products by default. A council may think it is buying a generic records system or collaboration suite, only to find that AI assistants, transcription engines, or content suggestions are activated as part of a later update. That means procurement teams need to look beyond the headline product and inspect the hidden AI functions. That is where many authorities get caught out.

Flintshire’s concern about ending agreements after serious breaches is also realistic. If a vendor mishandles data, the council needs to be able to act quickly, not wait for a long contractual wrangle. The ability to suspend or terminate service is a core safeguard, especially where resident data and statutory duties are involved.

Questions every council should ask

A strong AI procurement process should ask more than “does it work?” It should ask how the system handles data, what the vendor can see, and whether human reviewers can override its recommendations. That is the difference between responsible adoption and hidden risk transfer.

- Does the tool process personal data?

- Can residents’ information be excluded from model training?

- Is the AI feature optional or embedded?

- Can the council audit outputs and access logs?

- What is the exit path if the vendor breaches trust?

- How often does the vendor change model behaviour?
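The questions above translate naturally into a structured vendor assessment. The sketch below assumes a hypothetical `VendorAnswers` record completed during procurement and returns a list of red flags. The field names, the wording of each flag, and the 30-day notice threshold (used as a stand-in for “how often does the vendor change model behaviour?”) are illustrative assumptions, not part of Flintshire’s policy.

```python
from dataclasses import dataclass

# Hypothetical threshold: minimum notice a vendor should give before changing model behaviour.
MIN_NOTICE_DAYS = 30


@dataclass
class VendorAnswers:
    """Hypothetical answers gathered during procurement, one field per question."""
    processes_personal_data: bool
    training_opt_out: bool          # can council data be excluded from model training?
    ai_feature_optional: bool       # can the AI feature be switched off?
    audit_logs_available: bool      # can the council audit outputs and access logs?
    exit_on_breach: bool            # is there a termination right for serious breaches?
    model_change_notice_days: int   # notice given before model behaviour changes


def red_flags(a: VendorAnswers) -> list[str]:
    """Return human-readable concerns; an empty list means no flags were raised."""
    flags = []
    if a.processes_personal_data and not a.training_opt_out:
        flags.append("personal data may be used for model training")
    if not a.ai_feature_optional:
        flags.append("AI feature cannot be switched off")
    if not a.audit_logs_available:
        flags.append("outputs and access logs cannot be audited")
    if not a.exit_on_breach:
        flags.append("no exit route after a serious breach")
    if a.model_change_notice_days < MIN_NOTICE_DAYS:
        flags.append(f"less than {MIN_NOTICE_DAYS} days' notice of model changes")
    return flags


bundled_suite = VendorAnswers(True, False, False, True, False, 0)
for flag in red_flags(bundled_suite):
    print("-", flag)
```

Returning a list of reasons, rather than a single pass/fail score, keeps the judgement with the procurement officer: each flag is a prompt for negotiation or refusal, not an automatic verdict.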

Wider implications for Welsh councils

Flintshire’s policy is likely to be watched by other Welsh authorities. Councils across Wales face similar pressures: budget restraint, digital transformation targets, rising demand, and public expectations for faster service. Many will be tempted to use AI for the same reasons Flintshire is considering it. What varies is the level of procedural discipline each authority can bring to the table.

The broader lesson is that local government AI policies are beginning to converge around a few shared principles: no personal data in consumer tools, no automated decisions without oversight, transparent use, staff accountability, and regular policy review. Flintshire’s six-month review cycle is particularly smart because it acknowledges that AI governance is a moving target, not a one-off document. That pace of review is probably the minimum sensible standard in 2026.

There is also a political dimension. Councillors are under pressure to show that digital transformation is not just jargon. Voters want better services, but they are wary of being experimented on. A local authority that can say, credibly, “we use AI carefully, we keep humans in charge, and we don’t feed personal data into public models” will likely find it easier to defend.

Why local consistency matters

If every council writes radically different AI rules, suppliers will exploit the confusion and staff will struggle to understand what is allowed. A broadly consistent public-sector position would make compliance easier and reduce the chance of accidental misuse. Flintshire’s policy contributes to that emerging standard.

- It makes AI governance more concrete.

- It creates a benchmark for other councils.

- It puts privacy before convenience.

- It reinforces human accountability.

- It treats policy as adaptable, not final.

Strengths and Opportunities

The policy’s biggest strength is that it tries to be practical without being reckless. It gives staff room to use AI for productivity, but it does so inside a framework that protects data, preserves human decision-making, and forces the council to think about vendor risk before things go wrong. That combination is exactly what a modern public authority needs.

- Clear red lines on personal data and automated decisions.

- Human oversight remains central to service delivery.

- Staff accountability reduces the risk of casual misuse.

- Regular reviews keep the policy current as AI changes.

- Transparency can build resident trust.

- Procurement discipline may improve future contracts.

- Productivity gains can be realised without pretending AI is a workforce replacement.

Risks and Concerns

The biggest risk is that the policy looks strong on paper but weak in day-to-day practice. Without training, monitoring, and technical controls, staff may still use unauthorised tools, paste data into consumer AI systems, or rely too heavily on machine-generated drafts. There is also the challenge of embedded AI inside software that users may not even realise is enabled.

- Shadow AI use by staff using personal accounts.

- Embedded vendor features that are hard to detect.

- Overconfidence in AI outputs even when they are wrong.

- Policy drift if reviews are too infrequent.

- Procurement lock-in once a tool becomes embedded.

- Uneven enforcement across departments.

- Public misunderstanding if residents assume AI is making decisions.

Looking Ahead

The immediate question is whether Cabinet will adopt the policy as recommended and how quickly the council will turn the draft into operational guidance. Adoption is only the first step; the harder work will be implementation, training, procurement alignment, and periodic audit. If Flintshire gets that right, it could become a useful example of cautious but confident AI adoption in Welsh local government.

The next six months will matter because the technology will keep changing even if the policy does not. New AI features will appear in software suites, vendors will refine their privacy terms, and staff will continue experimenting with tools they already know from home or personal use. The council’s challenge will be to keep pace without overreacting.

What to watch next:

- Cabinet’s formal decision on the AI policy.

- Any staff guidance issued alongside adoption.

- Whether the council publishes a public AI register.

- How procurement language is updated for future contracts.

- Whether other Welsh councils adopt similar safeguards.

- The first signs of practical AI use in council workflows.

- Any clarification on enforcement and disciplinary procedures.

Source: Leader Live, “Flintshire councillors back AI policy to protect residents’ personal data”