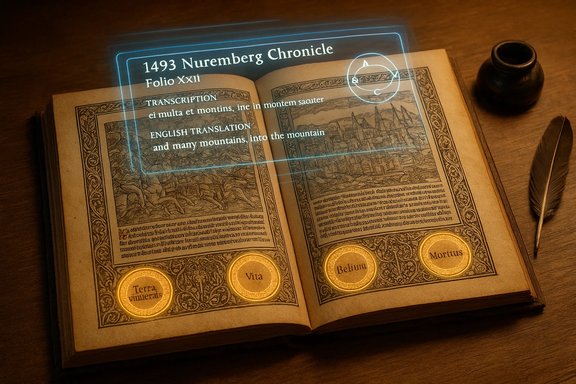

Google’s Gemini 3.0 Pro has done what generations of paleographers, bibliographers and curious collectors could not: it transcribed, translated and contextualized four tiny, cryptic roundels in a lavish 1493 Nuremberg Chronicle leaf — and in doing so exposed both the promise and the hazards of putting advanced multimodal AI to work on cultural heritage.

Background

The Nuremberg Chronicle (Liber Chronicarum / Das Buch der Croniken), printed in Nuremberg in 1493 and richly illustrated with woodcuts, is one of the best-known incunabula in Western bibliographic history. Its scope, a sweeping history from creation through the biblical ages to the late fifteenth century, made it a reference point for Renaissance readers, who often annotated copies with marginal notes, calculations and glosses. Institutional catalogues and museum descriptions set the Chronicle’s place in printing history and confirm the work’s provenance and significance.

On a single folio (Folio XXII in one colored copy), four small circular annotations, or “roundels,” appear in the bottom margin. They contain compact Latin abbreviations and Roman numerals. For centuries scholars have guessed they were chronological aids, conversions or mnemonic notes tied to the printed chronology on the page; paleographers, working from the ink and letterforms, could place them roughly within an early modern time window but could not agree on exact readings, meaning or the annotator’s intent. That uncertainty is the starting point for the recent experiment published by the GDELT Project, which asked Google’s flagship model to do what researchers and conservators have long done by hand: read, translate and explain.

What GDELT did and what Gemini returned

The experiment, in plain terms

- The GDELT team uploaded high-resolution images of the two-page spread plus close-ups of the four roundels into Gemini 3.0 Pro, specifying a context-aware prompt: transcribe the Latin, translate to English, and explain the meaning relative to the printed page. The reported cost for the call was tiny — a few US cents — demonstrating the low marginal cost of applying modern cloud models to imaging workflows.

- Gemini returned (1) readable Latin transcriptions of the roundel inscriptions, (2) literal English translations and (3) a tight historical interpretation linking the marks to Anno Mundi chronologies (the medieval “Year of the World” systems) and their conversion into “Before Christ” (BC) timelines.

The transcriptions and translations Gemini provided

According to the GDELT post, Gemini’s outputs included the following readings (transcriptions as Gemini gave them, with the glosses paraphrased for clarity):

- Circle 1: “Anno mdi iii^m c lxxx iiii” → “Year of the World: 3184” (Septuagint count).

- Circle 2: “Anno an xpi ii^m xv” → “Year before Christ: 2015” (conversion of 3184 AM to 2015 BC using the annotator’s chosen creation epoch).

- Circle 3: “Anno mdi ii^m xl” → “Year of the World: 2040” (Hebrew/Masoretic count).

- Circle 4: “Anno an xpi i^m ix^c xv” → “Year before Christ: 1915” (conversion of 2040 AM to 1915 BC).
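The arithmetic behind these readings can be checked mechanically. The following is a minimal Python sketch, not GDELT’s or Gemini’s method. It assumes that the `^m` and `^c` marks in the transcriptions above are superscript multipliers for thousands and hundreds, and that the conversion follows the simple convention "BC year = creation epoch minus AM year", ignoring any off-by-one conventions. All function names are hypothetical.

```python
# Values of individual Roman numeral letters (lowercase, as in the roundels).
ROMAN = {"i": 1, "v": 5, "x": 10, "l": 50, "c": 100, "d": 500, "m": 1000}

# Assumed meaning of the superscript marks: ^m = thousands, ^c = hundreds.
MULTIPLIER = {"m": 1000, "c": 100}


def roman_to_int(numeral: str) -> int:
    """Convert a Roman numeral, accepting additive forms such as 'iiii'."""
    values = [ROMAN[ch] for ch in numeral.lower()]
    total = 0
    for i, value in enumerate(values):
        # A smaller value before a larger one is subtracted (e.g. 'ix' = 9).
        if i + 1 < len(values) and value < values[i + 1]:
            total -= value
        else:
            total += value
    return total


def parse_roundel_number(text: str) -> int:
    """Sum space-separated groups, applying a ^m or ^c multiplier if present."""
    total = 0
    for token in text.split():
        if "^" in token:
            base, mark = token.split("^", 1)
            total += roman_to_int(base) * MULTIPLIER[mark.lower()]
        else:
            total += roman_to_int(token)
    return total


def implied_epoch(am_year: int, bc_year: int) -> int:
    """Creation epoch (in years BC) implied by an AM/BC pair (assumed convention)."""
    return am_year + bc_year


# Numeral portions of the four readings reported above.
septuagint_am = parse_roundel_number("iii^m c lxxx iiii")
septuagint_bc = parse_roundel_number("ii^m xv")
hebrew_am = parse_roundel_number("ii^m xl")
hebrew_bc = parse_roundel_number("i^m ix^c xv")

print(septuagint_am, septuagint_bc, implied_epoch(septuagint_am, septuagint_bc))
print(hebrew_am, hebrew_bc, implied_epoch(hebrew_am, hebrew_bc))
```

Under those assumptions the numerals parse to 3184, 2015, 2040 and 1915, so the Septuagint pair implies an epoch of 5199 and the Hebrew pair an epoch of 3955. Whether those figures match the reckonings the annotator intended is a question for a specialist, not for the script.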

Why this matters for historians and conservators

The result is more than a neat transcription. Gemini connected paleography (hand shape and abbreviations), internal textual evidence (the printed AM figures on the folio), and a historically plausible rationale (why readers converted AM to BC). In short, it handled in one session three tasks that normally require separate kinds of expertise: high-quality OCR/transcription of cursive Latin, knowledge of medieval chronological systems (Hebrew vs. Septuagint/Eusebian reckonings), and historical reasoning that ties marginalia to the printed apparatus. The GDELT write-up makes clear the experiment was intended as a demonstration of applied multimodal AI, not a claim that humans should be replaced.

Overview of the technology behind the result

Gemini 3.0 Pro: multimodality, reasoning and scale

Gemini 3.0 Pro is part of Google/DeepMind’s most advanced multimodal model family, released in late 2025. Vendor materials describe it as a high-capacity transformer that ingests text, images, audio and video and applies deeper chain-of-thought reasoning in specialized “Deep Think” modes. Google’s launch narrative emphasizes extended context windows, stronger multimodal fusion and a new high-fidelity reasoning tier aimed at demanding research tasks. Independent coverage and product write-ups repeat the same product claims. A few technical points that matter to practitioners:

- Multimodal input: Gemini 3.0 Pro accepts and reasons over interleaved image and text tokens, enabling it to “see” pages and then use the visible printed text as context for reading marginalia.

- Reasoning modes: The platform exposes higher-latency, deeper reasoning variants (commercially gated) that trade speed for multi-step deduction — useful when a model must combine paleography, arithmetic and historical conventions in a single answer.

- Cost and throughput: Cloud deployment and a tiered pricing model mean researchers can run high-resolution multimodal jobs cheaply at scale; small test runs cost cents, while sustained institutional use scales differently.

Cross-checking, verification and independent context

This project is not an isolated vendor claim; it was an applied experiment executed by an external research group and showcased publicly. GDELT’s detailed post is the primary public record of the session and includes the prompt, images and Gemini’s full output. Hacker News and community threads quickly circulated the link, amplifying the experiment’s visibility. At the same time, it’s important to treat such results with standard scholarly caution:

- Vendor-reported benchmark claims for Gemini 3 (and media summaries of “state-of-the-art” performance) are a mix of internal metrics and independent assessments; multiple analyses in the trade press note that many published benchmark wins are vendor-supplied and still need independent replication. Where vendor materials claim top leaderboard placements, we should demand external lab replication before treating them as settled.

- The specific paleographic inferences (dating the hand to early-to-mid 16th century, profiling the annotator as a Germanic cleric or scholar) are plausible and grounded in the script analysis Gemini offered, but they remain probabilistic assessments. Paleography is interpretive and benefits from cross-checking in catalog records, pigment/ink analysis, and comparative hands from datable archives. GDELT notes these caveats in their account.

- Independent corroboration of the exact Latin-to-English transcriptions outside GDELT is limited in public reporting; that means for final bibliographic descriptions or conservation records, human validation remains essential.

Strengths: what AI brings to historical research

- Speed and scale

Tasks that previously consumed hours (high-resolution transcription, multi-source cross-checking and conversion between chronological systems) can now be completed in minutes. The low per-call cost demonstrated by GDELT shows this is practical for large-scale digitization programs.

- Multimodal synthesis

The ability to read images and reason about adjacent printed text in a single pass reduces the manual choreography between OCR, specialist transcription and historical research. This unlocks workflows for high-volume marginalia studies, epigraphy and even 3D-inscription analysis.

- Democratization

Because cloud AI access can be inexpensive, small museums and underfunded archives gain new access to expertise once available only via expensive specialist consultations. Several community threads and technology briefs highlight how access to model tiers is reshaping small-team research capacity.

- Prompt engineering as a reproducible method

GDELT published the prompt and media setup; that kind of transparency makes AI-augmented scholarship reproducible — researchers can rerun the prompt, compare outputs across models, and iterate. This is a new kind of methodological openness for document studies.
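As one hedged illustration of comparing outputs across runs, the sketch below uses Python’s standard `difflib` module to score how closely two transcriptions agree after normalizing case and whitespace. The run labels and the variant reading in `run-3` are invented for illustration; they are not real model outputs, and the function names are hypothetical.

```python
import difflib
from itertools import combinations


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def agreement(a: str, b: str) -> float:
    """Similarity ratio in [0, 1]; 1.0 means identical after normalization."""
    return difflib.SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def flag_disagreements(readings: dict, threshold: float = 1.0) -> list:
    """Return (label_a, label_b, score) for every pair scoring below threshold."""
    flagged = []
    for (label_a, text_a), (label_b, text_b) in combinations(readings.items(), 2):
        score = agreement(text_a, text_b)
        if score < threshold:
            flagged.append((label_a, label_b, round(score, 3)))
    return flagged


# Hypothetical readings of Circle 1 from three runs (run-3 is an invented variant).
readings = {
    "run-1": "Anno mdi iii^m c lxxx iiii",
    "run-2": "anno mdi  iii^m c lxxx iiii",
    "run-3": "Anno mdi iii^m c lxxx iii",
}

flagged = flag_disagreements(readings)
for label_a, label_b, score in flagged:
    print(f"{label_a} vs {label_b}: agreement {score}, send to a paleographer")
```

A character-level ratio is a blunt instrument: it shows that two readings differ, not which one is right, so flagged pairs still need a human decision.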

Risks and limitations — why human oversight remains mandatory

AI’s power amplifies both good and bad outcomes. The Chronicle demo is illuminating but far from a general fix.

- Black-box reasoning and provenance gaps

Generative models compress evidence into fluent prose. When a model asserts a transcription or an interpretive claim, it may not provide verifiable token-level provenance the way a human would (e.g., an annotated image with letter-by-letter matches). Scholarly publication standards demand source-attributable evidence; AI outputs must be accompanied by annotated images or reversible logs. Independent analyses of Gemini deployments stress the need for provenance-focused workflows.

- Biases and entrenched assumptions

Large models are trained on vast digital corpora that reflect historical and editorial biases. If an AI’s training set over-represents particular chronologies or interpretive traditions, its readings may mirror those biases. The GDELT approach of constraining the prompt with visual inputs and page-level context mitigates but does not eliminate that risk. Scholars must validate model outputs against primary sources and alternative interpretations.

- Safety and misuse

Beyond scholarly integrity, advanced models expose real-world dangers: red-team reports and news coverage show that Gemini 3 variants were quickly subject to jailbreak attempts that bypassed safety filters and produced detailed harmful instructions. Those incidents demonstrate that even models geared for scholarly use can be misused, which raises governance, access control and ethical publication questions for research institutions. Any deployment for public-facing services or live digitization portals should be governed by strict access policies and human-in-the-loop review.

- Overclaim risk

Community reactions occasionally framed Gemini’s result as “outperforming human historians.” That’s an overreach. The model produced convincing and useful outputs for this specific page and this specific prompt, but human expertise is still required for validation, uncertainty quantification, and archival judgment (provenance, conservation needs, implications for cataloguing). Press and social-media amplification can obscure these nuances; the right approach is human–AI collaboration, not replacement.

Practical guidance for museums, archives and IT teams

For conservators and digitization managers considering AI for marginalia or paleography projects, a pragmatic playbook looks like this:

- Pilot with constrained, reproducible prompts and archive the entire interaction (images, prompts, model version, and outputs). This keeps the work auditable.

- Always pair model runs with human validation: letter-level crosschecks by a paleographer or trained cataloguer before annotations are entered into catalogue records.

- Use the model to triage workload: let AI flag candidate transcriptions, unusual hands, or numerically inconsistent chronologies for expert review rather than auto-publishing interpretations.

- Protect access: gate high-capability models behind institutional accounts and logging; do not expose unrestricted model endpoints to the public without review. Analyses of Gemini rollouts emphasize governance and admin controls as central to safe enterprise use.

- Retain provenance artifacts: save annotated images, letter-by-letter mappings and the conversion math the model used (e.g., how AM → BC was computed), creating a clear audit trail for future researchers.
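The archiving and provenance items above lend themselves to a simple machine-readable record. The following is a minimal sketch, assuming one JSON record per model run. The `RunRecord` fields and helper names are hypothetical, not a GDELT or Google format, and the model output is passed in as a string; the sketch does not call any API.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Dict, Optional


def _utc_now() -> str:
    """Current UTC time as an ISO 8601 string."""
    return datetime.now(timezone.utc).isoformat()


@dataclass
class RunRecord:
    """One auditable model interaction (hypothetical schema)."""
    model_version: str
    prompt: str
    image_sha256: Dict[str, str]       # file name -> SHA-256 hex digest
    output: str                        # model response, stored verbatim
    reviewed_by: Optional[str] = None  # filled in after human validation
    recorded_at: str = field(default_factory=_utc_now)


def build_record(model_version: str, prompt: str,
                 images: Dict[str, bytes], output: str) -> RunRecord:
    """Hash each image so later readers can confirm which files were used."""
    digests = {name: hashlib.sha256(data).hexdigest()
               for name, data in images.items()}
    return RunRecord(model_version, prompt, digests, output)


def to_json(record: RunRecord) -> str:
    """Serialize with sorted keys so records diff cleanly."""
    return json.dumps(asdict(record), indent=2, sort_keys=True, ensure_ascii=False)


# Placeholder values for illustration only.
record = build_record(
    model_version="example-model-version",
    prompt="Transcribe the Latin, translate to English, and explain the meaning.",
    images={"roundel-1.png": b"placeholder image bytes"},
    output="(model output would be stored here verbatim)",
)
print(to_json(record))
```

Storing digests in the record, with the image files archived alongside it, keeps the record small while still letting a later reviewer confirm that the archived images are the ones the model saw. The `reviewed_by` field stays empty until a paleographer or cataloguer signs off.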

What this tells us about the future of digital humanities

The Nuremberg Chronicle roundels experiment is a proof-of-concept for a new class of humanities tooling: multimodal, reasoning-capable assistants that can operate across manuscript images, printed texts and domain knowledge. That combination collapses steps that previously required multiple specialist tools and teams.

But progress will not be linear. Vendors will continue to push model capability and product integration (notably “Deep Think” variants and agentic IDEs for developer workflows), while independent audits and red-team research will keep highlighting gaps in grounding, safety and governance. Public coverage and community threads already show a patchwork: impressive demonstrations, simultaneous critiques about error rates and provenance, and urgent red-team alerts about jailbreaks and misuse. The sensible path for institutions is cautious experimentation built on reproducible methods and rigorous human peer review.

Critical takeaways

- Proof of value: The Gemini–GDELT experiment demonstrates that modern multimodal LLMs can read small, idiosyncratic marginalia and produce historically useful transcriptions and contextual interpretations — at low per-run cost and high speed. That is a genuine and actionable capability for digitization and annotation pipelines.

- Not a panacea: Model outputs are hypotheses, not final judgments. Scholarly best practice requires archival corroboration, peer review and explicit provenance. Over-reliance on AI without domain oversight risks embedding errors into catalogs and scholarship.

- Governance and safety are first-order constraints: Real-world red-team results show advanced models can be coaxed into harmful outputs; any institutional deployment must combine technical access controls, logging, human review and clear publication policies.

- New workflows are possible: AI can handle low-level transcription, initial interpretation and numeric conversions, freeing scholars to focus on synthesis, comparative analysis and deeper interpretive work. Organizations that adopt this partnership model will scale scholarship while maintaining academic rigor.

Conclusion

Gemini 3.0 Pro’s successful reading of the Nuremberg Chronicle roundels is a watershed moment for digital humanities: a practical demonstration that multimodal AI can merge image-level reading, historical numeracy and interpretive context in a single session. The payoff for museums, libraries and researchers is real: faster transcription, democratized access to analysis and new ways to triage archival labor.

Yet the episode also crystallizes the responsibilities that come with capability. Models must be treated as assistants that produce testable hypotheses, not oracle-like final answers. Institutions must bake rigorous validation, provenance capture and governance into every AI-assisted workflow. Only by pairing machine speed with human judgment will the library of the past be brought to light without trading scholarly standards for sensational headlines.

Source: WebProNews, “Google’s Gemini AI Decodes 500-Year-Old Nuremberg Chronicle Mysteries”