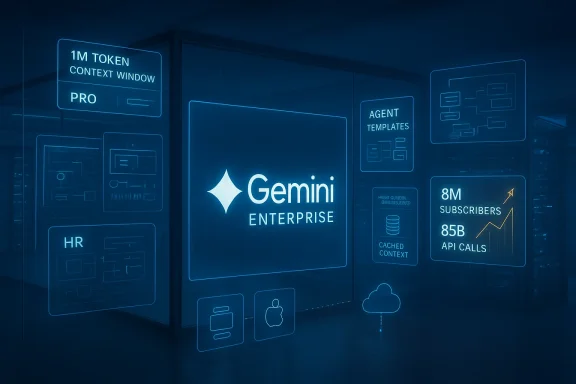

Google’s enterprise AI business has reached an inflection point: Gemini Enterprise now reportedly counts roughly 8 million paid subscribers, while Gemini API traffic more than doubled, rising from about 35 billion to 85 billion requests in a five‑month span. This milestone marks a notable commercial turn for Google Cloud’s AI stack, driven by a combination of model advances, platform features aimed at non‑technical users, handset and OS integrations, and heavy investments in custom silicon and infrastructure.

Source: Lapaas Voice Gemini Enterprise reach 8 million subscribers

Background

Over the past 18 months the market for enterprise AI has shifted from experimentation toward production, and from simple chatbots to agentic platforms capable of automating multi‑step workflows, reasoning across massive context, and integrating directly with corporate data and business processes. Google’s Gemini family evolved on two fronts: the underlying models (Gemini 2.5 → Gemini 3 series) and the application layer around them (Gemini Enterprise, agent builders, and vertical offerings such as Gemini Enterprise for Customer Experience). Together, these moves have been central to the growth numbers now being reported. The Information’s reporting, corroborated by contemporaneous business coverage, is the clearest public source for the 8M figure and the API growth curve; both reflect internal usage and sales telemetry that point to rapid enterprise adoption and developer engagement. That said, the precise subscriber definition (paid seats vs. registered seats, active vs. cumulative) and the breakdown by contract type are not fully public, so readers and procurement teams should treat headline counts as directional rather than as audit‑grade figures.

What changed: product and platform drivers

Gemini 3: longer memory, deeper reasoning, multimodal scale

Gemini 3 (the “3” series) introduced capabilities that materially change how organizations use generative AI. Google’s developer documentation lists the specs enterprises care most about: a 1‑million‑token input context window (with a large output token allowance) and “Pro” / “Flash” variants tuned for high‑reasoning and multimodal workloads. The docs also expose new runtime controls, such as a thinking_level parameter, that make the models reason more deliberately on complex tasks. These features let enterprises feed large codebases, sets of legal documents, or long technical manuals into a single prompt, enabling faster end‑to‑end analysis and automation. Why it matters: a 1M‑token window plus improved reasoning reduces the need to shard or pipeline long documents and opens use cases, from automated contract review and compliance analysis to large‑scale code refactoring, that used to be slow, error‑prone, or simply impractical. For enterprise buyers, that means less pipeline engineering and lower end‑to‑end latency, which shifts adoption decisions away from experimentation and toward near‑term operational ROI.

Agentic workflows and no‑code agent builders

Gemini Enterprise is being positioned as more than a chat surface: Google now markets it as a platform for "agentic" automation. Google’s enterprise tooling provides prebuilt agent templates and a no‑code/low‑code studio that lets HR, Finance, Sales, and CX teams create multi‑step agents (refund processing, supply‑chain audits, claims triage) without writing model orchestration code. Lowering the barrier to agent building reduces friction for business adopters and narrows the gap between what technical and non‑technical users can automate. Announcements at NRF 2026 highlighted specialized CX agents and a centralized Agent Studio for management and oversight. The practical consequence is a larger, stickier addressable market: seat licenses no longer need to be limited to developers and data scientists, because knowledge workers become active consumers and creators of value on the same platform.

Verticalized packaging: Gemini Enterprise for CX

At NRF 2026 Google launched a specialized offering, Gemini Enterprise for Customer Experience (CX), that bundles shopping and customer service agents into a single interface. Retailers such as Kroger, Lowe’s and Woolworths were named as early adopters in PR materials, reflecting a strategy of prepackaged vertical offerings that accelerate enterprise deployments. The CX package emphasizes conversational commerce, intent analysis across customer interactions, and post‑purchase resolution agents, precisely the functionality retail and foodservice enterprises want to scale.

Infrastructure and pricing: Ironwood TPUs and competitive commercials

Behind the product story is a large infrastructure investment. Google’s Ironwood TPU family (its seventh‑generation TPU, focused on inference) was introduced to supply the throughput and memory footprint that Gemini 3 and agentic workloads require. Google’s materials describe Ironwood as substantially more capable than earlier generations (higher HBM capacity, improved interconnects, and much larger inter‑chip scaling), which helps reduce latency and cost for very large inference loads. Google also adjusted pricing and introduced batch APIs and context caching to lower the cost per operation for high‑volume enterprise customers. On pricing, industry commentary suggests Google has been aggressive on enterprise contracts, often undercutting rivals on headline per‑seat or per‑usage rates. That pricing, combined with deep integration into Google Workspace, Android, and cloud infrastructure, is an important commercial lever behind fast uptake. Pricing claims are market‑sourced and vary by contract; procurement teams should still run their own TCO analyses and pilot tests against real workloads.

Evidence and verification: the numbers behind the headlines

- 8 million enterprise subscribers: reported by The Information and picked up by multiple industry outlets; Google reportedly confirmed the figure to reporters. This is a major commercial signal, but the underlying definitions (subscribers vs. seats, paid active seats vs. registered accounts) are not fully public. Treat the 8M figure as directionally credible while seeking contract‑level confirmation for large procurement decisions.

- API traffic: the jump from approximately 35 billion API calls to 85 billion over five months was reported from internal telemetry reviewed by reporters. Independent industry outlets and blogs have echoed the magnitude of the growth, which aligns with other public signals of increased developer activity. Growth at this pace is consistent with a strategy that pairs new models with platform tooling and device distribution.

- Model specs: Gemini 3’s 1‑million‑token context window and Pro/Flash variants are documented in Google’s Gemini API developer pages, which also list pricing tiers and the new runtime controls for deeper reasoning. These specifications can be checked directly against that documentation.

- Vertical wins and launches: Google’s PR material and customer press releases (Kroger specifically) confirm the rollout and early enterprise adoption of Gemini Enterprise for CX at NRF 2026. These are verifiable through corporate press statements.

- Infrastructure and financials: Google Cloud’s revenue growth of roughly 34% year‑over‑year (reported in recent quarterly results) and the public rollout of the Ironwood TPU family are corroborated by Google filings, earnings summaries, and Google Cloud communications. Improved cloud performance helps explain investor sentiment and the broader commercial viability of large model deployments.
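The API figures in the second bullet above can be turned into an implied growth rate with simple arithmetic. This is a minimal sketch that rests on two assumptions. The first is that the two reported values (about 35 billion and 85 billion requests) are comparable snapshots taken five months apart. The second is that growth compounded evenly from month to month. The reporting confirms neither assumption, and the helper name is illustrative.

```python
def implied_monthly_growth(start: float, end: float, months: int) -> float:
    """Constant monthly rate r such that start * (1 + r) ** months == end.

    Assumes smooth compounding between two comparable snapshots, which the
    public reporting does not confirm; treat the result as an approximation.
    """
    if start <= 0 or end <= 0 or months <= 0:
        raise ValueError("start, end and months must all be positive")
    return (end / start) ** (1 / months) - 1


# Reported figures: roughly 35 billion and 85 billion API requests, five months apart.
start_requests = 35e9
end_requests = 85e9

multiple = end_requests / start_requests
rate = implied_monthly_growth(start_requests, end_requests, months=5)
print(f"overall multiple: {multiple:.2f}x")   # about 2.43x
print(f"implied monthly growth: {rate:.1%}")  # about 19.4%
```

With these inputs the overall multiple is about 2.43x, which implies a compound rate of roughly 19% per month. Uneven growth, such as a single jump after a model launch, would produce the same endpoints. The rate therefore describes the overall trend, not its shape.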

What this means for enterprises — strategic implications

1. Faster move from pilot to scale

The combination of deeper model capabilities (1M tokens plus improved reasoning) and no‑code agent builders means enterprises can move more use cases into production with fewer specialist engineers. Internal teams can build agents that touch CRM, finance systems, and ticketing platforms, shortening the path from proof of concept to live automation.

2. Mobile and device distribution matters

Gemini’s presence on Android devices and the newly announced Apple tie‑up (Apple’s decision to base the next generation of Apple Foundation Models on Gemini) creates a “device halo” that feeds enterprise adoption. When the AI powering key mobile experiences is the same stack used in the office, mobile‑first workforces get a consistent experience and IT organizations find it easier to standardize on a single vendor for device, endpoint and cloud AI. This cross‑device synergy is a real commercial tailwind.

3. Infrastructure economics shift

Ironwood and related hardware announcements show Google is pushing to lower the marginal cost of inference for very large models. That improves unit economics for high‑volume customers and enables new pricing constructs (context caching, batch discounts). Enterprises that need scale should weigh vendor‑run TPU pods and Axion VM offerings against alternatives to understand total cost and latency profiles.

4. Vendor concentration and data strategy

Large‑scale adoption of a single vendor’s model and platform intensifies vendor concentration risk. The Apple‑Google collaboration underscores how major OEM and cloud relationships can amplify platform reach, but it also raises questions about long‑term portability, negotiation leverage, and data flows. Enterprises must plan for multi‑vendor strategies, clear data residency guarantees, and exit paths.

Strengths: what Google is doing well

- Product depth: Gemini 3’s extended context and new reasoning controls materially broaden feasible enterprise use cases. These are not incremental tweaks; they change the complexity ceiling for tasks the model can handle.

- Platform unification: from Workspace and Cloud to device integrations and verticalized CX packages, Google is building an end‑to‑end stack that simplifies procurement and integration for many customers. That reduces the operational friction that often stalls enterprise LLM projects.

- Infrastructure investment: Ironwood TPUs and related compute innovations are real differentiators for latency‑sensitive and memory‑hungry inference workloads. For enterprises with large inference loads, access to this hardware can lower TCO and reduce latency.

- Aggressive commercial strategy: competitive pricing and verticalized offerings speed seat adoption and expand the buyer base beyond developer teams into business functions. That drives scale and stickiness.
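The first strength above, extended context, is easiest to reason about as a token budget. The sketch below is a hypothetical planning helper, not part of any Google SDK. It estimates token counts with a rough four‑characters‑per‑token heuristic, which is an assumption: real counts depend on the model's tokenizer, and the Gemini API's countTokens method gives exact figures. It then greedily packs documents into prompts that fit under a 1‑million‑token input window, reserving headroom for instructions. A single resulting batch means the corpus can go into one prompt; several batches mean sharding is still needed.

```python
from typing import List

# Input limit cited for Gemini 3 in the article; the reserve keeps room for
# instructions and system prompts. Both values are planning assumptions.
CONTEXT_WINDOW_TOKENS = 1_000_000
PROMPT_RESERVE_TOKENS = 50_000
CHARS_PER_TOKEN = 4  # rough heuristic only, not the model's real tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate a token count by ceiling division of the character count."""
    return -(-len(text) // CHARS_PER_TOKEN)


def plan_batches(
    docs: List[str],
    window: int = CONTEXT_WINDOW_TOKENS,
    reserve: int = PROMPT_RESERVE_TOKENS,
) -> List[List[str]]:
    """Greedily pack documents, in order, into batches that fit the window."""
    limit = window - reserve
    batches: List[List[str]] = []
    current: List[str] = []
    used = 0
    for doc in docs:
        tokens = estimate_tokens(doc)
        if tokens > limit:
            raise ValueError("a single document exceeds the budget; split it first")
        if current and used + tokens > limit:
            batches.append(current)  # close the full batch and start a new one
            current, used = [], 0
        current.append(doc)
        used += tokens
    if current:
        batches.append(current)
    return batches


if __name__ == "__main__":
    # Three documents of ~100k estimated tokens each fit in one 1M-token prompt.
    corpus = ["x" * 400_000] * 3
    print(len(plan_batches(corpus)))
```

With a smaller window, for example window=250_000, the same three documents would need two batches. This is the kind of sharding a larger context window removes.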

Risks and open questions

Data privacy and sovereignty

As enterprises push sensitive workloads into vendor AI stacks, data residency, sovereign cloud options, and customer‑managed encryption become primary concerns. Google is reportedly expanding sovereign data options, but firms operating under strict compliance regimes (for example, EU financial services or Middle East sovereign‑cloud deployments) should validate encryption, logging, and regional custody guarantees before a wide rollout. Claims about expanded residency options and VPC controls are encouraging but should be confirmed contractually.

Regulatory and antitrust scrutiny

The Apple‑Google tie‑up and intensifying partnerships among hyperscalers invite regulatory attention. Antitrust authorities have previously scrutinized search defaults and distribution agreements; closer commercial coupling that shapes mobile OS experiences or creates quasi‑exclusive stacks could prompt fresh regulatory review. Legal teams should monitor filings and be prepared to document compliance and risk controls.

Model behavior and hallucinations

High reasoning scores and larger context windows do not eliminate model errors. Extended reasoning modes add more internal chain‑of‑thought processing, but they can still produce plausible‑sounding hallucinations when grounding signals are weak. Enterprises must keep human‑in‑the‑loop checkpoints for high‑risk decisions and build audit trails, provenance tracking, and test harnesses, particularly for legal, financial, and regulated workflows. Public benchmarks show large gains, but real‑world correctness still depends on prompt design and grounding.

Vendor lock‑in and portability

No‑code agent builders and deep Workspace integrations speed value capture, but they can also increase switching costs. Design integration layers with abstraction where feasible, and insist on exportable logs, agent definitions and retrainable artifacts. Contractual portability guarantees can reduce future migration costs.

Commercial sustainability

Rapid adoption at discounted rates can mask long‑term profitability questions. Industry commentary noted Google’s earlier discounting strategies and the need to convert usage into higher‑margin software subscriptions. Procurement teams should model multi‑year pricing scenarios, including possible rate adjustments for high‑throughput inference or premium reasoning modes.

Practical advice for IT leaders

- Begin with high‑ROI, bounded workloads: legal discovery, codebase analysis, and CX automation where accuracy can be validated and moderate errors are acceptable.

- Pilot agentic workflows with clear SLAs: measure time saved, defect rates, and downstream operational impact before broad seat purchases.

- Lock down data residency and encryption requirements early: obtain contractual commitments for CMEK, VPC controls, and regional hosting.

- Instrument audit trails and human review gates for decisioning workflows: compliance requires traceability, not just better UX.

- Avoid single‑vendor lock‑in for core bundles: prefer exportable agent definitions and multi‑cloud deployment tests where feasible.
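The advice on audit trails and human review gates can be made concrete with a small routing rule. The sketch below is hypothetical. The risk categories, the rule that every auto‑approved output must cite at least one grounding source, and the record fields are illustrative assumptions, not features of Gemini Enterprise. Each agent output is either auto‑approved or held for human review. Every decision is appended to a hash‑chained log, so a later edit to any entry is detectable.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import List

# Hypothetical policy: outputs in these categories always need human sign-off.
HIGH_RISK_CATEGORIES = {"legal", "financial", "regulated"}
GENESIS_HASH = "0" * 64


@dataclass
class AgentOutput:
    task_id: str
    category: str
    text: str
    sources: List[str] = field(default_factory=list)  # grounding citations, if any


def _digest(record: dict, prev: str) -> str:
    """SHA-256 over the record plus the previous entry's hash (canonical JSON)."""
    body = json.dumps({**record, "prev": prev}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: List[dict] = field(default_factory=list)

    def append(self, record: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        self.entries.append({**record, "prev": prev, "hash": _digest(record, prev)})

    def verify(self) -> bool:
        """Recompute the chain; False means an entry was edited or the order broken."""
        prev = GENESIS_HASH
        for entry in self.entries:
            record = {k: v for k, v in entry.items() if k not in ("prev", "hash")}
            if entry["prev"] != prev or entry["hash"] != _digest(record, prev):
                return False
            prev = entry["hash"]
        return True


def route(output: AgentOutput, log: AuditLog) -> str:
    """Hold high-risk or ungrounded outputs for review, auto-approve the rest, log both."""
    needs_review = output.category in HIGH_RISK_CATEGORIES or not output.sources
    decision = "human_review" if needs_review else "auto_approved"
    log.append({
        "task_id": output.task_id,
        "category": output.category,
        "sources": list(output.sources),  # copy so later edits to the output don't alter the log
        "decision": decision,
    })
    return decision


if __name__ == "__main__":
    log = AuditLog()
    print(route(AgentOutput("t1", "cx", "Refund issued", ["order-123"]), log))
    print(route(AgentOutput("t2", "legal", "Clause is compliant", ["contract-7"]), log))
    print(log.verify())
```

Hash chaining is a deliberately simple choice. It makes edits to recorded decisions detectable without any external service. A production system would more likely write to an append‑only store with access controls.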

The competitive landscape and what to watch next

- OpenAI / Microsoft: Copilot and OpenAI offerings continue to dominate in certain installed bases (Office 365 / Microsoft 365 environments), although Microsoft sometimes faces “feature fatigue” among knowledge workers. Google’s momentum lies mainly in enterprise subscriptions and platform breadth across mobile, cloud, and Workspace. Comparisons should be workload‑specific.

- Hardware race: custom accelerators and CPUs (Google’s Ironwood TPUs and Axion CPUs) change the competitive tradeoffs. Enterprises will evaluate which cloud provider delivers the best price/latency for their specific model choices and performance tiers.

- Device ecosystems: the Apple‑Google collaboration and deep Android integrations mean device OEMs now materially affect enterprise AI adoption. Workforces that rely on mixed device fleets should plan for integration and authentication flows across ecosystems.
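For any single workload, the hardware‑race point above comes down to comparing measured cost against a latency target. This is a minimal sketch that assumes a pilot has already produced cost and latency figures for each option. The option names and numbers are placeholders, not real quotes or measurements. Nearest‑rank p95 is one simple percentile definition among several.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class PilotResult:
    cost_per_1k_requests: float  # blended cost measured during the pilot (placeholder units)
    latencies_ms: List[float]    # observed end-to-end latencies for the same workload


def p95(samples: List[float]) -> float:
    """Nearest-rank 95th percentile, using integer math to avoid float rounding."""
    if not samples:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples)
    rank = -(-95 * len(ordered) // 100)  # ceil(0.95 * n)
    return ordered[rank - 1]


def cheapest_within_slo(results: Dict[str, PilotResult], slo_ms: float) -> Optional[str]:
    """Lowest-cost option whose p95 latency meets the SLO, or None if none qualifies."""
    eligible = {name: r for name, r in results.items() if p95(r.latencies_ms) <= slo_ms}
    if not eligible:
        return None
    return min(eligible, key=lambda name: eligible[name].cost_per_1k_requests)


if __name__ == "__main__":
    # Placeholder pilot data, not real vendor quotes or measurements.
    results = {
        "option_a": PilotResult(2.0, [float(x) for x in range(1, 101)]),
        "option_b": PilotResult(1.5, [float(x) for x in range(10, 1001, 10)]),
    }
    print(cheapest_within_slo(results, slo_ms=500))   # option_a: only one under 500 ms at p95
    print(cheapest_within_slo(results, slo_ms=1000))  # option_b: both qualify, b is cheaper
```

Filtering on the latency target before minimizing cost keeps a cheap but slow option from winning by default. When error rates differ between options, measuring cost per successful task instead of per request is a natural refinement.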

Conclusion

The reported 8‑million‑subscriber milestone for Gemini Enterprise and the accompanying API surge are more than headline metrics; they are evidence of a larger market shift. Google’s product strategy (Gemini 3’s expanded context and reasoning), platform investments (Ironwood and agent tooling), and distribution plays (device integrations and vertical CX packaging) together create a compelling proposition for many enterprises. At the same time, legitimate risks remain: data sovereignty, regulatory friction, model reliability, and vendor lock‑in.

For CIOs and procurement teams, the practical path forward is clear: validate the new capabilities with focused, measurable pilots; insist on strong contractual protections for data and portability; and prepare governance frameworks that let the business safely scale agentic automation. The headline numbers show supply and demand aligning, but the lasting winners will be the organizations that combine these powerful tools with disciplined engineering, compliance, and procurement practices.
