GitHub Copilot’s latest controversy says less about one awkward prompt than it does about where the entire AI developer-tools market is heading. If the reports are accurate, Copilot has been surfacing promotional-looking “tips” inside pull requests, including references to the Raycast integration, in a way that some developers feel crosses the line from assistance into marketing. That matters because pull requests are not casual surfaces; they are part of the written record of software delivery, and any hidden commercial signal inside them threatens the trust developers place in the workflow. The allegation is still just that, an allegation, but it lands at a moment when AI tools are becoming more autonomous, more embedded, and more commercialized at the same time.

GitHub Copilot began as a coding assistant, but it is now much more than autocomplete in an editor. GitHub’s own materials describe Copilot as a system that can create pull requests, update descriptions, respond to comments, and participate in review loops, while Microsoft Research frames “Copilot for Pull Requests” as part of the broader PR experience rather than a side feature. That shift raises the stakes dramatically: once an AI tool is writing into a pull request, it is no longer merely helping a developer type faster; it is shaping a shared artifact that reviewers, maintainers, and sometimes entire communities will see.

That evolution also helps explain why the current backlash is sharper than the usual complaints about AI output quality. Developers have become used to AI hallucinations, odd completions, and the occasional irrelevant suggestion. What they are much less willing to accept is the idea that a trusted workflow surface might be used to nudge them toward a product, partner, or ecosystem relationship without clear disclosure. In developer tooling, perceived neutrality is not a nice-to-have; it is part of the product’s functional value.

GitHub has also spent the last year making the PR flow more agentic. Copilot can now be asked to create a pull request from GitHub Issues, the agents panel, Copilot Chat, the CLI, or other agentic tools, and GitHub’s own blog has highlighted internal examples where Copilot authored broad codebase changes and PRs inside GitHub’s own repositories. That makes it clear the company wants Copilot to be a workflow actor, not just a suggestion engine. The more authority the tool gains, however, the more carefully its outputs must be governed.

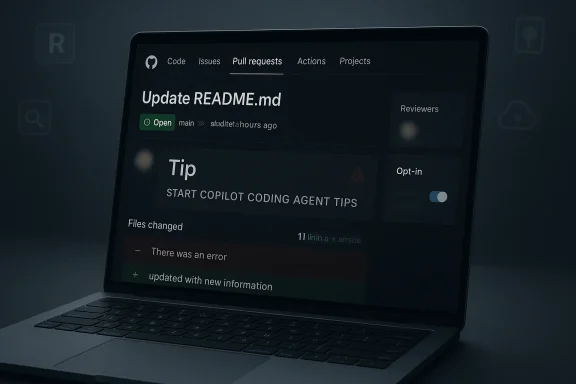

The reason the present story resonates is not just that a promotional sentence allegedly appeared, but that it reportedly appeared at scale. Reports describe the same or similar copy across thousands of pull requests, with some versions tied to a hidden marker labeled “START COPILOT CODING AGENT TIPS.” Scale matters because a one-off oddity can be dismissed as a bug, but repeated behavior across repositories suggests a centralized mechanism, a shared template, or at minimum a repeatable system path. That shifts the question from “what happened once?” to “what kind of system allows this to happen at all?”

There is also a broader industry backdrop worth noting. AI vendors are under pressure to find new revenue paths beyond subscriptions and enterprise licensing, and the market has already started normalizing commercial experimentation inside AI products. OpenAI, for example, has publicly discussed testing ads in ChatGPT while stressing that ads are labeled and separated from answers. That transparency may not satisfy every critic, but it is a far cry from hidden or ambiguous inserts inside a developer workflow.


What the Allegation Actually Means

At the center of the controversy is a simple but uncomfortable question: when does a “tip” become an ad? If a tool inserts a suggestion that points users toward an adjacent product or integration, the line between helpful guidance and marketing can be surprisingly thin. That line gets even blurrier when the message appears inside generated PR descriptions, because the wording looks like it came from the assistant’s own judgment rather than from a disclosed sponsorship.

The wording matters

The reported Raycast mention is significant not because Raycast is obscure, but because it is already part of the Copilot ecosystem conversation. Raycast’s own Copilot extension is marketed as a way to start and track Copilot coding agent tasks from its launcher, including task creation and PR tracking, so a mention of it can plausibly be framed as workflow advice. But plausibility is not the same as consent, and a suggestion that appears automatically inside a PR description is not the same as a note in documentation or a marketplace listing.

If developers are seeing the same phrasing repeated across many repositories, that makes the message feel less like a context-sensitive recommendation and more like a distributed placement. That distinction is critical. A contextual suggestion can be ignored; a repeated insertion into a written artifact looks like a design choice. Once the audience suspects a design choice, trust becomes the thing under review, not just the wording.

Why a hidden marker alarmed people

The hidden-comment angle is what really escalated the story. Reports describe a concealed HTML comment such as “START COPILOT CODING AGENT TIPS,” and that detail resonates because GitHub itself has long treated hidden instructions as a prompt-injection risk. In other words, the company already knows that invisible text inside issues and pull requests can be used to manipulate AI behavior, and its docs say the agent filters hidden characters for exactly that reason. Hidden channels are hard to audit, easy to misuse, and terrible for confidence.

This is why some developers are reacting so strongly even if the inserted copy is not a classic banner ad. The problem is not simply monetization; it is opacity. If an AI assistant is writing into a PR and the user cannot easily tell whether the wording came from a prompt, a template, a partner rule, or a hidden system message, then the output ceases to feel attributable. In a review environment, that uncertainty is poisonous.
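Part of why the marker alarmed people is that an HTML comment disappears once GitHub renders the Markdown, yet it remains in the raw description. A minimal Python sketch of a review aid that surfaces such comments might look like this. The function name and the sample PR body are hypothetical, and the sample reuses only the marker text quoted in the reports, not the actual inserted copy.

```python
import re
from typing import List, Tuple

# Matches HTML comments, including ones that span several lines.
# Rendered Markdown hides these, so they only show up in the raw text.
HTML_COMMENT = re.compile(r"<!--(.*?)-->", re.DOTALL)


def find_hidden_comments(markdown: str) -> List[Tuple[int, str]]:
    """Return (line_number, comment_text) for every HTML comment in a Markdown body.

    Line numbers are 1-based and point at the line where each comment starts.
    """
    found = []
    for match in HTML_COMMENT.finditer(markdown):
        line_number = markdown.count("\n", 0, match.start()) + 1
        found.append((line_number, match.group(1).strip()))
    return found


if __name__ == "__main__":
    # Hypothetical PR description; the tip line is an illustrative placeholder.
    pr_body = (
        "Fixes the retry logic in the upload client.\n"
        "\n"
        "<!-- START COPILOT CODING AGENT TIPS -->\n"
        "Tip: illustrative placeholder text.\n"
    )
    for line, text in find_hidden_comments(pr_body):
        print(f"line {line}: {text}")  # prints: line 3: START COPILOT CODING AGENT TIPS
```

A regex is only an approximation of how a Markdown renderer treats comments. For example, a comment written inside a code span or fenced block is displayed literally, so this sketch would over-report it. For a review aid, erring toward showing too much is usually the safer choice.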

A workflow artifact is not an ad space

Pull requests are collaborative records. They are the place where teams discuss risk, correctness, architecture, and merge intent. That makes them fundamentally different from a sidebar recommendation or a promotional card in a consumer app. Once a partner mention appears in the PR body, it becomes part of the code review narrative, and that is why the same text can feel harmless in one context and invasive in another.

The practical consequence is that a seemingly small insertion raises serious governance questions. Who approved the text? Was it intended to be shown to all users? Can admins disable it? Was there an opt-in mechanism? Those are not cosmetic concerns. They are questions about disclosure, auditability, and consent.

Why Developers React So Strongly

Developers are not just annoyed because they dislike ads. They are alarmed because PRs occupy a high-trust space where people expect explicit, reviewable, and attributable information. A coding assistant can be wrong, but it should not feel like it is trying to sell the team something while it is helping them ship code. In that sense, the backlash is about process integrity as much as commercial intrusion.

Trust is a feature

In developer products, trust is not abstract branding. It affects whether people accept generated suggestions, whether security teams allow the tool into production workflows, and whether enterprise buyers are willing to expand deployment. If users start suspecting that generated content may carry undisclosed commercial bias, every suggestion becomes harder to interpret. That creates friction even when the underlying code assistance remains useful.

The reporting repeatedly returns to this theme: hidden influence is what users resist most. A visible recommendation can be assessed on its merits. An invisible or ambiguous one forces the developer to become a detective. That extra cognitive load may sound small, but in large codebases and busy review cycles it compounds quickly.

Enterprise and consumer users do not react the same way

Consumer apps can sometimes get away with awkward experiments because users expect novelty and churn. Enterprise environments are much less forgiving. Organizations buy software partly on the promise that it will remain predictable, auditable, and policy-compliant, so any suggestion that Copilot output might be shaped by hidden commercial relationships is enough to trigger procurement, compliance, and security concerns.

That distinction matters because GitHub Copilot is not just a toy for hobbyists anymore. It sits inside corporate repositories, regulated industries, and open-source projects that are scrutinized at scale. A stray promotional sentence in a public PR can be archived, screenshotted, quoted, and amplified far beyond the original context. In a world where every workflow artifact can become public evidence, visibility itself becomes part of the risk model.

The comparison to normal ads is misleading

A banner ad is at least obvious. It is marked, separated from content, and usually easier to ignore. The concern here is not a clearly labeled promotional unit; it is a message that may have been embedded in the assistant’s own prose, making it appear native to the workflow. That difference is why the word “tip” bothers people so much. It sounds helpful, but it may function like placement.

- Developers want clarity, not clever framing.

- They want opt-in promotion, not surprise inserts.

- They want audit trails, not invisible system markers.

- They want workflow assistance, not stealth marketing.

- They want predictable output, especially in PRs.

- They want separation between help and monetization.

Copilot’s Expanding Role Changes the Stakes

This controversy would have landed differently two years ago, when Copilot was still mostly associated with inline completion and chat-based assistance. Today it is part of a broader agentic workflow that can touch issues, PRs, reviews, and multi-step task execution. That expansion is strategically smart for Microsoft and GitHub, but it also multiplies the number of places where the assistant can do something surprising.

From autocomplete to workflow actor

The path Copilot has taken is familiar: first suggestions, then chat, then code generation, then PR assistance, and now background task execution. Each step adds value, but each step also increases the tool’s authority inside the development process. If the system can open a draft PR and revise its description, it is no longer just helping a developer think; it is making public-facing changes on their behalf.

That matters because the tolerances for an assistant in a chat window are different from the tolerances for an assistant in a merge request. A loose, conversational suggestion can be ignored. A PR description becomes part of the project record. When a tool can write into that record, the bar for transparency should rise with its capability.

Hidden-instruction risk is already well understood

GitHub’s own documentation acknowledges that hidden text can be used for prompt injection and says the agent filters hidden characters, including HTML comments. That is an important admission, because it proves the company understands that invisible instructions are a real security and governance problem in AI workflows. If a promotional insert is reaching users via a hidden path, then the concern is not just marketing ethics but the integrity of the agent’s control surface.

The problem is amplified by scale. A hidden message that appears in a single repo may be shrugged off as an error. A repeated insertion across many repositories looks systemic, even if intent has not been established. That is why the burden of explanation now sits with the platform owner. A centralized workflow feature creates centralized accountability.
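GitHub does not publish its filter as code, so the following is only a rough Python illustration of the idea the documentation describes, not GitHub’s implementation. It strips HTML comments and invisible Unicode format characters before untrusted issue or PR text reaches a model. The function name and the exact character policy are assumptions.

```python
import re
import unicodedata

# An HTML comment, or an unterminated "<!--" that runs to the end of the text.
HIDDEN_COMMENT = re.compile(r"<!--.*?(?:-->|\Z)", re.DOTALL)


def sanitize_for_model(text: str) -> str:
    """Remove content a reviewer cannot see in rendered Markdown before it reaches a model.

    Drops HTML comments and every Unicode "format" character (category Cf), which covers
    zero-width spaces and joiners, the byte-order mark, and invisible tag characters.
    """
    without_comments = HIDDEN_COMMENT.sub("", text)
    return "".join(ch for ch in without_comments if unicodedata.category(ch) != "Cf")
```

Two design choices are deliberately blunt. An unterminated `<!--` is treated as hiding everything after it. Removing all of category Cf also removes the zero-width joiner used in some emoji sequences. Both choices trade a little fidelity for a guarantee that the model sees no more than a human reviewer does.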

Agentic tools need stricter guardrails

As AI tools become more autonomous, they also need stronger boundaries. In a pull request workflow, that means separate controls for code generation, content generation, partner recommendations, and any form of sponsored guidance. The more a system can write into a public artifact, the more the system needs content policy controls, not just capability controls.

- More autonomy demands more disclosure.

- More integration demands more auditability.

- More scale demands more control.

- More influence demands more consent.

- More workflow authority demands more transparency.
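One way to read "content policy controls, not just capability controls" is as a per-category switch that decides which kinds of generated text may appear in a PR at all. Under this reading, any commercial category is off by default and visibly labeled when enabled. The Python sketch below is a hypothetical model: the category names, defaults, and label strings are assumptions, not actual Copilot settings.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable, List


class Category(Enum):
    """Hypothetical kinds of generated PR text, each governed by its own switch."""
    TASK_SUMMARY = "task_summary"        # describes the change itself
    WORKFLOW_ADVICE = "workflow_advice"  # generic how-to guidance
    PARTNER_RECOMMENDATION = "partner"   # points at a third-party integration
    SPONSORED = "sponsored"              # paid placement


@dataclass(frozen=True)
class Section:
    category: Category
    text: str


@dataclass(frozen=True)
class ContentPolicy:
    """Admin-controlled switches; commercial categories are opt-in."""
    allow_workflow_advice: bool = True
    allow_partner_recommendations: bool = False
    allow_sponsored: bool = False

    def allows(self, category: Category) -> bool:
        if category is Category.TASK_SUMMARY:
            return True  # the description of the change is always permitted
        if category is Category.WORKFLOW_ADVICE:
            return self.allow_workflow_advice
        if category is Category.PARTNER_RECOMMENDATION:
            return self.allow_partner_recommendations
        return self.allow_sponsored


# Commercial text is never emitted without a visible label.
LABELS = {
    Category.PARTNER_RECOMMENDATION: "Partner suggestion: ",
    Category.SPONSORED: "Sponsored: ",
}


def render(sections: Iterable[Section], policy: ContentPolicy) -> List[str]:
    """Keep only the sections the policy allows, prefixing commercial ones with their label."""
    return [
        LABELS.get(section.category, "") + section.text
        for section in sections
        if policy.allows(section.category)
    ]
```

The same function decides both whether a section appears and how it is labeled. As a result, a partner line cannot be switched on without also being disclosed.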

Raycast, Ecosystem Strategy, and the Monetization Question

Raycast is not an outsider in this story. Its Copilot extension is explicitly designed to help users delegate tasks to GitHub Copilot coding agent from the Raycast launcher, which makes it a legitimate part of the surrounding workflow ecosystem. That means the mention itself is not inherently suspicious; what is suspicious is the possibility that it appeared automatically and repeatedly inside PR content.

Ecosystem promotion can be useful or manipulative

A mature platform often wants to steer users toward adjacent tools that improve the experience. That is normal ecosystem strategy, and it has worked for years in app stores, browser platforms, and operating systems. The problem is not that Microsoft or GitHub might want to promote integrations; it is the channel and the framing. An integration note in docs is one thing. A repeated line inside a PR body is another.

If the goal is genuine workflow improvement, the recommendation can be made explicit. If it is a partner promotion, it should be labeled as such. The worst possible version is a natively phrased “tip” that looks like the assistant’s independent judgment while quietly serving a platform relationship. That is the kind of ambiguity that turns a useful ecosystem into a credibility problem.

The monetization backdrop changes perception

The broader AI market is increasingly monetized, and users know it. OpenAI’s public discussion of ads in ChatGPT has already normalized the idea that AI interfaces may carry commercial signals, even if the implementation is meant to be transparent. That makes opaque inserts in another AI product feel even more suspect, because users now know what a more honest ad model looks like.

This is where the business logic runs into user psychology. AI infrastructure is expensive, and every vendor is looking for revenue beyond subscriptions. But the method matters almost as much as the revenue. If commercialization starts showing up inside trusted workflow artifacts, the backlash may cost more than the monetization gains are worth.

Why the partner angle is strategically awkward

If Microsoft is trying to expand the Copilot ecosystem, partner surfaces are inevitable. But the more those surfaces resemble stealth promotion, the more they undermine the product’s core promise: that Copilot is there to help, not to steer. The irony is that ecosystem distribution works best when it is obvious. Hidden promotion often triggers the exact skepticism it was meant to avoid.

- Raycast is a legitimate workflow partner.

- Legitimate partners still need clear labeling.

- Ecosystem growth is not the same as silent placement.

- Partner value rises when users consent to it.

- Hidden promotion risks turning a feature into a funnel.

Security, Governance, and Auditability

There is a reason security-minded teams are paying attention to this story even if they never click on a Copilot tip. Hidden text inside a PR is not just a branding problem; it is a governance issue, a compliance issue, and potentially a supply-chain trust issue if it influences tooling choices or review decisions. In a mature enterprise environment, one opaque mechanism can trigger multiple review teams at once.

Why hidden channels are dangerous

GitHub’s own docs treat hidden characters as a prompt-injection risk, which is exactly the right instinct. Invisible text makes it harder to know what the model saw, what it ignored, and why it generated the output it did. That lack of provenance is bad enough when the content is merely odd; it is much worse if the content carries commercial or partner implications.

The result is a simple but uncomfortable rule: if a system can write into a PR, it must not only be capable but also explainable. Developers need to know where the generated text came from, whether it can be edited, and whether anything outside the task itself influenced it. Without that, the assistant is no longer just an assistant; it becomes an opaque actor in the supply chain.

Enterprise admins will want controls

If GitHub wants Copilot to remain credible in enterprise environments, administrators will need stronger controls over what kinds of content can be generated and where it can appear. That likely means tenant-level toggles, audit logs, opt-in partner guidance, and perhaps the ability to lock PR descriptions against unsolicited edits. None of that is exotic. In fact, most enterprises would consider those baseline controls if the tool can affect written review artifacts.

A robust governance model would probably include the following:

1. Opt-in for any partner suggestions.

2. Separate labeling for marketing copy versus workflow advice.

3. Audit logs showing what prompted generated text.

4. Admin controls to disable promotional inserts.

5. The ability to review or block hidden markers.

6. Clear documentation of all content pathways.

7. A simple way to prevent unsolicited PR rewrites.
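Items 4 and 5 could be approximated today at the repository level with a CI check. The sketch below assumes a GitHub Actions job triggered on `pull_request` events. Actions sets `GITHUB_EVENT_PATH` to the JSON payload of the triggering event, and the PR description sits at `pull_request.body`. The deny-list, the script, and the decision to fail the job are hypothetical policy choices, not a GitHub feature.

```python
import json
import os
import re
import sys
from typing import List, Sequence

COMMENT = re.compile(r"<!--(.*?)-->", re.DOTALL)

# Hypothetical admin-chosen deny-list; the entry echoes the marker named in the reports.
DENIED_MARKERS: Sequence[str] = ("COPILOT CODING AGENT TIPS",)


def denied_markers_in(body: str, denied: Sequence[str] = DENIED_MARKERS) -> List[str]:
    """Return the text of every HTML comment in `body` containing a denied marker (case-insensitive)."""
    hits = []
    for match in COMMENT.finditer(body):
        text = match.group(1).strip()
        if any(marker.lower() in text.lower() for marker in denied):
            hits.append(text)
    return hits


def main() -> int:
    # GITHUB_EVENT_PATH points at the triggering event's JSON payload.
    # For pull_request events, the description is pull_request.body (null when empty).
    with open(os.environ["GITHUB_EVENT_PATH"], encoding="utf-8") as fh:
        event = json.load(fh)
    body = (event.get("pull_request") or {}).get("body") or ""
    hits = denied_markers_in(body)
    for text in hits:
        print(f"Blocked hidden marker in PR description: {text}", file=sys.stderr)
    return 1 if hits else 0


# Only act when running inside a GitHub Actions job.
if __name__ == "__main__" and "GITHUB_EVENT_PATH" in os.environ:
    sys.exit(main())
```

If the workflow triggers on the `opened` and `edited` activity types, it would also catch descriptions rewritten after the PR was created. A failing job blocks a merge only if the check is marked as required in branch protection.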

The reputational issue is part of the security issue

Security teams do not like surprises, but neither do legal, compliance, or procurement teams. If a generated PR description can contain ecosystem promotion, then administrators may reasonably ask what else can be nudged into the workflow. The perceived risk is not necessarily that the ad is malicious; it is that the assistant is no longer guaranteed to be neutral. That is enough to change policy.

The hardest part is that the appearance of hidden influence can matter as much as the reality. Even if Microsoft eventually explains the mechanism as a benign template or an experimental tip system, the company will still have to deal with the fact that users now associate the behavior with stealth. Once that association forms, it tends to stick.

The Competitive Implications

If developers start to believe Copilot output can be shaped by commercial incentives, GitHub’s rivals do not need to do much to benefit. They can position themselves as cleaner, more transparent, or less commercially invasive alternatives. In a developer-tools market where switching costs are lower than they look, trust can be as differentiating as model quality or feature breadth.

Trust becomes a product axis

The most successful competitor won’t necessarily be the one with the cleverest model. It may be the one that convinces teams its assistant is the least likely to sneak a product pitch into a change set. That is especially true for enterprises, where procurement teams are already cautious about AI-generated content and increasingly concerned about governance.

This is also why the issue has broader implications than GitHub alone. If the market starts to believe that agentic tools may double as distribution surfaces, the reaction could reshape expectations around all AI coding assistants. Developers may demand more disclosure, more configurability, and more separation between recommendation and promotion across the board.

Perception can move faster than engineering nuance

Microsoft and GitHub may ultimately prove that the behavior was a bug, an experiment, or a misconfigured output path. But in platform wars, the first story often does the most damage. If the public takeaway is that Copilot might be a little too eager to recommend adjacent products, rival vendors get an easy narrative: ours is the assistant that helps you ship code, not the assistant that markets to you.

There is also a subtler strategic risk. GitHub has been trying to make Copilot feel like the default AI layer for software development. If the product starts to feel commercially noisy, that default position becomes easier to challenge. Once confidence slips, every other Copilot feature (summaries, reviews, and task generation) comes under a brighter, harsher light.

Strengths and Opportunities

The controversy is serious, but it also creates a chance for GitHub and the wider AI tooling market to raise standards. If the company responds clearly, it can turn a trust problem into a governance improvement story. Developers may not forgive ambiguity quickly, but they do respect transparent fixes, especially when those fixes are tied to controls they have been asking for anyway.

- GitHub can clarify whether the behavior was experimental, accidental, or intentional.

- Copilot can separate workflow guidance from partner promotion more cleanly.

- Enterprises can demand stronger audit trails for generated PR content.

- Developers may benefit from stricter prompt-sanitization practices.

- Competing tools may raise the bar on disclosure and user control.

- The industry could end up with better norms for agent-generated artifacts.

- Trust could improve if hidden channels are removed or fully exposed.

Risks and Concerns

The biggest risk is not the promotional message itself, but the erosion of confidence in Copilot’s output. Once users suspect that generated content may be shaped by undisclosed commercial relationships, every recommendation becomes harder to interpret. That would be a meaningful setback for a product whose value depends on people letting it operate inside their most important workflows.

- Hidden promotional content could undermine developer trust.

- The issue may be interpreted as a governance failure.

- Enterprise buyers may demand new controls or restrictions.

- Public repositories could amplify reputational damage.

- Future recommendations may be viewed with suspicion.

- The hidden-comment angle raises prompt-injection concerns.

- Opaque monetization could trigger broader backlash.

Looking Ahead

The next phase of this story will likely revolve around clarification, not speculation. GitHub and Microsoft will need to explain whether the Raycast-related text was a deliberate tip, a partner promotion, a template artifact, or something else entirely. If the company wants to preserve confidence, it will need to be explicit about both the mechanism and the policy behind it.

The deeper issue is larger than one workflow quirk. AI assistants are moving into places where people do real work, commit real code, and make real business decisions. That means the standards for disclosure, neutrality, and observability have to rise with the capabilities of the model. The more useful these tools become, the less room there is for ambiguity.

- Watch for a public technical explanation from GitHub.

- Watch for enterprise admin controls around generated PR text.

- Watch for whether hidden markers are confirmed or denied.

- Watch for whether users can disable partner guidance.

- Watch for rival vendors to sharpen their transparency messaging.

Source: thewincentral.com GitHub Copilot Caught Injecting Ads Into Pull Requests — Developers Reac - WinCentral
