Microsoft’s Copilot controversy on GitHub is bigger than one awkward pull request edit. If the reports are accurate, the company’s coding agent is no longer just helping developers fix typos or draft summaries; it is also surfacing promotional-looking “tips” inside pull requests, which many users will read as stealth marketing rather than helpful context. That is why this story has landed so hard with developers: pull requests are trusted, durable artifacts, and the moment commercial messaging starts to appear inside them, the entire workflow feels less neutral. The timing makes it worse, because Microsoft and GitHub are already under intense scrutiny for how deeply Copilot is being woven into development, data collection, and now possibly monetization.

Copilot has been evolving rapidly from a code-completion tool into an agentic workflow layer that can take issues, create pull requests, update descriptions, and participate in review loops. That matters because the product is no longer confined to the editor; it is writing into the collaboration fabric that teams use to record decisions, explain changes, and sign off on code. GitHub’s own documentation and changelog posts show how broad that footprint has become, including support for launching tasks from GitHub.com, VS Code, mobile, CLI, and even Raycast.

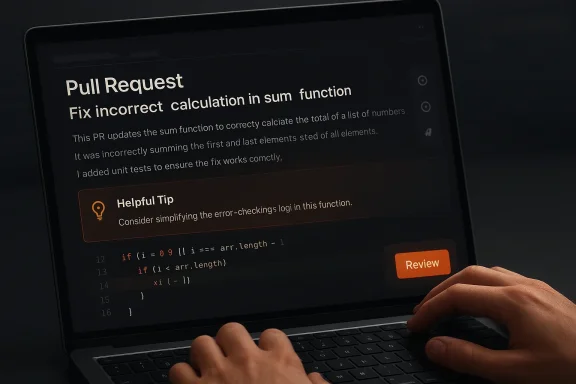

The current backlash stems from a reported incident in which Copilot allegedly corrected a typo in a pull request but also injected a line promoting Copilot and Raycast. The phrasing reportedly resembled a tip or product recommendation, not a banner ad, which makes the behavior feel sneakier than ordinary advertising. Coverage of the incident suggests that the same or similar text may have appeared across thousands of pull requests, with some observers pointing to a hidden marker reading “COPILOT CODING AGENT TIPS” as a likely mechanism.

That combination of scale, opacity, and placement is what turns a nuisance into a trust problem. Developers can tolerate hallucinations, formatting quirks, or even the occasional bad suggestion. What they are much less willing to accept is a system that appears to use their own workspaces as a distribution channel for ecosystem promotion. In developer tooling, perceived neutrality is not optional; it is part of the product itself.

Background

GitHub Copilot started as a convenience layer: a way to write code faster with machine assistance. Over time, though, GitHub and Microsoft have pushed it into far more ambitious territory, turning it into an asynchronous collaborator that can act on issues, draft pull requests, update them as it works, and request review when finished. That is a major product shift because the assistant is no longer merely suggesting text in a chat window; it is operating on shared project artifacts that teams assume are intentional and reviewable.

The company has also been explicit that Copilot now spans a large number of surfaces. GitHub has documented coding-agent workflows in which users can assign issues to Copilot and launch tasks from GitHub.com or from companion tools such as Raycast. That creates a broad path for defaults, templates, and prompt scaffolding to leak into visible output. Once an assistant can write prose inside a pull request, it can also write persuasion, even if that was not the user’s immediate goal.

At the same time, GitHub has acknowledged that hidden instructions are a real security concern. Its documentation says the agent filters hidden characters because invisible text can be abused as prompt injection (docs.github.com). That detail is important here, because the controversy around the alleged PR “tips” seems to involve exactly the kind of concealed or template-driven pathway that GitHub says it is trying to prevent.

The wider AI market also helps explain why users are hypersensitive to anything that looks like embedded promotion. OpenAI has already discussed advertising in ChatGPT, while emphasizing that ads will be clearly labeled and separated from the model’s answers. That is a very different model from quietly inserting promotional copy into a developer artifact. Even if the business logic is understandable, the optics are much worse when the message appears inside a workflow document rather than beside it.

What Actually Happened

The report that sparked the reaction describes a developer using Copilot to correct a typo in a pull request, only to discover that Copilot also appended a promotional sentence about spinning up Copilot coding agent tasks from macOS or Windows via Raycast. The wording reportedly included an emoji and read like a nudge toward a companion workflow, not like a generic system notice. That is a critical distinction, because the insertion looks native to the assistant’s voice and therefore more believable than an obvious ad.

The most disturbing claim is not the existence of the line but the suggestion that the same text has appeared repeatedly across a very large number of pull requests. The reporting says the phrase shows up in more than 11,000 instances, and that the same pattern may also appear in merge requests elsewhere. If true, that makes the issue feel systemic rather than accidental, which is exactly why the story escalated from a UX complaint into a governance controversy.

Why repetition matters

A one-off weird output can usually be dismissed as a model hiccup. Repeated language across thousands of repositories suggests a shared template, shared prompt, or shared hidden mechanism. That does not prove deliberate intent, but it absolutely raises the burden of explanation for GitHub.

There is also a trust issue hidden inside the repetition itself. If developers start seeing the same “helpful” line over and over, they will stop treating Copilot output as context-aware and begin treating it as systemically biased. Once that suspicion takes hold, even genuinely useful suggestions start to look suspect.

Why Pull Requests Are Different

Pull requests are not casual chat messages. They are part of the durable record of how software changes, why those changes were made, and who approved them. A sentence inside a PR description can be copied into tickets, release notes, audit trails, or compliance systems, which makes any hidden commercial content especially intrusive.

That is why the location of the promotion matters more than the content itself. A sidebar suggestion can be ignored. A banner can be dismissed. But a line inside the PR description has the authority of the artifact around it, which makes it feel like part of the developer’s own intent. That is a much stronger form of manipulation, even if the wording is framed as a tip.

The workflow problem

Developers expect review tools to be conservative, transparent, and auditable. They do not expect a tool that can interleave code help with ecosystem nudges. Once that boundary blurs, every subsequent Copilot-generated line is forced to answer two questions: is this useful, and is it also promotional?

This is where *cognitive load* becomes a real cost. Reviewers already have to assess correctness, style, risk, and maintainability. If they now have to ask whether a Copilot-generated sentence is a neutral suggestion or a disguised product placement, the assistant has become part of the burden instead of reducing it.

The Raycast Connection

Raycast is not a random name in this story. GitHub’s own Copilot documentation and changelog describe Raycast as one of the supported launch surfaces for Copilot coding-agent tasks, and Raycast’s integration is explicitly marketed as a way to start and track those tasks. That makes the mention plausible as a legitimate ecosystem reference, but it does not make the placement any less controversial.

There is nothing unusual about Raycast being part of the Copilot universe. The problem is that the reference reportedly appears inside the generated body of a pull request description, where it reads like a recommendation rather than a neutral workflow note. If users did not ask for a partner pitch, and if the text was added automatically, then the line between “helpful guidance” and “distribution strategy” becomes uncomfortably thin.

Product advice or product placement?

That distinction is the core of the controversy. A product tip is supposed to explain how to use the feature you are already invoking. Product placement, by contrast, uses the feature to steer attention toward another product or integration. In a developer workflow, those two categories should never be hard to tell apart.

The lack of a clear public explanation makes matters worse. If the text was a benign template, GitHub needs to explain why it was injected in a way that made it look hidden. If it was experimental, GitHub needs to explain why it ran so widely. If it was an integration bug, GitHub needs to explain the control path. Without that clarity, users naturally assume the worst.

Microsoft’s Monetization Problem

This story lands in a wider context in which AI companies are under pressure to find new revenue paths. Subscriptions are not always enough, enterprise licensing has limits, and the economics of model operation remain expensive. That creates a strong incentive to turn high-frequency workflows into monetization surfaces, especially when the product already owns the channel.

Microsoft and GitHub are particularly exposed to that temptation because they sit at the center of the developer stack. Copilot is already in the editor and in the tools around it. If the assistant can also steer users toward adjacent services, partner tools, or premium workflows, it becomes a distribution layer as much as a productivity layer. That is commercially powerful, but it is also the kind of power that can erode trust very quickly.

Why timing matters

The timing is awkward because Microsoft has also been pushing a broader Copilot data strategy. GitHub recently said that starting April 24, 2026, Copilot Free, Pro, and Pro+ interactions will be used to train and improve AI models unless users opt out, while business and enterprise accounts are exempt. That policy change is separate from the PR-ad issue, but together they create a picture of a company that is asking for more user data, more workflow access, and more latitude inside the product.

For individual developers, the combination can feel like a lot. First the assistant learns from your interactions, then it becomes more embedded in your collaboration artifacts, and then it starts looking as if it may be inserting product tips into your workflow. Even if each piece is defensible on its own, the cumulative effect is what users experience as slop, noise, and creeping loss of control.

Enterprise Impact

For consumers and hobbyists, a promotional line inside a pull request is irritating, maybe even embarrassing, but it is usually survivable. They can ignore it, trim it out, or move on. For enterprises, the calculus is different because the artifact may be subject to compliance review, audit, procurement scrutiny, or internal policy controls.

That is why the enterprise response is likely to be sharper than the consumer response. Large organizations want to know what the assistant saw, what it changed, and whether any commercial relationship influenced the output. If they cannot answer those questions, they may restrict the feature, disable certain capabilities, or demand more granular controls.

Governance expectations

A mature enterprise deployment would likely need several safeguards. Those would include explicit opt-in for partner suggestions, logs showing what prompted the generated text, and admin-level controls to disable promotional inserts entirely. None of that is exotic; it is baseline governance for any system that can alter formal written records.

The lack of transparency is what makes the current episode so combustible. Even if the behavior turns out to be a harmless experiment or a misconfigured template, enterprises will still ask whether hidden prompts can be trusted not to reappear elsewhere. In regulated environments, trust once lost is expensive to rebuild.
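As a rough illustration of the safeguards described above (opt-in for partner suggestions, a log of what produced each piece of text, and an admin switch), here is a minimal Python sketch. Everything in it is hypothetical: `GeneratedSegment`, `OrgPolicy`, `AuditLog`, and the `source` labels are invented for this example and do not correspond to any GitHub or Copilot API. It also assumes the tool can report where each piece of generated text came from, which is precisely the visibility enterprises are asking for.

```python
from dataclasses import dataclass, field


@dataclass
class GeneratedSegment:
    text: str
    source: str  # hypothetical provenance label, e.g. "user_request" or "vendor_template"


@dataclass
class OrgPolicy:
    # Partner or ecosystem suggestions are opt-in, so the default is off.
    allow_partner_suggestions: bool = False


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)


def assemble_description(segments, policy, log):
    """Build a PR description, logging every segment and dropping disallowed ones."""
    kept = []
    for seg in segments:
        allowed = seg.source == "user_request" or policy.allow_partner_suggestions
        # Record the decision either way so reviewers can see what was suppressed.
        log.entries.append({"source": seg.source, "kept": allowed, "text": seg.text})
        if allowed:
            kept.append(seg.text)
    return "\n\n".join(kept)
```

With the default policy, a segment labeled `vendor_template` is left out of the description but still appears in the log, so an administrator can see that something was generated and suppressed.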

Competitive Implications

If developers start to suspect that Copilot output is shaped by undisclosed commercial incentives, rivals do not need to do much to benefit. They can simply position themselves as cleaner, more transparent, or less commercially invasive. In a market where switching costs are real but not insurmountable, trust can become a differentiator as strong as model quality.

That dynamic matters because GitHub has spent years trying to make Copilot the default AI layer for software development. Once that default status is questioned, competitors can frame themselves as the assistant that helps you ship code rather than the assistant that markets to you. In platform wars, perception often travels faster than engineering nuance.

What rivals may emphasize

Competing vendors have a simple playbook if this controversy continues. They can promise clearer labeling, stronger controls, and better visibility into what their models see and why they answer the way they do. They can also highlight the absence of hidden promotional logic as a feature in itself.

That is especially potent in enterprise sales. Procurement teams tend to dislike surprises, and security teams dislike them even more. A rival that can credibly say, “Our assistant does not sneak product pitches into your PRs,” is already speaking directly to one of the market’s newest anxieties.

Security, Prompt Injection, and Hidden Text

The hidden-comment angle is what makes this issue feel more serious than a normal ad dispute. Hidden HTML comments are already a well-known prompt-injection vector in AI workflows, and GitHub’s own Copilot docs acknowledge that invisible characters need to be filtered for safety. If promotional text is entering the output through a concealed marker, then the problem is not just marketing; it is the integrity of the instruction chain.

That matters because hidden influence is almost impossible for end users to audit. If a developer cannot tell where the message came from, they cannot judge whether it is trustworthy, biased, or even relevant. In that sense, hidden promotion is worse than an obvious banner ad because it is harder to spot and harder to challenge.
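To make the hidden-text concern concrete, here is a small Python sketch of how a team might scan a pull request body for content that does not show up when the description is rendered. It is an illustration under stated assumptions, not a description of how GitHub filters input: the function names are invented for this example, and it checks only two things, HTML comments and Unicode format characters (category `Cf`, which includes zero-width spaces).

```python
import re
import unicodedata

# HTML comments are not displayed in rendered Markdown, so they can carry hidden text.
HTML_COMMENT = re.compile(r"<!--(.*?)-->", re.DOTALL)


def find_hidden_text(body: str) -> dict:
    """Report HTML comments and invisible format characters found in a PR body."""
    comments = [m.group(1).strip() for m in HTML_COMMENT.finditer(body)]
    # Unicode category "Cf" covers format characters such as U+200B (zero-width space).
    invisible = sorted(
        {f"U+{ord(ch):04X}" for ch in body if unicodedata.category(ch) == "Cf"}
    )
    return {"comments": comments, "invisible_chars": invisible}


def strip_hidden_text(body: str) -> str:
    """Return the body with HTML comments and format characters removed."""
    without_comments = HTML_COMMENT.sub("", body)
    return "".join(ch for ch in without_comments if unicodedata.category(ch) != "Cf")
```

A reviewer or CI job could call `find_hidden_text` on a description and flag any non-empty result for a human to inspect. One caveat of this simple approach is that removing every `Cf` character also removes the zero-width joiners used in some emoji sequences, so a real filter would probably need an allowlist.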

Why invisible guidance is dangerous

Invisible guidance can make generated text appear organic when it is not. It can also make the assistant seem more confident or more helpful than it really is. Once that pattern is visible, users begin to wonder what else is shaping the output behind the scenes.

That is why this controversy has a larger security echo than a normal marketing complaint. It touches the same trust boundary as prompt injection, hidden templates, and agent misdirection. The underlying concern is simple: if Copilot can be nudged by invisible instructions, then what exactly is the user buying when they trust it to speak for them?

Strengths and Opportunities

There is still a constructive version of this story. If Microsoft and GitHub treat the incident as a trust issue rather than a minor formatting hiccup, they could use it to improve disclosure, tighten controls, and make Copilot more enterprise-friendly. That would not erase the backlash, but it could make the product more defensible in the long run.

- GitHub can clarify whether the text came from a bug, an experiment, or an intended feature.

- Copilot could add clearer disclosure for ecosystem recommendations.

- Enterprise admins could get stronger policy controls over generated text.

- GitHub can improve auditability around hidden markers and prompt sources.

- Competitors may be pushed to raise their own transparency standards.

- Developers could end up with safer, more predictable AI-assisted workflows.

- The incident may accelerate better norms around AI-generated collaboration artifacts.

Risks and Concerns

The biggest risk is not the promotional line itself, but the erosion of confidence in Copilot more broadly. Once developers think the assistant may be influenced by hidden incentives, every suggestion starts to look suspect, and that suspicion can spread far beyond the original incident.

- Hidden promotional content could undermine developer trust.

- Enterprises may treat the behavior as a compliance or policy problem.

- The incident may be read as a security failure if hidden text is involved.

- Public repositories can spread reputational damage fast.

- Users may become skeptical of all Copilot-generated prose.

- Competitors may use the moment to market themselves as cleaner alternatives.

- Opaque monetization can create distrust that outlasts the original bug.

What to Watch Next

The next phase will be about explanation, not outrage. If GitHub offers a clear technical postmortem, this may settle into a contained product bug. If it does not, the story could become a lasting example of how quickly AI assistants lose credibility once users believe they are no longer being put first.

The second thing to watch is control. Developers and administrators will want to know whether ecosystem tips can be disabled, whether hidden markers are visible in audit logs, and whether generated PR descriptions can be locked down. Those are not edge-case demands anymore; they are the natural next questions any enterprise buyer will ask.

The third issue is whether GitHub keeps drawing a hard line between workflow help and product promotion. The company can still make Copilot useful, agentic, and deeply integrated without making it feel like a sales surface. The more it blurs that line, the more its rivals will benefit from the backlash.

- Whether GitHub publishes a direct technical explanation.

- Whether users can disable ecosystem tips in pull requests.

- Whether hidden comment markers are confirmed as the mechanism.

- Whether enterprise admin controls are expanded.

- Whether competitors sharpen their “no ads” messaging.

Source: Windows Central Copilot is now injecting ads into GitHub pull requests. It's a disaster.