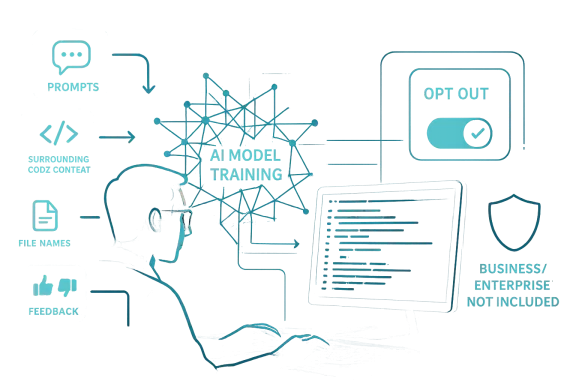

GitHub is making one of its most consequential Copilot policy changes yet, and this time the company is being unusually direct about what it plans to do with user data. Beginning on April 24, 2026, GitHub says it will use interaction data from Copilot Free, Pro, and Pro+ accounts to train and improve its AI models unless users opt out, while Business and Enterprise customers are explicitly excluded from the update (github.com). For individual developers, that means prompts, outputs, code snippets, surrounding context, and feedback are moving from being merely functional telemetry into potential model-training material, a shift that will sharpen the long-running tension between AI convenience and data consent (github.com).

Background


GitHub Copilot has always sat at the intersection of utility and controversy. When it launched, the service was praised for making code completion feel more like collaboration than autocomplete, but it also arrived in the shadow of criticism over how generative AI systems were trained in the first place. The original backlash centered on the broader question of whether code hosted on GitHub had been used, directly or indirectly, to build a product that could then recreate coding patterns in ways that felt too close for comfort.

That debate never fully disappeared. As Copilot matured, GitHub and Microsoft kept expanding the product with chat, inline suggestions, and enterprise controls, while also trying to reassure organizations that their private data would not be folded back into training pipelines. GitHub’s current Copilot page still draws a clear line between business and consumer tiers, noting that Copilot Business and Enterprise data are not used to train GitHub’s models (github.com). The new policy shift is therefore not a universal change; it is a more targeted move aimed at the consumer and prosumer tiers that have always been closer to the company’s monetization and data-collection experiments.

What makes the April 24 update notable is not just that GitHub wants more training data. It is that the company is now spelling out the exact kinds of interaction data it wants to use: accepted or modified outputs, inputs, code context around the cursor, comments, documentation, file names, repository structure, navigation patterns, and direct feedback such as thumbs up or thumbs down ratings (github.com). In other words, GitHub is not merely collecting abstract usage metrics; it is seeking a dense behavioral record of how developers think, browse, and edit.

This also lands at a time when GitHub Copilot has become more central to Microsoft’s broader AI strategy. The product is no longer just a coding helper. It is part of an ecosystem that includes multiple models, richer chat experiences, premium requests, enterprise governance, and increasing model choice across the platform (github.com). The more Copilot becomes a system for generating and orchestrating work, the more valuable the interaction data becomes to the company trying to improve it.

The company’s argument, unsurprisingly, is that real-world interactions make the system better. GitHub says the change will help build “more intelligent, context-aware coding assistance” based on “real-world development patterns” (github.com). That claim is plausible, but it is also the same basic pitch every major AI vendor now makes: better models need better data, and better data increasingly comes from people who may not realize they are helping train the next version of the tool they are using.

What GitHub Says Is Changing

GitHub’s current Copilot page is the clearest public statement of the policy. It says that from April 24 onward, interactions from Copilot Free, Copilot Pro, and Copilot Pro+ users may be used to train and improve GitHub’s AI models unless the user opts out (github.com). It also says users were notified 30 days before the change took effect and can disable training use through account settings at any time (github.com).

The distinction between consumer and enterprise plans is crucial. GitHub says Copilot Business and Copilot Enterprise data are not used to train its models, and those customers are not affected by this update (github.com). That is a familiar boundary in modern SaaS AI: enterprises pay for stronger guarantees, while individual users live closer to the product’s data-harvesting layer.

The Data GitHub Wants

GitHub is unusually explicit about the inputs it may use. The list includes prompts, outputs, code snippets, surrounding code context, file names, repository structure, navigation patterns, and feedback signals such as thumbs up and thumbs down ratings (github.com). That is a broad enough set of signals to teach a model not just what code looks like, but how developers move through codebases and decide whether a suggestion is useful.

From a model-training standpoint, that breadth matters. A coding assistant is not merely trying to predict the next token; it is trying to infer intent, local project structure, and task context. GitHub’s data categories map closely to those needs, which is why the company is likely eager to use them. Still, the inclusion of repository structure and navigation patterns will make some users uneasy, because those are not abstract metrics; they are a map of how work is organized.

The policy language also mentions that GitHub may share collected data with affiliates, including Microsoft (github.com). That line is easy to overlook, but it matters because it hints at a broader internal reuse story. Once data is inside a corporate family’s AI stack, “training” is rarely the only destination.

Why This Matters for Developers

For individual developers, the practical issue is not whether Copilot can improve in theory. It is whether the value of that improvement outweighs the privacy and workflow trade-offs. Many users will never care, especially if the data involved is mostly generic code and ordinary prompts. Others, particularly open-source maintainers and people working on sensitive proprietary projects, will see this as a meaningful boundary change.

The concern is not limited to secrets or private IP. Even benign-looking development data can reveal project architecture, business logic, deployment patterns, and the shape of unreleased work. A repository structure can be as revealing as source code itself, especially when paired with prompts that describe what the developer is trying to achieve. That is why the new policy feels broader than a standard “improve the service” checkbox.

The Consent Problem

GitHub is offering an opt-out, which is better than a silent default. But opt-out is still not the same thing as informed, affirmative consent. In practice, many users will miss the change, leave the setting untouched, or assume they already opted out in the past and are therefore covered.

The company says users who previously opted out of GitHub collecting data should already be set, though it recommends checking the Privacy section in account settings anyway (github.com). That advice is sensible, but it also reveals the complexity of modern AI privacy controls: there are usually multiple settings, different product tiers, and overlapping data-use policies that can be hard to track.

If you do not want your Copilot interactions used for training, the safest approach is to review the Privacy settings in your GitHub account and confirm the relevant opt-out remains in place. A “set it once and forget it” mindset is risky here because policy language, product tiers, and defaults can change over time.

What This Means in Practice

For developers who rely on Copilot heavily, the policy creates a subtle but important new bargain. You get a better-funded, more data-rich AI assistant, but your interactions may help train that assistant unless you actively decline. That is a reasonable commercial model, but it should be recognized for what it is: a transaction between convenience and contribution.

- Consumers and freelancers now sit closer to the training loop.

- Enterprise customers remain behind a stronger data wall.

- The policy will probably improve model relevance for everyday coding tasks.

- It may also increase user suspicion around prompt privacy.

- The opt-out process becomes a meaningful user-rights checkpoint.

- Open-source contributors may be especially sensitive to the change.

The Enterprise Line in the Sand

GitHub’s decision to exempt Copilot Business and Copilot Enterprise customers is not accidental; it is the company’s way of preserving trust in the segment that pays for stronger guarantees. GitHub says those data sets will not be used to train its models, and that remains one of the clearest differentiators between the consumer and corporate sides of the product (github.com).

That separation reflects a broader market reality. Enterprises will adopt AI much faster if vendors promise that internal code, customer data, and proprietary workflows are not feeding public model training runs. If that wall disappears, procurement departments get nervous very quickly. GitHub knows this, and the policy update makes it clear that business users are still the more protected class.

Why Microsoft Needs the Wall

Microsoft sits at a delicate intersection here. On one hand, it wants Copilot to learn from realistic development behavior. On the other, it must preserve the enterprise story that has powered so much of its AI revenue push. These two goals are compatible only if the company keeps consumer and business data separated in ways customers can understand.

That is especially important because GitHub Copilot is no longer a single feature with a single model. GitHub’s own product pages now talk about access to multiple models and a mix of GitHub, OpenAI, and Microsoft AI systems (github.com). The more complex the product becomes, the more enterprise buyers will ask where their data is going and which model family is touching it.

The enterprise exemption also helps GitHub avoid a potentially expensive trust crisis. If corporate code were ever folded into training in a way customers considered ambiguous, the backlash could be severe. By keeping those accounts out of scope, GitHub is trying to prevent a high-value business line from being contaminated by a consumer-style data policy.

Competitive Implications

This move also sharpens the competitive split between GitHub Copilot and rival coding assistants. Many developers now choose tools not just on code quality, but on data policy. If GitHub can say its enterprise customers are excluded while its consumer products are opt-out, it may retain users who would otherwise move to a more privacy-conscious competitor.

- Enterprise buyers often care as much about policy as model quality.

- Consumer users are more price-sensitive and less likely to read every setting.

- Privacy assurances can become a competitive moat.

- Trust is now part of the coding-assistant feature list.

- Model quality and data governance are increasingly inseparable.

The Open-Source Question

The most sensitive part of the story is not simply that GitHub wants training data. It is that so much of GitHub’s surface area is shaped by open-source development. The platform hosts a massive amount of public code, and Copilot itself was originally trained partly on publicly available repositories. That history makes any discussion of “interaction data” more politically charged than it might be for a closed SaaS code editor.

When a public-code platform starts reusing user interactions to improve its AI, some developers will see that as a natural evolution. Others will see it as a feedback loop that draws value from community labor twice: once when code is published and again when usage traces are harvested to make the assistant more capable. That tension will not disappear simply because the policy is disclosed.

Data From Public Work

The fact that GitHub-hosted projects are often open source does not automatically make Copilot training uncontroversial. Code being public does not mean every interaction around that code should be treated as freely reusable training material. A developer’s prompt can reveal debugging strategy, project structure, or the intended use of a code path in ways the repository itself does not.

That distinction matters because AI systems do not just ingest source code anymore. They ingest human behavior around source code. A prompt like “why does this test fail when I change the schema?” is more than a question; it is an action signal, a support signal, and a modeling signal all at once.

This is where GitHub’s policy may feel especially fraught for open-source maintainers. They already contribute public work to the ecosystem, and now their use of the assistant around that work may also feed the same company’s model-building machinery. That is not necessarily unethical, but it is a boundary worth scrutinizing.

The Feedback Loop Risk

One risk is that model training on Copilot interactions could reinforce dominant coding styles while flattening edge cases. If enough users accept the same kinds of suggestions, the model may become increasingly optimized for mainstream patterns and less helpful in unusual or highly specialized environments. That could be a feature for mass-market coding, but a bug for advanced users.

Another risk is that the product becomes more self-referential. If a coding assistant learns from how people use coding assistants, it may end up amplifying the habits of the tool rather than the habits of the developer. That sounds abstract, but in AI products abstraction often becomes product design.

- Open-source communities may scrutinize the policy more heavily.

- Public code does not erase prompt privacy concerns.

- Feedback loops can entrench common patterns.

- Specialized workflows may receive less benefit than mainstream ones.

- The product may become more polished and less surprising.

How This Fits the AI Industry

GitHub is not inventing a new practice here; it is following a pattern that has become common across consumer AI products. The logic is simple: if a company owns the interface and the usage data, it can improve the model with real-world interactions. That is how tools get better, but it is also how user behavior becomes valuable data.

What makes GitHub notable is the scale and specificity of the data. Coding assistants generate unusually rich signals because they sit inside a working environment where intent, structure, and output quality are all visible. A thumbs-down on a bad completion is more actionable than a generic complaint. A modified snippet tells the model what the user wanted but did not quite receive.

Model Improvement Versus User Surveillance

The line between model improvement and user surveillance is thinner than companies like to admit. When an AI system uses prompts, outputs, file names, and navigation behavior to refine itself, it is learning from the user in a very intimate way. That does not automatically make the practice wrong, but it does make it important to explain clearly.

GitHub’s policy language appears designed to do exactly that: it foregrounds the data categories, the opt-out, and the enterprise carve-out. Still, transparency is not the same thing as reassurance. Users may understand what is happening and still dislike it.

The fact that GitHub says it may share data with affiliates, including Microsoft, only heightens the sense that this is part of a bigger AI flywheel (github.com). In a modern platform company, data rarely stays in a single product lane for long.

Why Timing Matters

The April 24 timeline is also interesting because it gives the change a firm operational date, not just a vague policy note. That means the company is likely aligning legal, product, and training pipelines to begin ingesting the data at scale. It also gives users a narrow window to review settings before the policy becomes active.

That kind of date-driven rollout matters because AI policies are often introduced quietly and discovered only after the fact. Here, GitHub is at least making the move visible in advance. Whether users appreciate the candor is another matter.

- The data economy around AI is maturing fast.

- More visible transparency may not reduce distrust.

- Companies now treat interaction logs as training assets.

- Product feedback has become model fuel.

- Consumers are increasingly the training layer for premium AI.

The User Experience Angle

From a product perspective, GitHub’s decision could improve the Copilot experience in ways users will actually notice. Better training data should, in theory, produce better completions, better context handling, and fewer hallucinated code suggestions. If the system learns from accepted, modified, and rejected suggestions, it can adapt more effectively to how developers actually work (github.com).

But the user experience side cuts both ways. If developers become more skeptical about what Copilot is observing, they may use the tool differently or less freely. A coding assistant works best when the user treats it like a trusted collaborator. Any policy that makes the assistant feel more like an observer than a partner can erode that trust.

Trust Is Part of the UX

This is an underappreciated truth in AI product design: trust is a feature. If users believe an assistant is quietly reusing their interactions in ways they do not fully control, even a technically better model can feel worse. The UI might remain the same, but the emotional contract changes.

That is why opt-out design matters so much. The setting has to be easy to find, easy to understand, and easy to verify. If users have to hunt through nested privacy menus, the policy will feel less like a choice and more like an ambush.

GitHub says the option sits under Privacy in account settings, which is a sensible placement (github.com). The real question is whether users will know to look there before the new policy starts affecting their data.

Personal Versus Professional Use

There is also a practical difference between casual individual use and serious professional development. A student or hobbyist may be happy to trade some interaction data for a better assistant. A startup engineer working on unreleased software may not be nearly as comfortable, even if the repository is private.

That gap is why the Copilot tiering strategy matters so much. If consumers are effectively contributing to product improvement while enterprises are shielded, then the business model becomes a ladder: free or cheap usage helps improve the product, and paid organizational usage buys stronger data control.

- A better model could yield fewer frustrating completions.

- More context-aware suggestions could save time.

- Trust concerns may reduce willingness to share context.

- Privacy settings need to be simple enough to verify quickly.

- Different user types will tolerate different trade-offs.

Strengths and Opportunities

GitHub’s update is controversial, but it is also strategically coherent. The company is acknowledging that modern AI systems improve from usage, and it is offering users a way out rather than pretending the data is irrelevant. That is not a perfect answer, but it is better than opacity, and it may help GitHub continue refining Copilot without leaving the model starved of real-world feedback.

The broader opportunity is that GitHub can now improve quality in a more targeted way while preserving the enterprise trust boundary. If it executes well, the company may end up with a stronger assistant for individuals and a cleaner governance story for businesses.

- Better training signal quality from real-world coding interactions.

- Improved context awareness from prompts, outputs, and code structure.

- Stronger product tuning from thumbs-up/down feedback and edits.

- Clearer enterprise separation that protects high-value business trust.

- Opt-out controls that give users at least some agency.

- Potential UX gains if model quality improves materially.

- Commercial flexibility as GitHub deepens its AI monetization strategy.

Risks and Concerns

The risk is not just that users dislike the policy. The risk is that they stop trusting the product ecosystem around it. Once developers feel that Copilot is studying more than they intended, every interaction becomes slightly more cautious, and that caution can blunt the very real benefits of an AI coding assistant. A good policy should not only be defensible; it should be easy to live with.

There is also the risk of uneven understanding. Many users will never see the policy change, and others will assume they are protected when they are not. The difference between “opt-in,” “opt-out,” and “not affected” is easy for policy teams to track but much harder for everyday users to internalize.

- Confusion over defaults may lead to accidental participation.

- Prompt and context sensitivity could expose more than users expect.

- Open-source maintainers may view the policy as extractive.

- Trust erosion could push some users toward competitors.

- Policy complexity makes settings easy to misunderstand.

- Cross-company data sharing may worry privacy-conscious users.

- Overreliance on telemetry could intensify the AI feedback loop.

Looking Ahead

The key question now is whether GitHub can use this data responsibly enough to justify the trade-off. If the company delivers sharper completions, better chat behavior, and fewer irrelevant suggestions, many users will tolerate the arrangement, especially if the opt-out remains easy to find and effective in practice. If the rollout feels sneaky, however, the policy could become yet another example of AI vendors pushing boundaries just because they can.

What happens next will likely depend on two things: how visibly GitHub communicates the change when April 24 arrives, and whether users begin to notice a measurable improvement in Copilot quality. If the model gets better quickly, the controversy may soften. If not, the policy will read as a data grab dressed up as a product update.

- Watch whether GitHub surfaces the opt-out more prominently.

- Watch for changes in Copilot quality after April 24.

- Watch whether enterprise customers demand even stricter data assurances.

- Watch whether competitors lean harder into privacy-first positioning.

- Watch whether open-source voices push back publicly on the new training policy.

Source: Windows Central, “GitHub to use Copilot interaction data for AI model training”