Glean’s move beneath the flashy chat windows — turning enterprise search into a permissions‑aware, multi‑model “intelligence layer” — may be the quiet architectural bet that decides whether generative AI becomes useful at scale in the enterprise.

Source: CryptoRank Enterprise AI’s Critical Layer: How Glean’s Ingenious Strategy Builds the Intelligence Beneath the Interface | artificial intelligence AI News | CryptoRank.io

Background / Overview

When Glean launched in 2019 it marketed itself as a smarter way to find documents across Slack, Drive, Confluence, and myriad enterprise apps. That original engineering problem (indexing dispersed content, mapping relationships between people and artifacts, and mirroring permissions) turned into a durable asset as generative models emerged. Over the last two years Glean has recast that asset as a strategic layer that sits between powerful but generic LLMs and the messy, permissioned reality inside corporations. Recent reporting, including the source article, frames Glean’s current product and go‑to‑market thesis as an “intelligence layer” built on three core pillars.

The repositioning is not just marketing. Investors backed the thesis in June 2025 with a $150 million Series F that pushed the company’s post‑money valuation to roughly $7.2 billion, a round widely reported across business press and data providers. Those announcements also repeated a critical claim: Glean passed the $100 million ARR milestone in the prior fiscal year, a conventional threshold that signals the company is beyond proof‑of‑concept and into durable enterprise revenue.

Why should WindowsForum readers care? The obvious answer is that Microsoft, Google, and the major SaaS vendors are all embedding AI into the surfaces users touch: sidebars, chat panes, document assistants. That surface war is important, but it doesn’t guarantee safe, auditable, and actionable AI inside organizations. Glean’s bet is that the real value, and the real risk mitigation, happens under the surface: in how a platform discovers, filters, verifies, and executes against the right sources, and how it ties each action to the person who requested it.

The problem: generic models, specific businesses

Large language models are extraordinary pattern matchers. They generalize across text at scale and can synthesize human‑like output. But they are not business‑aware out of the box: they don’t automatically know your org chart, your customer contracts, who can see what in Salesforce, or which Jira projects are confidential. That gap creates three enterprise‑level failure modes:

- Hallucination: confidently wrong answers because the model has no reliable way to ground its output in the company’s authoritative sources.

- Data leakage — exposing sensitive records to users (or downstream models) who lack rights to see them.

- Action risk — being able to draft text is one thing; being able to perform or authorize changes in systems-of-record is another.

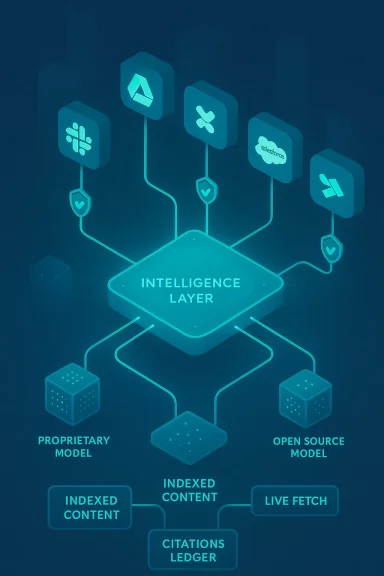

The three pillars of Glean’s intelligence layer

Glean’s architecture, as described in product materials, public interviews, and the source reporting, is built around three interlocking capabilities. Each one answers a recognized enterprise need, and each introduces a different set of engineering and commercial tradeoffs.

1. Model access and abstraction: a switchboard for LLMs

Glean positions itself as model‑agnostic: an orchestration fabric that can route specific tasks to the best model for the job, whether that’s a proprietary model from an established lab or a tuned open‑source alternative. The idea is simple and powerful:

- Let compliance teams pick models that satisfy contractual or regulatory constraints.

- Let engineering pick models optimized for low latency or coding tasks.

- Let procurement avoid vendor lock‑in by retaining the flexibility to swap providers.
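The three constraints above can be read as a filter followed by a cost-based choice. What follows is only a minimal sketch of that idea, not Glean’s implementation: the `ModelProfile` fields, the model names, and the latency and cost figures are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical catalog entry; every field and value is illustrative."""
    name: str
    approved_for_regulated_data: bool  # decided by the compliance team
    strong_at_code: bool               # decided by engineering
    typical_latency_ms: int
    cost_per_1k_tokens: float


@dataclass(frozen=True)
class Task:
    kind: str                     # "code" or "general"
    touches_regulated_data: bool
    max_latency_ms: int


def route(task: Task, catalog: list[ModelProfile]) -> ModelProfile:
    """Return the cheapest model that satisfies every policy constraint."""
    eligible = [
        m for m in catalog
        if (m.approved_for_regulated_data or not task.touches_regulated_data)
        and (m.strong_at_code or task.kind != "code")
        and m.typical_latency_ms <= task.max_latency_ms
    ]
    if not eligible:
        raise LookupError(f"no model satisfies the policy for {task!r}")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


# Made-up catalog: swapping a provider means editing this list, not the callers.
CATALOG = [
    ModelProfile("vendor-large", True, True, 900, 0.030),
    ModelProfile("vendor-fast", False, False, 200, 0.002),
    ModelProfile("open-code", True, True, 400, 0.004),
]

if __name__ == "__main__":
    print(route(Task("general", False, 1000), CATALOG).name)
    print(route(Task("code", True, 1000), CATALOG).name)
```

Because callers only ever see `route`, replacing a provider touches the catalog rather than the connectors, which is the lock‑in argument in miniature.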

2. Deep system connectors: intelligence that can act

Glean’s origin as an enterprise search provider gave it a head start building robust connectors for Slack, Google Drive, Confluence, Jira, Salesforce, and more. The next step is enabling agents that can take actions, not merely suggest language. Glean’s documentation explains how connectors support both indexed search and a “live mode” fetch at query time, plus action‑level controls that require per‑user OAuth and role‑based access controls for writes. Those capabilities let agents do things like propose sales pipeline updates or triage support cases and, with proper approvals, apply changes directly in Salesforce or another system.

Why is this material? Because enterprise value is unlocked not when an AI gives you a summary, but when it reliably completes multi‑step workflows: fetch history, draft a change, verify with a human, and apply the update. Glean’s product documentation and releases describe exactly these patterns (pipeline hygiene agents, embedded experiences inside CRMs, and workflow‑orchestration primitives), which are concrete engineering features rather than speculative promises.
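The draft, verify, apply sequence can be sketched as a small gate that refuses to write until a human with the right role has signed off. This is an illustrative model under stated assumptions, not Glean’s API: `ActionGate`, `ProposedChange`, the token dictionary, and the in‑memory `system_of_record` are hypothetical stand‑ins for a real connector and CRM.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ProposedChange:
    record_id: str
    field_name: str
    new_value: str
    requested_by: str
    approved_by: Optional[str] = None
    applied: bool = False


@dataclass
class ActionGate:
    """Hypothetical write gate: per-user authorization plus human approval."""
    oauth_tokens: dict  # user -> per-user token for the target system
    approvers: set      # users whose role allows approving writes
    audit_log: list = field(default_factory=list)

    def propose(self, user, record_id, field_name, new_value):
        # Only users who have personally authorized the connector may draft.
        if user not in self.oauth_tokens:
            raise PermissionError(f"{user} has not authorized this connector")
        self.audit_log.append(("proposed", user, record_id))
        return ProposedChange(record_id, field_name, new_value, user)

    def approve(self, change, approver):
        if approver not in self.approvers:
            raise PermissionError(f"{approver} may not approve writes")
        change.approved_by = approver
        self.audit_log.append(("approved", approver, change.record_id))

    def apply(self, change, system_of_record):
        if change.approved_by is None:
            raise PermissionError("change has not been approved by a human")
        # A real connector would call the target API with the requester's token;
        # here a nested dict stands in for the system of record.
        system_of_record[change.record_id][change.field_name] = change.new_value
        change.applied = True
        self.audit_log.append(("applied", change.requested_by, change.record_id))
```

Every step appends to `audit_log`, so each write can be traced back to the person who requested it and the person who approved it.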

3. Governance and permissions‑aware retrieval: trust, traceability, and safety

If you remember only one sentence from this article, make it this one: permissioning is the difference between a pilot and an enterprise roll‑out. Glean’s retrieval layer is explicitly designed to mirror source permissions (e.g., Salesforce record‑level access) and to filter results at query time so that the answer a user receives is scoped to what they’re allowed to see. It can also produce citations to the underlying sources and maintain audit trails for compliance and incident review. Those features address the two most acute enterprise risks, hallucination and data leakage, and are central to the company’s enterprise pitch.

Note the practical caveat: some permission semantics (field‑level security, inherited or highly customized sharing models) are hard to mirror perfectly across every third‑party system. Glean’s docs call out common caveats, for example recommending the exclusion of objects where field‑level security is the primary protection mechanism. That level of candor is important for CIOs planning pilots.
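Query‑time permission filtering, citations, and an audit trail can be illustrated together in a few lines. The sketch below assumes a toy in‑memory index and naive keyword matching. `Document`, `retrieve`, and `cite` are hypothetical names, and the record‑level `allowed_principals` set deliberately ignores the field‑level cases noted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str                   # e.g. "confluence", "salesforce"
    text: str
    allowed_principals: frozenset  # users/groups mirrored from the source system


def retrieve(query, user, user_groups, index, audit_log):
    """Return only documents the user may see that also match the query."""
    principals = {user} | set(user_groups)
    # Filter on permissions BEFORE matching, so restricted text never
    # reaches ranking or a downstream model.
    visible = [d for d in index if d.allowed_principals & principals]
    terms = query.lower().split()
    hits = [d for d in visible if any(t in d.text.lower() for t in terms)]
    audit_log.append(
        {"user": user, "query": query, "returned": [d.doc_id for d in hits]}
    )
    return hits


def cite(hits):
    """Pair each passage with a citation string pointing back to its source."""
    return [(d.text, f"{d.source}:{d.doc_id}") for d in hits]
```

Filtering before matching is the design choice that matters here: a document the user cannot see is never scored, summarized, or passed to a model.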

Market validation: funding, ARR, and the investor thesis

Glean’s 2025 funding round is the clearest external signal that investors buy the middleware thesis. Reports across TechCrunch, CB Insights and market data sites document a $150 million Series F in June 2025 and cite a $7.2 billion post‑money valuation. Those pieces also repeat Glean’s public claim of surpassing $100 million in ARR, which is consistent across multiple industry reports. Taken together, capital inflows and reported revenue milestones indicate investor confidence in a capital‑efficient software company that can monetize enterprise AI beyond early pilot budgets.

That said, public filings and private‑market valuations are not the same as durable product‑market fit. A high multiple or a large round signals belief in a thesis; adoption across Fortune‑scale customers, demonstrable ROI metrics, and retention curves will determine long‑term viability. Glean’s track record of customer wins and product enhancements matters more for long‑term survivability than headline valuations.

The strategic question: can a neutral layer survive hyperscaler pressure?

This is the pivotal strategic debate: can a standalone intelligence layer remain relevant as Microsoft, Google, and other platform giants embed their own AI across the stacks customers already use?

- Microsoft has aggressively integrated Copilot into Microsoft 365 and Windows and is shipping features tied directly to its Semantic Index and Copilot for Microsoft 365 offerings. That gives Microsoft enormous surface‑area advantages because a vast installed base of seats already lives in the Microsoft stack.

- Google has likewise embedded Gemini across Workspace apps (Gmail, Docs, Meet, and more) and offers enterprise controls in Workspace to address data protection and privacy. That integration also gives Google a powerful wedge into enterprise collaboration flows.

Where neutrality can fail: hyperscalers can subsidize integrations, bundle AI into existing paid suites, and push down the cost of switching by making certain functionality available “for free” to their existing customer base. If the convenience of a single‑stack experience outweighs the strategic flexibility a neutral layer provides, platform consolidation wins. The neutral approach can prevail only if it demonstrably reduces risk, simplifies operations, and yields clear ROI.

Real‑world impact: what Glean actually enables in production

The product patterns Glean sells are tangible and enterprise focused. Examples from product docs and public demos illustrate realistic value paths:

- A marketing employee asks Glean Assistant for the latest product roadmap summary. The platform:

  - Authenticates the user and evaluates their entitlements across Confluence, Slack, and Jira.

  - Performs permission‑aware retrieval, combining indexed content and live fetches.

  - Synthesizes a grounded answer with citations and links back to the source records the user can view.

  - Optionally suggests follow‑up actions (e.g., open a Jira backlog item) that are gated by admin policy and per‑user OAuth.

- A sales operations triage agent can scan opportunities, identify stale deals, propose field updates, and, after human review, apply pipeline hygiene changes in Salesforce. Admins control which agents can write and which users can approve. Those are not theoretical features; they appear in product documentation and in published examples of embedding Glean into Service Cloud workflows.
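The scan‑and‑propose half of the sales operations example can be sketched without touching any system of record. The code below is a hedged illustration only: the `Opportunity` fields, the 30‑day staleness threshold, and the "Needs Review" value are assumptions made for the example, not Glean or Salesforce defaults.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Opportunity:
    opp_id: str
    stage: str
    last_activity: date


@dataclass(frozen=True)
class Proposal:
    opp_id: str
    field_name: str
    suggested_value: str
    reason: str


def propose_hygiene_updates(opps, today, stale_after_days=30):
    """Flag open deals with no recent activity; proposals only, no writes."""
    cutoff = today - timedelta(days=stale_after_days)
    proposals = []
    for opp in opps:
        if opp.stage.startswith("Closed"):
            continue  # closed deals are out of scope for pipeline hygiene
        if opp.last_activity < cutoff:
            idle_days = (today - opp.last_activity).days
            proposals.append(
                Proposal(opp.opp_id, "stage", "Needs Review",
                         f"no activity for {idle_days} days")
            )
    return proposals
```

The function returns proposals rather than mutating records, which keeps the human review step between detection and any write.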

Strengths: why the thesis is credible

- Engineering moat from search origins. Glean’s early investment in indexing, cross‑tool mapping, and permission mirroring is hard to replicate quickly and is directly relevant to the problem of safe retrieval for LLMs.

- Capital and runway. A substantial Series F and reported ARR north of $100M provide resources to scale engineering and enterprise sales.

- Neutrality as a commercial lever. For multi‑vendor estates, the option to route across models and unify connectors reduces procurement risk and gives buyers choices—an attractive differentiator against single‑stack strategies.

- Concrete, measurable use cases. The product focuses on workflow automation and measurable KPIs (triage speed, pipeline hygiene outcomes) rather than purely conversational novelty, which aligns with CIO demands for ROI.

Risks and open questions

- Hyperscaler encroachment. Microsoft and Google can integrate deep governance and cross‑tool connectors into their existing productivity suites, and customers may trade some flexibility for the convenience of one vendor. The hyperscalers’ installed base and ability to bundle create a powerful counterforce.

- Connector maintenance costs. Neutrality requires supporting a widening array of systems, APIs, and bespoke on‑prem elements. That ongoing engineering burden is expensive and often underestimated.

- Model licensing and data‑use policies. Changes in model vendors’ enterprise licensing (e.g., new restrictions on data use or retention, different privacy commitments) can materially affect a neutral vendor that routes queries to many providers.

- Complex permission semantics. Field‑level security, custom sharing rules, and nonstandard access patterns in enterprise systems are difficult to mirror perfectly, and imperfections create edge cases that are hard to manage at scale. Glean’s docs acknowledge these limitations and recommend mitigation patterns for highly sensitive fields.

- Proving measurable ROI at scale. The clearest path to durable enterprise budgets is hard numbers: time saved, incidents prevented, dollars recovered. The market will watch for customer case studies with rigorous ROI metrics, not just anecdotes.

What to watch next (operational signals)

- Published customer case studies with hard ROI figures: look for time‑saved metrics, compliance incidents avoided, and measured revenue impact.

- Hyperscaler behavior: whether Microsoft and Google meaningfully open interoperability (or only deepen their own walled‑garden features).

- Model licensing changes: stricter enterprise terms or new data‑use clauses could alter economics for middleware vendors.

- Regulatory pressure and standards: auditability, provenance, and record‑level traceability will become table stakes for regulated industries.

A balanced verdict

Glean’s thesis, that the enterprise needs a neutral intelligence layer to make LLMs safe, relevant, and actionable, is both plausible and pragmatic. The company’s engineering lineage, explicit product features around permission‑aware retrieval and write‑action controls, and investor support lend credibility to the claim. Independent reporting and product documentation substantiate the central elements of this thesis: the Series F, the valuation, the ARR claim, and the connector and governance capabilities are corroborated across multiple sources.

But the outcome is conditional. Neutral layers win when they make heterogeneity frictionless and reduce governance risk without becoming another heavyweight platform customers must manage. Hyperscalers win when the convenience and economics of a single stack outweigh the strategic value of neutrality. The practical battleground will be onboarding friction, real ROI evidence, and whether neutral vendors can keep connector and policy maintenance almost invisible to customers.

In short: Glean’s approach addresses the real engineering problem that underlies enterprise adoption of generative AI. If it can operationalize governance and model routing, reduce onboarding friction, and prove enterprise ROI at scale, it will be more than an interesting vendor; it will be foundational infrastructure. If it cannot, the convenience of embedded hyperscaler features will likely absorb much of the market.

FAQs (short, actionable)

- What is an “AI intelligence layer”?

An intelligence layer is middleware that mediates between LLMs and enterprise data/apps: it provides context, enforces permissions, routes across models, and orchestrates actions so AI can operate safely inside a company.

- How is Glean different from Microsoft Copilot or Google Gemini?

Copilot and Gemini are tightly integrated into their vendor’s productivity suites. Glean aims to be neutral: it connects multiple models to a heterogeneous set of enterprise systems and emphasizes permissioned retrieval and action controls.

- Why does governance matter?

Governance enforces who can see what, prevents data leakage, enables source grounding (reducing hallucinations), and produces audit trails, all prerequisites for organization‑wide deployment.

- What does “model abstraction” mean in practice?

It means the platform can route tasks to different LLMs (proprietary or open‑source) and swap providers without breaking connectors, enabling cost, latency, or compliance‑driven choices.

- Can a company like Glean compete with tech giants?

It can if it reduces operational friction for customers, proves measurable ROI, and maintains rapid connector and policy coverage. The market is signaling belief in the thesis today, but the long game depends on execution and hyperscaler responses.

The visible battle for chat UI is only half the story; the strategic fight that will determine whether enterprise AI becomes reliably useful is happening beneath the surface. Glean’s engineering history, product choices, and recent capital infusion make its intelligence‑layer thesis credible — but the ultimate test will be whether it can make governance, model routing, and multi‑connector operations invisible enough to win entire organizations, not just early adopters.
