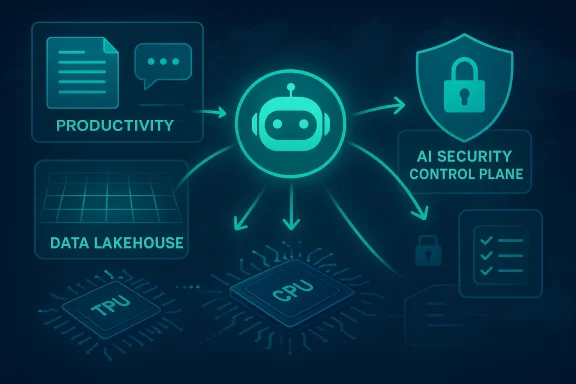

Google Cloud is making a blunt bet: the next phase of enterprise AI will not be about chat windows, but about agents that complete multi-step work across apps, data stores, and security tools. That shift is central to Google Cloud’s latest push against AWS and Microsoft Azure, and it is designed to pull cloud buying decisions away from raw infrastructure comparisons and toward a more integrated AI operating stack. For enterprises, the message is clear: if you want AI that can act, not just answer, Google wants to sell you the platform behind it.

Source: Chosunbiz Google Cloud challenges AWS and Azure with enterprise AI agents push

Background

Google Cloud’s current strategy did not appear overnight. Over the past two years, the company has steadily reframed its cloud business around AI hypercomputer infrastructure, Gemini models, and enterprise software that can sit directly in the workflow. At Next ’24, Google already described a path “to agents” built on its AI-optimized infrastructure, models, and platform, alongside broader enterprise tools across cloud and Workspace. That framing matters because it shows Google has been moving from model demos to a full-stack commercialization plan.

The company’s hardware story has also been tightening. In December 2024, Google Cloud made Trillium, its sixth-generation TPU, generally available and positioned it as the latest Cloud TPU with significant gains in training performance, inference throughput, and energy efficiency. The release notes said Trillium was used to train Gemini 2.0 and that it offered more than a 4x improvement in training performance and up to 3x higher inference throughput versus prior generations. That gives Google a differentiated pitch versus rivals that rely primarily on general-purpose GPU fleets.

Security has been another pillar of the strategy. Google announced a definitive agreement to acquire Wiz for $32 billion in March 2025, saying the combination would strengthen cloud security and support multiple clouds. Separately, Google has tied Mandiant and Wiz together in its security narrative, emphasizing faster prevention, detection, and response across hybrid environments. The point is not just to stop threats; it is to make security a reason customers consolidate around Google Cloud. (blog.google)

Then came the productization of agents. Google’s Gemini Enterprise offering is now framed as a secure platform to discover, create, share, and run AI agents, with connectors to Google Workspace, Microsoft 365, Salesforce, SAP, and BigQuery. The release notes show rapid expansion: support for ADK and A2A agents, connectors, shared agents, and a rebranding of Google Agentspace into Gemini Enterprise. This is not a side feature. It is the customer-facing shell for Google’s broader enterprise AI stack.

The timing is important too. Google Cloud’s latest messaging lands in a market where enterprise AI fatigue is setting in. Many companies can now generate text or summarize documents, but they still struggle to operationalize AI in secure, governed, repeatable workflows. Google is trying to position itself as the vendor that can turn interesting pilots into production systems.

Why the Agent Shift Matters

Thomas Kurian’s core argument is simple: AI is moving from question-and-answer toward systems that can use tools, follow procedures, and complete tasks over multiple steps. That sounds conceptual, but the business implication is huge. Once AI agents can act, companies stop evaluating them like novelty chatbots and start evaluating them like software workers. That raises the value of integration, governance, auditability, and latency.

From prompts to workflows

The old AI model was largely reactive. A user typed a prompt, the model responded, and the interaction ended there. Agentic AI changes that relationship by allowing software to chain actions across systems, retrieve context, and carry out a task with limited supervision. Google is effectively arguing that the real enterprise market begins where the chat demo ends.

This also changes cloud economics. A single prompt can be cheap; a multi-step workflow can consume infrastructure, data access, and security services every time it runs. That means the winning vendor is not necessarily the one with the strongest model alone, but the one that can monetize the surrounding stack. Google’s strategy is to own more of that stack than competitors do.
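
The difference between a cheap prompt and a costly workflow is easy to see in a back-of-the-envelope sketch. The Python below is illustrative only: every price, step name, and the daily run volume are hypothetical placeholders, not Google Cloud pricing. It shows just one thing, that the cost of a run grows with the number of steps and with the services each step touches.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One step in an agent workflow. All costs are hypothetical, in USD."""
    name: str
    model_cost: float            # model inference for this step
    data_cost: float = 0.0       # data access (queries, retrieval)
    security_cost: float = 0.0   # policy checks, scanning, logging


def run_cost(steps: list[Step]) -> float:
    """Cost of one run: sum over every service that each step touches."""
    return sum(s.model_cost + s.data_cost + s.security_cost for s in steps)


# A single question-and-answer exchange: one model call, nothing else.
single_prompt = [Step("answer", model_cost=0.002)]

# A four-step agent workflow that also reads data and passes security checks.
workflow = [
    Step("gather_data", model_cost=0.002, data_cost=0.004),
    Step("assess_policy", model_cost=0.003, security_cost=0.001),
    Step("draft_response", model_cost=0.004),
    Step("open_ticket", model_cost=0.001, data_cost=0.001, security_cost=0.001),
]

runs_per_day = 10_000  # hypothetical volume
print(f"single prompt:  ${run_cost(single_prompt) * runs_per_day:,.2f}/day")
print(f"agent workflow: ${run_cost(workflow) * runs_per_day:,.2f}/day")
```

With these made-up numbers the workflow costs several times more per run than the single prompt, and most of the difference comes from the added steps and the data and security services around the model rather than from any one model call.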

Why enterprises care

Enterprises do not buy AI in a vacuum. They buy it to reduce manual effort in areas like support, finance, HR, code review, security triage, and data analysis. In that context, the promise of agents is less about novelty and more about labor substitution, or at least labor compression. If an agent can gather data, assess policy, draft a response, and open the right workflow in another system, the ROI becomes easier to defend.

The challenge is trust. Companies will not hand real operational authority to a black box unless it can be governed, monitored, and limited. That is why Google keeps tying agents to enterprise controls such as security, compliance, sovereignty, and auditability. It knows the agent era will stall without a convincing control plane.

Infrastructure: TPUs as the Differentiator

Google’s infrastructure pitch remains one of its strongest cards. The company has spent years building custom silicon, and the new TPU line is central to how it wants to stand apart from AWS and Azure. In Google’s framing, the AI race is no longer just about “more compute”; it is about compute architecture optimized for training and inference at scale.

Training versus inference

The reported introduction of TPU 8T and TPU 8I signals a sharper product split between training and inference. Even without every technical detail publicly confirmed, the naming alone suggests Google is trying to separate the economics of model development from the economics of live deployment. That matters because enterprises increasingly want to do both, but not necessarily on the same hardware profile.

Training hardware wins on throughput and scaling. Inference hardware wins on latency, density, and cost per request. Google’s message is that a modern enterprise AI stack needs both, and that a custom TPU family can better optimize the tradeoff than a one-size-fits-all approach. That is a direct challenge to cloud rivals whose AI offerings are often built around broad accelerator portfolios rather than a vertically integrated chip story.

The TPU advantage

Google’s public TPU documentation already highlights the role of TPUs in training and inference across AI workloads, including agents, code generation, and recommendation systems. Trillium, the sixth-generation TPU, is described as the latest Cloud TPU and the basis for large-scale AI work in Google Cloud. It is also embedded in Google’s AI Hypercomputer narrative, which wraps hardware, software, scheduling, and networking into one package.

The strategic value here is not just performance. It is control. By owning the chip stack, Google can better tune pricing, availability, and system design for Gemini and customer workloads. In a market where GPU supply and cost remain strategic battlegrounds, TPU differentiation gives Google a story that AWS and Azure cannot easily copy without massive infrastructure commitments.

What this means for customers

For enterprises, custom silicon sounds abstract until it affects time-to-train, inference speed, or bill shock. If Google can prove that its TPU environment lowers the cost of deploying agents at scale, that becomes a serious procurement advantage. If the economics hold, the hardware story could pull customers into Google Cloud even if their core workloads remain multi-cloud.

- Faster training can shorten the experimentation cycle.

- Lower-cost inference can make always-on agents viable.

- Integrated scheduling can simplify scaling across teams.

- Custom hardware can improve energy efficiency.

- Dedicated AI infrastructure can reduce dependency on general-purpose cloud capacity.

Data Without Dragging It Around

Google’s data message is just as important as its silicon story. The company is pushing a cross-cloud lakehouse model that lets customers analyze data where it already lives, including in AWS and Azure environments. That is a subtle but powerful pitch because it avoids the hardest part of cloud migration: moving the data before you can start the AI work.

Cross-cloud as a wedge

Many enterprises have spent years building data estates across more than one cloud. In practice, that means the real bottleneck is often not model quality but data movement, governance, and duplication. A cross-cloud lakehouse reduces the friction by letting organizations query and analyze without a massive relocation project. That is especially attractive to companies that are already locked into multi-cloud politics inside their own IT departments.

Google is trying to turn that friction into an opportunity. If the company can prove that customers can run AI workflows over distributed data without migrating everything first, it weakens one of AWS and Azure’s biggest advantages: existing footprint. In effect, Google is saying, you do not need to start over to get the AI benefits.

Knowledge catalog and context

The knowledge catalog feature is another piece of this puzzle. By consolidating scattered information into a form Gemini can understand, Google is addressing one of the main reasons enterprise AI underperforms: context fragmentation. Models are only as useful as the data they can reliably ground on, and most organizations have critical information hidden in silos, documents, and apps.

This is where enterprise AI becomes more than search. A knowledge layer can help agents reason across policy documents, customer records, and operational systems. That said, the hard part is not indexing content; it is preserving permissions, lineage, freshness, and trust. Google’s stack promises to do that, but the market will want proof in real deployments.
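
The gap between indexing content and grounding on it safely can be illustrated with a minimal Python sketch. This is not Google's knowledge catalog API. The `Document` fields, the group-based permission model, and the freshness cutoff are all assumptions chosen for illustration. The idea is simply that an agent should see only documents the requesting user may read and that are recent enough to trust, with the source retained for lineage.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Document:
    """Hypothetical catalog entry; field names are illustrative."""
    doc_id: str
    source: str                      # lineage: the system the content came from
    allowed_groups: frozenset[str]   # permissions carried over from that system
    updated_at: datetime             # freshness
    text: str


def visible_context(docs, user_groups, now, max_age):
    """Return only documents the user may read and that are fresh enough."""
    return [
        d for d in docs
        if d.allowed_groups & user_groups      # permission check
        and now - d.updated_at <= max_age      # freshness check
    ]


now = datetime(2025, 1, 1)
docs = [
    Document("hr-1", "hr_portal", frozenset({"hr"}), now - timedelta(days=3), "Leave policy"),
    Document("fin-1", "erp", frozenset({"finance"}), now - timedelta(days=2), "Q4 forecast"),
    Document("hr-0", "hr_portal", frozenset({"hr"}), now - timedelta(days=400), "Old leave policy"),
]

context = visible_context(docs, user_groups={"hr"}, now=now, max_age=timedelta(days=90))
print([(d.doc_id, d.source) for d in context])
```

For a user in the `hr` group, only `hr-1` survives: `fin-1` is filtered out by permissions and `hr-0` by age. A production system would have to enforce the same checks at query time against the source systems' own access controls, which is the part that is hard to prove outside real deployments.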

The cloud politics of data

There is also a competitive angle here. AWS and Azure have long benefited from customer inertia. Once data pipelines are in place, moving becomes expensive and politically complicated. Google’s cross-cloud and knowledge-centric approach is an attempt to bypass that inertia and make AI the reason to re-evaluate the stack.

- Data locality lowers migration barriers.

- Better context improves agent accuracy.

- Unified metadata supports governance.

- Cross-cloud analysis fits hybrid enterprise reality.

- Context management becomes a buying criterion, not an afterthought.

Security as an AI Control Plane

Security may be the most underappreciated part of Google Cloud’s AI pitch. Enterprises will not let agents touch meaningful systems unless they trust the detection, response, and governance layers around them. Google is leaning hard into that reality by combining Mandiant capabilities with the Wiz platform and wrapping them in Gemini-driven automation. (blog.google)

Why security now matters more

Agentic systems widen the blast radius of mistakes. A chatbot that hallucinates is annoying; an agent that opens tickets, changes access, or triggers a workflow can create operational damage. That is why security cannot be bolted on later. It must be embedded into the same platform that launches the agent in the first place.

Google’s recent security messaging reflects this. The company has talked about unified security, agentic automation, and more centralized cloud workflows. The implication is that AI security is no longer just about monitoring threats. It is about governing how AI itself interacts with enterprise systems.

Mandiant plus Wiz

The combination of Mandiant and Wiz gives Google a two-layer story. Mandiant brings incident response heritage and threat intelligence credibility. Wiz brings modern cloud posture and exposure management expertise. Together, they create the basis for a security agent that can detect threats, investigate exposures, and recommend or automate responses. That is exactly the kind of control plane enterprises will expect if they are going to trust AI agents with critical work.

The strategic significance is clear: security becomes part of the differentiation, not just a compliance checkbox. Google wants buyers to believe that its AI platform is safer to scale than rival stacks because the detection and response fabric is built in. That can be persuasive for regulated sectors, though it will still require extensive validation.

Trust is a product feature

There is a deeper lesson here. In the agent era, trust is not just a policy outcome; it is a product feature. If Google can make governance, audit trails, and response automation intuitive, it gains a crucial advantage over competitors that may offer similar model quality but less coherent security integration. In theory, that is where platform leadership gets decided.

- Threat detection must be integrated with workflow automation.

- AI actions need authorization and auditability.

- Security data must be understandable by agents.

- Compliance controls must work across cloud boundaries.

- Response tools must be fast enough to matter in real incidents.
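
The requirements above, in particular authorization and auditability for agent actions, can be sketched in a few lines of Python. This is a generic pattern, not a Gemini Enterprise, Mandiant, or Wiz API. The `ALLOWED_ACTIONS` policy table, the agent names, and the `authorize` helper are all hypothetical.

```python
from datetime import datetime, timezone

# Hypothetical policy: the actions each agent is permitted to perform.
ALLOWED_ACTIONS = {
    "support-agent": {"open_ticket", "draft_reply"},
    "security-agent": {"open_ticket", "quarantine_host"},
}

# Every attempt is recorded here, whether or not it was allowed.
audit_log: list[dict] = []


def authorize(agent: str, action: str, target: str) -> bool:
    """Check the action against policy and record the attempt.

    The caller should perform the real action only when this returns True.
    """
    allowed = action in ALLOWED_ACTIONS.get(agent, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "target": target,
        "allowed": allowed,
    })
    return allowed


authorize("support-agent", "open_ticket", "case-123")   # permitted by policy
authorize("support-agent", "change_access", "user-42")  # denied, still logged

for entry in audit_log:
    print(entry["agent"], entry["action"], entry["allowed"])
```

The design choice worth noting is that denied attempts are logged alongside permitted ones. An audit trail that records only successful actions cannot show what an agent tried to do, and that is the question a security team is most likely to ask after an incident.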

Gemini Enterprise and the Platform Play

The most visible expression of Google’s strategy is Gemini Enterprise, which now serves as the company’s flagship business AI environment. Google says it lets teams discover, create, share, and run AI agents in one secure platform, with connectors to systems such as Google Workspace and Microsoft 365. This is an explicit attempt to become the control center for enterprise AI work rather than merely a model provider.

One platform, many agents

Google’s latest release notes show the platform maturing quickly. Support for ADK and A2A agents, administration tools, shared agents, and additional connectors all point to one direction: a marketplace-plus-control-plane model. That is important because enterprises rarely want to build every agent from scratch. They want reusable building blocks, approved access patterns, and a centralized way to manage the sprawl.

The platform also appears designed to encourage multi-agent collaboration. Google’s language around deploying and managing agents “in one place” and automating cross-platform workflows suggests it wants customers to think of Gemini Enterprise as an orchestrator, not just an assistant. That is a sharper and more ambitious product vision than simply embedding Gemini into a chat box.

Ecosystem matters

Google is also building around partners. The company says thousands of agents are available through its ecosystem, and it has highlighted integrations from ServiceNow, Workday, Deloitte, Accenture, PwC, and others. That matters because enterprise software buyers often trust domain-specific partners more than a general-purpose AI vendor. A rich ecosystem helps Google reduce the fear of lock-in while increasing platform gravity.

Partner validation is also strategically smart. By creating designations such as “Google Cloud Ready - Gemini Enterprise,” Google is trying to establish quality control in a rapidly expanding market. In a sector flooded with experimental agents, a trusted badge can become a meaningful purchasing signal.

Why this competes with AWS and Azure

AWS and Azure have their own AI and agent strategies, but Google’s approach is more visibly centered on the agent as the organizing unit. That distinction could matter. If enterprises begin to choose clouds based on how naturally they support cross-app autonomous workflows, then the competition shifts from model benchmarks to platform ergonomics.

- Google offers a single agent-centric brand.

- Google ties agents to security and data.

- Google promotes partner-certified agent ecosystems.

- Google emphasizes enterprise workflow integration.

- Google is trying to own the control layer, not just the API.

Competitive Stakes: AWS, Azure, and the Cloud Reset

Google Cloud remains the No. 3 player behind AWS and Azure, but the market is not frozen. AI is reshaping buying criteria, and that gives Google a chance to attack the hierarchy. Instead of trying to out-AWS AWS on raw cloud breadth, Google is seeking advantage through AI specialization, custom infrastructure, and an enterprise agent stack that feels more coherent than fragmented point solutions.

A different kind of cloud pitch

AWS still benefits from deep enterprise penetration and broad service coverage. Azure has the advantage of Microsoft’s productivity and identity footprint, especially in Windows-heavy and Microsoft 365-heavy organizations. Google’s answer is to frame the next cloud decision around how work gets done in an AI-native enterprise, rather than just where virtual machines run.

That is a clever repositioning because it brings Google closer to the actual pain point. CIOs are not merely buying compute; they are buying a way to make employees more effective, secure operations more automated, and data more useful. If Google can show a cleaner path from model to agent to workflow, it can sidestep some of the incumbents’ historical advantages.

The enterprise versus consumer divide

Consumer AI is about delight, speed, and novelty. Enterprise AI is about control, permissions, policy, and reliability. Google has long excelled at consumer-scale AI, but this latest push is aimed squarely at the enterprise divide. The company is trying to turn its consumer AI credibility into a corporate procurement story.

That is a harder sell than it sounds. Enterprises reward consistency over flash, and they often resist platform shifts unless the business case is concrete. Still, the current moment favors vendors that can promise operational outcomes, not just shiny demos.

Google’s opening

The best opening for Google is probably not a full displacement of AWS or Azure. It is a share gain in workloads where AI value is immediate and where customers are willing to reconsider infrastructure if the platform reduces complexity. Agentic automation, security operations, data analysis, and workflow orchestration are all plausible entry points.

- AI can be the wedge into broader cloud adoption.

- Security can de-risk cross-cloud operations.

- Data tools can unlock existing estates.

- Partners can accelerate enterprise trust.

- TPU economics can improve the value proposition.

Strengths and Opportunities

Google’s push is compelling because it links infrastructure, software, data, and security into a single narrative instead of treating AI as a bolt-on feature. That coherence gives it a real chance to win mindshare with buyers who are exhausted by scattered point products and are looking for an enterprise-grade path to production.

- Custom TPUs give Google a credible performance and cost story.

- Gemini Enterprise provides a unified front door for agent adoption.

- Cross-cloud data access reduces migration friction.

- Mandiant plus Wiz strengthens the security narrative.

- Partner ecosystems expand use cases beyond Google-native tools.

- Workflow automation aligns AI with measurable business value.

- Multi-agent orchestration could become a durable platform moat.

Risks and Concerns

The strategy is ambitious, but ambition cuts both ways. Google is trying to unify too many moving parts at once, and enterprise customers may worry that the platform is still evolving faster than they can operationalize it. The more integrated the stack becomes, the more damaging any weakness in one layer could be.

- Integration complexity may slow adoption in large organizations.

- Governance gaps could undermine trust in autonomous agents.

- AI cost inflation could emerge if agent usage scales quickly.

- Vendor concentration may worry buyers who prefer modular architectures.

- Migration inertia still favors AWS and Azure in many accounts.

- Security automation can create new failure modes if misconfigured.

- Proof of ROI will need to be stronger than marketing claims.

Looking Ahead

The next stage will be about execution, not announcements. Google must prove that its agent stack works reliably in real enterprise environments, with real permissions, real data, and real business constraints. If it can do that, the company could convert AI enthusiasm into durable cloud share gains. If it cannot, the market will remember the vision more than the product.

The most revealing tests will come from customers trying to deploy agents across messy, hybrid, multi-cloud realities. That is where the promise of a unified stack will either become a practical advantage or expose the limits of orchestration, governance, and economics. Google has set the bar high, and now it has to show that the architecture can survive contact with enterprise reality.

- Watch for broader availability of the new TPU lineup.

- Watch for adoption metrics around Gemini Enterprise.

- Watch for evidence that cross-cloud lakehouse usage reduces migration pain.

- Watch for security automation outcomes tied to Mandiant and Wiz.

- Watch for partner-led agents that move from pilot to production.
