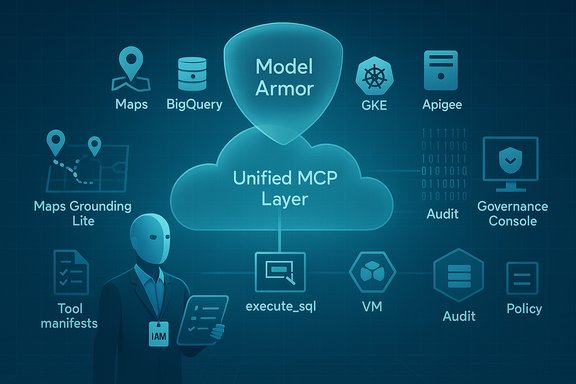

Google’s announcement that it will offer fully managed remote Model Context Protocol (MCP) servers as a “unified layer” across Google and Google Cloud services marks a decisive shift from model-centric product experiments to infrastructure that treats agents as first-class, discoverable, and governable consumers of enterprise data and tooling. The change is operational, not merely rhetorical: developers can now point an MCP-aware agent at Google’s documented, per-product MCP endpoints and, subject to IAM and organizational policy, have that agent discover and call tools for Maps, BigQuery, GKE, GCE and Apigee-managed APIs without building bespoke adapters.

Source: Cloud Wars MCP is now fully integrated with Google Cloud, enabling AI agents to access unified services from Maps to BigQuery without complex multi-endpoint navigation.

Background

What is MCP and why it matters

The Model Context Protocol (MCP) is an open specification, originally developed and popularized by Anthropic, that defines how language models and AI agents discover, describe and safely call external “tools” or services. Instead of embedding bespoke API calls or moving large data blobs into a model’s context window, MCP lets a model query a server of tools that advertises machine‑readable tool manifests, input/output schemas, and invocation semantics. This work is the practical plumbing that turns an LLM into an agent that can query a database, inspect a map, or trigger an infrastructure change in a controlled way. MCP’s design reduces prompt engineering friction, centralizes access controls, and enables observability and auditing at the connector layer.

The industry move toward agentic interoperability

Over the past 18 months MCP has moved fast from an experimental idea into a de‑facto interoperability layer. Major vendors and community projects have implemented MCP SDKs and public registries; cloud providers and platform vendors are building MCP server support (both managed and local) into their tools; and third‑party connector platforms are wrapping legacy APIs as MCP tools to let agents reason about them. This momentum matters because agents only become practical once they can reliably and securely reach current, authoritative data without replicating or reshaping it for every model.

What Google announced: the essentials

A unified, fully managed MCP layer across Google and Google Cloud

Google’s core claim is simple: it will operate fully managed remote MCP servers for a range of its own products, and integrate MCP as a first‑class “API style” across Apigee and Google Cloud tooling. Practically this means:

- A single, documented MCP endpoint per product domain (for example, maps-grounding-lite-mcp, bigquery.googleapis.com/mcp, container.googleapis.com/mcp) that agent runtimes can call.

- Apigee will treat MCP as a first‑class API style and can expose existing APIs as MCP tools without requiring developers to rewrite or re‑deploy them. Apigee handles the translation and hosting.

- Managed controls for identity (Google Cloud IAM), security scanning (Model Armor), and centralized audit logging so enterprise administrators can govern agent access in the same way they govern human‑initiated API use.
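To make the discovery-and-call pattern concrete, here is a minimal sketch of the request envelopes an agent runtime might send to one of these endpoints. MCP messages use JSON-RPC 2.0, with `tools/list` for discovery and `tools/call` for invocation. The endpoint URLs are the ones listed in this article's appendix; the helper names, the `text_query` argument, and the omission of the initialization handshake, authentication headers and transport are simplifying assumptions, so nothing here contacts a server.

```python
import itertools
import json

# Endpoint URLs as listed in this article's appendix.
MCP_ENDPOINTS = {
    "maps": "https://mapstools.googleapis.com/mcp",
    "bigquery": "https://bigquery.googleapis.com/mcp",
    "gke": "https://container.googleapis.com/mcp",
}

_request_ids = itertools.count(1)


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request object (the envelope MCP uses)."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def list_tools_request():
    """Ask a server to enumerate the tools it advertises."""
    return jsonrpc_request("tools/list")


def call_tool_request(name, arguments):
    """Ask a server to invoke one tool with the given arguments."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


if __name__ == "__main__":
    # Print the payloads only; sending them would require credentials
    # and a session handshake that this sketch leaves out.
    print(MCP_ENDPOINTS["maps"])
    print(json.dumps(list_tools_request(), indent=2))
    # "text_query" is a hypothetical argument name, not taken from Google's docs.
    print(json.dumps(call_tool_request("search_places", {"text_query": "coffee"}), indent=2))
```

The same two message shapes apply to every endpoint in the list, which is the practical meaning of a "unified layer": only the URL and the tool names change.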

Service-by-service breakdown

Google Maps: Maps Grounding Lite

Maps Grounding Lite exposes geospatial tools via an MCP server that can provide place searches, weather snapshots and route computations. Agents can call the MCP endpoint to get place metadata (Place IDs, coordinates, short summaries) and use those results to enrich conversational responses or trigger follow‑on actions (show a location on a map, compute an ETA). Grounding Lite intentionally excludes step‑by‑step navigation and some real‑time traffic details; Google documents the limitations and offers an OAuth/API key–based enablement flow for projects. Key takeaways:

- Useful for grounding recommendations and contextual location queries.

- Designed to be agent‑discoverable with explicit credentials and billing requirements.

- Not a replacement for full navigation APIs when strict turn‑by‑turn accuracy is required.

BigQuery: native SQL tooling for agents

BigQuery’s remote MCP server provides discoverable tools such as listing datasets, inspecting table schemas and executing parameterized SELECT queries. The execute_sql tool is explicitly limited to read‑only SELECT statements (writes and stored procedures are blocked), and all execution is subject to BigQuery IAM roles and project billing. This preserves enterprise data residency and auditability while letting an agent formulate and run queries without shipping data outside the cloud project. What this enables:

- Agents that can construct efficient SQL, run it, and interpret results as structured data.

- Reduced token consumption, since agents receive structured results rather than massive dumps of text.

- Clear billing and quota visibility: queries are charged to the project and require the same permissions as human query runners.
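Although the server enforces the read-only rule itself, a client-side preflight check can reject obviously unsuitable statements before they consume quota. The sketch below is one conservative way to do that, not Google's implementation: the function names are hypothetical, and the `query` argument key is an assumption about the tool's input schema, which should be confirmed from the `tools/list` response.

```python
import re


def is_single_select(sql: str) -> bool:
    """Conservative preflight: accept exactly one statement that begins with SELECT.

    Deliberately strict: statements containing an inner semicolon (even inside
    a string literal) or starting with a comment are rejected.
    """
    stripped = sql.strip().rstrip(";").strip()
    if not stripped or ";" in stripped:
        return False
    return re.match(r"(?i)^select\b", stripped) is not None


def build_execute_sql_call(sql: str, request_id: int = 1) -> dict:
    """Wrap a vetted statement in a JSON-RPC tools/call request for execute_sql."""
    if not is_single_select(sql):
        raise ValueError("only a single SELECT statement is allowed")
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        # "query" is an assumed argument name; check the advertised input schema.
        "params": {"name": "execute_sql", "arguments": {"query": sql}},
    }


if __name__ == "__main__":
    print(build_execute_sql_call("SELECT name FROM dataset.table LIMIT 10"))
```

A check like this complements the server-side restriction rather than replacing it; IAM roles and the tool's own SELECT-only rule remain the actual enforcement points.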

GKE and GCE: infrastructure as agentable tools

Google’s GKE and GCE MCP servers expose cluster and VM management operations in an agent‑friendly form. Use cases span inspection (list clusters, read node pool configs), diagnostics (fetch logs, view pod YAML), and guided operational flows (recommend remediation, suggest cost optimizations). These tools aim to let agents assist with common infrastructure workflows while enforcing IAM scopes and optionally routing MCP traffic through Model Armor inspection for prompt‑injection defenses. Important constraints:

- The remote MCP servers are intended for controlled, auditable operations; local MCPs remain useful for development and offline scenarios.

- Google documents recommended patterns to run agents with dedicated identities to make audits clear and limit blast radius.

Apigee: turning APIs into MCP tools

By integrating MCP as an API style in Apigee API Hub, Google allows organizations to catalog existing APIs as MCP tools and manage them with Apigee’s enterprise governance: API policies, quotas, authentication, and developer portal metadata. That means legacy REST or RPC endpoints can be surfaced to agents, complete with the same guardrails used for human apps, without rewriting the APIs. Apigee will auto‑parse MCP specification files attached to APIs and list tools in its UI. Developer benefits:

- Faster agent integration with existing backend investments.

- Single control plane for both human and agent consumers of an API.

- Easier discovery via API Hub and auto‑generated tool manifests.
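To illustrate what "surfacing an API as an MCP tool" amounts to, the sketch below maps a simplified description of a REST operation onto an MCP tool descriptor, which carries a `name`, a `description`, and an `inputSchema` expressed as JSON Schema. This is not Apigee's translation logic: the input format, the function name and the `get_order_status` endpoint are all invented for illustration.

```python
def rest_operation_to_mcp_tool(operation: dict) -> dict:
    """Map a simplified REST operation description to an MCP tool descriptor."""
    properties = {}
    required = []
    for param in operation["parameters"]:
        properties[param["name"]] = {
            "type": param["type"],
            "description": param.get("description", ""),
        }
        if param.get("required", False):
            required.append(param["name"])
    return {
        "name": operation["operation_id"],
        "description": operation["summary"],
        # MCP tools describe their inputs with a JSON Schema object.
        "inputSchema": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


# Hypothetical legacy endpoint, invented for this example.
GET_ORDER_STATUS = {
    "operation_id": "get_order_status",
    "summary": "Look up the status of an order by its ID.",
    "parameters": [
        {"name": "order_id", "type": "string", "required": True,
         "description": "Internal order identifier."},
        {"name": "include_history", "type": "boolean"},
    ],
}

if __name__ == "__main__":
    print(rest_operation_to_mcp_tool(GET_ORDER_STATUS))
```

The point of the constrained schema is that an agent sees typed, named inputs rather than a free-form URL, which is what lets the same policies and quotas apply to agent and human callers alike.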

How Google is trying to make this safe and governable

Identity, IAM and Model Armor

Google emphasizes three pillars for risk reduction:

- IAM scoping: MCP tool calls are gated by Google Cloud IAM, so agents must use identities that carry explicit permissions to call MCP tools. This ties every agent action to existing identity and audit systems.

- Model Armor: a platform service Google uses to scan MCP traffic for prompt‑injection patterns, malicious URIs, and suspicious payloads. Model Armor can be added as a content security provider for MCP services and provides configurable sanitization settings.

- Centralized audit logging and org‑level controls: MCP tool invocations are logged and can be filtered by labels identifying MCP usage; organization policies can restrict which MCP services are allowed. Apigee and API Hub extend these governance controls to developer‑facing cataloguing.

Why this matters to enterprise IT and developers

Operational advantages

- Reduced integration cost: Instead of one‑off connectors for every agent runtime or model, an MCP server is a single, reusable adapter that multiple agents can discover and call. This reduces engineering duplication and speeds up pilot timelines.

- Lower hallucination and better reasoning: When an agent can ask for structured data (e.g., table schema, query results, place metadata), it can reason more reliably about facts and avoid inventing uncertain details. BigQuery’s execute_sql tool and Maps Grounding Lite’s place metadata are explicit examples.

- Unified governance: Exposing connectors through Apigee and Google Cloud MCP servers lets security and compliance teams use familiar tools—policies, logs, audits—rather than invent new governance silos for agents.

New responsibilities for IT

Agentic workflows change the identity and data‑security model in three ways:

- Explosion of non‑human identities: Agents and ephemeral agent credentials will proliferate; identity lifecycle management must be automated.

- Observability needs: Teams must collect structured telemetry (who/what/when/tool) to investigate agent-driven incidents.

- Policy as runtime guardrail: Static IAM alone is insufficient—runtime policy enforcement, content scanning (Model Armor), and tool‑level intent boundaries are necessary to prevent misuse.
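The who/what/when/tool telemetry described above can be captured with a small structured record emitted on every tool call. The sketch below is one possible shape, assuming a team-defined schema: the field names and the example agent identity are hypothetical and do not correspond to Google Cloud's audit log format. It records argument keys rather than values so that query text and other payloads stay out of the log stream.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ToolCallRecord:
    agent_identity: str    # who: the non-human identity making the call
    server: str            # what: the MCP endpoint that was called
    tool: str              # tool: the tool name invoked
    timestamp: str         # when: ISO 8601, UTC
    outcome: str           # e.g. "ok", "denied", "error"
    argument_keys: tuple   # argument names only; values are not logged


def record_tool_call(agent_identity, server, tool, arguments, outcome, now=None):
    """Create an audit record for one tool invocation."""
    when = now or datetime.now(timezone.utc)
    return ToolCallRecord(
        agent_identity=agent_identity,
        server=server,
        tool=tool,
        timestamp=when.isoformat(),
        outcome=outcome,
        argument_keys=tuple(sorted(arguments)),
    )


def to_log_line(record: ToolCallRecord) -> str:
    """Serialize a record as one JSON line for a log pipeline."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    rec = record_tool_call(
        "pilot-agent@example-project",  # hypothetical identity
        "https://bigquery.googleapis.com/mcp",
        "execute_sql",
        {"query": "SELECT 1"},
        "ok",
    )
    print(to_log_line(rec))
```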

Critical analysis — strengths, gaps and risks

Strengths

- Practical interoperability: Google’s choice to support MCP across widely used services (Maps, BigQuery, GKE, Apigee) accelerates real‑world agent scenarios without locking customers into a single model vendor. That aligns with MCP’s original interoperability goals.

- Enterprise‑grade governance: Integrating MCP with Apigee and Google Cloud IAM brings enterprise controls to agent access. Turning existing APIs into MCP tools via Apigee significantly reduces migration friction for large organizations with entrenched API portfolios.

- Developer productivity: The managed remote MCP model and existing CLI tooling (Gemini CLI integrations, MCP inspectors) let developers plug agents into Google services with minimal setup during early pilots.

Gaps and open questions

- Operational cost and UX: Agent-driven workflows add new API call patterns and potentially high query volumes. BigQuery query costs, GKE API calls and Model Armor scanning could generate unpredictable charges unless teams plan egress, query limits and caching strategies. Google documents roles and billing, but cost modeling remains an explicit operational responsibility.

- Registry and supply‑chain risk: Public registries of MCP servers and the increasing number of third‑party MCP endpoints enlarge the attack surface. Registry poisoning, malicious tool manifests, or compromised MCP servers could route agents to unsafe endpoints. Strong attestation and vetting of MCP servers is required—but community registries will need to mature to meet enterprise trust requirements.

- Limits of automated defenses: Model Armor and static filters are helpful but imperfect. Prompt injection remains a nuanced attack surface—tools can return cleverly structured data designed to guide an LLM toward undesired actions. Runtime anomaly detection and human‑in‑the‑loop confirmations will remain necessary for high‑risk tasks.

Security risks to prioritize

- Privilege escalation via chained tools: Low‑privilege tools combined in a multi‑step agent workflow may enable indirect escalation if tool descriptors do not capture side effects.

- Exfiltration via logging and telemetry: If Model Armor logs entire payloads when scanning, sensitive data could leak into logs unless logs are carefully redacted or access controlled. Google explicitly warns about this in its logging guidance.

- Credential exposure: Agents using service account keys or long‑lived credentials increase the blast radius if those credentials are stolen. Best practice: ephemeral agent identities, short‑lived tokens, and robust secret management.

Cross‑checks and verification

To validate the main claims in Google’s announcement:

- Google Cloud’s blog post and Apigee documentation confirm the launch of managed MCP servers and Apigee’s MCP API style.

- Product documentation for Maps Grounding Lite, BigQuery’s MCP reference and GKE’s MCP pages provide concrete endpoints and implementation details (e.g., maps-grounding-lite-mcp, bigquery.googleapis.com/mcp, container.googleapis.com/mcp) and list the exact tools and OAuth/IAM scopes required.

- Independent reporting and analysis (TechCrunch and industry press) corroborate Google’s roadmap and emphasize the Model Armor and IAM controls Google highlights. These independent writeups provide additional context about operational rollout and security framing.

Practical guidance — how to pilot MCP-enabled agents safely

A short checklist for pilots

- Start with read‑only tools (Maps Grounding Lite, BigQuery SELECT) to validate discovery, authentication, and result shaping without write risk.

- Use dedicated agent identities with narrow IAM roles and enable logging and alerts on those identities.

- Enable Model Armor and configure sanitization policies to block known malicious patterns; monitor logs for false positives and sensitive payloads.

- Set cost and rate limits on MCP tool calls (BigQuery quotas, API throttles) and include preflight cost estimates for any agent‑initiated SQL or analytics workloads.

- Implement human approval gates for any agent flow that could make changes (create/delete/modify infrastructure or data). Use two‑step approvals for destructive actions.

- Vet MCP servers and manifests before adding them to an organization’s registry—require attestation, code review and signing for third‑party MCP providers.
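Several checklist items (start read-only, gate changes behind human approval, require two steps for destructive actions) can be combined into one small policy function that runs before any tool call is forwarded. The sketch below is an assumption-laden illustration: the read-only tool names come from this article, the mutating tool names are hypothetical, and "two-step approval" is interpreted here as sign-off from two distinct people.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


# Read-only tools named in this article.
READ_ONLY_TOOLS = frozenset(
    {"search_places", "lookup_weather", "compute_routes", "execute_sql"}
)
# Hypothetical mutating tools, invented for illustration.
MUTATING_TOOLS = frozenset({"resize_node_pool", "delete_instance"})


def evaluate_tool_call(tool: str, approved_by=()) -> Decision:
    """Decide whether an agent's tool call may proceed."""
    if tool in READ_ONLY_TOOLS:
        return Decision.ALLOW
    if tool in MUTATING_TOOLS:
        # Require two distinct approvers before any change is made.
        if len(set(approved_by)) >= 2:
            return Decision.ALLOW
        return Decision.NEEDS_APPROVAL
    # Default-deny anything that has not been explicitly vetted.
    return Decision.DENY


if __name__ == "__main__":
    print(evaluate_tool_call("execute_sql"))
    print(evaluate_tool_call("delete_instance", approved_by=["alice"]))
    print(evaluate_tool_call("delete_instance", approved_by=["alice", "bob"]))
```

The default-deny branch matters most during pilots: a newly advertised tool stays unusable until someone has reviewed it and placed it in one of the two sets.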

Developer considerations

- Prefer parameterized tools (tool manifests that accept constrained inputs) over free‑form SQL or shell execution. Parameterized tools lower the chance of unexpected side effects and keep prompts more deterministic.

- Cache low‑variance results and use asynchronous checks for non‑blocking, high‑latency tool calls (the MCP spec supports async patterns). This reduces token pressure and latency impact.
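Caching low-variance results can be as simple as a time-to-live (TTL) cache keyed on the tool name and its arguments. The sketch below is a minimal in-memory version under stated assumptions: the class name is hypothetical, `call` stands in for whatever function actually sends the MCP request, and the clock is injectable so that expiry can be exercised without waiting.

```python
import json
import time


class ToolResultCache:
    """In-memory TTL cache for tool results, keyed on tool name and arguments."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (stored_at, result)

    @staticmethod
    def _key(tool: str, arguments: dict) -> str:
        # Sorting keys makes logically equal argument dicts share one entry.
        return tool + ":" + json.dumps(arguments, sort_keys=True)

    def get_or_call(self, tool: str, arguments: dict, call):
        """Return a fresh cached result, or invoke call(tool, arguments) and store it."""
        key = self._key(tool, arguments)
        now = self._clock()
        hit = self._entries.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]
        result = call(tool, arguments)
        self._entries[key] = (now, result)
        return result


if __name__ == "__main__":
    cache = ToolResultCache(ttl_seconds=300)

    def fake_call(tool, arguments):
        # Stand-in for a real MCP request; "city" is a hypothetical argument.
        return {"tool": tool, "arguments": arguments}

    print(cache.get_or_call("lookup_weather", {"city": "Oslo"}, fake_call))
    print(cache.get_or_call("lookup_weather", {"city": "Oslo"}, fake_call))
```

A short TTL suits data such as weather snapshots or table schemas; results that must reflect the current state, such as cluster status during an incident, are better left uncached.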

Competitive and strategic implications

Google’s managed MCP push places it squarely in the practical interoperability layer of the agentic AI stack. The move reduces integration barriers for customers already invested in Google Cloud and Apigee, and it nudges enterprises toward consistent governance patterns for both human and agent consumers. At the same time:

- Cloud vendors are converging on similar approaches (Microsoft, AWS and third‑party connector platforms offer compatible governance and agent registries), which makes multi‑cloud agent deployments increasingly plausible but operationally complex.

- Neutral stewardship matters: communities and consortia (including the Linux Foundation–hosted initiatives) are trying to provide neutral governance for MCP and adjacent standards to avoid capture by a single vendor; enterprises should favor open registry and attestation patterns when possible.

Final assessment

Google’s managed MCP servers are a pragmatic and consequential step toward an agentic ecosystem that scales in enterprise settings. By integrating MCP with Apigee and major Google Cloud products, Google reduces developer friction, brings enterprise governance primitives to agent flows, and demonstrates a clear enterprise pathway from pilot to production. The technical work (documented endpoints, scoped tools, IAM controls and Model Armor integrations) shows that Google is thinking beyond model capabilities to system‑level risk and operationalization.

That said, the technology’s promise comes with real responsibilities. Organizations must design identity, billing and observability systems that treat agents like first‑class identities; enforce least privilege and attestation for MCP servers; and require human‑in‑the‑loop approvals for high‑impact actions. Without those controls, the same convenience that makes agents powerful also amplifies systemic risk.

In short: Google’s MCP launch is an important maturity milestone for agentic AI, one that enterprise teams should evaluate urgently, pilot cautiously, and govern meticulously.

Appendix — quick reference (endpoints & actions)

- Maps Grounding Lite MCP endpoint: maps-grounding-lite-mcp (https://mapstools.googleapis.com/mcp). Tools: search_places, lookup_weather, compute_routes.

- BigQuery MCP endpoint: https://bigquery.googleapis.com/mcp. Tools include dataset/table discovery and execute_sql (SELECT only).

- GKE MCP endpoint: https://container.googleapis.com/mcp. Tools include cluster inspection, resource YAML retrieval and operation monitoring.

- Apigee: MCP API style support and MCP tool management via API Hub; Apigee can translate existing APIs into MCP tool manifests.
