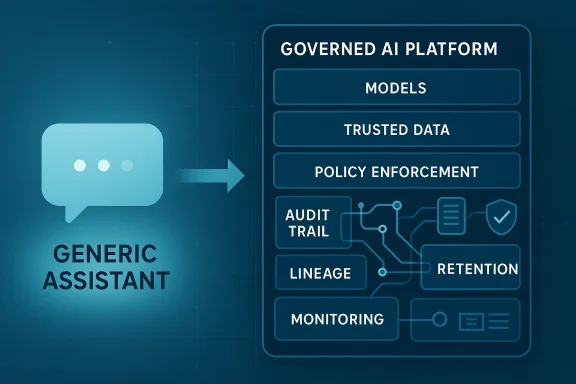

For a brief moment, it looked like generic AI assistants might become the universal interface for enterprise work. That moment is ending fast, not because businesses have lost interest in AI, but because they have learned the hard way that governance is not an optional layer you add later; it is the difference between a pilot and a production system. The new center of gravity is shifting toward platform-based AI, where models, data controls, auditability, and policy enforcement are designed together instead of stitched on afterward. In enterprise IT, the winning question is no longer “Can the model answer?” but “Can the organization defend the answer?”

Overview

The enterprise AI story of the last two years has followed a familiar pattern: enthusiastic adoption, rapid experimentation, and a growing realization that convenience is not the same thing as control. Generic assistants were the first believable interface for broad AI use because they were easy to try, easy to demo, and easy to understand. But enterprises are not buying novelty; they are buying systems that survive audits, satisfy regulators, and integrate with existing business rules.

That distinction matters because enterprise workflows are not casual conversations. They involve identity, access, compliance, finance, procurement, HR, legal review, and customer data. In those environments, a wrong answer is not just a disappointing answer; it is a liability. Once AI touches regulated data or operational decisions, the organization needs to show what happened, why it happened, and who is accountable for it.

The shift from point tools to platforms is therefore less about model quality than about system design. A point tool optimizes for a single interaction. A platform optimizes for lifecycle management: ingestion, policy, orchestration, traceability, monitoring, retention, and review. Microsoft’s Fabric governance documentation, for example, frames governance as an end-to-end discipline that includes access management, audit, lineage tracking, impact analysis, and monitoring rather than an afterthought attached to analytics or AI features (learn.microsoft.com). That is the enterprise pattern emerging across the market.

This is also why the conversation has moved toward smaller, domain-specific models. Gartner’s recent guidance predicts that organizations will use small, task-specific models far more often than general-purpose LLMs by 2027, and it explicitly ties that shift to reliability, speed, and lower operating costs. IBM has been making a similar argument from a different angle, emphasizing that smaller models can be “more cost-effective” and “more accurate” for enterprise use cases when the goal is to unlock value from private data rather than answer everything under the sun (ibm.com).

At the same time, the governance stack itself is maturing. Microsoft’s current Fabric and Purview documentation now describes practical controls for audit, retention, secure interaction capture, and compliance management around Copilot in Fabric, showing that enterprise AI is increasingly being treated like any other governed workload with policy, telemetry, and lifecycle controls (learn.microsoft.com). The industry is not abandoning AI; it is industrializing it.

Why Generic Assistants Hit the Wall

The original appeal of generic assistants was obvious: one interface, many tasks, minimal setup. For brainstorming, summarization, and ad hoc drafting, that approach is still compelling. But enterprises do not measure AI by how often it sounds useful; they measure it by how often it can be trusted. That is where broad assistants begin to break down.

The first problem is that general-purpose systems optimize for coverage, not accountability. They are designed to be useful across many contexts, but enterprise leaders need assurance inside specific contexts. When a model influences access, approves a transaction, or recommends a policy decision, the organization needs traceability that generic assistants rarely provide on their own. In practice, the more consequential the workflow, the more important the why becomes.

The second problem is that generic systems tend to blur boundaries. A user can paste data into a prompt, but that does not mean the organization has consented to that data moving through the model. Security teams are not only worried about leakage; they are worried about uncontrolled action. Legal teams are not only worried about misinformation; they are worried about defensibility. That is why a black-box answer may be acceptable for marketing copy but unacceptable for regulated decisions.

Accountability Becomes the Core Feature

The enterprise version of AI is not “smart chat.” It is governed execution. That means the system needs to know what data was used, what permissions were applied, what the model did with the data, and how the output flowed into business processes. Microsoft’s Fabric governance and Purview guidance makes this explicit by centering audit, lineage, information protection, and policy-based monitoring as first-class capabilities (learn.microsoft.com).

That shift changes the buying decision. A generic assistant may be cheaper and easier to deploy, but if it cannot support compliance and traceability, it is not actually cheaper at scale. It just pushes cost into review, remediation, and risk management later.

Key consequences include:

- Manual review overhead rises when AI outputs cannot be traced.

- Security exceptions multiply when data pathways are unclear.

- Legal exposure grows when decisions cannot be defended.

- Pilot projects stall when governance teams are brought in too late.

- Trust erodes when users cannot explain how outputs were produced.
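The four things a governed system needs to know (what data was used, what permissions applied, what the model did, and where the output went) can be captured as one record per interaction. What follows is a minimal sketch of that idea, not a vendor schema: the `InteractionRecord` class, its field names, and the sample values are all hypothetical and do not correspond to any Microsoft Fabric or Purview format.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class InteractionRecord:
    """One auditable AI interaction (hypothetical schema, for illustration only)."""
    user_id: str
    model_id: str
    data_sources: tuple[str, ...]   # what data was used
    permissions: tuple[str, ...]    # what permissions were applied
    action: str                     # what the model did with the data
    downstream_target: str          # where the output flowed
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Sorted keys keep serialized records stable and easy to compare.
        return json.dumps(asdict(self), sort_keys=True)


# Sample values are invented for the example.
record = InteractionRecord(
    user_id="u-1001",
    model_id="invoice-classifier-v2",
    data_sources=("erp.invoices",),
    permissions=("finance.read",),
    action="classified invoice as utilities",
    downstream_target="accounts-payable-queue",
)
print(record.to_json())
```

Making the record immutable (`frozen=True`) is a deliberate choice in this sketch: an audit entry that can be edited after the fact is much harder to defend.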

Why Domain-Specific Models Matter

Domain-specific models change the enterprise risk equation because they reduce the ambiguity that makes general systems hard to govern. A model built for a narrow task can be tested against known scenarios, aligned to policy language, and validated against approved data sources. In other words, it becomes easier to prove what the system is supposed to do and easier to spot when it does something else.

This is not just a technical preference. It is a compliance strategy. Gartner’s April 2025 guidance on small task-specific models argues that response accuracy declines when general-purpose LLMs are forced into tasks that require specific business context, while smaller models offer quicker responses and lower compute costs. That combination matters because enterprise teams care about throughput, consistency, and control as much as they care about raw capability.

The practical advantage is that narrower models can be paired with tighter guardrails. A domain model can be bound to approved datasets, configured for a specific workflow, and monitored against a smaller set of expected failure modes. That makes it easier to integrate with policy engines, approval workflows, and recordkeeping systems. The result is not just better accuracy; it is better operational fit.

Traceability Is the Differentiator

Accuracy alone is no longer enough in enterprise AI. What organizations increasingly need is traceability: the ability to reconstruct the decision path after the fact. Microsoft’s Fabric documentation emphasizes lineage tracking and impact analysis, while Purview guidance shows how AI interactions can be captured, retained, reviewed, and exported for compliance purposes (learn.microsoft.com). Those are not decorative features. They are the backbone of defensible automation.

A domain-specific model can still fail, of course. But if the failure is constrained to a known business domain, the organization can detect it faster and fix it more systematically. That is a very different risk profile from a general assistant producing confident but unbounded output across unrelated tasks.

- Narrower scope means easier testing.

- Approved data sources reduce ambiguity.

- Clear policies reduce interpretation drift.

- Audit trails support post-incident review.

- Governance teams can sign off with more confidence.
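Reconstructing a decision path after the fact can be illustrated with a small append-only log. This is a sketch under stated assumptions: `TraceEvent`, `TraceLog`, the stage names, and the sample case are hypothetical, and the in-memory dictionary stands in for whatever durable audit store a real platform would provide.

```python
from collections import defaultdict
from typing import NamedTuple


class TraceEvent(NamedTuple):
    decision_id: str
    step: int
    stage: str      # e.g. "input", "policy_check", "model_output"
    detail: str


class TraceLog:
    """Append-only in-memory log (a stand-in for a real audit store)."""

    def __init__(self) -> None:
        self._events: dict[str, list[TraceEvent]] = defaultdict(list)

    def record(self, event: TraceEvent) -> None:
        self._events[event.decision_id].append(event)

    def reconstruct(self, decision_id: str) -> list[str]:
        # Return the decision path in step order, regardless of arrival order.
        events = sorted(self._events.get(decision_id, []), key=lambda e: e.step)
        return [f"{e.step}:{e.stage}:{e.detail}" for e in events]


log = TraceLog()
# Events are recorded out of order on purpose to show the reconstruction.
log.record(TraceEvent("case-42", 2, "policy_check", "policy HR-7 applied"))
log.record(TraceEvent("case-42", 1, "input", "source=hr.policies"))
log.record(TraceEvent("case-42", 3, "model_output", "routed to benefits team"))
for line in log.reconstruct("case-42"):
    print(line)
```

The point of the example is the lookup, not the storage: if every step carries the same decision identifier, a post-incident review becomes a query instead of an investigation.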

The Rise of Smaller Language Models

One of the most important shifts in enterprise AI is the growing acceptance that bigger is not automatically better. Smaller language models, or SLMs, are becoming attractive because they are often easier to deploy, easier to control, and cheaper to run at scale. IBM has repeatedly argued that smaller models can be faster, more cost-effective, and easier to place where the business actually needs them (ibm.com).

That matters because enterprises rarely need a model that can answer every question. They need models that answer their questions inside a constrained business process. If the use case is invoice classification, employee policy lookup, case routing, or compliance triage, a smaller model can be the right tool precisely because it is not trying to be universal.

The economics are part of the story, but not the whole story. Lower inference costs make it more realistic to move beyond pilots and into production. Lower latency improves user experience and supports real-time workflows. Smaller footprint options can also make deployment models more flexible, including in environments where data locality or sovereignty matters. Those advantages are not abstract; they directly affect how enterprises design architecture.

Why Fit-for-Purpose Beats Generality

The strategic logic is straightforward. Broad models are powerful, but power without tight operational boundaries creates friction. Narrower models can be trained or tuned around specific tasks, which makes them easier to validate and easier to govern. Gartner’s latest predictions go even further, forecasting that by 2027 organizations will use small task-specific models at volumes far exceeding general-purpose LLMs.

That does not mean general models disappear. It means they shift toward low-risk, high-creativity, or broad-assistance tasks. Meanwhile, production AI moves toward smaller and more domain-aware systems. The market is drawing a practical line between “helpful assistant” and “trusted business system,” and SLMs sit much closer to the latter.

A few enterprise advantages stand out:

- Lower inference cost improves ROI.

- Faster response times support operational workflows.

- Simpler deployment reduces infrastructure complexity.

- Tighter scope improves evaluation.

- Easier governance supports sign-off from risk teams.

Platforms Beat Point Tools

The big architectural lesson of enterprise AI is that workflows are systems, not prompts. A point tool can help with a single task, but it usually cannot manage the chain of events that surrounds the task. Enterprise value emerges from connected processes: identity verification, access checks, data routing, exception handling, logging, retention, and auditing. If those pieces are not linked, the AI system may be productive in isolation but fragile in practice.

That is why platforms are overtaking standalone tools. A platform can provide a control plane for policy, a data plane for trusted inputs, and an audit plane for accountability. Microsoft Fabric’s governance documentation is built around exactly that kind of holistic view, with sections for secure and compliant data handling, data estate management, trust and discovery, and monitoring and action (learn.microsoft.com). In other words, the platform is not just where AI lives; it is where governance lives too.

This is especially important in regulated sectors. A financial institution, for example, cannot treat loan automation as a loose collection of prompts. It needs a workflow with fixed checkpoints, data lineage, policy enforcement, and record retention. If a regulator asks how a decision was made, the organization needs a reconstruction path, not a guess.
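A workflow with fixed checkpoints can be sketched as an ordered list of checks, each of which leaves a record whether it passes or fails. The example below is illustrative only: the checkpoint names, the `run_workflow` function, and the 50,000 limit are hypothetical placeholders, not rules drawn from any regulator or lender.

```python
from typing import Any, Callable

Application = dict[str, Any]
Checkpoint = tuple[str, Callable[[Application], bool]]

# Hypothetical checkpoints; real ones would come from the institution's policy.
CHECKPOINTS: list[Checkpoint] = [
    ("identity_verified", lambda app: bool(app.get("identity_verified"))),
    ("data_lineage_recorded", lambda app: bool(app.get("lineage_id"))),
    ("policy_limit_respected", lambda app: float(app.get("amount", 0)) <= 50_000),
]


def run_workflow(app: Application) -> tuple[bool, list[str]]:
    """Run every checkpoint in fixed order and keep a record of each result."""
    record: list[str] = []
    for name, check in CHECKPOINTS:
        passed = check(app)
        record.append(f"{name}={'pass' if passed else 'fail'}")
        if not passed:
            return False, record  # stop at the first failed checkpoint
    return True, record


approved, trail = run_workflow(
    {"identity_verified": True, "lineage_id": "ln-9", "amount": 20_000}
)
print(approved, trail)
```

The returned trail is the reconstruction path in miniature: it shows which checks ran, in what order, and where a rejected application stopped.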

The Control Plane Changes the Game

A platform-based control plane lets enterprises define who can use which model, with what data, under what conditions, and with what logging requirements. That is a fundamentally different posture from enabling a chatbot and hoping users behave responsibly. Microsoft’s Purview guidance for Copilot in Fabric shows how organizations can use audit, retention policies, DSPM for AI, and eDiscovery to manage AI interactions in a structured way (learn.microsoft.com).

That is why platform thinking is winning. It does not just make AI more compliant; it makes AI operationally survivable. If the system can be monitored, reviewed, and adjusted centrally, it can be scaled without creating a new governance exception for every team.

- One governance layer covers many use cases.

- Audit and retention become built-in.

- Access and lineage can be standardized.

- Policy changes can propagate centrally.

- Teams move faster because the rules are known.
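The control-plane idea (who can use which model, with what data, and with what logging) reduces to a central policy lookup that every request passes through. The following is a rough sketch in Python under assumed names: `ModelPolicy`, `POLICIES`, `authorize`, and the roles and dataset identifiers are all hypothetical and are not part of any Purview or Fabric API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPolicy:
    """Hypothetical policy entry: who may use a model, with which data."""
    model_id: str
    allowed_roles: frozenset[str]
    allowed_datasets: frozenset[str]
    log_prompts: bool  # logging requirement attached to the model


# A one-entry approved catalog, invented for the example.
POLICIES = {
    "hr-policy-lookup": ModelPolicy(
        model_id="hr-policy-lookup",
        allowed_roles=frozenset({"hr_analyst", "hr_manager"}),
        allowed_datasets=frozenset({"hr.policies"}),
        log_prompts=True,
    ),
}


def authorize(role: str, model_id: str, datasets: set[str]) -> tuple[bool, str]:
    """Return (allowed, reason); unknown models are denied by default."""
    policy = POLICIES.get(model_id)
    if policy is None:
        return False, "model not in approved catalog"
    if role not in policy.allowed_roles:
        return False, "role not permitted for this model"
    extra = datasets - policy.allowed_datasets
    if extra:
        return False, f"unapproved datasets: {sorted(extra)}"
    reason = "allowed; prompts logged" if policy.log_prompts else "allowed"
    return True, reason


print(authorize("hr_analyst", "hr-policy-lookup", {"hr.policies"}))
print(authorize("hr_analyst", "hr-policy-lookup", {"finance.ledger"}))
```

Because the policy table lives in one place, changing a rule there changes it for every caller, which is the property the list above describes as central propagation.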

Compliance Is Now the Product

For years, enterprises treated compliance as overhead. In AI, that mindset is changing. Governance is no longer the thing that slows down adoption; it is becoming the condition that makes adoption possible. When compliance is embedded in the product, the AI system becomes easier to approve, easier to monitor, and easier to expand across departments.

Microsoft’s current documentation on Fabric and Purview is a good example of this shift. It emphasizes that managing AI interactions in Fabric requires enterprise-grade governance capabilities, including security posture management, audit, retention, and policy capture for prompts and responses (learn.microsoft.com). That is a meaningful change in posture. It says, in effect, that AI is no longer a side experiment; it is part of the enterprise control environment.

The implication is that vendors who can package trust as a feature will have an advantage. Buyers do not want to assemble governance from scratch across half a dozen tools if they can get it within a platform. They especially do not want to argue over responsibility when something goes wrong. The vendor that can say “here is the policy, here is the log, here is the lineage, and here is the retention path” is offering more than software. It is offering operational confidence.

Governance Speeds Adoption

This is one of the most counterintuitive truths in enterprise AI: more governance can mean more velocity. Once controls are standardized, teams do not have to negotiate exceptions every time they want to use AI. That lowers friction for legal review, security review, and compliance approval. It also reduces the chance that each department invents its own unsafe workaround.

This is why AI governance should not be viewed as a tax. It is part of the product experience. Organizations that internalize that idea are more likely to move from experimentation to scaled deployment.

Important enterprise benefits include:

- Faster approvals from risk and legal teams.

- Lower audit burden because records already exist.

- Reduced shadow AI because approved paths are available.

- Clearer accountability when incidents occur.

- Stronger user confidence in day-to-day adoption.

What This Means for IT Leaders

For CIOs, CISOs, and data leaders, the platform shift means the evaluation criteria for AI projects must change. The right question is not whether a model can impress in a sandbox. The right question is whether it can operate inside the organization’s existing rules for identity, access, retention, monitoring, and review. If the answer is no, the use case may still be interesting, but it is not ready for the production line.

That means AI architecture should be designed like any other critical enterprise service. IT leaders need clear policy layers, approved model catalogs, trusted data domains, and observable runtime behavior. Microsoft’s Fabric governance stack and Purview controls show what this looks like in practice: manage access, track lineage, apply retention, and monitor interactions rather than treating AI as a standalone toy project (learn.microsoft.com).

The leadership challenge is organizational as much as technical. Different teams care about different failure modes, and AI programs stall when those concerns are handled sequentially rather than in parallel. Security wants boundaries, legal wants defensibility, compliance wants retention, and business owners want speed. A platform approach gives those groups a shared operating surface.

Enterprise vs. Consumer Impact

Consumer AI still rewards openness, breadth, and ease of use. Enterprise AI rewards containment, governance, and alignment to policy. Those two markets will continue to overlap, but they are already diverging in architecture and expectations. The consumer version of AI can tolerate occasional improvisation; the enterprise version cannot.

That divergence is likely to deepen. The more enterprises rely on AI for operational decisions, the more they will favor systems that are auditable by design. In that world, platform vendors have a strong advantage because they can unify data, policy, and workflow in one governed environment.

- Consumer AI prioritizes convenience.

- Enterprise AI prioritizes accountability.

- Consumer models can be broad.

- Enterprise models must be defensible.

- Consumer risk is personal.

- Enterprise risk is systemic.

Competitive Implications

The shift from assistants to platforms has clear competitive consequences. Vendors that sell isolated AI features will face increasing pressure to prove how those features fit into broader governance frameworks. By contrast, vendors with data platforms, identity integration, compliance tooling, and workflow orchestration can position AI as part of a larger enterprise operating model. That is a more durable story.

Microsoft is particularly well placed here because Fabric, Purview, and related Copilot capabilities create a path from data governance to AI governance inside the same ecosystem (learn.microsoft.com). IBM is pushing a similar narrative from the model side, emphasizing smaller models, structured programming, and enterprise-grade runtime controls (ibm.com). The competition is no longer just about who has the smartest model. It is about who can make AI governable enough to use at scale.

That also means startups will need sharper differentiation. Point solutions can still win in specialized niches, but they will need clear integration stories and defensible control assumptions. In a market increasingly defined by compliance and orchestration, “nice demo” is not enough.

The New Buying Criteria

Enterprise buyers are likely to ask more pointed questions than they did a year ago. Can the model be scoped to a domain? Can the data paths be audited? Can prompts and responses be retained where required? Can the system be reviewed after an incident? If the answer to any of those questions is fuzzy, adoption slows.

The vendor stack that answers those questions most convincingly will gain share.

- Governance integration becomes a sales advantage.

- Data lineage becomes a differentiation point.

- Retention and audit become part of product design.

- Narrow models become easier to justify.

- Platform ecosystems become stickier over time.
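The four buying questions above lend themselves to a simple checklist that surfaces whatever a vendor has not answered with a clear yes. This is one possible way to encode it, offered as a sketch: the dictionary keys and the `open_questions` function are hypothetical, and treating a missing answer as a "no" is an assumption made for the example.

```python
# The four questions from the text, keyed by short hypothetical identifiers.
CRITERIA = {
    "domain_scoping": "Can the model be scoped to a domain?",
    "auditable_data_paths": "Can the data paths be audited?",
    "prompt_retention": "Can prompts and responses be retained where required?",
    "incident_review": "Can the system be reviewed after an incident?",
}


def open_questions(vendor_answers: dict[str, bool]) -> list[str]:
    """Return the questions a vendor has not answered with a clear yes."""
    return [
        question
        for key, question in CRITERIA.items()
        if not vendor_answers.get(key, False)  # a missing answer counts as fuzzy
    ]


# Invented answers for an imaginary vendor.
answers = {
    "domain_scoping": True,
    "auditable_data_paths": True,
    "prompt_retention": False,
}
for question in open_questions(answers):
    print("Unresolved:", question)
```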

Strengths and Opportunities

The move toward governed platforms is not just a defensive response to risk; it also unlocks practical business value. Organizations that get this right can scale AI faster because they spend less time reinventing controls for every project, and more time applying AI to real workflows. That makes the platform model both safer and, ultimately, more productive.

- Standardized governance reduces duplicate effort across teams.

- Central policy enforcement makes approvals easier.

- Traceable AI actions simplify audits and incident response.

- Domain-specific models can improve accuracy in narrow workflows.

- Lower-cost models can expand production use cases.

- Platform integration supports broader automation strategies.

- Operational trust increases user adoption.

Risks and Concerns

The platform shift also carries real risks. The most obvious is vendor lock-in: once AI governance, data control, and workflow orchestration are tightly bound to a single ecosystem, moving becomes harder. There is also a risk that organizations mistake platform adoption for governance maturity, when in reality the tools are only as strong as the policies and operating discipline behind them.

- Vendor lock-in can reduce architectural flexibility.

- False confidence can arise from buying tools without changing process.

- Over-specialization may limit a model’s usefulness outside one domain.

- Policy complexity can slow innovation if not managed well.

- Shadow AI may persist if approved paths are too rigid.

- Data quality issues can still undermine model performance.

- Accountability gaps remain if ownership is unclear.

Looking Ahead

The next phase of enterprise AI will likely be defined by orchestration, not novelty. As platforms absorb governance, audit, and policy enforcement, the center of innovation will move from prompt design to workflow design. That is a healthier place for enterprise technology to live, because workflows are measurable, reviewable, and improvable.

We should also expect a more explicit split between general and specialized AI. General assistants will remain useful for ideation and low-risk productivity, but the workloads that matter most to the business will increasingly move toward smaller, domain-aware models running inside governed platforms. That will reshape budgets, procurement, and architecture decisions over the next several planning cycles.

- Expect more domain-specific AI in regulated workflows.

- Expect smaller models to gain share in enterprise deployments.

- Expect governance to become a procurement requirement.

- Expect platforms to replace point tools in core workflows.

- Expect data lineage and audit to become standard evaluation criteria.

The enterprise AI market is splitting into two lanes, and that split is overdue. Generic assistants will keep their place at the edges of work, where convenience matters most. But in the middle of the enterprise, where identity, money, data, and regulation intersect, the future belongs to governed platforms that make AI accountable enough to trust.

Source: Petri IT Knowledgebase Why Enterprise AI Is Moving to Governed Platforms