A May 16, 2026 post on royaldutchshellplc.com republished a WindowsForum-style analysis of John Donovan’s late-December AI experiment, in which Shell-related archive material was fed to multiple chatbots and the outputs were paired with a Microsoft Copilot defamation-risk memo. The episode is not just another internet feud with a chatbot cameo. It is a compact demonstration of how old archives become new evidence engines, how satire becomes machine-readable input, and how legal-sounding AI output can acquire authority it has not earned.

The hard lesson is that generative AI does not merely summarize disputes. It changes their operating conditions. A persistent archive, a prompt, and a model’s appetite for narrative coherence can turn decades of contested material into fresh media artifacts at industrial speed.

John Donovan’s long campaign against Shell has always depended on persistence. The dispute began in the commercial and legal fights of the 1990s, then hardened into a web of sites collecting litigation material, internal documents, leaked correspondence, public commentary, and allegations that range from readily checkable to fiercely disputed. In the pre-AI web, that kind of archive was primarily a search result, a source file, or a reputational irritant.

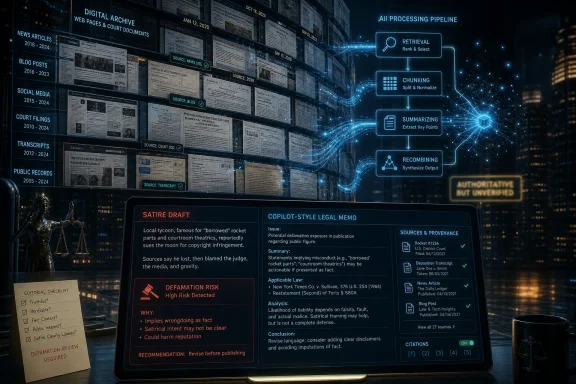

In the AI web, it becomes something more active. A dense archive is not just read by people; it is retrieved, chunked, embedded, summarized, and recombined. The corpus becomes an ingredient in future outputs, especially when a user deliberately packages it into a dossier and asks competing systems to interpret it.

That is why Donovan’s late-December experiment matters beyond the Shell dispute itself. The point was not simply that one critic used AI to write satire about a multinational company. The point was that the critic then published the machinery of the exercise: prompts, model responses, comparative outputs, and an AI-generated legal assessment.

The result is a public RAG experiment hiding in plain sight. Retrieval-augmented generation, or RAG, is often discussed in enterprise language: knowledge bases, copilots, support workflows, document grounding. Donovan’s use case is messier and more politically charged. It asks what happens when the “knowledge base” is a hostile archive assembled over decades by one side of a dispute.

Satire traditionally survives because readers understand its mode. A grotesque corporate voice, absurd exaggeration, or theatrical roleplay signals that the author is commenting rather than reporting. Courts in common-law systems have often treated that distinction seriously, especially where the target is powerful and the topic is a matter of public concern.

But generative AI complicates the boundary. Models are good at producing the surface features of satire while also inventing factual connective tissue. A human satirist may know exactly which claims are jokes and which factual anchors are documented. A chatbot often does not. It can slide from parody into assertion without warning, especially when the prompt contains a mixture of verified records, leaked material, redacted documents, and claims that have not been independently tested.

That mixture is the whole story. Donovan’s archive contains material that can be checked against legal records and public proceedings, including the 2005 WIPO domain-name decision that rejected Shell’s complaint over royaldutchshellplc.com. It also contains allegations and interpretive claims whose provenance is harder to establish. To a model, those categories may arrive as adjacent text. To a lawyer or editor, they are different planets.

That creates a strange media object. One machine helps generate the provocation; another machine assesses the provocation’s legal posture; a human publisher presents the loop as evidence of both expressive legitimacy and technological risk. The model is no longer just a drafting tool. It is cast as critic, quasi-lawyer, and witness.

There is an obvious problem with that role. A Copilot answer about defamation may be useful as a checklist, but it is not legal advice, not privileged, not backed by professional duties, and not proof that Microsoft has “cleared” anything. The danger is authority laundering: a plausible legal memo transforms from machine output into rhetorical armor.

This matters because legal prose has a special social force. A confident model can cite broad principles like honest opinion, fair comment, public interest, parody, and rhetorical hyperbole in a way that sounds reassuring. But without the exact evidence trail, jurisdictional analysis, publication context, and human legal judgment, the memo is only a starting point.

The public transcript may show what Copilot said. It does not show everything that would make the memo auditable: retrieval snippets, ranking decisions, source weighting, model version, hidden system instructions, or whether any relevant contrary material was omitted. That absence does not make the transcript fake. It makes it limited.

In England and Wales, the Defamation Act 2013 gives defendants a statutory honest-opinion defense where the statement is opinion, indicates its basis, and is one an honest person could have held on the facts available at publication. The Act also recognizes a public-interest defense. These defenses can be powerful, but they are not magic words.

In the United States, First Amendment doctrine gives wide berth to parody, opinion, and rhetorical hyperbole, especially on matters of public concern. Yet the Supreme Court’s Milkovich line of cases remains the trapdoor: calling something “opinion” does not protect it if the statement implies an objectively verifiable and false assertion of fact.

That distinction maps almost perfectly onto AI risk. “Shell behaves like a geopolitical octopus” is the kind of hyperbolic insult readers understand as commentary. “A named person died because of X” is a factual claim, even if generated inside a satirical frame. The former may bruise; the latter demands proof.

This is where AI makes editors nervous. A model’s most dangerous output is often not the flamboyant joke. It is the precise, plausible, emotionally charged detail slipped into the middle of the joke.

Causes of death are sensitive, verifiable, and reputationally loaded. They are also narratively tempting. A model trying to write a compelling account of a long corporate feud may reach for a dramatic causal link because it makes the story feel complete. The problem is that journalism is not a completion engine.

This is the failure mode that should worry publishers most. AI hallucinations are often discussed as absurd mistakes: fake cases, invented quotes, nonexistent studies. But the more dangerous hallucination is not absurd. It is plausible. It appears where the reader expects an explanatory bridge.

Once published, that bridge can become infrastructure. A false claim may be scraped, summarized, reposted, debated, corrected, and re-summarized. Even the correction can preserve the original association in search and model memory. The machine-generated error becomes part of the dispute’s documentary weather.

That works well enough when the corpus is a software manual, a ticket database, or a set of internal procedures. It becomes much harder when the corpus is adversarial, uneven, polemical, and partly unverifiable. The model may not know whether it is reading a court filing, a leaked email, a satirical riff, an anonymous allegation, or a decades-old claim repeated across mirrored sites.

The technical term “grounding” can therefore mislead. Grounded in what? A retrieved passage is not the same thing as a verified fact. A model can be faithfully grounded in a document that is itself wrong, incomplete, misleading, or one-sided.

That does not mean contested archives should be excluded from AI systems. It means provenance must travel with the output. If a model says a claim is based on a court document, the reader needs to know which court document. If it is based on a leaked email, the reader needs to know that too. If it is based on an allegation from the archive itself, that distinction should be visible in the sentence.

AI changes that calculation. Silence may still be strategically wise in a human news cycle, but it is less reliable in a machine-mediated one. If the public web contains a large hostile archive and only scattered official responses, retrieval systems may find the archive more easily than the rebuttal.

That does not mean corporations should answer every allegation or threaten every critic. Heavy-handed takedowns can still backfire, and the Streisand effect has not been repealed. But the modern corporate posture needs a machine-readable public record: concise timelines, documentary rebuttals, official statements, and corrections that models can retrieve.

For Shell or any other large institution, the task is not to “win” an argument with a chatbot. It is to ensure that when automated systems summarize a dispute, they encounter authoritative counterweights. In the AI era, reputation management is partly information architecture.

The right standard is not “never use AI.” It is “never let AI be the last unexamined step before publication.” Every reputationally significant claim needs a documentary anchor outside the model. Every sensitive assertion needs an editor willing to ask whether the sentence would survive without the chatbot’s confidence.

The same rule applies to AI-generated legal analysis. A published Copilot memo can be interesting as an artifact. It can illuminate how consumer AI systems reason about risk. But if a publisher intends to rely on that analysis operationally, a human lawyer must review it.

There is a broader platform lesson here too. AI vendors should make it easier to export prompts, model versions, retrieval context, and timestamps. If vendors sell these tools as assistants for knowledge work, they should not treat auditability as an enterprise luxury. In public disputes, auditability is the difference between accountability and fog.

Still, Microsoft and other AI vendors cannot shrug off the implications. Their products are being used to draft, evaluate, and legitimize public claims. Users will publish outputs. Readers will misunderstand what those outputs mean. The line between “assistant” and “authority” will blur fastest in areas where the prose sounds professional: law, medicine, finance, cybersecurity, and journalism.

The product challenge is not merely to add disclaimers. Users have learned to read past disclaimers. What matters is behavior: hedging sensitive claims, refusing unsupported specifics, showing source provenance, separating verified documents from allegations, and warning when a corpus is adversarial or mixed in quality.

This is especially relevant to WindowsForum’s core audience. Sysadmins and IT pros are being asked to deploy copilots into document repositories, ticket systems, compliance workflows, and corporate knowledge bases. The Donovan case is a warning from the public web: if you do not classify source quality before retrieval, the model will do the classification implicitly, badly, and invisibly.

One model reportedly embellished. Another corrected. Another hedged. That spread matters. It demonstrates that “AI says” is not a category of evidence. Different systems, prompts, policies, retrieval methods, and model incentives produce different accounts from the same dossier.

For journalists, this suggests a practical method. When using AI against contested material, comparison can reveal risk. If one model makes a dramatic claim and others refuse it, the claim should move to the top of the verification queue. If all models repeat the same claim, that still does not make it true, but it may indicate a widely repeated source that needs tracing.

For readers, the side-by-side format teaches skepticism without requiring technical expertise. It shows that fluency is not reliability. The polished answer and the cautious answer may be responding to the same uncertainty with different personalities.

Some will do it to surface neglected facts. Some will do it to launder allegations. Some will do both at once. The difference will depend less on whether AI is involved than on whether provenance, editorial discipline, and correction mechanisms are built into the workflow.

The uncomfortable truth is that AI rewards the organized archive. A persistent critic with a well-indexed site may become more visible to models than a multinational with fragmented PDFs, cautious statements, and buried legal filings. That inversion may be healthy in some cases and hazardous in others. Either way, it is real.

The answer is not to suppress archives or neuter satire. Satire is part of public accountability, and archives can preserve material that powerful institutions would rather forget. The answer is to prevent models from flattening every retrieved fragment into the same evidentiary plane.

The future of AI-assisted public argument will not be decided by whether machines can write sharp copy; they already can. It will be decided by whether publishers, platforms, and institutions build habits that keep machine fluency subordinate to human verification. The archive is now an input layer, the chatbot is now a narrator, and the editor’s oldest job — deciding what is known, what is alleged, and what is merely too good a line to trust — has become more important, not less.

Source: Royal Dutch Shell Plc .com WindowsForum.com: AI Satire and Defamation Risk in the Shell Archive: A Public RAG Experiment

The hard lesson is that generative AI does not merely summarize disputes. It changes their operating conditions. A persistent archive, a prompt, and a model’s appetite for narrative coherence can turn decades of contested material into fresh media artifacts at industrial speed.

The Archive Became the Instrument

The Archive Became the Instrument

John Donovan’s long campaign against Shell has always depended on persistence. The dispute began in the commercial and legal fights of the 1990s, then hardened into a web of sites collecting litigation material, internal documents, leaked correspondence, public commentary, and allegations that range from readily checkable to fiercely disputed. In the pre-AI web, that kind of archive was primarily a search result, a source file, or a reputational irritant.

In the AI web, it becomes something more active. A dense archive is not just read by people; it is retrieved, chunked, embedded, summarized, and recombined. The corpus becomes an ingredient in future outputs, especially when a user deliberately packages it into a dossier and asks competing systems to interpret it.

That is why Donovan’s late-December experiment matters beyond the Shell dispute itself. The point was not simply that one critic used AI to write satire about a multinational company. The point was that the critic then published the machinery of the exercise: prompts, model responses, comparative outputs, and an AI-generated legal assessment.

The result is a public RAG experiment hiding in plain sight. Retrieval-augmented generation, or RAG, is often discussed in enterprise language: knowledge bases, copilots, support workflows, document grounding. Donovan’s use case is messier and more politically charged. It asks what happens when the “knowledge base” is a hostile archive assembled over decades by one side of a dispute.

Satire Was the Bait, Provenance Was the Hook

The satirical piece at the center of the episode reportedly used persona, exaggeration, sarcasm, and an explicit disclaimer. Those are not decorative details. In defamation law, context is often the difference between a protected joke and an actionable factual allegation.

Satire traditionally survives because readers understand its mode. A grotesque corporate voice, absurd exaggeration, or theatrical roleplay signals that the author is commenting rather than reporting. Courts in common-law systems have often treated that distinction seriously, especially where the target is powerful and the topic is a matter of public concern.

But generative AI complicates the boundary. Models are good at producing the surface features of satire while also inventing factual connective tissue. A human satirist may know exactly which claims are jokes and which factual anchors are documented. A chatbot often does not. It can slide from parody into assertion without warning, especially when the prompt contains a mixture of verified records, leaked material, redacted documents, and claims that have not been independently tested.

That mixture is the whole story. Donovan’s archive contains material that can be checked against legal records and public proceedings, including the 2005 WIPO domain-name decision that rejected Shell’s complaint over royaldutchshellplc.com. It also contains allegations and interpretive claims whose provenance is harder to establish. To a model, those categories may arrive as adjacent text. To a lawyer or editor, they are different planets.

The Copilot Memo Shows the Danger of Legal-Sounding Machines

The most novel part of the experiment was not the AI-assisted satire. It was the second-order move: asking Microsoft Copilot to evaluate the satire’s defamation risk, then publishing that response as part of the public record.

That creates a strange media object. One machine helps generate the provocation; another machine assesses the provocation’s legal posture; a human publisher presents the loop as evidence of both expressive legitimacy and technological risk. The model is no longer just a drafting tool. It is cast as critic, quasi-lawyer, and witness.

There is an obvious problem with that role. A Copilot answer about defamation may be useful as a checklist, but it is not legal advice, not privileged, not backed by professional duties, and not proof that Microsoft has “cleared” anything. The danger is authority laundering: a plausible legal memo transforms from machine output into rhetorical armor.

This matters because legal prose has a special social force. A confident model can cite broad principles like honest opinion, fair comment, public interest, parody, and rhetorical hyperbole in a way that sounds reassuring. But without the exact evidence trail, jurisdictional analysis, publication context, and human legal judgment, the memo is only a starting point.

The public transcript may show what Copilot said. It does not show everything that would make the memo auditable: retrieval snippets, ranking decisions, source weighting, model version, hidden system instructions, or whether any relevant contrary material was omitted. That absence does not make the transcript fake. It makes it limited.

Defamation Law Still Cares About the Reader, Not the Prompt

The legal center of gravity remains stubbornly human. The key question is not whether a model thought the piece was satire. It is whether a reasonable reader would understand the challenged statements as non-literal commentary or as provable factual assertions.

In England and Wales, the Defamation Act 2013 gives defendants a statutory honest-opinion defense (section 3) where the statement is opinion, indicates its basis, and is one an honest person could have held on the facts available at publication. The Act also provides a public-interest defense (section 4) where the statement concerns a matter of public interest and the defendant reasonably believed publishing it was in the public interest. These defenses can be powerful, but they are not magic words.

In the United States, First Amendment doctrine gives wide berth to parody, opinion, and rhetorical hyperbole, especially on matters of public concern. Yet the Supreme Court’s 1990 decision in Milkovich v. Lorain Journal Co. remains the trapdoor: calling something “opinion” does not protect it if the statement implies an objectively verifiable and false assertion of fact.

That distinction maps almost perfectly onto AI risk. “Shell behaves like a geopolitical octopus” is the kind of hyperbolic insult readers understand as commentary. “A named person died because of X” is a factual claim, even if generated inside a satirical frame. The former may bruise; the latter demands proof.

This is where AI makes editors nervous. A model’s most dangerous output is often not the flamboyant joke. It is the precise, plausible, emotionally charged detail slipped into the middle of the joke.

The Hallucination Is Not a Bug in the Story; It Is the Story

According to Donovan’s published comparison, one assistant attributed a cause of death to a family member in a way that other assistant outputs treated more cautiously or corrected against obituary material. That is exactly the sort of detail that converts an AI experiment into a defamation and editorial-risk case study.

Causes of death are sensitive, verifiable, and reputationally loaded. They are also narratively tempting. A model trying to write a compelling account of a long corporate feud may reach for a dramatic causal link because it makes the story feel complete. The problem is that journalism is not a completion engine.

This is the failure mode that should worry publishers most. AI hallucinations are often discussed as absurd mistakes: fake cases, invented quotes, nonexistent studies. But the more dangerous hallucination is not absurd. It is plausible. It appears where the reader expects an explanatory bridge.

Once published, that bridge can become infrastructure. A false claim may be scraped, summarized, reposted, debated, corrected, and re-summarized. Even the correction can preserve the original association in search and model memory. The machine-generated error becomes part of the dispute’s documentary weather.

RAG Makes Old Disputes Newly Volatile

For WindowsForum readers used to enterprise AI, the Donovan case is a reminder that retrieval systems inherit the politics of their source material. A RAG pipeline does not magically convert a corpus into truth. It retrieves passages that appear relevant, then asks a model to synthesize them into an answer.

That works well enough when the corpus is a software manual, a ticket database, or a set of internal procedures. It becomes much harder when the corpus is adversarial, uneven, polemical, and partly unverifiable. The model may not know whether it is reading a court filing, a leaked email, a satirical riff, an anonymous allegation, or a decades-old claim repeated across mirrored sites.

The technical term “grounding” can therefore mislead. Grounded in what? A retrieved passage is not the same thing as a verified fact. A model can be faithfully grounded in a document that is itself wrong, incomplete, misleading, or one-sided.

That does not mean contested archives should be excluded from AI systems. It means provenance must travel with the output. If a model says a claim is based on a court document, the reader needs to know which court document. If it is based on a leaked email, the reader needs to know that too. If it is based on an allegation from the archive itself, that distinction should be visible in the sentence.
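To make the grounding point concrete, here is a minimal Python sketch, assuming a toy corpus and a naive word-overlap ranker in place of the embedding search a real RAG system would use. The `Passage` type, the source categories, and every identifier in the corpus are hypothetical. It shows two things: relevance ranking alone can put an archive allegation above a court record, and a provenance label can be attached to each passage so it travels to the model and, ideally, to the reader.

```python
from dataclasses import dataclass

# Hypothetical source categories; a real pipeline needs its own taxonomy.
SOURCE_LABELS = {
    "court_record": "court record",
    "leaked_document": "leaked document (unverified)",
    "archive_allegation": "allegation from the archive",
    "satire": "satire",
}


@dataclass(frozen=True)
class Passage:
    text: str
    source_id: str    # e.g. a case reference or URL (placeholder values below)
    source_type: str  # expected to be a key of SOURCE_LABELS


def tokens(s: str) -> set[str]:
    """Lowercased words with surrounding punctuation removed."""
    words = (w.strip(".,;:!?\"'").lower() for w in s.split())
    return {w for w in words if w}


def retrieve(query: str, corpus: list[Passage], k: int = 2) -> list[Passage]:
    """Rank passages by word overlap with the query.

    The score measures relevance only; nothing here checks whether a passage is true.
    """
    q = tokens(query)
    return sorted(corpus, key=lambda p: len(q & tokens(p.text)), reverse=True)[:k]


def build_context(passages: list[Passage]) -> str:
    """Prefix each passage with its provenance label before it reaches the model."""
    lines = []
    for p in passages:
        label = SOURCE_LABELS.get(p.source_type, "unclassified source")
        lines.append(f"[{label}: {p.source_id}] {p.text}")
    return "\n".join(lines)


corpus = [
    Passage("The panel denied the complaint over the domain name.", "case-001", "court_record"),
    Passage("The company secretly monitored the critic.", "archive-page-17", "archive_allegation"),
    Passage("Routine pump maintenance schedule.", "manual-3", "manual"),
]

hits = retrieve("Did the company monitor the critic?", corpus)
context = build_context(hits)
# The allegation ranks first because it shares more words with the query.
print(context)
```

The design choice is to make provenance part of the text the model sees rather than metadata that is dropped before generation. Whether a given model then keeps the label in its answer still has to be checked by a human.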

Corporate Silence Is Becoming a Worse Default

Large companies have historically had good reasons to ignore persistent critics. Litigation can amplify. Public rebuttals can dignify claims. The safest approach often looked like selective engagement, private pressure, and a thick skin.

AI changes that calculation. Silence may still be strategically wise in a human news cycle, but it is less reliable in a machine-mediated one. If the public web contains a large hostile archive and only scattered official responses, retrieval systems may find the archive more easily than the rebuttal.

That does not mean corporations should answer every allegation or threaten every critic. Heavy-handed takedowns can still backfire, and the Streisand effect has not been repealed. But the modern corporate posture needs a machine-readable public record: concise timelines, documentary rebuttals, official statements, and corrections that models can retrieve.

For Shell or any other large institution, the task is not to “win” an argument with a chatbot. It is to ensure that when automated systems summarize a dispute, they encounter authoritative counterweights. In the AI era, reputation management is partly information architecture.
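One way to build the kind of machine-readable record described above is a small file of structured entries, each pairing a disputed claim with a dated response and pointers to primary documents. The sketch below assumes a hypothetical `RebuttalEntry` schema serialized as JSON Lines; it is not an existing standard, and the claim text and URL are placeholders.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass
class RebuttalEntry:
    # Hypothetical schema for illustration; not an existing standard.
    claim: str                  # the allegation as it circulates publicly
    response: str               # the institution's concise position
    response_date: str          # ISO 8601 date, e.g. "2026-01-15"
    primary_sources: list[str]  # links or identifiers for supporting documents

    def validate(self) -> None:
        date.fromisoformat(self.response_date)  # raises ValueError if malformed
        if not self.primary_sources:
            raise ValueError(f"no primary source for claim: {self.claim!r}")


def to_jsonl(entries: list[RebuttalEntry]) -> str:
    """Validate every entry, then emit one JSON object per line."""
    for entry in entries:
        entry.validate()
    return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in entries)


entries = [
    RebuttalEntry(
        claim="Example allegation text",
        response="Example one-paragraph response",
        response_date="2026-01-15",
        primary_sources=["https://example.com/documents/decision.pdf"],
    )
]
print(to_jsonl(entries))
```

Refusing entries without a primary source is the point of the sketch: a rebuttal that a retrieval system can find is more useful when it also points to a document that can be checked.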

Publishers Need Audit Trails, Not Vibes

The Donovan experiment also exposes a gap in newsroom practice. Many publications now use AI somewhere in the editorial process, but few have mature procedures for preserving prompts, outputs, retrieved context, model identity, and human edits. That is tolerable for low-risk productivity work. It is reckless for allegations involving companies, living people, deaths, crimes, medical matters, or covert conduct.

The right standard is not “never use AI.” It is “never let AI be the last unexamined step before publication.” Every reputationally significant claim needs a documentary anchor outside the model. Every sensitive assertion needs an editor willing to ask whether the sentence would survive without the chatbot’s confidence.

The same rule applies to AI-generated legal analysis. A published Copilot memo can be interesting as an artifact. It can illuminate how consumer AI systems reason about risk. But if a publisher intends to rely on that analysis operationally, a human lawyer must review it.

There is a broader platform lesson here too. AI vendors should make it easier to export prompts, model versions, retrieval context, and timestamps. If vendors sell these tools as assistants for knowledge work, they should not treat auditability as an enterprise luxury. In public disputes, auditability is the difference between accountability and fog.
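As a rough illustration of what such an audit trail could capture, the sketch below records the model name, prompt, retrieved context, output, human edits, and a UTC timestamp for each AI-assisted step, then links the records in a SHA-256 hash chain so that later edits to a stored record are detectable. The `AIAuditRecord` fields, the model name, and the chaining scheme are assumptions for illustration, not any vendor's export format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AIAuditRecord:
    # Hypothetical fields; adapt to whatever the tools in use can actually export.
    model_name: str
    prompt: str
    retrieved_context: list[str]
    output: str
    human_edits: str = ""
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def chain(records: list[AIAuditRecord]) -> list[dict]:
    """Serialize records so each line embeds the hash of the line before it."""
    prev, log = GENESIS, []
    for r in records:
        line = json.dumps({"record": asdict(r), "prev": prev}, sort_keys=True)
        prev = hashlib.sha256(line.encode("utf-8")).hexdigest()
        log.append({"line": line, "hash": prev})
    return log


def verify(log: list[dict]) -> bool:
    """Return False if any line was edited or the chain of previous hashes is broken."""
    prev = GENESIS
    for entry in log:
        if json.loads(entry["line"])["prev"] != prev:
            return False
        if hashlib.sha256(entry["line"].encode("utf-8")).hexdigest() != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


r1 = AIAuditRecord("example-model", "Summarize the dossier", ["passage 1"], "draft A")
r2 = AIAuditRecord("example-model", "Assess defamation risk", ["draft A"], "memo text",
                   human_edits="Removed unsupported claim")
log = chain([r1, r2])
print(verify(log))  # True

tampered = [{**log[0], "line": log[0]["line"].replace("draft A", "draft B")}] + log[1:]
print(verify(tampered))  # False
```

One limitation worth stating: a chain like this cannot detect deletion of the newest entries unless the latest hash is also stored somewhere else, such as a separate system or a published digest.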

Microsoft’s Role Is Smaller Than the Headline, and Bigger Than It Looks

Because Copilot appears in the experiment, Microsoft becomes part of the story. But it would be wrong to frame this as Microsoft issuing a legal blessing. A consumer-facing or general-purpose assistant producing a defamation-risk discussion is not the same thing as a corporate legal opinion.

Still, Microsoft and other AI vendors cannot shrug off the implications. Their products are being used to draft, evaluate, and legitimize public claims. Users will publish outputs. Readers will misunderstand what those outputs mean. The line between “assistant” and “authority” will blur fastest in areas where the prose sounds professional: law, medicine, finance, cybersecurity, and journalism.

The product challenge is not merely to add disclaimers. Users have learned to read past disclaimers. What matters is behavior: hedging sensitive claims, refusing unsupported specifics, showing source provenance, separating verified documents from allegations, and warning when a corpus is adversarial or mixed in quality.

This is especially relevant to WindowsForum’s core audience. Sysadmins and IT pros are being asked to deploy copilots into document repositories, ticket systems, compliance workflows, and corporate knowledge bases. The Donovan case is a warning from the public web: if you do not classify source quality before retrieval, the model will do the classification implicitly, badly, and invisibly.
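Classifying source quality before retrieval can start as simply as tagging each document at ingestion and checking the mix of tiers in whatever the retriever returns. The tiers, document-type tags, and warning rules below are hypothetical; a real deployment would need its own taxonomy and human review of the tags.

```python
from enum import IntEnum


class Tier(IntEnum):
    # Hypothetical tiers; lower numbers mean stronger documentary footing.
    PRIMARY_RECORD = 1      # court filings, tribunal decisions
    OFFICIAL_STATEMENT = 2  # statements issued by a party on the record
    REPORTING = 3           # independent journalism
    ALLEGATION = 4          # leaks, anonymous claims, archive commentary
    SATIRE = 5
    UNKNOWN = 6


# Hypothetical mapping from a document-type tag assigned at ingestion.
RULES = {
    "court_filing": Tier.PRIMARY_RECORD,
    "tribunal_decision": Tier.PRIMARY_RECORD,
    "press_release": Tier.OFFICIAL_STATEMENT,
    "news_article": Tier.REPORTING,
    "leaked_email": Tier.ALLEGATION,
    "anonymous_claim": Tier.ALLEGATION,
    "satire": Tier.SATIRE,
}


def classify(doc_type: str) -> Tier:
    return RULES.get(doc_type, Tier.UNKNOWN)


def quality_warnings(retrieved_doc_types: list[str]) -> list[str]:
    """Warnings to show alongside an answer built from these documents."""
    if not retrieved_doc_types:
        return ["nothing retrieved"]
    tiers = {classify(t) for t in retrieved_doc_types}
    warnings = []
    if Tier.UNKNOWN in tiers:
        warnings.append("unclassified sources present")
    if min(tiers) >= Tier.ALLEGATION:
        warnings.append("no primary record or official statement supports this answer")
    elif max(tiers) >= Tier.ALLEGATION:
        warnings.append("answer mixes record-backed sources with allegations or satire")
    return warnings


print(quality_warnings(["court_filing", "leaked_email"]))
print(quality_warnings(["satire", "anonymous_claim"]))
```

The classification happens once, explicitly, where a person can audit it, instead of being left to the model to infer from tone and formatting at answer time.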

The Real Innovation Is the Side-by-Side Publication

The strongest defense of Donovan’s experiment is that it made the failure visible. A single AI-generated satire would be easy to dismiss as provocation. A single hallucination would be easy to treat as anecdote. The comparative publication of multiple model outputs is more useful because it shows divergence.

One model reportedly embellished. Another corrected. Another hedged. That spread matters. It demonstrates that “AI says” is not a category of evidence. Different systems, prompts, policies, retrieval methods, and model incentives produce different accounts from the same dossier.

For journalists, this suggests a practical method. When using AI against contested material, comparison can reveal risk. If one model makes a dramatic claim and others refuse it, the claim should move to the top of the verification queue. If all models repeat the same claim, that still does not make it true, but it may indicate a widely repeated source that needs tracing.
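The comparison method can be sketched as a triage over claims extracted from each model's output. This assumes the hard step, extracting and normalizing claims into comparable strings, has already been done by an editor or another tool; the model names and claims below are placeholders. Claims made by only one model go to the top of the verification queue, and unanimous claims are routed to source tracing rather than treated as confirmed.

```python
from collections import Counter


def triage(claims_by_model: dict[str, set[str]]) -> dict[str, list[str]]:
    """Sort normalized claims by how many models asserted them."""
    n = len(claims_by_model)
    counts = Counter(c for claims in claims_by_model.values() for c in claims)
    buckets = {"verify_first": [], "review": [], "trace_source": []}
    for claim, k in sorted(counts.items()):
        if k == 1 and n > 1:
            buckets["verify_first"].append(claim)  # only one model said it
        elif k == n:
            buckets["trace_source"].append(claim)  # every model said it: find the shared source
        else:
            buckets["review"].append(claim)        # partial agreement
    return buckets


outputs = {
    "model_a": {"domain complaint rejected in 2005", "relative's death caused by X"},
    "model_b": {"domain complaint rejected in 2005", "dispute began in the 1990s"},
    "model_c": {"domain complaint rejected in 2005", "dispute began in the 1990s"},
}
print(triage(outputs))
```

Agreement changes only where a claim sits in the queue; none of the buckets means "verified".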

For readers, the side-by-side format teaches skepticism without requiring technical expertise. It shows that fluency is not reliability. The polished answer and the cautious answer may be responding to the same uncertainty with different personalities.

The Donovan Case Draws the Map for Everyone Else

This episode should not be read narrowly as a Shell story, a Donovan story, or a Copilot story. It is a preview of how public conflicts will be fought in the AI layer. Activists, litigants, corporations, journalists, political campaigns, and hobbyist researchers will all learn to package archives for machine consumption.

Some will do it to surface neglected facts. Some will do it to launder allegations. Some will do both at once. The difference will depend less on whether AI is involved than on whether provenance, editorial discipline, and correction mechanisms are built into the workflow.

The uncomfortable truth is that AI rewards the organized archive. A persistent critic with a well-indexed site may become more visible to models than a multinational with fragmented PDFs, cautious statements, and buried legal filings. That inversion may be healthy in some cases and hazardous in others. Either way, it is real.

The answer is not to suppress archives or neuter satire. Satire is part of public accountability, and archives can preserve material that powerful institutions would rather forget. The answer is to prevent models from flattening every retrieved fragment into the same evidentiary plane.

The Shell Archive Experiment Leaves a Checklist for the AI Newsroom

The most useful way to read the Donovan experiment is as a stress test. It shows where AI-assisted publishing is already strong, where it is brittle, and where human judgment still has no substitute.

- Publishers should preserve prompts, model outputs, timestamps, model names, and retrieval context for any AI-assisted work involving reputational claims.

- Editors should treat AI-generated assertions as leads until they are verified against primary records or clearly labeled as allegations.

- Legal-risk memos produced by chatbots should be published only with clear framing and should not be treated as privileged advice or professional clearance.

- Models should default to caution for sensitive claims involving deaths, crimes, medical conditions, private conduct, or allegations of covert activity.

- Corporations and public figures should maintain concise, machine-readable rebuttal records rather than relying on silence to keep old disputes contained.

- Readers should be more skeptical of precise details than flamboyant satire, because the plausible connective fact is where AI often does the most damage.
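The caution rules in this checklist can be approximated as a pre-publication gate that flags sentences touching deaths, crimes, medical conditions, or covert activity unless they carry a citation. This is a crude keyword heuristic: the patterns and the `[source: ...]` citation convention are hypothetical, and keyword matching will both miss paraphrases and over-flag innocent sentences. It is meant to route sentences to an editor, not to decide anything.

```python
import re

# Crude, hypothetical patterns for the sensitive categories in the checklist.
SENSITIVE = {
    "death": re.compile(r"\b(died|dies|death|killed|suicide)\b", re.IGNORECASE),
    "crime": re.compile(r"\b(fraud|bribe\w*|criminal|convicted|stole)\b", re.IGNORECASE),
    "medical": re.compile(r"\b(diagnos\w*|illness|cancer|disease)\b", re.IGNORECASE),
    "covert": re.compile(r"\b(covert|spied|spying|surveillance|undercover)\b", re.IGNORECASE),
}
CITATION = re.compile(r"\[source:[^\]]+\]")  # hypothetical inline citation convention


def flag_unsupported(text: str) -> list[tuple[str, list[str]]]:
    """Return sensitive sentences that carry no citation, with their categories."""
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        if CITATION.search(sentence):
            continue  # cited sentences skip this gate; the citation itself still needs checking
        categories = [name for name, pattern in SENSITIVE.items() if pattern.search(sentence)]
        if categories:
            flagged.append((sentence, categories))
    return flagged


draft = (
    "The company behaves like a geopolitical octopus. "
    "A named person died because of X. "
    "The domain complaint was rejected in 2005 [source: WIPO decision]."
)
for sentence, categories in flag_unsupported(draft):
    print(categories, sentence)
```

In this example the octopus line passes as hyperbole, the cited sentence is skipped, and the unsupported death claim is flagged, mirroring the distinction between commentary and verifiable factual assertion drawn earlier.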

The future of AI-assisted public argument will not be decided by whether machines can write sharp copy; they already can. It will be decided by whether publishers, platforms, and institutions build habits that keep machine fluency subordinate to human verification. The archive is now an input layer, the chatbot is now a narrator, and the editor’s oldest job — deciding what is known, what is alleged, and what is merely too good a line to trust — has become more important, not less.

Source: Royal Dutch Shell Plc .com WindowsForum.com: AI Satire and Defamation Risk in the Shell Archive: A Public RAG Experiment