Over the past decade, generative artificial intelligence has moved from a niche productivity tool to a structural force reshaping hiring, promotion and workforce management, and Illinois is one of the first U.S. jurisdictions to convert the resulting risk into concrete law and employer obligations. The state’s patchwork of statutes and proposed bills, centered on the Artificial Intelligence Video Interview Act (AVIA), amendments to the Illinois Human Rights Act (IHRA), and discussion around the Preventing Algorithmic Discrimination Act (PADA), already changes how employers must disclose, govern and audit algorithmic decision‑making in hiring and employment. Employers that treat generative AI as a convenience rather than a regulated decision‑support system face not only reputational damage and workforce disruption but also mounting legal exposure under civil‑rights, biometric‑privacy and consumer‑protection frameworks.

Source: The National Law Review, “Illinois Tightens Artificial Intelligence Use in Employment”

Background

Generative AI is not a single product but a class of systems that produce new content (text, images, audio, video or structured recommendations) by learning patterns from data and responding to prompts. These models now power everyday features in search, office productivity suites and applicant‑screening systems, which means they are increasingly implicated in consequential employment choices such as who gets an interview, who gets promoted, and who faces discipline. Because generative models can both recommend and rationalize decisions, regulators and courts are treating their outputs as potential decision‑makers that require transparency, human oversight and auditable controls.

In practice, employers use AI across the hiring lifecycle:

- Resume parsing and ranking to pre‑screen candidates.

- Video‑interview analysis that scores speech, facial cues and answer content.

- Internal promotion and performance‑scoring systems that aggregate productivity and behavioral signals.

Those use cases promise efficiency but also concentrate risk where errors or bias have material consequences. Numerous compliance frameworks—domestic and international—now treat such systems as “high‑risk” when they materially affect rights or access to services, demanding documentation, impact assessments and human‑in‑the‑loop (HITL) mechanisms.

Regulatory snapshot: where Illinois sits in the U.S. patchwork

Illinois is notable for taking an early, statutory approach to algorithmic hiring tools at two levels.

AVIA: Video interviews, disclosure and consent

The Artificial Intelligence Video Interview Act (AVIA) targets pre‑recorded interview systems that evaluate candidates via automated analysis. The core regulatory themes are notice, explanation, consent, data minimization and retention controls. Under AVIA, employers who use AI to evaluate an applicant’s video must disclose that AI will be used, explain in general terms the characteristics the tool examines, and obtain the applicant’s consent before proceeding. AVIA also restricts sharing of the video, limits retention and creates deletion obligations when an applicant requests removal. Those transparency and deletion features reflect a broader regulatory trend requiring explicit signposts when AI influences selection decisions.

Practical implication: employers using vendor‑provided video‑screening platforms must embed AVIA‑compliant disclosures into the candidate workflow and ensure contracts with vendors permit deletion, log export and limited sharing consistent with the statute’s constraints. Failure to operationalize those controls invites administrative enforcement and private actions where statutory notice or deletion obligations are violated.
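The notice, explanation, consent and deletion duties described above can be enforced as a simple gate in the candidate workflow. The Python sketch below is illustrative only: the `VideoInterviewRecord` class, its field names and the 30‑day value of `DELETION_WINDOW_DAYS` are assumptions made for this example, not statutory language, and the actual deadline should be confirmed against the current text of AVIA.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Assumption for illustration: a 30-day window to delete after a request.
# Verify the operative deadline against the statute before relying on it.
DELETION_WINDOW_DAYS = 30


@dataclass
class VideoInterviewRecord:
    """Hypothetical per-applicant record of AVIA-style workflow steps."""
    applicant_id: str
    notice_given: bool = False        # applicant told that AI will analyze the video
    explanation_given: bool = False   # general description of characteristics evaluated
    consent_given: bool = False       # applicant consented before any AI evaluation
    deletion_requested_on: Optional[date] = None
    deleted_on: Optional[date] = None

    def may_run_ai_analysis(self) -> bool:
        # All three prerequisites must be satisfied before the video is scored.
        return self.notice_given and self.explanation_given and self.consent_given

    def deletion_due(self) -> Optional[date]:
        # No deadline exists until the applicant actually requests deletion.
        if self.deletion_requested_on is None:
            return None
        return self.deletion_requested_on + timedelta(days=DELETION_WINDOW_DAYS)

    def deletion_overdue(self, today: date) -> bool:
        # Overdue only if a request exists, nothing was deleted, and the date passed.
        due = self.deletion_due()
        return due is not None and self.deleted_on is None and today > due
```

In a real deployment the same deadline would also need to reach any vendor or third party holding a copy of the video, which is why the contract terms mentioned above matter.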

IHRA amendments: civil‑rights liability for AI‑driven employment decisions

Illinois recently expanded anti‑discrimination law to explicitly cover algorithmic and generative AI decision‑making in employment contexts. The amended Illinois Human Rights Act (IHRA) treats employer decisions driven by AI (recruitment, hiring, promotion, salary setting, discipline and similar personnel actions) as actionable where they result in discrimination against protected classes or use proxies (such as zip codes) that correlate with protected characteristics. The statute’s definitional clarity (that “AI” includes systems producing predictions, recommendations or content, and that “generative AI” refers to systems producing textual, visual and multimedia outputs) makes the statute applicable to a broad range of models and implementations.

Practical implication: employers cannot avoid civil‑rights liability simply by delegating decisions to an algorithm. The amended IHRA makes accountability and outcome testing essential: employers must show that AI‑supported decisions do not produce disparate impact or are otherwise justified by a valid, non‑discriminatory business need and subject to meaningful human oversight.
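One common way to begin the outcome testing described above is to compare selection rates across groups. The sketch below uses the "four‑fifths" screening heuristic from the federal Uniform Guidelines on Employee Selection Procedures; that choice is an assumption of this example, not something the IHRA amendments are stated here to require, and a ratio below the threshold is a signal for further review rather than a legal conclusion. The function names and the sample counts are hypothetical.

```python
from typing import Dict, List, Tuple

# Maps a group label to (number selected, number of applicants).
Outcomes = Dict[str, Tuple[int, int]]


def selection_rates(outcomes: Outcomes) -> Dict[str, float]:
    """Selection rate per group: selected / applicants."""
    rates = {}
    for group, (selected, applicants) in outcomes.items():
        if applicants <= 0:
            raise ValueError(f"group {group!r} has no applicants")
        rates[group] = selected / applicants
    return rates


def adverse_impact_ratios(outcomes: Outcomes) -> Dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group had any selections")
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(outcomes: Outcomes, threshold: float = 0.8) -> List[str]:
    """Groups whose ratio falls below the threshold (0.8 = four-fifths)."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Hypothetical screening results for one AI-assisted resume filter.
sample = {"group_a": (48, 80), "group_b": (24, 60)}
# group_a rate is 0.6 and group_b rate is 0.4, so group_b's ratio is about 0.67.
flagged = flagged_groups(sample)
```

A check like this says nothing about why a disparity exists. It is a trigger for the deeper review, proxy analysis and business‑need justification that the paragraph above describes.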

Proposed statutory expansion: the Preventing Algorithmic Discrimination Act (PADA)

Illinois legislators have also proposed a broader regulatory approach, PADA, that would impose governance, notification and assessment requirements on deployers of algorithmic decision systems used for high‑stakes services including employment, housing and credit. While PADA’s status is uncertain, its provisions signal a legislative appetite for mandatory impact assessments, programmatic governance and public reporting for systems that affect civil rights and essential services. Even if PADA does not pass in its current form, the bill serves as a roadmap for future regulatory expectations.

Why employers must care: legal, operational and reputational stakes

Employers face a multi‑vector exposure when they deploy AI in people decisions.

- Civil‑rights and disparate‑impact liability. Algorithmic systems trained on historical data can reproduce and amplify structural bias. Even neutral‑appearing metrics can have disproportionate effects on protected groups, triggering disparate‑impact claims. Illinois’s IHRA explicitly targets those outcomes when AI is the decision driver.

- Privacy and biometric risk. Use of facial analysis or voice metrics in video interviews can implicate biometric‑privacy laws in Illinois and elsewhere, requiring notice and written consent before collection and strict retention and disclosure limits. Employers integrating facial‑analysis features must align with biometric statutes and AVIA deletion rules.

- Contractual and procurement risk. Vendors often resist full transparency about model training data, telemetry, or retention practices. Without contractual guarantees—no‑retrain clauses, exportable logs, deletion assurances and SOC/ISO attestations—employers may be unable to meet statutory auditing, transparency and deletion obligations.

- Operational and human‑capital risk. Overreliance on AI can erode apprenticeship and training pathways, compress entry‑level opportunities and damage long‑term talent pipelines. Employers must balance short‑term productivity against long‑term workforce development to avoid attrition, skill erosion and morale issues.

- Litigation and enforcement risk. Automated decisioning that lacks human oversight, documentation and impact testing is an obvious target for class actions, regulatory investigations and administrative complaints—especially where results align with protected‑class disparities. Employers will face discovery demands for training data, logs and model versions unless they have contractual and technical controls in place.
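The last item above turns on documentation: whether an employer can later show that a person actually reviewed an AI recommendation and why they accepted or overruled it. A minimal sketch of such an audit record follows. The `HumanReview` structure, the `record_review` helper and the 20‑character minimum rationale are all hypothetical choices for illustration, not requirements drawn from any statute.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Assumption: a rationale shorter than this is treated as perfunctory.
MIN_RATIONALE_CHARS = 20


@dataclass(frozen=True)
class HumanReview:
    """Immutable record of one human decision about one AI recommendation."""
    decision_id: str
    model_version: str       # which model version produced the recommendation
    ai_recommendation: str
    reviewer: str
    final_decision: str
    rationale: str
    reviewed_at: datetime

    @property
    def overrode_ai(self) -> bool:
        # True when the reviewer's outcome differs from the AI suggestion.
        return self.final_decision != self.ai_recommendation


def record_review(decision_id: str, model_version: str, ai_recommendation: str,
                  reviewer: str, final_decision: str, rationale: str) -> HumanReview:
    """Create a review record, rejecting empty or token rationales."""
    if len(rationale.strip()) < MIN_RATIONALE_CHARS:
        raise ValueError("rationale too short to document human judgment")
    return HumanReview(
        decision_id=decision_id,
        model_version=model_version,
        ai_recommendation=ai_recommendation,
        reviewer=reviewer,
        final_decision=final_decision,
        rationale=rationale.strip(),
        reviewed_at=datetime.now(timezone.utc),
    )
```

Storing the model version alongside each review is a deliberate choice: it lets a later audit or discovery request tie a specific decision to the specific model that influenced it.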

Notable precedents and market failures

High‑profile failures and enforcement outcomes already illustrate common pitfalls.

- Historical hiring‑model failures show how using biased training data can reproduce discriminatory outcomes: when systems are trained on past hiring decisions, they can learn to prefer the historical demographics of a workforce rather than the best predictors of job performance. Those failure modes drove early vendor and internal redesigns in multiple industries and remain a primary concern for auditors and regulators.

- Large privacy settlements under biometric privacy laws signal the financial scale of exposure if employers mishandle biometric data. Recent multi‑million‑dollar settlements in the biometric privacy space underscore that procedural lapses—lack of notice, absence of retention limits, or failure to secure written consent—translate into real monetary risk for organizations of all sizes. Employers using facial or voice analysis in interviews must therefore treat biometric controls as a first‑order compliance task.

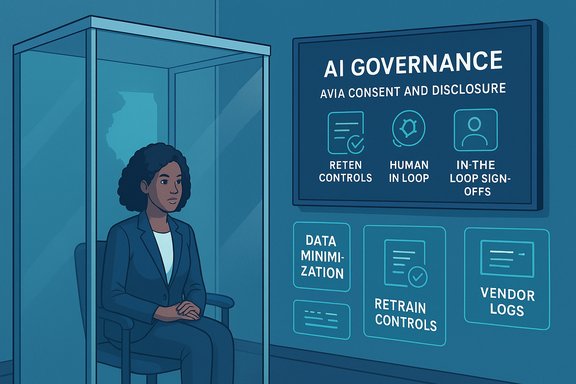

Technical and governance controls that make AI defensible (not just usable)

To move from risky experimentation to legally defensible deployment, employers must combine technical safeguards with policy and process changes.

1. Inventory and risk classification

Maintain a living register of every AI system (including third‑party copilots, vendor modules and in‑house agents), the decisions it influences, the data flows it consumes and its risk level for employment outcomes. Classify HR and decision‑support systems as high‑risk when they materially affect hiring, promotion, termination, wages or discipline. That classification drives required controls and documentation.

2. Human‑in‑the‑loop (HITL) design

Require a documented human verification step for any AI output that materially influences employment status or compensation. Implement role‑based sign‑offs, competency gates for verifiers and mandatory audit trails showing the human rationale for accepting or overruling AI suggestions. HITL is not a checkbox but an audit function: regulators expect demonstrable human judgment, not perfunctory approval.

3. Bias and fairness testing

Run pre‑deployment impact assessments that measure disparate outcomes across protected classes, and establish continuous fairness testing post‑deployment. Include representative samples, counterfactual checks and remediation workflows when inequities appear. Where differences persist, pause deployments and require model retraining or rule changes before scaling.

4. Data minimization, retention and tenant grounding

Avoid sending sensitive HR data or candidate PII to public, unrecoverable consumer models. Use tenant‑grounded or on‑prem instances for high‑risk processing, enforce endpoint DLP, and limit retention windows consistent with deletion obligations (for example, AVIA’s deletion timelines). Contractually lock down vendor telemetry and require exportable logs for eDiscovery and audits.

5. Procurement redlines and vendor audit rights

Standard procurement must include: no‑retrain clauses on matter or candidate data; machine‑readable prompt/response logs; deletion and egress guarantees; SOC 2/ISO attestation; and audit access for independent third‑party testing. Without these contractual protections, employers will be unable to comply with statutory transparency or deletion requirements.

6. Transparent notices and candidate rights

Where statutes require notice and consent (for example, AVIA), integrate clear explanations into candidate workflows: what the AI does, what characteristics are evaluated, opt‑out consequences, data retention controls and deletion request mechanisms. For covered systems, provide annual or periodic reporting where statutes demand demographic breakdowns of AI decisions.

Practical checklist: an operational roadmap for HR, IT and legal

- Inventory all AI systems and classify HR use cases by risk.

- Require vendor contract redlines (no‑retrain, exportable logs, deletion guarantees).

- Implement mandatory human verification for hiring, promotion and discipline decisions.

- Run pre‑deployment fairness/impact assessments and schedule periodic audits.

- Protect biometric and sensitive data with DLP, tenant grounding and consent workflows.

- Train HR and managers on verification protocols and create internal appeal channels.
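The first checklist item, an inventory with risk classification, can start as a very small data structure. The sketch below is one possible shape under stated assumptions: the `AISystem` fields, the `HIGH_RISK_DECISIONS` set (taken from the decision types this article names as high‑risk) and the two example entries are hypothetical, and a real register would carry more detail such as vendor contract terms and retention settings.

```python
from dataclasses import dataclass, field
from typing import List, Set

# Decision types treated as high-risk in this sketch, mirroring the
# categories named in the article; adjust to the organization's own policy.
HIGH_RISK_DECISIONS = {"hiring", "promotion", "termination", "wages", "discipline"}


@dataclass
class AISystem:
    """One entry in a hypothetical AI system register."""
    name: str
    owner: str                       # accountable team or person
    vendor: str                      # use "in-house" for internal tools
    decisions_influenced: Set[str]   # e.g. {"hiring"}
    data_inputs: List[str] = field(default_factory=list)

    @property
    def risk_level(self) -> str:
        # High-risk if the system touches any listed employment decision.
        if self.decisions_influenced & HIGH_RISK_DECISIONS:
            return "high"
        return "standard"


def high_risk_systems(register: List[AISystem]) -> List[str]:
    """Names of registered systems that need the stricter controls."""
    return [system.name for system in register if system.risk_level == "high"]


# Hypothetical register entries for illustration only.
register = [
    AISystem("resume-ranker", "Talent Acquisition", "ExampleVendor",
             {"hiring"}, ["resumes", "application forms"]),
    AISystem("meeting-summarizer", "IT", "in-house",
             {"note-taking"}, ["meeting transcripts"]),
]
needs_controls = high_risk_systems(register)
```

Deriving the risk level from the decisions a system influences, rather than storing it as a free‑text field, keeps the classification consistent when a tool's use expands into a new employment decision.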

Strengths of the Illinois approach — and the gaps to watch

Illinois’s statutes and legislative proposals present several strengths:

- Clarity of scope: Definitional inclusions of “AI” and “generative AI” reduce regulatory ambiguity about covered systems.

- Focus on outcomes, not just process: By tying liability to discriminatory results as well as proxies, the IHRA amendments create enforceable obligations on results and testing.

- Practical transparency requirements: AVIA’s consent, disclosure and deletion rules put actionable duties on employers that can be operationalized in recruitment workflows.

Several gaps remain, however:

- Vendor opacity and evidence collection: Many providers do not disclose training data provenance at the level regulators prefer; enforcement depends on employer contractual leverage that smaller organizations may lack.

- Implementation burden for SMEs: Conformity assessments, auditing and documentation can be resource‑intensive; regulators will need to provide scaled guidance to avoid entrenching vendor lock‑in.

- Enforcement and litigation uncertainty: As doctrines develop around automated decision‑making, employers will face novel discovery demands for training datasets, prompts and internal governance artifacts—areas courts are still interpreting.

Red flags and unverifiable claims (what to watch for)

- Be skeptical of vendor marketing that promises “bias elimination” or complete lineage of training data—those claims are often unverifiable without contractual audit rights or independent third‑party testing. Seek written audit rights and independent verification before relying on such claims.

- Predictions about large‑scale job losses or precise headcount impacts are scenario‑based and highly dependent on firm choices, sectoral adoption rates and public policy. Use scenario planning, not deterministic forecasts, to guide workforce strategy. The empirical evidence to date points to disproportionate impacts at the entry level rather than wholesale replacement of senior roles, but replication and sectoral study are ongoing.

- When statutes or proposals (like PADA) are under consideration, legislative language can change materially during committee review. Treat proposals as directional signals rather than settled law, and verify operative dates and final text before implementing compliance programs predicated on proposed language.

Conclusion — convert risk into a competitive advantage

Generative AI is reshaping employer decision‑making with speed and scale. The Illinois legislative landscape, forcing disclosure, consent, human oversight and outcome accountability, signals a new normal: AI that touches employment rights must be governed, audited and explained. Employers that build robust AI governance programs (integrating legal, HR and IT controls, contractual protections with vendors, and systematic fairness testing) will not only reduce legal exposure but also capture more durable productivity gains and workforce trust. Framing AI adoption as a strategic talent and compliance program, rather than a narrow efficiency play, is the single most important shift organizations must make to succeed in a regulated, AI‑enabled future.