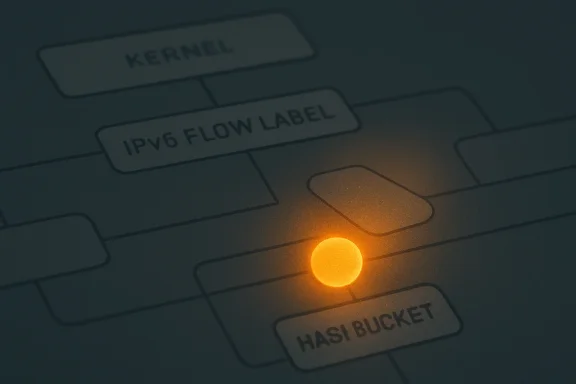

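A newly published Linux kernel vulnerability, CVE-2026-31680, highlights a familiar but consequential class of networking bugs: a lifetime mismatch in code protected by RCU, Linux’s high-performance read-side synchronization model. The flaw sits in the IPv6 flow label implementation, where a `/proc/net/ip6_flowlabel` reader can race with flow label teardown and dereference an already freed options structure. No NVD CVSS score had been assigned at publication time, but the practical concern is clear: affected systems can crash under the wrong concurrency conditions, turning a subtle memory-management bug into a possible local denial-of-service issue.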

A mature triage model looks for evidence-backed urgency. In other words, it asks what is known, what is unknown, and what decision can be made today. Waiting for a numeric score can be convenient, but it should not be the only gate for kernel memory-safety fixes.

When CVSS is unavailable, consider:

Security researchers may also publish reproducer code or deeper analysis of the race. If public proof-of-concept material appears, the urgency for shared systems will rise, even if the impact remains denial of service. A reliable crash against multi-tenant infrastructure is still a security event.

Watch for these items over the coming days and weeks:

The broader takeaway from CVE-2026-31680 is that kernel security still turns on details most users never see: pointer lifetimes, RCU grace periods, procfs iterators, and the timing of object teardown. For WindowsForum readers managing modern hybrid environments, that is precisely why Linux kernel CVEs deserve a place in the same operational conversation as Windows updates, cloud advisories, and container hardening. Patch promptly, verify the running kernel, and treat “local-only” bugs with the seriousness appropriate to the shared systems that now define modern computing.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

Background

IPv6 flow labels were designed to let hosts tag related packets so routers and middleboxes could recognize a flow without deeply inspecting transport-layer headers. The field is only 20 bits wide, but its operational implications are larger: it sits directly in the IPv6 header, interacts with traffic classification, and has accumulated decades of kernel plumbing around socket options, policy, and diagnostic visibility. In Linux, that plumbing includes global flow label data structures and a procfs view exposed through `/proc/net/ip6_flowlabel`.

The vulnerability described in CVE-2026-31680 is not about malformed packets crossing the internet and instantly compromising a machine. It is about internal kernel state becoming visible to one code path while another path has already freed part of that state. That distinction matters, because it shifts the likely threat model toward local users, containers, privileged network namespaces, automated diagnostics, or software that can manipulate IPv6 flow labels while another process reads the procfs table.

The key technical phrase is “defer exclusive option free until RCU teardown.” Linux’s Read-Copy-Update model allows readers to traverse shared data structures with very low overhead, provided writers do not reclaim memory until all relevant readers have exited. Here, the enclosing `struct ip6_flowlabel` remained reachable from the global hash table until later garbage collection, while its `fl->opt` pointer could be freed earlier when the flow label’s user count dropped to zero.

That mismatch created a classic use-after-free window. A procfs reader in `ip6fl_seq_show()` could walk the global flow label hash under an RCU read-side lock, see a flow label that had not yet been fully removed, and then print `fl->opt->opt_nflen` from an options block that no longer existed. In kernel space, that kind of stale pointer is not a harmless bookkeeping error; it can translate into an immediate crash.

The Bug in Plain English

A lifetime mismatch, not an IPv6 design failure

At its core, CVE-2026-31680 is a memory lifetime bug. One object, the flow label itself, lived long enough for RCU readers to keep seeing it. A related child object, the options block, did not live as long, even though readers still treated it as valid when the parent object was visible.

That is why the fix is conceptually simple but important: keep `fl->opt` alive until `fl_free_rcu()` tears down the surrounding flow label. This aligns the child object’s lifetime with the parent object’s RCU-visible lifetime. The patch does not reinvent IPv6 flow labels, change the wire protocol, or introduce a new user-facing security model.

The crash path involves `/proc/net/ip6_flowlabel`, a diagnostic interface that exposes kernel networking state. Diagnostic interfaces are often underestimated because they appear passive. In practice, they can be security-relevant because they traverse complex live kernel state while administrators, monitoring agents, and unprivileged tools read them concurrently.

Key elements of the issue include:

- Affected component: Linux kernel IPv6 flow label handling

- Observable crash site: `ip6fl_seq_show()`

- Data structure involved: `struct ip6_flowlabel`

- Problem pointer: `fl->opt`

- Root cause: early free of option state while parent remains RCU-visible

- Likely impact: local or namespace-scoped denial of service

- Fix strategy: defer freeing option memory until RCU teardown
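The ordering problem can be illustrated with a toy model. The sketch below is ordinary user-space Python, not kernel code: the class and function names (`Options`, `FlowLabel`, `release_buggy`, `release_fixed`, `teardown_after_grace_period`, `seq_show`) are hypothetical stand-ins, and a `freed` flag takes the place of real memory reclamation. It only shows why a reader that can still reach the parent must also be able to trust the child pointer.

```python
"""Toy model of the parent/child lifetime mismatch (illustrative only)."""


class Options:
    """Stands in for the options block reached through fl->opt."""

    def __init__(self, opt_nflen):
        self.opt_nflen = opt_nflen
        self.freed = False  # models kfree() having run on this block


class FlowLabel:
    """Stands in for struct ip6_flowlabel."""

    def __init__(self, label, opt):
        self.label = label
        self.opt = opt
        self.users = 1


def release_buggy(fl):
    """Pre-fix ordering: options are freed as soon as the last user leaves."""
    fl.users -= 1
    if fl.users == 0:
        fl.opt.freed = True  # parent is still in the table, child is gone


def release_fixed(fl):
    """Post-fix ordering: dropping the last user leaves the options alone."""
    fl.users -= 1


def teardown_after_grace_period(table, fl):
    """Stands in for the later teardown: unlink, then free parent and child."""
    table.remove(fl)
    fl.opt.freed = True


def seq_show(table):
    """Models the procfs reader: walks the table and reads opt_nflen."""
    rows = []
    for fl in table:
        if fl.opt.freed:
            raise RuntimeError("use-after-free: reader touched freed options")
        rows.append((fl.label, fl.opt.opt_nflen))
    return rows
```

With `release_buggy`, a later `seq_show` call raises because the entry is still listed while its options are marked freed. With `release_fixed`, the reader keeps working until `teardown_after_grace_period` removes the entry and its options together.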

Why RCU Makes This Subtle

Readers are cheap, but reclamation must be disciplined

RCU, short for Read-Copy-Update, is one of the reasons Linux scales well on multiprocessor systems. It allows readers to inspect shared structures without taking traditional heavy locks, while writers remove or replace structures and defer actual memory reclamation until a grace period has passed. That model is powerful, but it demands precise object lifetime discipline.

The problem in CVE-2026-31680 is that RCU protected the visibility of the enclosing flow label object, but the options block attached to it followed a shorter lifecycle. That is a small discrepancy in code, yet it defeats the mental contract readers rely on. If a reader can reach an object under RCU, all fields it dereferences must either be stable, separately protected, or safely absent.

This is why kernel memory bugs often survive code review. The dangerous line is rarely a dramatic one; it may be a `kfree()` in a release path that looks locally correct. The failure only appears when you reason across another CPU, another reader, a procfs sequence iterator, and delayed garbage collection.

For Windows-focused administrators, the analogy is useful. This is less like a bad firewall rule and more like a kernel driver freeing a shared object while another thread still has a valid route to it. The operating system’s concurrency model is doing exactly what it was designed to do, but one object’s lifetime was shorter than the contract implied.

The RCU lesson is straightforward:

- Removal is not the same as reclamation

- Visibility must determine memory lifetime

- Child pointers need the same protection assumptions as parent objects

- Procfs readers can expose races that normal packet paths rarely trigger

- Low-overhead readers shift complexity into writer-side teardown

- Delayed free is often safer than early cleanup in RCU-visible structures

The IPv6 Flow Label Angle

A small header field with deep kernel state

The IPv6 flow label was meant to help identify packet flows efficiently. In theory, it can support load balancing, quality-of-service decisions, and flow-aware treatment without requiring routers to inspect ports or extension headers. In practice, deployment has been uneven, but operating systems still need to implement it correctly because the field is part of the IPv6 architecture.

Linux exposes flow label management through kernel networking code that has accumulated support for socket APIs, reference counting, options, and visibility through procfs. That makes the subsystem a classic example of quiet complexity. Few users think about IPv6 flow labels directly, yet enterprise servers, container hosts, test tools, and network stacks can still touch the code.

The vulnerable path involves exclusive flow labels, where option state was freed once `fl->users` dropped to zero. That might sound reasonable if viewed only through the release path. The flaw appears because the global hash table can still contain the flow label until later cleanup, so the object is not truly gone from every reader’s perspective.

The distinction is important for assessing exposure. A machine does not need to be a public IPv6 router to have IPv6 code compiled and reachable. Many modern Linux distributions enable IPv6 by default, even in environments where administrators rarely use IPv6 intentionally.

Relevant exposure questions include:

- Is IPv6 enabled in the running kernel?

- Are untrusted users present on the host?

- Are containers or network namespaces allowed to manipulate IPv6 settings?

- Do monitoring tools read `/proc/net/ip6_flowlabel`?

- Are kernel builds tracking stable updates quickly?

- Does the environment rely on long-lived vendor kernels?
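Two of these questions can be answered from a shell account without touching the flow label table itself. The following is a minimal sketch, assuming a Linux host where procfs is mounted at `/proc`; the function names are hypothetical, and the check deliberately inspects only file presence and permissions rather than reading `/proc/net/ip6_flowlabel`.

```python
import os


def flowlabel_proc_status(path="/proc/net/ip6_flowlabel"):
    """Classify the procfs entry without reading its contents.

    Returns "absent", "readable", or "unreadable".
    """
    if not os.path.exists(path):
        return "absent"
    if os.access(path, os.R_OK):
        return "readable"
    return "unreadable"


def ipv6_disabled(path="/proc/sys/net/ipv6/conf/all/disable_ipv6"):
    """Return True/False from the sysctl file, or None if it cannot be read."""
    try:
        with open(path, encoding="ascii") as handle:
            return handle.read().strip() == "1"
    except OSError:
        return None


if __name__ == "__main__":
    print("ip6_flowlabel:", flowlabel_proc_status())
    print("disable_ipv6 (all):", ipv6_disabled())
```

Results are specific to the network namespace the script runs in, so a container and its host may report different answers. A `None` from `ipv6_disabled()` means only that the sysctl file could not be read, not that IPv6 is absent.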

Exploitability and Real-World Impact

Crash risk is clearer than code execution risk

The published description points to a crash in `ip6fl_seq_show()`, which makes denial of service the most immediate and defensible impact. A use-after-free in kernel space is always serious, but not every stale pointer creates a practical privilege escalation path. In this case, the described dereference reads option metadata for display, and the public record does not establish reliable arbitrary code execution.

That said, security teams should avoid dismissing the issue simply because no CVSS score was available at publication. Kernel crashes matter in production. A local crash on a developer workstation is annoying; a crash on a Kubernetes node, CI runner, database host, or appliance can trigger service disruption, failover storms, and forensic uncertainty.

The attack preconditions are likely narrower than for a remote packet-processing vulnerability. An attacker would need to create or release relevant flow label state and race a procfs reader at the right moment. However, modern Linux deployments are full of automation, namespace boundaries, and observability agents that can unintentionally widen obscure race windows.

The most realistic operational scenarios are not cinematic remote exploits. They are low-noise local crash attempts, accidental triggers during testing, or bug reproduction in containerized environments where users can exercise parts of the IPv6 stack. That makes patching important even if emergency internet-facing mitigation is not warranted.

A practical impact ranking looks like this:

- Highest concern: multi-user Linux hosts, shared CI, container platforms, and managed compute nodes.

- Moderate concern: enterprise Linux servers with local shell access and active IPv6 tooling.

- Lower concern: single-user desktops with timely kernel updates and limited untrusted code.

- Special concern: appliances or embedded systems that expose shell-like management roles.

- Unknown concern: custom kernels with backported IPv6 changes and unusual procfs access patterns.
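Teams that keep a host inventory may want to apply this ranking mechanically. The sketch below is one possible encoding, not an official scoring scheme: the four flags are hypothetical inventory attributes, and the order in which they are checked (tenancy first, then custom kernels, appliances, and local shell access) is an assumption made for illustration.

```python
def impact_tier(*, multi_tenant=False, custom_kernel=False,
                appliance_shell=False, local_shell=False):
    """Return the concern tier from the ranking above for one host.

    All four flags are hypothetical inventory attributes, and the order in
    which they are checked is an assumption made for this sketch.
    """
    if multi_tenant:        # shared CI, container platforms, managed compute
        return "highest"
    if custom_kernel:       # backported IPv6 changes, unusual procfs access
        return "unknown"
    if appliance_shell:     # appliances exposing shell-like management roles
        return "special"
    if local_shell:         # enterprise servers with local shell access
        return "moderate"
    return "lower"          # single-user desktops with timely updates
```

For example, `impact_tier(multi_tenant=True)` returns `"highest"`, while a host with no flags set falls through to `"lower"`. Hosts tagged `"unknown"` need manual review rather than a default priority.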

Why WindowsForum Readers Should Care

Linux kernel CVEs increasingly intersect with Windows estates

At first glance, CVE-2026-31680 looks like a Linux-only story. For WindowsForum readers, the hook is that modern Windows environments increasingly run Linux workloads directly or indirectly. Windows Subsystem for Linux, Hyper-V guests, Azure Linux images, container hosts, developer workstations, and mixed fleet management all blur the line between “Windows vulnerability” and “not my problem.”

Microsoft’s security ecosystem now routinely references vulnerabilities outside the traditional Windows codebase when those components affect supported scenarios. That does not mean this flaw is a Windows kernel bug. It means administrators who live in Microsoft tooling may still see the CVE appear in dashboards, vulnerability feeds, or cloud security inventories.

The practical question is where the Linux kernel actually runs. WSL 2 uses a real Linux kernel inside a lightweight virtual machine. Azure hosts countless Linux VMs. Developers may run Docker Desktop, build agents, or test clusters from Windows machines while relying on Linux kernel networking under the surface.

For enterprise defenders, this creates a visibility challenge. Windows patch compliance does not automatically prove Linux kernel compliance. If your security reporting merges endpoint, cloud, and container findings, a Linux kernel CVE can surface beside Windows Server bulletins even though the remediation path is entirely different.

Windows-adjacent places to check include:

- WSL 2 environments on developer workstations

- Linux virtual machines hosted on Hyper-V

- Azure Linux workloads managed by Microsoft security tooling

- Container build hosts used by Windows engineering teams

- CI/CD runners that execute untrusted or semi-trusted jobs

- Security dashboards that ingest MSRC, NVD, and vendor advisories

Patch Management and Kernel Backports

The version number may not tell the whole story

Linux kernel remediation is rarely as simple as “upgrade to version X.” Distribution vendors often backport individual fixes into long-term kernels while keeping the same major version line. That means a system may appear to run an older kernel but still contain the patch, or it may run a newer custom build that lacks a specific stable commit.

The CVE record lists multiple stable references, which strongly suggests the fix was propagated across supported kernel branches. That is normal for kernel security fixes. Stable maintainers take targeted patches and apply them to maintained trees so users are not forced into a major kernel jump just to receive one memory-safety correction.

Administrators should therefore rely on vendor advisories, package changelogs, and actual kernel build metadata rather than generic version comparisons. On enterprise Linux, the important unit is often the distribution package release. On rolling distributions, the answer is usually to install the latest kernel package and reboot into it.

The reboot point is worth stressing. Kernel package installation does not protect a running system until the patched kernel is active. Live patching may help in some environments, but teams should confirm whether this specific fix is covered by their live patch provider rather than assuming it is.

A sensible remediation flow is:

- Inventory all Linux kernels across servers, VMs, container hosts, WSL instances, and appliances.

- Map each system to its distribution or kernel vendor advisory.

- Update to the vendor-provided kernel package containing the backport.

- Reboot or apply verified live patch coverage where supported.

- Confirm the running kernel version and package build after maintenance.

- Monitor for crash signatures involving `ip6fl_seq_show()` or IPv6 flow label paths.
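The "confirm the running kernel" step is where installed-but-not-booted kernels get caught. The following is a rough heuristic sketch, assuming a conventional layout in which each installed kernel has a directory under `/lib/modules` named after its release string and the most recently installed one has the newest modification time. Both assumptions can fail on some distributions, so treat the result as a prompt to check the vendor advisory and package metadata, not as proof of patch status. The function names are hypothetical.

```python
import os
import platform


def newest_installed_kernel(modules_dir="/lib/modules"):
    """Return the most recently modified entry under modules_dir, or None.

    Assumption: each installed kernel has a directory here named after its
    release string, and the latest install has the newest mtime.
    """
    try:
        names = os.listdir(modules_dir)
    except OSError:
        return None
    if not names:
        return None
    return max(names, key=lambda n: os.path.getmtime(os.path.join(modules_dir, n)))


def reboot_pending(running, newest):
    """True when the newest installed kernel is not the one running."""
    return newest is not None and running != newest


if __name__ == "__main__":
    running = platform.release()
    newest = newest_installed_kernel()
    print(f"running={running} newest_installed={newest} "
          f"reboot_pending={reboot_pending(running, newest)}")
```

A `True` result means the host is probably still running an older kernel than the one most recently installed. It does not say whether either build contains the backported fix.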

Detection, Triage, and Mitigation

Look for symptoms, but do not wait for them

Because CVE-2026-31680 is a race condition, defenders should not expect reliable indicators before exploitation or accidental triggering. The most visible symptom would likely be a kernel oops, panic, or crash trace involving `ip6fl_seq_show()`, `/proc/net/ip6_flowlabel`, or IPv6 flow label release paths. Absence of crashes does not prove absence of exposure.

Triage should start with asset classification. Shared systems with local users deserve more urgency than isolated appliances with no shell access. Container platforms need careful review because network namespace capabilities can change the practical boundary between “local only” and “tenant reachable.”

Temporary mitigation is less satisfying than patching. Disabling IPv6 may reduce exposure in some environments, but it can break services, violate assumptions in modern software, or create hidden operational debt. Restricting local access and container capabilities is safer as a general hardening measure, but it should not replace kernel updates.

The best short-term approach is a layered one. Patch where possible, reduce unnecessary access to sensitive networking capabilities, and watch for kernel crash telemetry. If you operate a fleet, prioritize systems where untrusted code can run.

Useful triage checks include:

- Review whether `/proc/net/ip6_flowlabel` exists and is readable in relevant namespaces.

- Identify users or services that can create IPv6 flow labels.

- Audit containers granted broad networking capabilities.

- Search kernel logs for `ip6fl_seq_show`, flow label crashes, or use-after-free reports.

- Confirm whether crash dump collection is enabled before testing reproductions.

- Avoid running public proof-of-concept code on production hosts.
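The log search in particular is easy to script. Below is a minimal sketch that scans kernel log text for the identifiers named above; the pattern list is an assumption about what a relevant trace might mention, not a documented crash signature, and the function name is hypothetical. It works on text you have already collected, for example saved output from `dmesg` or `journalctl -k`.

```python
import re

# Substrings drawn from the identifiers named in the article; matching is
# case-insensitive and deliberately broad, so expect some false positives.
SIGNATURES = re.compile(r"ip6fl_seq_show|ip6_flowlabel|use-after-free", re.IGNORECASE)


def find_crash_signatures(log_text):
    """Return (line_number, line) pairs from log_text that mention a signature."""
    hits = []
    for number, line in enumerate(log_text.splitlines(), start=1):
        if SIGNATURES.search(line):
            hits.append((number, line.strip()))
    return hits
```

An empty result says only that the collected text contains none of these strings. As noted above, absence of crashes does not prove absence of exposure.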

Enterprise Impact

Shared infrastructure changes the risk calculation

In an enterprise, the risk of CVE-2026-31680 depends less on IPv6 enthusiasm and more on tenancy. A single-purpose server with no local users has a different exposure profile than a shared research host, university login node, build farm, or container platform. Local denial-of-service issues become more important when many parties can execute code on the same kernel.

Cloud and virtualization do not automatically erase the concern. If the vulnerable kernel runs inside a guest VM, the likely crash impact is confined to that guest. But if that guest hosts many containers or build jobs, a crash still has business impact. Availability loss at the wrong layer can cascade into failed deployments, interrupted pipelines, or service-level incidents.

Security teams should also consider compliance workflows. Many scanners will flag the CVE before NVD enrichment is complete, leaving administrators with a finding that has no official CVSS score. That can create tension between vulnerability management SLAs and engineering reality.

The absence of a score should be handled with judgment, not paralysis. Treat it as a kernel memory-safety flaw with credible crash impact, prioritize by exposure, and track vendor updates. The operational goal is to avoid both extremes: ignoring the issue because it is not remotely wormable, or declaring a fleet-wide emergency without evidence.

Enterprise priorities should include:

- Shared Linux hosts with untrusted or semi-trusted users

- Container nodes that expose network namespace operations

- Developer workstations running WSL 2 or local Linux VMs

- Build infrastructure executing third-party code

- Appliances based on Linux kernels with delayed vendor updates

- Monitoring systems that frequently scrape procfs networking files

Consumer and Enthusiast Impact

Most home users should patch normally, not panic

For typical desktop Linux users, CVE-2026-31680 is unlikely to be the kind of vulnerability that demands dramatic immediate action. It does not describe a remote IPv6 packet that can crash every reachable laptop from across the internet. The more plausible path requires local activity that creates and releases flow label state while the procfs view is read concurrently.

That does not make the flaw irrelevant. Linux desktops often run browsers, development tools, containers, VPN clients, and local services. Enthusiasts also tend to experiment with networking features and custom kernels, which can make obscure kernel paths more reachable than they are on a locked-down appliance.

Windows users who run WSL 2 should keep an eye on kernel update mechanisms. WSL’s Linux kernel is not updated by the same path as a normal Ubuntu or Fedora package inside the distribution. The user-space distro and the kernel underneath it are related operationally, but they are not the same component.

The best advice for enthusiasts is simple: update through the supported channel, reboot when required, and avoid disabling IPv6 unless you have a clear reason. Security hardening that breaks networking mysteriously is not a win. Timely patching is cleaner.

For home and enthusiast systems:

- Do update your Linux kernel packages promptly.

- Do reboot after kernel updates.

- Do update WSL through its supported update mechanism.

- Do not assume a distribution package upgrade changed the running kernel.

- Do not panic about remote exploitation based solely on the CVE title.

- Do be cautious with untrusted local users, containers, and kernel testing scripts.

Competitive and Ecosystem Implications

Linux’s openness cuts both ways

The Linux kernel ecosystem has a distinctive security rhythm. Bugs are fixed in public, stable trees move quickly, distributions backport selectively, and CVEs may appear after the technical fix has already landed. That openness gives defenders valuable detail, but it also gives attackers a roadmap for reproducing crashes.

Compared with proprietary operating systems, Linux often exposes more implementation context earlier. For researchers and administrators, that is a strength. For vendors shipping slow-moving appliances, it is a pressure test. Once a kernel CVE is public, customers expect answers about backports, build numbers, and maintenance windows.

For Microsoft, the relevance is strategic. The company’s customers increasingly operate hybrid estates where Windows, Linux, Azure, containers, and developer tooling overlap. A Linux kernel CVE appearing in Microsoft-facing security channels reinforces the reality that platform security is no longer bounded by a single OS brand.

Rivals in cloud, endpoint, and vulnerability management will interpret this kind of CVE through their own tooling. The vendors that provide the clearest mapping from CVE to affected kernel build will help customers most. The vendors that simply display “NVD score unavailable” without context will generate noise.

The broader ecosystem lessons are:

- Fast upstream fixes are valuable only when downstream mapping is clear.

- Backport transparency matters more than headline kernel versions.

- Hybrid tooling must distinguish Windows exposure from Linux exposure.

- Cloud security platforms need workload-aware prioritization.

- Procfs and diagnostic paths deserve security attention, not just packet parsers.

- CVE scoring gaps should not stall practical risk decisions.

Why “No CVSS Yet” Is Not the Same as “No Risk”

Vulnerability management must handle incomplete records

At publication, the NVD entry for CVE-2026-31680 had not assigned CVSS v4.0, v3.x, or v2.0 severity. That is increasingly common in high-volume vulnerability ecosystems, especially when CVE records arrive faster than enrichment pipelines can fully analyze them. A missing score is an information gap, not a safety signal.

Security teams should treat unenriched CVEs with a structured process. First, identify the affected component and whether it exists in your environment. Second, determine the impact described by the CNA or upstream project. Third, assess exploit preconditions against your actual deployment model.

For this CVE, the upstream description provides enough to classify it as a kernel use-after-free with crash potential in IPv6 flow label procfs reporting. That is sufficient to prioritize patching on shared Linux systems. It is also sufficient to avoid overstating the case as proven remote code execution.

A mature triage model looks for evidence-backed urgency. In other words, it asks what is known, what is unknown, and what decision can be made today. Waiting for a numeric score can be convenient, but it should not be the only gate for kernel memory-safety fixes.

When CVSS is unavailable, consider:

- Exploit location: local, adjacent, remote, or unclear

- Privilege requirements: unprivileged user, namespace capability, administrator, or unknown

- Impact type: crash, data exposure, privilege escalation, or code execution

- Asset exposure: single-user, shared, cloud, container, or appliance

- Patch availability: upstream only, vendor package, live patch, or pending

- Operational cost: reboot window, failover, testing, and rollback plan
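The checklist above can be captured as a small record so that unscored CVEs are triaged consistently rather than ad hoc. This is a rough sketch, not an established scoring method: the `UnscoredCve` type, the `triage` function, the category strings, and the decision rules are all assumptions made for illustration, and operational cost is deliberately left to scheduling rather than modeled here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UnscoredCve:
    """Triage facts for a CVE that has no CVSS score yet (hypothetical schema)."""
    cve_id: str
    exploit_location: str  # "local", "adjacent", "remote", "unclear"
    privileges: str        # "unprivileged", "namespace-capability", "administrator", "unknown"
    impact: str            # "crash", "data-exposure", "privilege-escalation", "code-execution"
    asset_exposure: str    # "single-user", "shared", "cloud", "container", "appliance"
    patch_status: str      # "upstream-only", "vendor-package", "live-patch", "pending"


def triage(record):
    """Map the recorded facts to a coarse action. The rules are illustrative only."""
    # Without a vendor build there is nothing to deploy yet.
    if record.patch_status in {"upstream-only", "pending"}:
        return "track-vendor"
    multi_tenant = record.asset_exposure in {"shared", "cloud", "container"}
    severe = record.impact in {"privilege-escalation", "code-execution"}
    reachable = record.exploit_location in {"remote", "adjacent", "unclear"}
    if severe or reachable or multi_tenant:
        return "patch-next-window"
    return "patch-routine"


if __name__ == "__main__":
    # Field values are one reading of the description in this article, not official data.
    ci_host = UnscoredCve(
        cve_id="CVE-2026-31680",
        exploit_location="local",
        privileges="unknown",
        impact="crash",
        asset_exposure="shared",
        patch_status="vendor-package",
    )
    print(ci_host.cve_id, "->", triage(ci_host))
```

The value of a record like this is less in the rule itself than in forcing each unknown to be written down as "unknown" or "unclear" instead of being silently treated as safe.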

Strengths and Opportunities

The handling of CVE-2026-31680 shows several strengths in the modern kernel security process, particularly the ability to describe a subtle concurrency flaw in concrete implementation terms. It also gives defenders an opportunity to improve how they inventory Linux kernels across Windows-adjacent environments, cloud workloads, and developer machines.

- Clear root-cause language makes the bug understandable without sensationalism.

- Stable-branch references indicate a path for distribution backports.

- RCU lifetime alignment is a targeted fix rather than a broad subsystem rewrite.

- Procfs visibility gives defenders a plausible place to look for crash signatures.

- Hybrid fleet awareness can improve Windows and Linux asset correlation.

- No premature severity inflation leaves room for evidence-based prioritization.

- Patch discipline can reduce similar risk in adjacent kernel subsystems.

Risks and Concerns

The largest concern is not that every IPv6-enabled host is instantly exposed to a remote catastrophe. The larger operational risk is that organizations underestimate local kernel denial-of-service bugs in shared environments, especially where containers, CI jobs, and developer tooling create less obvious attack surfaces.

- Use-after-free bugs can sometimes prove more exploitable than initial reports suggest.

- NVD scoring delays may cause inconsistent prioritization across tools.

- Backported kernels can confuse teams relying on simplistic version checks.

- Unrebooted systems may remain vulnerable after package installation.

- Container capabilities may widen the practical attack surface.

- WSL and VM kernels may fall outside normal Windows patch routines.

- Appliance vendors may lag behind upstream stable fixes.
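Because backported kernels make version comparisons unreliable, one complementary check is to search the vendor's package changelog for the CVE identifier. The sketch below assumes the changelog text has already been obtained, for example from `rpm -q --changelog kernel` on RPM-based systems or from the changelog shipped with a Debian or Ubuntu kernel package; `cves_in_changelog` and `changelog_mentions` are hypothetical helper names. A missing identifier does not prove the fix is absent, since not every vendor lists every CVE.

```python
import re

# CVE identifiers are "CVE-", a four-digit year, then four or more digits.
CVE_PATTERN = re.compile(r"CVE-\d{4}-\d{4,}", re.IGNORECASE)


def cves_in_changelog(changelog_text):
    """Return the set of CVE identifiers mentioned anywhere in the text."""
    return {match.upper() for match in CVE_PATTERN.findall(changelog_text)}


def changelog_mentions(changelog_text, cve_id):
    """Return True if the exact CVE identifier appears in the changelog."""
    return cve_id.upper() in cves_in_changelog(changelog_text)


if __name__ == "__main__":
    import sys

    # Usage (assumed workflow): pipe changelog text in and pass the CVE ID, e.g.
    #   rpm -q --changelog kernel | python check_cve.py CVE-2026-31680
    wanted = sys.argv[1] if len(sys.argv) > 1 else "CVE-2026-31680"
    text = "" if sys.stdin.isatty() else sys.stdin.read()
    if changelog_mentions(text, wanted):
        print(wanted, "is mentioned in the supplied changelog.")
    else:
        print(wanted, "not found; consult the vendor advisory before concluding anything.")
```

Matching on the whole identifier matters: a substring search for "CVE-2026-3168" would also hit unrelated entries, which is why the sketch extracts complete identifiers first and compares them exactly.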

What to Watch Next

The next important development will be vendor-specific advisories and package mappings. Upstream stable commits are useful, but enterprise administrators need to know exactly which Red Hat, Ubuntu, Debian, SUSE, Oracle, Amazon, Azure, or appliance kernel builds contain the fix. Until that mapping is available, teams should track their vendors and avoid guessing from mainline version numbers alone.

Security researchers may also publish reproducer code or deeper analysis of the race. If public proof-of-concept material appears, the urgency for shared systems will rise, even if the impact remains denial of service. A reliable crash against multi-tenant infrastructure is still a security event.

Watch for these items over the coming days and weeks:

- Vendor advisories confirming affected and fixed package builds

- Live patch coverage statements from enterprise Linux providers

- Scanner updates that add version and package-level detection

- Public reproducer code targeting /proc/net/ip6_flowlabel

- Revised NVD enrichment, including CVSS and CWE metadata

The broader takeaway from CVE-2026-31680 is that kernel security still turns on details most users never see: pointer lifetimes, RCU grace periods, procfs iterators, and the timing of object teardown. For WindowsForum readers managing modern hybrid environments, that is precisely why Linux kernel CVEs deserve a place in the same operational conversation as Windows updates, cloud advisories, and container hardening. Patch promptly, verify the running kernel, and treat “local-only” bugs with the seriousness appropriate to the shared systems that now define modern computing.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center