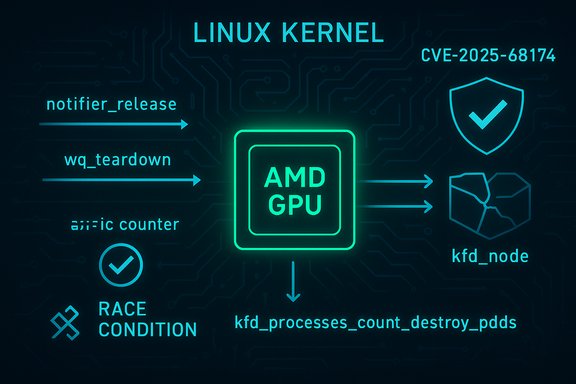

A race condition in the Linux kernel’s AMD KFD (Kernel Fusion Driver) process handling has been fixed under CVE‑2025‑68174. During a teardown or partition switch, one thread could access a kfd node after another thread had torn it down, producing kernel oopses and deterministic host instability. The upstream remedy introduces an atomic per‑device process counter (kfd_processes_count) and moves the decrement to a safer teardown point to close the race.

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background

The AMDGPU + KFD subsystem is the Linux kernel’s path for exposing AMD GPU compute resources to userland processes. KFD tracks GPU compute processes with per‑device structures and tables (for example, kfd_processes_table and kfd_node references) and uses multiple paths for initialization and teardown, including notifier-based callbacks and workqueue teardown routines.

The vulnerability arises because the code that removes an entry from the kfd_processes_table runs in one notifier context, while the complete process teardown that releases the kfd_node pointer occurs later in a workqueue context. If a partition switch or device‑fini path races with a concurrent workqueue teardown, the workqueue code may still reference kfd_node fields after the other context already destroyed them, yielding a kernel crash. This specific failure mode (race between notifier release and workqueue teardown) is the core of CVE‑2025‑68174.

What happened technically

The race sequence (simplified)

- Process B initiates a partition switch (or device finalize) and begins tearing down KFD device state, including tearing down kfd_node objects.

- The code path that removes the process from kfd_processes_table is executed in a notifier (kfd_process_notifier_release), but the full process teardown (kfd_process_wq_release) runs asynchronously under a workqueue.

- Meanwhile, a KFD workqueue worker (call it Process A) is executing kfd_process_wq_release and dereferences kfd_node members that Process B has already begun or completed destroying.

- The overlap results in use‑after‑free / invalid memory access and kernel oops traces (observed call stacks include kfd_process_wq_release and amdgpu frames).
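The sequence above can be replayed as a small, single-threaded toy model. This is only a sketch of the ordering problem, not kernel code: the class and function names are hypothetical stand-ins that borrow the article's terminology, and the "node" is a plain Python object with an `alive` flag.

```python
# Toy model of the pre-fix ordering. All names are illustrative stand-ins.
class Node:
    def __init__(self):
        self.alive = True


class Device:
    def __init__(self):
        self.node = Node()
        self.processes_table = set()   # stands in for kfd_processes_table
        self.pending_teardown = []     # work items queued for the workqueue


def notifier_release(dev, pid):
    """Notifier context: drop the table entry and defer the real teardown."""
    dev.processes_table.discard(pid)
    dev.pending_teardown.append(pid)


def partition_switch_table_check(dev):
    """Pre-fix gate: proceeds as soon as the table looks empty."""
    if dev.processes_table:
        return False
    dev.node.alive = False             # node state is torn down
    return True


def wq_release(dev):
    """Workqueue context: still touches the node while finishing teardown."""
    pid = dev.pending_teardown.pop(0)
    if not dev.node.alive:
        raise RuntimeError(f"pid {pid}: node used after teardown")
    return pid


def demo_race():
    dev = Device()
    dev.processes_table.add(1234)
    notifier_release(dev, 1234)                   # table is now empty
    switched = partition_switch_table_check(dev)  # gate passes too early
    try:
        wq_release(dev)                           # deferred work runs late
    except RuntimeError as exc:
        return switched, str(exc)
    return switched, None
```

In this model the gate passes because the table is empty, yet the deferred work item has not run, which is the window the CVE describes.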

The upstream fix, at a glance

The patch introduces a per‑device, atomic counter named kfd_processes_count that is incremented on process creation and decremented only after the process’s workqueue teardown is fully finished (moved to a safer teardown site such as kfd_process_destroy_pdds in the v2 revision). This changes the switch/partition logic from a simple check of whether the processes table is empty to a robust, atomic count check that cannot race with asynchronous teardown callbacks. The v2 revision also scoped the counter per kfd_dev and fixed a related bug in where the decrement occurs.

Who is affected and why you should care

- Systems running Linux kernels with the AMDGPU/KFD stack loaded and enabled are in scope. That includes desktops, workstations, and servers with AMD GPUs and kernels that predate the upstream fix.

- The vulnerability is a robust availability (denial‑of‑service) primitive: deterministic kernel oopses and driver crashes have been observed in dmesg traces that reference KFD and amdgpu call stacks. While the defect is not described as an information‑leak or privilege‑escalation primitive, the ability to reliably crash kernels is operationally serious—especially in multi‑tenant, CI/CD runner, or cloud GPU host environments.

- Vendor and distribution backport status varies. Desktop distributions and mainstream kernels often pick up such fixes quickly, but long‑tail vendor kernels (embedded devices, OEM builds, appliance kernels) may lag and remain vulnerable. Prioritize hosts that expose DRM device nodes (/dev/dri/*) to untrusted processes or containers.

Evidence from kernel logs and what to hunt for

The practical forensic indicators are consistent across reports:

- Kernel oops traces containing functions like kfd_process_wq_release, amdgpu_xcp_pre_partition_switch, amdgpu_amdkfd_device_fini_sw, or messages about invalid ID frees (e.g., “ida_free called for id=… which is not allocated”) are canonical signatures.

- Journalctl/dmesg searches that should be part of triage:

- journalctl -k | grep -E "kfd|amdgpu|ida_free"

- dmesg | grep -E "kfd_process_wq_release|amdgpu|ida_free"

- If you see repeated compositor or DRM driver crashes, or kernel oops traces including the amdgpu/kfd stack, treat that host as high priority for patching and isolation.
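For hosts where kernel logs are already collected as text, the same signatures can be counted programmatically. The sketch below is one possible approach, assuming log lines arrive as plain strings. The dictionary keys and the function name are made up for illustration, and the `ida_free` pattern assumes the id is printed as a decimal integer.

```python
import re
from collections import Counter

# Signatures taken from the indicators listed above; the keys are
# arbitrary labels chosen for this sketch.
SIGNATURES = {
    "kfd_process_wq_release": re.compile(r"kfd_process_wq_release"),
    "amdgpu_xcp_pre_partition_switch": re.compile(r"amdgpu_xcp_pre_partition_switch"),
    "amdgpu_amdkfd_device_fini_sw": re.compile(r"amdgpu_amdkfd_device_fini_sw"),
    # Assumption: the id appears as a (possibly negative) decimal integer.
    "ida_free_unallocated": re.compile(r"ida_free called for id=-?\d+ which is not allocated"),
}


def count_signatures(lines):
    """Count how many log lines match each signature."""
    counts = Counter()
    for line in lines:
        for name, pattern in SIGNATURES.items():
            if pattern.search(line):
                counts[name] += 1
    return counts
```

Any iterable of lines works as input, for example a file object opened on saved `journalctl -k` output. A nonzero count for any key is a reason to look at the full trace, not proof of this specific bug.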

How the fix changes behavior (why it works)

- Replacing a table-empty test with an atomic counter eliminates the window where an entry has been removed from the visible table while the worker teardown is still in flight. Counting semantics provide an unambiguous, race‑resistant condition: a partition switch can only proceed when the atomic per‑device counter reaches zero, and the counter is only decremented after the asynchronous workqueue teardown completes. That ordering prevents the use‑after‑free scenario.

- The second iteration of the patch (v2) refines ownership: moving the decrement to kfd_process_destroy_pdds ensures the lifecycle termination point is the last place where teardown work runs, avoiding premature decrements that could reintroduce windows of inconsistency.
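The ordering argument can be illustrated with a userspace model. This is a hedged sketch, not the driver's implementation. `KfdDevModel` and its methods are hypothetical, a lock stands in for the kernel's atomic operations, and it assumes the switch is simply refused while the count is nonzero (the article does not say whether the real driver refuses or waits).

```python
import threading


class KfdDevModel:
    """Userspace stand-in for per-device state; not kernel code."""

    def __init__(self):
        self._lock = threading.Lock()  # emulates atomicity of the counter
        self.processes_count = 0       # plays the role of kfd_processes_count
        self.node_alive = True

    def process_create(self):
        with self._lock:
            self.processes_count += 1

    def notifier_release(self):
        # The table entry would be removed here; the count is left untouched.
        pass

    def wq_release(self):
        # Deferred teardown still needs the node.
        if not self.node_alive:
            raise RuntimeError("node used after teardown")
        # Final step; the article places the decrement in kfd_process_destroy_pdds.
        with self._lock:
            self.processes_count -= 1

    def try_partition_switch(self):
        # Assumption: the switch is refused while the count is nonzero.
        with self._lock:
            if self.processes_count != 0:
                return False
            self.node_alive = False
            return True


def demo_counter_gate():
    dev = KfdDevModel()
    dev.process_create()
    dev.notifier_release()
    early = dev.try_partition_switch()  # teardown still in flight
    dev.wq_release()                    # safe: node is still alive
    late = dev.try_partition_switch()   # count has reached zero
    return early, late
```

Because the decrement is the last thing the deferred teardown does, the gate in this model cannot open while any worker still needs the node.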

Practical mitigation and response checklist

- Inventory exposure (minutes)

- Identify hosts that load AMD GPU modules:

- uname -r; lsmod | grep amdgpu

- Find DRM device nodes and inspect permissions:

- ls -l /dev/dri/*

- List containers and VMs that mount or passthrough /dev/dri: check container definitions, orchestration manifests, and cloud instance metadata.

- Patch (hours)

- Apply vendor/distribution kernel updates that include the upstream fix and reboot into the updated kernel. Because this is a kernel‑level change, a reboot is mandatory to activate the patch. Confirm the kernel package changelog or vendor advisory references the upstream commit or CVE mapping.

- Short‑term compensations if you cannot patch immediately

- Restrict access to DRM device nodes: change udev rules or group ownership so /dev/dri/* is accessible only to trusted users.

- Remove device passthrough and avoid mounting /dev/dri into untrusted containers.

- Consider blacklisting the amdgpu module on hosts where GPU acceleration is non‑essential (note: this disables GPU acceleration and may disrupt workflows).

- Detection and monitoring (ongoing)

- Create SIEM/aggregator rules for the kernel traces described earlier and preserve full kernel oops and serial console logs for vendor triage.

- Run representative GPU workloads for 48–72 hours post‑patch to ensure no regression or lingering oops traces.
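The inventory step of the checklist above can also be scripted. The following is a minimal sketch, assuming a Linux host where loaded modules are listed in /proc/modules. The function names are hypothetical, and "world accessible" here only means the read or write bit is set for "other", which is a coarse first filter and not a full access-control audit.

```python
import os
import stat


def module_loaded(name, proc_modules="/proc/modules"):
    """Return True if `name` is the first field of any line in proc_modules."""
    try:
        with open(proc_modules, encoding="utf-8") as fh:
            return any(line.split(" ", 1)[0] == name for line in fh)
    except OSError:
        return False


def dri_nodes(dri_dir="/dev/dri"):
    """Return (path, mode string, world_accessible) for entries in dri_dir."""
    results = []
    try:
        entries = sorted(os.scandir(dri_dir), key=lambda e: e.name)
    except OSError:
        return results
    for entry in entries:
        if entry.is_dir(follow_symlinks=False):
            continue  # skip subdirectories such as by-path/
        mode = entry.stat().st_mode
        world = bool(mode & (stat.S_IROTH | stat.S_IWOTH))
        results.append((entry.path, stat.filemode(mode), world))
    return results
```

Calling `module_loaded("amdgpu")` and `dri_nodes()` on each host gives a quick list of candidates. Container and VM passthrough still has to be checked in the orchestration manifests, as the checklist notes.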

Verification for operators and integrators

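- On a patched host, validate presence of the fix by searching the full kernel source tree that the running kernel was built from for the new counter or related changes. Distribution header packages typically omit driver‑private files, so an empty result from /usr/src/linux-headers-$(uname -r) alone does not prove the kernel is unpatched:

- git -C /path/to/kernel/tree grep -n "kfd_processes_count" -- drivers/gpu/drm/amd || git -C /path/to/kernel/tree log --grep="kfd_processes_count"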

- If you maintain custom vendor kernels, confirm the fix exists before accepting an update as complete; ask your vendor for the upstream commit ID or patch details if not explicitly documented in the package changelog.
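Where a source tree is available but git history is not (for example, an unpacked vendor tarball), the same check can be done with a short script. This is a sketch under the assumption that the counter keeps the name kfd_processes_count and lives somewhere under drivers/gpu/drm/amd. The function name is hypothetical.

```python
import os


def find_identifier(tree_root, needle="kfd_processes_count",
                    subdir=os.path.join("drivers", "gpu", "drm", "amd")):
    """Return (relative path, line number, stripped line) for hits in .c/.h files."""
    base = os.path.join(tree_root, subdir)
    hits = []
    for dirpath, _dirnames, filenames in os.walk(base):
        for fname in sorted(filenames):
            if not fname.endswith((".c", ".h")):
                continue
            path = os.path.join(dirpath, fname)
            with open(path, encoding="utf-8", errors="replace") as fh:
                for lineno, line in enumerate(fh, start=1):
                    if needle in line:
                        rel = os.path.relpath(path, tree_root)
                        hits.append((rel, lineno, line.strip()))
    return hits
```

An empty list means the identifier was not found in that tree. The returned line numbers make it easier to inspect where the decrement happens by hand.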

Severity and exploitability — a measured assessment

- Impact: primarily availability. The bug produces deterministic kernel oopses and driver crashes rather than an obvious confidentiality or integrity compromise. Multiple trackers emphasize that the exploit class is Denial‑of‑Service.

- Exploit vector: local — an unprivileged process with access to DRM device nodes, or an untrusted container/VM that has /dev/dri passed through, can reach the ioctl and VM init paths that exercise KFD and amdgpu code. Cloud and multi‑tenant hosts that expose GPUs to guests are particularly sensitive.

- Complexity: low to moderate. The conditions are reproducible in many configurations where the relevant code paths are reachable; the presence of a deterministic crash pattern makes weaponization as a DoS trivial once a vulnerable host is accessible.

- Public exploitation: as of the initial disclosures and tracker updates, there is no authoritative report of in‑the‑wild exploitation of CVE‑2025‑68174. However, absence of a public PoC should not be treated as lack of exploitable risk for local or shared systems.

Critical analysis — strengths of the upstream fix and residual risks

Strengths

- The upstream change is surgical: adding an atomic counter and moving decrement logic is a minimal, low‑risk patch surface compared with large re‑architectures. That makes it easier for maintainers and distribution packagers to backport into stable kernel branches without inducing regressions.

- The fix addresses the root cause (ownership/timing semantics across asynchronous teardown paths) rather than masking symptoms, which increases confidence it will close the observed crash windows across diverse systems.

Residual risks and operational caveats

- Vendor backport lag remains the primary exposure: embedded kernels, OEM images, Android kernels, and appliance vendors historically delay or omit backports, producing a long tail of vulnerable devices. Organizations should escalate to vendors for backports and track vendor advisories closely.

- Detection blind spots: kernel oops traces may be ephemeral in environments that do not collect serial console or persistent kernel logs. Hosts lacking full crash telemetry may fail to detect repeated exploitation. Implement log retention and centralized collection for kernel logs.

- Attack chaining: while the vulnerability itself is availability‑only, attackers frequently use DoS primitives to disrupt monitoring or failover behavior, creating opportunities to hide other actions. Treat deterministic crash bugs seriously in threat modeling for high‑value, multi‑tenant infrastructure.

Deployment checklist for system administrators

- Immediate (same day)

- Inventory all hosts with amdgpu loaded and list which ones expose /dev/dri to untrusted contexts.

- Near term (24–72 hours)

- Confirm vendor/distro advisories for kernel packages that reference CVE‑2025‑68174 or include the upstream fix; pin update windows.

- Schedule patch windows for affected hosts; apply kernel updates and reboot.

- For hosts that cannot be rebooted immediately, remove /dev/dri access from untrusted containers and limit GPU passthrough.

- Post‑deploy (1–3 days)

- Monitor kernel logs for oopses and run representative GPU workloads to prove stability. Preserve any pre‑patch oops traces for vendor triage.

For kernel integrators and vendors: guidance for backporting and verification

- The patch is deliberately small; backporting into stable branches should be feasible without wholesale redesign. Integrators should:

- Ensure the atomic counter lives per kfd_dev and that decrement occurs only after complete process teardown (kfd_process_destroy_pdds or equivalent finalizer).

- Validate the fix on representative hardware and stress with concurrent partition switches and artificially delayed workqueue teardown to reproduce previously seen oops traces and confirm they are absent post‑patch.

- Verification step: grep your kernel tree for kfd_processes_count and confirm the decrement site is in the final destroy/pdds path. If the symbol is absent, treat the kernel as unpatched until the commit is present or vendor confirms the backport.

Final assessment

CVE‑2025‑68174 is a classic kernel race/ownership bug with a straightforward operational impact: deterministic kernel oops and crashes in AMD GPU compute teardown sequences. The upstream remediation, adding an atomic per‑device process counter and moving decrement logic to a final teardown point, is an appropriate and low‑risk surgical fix that closes the race window. Operators should treat this as a high‑priority fix for multi‑tenant and GPU‑exposed infrastructure, and prioritize patching, limiting access to /dev/dri in the short term, and implementing robust kernel log collection for detection.

Practical action items remain clear: inventory hosts with AMD GPU drivers, apply vendor kernel updates that include the fix and reboot, and if patching is delayed, restrict device access and monitor kernel logs for the canonical traces. The long tail of vendor and OEM kernels that lag upstream is the most persistent residual risk and merits vendor engagement and advocacy for backports.
