The Linux kernel’s scheduler subsystem received a targeted fix this month for a subtle-but-real concurrency bug tracked as CVE‑2026‑23225: a logic error in sched/mmcid where code assumed a Concurrency ID (CID) was “CPU‑owned” during a mode transition, producing an out‑of‑bounds access (reported as a KASAN use‑after‑free). The issue is narrow in scope — it arises only during specific per‑CPU ↔ per‑task CID mode transitions under a tight concurrency window — but it touches a critical kernel resource and was patched upstream quickly; operators and distro maintainers should treat this as a stability/security hazard and plan timely updates.

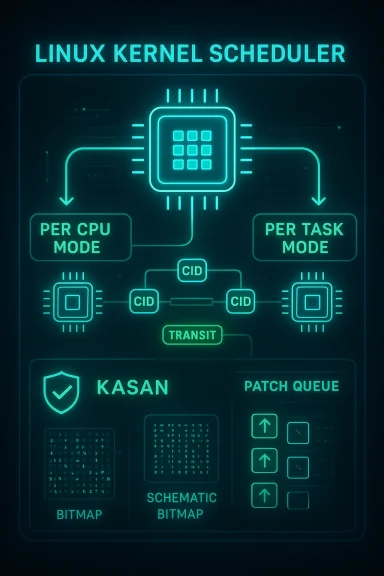

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background / Overview

Concurrency IDs (CIDs) are an allocation used by the kernel's scheduler/MM subsystem to help track per-memory-context ownership and to keep CID numbers compact across many CPUs and tasks. The kernel supports two ownership models: per-CPU CID ownership (the CPU holds a CID and tasks on that CPU borrow it) and per-task CID ownership (the task carries a CID directly). Mode changes between the two models are purposely lightweight but require careful coordination because threads, forks, and exits can happen concurrently with the "fixup" that transfers or releases CIDs.

CVE-2026-23225 arises when the code that handles the transition, specifically the sched/mmcid path, assumes a CID is CPU-owned even when it is only temporarily marked as in transit. That mistaken assumption causes a code path to clear a bit that is not set and attempt a bitmap clear on a value representing the TRANSIT flag; the pointer/index used becomes invalid, producing an out-of-bounds access detected by KASAN. Multiple vendor trackers and upstream mailing lists describe the sequence that leads to the bug and the rationale for the upstream fix.

Why this matters: subtle kernel concurrency, visible impact

This is not a simple off-by-one logic error in a userland library: it touches the coordination between the kernel's scheduler and memory-management code. The concrete consequences for a vulnerable system are:

- Kernel memory corruption or slab corruption in some sequences, usually observed as a KASAN report during testing.

- Hard-to-reproduce kernel oopses or panics in production workloads that exercise high concurrency (fork/exit/exec and scheduling churn).

- Potential denial-of-service from crashes or instability; in theory a carefully crafted local sequence could be used toward privilege escalation, though that would require precise timing and local code execution capabilities. Upstream discussion describes the failure scenario as an out‑of‑bounds access rather than a trivially weaponizable remote path.

Technical analysis: what went wrong, step by step

The model: per‑CPU vs per‑task CIDs

- In per‑CPU mode, a CPU owns a CID and tasks running on that CPU implicitly use it. Ownership is represented in per‑CPU storage and an ONCPU flag.

- In per‑task mode, tasks are given explicit CID values and ownership is stored on the task itself.
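A minimal user-space sketch of the idea, assuming one 32-bit word per slot in which the low bits hold the CID number and the high bits carry state flags. The names `CID_ONCPU`, `CID_TRANSIT`, `cid_number()` and `cid_classify()` are hypothetical, and the bit positions are chosen for illustration only; the kernel's own definitions live in its mm_cid headers and may differ. What it shows is that ownership can be read from the value's own flags rather than inferred from the global mode.

```c
#include <assert.h>
#include <stdint.h>

/*
 * Hypothetical encoding for illustration only; the kernel's real flag
 * values are defined in its mm_cid headers and may differ.
 * Low bits: the CID number. High bits: ownership/transition state.
 */
#define CID_ONCPU    (UINT32_C(1) << 30) /* CID currently owned by a CPU */
#define CID_TRANSIT  (UINT32_C(1) << 29) /* CID being handed over in a mode switch */
#define CID_NUM_MASK (CID_TRANSIT - 1)   /* bits 0..28 hold the CID number */

enum cid_state {
    CID_TASK_OWNED, /* no flag: plain CID, e.g. carried by a task in per-task mode */
    CID_CPU_OWNED,  /* ONCPU set: the CPU owns the CID */
    CID_IN_TRANSIT  /* TRANSIT set: temporary marker during a mode switch */
};

/* Strip all flag bits, leaving the plain CID number. */
static inline uint32_t cid_number(uint32_t v)
{
    return v & CID_NUM_MASK;
}

/* Classify a stored value from its own flag bits, never from the global mode. */
static inline enum cid_state cid_classify(uint32_t v)
{
    /* Model invariant: a value is never both CPU-owned and in transit. */
    assert(!((v & CID_ONCPU) && (v & CID_TRANSIT)));
    if (v & CID_ONCPU)
        return CID_CPU_OWNED;
    if (v & CID_TRANSIT)
        return CID_IN_TRANSIT;
    return CID_TASK_OWNED;
}
```

With this encoding, `cid_classify(5u | CID_TRANSIT)` reports `CID_IN_TRANSIT` even if the memory context was in per-CPU mode a moment earlier, which is exactly the distinction the failure sequence turns on.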

The failure sequence

- One CPU initiates a mode change to per‑CPU mode and sets MM_CID_TRANSIT on certain task CID entries as a temporary marker.

- The task whose CID was marked, still running in user space, exits before it is scheduled again, so it exits with the TRANSIT flag still set. Other threads of the process exit as well, and the memory context switches back to per-task mode.

- sched_mm_cid_remove_user() clears the TRANSIT bit on the task and drops the CID, but it does not touch the per-CPU storage. On its own that is correct: a CID is CPU-owned only when the ONCPU bit is set, and ONCPU and TRANSIT are never set together.

- Later, sched_mm_cid_exit() assumes the CID is CPU-owned because the prior mode was per-CPU, and calls mm_drop_cid_on_cpu(), which clears the (unset) ONCPU bit and then uses the remaining value as a bit index into the CID bitmap.

- Because the TRANSIT flag, a high bit, is still set in that value, the computed bit index is absurdly large, and clear_bit() writes far outside the bitmap: an out-of-bounds access that KASAN reported as a use-after-free in testing.
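The failure sequence, and the ownership check that the upstream fix adds to mm_drop_cid_on_cpu(), can be modelled in user-space C. This is a simplified sketch, not kernel code: the flag bits, the 64-entry bitmap and the functions `model_clear_bit()`, `drop_cid_on_cpu_assumed()` and `drop_cid_on_cpu_checked()` are all hypothetical. Unlike the real clear_bit(), `model_clear_bit()` bounds-checks its index, so the model reports the overflow instead of corrupting memory.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical flag bits for this model only; not the kernel's definitions. */
#define CID_ONCPU   (UINT32_C(1) << 30)
#define CID_TRANSIT (UINT32_C(1) << 29)
#define MAX_CIDS    64u

/* Hypothetical CID allocation bitmap: bit n set means CID n is in use. */
static uint64_t cid_bitmap;

/* Stand-in for clear_bit() that refuses out-of-range indexes and returns
 * false; the real clear_bit() does no such check. */
static bool model_clear_bit(uint32_t nr)
{
    if (nr >= MAX_CIDS)
        return false;
    cid_bitmap &= ~(UINT64_C(1) << nr);
    return true;
}

/* Buggy pattern: ownership inferred from the prior per-CPU mode. If the
 * value carries TRANSIT instead of ONCPU, clearing ONCPU changes nothing
 * and nr stays at least 2^29. Returns false on an out-of-range index. */
static bool drop_cid_on_cpu_assumed(uint32_t *pcpu_cid)
{
    uint32_t nr = *pcpu_cid & ~CID_ONCPU;
    *pcpu_cid = 0; /* release the per-CPU slot (simplified) */
    return model_clear_bit(nr);
}

/* Fixed pattern: validate ONCPU first and leave any other value untouched. */
static bool drop_cid_on_cpu_checked(uint32_t *pcpu_cid)
{
    if (!(*pcpu_cid & CID_ONCPU))
        return true; /* not CPU-owned: nothing to release on this path */
    assert(!(*pcpu_cid & CID_TRANSIT)); /* model invariant: flags are exclusive */
    uint32_t nr = *pcpu_cid & ~CID_ONCPU;
    *pcpu_cid = 0;
    return model_clear_bit(nr);
}
```

Passing `3u | CID_TRANSIT` to the assumed variant produces an index just above 2^29, which the bounds check rejects; the checked variant leaves that value alone, while a genuinely CPU-owned value such as `3u | CID_ONCPU` is released normally.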

The fix

The upstream patch stops inferring ownership from the prior global mode and instead validates that a CID is actually CPU-owned before performing CPU-specific drop operations. The change is surgical: mm_drop_cid_on_cpu() previously assumed ONCPU ownership; it now checks the ONCPU bit explicitly and refuses to touch per-CPU bitmaps unless ownership is confirmed. That simple validation prevents the clear_bit() call with the TRANSIT high-bit value and removes the out-of-bounds access. The patch series is part of a broader, staged rewrite of mm_cid management to improve robustness and to simplify mode transitions.

Upstream and vendor response

- The kernel mailing lists contain the patch thread, discussion, and follow‑ups from maintainers and the reporter; it progressed quickly through review and iterative fixes. The thread captures the exact diagnostic trace and the intended upstream remedy.

- LWN’s deeper analysis of the mm_cid rewrite gives useful background on why the subsystem is being refactored and why these particular concurrency windows are tricky; LWN frames the change as part of a larger effort to make CID management both faster and safer.

- Distribution trackers (Ubuntu, Debian, etc.) have recorded CVE‑2026‑23225 and are mapping the upstream commit into their package updates; maintainers are classifying priority (Ubuntu marked it “Medium”) and preparing kernel updates for affected series. Cloud vendors and downstream packagers are expected to push the patched kernels or backports according to their release policies.

Exploitability and risk — a pragmatic view

- Attack vector: Local only. The bug arises from a concurrency sequence involving fork/exit/scheduling; there is no network‑facing remote trigger described in the upstream material. This means an attacker requires local code execution/ability to run processes on the target host to trigger the condition.

- Difficulty: Non‑trivial. The sequence requires precise timing around mode transitions and scheduling. While that increases exploit complexity, it does not eliminate the risk — particularly in multi‑tenant or cloud environments where an attacker might already have a constrained execution capability (unprivileged containers, jailed workloads) and could try to escalate.

- Impact if triggered: Memory corruption and instability; at minimum a reproducible kernel oops or panic was observed under KASAN. In the worst case, careful exploitation of kernel memory corruption can lead to privilege escalation, but a public working exploit has not been reported in upstream discussions or vendor trackers at the time of writing. Operators should treat the bug as an availability and potential integrity risk until patched.

Patch status and what administrators should do now

What has changed upstream

- A set of focused patches validating CPU ownership and hardening mode‑switch paths has landed in the upstream kernel patch queue and propagated into the stable trees. The change is part of a broader mm_cid rewrite to reduce fragile concurrency windows.

Distribution and cloud vendor guidance

- Major distribution security trackers (Ubuntu, Debian) have CVE pages listing the problem and are scheduling or already shipping kernel package updates for affected series. Check your distribution’s security notices for the exact backport/fix versions applicable to your installation.

- For cloud images and vendor kernels (including vendor-tuned kernels), expect a separate advisory or image refresh from your cloud provider; a patched upstream kernel does not mean your provider's images are patched. Vendor kernel packages, such as Microsoft's Azure Linux kernels, are built and versioned separately from upstream, so check the vendor's own CVE-to-package mapping. Where you rely on vendor images, verify against the vendor's image or security bulletin before rolling out.

Immediate action checklist (prioritized)

- Identify affected hosts: inventory kernel versions across fleet; cross‑check with distro CVE pages and vendor advisories.

- Apply vendor kernel updates or backports as they become available. Where updates are unavailable, schedule an emergency backport or kernel rebuild that includes the upstream mmcid fixes.

- Reboot in a maintenance window after updating: a kernel package update takes effect only once the host boots the new kernel, and the per-CPU scheduling state involved here lives in the running kernel.

- For multi-tenant or cloud environments, patch hypervisors and other shared hosts before less critical VMs or containers. If you cannot patch hosts immediately, consider tenant isolation, reduced scheduling churn, or migrating workloads to patched hardware.

- Monitor for kernel oopses and KASAN reports (if enabled) and for unexpected restarts; collect and preserve logs for incident response in case of instability.
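For the inventory step, a host-side helper can read the running kernel's release string with POSIX `uname()` and compare its leading major.minor.patch numbers against a minimum. This is a sketch under stated assumptions: the helper names are hypothetical, and the threshold is a placeholder to be taken from your distribution's advisory, not a known fixed version for this CVE. Distribution kernels backport fixes without changing the upstream version number, so a version comparison is only a first filter; the distribution's fixed package version is authoritative.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdio.h>
#include <sys/utsname.h>

/* Parse the leading "major.minor[.patch]" of a release string such as
 * "6.8.0-45-generic"; distribution suffixes after the numbers are ignored. */
static bool parse_kernel_release(const char *release, int v[3])
{
    v[0] = v[1] = v[2] = 0;
    return sscanf(release, "%d.%d.%d", &v[0], &v[1], &v[2]) >= 2;
}

/* True if release >= maj.min.patch. The threshold is a placeholder to be
 * filled in from your distribution's advisory. */
static bool kernel_release_at_least(const char *release, int maj, int min, int patch)
{
    int v[3];
    assert(release != NULL);
    if (!parse_kernel_release(release, v))
        return false;
    if (v[0] != maj)
        return v[0] > maj;
    if (v[1] != min)
        return v[1] > min;
    return v[2] >= patch;
}

/* Copy the running kernel's release string (what `uname -r` prints).
 * Returns false if uname() fails or the buffer is too small. */
static bool running_kernel_release(char *buf, size_t len)
{
    struct utsname u;
    if (uname(&u) != 0)
        return false;
    return snprintf(buf, len, "%s", u.release) < (int)len;
}
```

For example, `kernel_release_at_least("6.8.0-45-generic", 6, 8, 0)` returns true: only the leading numeric fields are compared, so suffixes such as `-45-generic` are ignored.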

Hardening and mitigation beyond patching

Patching is the definitive fix; however, when immediate patching is not possible, consider the following mitigations:

- Reduce risky concurrency patterns: workloads that frequently fork/exit in tight loops or create heavy scheduling churn are more likely to trigger edge cases in mm_cid code. Reconfigure or throttle such workloads where feasible.

- Limit local code execution paths: tighten container isolation, use seccomp/BPF filters, and restrict who can run compilation or scheduling‑heavy tests on multi‑tenant hosts. These are general hardening best practices that reduce the likelihood of local proof‑of‑concept exploit sequences.

- Turn on and retain KASAN/fuzzer test results in pre‑production if you have the capacity; continuous kernel testing catches subtle concurrency bugs early. Upstream regression testing that surfaced this issue relied on KASAN and fuzzing reports.

Detection: what to look for in logs and monitoring

- Kernel oops traces that include mm_cid, mm_drop_cid_on_cpu, sched_mm_cid_exit, or clear_bit calls with odd indexes. These symbols are mentioned in the upstream diagnostics and are strong indicators of the failure path.

- KASAN output lines and slab corruption messages in dmesg or crash dumps. KASAN often reports the initial symptom for such out‑of‑bounds accesses.

- Unexpected kernel panics or reboots on hosts running workloads that exercise heavy fork/exec/exit patterns (compilation farms, CI runners, container builders). Correlate timestamps with user workloads to identify reproducible triggers.
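A coarse filter for the indicators listed above might look like the following sketch, which reads a text stream (such as `dmesg` output or a saved console log) and echoes lines that contain the named symbols or a KASAN report marker. The function names are hypothetical, and substring matching is a heuristic: a match flags a line for human review rather than confirming this specific bug.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdio.h>
#include <string.h>

/* Substrings taken from the indicators above; "mm_cid" also matches other
 * mm_cid helpers (including sched_mm_cid_exit), and "KASAN:" matches the
 * header of a KASAN report. */
static const char *const mmcid_indicators[] = {
    "mm_drop_cid_on_cpu",
    "mm_cid",
    "KASAN:",
};

static bool line_has_mmcid_indicator(const char *line)
{
    size_t n = sizeof mmcid_indicators / sizeof mmcid_indicators[0];
    for (size_t i = 0; i < n; i++) {
        if (strstr(line, mmcid_indicators[i]) != NULL)
            return true;
    }
    return false;
}

/* Echo matching lines from `in` to `out` and return how many matched.
 * Lines longer than the buffer are examined in 1023-byte pieces. */
static size_t scan_kernel_log(FILE *in, FILE *out)
{
    char line[1024];
    size_t hits = 0;
    assert(in != NULL && out != NULL);
    while (fgets(line, sizeof line, in) != NULL) {
        if (line_has_mmcid_indicator(line)) {
            fputs(line, out);
            hits++;
        }
    }
    return hits;
}
```

A small `main()` that calls `scan_kernel_log(stdin, stdout)` turns this into a pipe filter (for instance `dmesg | ./mmcid-scan`, where the binary name is only illustrative); the hit count can feed an alerting threshold.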

Why timely patching matters for cloud and enterprise environments

CVE-2026-23225 is a classic example of a timing/race bug whose exploitability is constrained but whose impact is outsized when it hits production: a single kernel oops can crash a hypervisor host or a database node, causing downtime and potential corruption. For multi-tenant cloud providers, even a locally exploitable timing bug can be abused when an attacker already has a foothold in a co-resident container or VM. For that reason, most responsible-disclosure and patching models prioritize such kernel fixes for rapid backport and distribution. Operators must maintain an up-to-date inventory of kernel versions in use, and treat kernel patching as a first-class security operation. Vendor advisories and distro trackers are already mapping the problem; follow those pages for the exact package names and CVE-to-package mappings for your environment.

Practical example: how a small cluster owner should respond (stepwise)

- Query your fleet for installed kernel packages and running kernel versions. Export the list and match it against your distribution's CVE notices (the Ubuntu and Debian trackers map each CVE to affected packages and fixed versions).

- For each affected host, schedule package maintenance and a reboot. If hosts are part of a high-availability set, perform rolling upgrades; remove nodes from load balancers before reboot.

- If you run vendor kernels (cloud images), check your image catalog and vendor bulletins — do not assume upstream patches are present in the vendor image until explicitly listed. Request or apply vendor‑specific images that include the upstream fix where necessary.

- After patching, confirm that each host has booted the updated kernel, re-run representative fork/exec-heavy workloads, and check that the earlier oops traces no longer appear. Keep crash logs for at least the next several weeks to spot latent instability.

What the patch teaches engineers about kernel concurrency design

- Small, well‑motivated invariants (like “a CID is CPU‑owned only when ONCPU is set”) can be subtle and brittle across mode transitions; code that bridges ownership models must check provenance, not just global mode flags. The upstream fix replaces assumptions with explicit validation.

- Two‑phase transitions that use transient flags (like TRANSIT) help avoid allocation storms, but they increase reasoning complexity. The kernel team is concurrently refactoring the mm_cid subsystem to reduce these fragile windows — an important maintenance lesson for long‑lived concurrent subsystems.

- Instrumentation (KASAN, syzbot reports, and heavy testing) remains essential: this issue was discovered and triaged thanks to sanitizers and community fuzzing tools. Organizations that run kernel CI with sanitizers gain early detection advantages.

Final assessment and recommendations

CVE-2026-23225 is a real kernel bug with a clear, reproducible failure mode under specific concurrency conditions. The fix is narrowly scoped and upstreamed, but operators must still act: patch, reboot hosts, and validate stability. The risk profile is largely local-only and exploit difficulty is non-trivial, yet the potential consequences (kernel corruption, crashes, possible privilege escalation in a worst-case exploit) make prompt updates critical for production, multi-tenant, and cloud environments.

- Apply vendor kernel updates as soon as they are available.

- Prioritize hypervisor and multi‑tenant hosts for patching and reboot.

- Harden local execution pathways and monitor kernel logs for mm_cid‑related oopses until updates are deployed.

Conclusion

CVE‑2026‑23225 is an instructive, surgical kernel fix: small codepath, real consequence. Upstream maintainers have acted decisively to validate ownership before manipulating CID bookkeeping, and vendors are mapping the fix into distribution kernels. For operators the message is straightforward — inventory your kernels, apply the patched packages or backports, and reboot hosts on a prioritized schedule. Keep KASAN and kernel diagnostic data in preproduction to catch analogous edge cases earlier, and preserve logs if you encounter instability during the rollout. The bug itself is not a broad remote attack surface, but in modern multi‑tenant and cloud environments even local timing bugs can be material; patching remains the safest course.
