The Linux kernel’s md/raid5 code contained a subtle but dangerous integer‑overflow bug in the function raid5_cache_count() that was tracked as CVE‑2024‑23307 — a defect that can be forced by concurrent modifications of RAID stripe‑count variables and that may lead to a sustained or persistent loss of availability on affected systems.

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background / Overview

The Linux MD (multiple device) subsystem provides software RAID functionality, and the RAID5 implementation maintains internal accounting for stripe caches used to accelerate IO. The vulnerable code path lives in drivers/md/raid5.c inside the kernel and revolves around arithmetic that computes how many cache stripes are currently available. Under normal operation this arithmetic is harmless, but when concurrent code updates the underlying configuration fields the subtraction can underflow or wrap around, producing an unexpectedly large value and downstream misbehavior. This class of bug is an integer overflow / wraparound (CWE‑190) and is commonly triggered by race conditions or insufficiently-protected reads of shared state.

Multiple vulnerability trackers and Linux distributors recorded the issue in January 2024, and vendors subsequently published kernel updates and advisories that describe the flaw as an availability‑first risk: an attacker or misbehaving local actor can cause crashes, hangs, or resource exhaustion by coercing the arithmetic into producing unsafe values. The vulnerability was published to the public CVE records as CVE‑2024‑23307 and appears in downstream vendor advisories and distribution security trackers.

What the bug is — technical anatomy

The vulnerable function

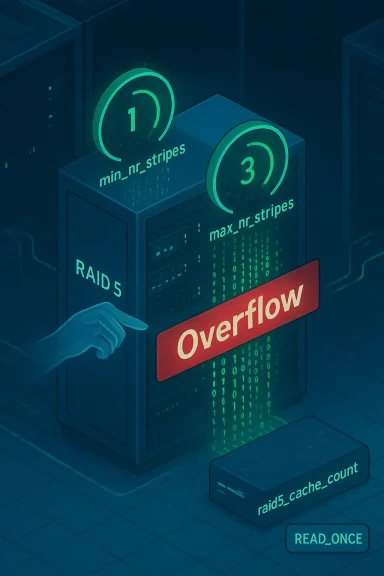

At the heart of CVE‑2024‑23307 is the function raid5_cache_count(struct shrinker *shrink, struct shrink_control *sc) (or a similarly named counting helper, depending on kernel version), which returns the number of cache stripes available by subtracting the configured minimum stripe count from the configured maximum stripe count. The function reads two shared fields: conf->max_nr_stripes and conf->min_nr_stripes. If these reads are not stable (that is, another thread can update the fields concurrently), a transient condition can make the subtraction produce a negative result which, when interpreted as an unsigned value or otherwise used in size calculations, becomes a large positive number. That large value can then be used to allocate or index internal tables incorrectly, producing memory corruption, excessive allocations, or kernel panics.

How concurrency turns arithmetic into a vulnerability
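The signed-to-unsigned wrap at the heart of the bug can be illustrated in plain userspace C. The sketch below is only a model: struct stripe_conf, unchecked_count() and print_wrap_demo() are hypothetical names, not kernel code, and it assumes that the two fields are signed integers whose difference is returned as an unsigned long.

```c
#include <assert.h>
#include <stdio.h>

/* Hypothetical stand-in for the two fields named in the text;
 * this is not the kernel's real configuration structure. */
struct stripe_conf {
    int max_nr_stripes;
    int min_nr_stripes;
};

/* Subtracts without any ordering check. The signed difference is
 * converted to unsigned long on return, so a negative difference
 * wraps to a value close to the top of the unsigned range. */
unsigned long unchecked_count(const struct stripe_conf *conf)
{
    assert(conf != NULL);
    return (unsigned long)(conf->max_nr_stripes - conf->min_nr_stripes);
}

/* Prints the result for a sane pair and for an inverted pair. */
void print_wrap_demo(void)
{
    struct stripe_conf sane = { .max_nr_stripes = 512, .min_nr_stripes = 256 };
    struct stripe_conf inverted = { .max_nr_stripes = 256, .min_nr_stripes = 512 };

    printf("sane:     %lu\n", unchecked_count(&sane));
    printf("inverted: %lu\n", unchecked_count(&inverted));
}
```

For the sane pair the function returns 256. For the inverted pair the difference of -256 wraps to ULONG_MAX - 255, which is the kind of unexpectedly large value the text refers to.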

The specific failure mode reported by researchers and maintainers is an atomicity violation: the code performs multiple reads from shared variables but does not guarantee that the values remain consistent across those reads. Separately, a different function (for example raid5_set_cache_size()) may update conf->min_nr_stripes and conf->max_nr_stripes in multiple steps. If raid5_cache_count() samples the pair of values while they are in an intermediate state, the check that protects against negative results can become ineffective. The kernel’s shrinker machinery and stripe cache code expect a sane non-negative count; when the arithmetic wraps, the rest of the subsystem can be driven into pathological states. Kernel maintainers accepted this interpretation and proposed fixes that stabilize reads.

The upstream fix: principle and implementation

The accepted upstream remediation is straightforward and surgical: make the reads stable inside raid5_cache_count() so that the min/max values cannot change between the test and the subtraction. The patch introduces local copies (or uses READ_ONCE semantics) to guarantee atomic reads of the two configuration fields and then performs the comparison and subtraction on those local values. This eliminates the race window and prevents the arithmetic underflow/wrap that could otherwise be forced. The patch was discussed and submitted to multiple kernel stable trees and to distribution backports.

Impact and exploitability

What an attacker can do

- The immediate, realistic impact described by distributors is loss of availability — the kernel can panic, the md subsystem can abort operations, or system resources can be exhausted, producing a sustained or persistent denial of service until the kernel or the affected service is restarted. Several vendor advisories explicitly classify availability as the primary consequence.

- Some vendor writeups and secondary trackers mention the possibility of more severe outcomes — for instance, memory corruption that leads to privilege escalation or arbitrary code execution — but those outcomes depend on the precise code path used by the malformed arithmetic value and are not universally reported as confirmed by distributors. When downstream providers describe potential code‑execution scenarios they are typically conservative risk models rather than confirmed exploitation paths. Where exploitability beyond DoS is asserted, that claim should be treated as theoretical unless a proof‑of‑concept or in‑the‑wild report is provided.

Required access and complexity

- Most authoritative trackers and distribution advisories describe the vulnerability as local in nature and requiring elevated privileges (or at least the ability to control local RAID device configuration). In other words, a remote, unauthenticated attacker typically cannot trigger this vulnerability unless they can run privileged code on the target system or otherwise control md/raid5 configuration operations. That makes the immediate exploit vector local or limited to environments that permit untrusted users to manipulate RAID settings.

- Attack complexity is generally rated low to moderate by various sources for the DoS outcome (coaxing the arithmetic into overflow simply requires inducing the right sequence of concurrent updates), while more advanced exploitation (achieving code execution) would be harder and more dependent on memory layout and compilation choices. Again, those more advanced outcomes are theoretical in published disclosures.

Scoring and why vendors disagree

Different vendors and aggregators assign different severity scores. For example, some trackers list a CVSS v3.1 score of 4.4 (medium) while others aggregate higher scores (some present higher impact models leading to 7.x values). The divergence comes from differing assumptions about how easy it is to exploit the bug remotely, whether privilege escalation or code execution is plausible in normal configurations, and whether availability impacts are counted as partial or total. When evaluating your exposure, rely on your distribution vendor's advisory and the kernel version you run rather than a single universal score.

Affected versions and vendor responses

Kernel series and ranges

Publicly available CVE trackers and distribution advisories indicate that the defect affects a broad range of upstream kernel versions, with specific CPE mappings and version ranges varying between trackers. Some aggregated sources list affected ranges that include kernel series from 4.x through several 6.x releases prior to corresponding stable backports; other trackers indicate more narrow windows for particular branches. Distributors that ship stable or long‑term kernels typically published patched packages or backports rather than asking administrators to upgrade to an upstream release. Because version coverage varies by vendor, operators must check their distribution advisories for exact applicability.

Distribution advisories and patches

- Oracle, Amazon and major vendors: Oracle Linux and Amazon Linux published tracking entries and advisories that list CVE‑2024‑23307 and provide versioned errata and kernel package updates. These advisories are the primary place to obtain patched kernel builds for those distributions.

- Red Hat: Red Hat included the defect in several RHSA advisories (and their derived product advisories), backporting fixes into RHEL kernel trees and shipping updated kernel packages. Tenable/Nessus and other scanners reflect these vendor bulletins in vulnerability checks.

- Upstream kernel: The upstream patch to stabilize the reads in raid5_cache_count() was discussed and submitted to kernel maintainers and the md/raid5 maintainers, and patches were made available for stable kernel branches. The patch name and diff are available in kernel patch archives and mailing list posts detailing the introduction of local variables or READ_ONCE usage to guarantee stable reads.

Distribution‑specific notes

Because many enterprise distributions ship kernels with long maintenance lifecycles and apply security backports, the easiest operational path is to install the vendor-provided security update for your distribution and kernel series. The precise package name, erratum number, and install commands differ among CentOS/RHEL, Ubuntu, Debian, SUSE, Oracle Linux and cloud provider images; consult the vendor advisory for step‑by‑step instructions. Oracle and Amazon published vendor pages that enumerate errata and the kernel builds that resolve the CVE.

Patch analysis: what the fix changed and why it’s safe

The accepted fix for the defect follows a minimal and conservative approach:

- Introduce local copies or READ_ONCE-wrapped reads of conf->max_nr_stripes and conf->min_nr_stripes inside raid5_cache_count() so that each field is read exactly once and the function’s check and subtraction operate on the same snapshot of the two values.

- Perform the comparison and subtraction on those stable local values and return the computed difference (or zero when an unlikely inverted relation is observed).
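The two steps above can be contrasted with the unprotected pattern in a small userspace model. Everything here is illustrative: struct stripe_conf, count_racy(), count_snapshot(), the READ_ONCE_INT macro and the raise_min_first() writer are hypothetical names, and the concurrent update is simulated deterministically with a callback rather than a real second thread. It is a sketch of the technique, not the upstream patch.

```c
#include <assert.h>
#include <stdio.h>

/* Hypothetical model of the two shared fields. */
struct stripe_conf {
    int max_nr_stripes;
    int min_nr_stripes;
};

/* Userspace approximation of a read-exactly-once access (assumption:
 * a volatile read is enough for this single-threaded model). */
#define READ_ONCE_INT(x) (*(const volatile int *)&(x))

/* Callback that stands in for another thread updating the fields. */
typedef void (*interleave_fn)(struct stripe_conf *conf);

/* Unprotected pattern: the fields are read again after the check,
 * so an update that lands in between defeats the check. */
unsigned long count_racy(struct stripe_conf *conf, interleave_fn writer)
{
    assert(conf != NULL);
    if (conf->max_nr_stripes < conf->min_nr_stripes)
        return 0;
    if (writer)
        writer(conf);   /* simulated concurrent update */
    return (unsigned long)(conf->max_nr_stripes - conf->min_nr_stripes);
}

/* Snapshot pattern: read each field once, then check and subtract
 * using only the local copies. */
unsigned long count_snapshot(struct stripe_conf *conf, interleave_fn writer)
{
    int max_stripes, min_stripes;

    assert(conf != NULL);
    max_stripes = READ_ONCE_INT(conf->max_nr_stripes);
    min_stripes = READ_ONCE_INT(conf->min_nr_stripes);
    if (writer)
        writer(conf);   /* the update no longer affects the result */
    if (max_stripes < min_stripes)
        return 0;
    return (unsigned long)(max_stripes - min_stripes);
}

/* Hypothetical writer: raises the minimum before the maximum, which
 * leaves min > max until the second step completes. */
void raise_min_first(struct stripe_conf *conf)
{
    conf->min_nr_stripes = 1024;
}
```

With both fields starting at 256 and raise_min_first() passed as the writer, count_racy() passes its check and then subtracts the updated minimum, returning a wrapped value, while count_snapshot() returns 0. Reading each field once does not make the pair atomic as a unit: a snapshot can still capture an inverted pair, which is why the comparison on the local copies remains necessary.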

Caveat: while the fix prevents the immediate arithmetic underflow that opened the path to resource corruption, maintainers and distributors still emphasize testing in your environment — especially for storage stacks and cluster environments where RAID behavior is critical — because subtle timing and concurrency characteristics can vary across architectures and workload profiles.

Detection, mitigation, and remediation steps

Immediate detection and triage (what to check now)

- Identify running kernel version(s) across your estate and map them to vendor advisories. Use your inventory system or configuration management tool to produce a kernel version report for all hosts that might run md/raid arrays. Many trackers include precise CPEs and version ranges; check the distribution advisory for backport status.

- Determine whether md/raid5 is configured or in use. Systems without software RAID or without the md/raid5 module loaded are not exposed. Use lsmod (on current kernels the RAID 4/5/6 personality is typically built as the raid456 module), the contents of /proc/mdstat, or your orchestration manager to detect active arrays.

- If you run shared, multi‑tenant, containerized or cloud images where unprivileged tenants may be able to create or manipulate RAID devices, treat exposure as higher risk and prioritize patching. The vulnerability is primarily local, but multi‑tenant setups increase the attack surface.
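The check for active arrays in the list above can be scripted. The sketch below counts arrays whose level is raid4, raid5 or raid6 in /proc/mdstat-style text. It assumes the usual layout in which each array line starts with its md device name and names the level as a separate word, and it includes raid4 and raid6 on the assumption that those levels are served by the same driver source as raid5. The function names are hypothetical.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Counts array lines such as "md0 : active raid5 sdb1[0] sdc1[1]".
 * The "Personalities" header and indented continuation lines are
 * skipped because they do not start with "md". */
int count_raid456_arrays(const char *mdstat)
{
    int count = 0;
    const char *line = mdstat;

    assert(mdstat != NULL);
    while (*line != '\0') {
        const char *end = strchr(line, '\n');
        size_t len = end ? (size_t)(end - line) : strlen(line);
        char buf[512];

        if (len >= sizeof(buf))
            len = sizeof(buf) - 1;      /* truncate very long lines */
        memcpy(buf, line, len);
        buf[len] = '\0';

        if (strncmp(buf, "md", 2) == 0 &&
            (strstr(buf, " raid4 ") || strstr(buf, " raid5 ") ||
             strstr(buf, " raid6 ")))
            count++;

        if (!end)
            break;
        line = end + 1;
    }
    return count;
}

/* Reads a file (normally /proc/mdstat) and counts matching arrays.
 * Returns -1 if the file cannot be opened. */
int count_raid456_in_file(const char *path)
{
    char text[8192];
    size_t n;
    FILE *f = fopen(path, "r");

    if (!f)
        return -1;
    n = fread(text, 1, sizeof(text) - 1, f);
    fclose(f);
    text[n] = '\0';
    return count_raid456_arrays(text);
}
```

A call such as count_raid456_in_file("/proc/mdstat") returns -1 when the file does not exist, which typically means the md driver is not present at all; a result of 0 means md is present but no matching array was listed.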

Short‑term mitigations (when you cannot immediately patch)

- If md/raid5 functionality is not required, consider unloading the raid5 module or disabling the md subsystem at boot in coordinator-managed images until patched. This is a blunt but effective mitigation for hosts where software RAID is not essential. Note that the module cannot be removed while arrays that depend on it are running, and stopping those arrays disrupts service; plan carefully.

- Harden access controls so that unprivileged users cannot perform operations that alter RAID configuration. The vulnerability typically requires local configuration control; restricting who can create or modify md arrays reduces exploit scope. Use standard system hardening and least-privilege practices.

Long‑term remediation (the recommended fix)

- Apply the vendor-provided kernel security update for your distribution and kernel series. This is the canonical remediation: vendors shipped patched kernel packages or backported the upstream fix.

- If your environment requires custom kernels, incorporate the upstream patch (the READ_ONCE/local copy change) and rebuild with your configuration. Test thoroughly in staging before promoting to production.

- After patching, validate RAID stability and run a brief workload test for md/raid5 I/O patterns. Because the bug is concurrency-related, stress tests that exercise concurrent configuration and shrinker activity are valuable to confirm the fix in your specific build and hardware.
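A stress test of the kind mentioned in the last item needs something that repeatedly changes the cache configuration while I/O and memory pressure run elsewhere. The sketch below shows only that configuration half, under stated assumptions: that the array exposes a writable stripe_cache_size attribute under sysfs (for example /sys/block/md0/md/stripe_cache_size, where md0 is a placeholder), that writing it exercises the cache-resizing path, and that it is run as root on a disposable test system. The function names are hypothetical.

```c
#include <assert.h>
#include <stdio.h>

/* Writes one unsigned value, followed by a newline, to path.
 * Returns 0 on success and -1 on any error. */
int write_uint(const char *path, unsigned int value)
{
    FILE *f;
    int ok;

    assert(path != NULL);
    f = fopen(path, "w");
    if (!f)
        return -1;
    ok = fprintf(f, "%u\n", value) > 0;
    if (fclose(f) != 0)
        ok = 0;
    return ok ? 0 : -1;
}

/* Alternates between low and high for the given number of rounds,
 * starting with low, and stops at the first failed write.
 * Returns the number of successful writes. */
int toggle_cache_size(const char *path, unsigned int low,
                      unsigned int high, int rounds)
{
    int done = 0;

    for (int r = 0; r < rounds; r++) {
        unsigned int value = (r % 2 == 0) ? low : high;

        if (write_uint(path, value) != 0)
            break;
        done++;
    }
    return done;
}
```

A call such as toggle_cache_size(path, 256, 1024, 1000) would be run alongside a separate I/O and memory-pressure workload; the values 256 and 1024 are arbitrary examples, and a return value lower than the requested round count indicates that a write was rejected.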

Risk analysis and operational perspective

Why this matters to enterprise operators

Software RAID is often used in storage servers, hyperconverged appliances, and cloud images. A bug that can induce a kernel panic, hang md synchronization, or corrupt internal bookkeeping can translate directly into service outages, data unavailability, and lengthy recoveries. For storage‑heavy infrastructure such as backups, databases, file services, and clustered filesystems, even a transient kernel panic during maintenance windows can create cascading operational impact. Vendor advisories therefore emphasize availability as the priority risk for this CVE.

Practical exposure model

- Exposed: systems that run the vulnerable kernel version(s) and that have md/raid5 configured or allow local actors (e.g., admins, privileged containers) to change RAID configuration. These must be patched urgently.

- Low exposure: systems that do not use software RAID, run a patched kernel, or have strict separation of privileges.

- Cloud providers: many cloud OS images and vendor kernel builds were updated by distributors; however, the presence of the vulnerable code depends on how the kernel was configured and whether md/raid5 was compiled in. Customers should consult their cloud vendor’s operating system image advisories.

The “availability first” judgment

Multiple trackers and vendor advisories classify the impact primarily as availability (denial of service) rather than guaranteed privilege escalation. That is an important nuance: while worst‑case theoretical impacts include memory corruption and arbitrary code execution, real-world operational consequences that have been documented and emphasized involve service interruption and crashes. Treat the highest‑likelihood outcome (DoS/hang/panic) as the primary operational threat and plan patch rollouts accordingly.

Recommendations: prioritized checklist

- Patch now: deploy the vendor kernel updates that include the CVE‑2024‑23307 fix to all affected hosts, prioritizing storage servers, hyperconverged nodes and multi‑tenant hosts. Use your standard patch windows and rollback plans; test on a small subset first.

- Inventory and exposure scoping: run a rapid inventory to list which hosts run md/raid5 and which kernel versions are in use. Use that to prioritize the remediation batch.

- Harden access: restrict who can manipulate RAID configuration and enforce least privilege. Where practical, disallow untrusted workloads from loading kernel modules or creating block devices.

- Validate and test: after applying updates, validate md/raid5 operation with workload tests and recovery drills to ensure arrays behave normally and no regressions are introduced by the patch.

- Monitor advisories: track vendor security advisories for additional fixes or follow‑ups; some distributions backport fixes and later ship cumulative updates. Use your patch management tooling to capture the exact errata IDs relevant to your environments.

Uncertainties, caveats, and what we did not find

- There is no broadly published, credible proof‑of‑concept showing remote, unauthenticated exploitation that leads to privilege escalation in default configurations. Most evidence points to local access requirements and availability impacts. Published trackers and vendor advisories treat more advanced exploitation outcomes as possible but not demonstrated. Operators should therefore treat DoS as the realistic threat and treat other impacts as speculative unless new proof emerges.

- Score discrepancies: different aggregators produced different CVSS values (some 4.4, some 7.x). This is the result of differing assumptions; use your vendor’s advisory and impact model rather than a single global score.

- Hardware and configuration differences matter: concurrency-related bugs can be more or less likely to manifest depending on kernel configuration options, load patterns, and scheduling/timing characteristics on various CPU architectures. The upstream fix is small and low‑risk, and it addresses the root cause rather than papering over symptoms.
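The gap between the 4.4 and 7.x figures noted in the list above is easier to see when the CVSS v3.1 base-score arithmetic is written out. The sketch below implements the scope-unchanged formula from the CVSS v3.1 specification and is meant to be applied to two vectors chosen purely for illustration: they are assumptions, not the vectors any particular tracker published, and the function names are hypothetical.

```c
#include <assert.h>
#include <stdio.h>

/* Metric weights from the CVSS v3.1 specification (scope unchanged). */
#define AV_LOCAL  0.55
#define AC_LOW    0.77
#define PR_LOW    0.62
#define PR_HIGH   0.27
#define UI_NONE   0.85
#define CIA_HIGH  0.56
#define CIA_NONE  0.0

/* CVSS v3.1 Roundup: smallest value with one decimal place that is
 * greater than or equal to x, computed on scaled integers. */
double cvss_roundup(double x)
{
    long scaled = (long)(x * 100000.0 + 0.5);

    if (scaled % 10000 == 0)
        return (double)scaled / 100000.0;
    return (double)(scaled / 10000 + 1) / 10.0;
}

/* Base score for vectors with Scope: Unchanged only. */
double cvss31_base_unchanged(double av, double ac, double pr, double ui,
                             double c, double i, double a)
{
    double iss, impact, exploitability, sum;

    assert(c >= 0.0 && c <= 1.0 && i >= 0.0 && i <= 1.0 &&
           a >= 0.0 && a <= 1.0);
    iss = 1.0 - (1.0 - c) * (1.0 - i) * (1.0 - a);
    impact = 6.42 * iss;
    exploitability = 8.22 * av * ac * pr * ui;
    if (impact <= 0.0)
        return 0.0;
    sum = impact + exploitability;
    if (sum > 10.0)
        sum = 10.0;
    return cvss_roundup(sum);
}

/* Prints the scores for the two illustrative (assumed) vectors. */
void print_score_demo(void)
{
    /* Assumed: AV:L/AC:L/PR:H/UI:N/S:U/C:N/I:N/A:H */
    printf("availability only, high privileges: %.1f\n",
           cvss31_base_unchanged(AV_LOCAL, AC_LOW, PR_HIGH, UI_NONE,
                                 CIA_NONE, CIA_NONE, CIA_HIGH));
    /* Assumed: AV:L/AC:L/PR:L/UI:N/S:U/C:H/I:H/A:H */
    printf("full impact, low privileges:        %.1f\n",
           cvss31_base_unchanged(AV_LOCAL, AC_LOW, PR_LOW, UI_NONE,
                                 CIA_HIGH, CIA_HIGH, CIA_HIGH));
}
```

Under these assumptions the first vector (high privileges required, availability impact only) scores 4.4 and the second (low privileges required, high impact on confidentiality, integrity and availability) scores 7.8, so the whole difference comes from the privilege and impact assumptions rather than from the defect itself.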

Conclusion

CVE‑2024‑23307 is a representative example of a concurrency‑driven integer‑overflow bug in low‑level kernel storage code: the vulnerability is real, it can cause severe availability problems for systems that use md/raid5, and it was addressed upstream by stabilizing reads inside raid5_cache_count(). The remediation path is straightforward (apply vendor kernel updates or backports), but the operational consequences of a delayed patch can be significant for storage‑dependent services.

Operators should prioritize patching storage hosts and multi‑tenant images, validate their RAID configurations, and enforce least‑privilege controls around block device and RAID management. The fix is small and non‑invasive, but the cost of ignoring the vulnerability can be downtime at exactly the worst possible moment. For resilience and operational security, install the distributed vendor updates, test RAID behavior post‑patch, and continue to monitor vendor advisories for any related follow‑ups.
