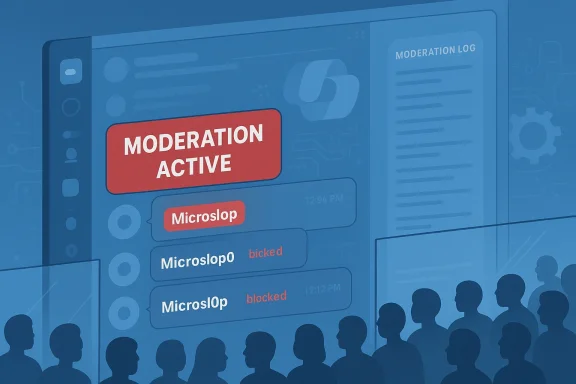

Microsoft’s official Copilot Discord server was quietly enforcing a one-word ban — “Microslop” — and when the community pushed back by testing and evading the filter, moderators effectively locked large parts of the server to stop the escalation, leaving members unable to read or post while the incident played out publicly. (windowslatest.com)

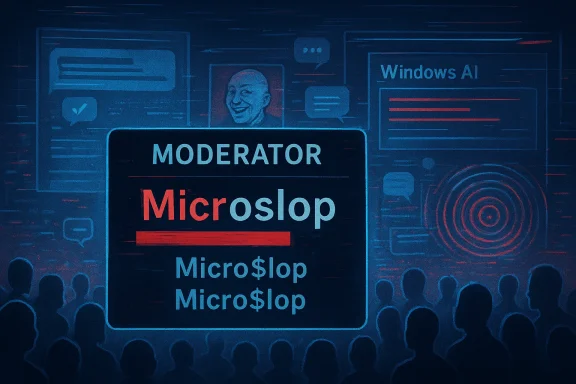

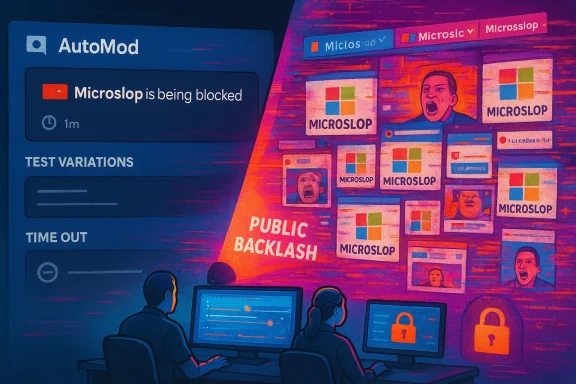

Source: Windows Latest Microsoft gets tired of “Microslop,” bans the word on its Discord, then locks the server after backlash

Background / Overview

The word “Microslop” is shorthand for a much larger user revolt: a scornful portmanteau that fuses Microsoft’s name with “slop,” the widely used tech term for low‑quality AI output. The meme first spread in earnest after Microsoft leadership made public comments about “slop vs. sophistication,” and it quickly metastasized into browser extensions, protest posts, and repeated mentions across social platforms. Mainstream tech outlets and specialist sites documented the trend, noting how the nickname became a proxy for frustration with an aggressive AI rollout and perceived quality regressions.

Community reaction to the Copilot push has not been limited to jokes. Over the past year, Windows users and IT pros have logged reliability complaints and reports of AI features being unexpectedly re-enabled, and they have pointed to an optics problem: prominent AI surfaces launched into an OS that many users felt still had fundamental stability issues. That mix of grievance and ridicule created fertile ground for a single, shareable insult to stick, and for it to spread into places brand teams would prefer to keep constructive.

What happened in the Copilot Discord (what we can verify)

Windows Latest reported that messages containing the exact word “Microslop” were being blocked by the Copilot Discord server’s moderation system: senders received a moderation notice stating that the content included a phrase deemed inappropriate, and the message did not appear publicly. The article included a recording and screenshots showing the moderation notice and the subsequent activity that led moderators to restrict messaging and lock channels. (windowslatest.com)

After the block was noticed and shared on social media, users deliberately tried to circumvent it by substituting numbers for letters, inserting punctuation, or using lookalike characters, and those variations reportedly bypassed the server’s keyword filter. The testing escalated from a meme‑driven prank into a raid-like situation: some accounts lost posting privileges, message history was hidden in affected channels, and sections of the server were placed into a locked or read-only state while moderators intervened. Windows Latest’s timeline shows the filter discovery, rapid experimentation, and server‑wide containment measures unfolding in short order. (windowslatest.com)

Important verification note: the core reporting about the filter and the lockdown comes from Windows Latest’s direct coverage and media included in that story. Contemporary reporting from other outlets documented the broader Microslop meme and Microsoft’s public AI push, but independent, contemporaneous confirmation of the specific Discord moderation actions beyond Windows Latest’s reporting was limited at the time of coverage. Readers should treat the Discord incident as credibly reported by a named tech outlet, but acknowledge the usual caveat: moderation actions inside private, brand‑run community servers are sometimes short‑lived and hard to corroborate once restored. (windowslatest.com)

Why this matters: trust, tone, and product communities

Brand communities are fragile spaces

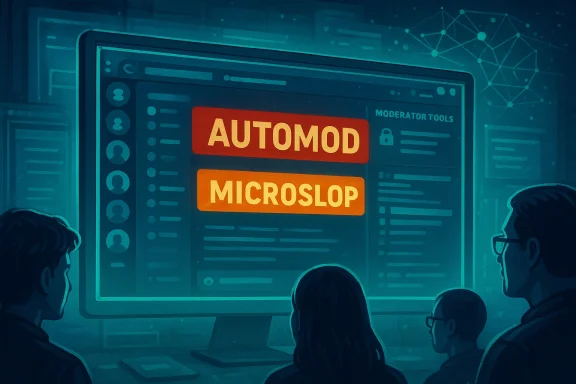

Official product communities, particularly those operated on platforms like Discord, serve dual roles: they are support hubs and marketing channels. That duality demands careful moderation to keep channels helpful, professional, and actionable. Keyword lists and automations are normal tools for brand moderation teams; they can and do block profanity, personal attacks, spam, and sometimes brand‑disparaging nicknames. From a moderation‑policy perspective, removing a derogatory, meme-driven nickname is a defensible rule for a server meant for feedback and support. (windowslatest.com)

But the Microslop episode shows the darker side of that calculus: when a moderation policy intersects with a viral meme, the enforcement itself becomes the story. The Streisand effect kicks in: an apparently minor filter becomes proof for critics that the company is trying to silence dissent, which rapidly amplifies the criticism beyond the server and into the broader social conversation. Multiple outlets documented how Microsoft CEO Satya Nadella’s phrasing about AI quality helped spark the meme, and how the meme then fed organized protests, including browser extensions and coordinated posts. That context explains why a single filter can balloon into reputational damage.

Moderation as a pressure valve — and a liability

When moderators locked channels and hid history, they were acting like emergency responders: pause activity, prevent more damage, and regain control. That tactic can work: short, surgical containment allows time to patch rules, adjust automations, and re-open with clearer expectations. However, from a community relations standpoint, broad lockdowns are blunt instruments that punish bystanders and fuel narratives about heavy-handed corporate control.

Companies must weigh three competing goals in such moments:

- preserve productive discourse and support flows,

- prevent harassment and brand abuse,

- avoid turning enforcement into a PR flare.

The technical mechanics: keyword filters, evasions, and the cat-and-mouse game

How keyword moderation typically works

Server‑side moderation on Discord often uses:

- exact keyword lists that trigger automated blocks,

- regex or pattern matching to catch variations,

- third‑party moderation bots that enforce community policies,

- rate limits and automatic temporary suspensions for repeated infractions.
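To make the difference between the first two approaches concrete, here is a minimal Python sketch. The blocklist, the `exact_match_blocked` and `pattern_blocked` helpers, and the character classes in `PATTERN` are hypothetical illustrations, not Discord AutoMod’s or Microsoft’s actual configuration. It shows why an exact list misses a digit-swapped spelling that a pattern can catch, and why even a pattern still misses a lookalike character.

```python
import re

# Hypothetical blocklist for illustration only; not any real server's configuration.
BLOCKED_WORDS = {"microslop"}


def exact_match_blocked(message: str) -> bool:
    """Exact-list approach: block only when a whole word equals a listed term."""
    tokens = (token.strip(".,!?") for token in message.lower().split())
    return any(token in BLOCKED_WORDS for token in tokens)


# Pattern approach: each position accepts the letter or a common digit/symbol swap,
# and [\W_]* lets punctuation, spaces, or underscores sit between letters.
# These character classes are illustrative assumptions, not a vetted evasion list.
VARIANT_CLASSES = ["m", "i1!|", "c", "r", "o0", "s5$", "l1|", "o0", "p"]
PATTERN = re.compile(
    r"[\W_]*".join(f"[{re.escape(chars)}]" for chars in VARIANT_CLASSES),
    re.IGNORECASE,
)


def pattern_blocked(message: str) -> bool:
    """Return True if the tolerant pattern matches anywhere in the message."""
    return PATTERN.search(message) is not None


if __name__ == "__main__":
    samples = ["I call it Microslop!", "Micr0slop", "M.i.c.r.o.s.l.o.p", "M\u0456croslop"]
    for sample in samples:
        print(f"{sample!r}: exact={exact_match_blocked(sample)} pattern={pattern_blocked(sample)}")
```

Under these assumptions, the exact list blocks only the first sample; the pattern also catches the digit swap and the dotted spelling, but neither approach catches the Cyrillic `і` (U+0456) in the last sample, which is the cat-and-mouse problem in miniature.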

Why moderation sophistication matters

More sophisticated moderation pipelines add:

- contextual analysis (natural language understanding to detect intent),

- rate and pattern heuristics (to detect raid behavior vs. isolated posts),

- manual review queues for ambiguous cases,

- whitelisting for trusted user groups.
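The rate and pattern heuristics in this list can be sketched as a sliding window. The Python below is a minimal sketch under stated assumptions: `RaidDetector`, its 60-second window, and its five-account threshold are hypothetical, and it assumes flagged events arrive in timestamp order. The idea is to treat one account tripping a filter as an isolated post, and many distinct accounts tripping it within a short window as raid-like behavior that may justify broader measures.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class RaidDetector:
    """Flag raid-like bursts: many distinct accounts tripping a filter within one window.

    Hypothetical heuristic; assumes events are recorded in non-decreasing timestamp order (seconds).
    """

    window_seconds: float = 60.0
    min_distinct_users: int = 5
    _events: deque = field(default_factory=deque, repr=False)  # (timestamp, user_id) pairs

    def record_flagged(self, timestamp: float, user_id: str) -> bool:
        """Record one filtered message; return True if the current window looks like a raid."""
        self._events.append((timestamp, user_id))
        # Evict events older than the sliding window (the newest event is never evicted).
        while timestamp - self._events[0][0] > self.window_seconds:
            self._events.popleft()
        # Count accounts, not messages, so one user spamming does not look like a raid.
        distinct_users = {uid for _, uid in self._events}
        return len(distinct_users) >= self.min_distinct_users
```

Counting distinct accounts rather than raw messages is the design choice that separates one frustrated user from coordinated behavior; the former may warrant a per-user timeout, while only the latter might justify channel-level measures.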

The larger narrative: Copilot, Windows 11, and the trust deficit

Not just a meme — a symptom

Microslop is shorthand for accumulated grievances: perceived performance regressions in Windows, intrusive placement of AI features, confusing defaults, and a sense that major design choices were made for marketing rather than user benefit. Those complaints have been cataloged by community threads, independent tests, and investigative reporting over the past year. When sentiment sours across that many vectors, a meme becomes a proxy weapon: easy to repeat, viral, and emotionally satisfying.

Product concessions and reputational repair

Microsoft has already had to adjust course on some visible AI elements following user backlash. Recent browser updates and product controls have increasingly given users ways to hide or remove Copilot affordances, concessions that show the company responds when pressure is sustained. Still, concessions alone don’t repair trust; companies also need to demonstrate reliability, predictable opt‑outs, and a willingness to prioritize core functionality over surface features. Observers have noted some of these changes as tactical retreats rather than a wholesale rethinking of strategy.

Community safety vs. free expression: an ethical crossroad

The moderation ethics problem

Corporations operating public communities face competing ethical demands:

- create a safe, helpful space for customers,

- respect legitimate critical speech,

- avoid unduly censoring valid complaints.

Practical safeguards community teams should adopt

- Publish a concise moderation policy and a process for appeals.

- Use graduated enforcement (warnings, short timeouts, manual review) rather than immediate global lockdowns when possible.

- Prefer context‑aware moderation (intent detection) over strict string blocks.

- Communicate incident responses publicly when a moderation action affects large groups.
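Graduated enforcement can be expressed as a per-user escalation ladder. This Python sketch assumes a hypothetical four-step ladder (warning, 10-minute timeout, one-day timeout, then manual review); the step names, durations, and the in-memory `StrikeTracker` are illustrative, not Discord’s built-in behavior. Note that no rung locks an entire channel: sanctions attach to the account that repeatedly trips the filter, not to bystanders.

```python
from collections import defaultdict
from enum import Enum


class Action(Enum):
    WARN = "warn"
    TIMEOUT_10_MIN = "timeout_10_min"
    TIMEOUT_1_DAY = "timeout_1_day"
    MANUAL_REVIEW = "manual_review"


# Hypothetical ladder: each repeat infraction moves the user one rung up.
LADDER = [Action.WARN, Action.TIMEOUT_10_MIN, Action.TIMEOUT_1_DAY, Action.MANUAL_REVIEW]


def next_action(prior_strikes: int) -> Action:
    """Pick the rung for a user with `prior_strikes` earlier infractions; cap at human review."""
    if prior_strikes < 0:
        raise ValueError("prior_strikes must be non-negative")
    return LADDER[min(prior_strikes, len(LADDER) - 1)]


class StrikeTracker:
    """In-memory strike counts per user; a real deployment would persist and expire these."""

    def __init__(self) -> None:
        self._strikes = defaultdict(int)

    def enforce(self, user_id: str) -> Action:
        """Return the action for this infraction, then record the strike."""
        action = next_action(self._strikes[user_id])
        self._strikes[user_id] += 1
        return action
```

Ending the ladder at manual review rather than a permanent ban keeps a person in the loop for the hardest calls, which is the point of graduated enforcement.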

Tactical lessons for Microsoft and other platform owners

- Reassess keyword lists regularly and test for evasions before deploying them widely. Exact string blocks are brittle and will be circumvented. (windowslatest.com)

- Build quick, transparent escalation playbooks that include a public status update when large parts of a community are affected. Silence invites speculation.

- Offer durable opt‑outs for intrusive product features and make those opt‑outs easy to find and persist across updates. A product that forces acceptance invites organized pushback.

- Invest in moderation tooling that balances automation with human judgment, so that blanket enforcement actions do not sweep up innocent users. (windowslatest.com)
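Testing for evasions before deployment can be partly automated. The following Python sketch generates spelling variants of a blocked term (case changes, single digit swaps, lookalike Cyrillic letters, and separators between characters) and reports which ones a candidate filter lets through. The substitution tables, `generate_variants`, `find_bypasses`, and the deliberately weak `naive_filter` are hypothetical examples; a real audit would draw on a much larger variant set.

```python
from collections.abc import Callable

# Hypothetical substitution tables, kept small for illustration.
DIGIT_SWAPS = {"o": "0", "i": "1", "s": "5", "l": "1", "a": "4", "e": "3"}
LOOKALIKES = {"o": "\u043e", "c": "\u0441", "i": "\u0456", "p": "\u0440"}  # Cyrillic о, с, і, р


def generate_variants(term: str) -> list[str]:
    """Return case, single-swap, lookalike, and separator variants of `term`."""
    variants = {term, term.upper(), term.capitalize()}
    for idx, ch in enumerate(term):
        for table in (DIGIT_SWAPS, LOOKALIKES):
            if ch in table:
                variants.add(term[:idx] + table[ch] + term[idx + 1:])
    for sep in (".", "-", " ", "_"):
        variants.add(sep.join(term))
    return sorted(variants)


def find_bypasses(term: str, is_blocked: Callable[[str], bool]) -> list[str]:
    """List every generated variant that the filter under test fails to block."""
    return [variant for variant in generate_variants(term) if not is_blocked(variant)]


def naive_filter(message: str) -> bool:
    """Deliberately weak example filter: exact, case-insensitive comparison."""
    return message.lower() == "microslop"


if __name__ == "__main__":
    misses = find_bypasses("microslop", naive_filter)
    print(f"{len(misses)} variants bypass the naive filter:")
    for variant in misses:
        print(" ", variant)
```

Running a harness like this against each proposed rule before it goes live turns "exact string blocks are brittle" from a post-incident lesson into a pre-deployment check.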

What this means for IT pros, privacy‑minded users, and community members

- IT and enterprise admins should watch the optics of vendor communities: vendor‑run channels are part of a company’s reputation surface and can influence end‑user sentiment inside organizations. When vendor communities appear to censor criticism, internal procurement teams will notice.

- Privacy‑minded users should remember that server moderation is an ephemeral, platform‑level control. Archival screenshots and recordings often end up as the public record; if you’re joining official channels for support, keep copies of important guidance. (windowslatest.com)

- Community members who value open dialogue should push for clear rules and appeals processes in any official forum they use. A healthy community is a negotiated space; when brand teams own the terms, that negotiation must be explicit.

A balanced read on Microsoft’s position

Microsoft’s desire to maintain a constructive Copilot community is rational. Brand teams must prevent harassment, spam, and the derailment of support forums. Blocking a single insulting nickname, if the server judged it harmful, is an easily explainable moderation choice.

But the execution reveals a deeper problem: a lack of calibrated, transparent moderation paired with a product strategy that made the brand a repeated target. The moderation action, once visible, validates critics’ claims about opaque control and corporate defensiveness. The result is reputational friction that is much harder to repair than the original meme.

This is not just a community incident; it is a symptom of a fragile trust relationship between Microsoft and its Windows user base. Rebuilding that trust will require more than better moderation logic — it will require visible improvements in product reliability, persistent and discoverable user controls, and clearer public communication about how customer feedback shapes the roadmap. (windowslatest.com)

Final analysis: risk, opportunity, and the road ahead

- Risk: If brand communities continue to be run without transparent policies and robust escalation playbooks, moderation incidents will keep feeding social campaigns that harm sentiment and adoption. Large, visible products like Copilot are natural lightning rods; each reactive moderation choice compounds the reputational risk.

- Opportunity: Microsoft and other platform owners can use these moments as diagnostics. A well-handled moderation incident can become a trust-building exercise if accompanied by apology, transparent explanation, policy updates, and product concessions that address the underlying grievances.

- Tactical takeaway: Invest in moderation systems that combine automated protections with human-in-the-loop review, publish clear rules and appeal mechanisms, and treat public community incidents as PR and product telemetry simultaneously. That approach reduces the chance a discrete enforcement action becomes a viral reputational event.
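One common way to combine automated protections with human-in-the-loop review is confidence-based routing: let automation act alone only at the extremes and send the uncertain middle band to people. The Python sketch below assumes some upstream classifier already produces an abuse score between 0 and 1; that classifier, the `route` function, and both thresholds are hypothetical and would need tuning against labelled moderation data.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    AUTO_BLOCK = "auto_block"


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical cut-offs for illustration only.
    review_at: float = 0.5
    block_at: float = 0.95

    def __post_init__(self) -> None:
        if not 0.0 <= self.review_at <= self.block_at <= 1.0:
            raise ValueError("expected 0 <= review_at <= block_at <= 1")


DEFAULT_THRESHOLDS = Thresholds()


def route(score: float, thresholds: Thresholds = DEFAULT_THRESHOLDS) -> Route:
    """Map an abuse score in [0, 1] to an action; only confident extremes skip human review."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be in [0, 1]")
    if score >= thresholds.block_at:
        return Route.AUTO_BLOCK
    if score >= thresholds.review_at:
        return Route.HUMAN_REVIEW
    return Route.ALLOW
```

Widening the review band sends more borderline messages to moderators and fewer blunt automated actions to bystanders, at the cost of moderator workload.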

Quick recommendations for community managers and product teams

- Immediately after an incident:
  - Publish a short public note explaining what happened and why (without exposing moderation inner workings).
  - Reopen affected channels in a graduated way, restoring history where safe.
  - Offer a mechanism for affected users to appeal or request clarification.

- Medium term:
  - Audit keyword rules and add fuzzy matching and intent detection.
  - Train moderators on escalation and public communication.
  - Align product teams and community teams so product changes that might provoke reaction have preflight comms and opt‑out clarity.

- Long term:
  - Treat community incidents as product telemetry: feed lessons back into product design and privacy/opt‑out UX.
  - Measure sentiment improvements after changes and publish progress reports to regain trust.
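The fuzzy matching mentioned in the medium-term audit can start with normalization before comparison. This Python sketch folds Unicode compatibility forms with NFKC, maps a small, hypothetical table of Cyrillic lookalikes and digit swaps back to Latin letters, strips non-letters, and then uses `difflib.SequenceMatcher` as a simple similarity measure. The tables, `normalize`, `fuzzy_hit`, and the 0.85 threshold are assumptions for illustration; a production system would more likely rely on the full confusables data from Unicode Technical Standard #39 and would still need intent detection on top, since string similarity says nothing about whether a message is abuse or a legitimate complaint.

```python
import difflib
import unicodedata

# Hypothetical folding tables, deliberately tiny; real confusables data is far larger.
CONFUSABLES = str.maketrans(
    {"\u0430": "a", "\u0441": "c", "\u0435": "e", "\u0456": "i", "\u043e": "o", "\u0440": "p"}
)
LEET = str.maketrans({"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "$": "s", "@": "a"})


def normalize(text: str) -> str:
    """Fold compatibility forms, case, lookalikes, and digit swaps, then keep letters only."""
    text = unicodedata.normalize("NFKC", text).casefold()
    text = text.translate(CONFUSABLES).translate(LEET)
    return "".join(ch for ch in text if ch.isalpha())


def fuzzy_hit(text: str, term: str, threshold: float = 0.85) -> bool:
    """True if `term` appears in the normalized text, or a same-length window is similar enough."""
    norm = normalize(text)
    term = normalize(term)
    if term in norm:
        return True
    width = len(term)
    # Slide a term-sized window across the text and score each window's similarity.
    for start in range(max(1, len(norm) - width + 1)):
        window = norm[start:start + width]
        if difflib.SequenceMatcher(None, window, term).ratio() >= threshold:
            return True
    return False
```

One trade-off is visible in `normalize`: stripping every non-letter defeats "M.i.c.r.o" style spacing, but it also joins adjacent words, which can create false positives across word boundaries. That is one more reason to route fuzzy hits to review rather than to automatic blocks.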

Microsoft’s Copilot Discord moderation episode is small in isolation but telling in context: a viral nickname met with a blunt automated block, an active community that immediately found workarounds, and a moderation response that escalated into server lockdowns visible to the public. The technical fix for a single keyword is trivial; the harder work is rebuilding user trust inside and outside community channels. If Microsoft wants Copilot to be a helpful, widely adopted assistant rather than a standing target for criticism, it must pair product changes with transparent moderation, meaningful user controls, and readable signals that dissent will be treated as feedback — not something to be quietly blocked. (windowslatest.com)

The incident will likely fade as moderation teams adjust rules and reopen channels, but it should remain a cautionary example for any company that runs official communities while rolling controversial features into flagship products.
