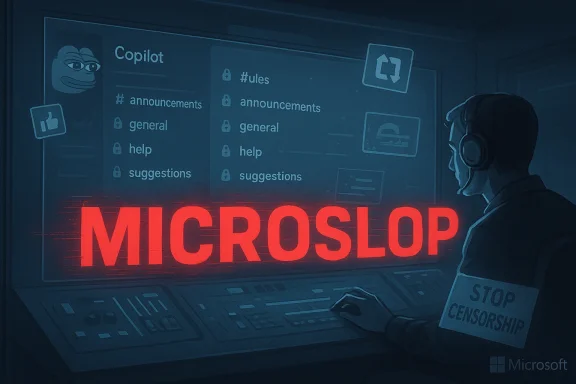

Microsoft’s attempt to silence a single nine‑letter epithet inside its official Copilot Discord — the derisive nickname “Microslop” — turned into a rapid, high‑visibility public relations problem that left the server partially locked, moderators scrambling, and a community more energized by protest than persuaded to change its tone. The escalation — which played out publicly on March 2–3, 2026 — is a compact lesson in how keyword filtering, automated moderation, and brand governance can collide with meme culture and user distrust in ways that amplify the very message they intend to suppress.

Background

Microsoft’s Copilot family has been the company’s flagship push to embed generative AI across Windows, Microsoft 365, and cloud services. That aggressive integration has created two simultaneous dynamics: enthusiasm from early adopters and increasing resentment from users who feel features are pushed into products without adequate control, clarity, or opt‑out mechanisms. Into that friction slipped a new, pejorative portmanteau, Microslop, used by some community members to lampoon Microsoft’s Copilot branding and perceived overreach.

The official Copilot Discord functions as a public-facing hub for product updates, technical Q&A, and community feedback. It also uses automated moderation tools and human moderators to maintain conversation standards. On March 2, 2026, moderators added “Microslop” to an automated keyword filter. The filter’s behavior (blocking or deleting messages that contained the word) became visible to members, who promptly tested, evaded, and amplified the restriction. In short order, channel restrictions were tightened and portions of the server were locked while staff tried to regain control.

What happened (a concise timeline)

March 2, 2026: Keyword filter deployed

Moderators added the term “Microslop” to an automated word‑block list inside the Copilot Discord. Automated filters began removing messages that used the term, which quickly drew attention from the community.

March 2, 2026: The community pushes back

Users reacted by:

- Reposting the word with character substitutions to evade the filter.

- Flooding channels with the term as a protest tactic.

- Posting screenshots and commentary about the moderation action to other platforms.

March 2–3, 2026: Escalation and partial server lockdown

As the volume of evasion attempts and protest posts grew, moderators escalated enforcement by restricting posting permissions and ultimately locking parts of the server to halt the surge. The lockout made message history less accessible to ordinary members for a time, prompting accusations that the company had overreacted.

Immediate aftermath

The moderation action and server lockdown drew broader coverage and debate around moderation strategies, corporate community management, and the optics of suppressing a critical, if crude, nickname for a product the company is aggressively promoting.

Why this small action became a big story

The incident reads like a miniature case study in modern digital governance. Three dynamics turned a routine moderation choice into an outsized issue.

- Meme culture is self‑amplifying. Trying to ban a meme term often sends it viral; the attempt to suppress becomes the story.

- Automated filters are opaque. When users can see a message vanish without clear explanation, they assume bad intent — censorship, secrecy, or fragility — and react emotionally.

- The product is already controversial. Copilot has amassed a mixture of fervent supporters and vocal critics; any perceived heavy‑handedness from Microsoft is filtered through that existing mistrust.

The moderation mechanics that failed — and why

Keyword blocking is blunt force

Automated keyword filters excel at removing clear‑cut cases of harmful language, spam, and straightforward violations. They are poor tools for nuanced cultural issues. A single word block has limited contextual awareness: it can’t differentiate between a user quoting a news headline, reporting abuse, or expressing an opinion. In the Copilot Discord case, the filter lacked contextual nuance and produced visible deletions that members noticed and explored.

Visibility breeds distrust

When users see their posts removed but receive no clear explanation (no rationale, no link to community guidelines, no notice that the term was specifically banned), the moderation action becomes mysterious and thus suspect. That lack of transparency created an information vacuum that users filled with speculation and mockery.

Human moderation practices were reactive, not proactive

Moderation teams often face an impossible choice: intervene early and risk appearing authoritarian, or allow problems to grow and risk chaos. Here, the reactive escalation from keyword filter to channel restrictions to lockdown suggested the absence of a graduated, transparent enforcement policy. That reactive posture worsened optics and eroded trust.

Evasion created an arms race

Users tested character substitutions, deliberate misspellings, and images to evade the filter. This is a predictable outcome when a community can see exactly where a filter’s boundary lies. Automated systems aren’t well equipped to discern intent in such cases and can be gamed quickly.

Corporate PR and brand-risk implications

Short‑term reputational damage

Any company perceived to be silencing criticism risks losing the moral high ground in public discourse. Even if the moderation was intended to preserve constructive discussion, the public narrative became one of censorship, which is a headline‑friendly framing. That headline value extends beyond Discord to social media and tech press.

Long‑term trust cost

For a product line that relies on user trust, especially where AI models ingest data and integrate across user workflows, repeated perceptions of heavy‑handedness can erode willingness to adopt features. Brand trust is not just about features; it’s about how a company responds when challenged.

Internal signal issues

Locking parts of an official community and banning members or hiding history sends a signal internally: product teams may feel insulated from dissenting feedback, and community managers may be pushed to act defensively rather than engage. Over time, that dynamic can degrade product quality and blind companies to real user pain points.

Legal and policy considerations (what companies should watch)

While private platforms like Discord retain the right to moderate content under their terms of service, several practical and ethical considerations matter:

- Transparency obligations: Regulatory trends and consumer expectations increasingly favor clear moderation policies and appeal mechanisms.

- Consistency: Enforcement actions should be consistent across users and contexts to avoid claims of unfair treatment.

- Data accessibility: Hiding message history can complicate investigations or user appeals and can amplify complaints externally.

- Platform rules vs. corporate speech policy: Companies that operate official communities should align their enforcement with a public‑facing code of conduct and explain the rationale behind content moderation.

Critical analysis: strengths, failures, and missed opportunities

Strengths

- Rapid technical response: Moderation tools were deployed quickly to stem a surge of posts, showing the operations team had the capability to act at scale.

- Intent to keep community constructive: The moderation action almost certainly aimed to prevent abusive, derailing behavior and maintain a helpful environment for product discussion.

Failures and missed opportunities

- Lack of a public explanation: A brief, transparent announcement explaining the enforcement rationale and temporary measures would have significantly reduced speculation.

- Overreliance on a single keyword filter: Relying on a one‑word block for a meme born of cultural critique ignored the need for contextual moderation.

- No graduated enforcement: There was no visible escalation path (warning → temporary mute → clearer policy enforcement) that could have signaled fairness and proportionality.

- Failure to use moderation as engagement: Rather than treat the incident solely as a removal task, moderators could have converted it into a listening opportunity by soliciting in‑channel feedback and hosting AMA sessions about Copilot concerns.

- Technical blind spots: Automated filters without robust pattern recognition (contextual embeddings, phrase-level sentiment analysis, cross‑message grouping) are easily evaded and often misclassify.
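The evasion problem described in the last two items can be illustrated with a minimal Python sketch. It compares a plain substring filter with one that first normalizes case, accents, common character substitutions, and separators. The substitution table (`LEET_MAP`) and the function names are hypothetical, chosen only for illustration; nothing here reflects how the Copilot Discord filter was actually configured.

```python
import re
import unicodedata

# Hypothetical substitution table (an assumption for this sketch).
# Real evasion is far more varied, and "1" could equally stand for "l".
LEET_MAP = str.maketrans(
    {"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "$": "s", "@": "a", "!": "i"}
)


def naive_match(message: str, banned: str) -> bool:
    """Case-insensitive substring match: the behavior of a plain keyword filter."""
    return banned.lower() in message.lower()


def normalize(message: str) -> str:
    """Strip accents, fold case, undo common substitutions, drop non-letters."""
    text = unicodedata.normalize("NFKD", message)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = text.lower().translate(LEET_MAP)
    return re.sub(r"[^a-z]", "", text)


def normalized_match(message: str, banned: str) -> bool:
    """Substring match after normalizing both the message and the banned term."""
    return normalize(banned) in normalize(message)


if __name__ == "__main__":
    for sample in ["M1cr0sl0p strikes again", "M-i-c-r-o-s-l-o-p", "I like Copilot"]:
        print(sample, naive_match(sample, "Microslop"), normalized_match(sample, "Microslop"))
```

Normalization narrows the evasion gap but does nothing for context: a message quoting a news headline about the ban still matches. Removing separators can also join letters across word boundaries and produce false positives, which is one reason a match is better treated as a flag for review than as grounds for automatic deletion.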

Practical recommendations for community managers

If your organization operates an official community, especially around contentious products, consider the following operational blueprint.

Policy and transparency

- Publish a short, plain‑language Code of Conduct that explains what is and isn’t allowed, why, and how enforcement decisions are made.

- Provide a visible, simple appeal mechanism so users can challenge removals quickly.

Graduated enforcement

- Detect: Use automated systems to flag content.

- Warn: Send an automatic, human‑reviewable warning before permanent removal where possible.

- Moderate: Apply temporary mutes or deletions for clear violations.

- Recover: Offer an explanation and a path to restore access for users who comply.
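The four steps above can be sketched as a small escalation ladder. This is an illustrative Python outline under stated assumptions: the strike thresholds, the `Action` names, and the `EnforcementLog` class are hypothetical, and a real policy would also need time-based decay of strikes and an audit trail.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    WARN = "warn"
    TEMP_MUTE = "temp_mute"
    ESCALATE = "escalate_to_human"


# Hypothetical ladder: first strike warns, second mutes, later ones go to a human.
LADDER = [Action.WARN, Action.TEMP_MUTE, Action.ESCALATE]


@dataclass
class EnforcementLog:
    """Tracks per-user strikes so that enforcement escalates gradually."""

    strikes: dict[str, int] = field(default_factory=dict)

    def record_violation(self, user_id: str) -> Action:
        """Called after detection; returns the next step on the ladder."""
        count = self.strikes.get(user_id, 0) + 1
        self.strikes[user_id] = count
        return LADDER[min(count, len(LADDER)) - 1]

    def recover(self, user_id: str) -> None:
        """Clear strikes once the user complies or wins an appeal."""
        self.strikes.pop(user_id, None)


if __name__ == "__main__":
    log = EnforcementLog()
    print([log.record_violation("user-a").value for _ in range(4)])
    log.recover("user-a")
    print(log.record_violation("user-a").value)
```

Keeping the ladder as data rather than branching logic makes it easy to publish alongside the Code of Conduct, so members can see in advance what each step looks like.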

Technical improvements

- Move beyond single‑keyword blocking to contextual moderation using phrase embeddings, user history, and content clustering to identify coordinated campaigns versus isolated comments.

- Employ image and meme recognition to catch evasive behavior that replaces banned text with images.

- Build moderator tools that show why an item was flagged (matched rule, severity score), enabling faster human review.
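As a rough illustration of the last item, a flag can carry its own explanation so that a reviewer sees the matched rule and a severity score at a glance. The field names, the 0.0 to 1.0 severity scale, and the review threshold below are all assumptions made for this Python sketch, not features of any particular moderation product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flag:
    message_id: str
    rule: str          # identifier of the rule that matched
    matched_text: str  # the span that triggered the rule
    severity: float    # assumed scale: 0.0 (benign) to 1.0 (severe)

    def summary(self) -> str:
        """One-line explanation suitable for a moderator queue."""
        return (
            f"[{self.rule}] matched {self.matched_text!r} "
            f"in message {self.message_id} (severity {self.severity:.2f})"
        )


def triage(flags: list[Flag], review_threshold: float = 0.5) -> tuple[list[Flag], list[Flag]]:
    """Split flags into (urgent, routine), each ordered by descending severity."""
    ordered = sorted(flags, key=lambda f: f.severity, reverse=True)
    urgent = [f for f in ordered if f.severity >= review_threshold]
    routine = [f for f in ordered if f.severity < review_threshold]
    return urgent, routine


if __name__ == "__main__":
    queue = [
        Flag("m1", "keyword:nickname", "Microslop", 0.2),
        Flag("m2", "spam:flood", "aaaaa", 0.9),
    ]
    urgent, routine = triage(queue)
    for flag in urgent + routine:
        print(flag.summary())
```

A mocking nickname and a flood of spam land in different queues here, which is the distinction a single word block cannot make.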

Community engagement as a mitigation strategy

- Host regular open forums or Ask‑Me‑Anything sessions where product team members answer hard questions about Copilot’s design choices.

- Treat criticism as market research: escalate common complaints to product teams and report back on what will change.

- Celebrate constructive dissent — recognize and reward contributors who point out real issues with evidence and suggested fixes.

Technical deep dive: how better moderation would have worked

Keyword blocking is a blunt instrument. A modern, resilient moderation stack should combine multiple signals:

- Semantic analysis: Use embedding models to group semantically similar phrases and detect coordinated surges even when exact characters differ.

- Reputation signals: Weight actions by user history; longtime contributors should trigger soft enforcement (warnings) when they slip, while brand‑new accounts with abusive patterns should be handled more aggressively.

- Rate limiting: Apply temporary posting caps to channels experiencing sudden surges to blunt coordinated campaigns without locking all users out.

- Explainable flags: Present moderators with the precise rule and confidence score that triggered a flag, speeding thoughtful decisions.
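Two of the signals listed above, rate limiting and reputation weighting, are simple enough to sketch in Python. The sliding-window limiter caps messages per channel without locking anyone out entirely, and the reputation rule picks a softer action for established accounts. The class name, the thresholds (90 days, 7 days, violation counts), and the action labels are hypothetical values chosen for illustration.

```python
from collections import deque


class ChannelRateLimiter:
    """Sliding-window cap on how many messages a channel accepts."""

    def __init__(self, max_messages: int, window_seconds: float) -> None:
        self.max_messages = max_messages
        self.window_seconds = window_seconds
        self._timestamps: dict[str, deque[float]] = {}

    def allow(self, channel_id: str, now: float) -> bool:
        """Return True and record the message if the channel is under its cap."""
        window = self._timestamps.setdefault(channel_id, deque())
        # Drop timestamps that have aged out of the window.
        while window and now - window[0] >= self.window_seconds:
            window.popleft()
        if len(window) >= self.max_messages:
            return False
        window.append(now)
        return True


def enforcement_for(account_age_days: int, prior_violations: int) -> str:
    """Hypothetical reputation rule: clean, established accounts get a warning."""
    if account_age_days >= 90 and prior_violations == 0:
        return "warn"
    if account_age_days < 7 and prior_violations >= 2:
        return "mute"
    return "review"


if __name__ == "__main__":
    limiter = ChannelRateLimiter(max_messages=2, window_seconds=10.0)
    print([limiter.allow("general", t) for t in (0.0, 1.0, 2.0, 10.0)])
    print(enforcement_for(400, 0), enforcement_for(1, 3), enforcement_for(30, 1))
```

Timestamps are passed in explicitly so the limiter can be exercised deterministically; in a live bot the caller would supply a monotonic clock reading instead.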

Broader context: Copilot backlash, AI governance, and user autonomy

The Microslop episode is not an isolated skirmish; it’s a symptom of larger tensions:

- Forced integration vs. user control: As major platforms bake AI into more product surfaces, users demand clearer opt‑out controls, local processing options, and auditability.

- Data governance anxieties: Users worry about how their content and usage feed model training and telemetry pipelines. Heavy‑handed moderation only heightens suspicion.

- Cultural speed mismatch: Product roadmaps and marketing often move faster than governance and community norms. When companies outpace their community engagement, friction grows.

What Microsoft — and other platform owners — can learn

- Treat official communities as part of the product experience. Community moderation impacts user perception of the product as much as UI bugs or performance issues.

- Build public, short‑form transparency artifacts: one‑page moderation rules, escalation timelines, and a published appeals flow.

- Convert protest into product telemetry. If a meme criticizes a product, parse the underlying grievance and feed that back to product teams as prioritized feedback.

- Invest in soft power: community managers, empathy training for moderators, and engagement budgets that fund robust listening and response activities.

Risk assessment: if nothing changes

If corporations persist with opaque, rapid moderation shortcuts, expect recurring cycles of backlash. Small incidents will continue to balloon into news stories because social media rewards conflict. Worse, companies may lose access to candid feedback loops that communities provide, causing product teams to become insulated from essential user pain points.

Counterarguments and limits of criticism

It is reasonable to argue that official channels need protection from harassment, doxxing, and organized trolling. Moderators operate under high load and must make fast choices. There are circumstances where vigorous enforcement, including keyword blocks, is necessary to protect users and maintain useful discussion. The central criticism here is not that moderation occurred; it is that the execution lacked proportionality, transparency, and a plan to de‑escalate.

Where moderators saw imminent harm, immediate technical action may have been warranted. The mistake was choosing a method and a communication posture that inadvertently amplified the narrative of censorship.

A short playbook for future incidents (operational checklist)

- Pause and assess: Before deploying a visible filter, assess the likely community reaction and prepare a short public statement explaining why the action is necessary.

- Prefer warnings: Where possible, use soft enforcement and warnings that preserve conversation while signaling norms.

- Use graduated technical controls: Rate limits and temporary channel slow modes are less inflammatory than word bans.

- Communicate quickly: Publish a short note in affected channels explaining what changed and how users can appeal.

- Capture feedback: Convert the incident into structured feedback for product teams within 48 hours.

- Reopen with restoration: When the surge subsides, restore access and post a short after‑action note describing what happened and next steps.

Conclusion

The “Microslop” incident on Microsoft’s Copilot Discord is small in technical scope but large in symbolic value. It exposes how automated moderation, when applied without nuance and without transparent communication, can amplify the very criticism it aims to eliminate. In an era where AI features are deeply entangled with user trust, companies can no longer treat community spaces as back channels for blunt enforcement. Instead, they must invest in context‑aware moderation, clear policies, and rapid, public engagement strategies that turn conflict into dialogue rather than escalation.

The underlying lesson is practical: moderation is as much a product decision as a safety one. Companies that integrate the two (designing enforcement as an experience that respects users, explains itself, and solicits feedback) will reduce the risk of avoidable PR flareups and build the resilient, trusted communities necessary for sustained product success.

Source: extremetech.com, “Microsoft Sparks Backlash by Banning the Word ‘Microslop’ on Copilot's Discord Server”

Source: Forbes, https://www.forbes.com/sites/daveyw...op-chat-ban-is-not-censorship-says-microsoft/

Source: FindArticles, “Microsoft Copilot Discord Microslop Ban Backfires”