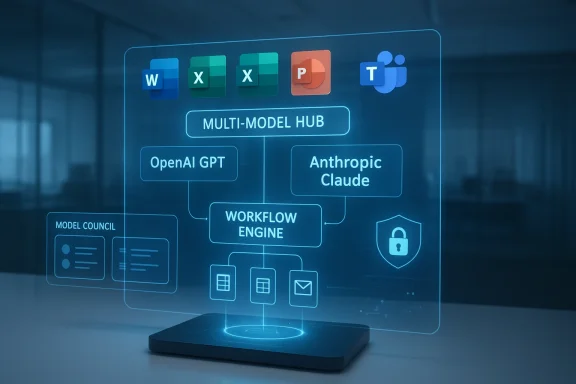

Microsoft is turning Microsoft 365 Copilot into something much bigger than a single-model assistant. The latest Frontier-program rollout brings Anthropic’s Claude into the same workspace as OpenAI’s GPT models, while new Copilot experiences such as Copilot Cowork, Researcher Critique, and Model Council push Microsoft’s productivity stack toward a true multi-model operating layer. That shift matters because it reduces the need to jump between separate AI tools and gives enterprises a more controlled way to compare, chain, and govern model output inside the apps they already use.

Source: Windows Report https://windowsreport.com/anthropic-and-openai-models-now-available-inside-microsoft-365/

Background

Microsoft 365 Copilot launched as one of the clearest examples of how generative AI could be embedded directly into mainstream productivity software. Rather than requiring users to leave Word, Excel, PowerPoint, Outlook, or Teams, Microsoft built Copilot as an interface layer over work data, enterprise identity, and model inference. For a long time, the public story was simple: Microsoft brought OpenAI into the heart of the Office experience, and the rest followed from there.

That story was always more complex in practice. Microsoft’s Copilot architecture was never just about one chatbot answering prompts. It gradually became a system of agents, data connectors, governance controls, and task-specific experiences. As the product evolved, Microsoft also began talking more openly about Work IQ, a knowledge layer that ties model behavior to enterprise context, and about Enterprise Data Protection, which is supposed to keep work content inside Microsoft’s trust boundary. The strategic message was clear: the company wanted Copilot to feel native to work, not like a browser tab bolted onto it.

The most important recent shift is model pluralism. Microsoft has now gone public with a much broader model strategy in Microsoft 365 Copilot, adding Anthropic alongside OpenAI in selected surfaces and workflows. Official Microsoft posts say Claude is now available in mainline Copilot chat through the Frontier program, while Researcher has been upgraded with multi-model approaches such as Critique and side-by-side model comparison through Model Council. Microsoft says this is part of a “Frontier” transformation aimed at bringing the best AI from across the industry into the tenant, grounded in work data and protected by Microsoft controls.

This matters because it changes the competitive meaning of Copilot. Copilot is no longer just an OpenAI distribution channel inside Microsoft 365. It is becoming a broker of model choice, a workflow orchestrator, and, increasingly, a place where enterprise users can compare model strengths without fragmenting their workday. That is a significant step toward a platform model for workplace AI rather than a single-vendor assistant.

What Microsoft Actually Announced

At the center of the new rollout is Copilot Cowork, a long-running, multi-step work feature now available in Frontier. Microsoft says it is designed to handle tasks that span documents, presentations, spreadsheets, email, and calendar events, and it can keep working as users add instructions midstream. That means the tool is not merely generating text; it is managing a workflow and preserving context across several outputs at once.

The company describes the experience as being built on Work IQ, which lets Copilot draw on calendar, email, and files in a more context-aware way. In practice, that means users can ask for a broad work outcome and then let the system assemble the artifacts behind it. The point is to reduce copy-and-paste across applications and to keep sensitive information inside the Microsoft 365 environment rather than routing it through outside tools.

The key change is orchestration, not just access

Microsoft is not simply adding another model picker. It is making the model layer itself more dynamic, so the best model can be used for a given task, or multiple models can collaborate on the same task. That is a more ambitious design because it treats models as components in a workflow, not as competing chatbots sitting side by side.

The company’s official Frontier materials and support guidance describe a multi-model Researcher experience, where GPT and Claude can both participate in generating and reviewing outputs. That same guidance says the new features are opt-in through Frontier, which is important because Microsoft appears to be using early access to test policy, quality, and user acceptance before wider rollout.

- Copilot Cowork is focused on long-running, multi-step work.

- Researcher now includes a critique layer and model-choice options.

- Model Council lets users compare responses from different models side by side.

- Frontier is the access gate for the newest experiences.

- Work IQ is Microsoft’s core enterprise context layer behind the scenes.
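To make the orchestration idea concrete, here is a minimal Python sketch of task-based model routing with fallback, assuming a model can be treated as a plain function from prompt to text. Everything in it (the `Router` class, the task labels, the `gpt` and `claude` stub functions) is hypothetical; it is not Microsoft’s API or a description of how Copilot actually selects models, only an illustration of treating models as interchangeable components in a workflow.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical interface: a "model" is any function that maps a prompt to text.
Model = Callable[[str], str]


@dataclass
class Router:
    """Toy task-to-model router (illustrative only, not Copilot's design)."""
    models: Dict[str, Model]            # models enabled for this tenant, by name
    preferences: Dict[str, List[str]]   # task type -> model names in priority order
    default: List[str] = field(default_factory=list)

    def run(self, task_type: str, prompt: str) -> Tuple[str, str]:
        """Try each preferred model in turn; return (model_name, output)."""
        errors = []
        for name in self.preferences.get(task_type, self.default):
            model = self.models.get(name)
            if model is None:
                continue  # preferred model is not enabled; skip it
            try:
                return name, model(prompt)
            except Exception as exc:  # e.g. a timeout or capacity error
                errors.append(f"{name}: {exc}")
        raise RuntimeError("no model succeeded: " + "; ".join(errors))


# Stub models so the sketch runs without any real service.
def gpt(prompt: str) -> str:
    return f"[gpt] {prompt}"


def claude(prompt: str) -> str:
    raise TimeoutError("capacity exceeded")  # simulate an unavailable model


router = Router(
    models={"gpt": gpt, "claude": claude},
    preferences={"research": ["claude", "gpt"]},
    default=["gpt"],
)
print(router.run("research", "Summarize Q3 risks"))  # ('gpt', '[gpt] Summarize Q3 risks')
```

Because the preference table is data rather than code, an administrator could in principle change which model handles which kind of task without touching the workflow itself; that separation, not the specific fallback rule, is the point of the sketch.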

Why Multi-Model Matters Now

For the past two years, the AI market has been defined less by raw novelty and more by differentiation. One model may excel at structured reasoning, another at drafting, and another at coding or synthesis. Microsoft’s move recognizes that no single model is the universal best answer for every workplace task, especially when the task spans research, writing, summarization, and artifact generation.

That is why the addition of Anthropic is strategically important. Microsoft’s own messaging now emphasizes that Copilot is model diverse by design and that customers should get flexibility rather than a locked-in backend. Official Microsoft sources say Claude now appears alongside the latest OpenAI models in Copilot chat, and that Claude can also be selected in Researcher and Copilot Studio for relevant workflows.

A hedge against dependence

There is also a business reason for the change. Microsoft has invested heavily in OpenAI, but any enterprise platform that depends too heavily on one AI supplier inherits that supplier’s pricing, capacity, and roadmap constraints. Multi-model support gives Microsoft a hedge, and it gives customers a hedge too. If one model underperforms on a task, another can be tried without leaving Microsoft 365.

That flexibility is attractive for IT leaders who want to standardize on a platform while avoiding single-vendor exposure. It is also a subtle competitive message to Google, OpenAI, and other workplace AI vendors: Microsoft wants the office suite to become the neutral ground where model competition happens inside the customer’s workflow, not outside it.

The practical effect for users

For everyday workers, the value is more concrete. Users can now test which model handles a research prompt better, compare tone and structure, and keep everything inside one environment. That is especially useful when the work involves sensitive internal data, because the temptation to paste documents into public tools is one of the major security problems in modern knowledge work.

- Better task-model matching.

- Less app switching.

- More transparent output comparison.

- Easier enterprise governance.

- Lower friction for experimentation.

Copilot Cowork and the Rise of Long-Running Tasks

Copilot Cowork is the clearest sign that Microsoft wants Copilot to move beyond one-shot answers. Instead of prompting the system repeatedly for smaller outputs, users can ask it to deliver a set of linked work products in one go. Microsoft says it can build a document, a presentation, and a spreadsheet together, while also folding in additional instructions like scheduling meetings or drafting emails as the task evolves.

That is a big shift in product philosophy. Traditional office assistants respond to isolated requests. Copilot Cowork is intended to work more like a task manager embedded in the productivity suite, preserving momentum and context as work changes. That makes it more useful for managers, analysts, and team leads who often need a bundle of coordinated outputs rather than a single file.
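A minimal sketch of the long-running pattern, assuming a simple queue of instructions: new requests are appended while the task is in progress rather than restarting it, and every step sees the shared goal plus the work already done. The `LongRunningTask` class and its string "outputs" are hypothetical stand-ins; in Copilot Cowork a model would generate the actual document, deck, or spreadsheet, and Microsoft has not published how the agent schedules its steps.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List


@dataclass
class LongRunningTask:
    """Toy multi-step task that accepts new instructions while it runs (hypothetical)."""
    goal: str
    pending: Deque[str] = field(default_factory=deque)
    context: List[str] = field(default_factory=list)       # steps completed so far
    outputs: Dict[str, str] = field(default_factory=dict)  # instruction -> artifact

    def add_instruction(self, instruction: str) -> None:
        # Midstream requests join the queue instead of restarting the task.
        self.pending.append(instruction)

    def step(self) -> bool:
        """Handle one instruction; return False once nothing is left."""
        if not self.pending:
            return False
        instruction = self.pending.popleft()
        # Stand-in for model generation: each artifact records the shared goal and
        # how much prior work it could draw on, which keeps the outputs consistent.
        self.outputs[instruction] = (
            f"{instruction} (goal: {self.goal}; after {len(self.context)} prior steps)"
        )
        self.context.append(instruction)
        return True


task = LongRunningTask("Prepare Q3 review")
for item in ["build spreadsheet", "draft slide deck", "write summary memo"]:
    task.add_instruction(item)
task.step()                                      # works on the spreadsheet first
task.add_instruction("schedule review meeting")  # user adds a request midstream
while task.step():
    pass
print(list(task.outputs))
```

The queue is the simplest structure that captures "keep working as users add instructions"; a real agent would also need to reprioritize, which is exactly where the drift and misprioritization failure modes mentioned below come in.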

Why this is more than automation

This is not just workflow automation in the old RPA sense. The system is still reasoning about ambiguous requests, reconciling partially specified goals, and generating artifacts from unstructured instructions. That makes it closer to an intelligent project assistant than a rules engine.

Microsoft’s use of the Frontier program is also telling. Early access lets the company observe where the agent works well and where it breaks down. That is especially important for long-running tasks, because the failure modes of multi-step AI are different from those of short chat replies. A model can be fluent and still drift, misprioritize, or overfit a user’s latest instruction.

Enterprise implications

For enterprises, the attraction is obvious: less time spent stitching together output from multiple apps. A finance team might want a slide deck, supporting spreadsheet, and summary memo built from the same source set. A product team might want research findings converted into launch material without manually reformatting everything.

But there is a catch. The more the system does, the more important provenance, change tracking, and approval become. If Copilot Cowork is creating artifacts that move directly into circulation, organizations will need to know where the underlying data came from, which model touched which step, and what human review was applied before distribution.

- Stronger multitask productivity.

- Better continuity across apps.

- More value for project-based work.

- Higher need for governance and auditability.

- Greater dependence on clean work data.
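The provenance questions raised in this section map naturally onto a per-artifact audit record. The sketch below is a hypothetical data structure, not a Microsoft 365 schema: it assumes each step of a multi-step task can log which model ran it and which sources it read, and it encodes one example policy (no distribution without a named human approver) as an explicit check.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional, Set


@dataclass
class StepRecord:
    step: str            # what was done, e.g. "draft slides"
    model: str           # which model produced this step's output
    sources: List[str]   # files, mail, or calendar items the step read
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


@dataclass
class ArtifactProvenance:
    """Hypothetical audit trail for one generated artifact."""
    artifact: str
    steps: List[StepRecord] = field(default_factory=list)
    approved_by: Optional[str] = None  # human reviewer, if any

    def record(self, step: str, model: str, sources: List[str]) -> None:
        self.steps.append(StepRecord(step, model, sources))

    def models_used(self) -> Set[str]:
        return {s.model for s in self.steps}

    def all_sources(self) -> Set[str]:
        return {src for s in self.steps for src in s.sources}

    def ready_to_distribute(self) -> bool:
        # Example policy (an assumption): nothing circulates without sign-off.
        return self.approved_by is not None


deck = ArtifactProvenance("Q3 board deck")
deck.record("outline", "gpt", ["q3-plan.docx"])
deck.record("review", "claude", ["q3-plan.docx", "finance.xlsx"])
print(sorted(deck.models_used()))   # ['claude', 'gpt']
print(deck.ready_to_distribute())   # False
deck.approved_by = "finance-lead"
print(deck.ready_to_distribute())   # True
```

Recording the model per step, rather than per artifact, is what makes "which model touched which step" answerable after the fact.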

Researcher Critique and Model Council

Microsoft’s Researcher upgrades are some of the most interesting parts of the rollout because they show the company thinking like a systems designer rather than a prompt vendor. In the Critique setup, one model drafts the initial report while another model reviews it for completeness and structure. Microsoft’s support guidance says the default Critique flow uses GPT to generate the report and Claude to provide a second reasoning pass, with the review step intended to strengthen citations and reliability.

That is a notable change from single-model generation. The system is effectively admitting that no one model should be trusted to do everything alone, especially in a research workflow where accuracy matters. By building a reviewer layer into the product, Microsoft is operationalizing the idea that AI quality improves when models are allowed to inspect one another.
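The Critique flow can be sketched as a two-model pipeline. The version below is a hypothetical illustration in Python, not Microsoft’s implementation: `stub_drafter` and `stub_reviewer` are placeholder functions standing in for GPT and Claude, the prompt wording is invented, and the final revision pass (the drafter rewriting its report using the reviewer’s notes) is an assumption, since the source only says that one model drafts and another reviews.

```python
from typing import Callable, Dict

Model = Callable[[str], str]  # hypothetical: prompt in, text out


def critique_pipeline(question: str, drafter: Model, reviewer: Model) -> Dict[str, str]:
    """Draft with one model, review with another, then revise (illustrative only)."""
    draft = drafter(f"Write a short research report answering: {question}")
    review = reviewer(
        "Review this report for completeness, structure, and weak or missing "
        f"citations. List concrete fixes.\n\n{draft}"
    )
    final = drafter(
        f"Revise the report using this review.\n\nREPORT:\n{draft}\n\nREVIEW:\n{review}"
    )
    # Keeping every stage makes it possible to audit what the reviewer changed.
    return {"draft": draft, "review": review, "final": final}


# Stub models so the example runs offline.
def stub_drafter(prompt: str) -> str:
    return "report v2" if prompt.startswith("Revise") else "report v1"


def stub_reviewer(prompt: str) -> str:
    return "Claim 2 has no source."


result = critique_pipeline("Why did churn rise in Q3?", stub_drafter, stub_reviewer)
print(result["final"])  # report v2
```

Returning the intermediate draft and review, not just the final text, is a deliberate choice: it shows a human reader what the second model objected to, which is the part most worth checking before trusting the result.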

The logic of a second pass

A critique model reduces the odds that weak sourcing or unclear structure survives into the final draft. It is not a guarantee of correctness, but it is a meaningful quality-control mechanism. In enterprise work, that matters because executives do not care whether a model produced a polished answer; they care whether the answer is defensible.

Model Council goes one step further by comparing outputs side by side. Instead of forcing a user to pick one model first, Microsoft lets the prompt fan out to multiple models and then displays the results together. That encourages users to look for differences in reasoning, detail, and tone rather than treating model selection as an act of blind faith.
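A minimal sketch of the fan-out pattern behind a side-by-side comparison, assuming each model is a plain Python function: the same prompt goes to every model concurrently, and the answers are laid out in columns. `council`, `side_by_side`, and the stub models are hypothetical names for illustration; Microsoft has not documented Model Council’s internals.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

Model = Callable[[str], str]  # hypothetical: prompt in, text out


def council(prompt: str, models: Dict[str, Model]) -> Dict[str, str]:
    """Send one prompt to every model in parallel; collect answers by model name."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: fut.result() for name, fut in futures.items()}


def side_by_side(answers: Dict[str, str], width: int = 28) -> str:
    """Render answers as fixed-width columns (truncated) for direct comparison."""
    names = list(answers)
    header = " | ".join(name.ljust(width) for name in names)
    row = " | ".join(answers[name][:width].ljust(width) for name in names)
    return header + "\n" + row


answers = council(
    "List the top Q3 risks",
    {
        "gpt": lambda p: "Three risks: churn, cost, hiring",
        "claude": lambda p: "Two risks: churn and supplier delays",
    },
)
print(side_by_side(answers))
```

The sketch deliberately does not pick a winner: the value lies in the reader inspecting the differences, and agreement between columns is not proof that either answer is correct.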

A new way to evaluate AI

This is important culturally as well as technically. Many users still judge AI by whichever answer looks nicest. Microsoft’s side-by-side comparison pushes users toward a more disciplined evaluation process, where they can inspect competing outputs and decide which one fits the task. That should produce better habits over time, especially for workers who are trying to understand model limitations.

- Critique introduces a built-in review layer.

- Model Council enables direct comparison.

- Researcher becomes less of a chatbot and more of a workflow.

- Users can see differences in structure and reasoning.

- The feature set encourages more critical AI literacy.

Work IQ, Security, and the Enterprise Boundary

Microsoft keeps stressing that these features are grounded in Work IQ and protected by Microsoft’s security and compliance stack. That framing is important because it addresses the biggest enterprise objection to generative AI: the risk of moving sensitive data into systems that are not designed for workplace governance. Microsoft’s official materials repeatedly link the new model-choice features with the company’s broader enterprise controls.

The promise is straightforward. Users can get external model diversity without leaving the Microsoft 365 boundary. In theory, that keeps the data flow inside a managed environment where identity, permissioning, retention, and audit are already familiar to IT departments. It also lets Microsoft position Copilot as a safe alternative to shadow AI usage.

What enterprise buyers will ask

That said, enterprise buyers will not stop at the marketing language. They will want to know which model handled which data, how prompts are routed, what data is retained by each provider, and what admin controls are available. They will also want clear answers about regional processing, tenant boundaries, and compliance obligations.

This is where Microsoft’s role as platform provider becomes both an advantage and a burden. A platform can unify controls, but it also inherits responsibility for explaining a very complicated chain of model use. Once multiple vendors are in the loop, governance becomes more than a checkbox; it becomes a central product requirement.

Consumer vs enterprise reality

Consumers may mostly see convenience and better answers. Enterprises will see policy, identity, and risk management. That split is significant, because workplace AI succeeds or fails on the enterprise side long before it becomes a consumer habit. If Microsoft can make multi-model Copilot feel safe by default, it has a strong chance of turning a technical differentiation into a procurement advantage.

- Security and compliance remain the main enterprise selling points.

- Work IQ is the context layer that ties the system together.

- Data governance becomes harder as more models participate.

- Admin visibility will be a major purchase criterion.

- Trust will depend on clear routing and policy controls.

Competitive Positioning Against OpenAI, Anthropic, and Google

Microsoft’s move is not just about product quality. It is also a strategic repositioning in the broader AI platform race. By hosting both OpenAI and Anthropic inside Microsoft 365, Microsoft is telling customers that it is the place where the best models can be used pragmatically, rather than the company betting the entire suite on a single AI partner.

That is a subtle but important message to the market. If OpenAI is the model innovator and Anthropic is the safety-and-reasoning alternative, Microsoft wants to be the enterprise distribution layer that can absorb both. In that sense, Copilot becomes less like a fixed feature and more like an intelligence marketplace.

The Google and OpenAI angle

For Google, the challenge is that Microsoft can now argue its productivity stack is more open in model choice while still being tightly integrated with enterprise workflow. For OpenAI, the challenge is more delicate: Microsoft remains a crucial channel, but it is no longer acting as if OpenAI alone should define the Copilot experience. That weakens the sense of exclusivity that once surrounded the partnership.

This does not mean Microsoft is abandoning OpenAI. Far from it. Microsoft’s official language is additive, not substitutive, and the company says the latest OpenAI models remain central to Copilot. But the optics matter, and the optics are now unmistakably multi-model.

Why this could reshape expectations

Once enterprise users get used to switching models inside one interface, they may begin to expect model choice everywhere. That could push rivals to offer similar orchestration, more transparent benchmarking, or deeper specialization by task. In other words, Microsoft may not just be reacting to model competition; it may be training the market to demand it.

- Microsoft now positions itself as model-neutral at the interface layer.

- OpenAI remains important, but no longer exclusive.

- Anthropic gains distribution inside a major enterprise suite.

- Google faces a stronger comparison point inside productivity software.

- Customers may start expecting model choice as a standard feature.

Strengths and Opportunities

Microsoft’s approach has several obvious strengths. It keeps the user inside a familiar workflow, reduces tool fragmentation, and gives organizations more latitude to match models to tasks. It also opens the door to better AI quality because the system can draft, critique, and compare rather than forcing a single model to do every job alone.

The opportunity is even broader than immediate productivity gains. If Microsoft executes well, Copilot can become the default enterprise environment where model experimentation happens under governance, not in unsanctioned browser tabs. That would make Copilot both a productivity product and a control plane for workplace AI.

- Lower friction for users who need multiple AI models.

- Better task fit when different models excel at different jobs.

- Stronger governance because work stays inside Microsoft 365.

- Improved research quality through critique and side-by-side review.

- More customer choice without adding new standalone tools.

- Potential lock-in reduction by making Copilot feel less tied to one provider.

- A stronger platform story for Microsoft’s enterprise AI roadmap.

Risks and Concerns

The biggest risk is that multi-model complexity creates more confusion than clarity. Users may not know which model to trust, admins may struggle to document the routing rules, and legal teams may worry about data handling across providers. The more powerful the orchestration, the more important the policy layer becomes.

There is also a quality risk. A critique model can improve drafts, but it can also create false confidence if users assume two models agreeing means the answer is correct. That is especially dangerous in research or compliance-heavy workflows where a plausible error can travel quickly through an organization.

- Governance complexity increases with every additional model.

- Data handling questions become more difficult to explain and audit.

- False confidence may rise if users over-trust critique workflows.

- Feature fragmentation could confuse nontechnical users.

- Rollout inconsistency through Frontier may frustrate organizations seeking stability.

- Vendor dependency shifts rather than disappears entirely.

- Overreliance on automation could weaken human review habits.

Looking Ahead

The near-term question is whether Microsoft keeps this a Frontier novelty or turns it into a mainstream Copilot expectation. If the company can prove that model choice improves outcomes without overwhelming users, the multi-model approach could become one of the defining features of Microsoft 365 in the AI era. If it feels too complex, however, most users will fall back to whatever default is easiest.

The other major question is how fast Microsoft expands this architecture beyond Researcher and chat. Once users become comfortable comparing outputs inside Microsoft 365, the pressure will rise to bring similar flexibility into more apps and more agent surfaces. That would make Copilot less like a single assistant and more like a workplace AI operating system.

What to watch next

- Whether Frontier features graduate to broad availability.

- How Microsoft expands Model Council and Critique across more workflows.

- Whether enterprises get finer-grained admin controls for model routing.

- How quickly Microsoft adds new model families or versions.

- Whether user demand shifts from “best answer” to “best model for this task.”
