Microsoft and NVIDIA are not promising a miracle cure for artificial general intelligence so much as attacking a more mundane obstacle that may determine how quickly AI can scale: the physical bottleneck of power and permitting. The larger story here is not that AGI is around the corner, but that the infrastructure race beneath AI is intensifying, and nuclear energy has become one of the clearest pressure valves. Microsoft’s own recent energy messaging now explicitly places nuclear, grid modernization, and other carbon-free technologies inside its long-term AI strategy, while NVIDIA is pushing its simulation stack deeper into industrial and physical-world workflows. (blogs.microsoft.com)

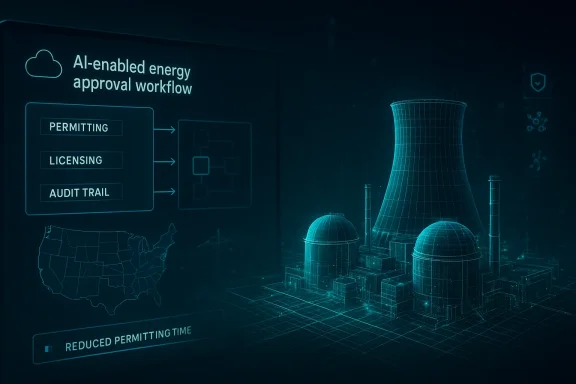

At the center of this push is Microsoft’s Generative AI for Permitting effort, a Garage-born project that reframes licensing as an automation problem instead of a paperwork inevitability. Microsoft says the work targets the “single biggest bottleneck” in clean-energy deployment, and Idaho National Laboratory has separately confirmed that it is collaborating with Microsoft to streamline nuclear permitting and licensing using Azure AI services. In other words, this is not just branding; it is becoming a formalized toolchain with real institutional partners. (microsoft.com)

The significance is broader than nuclear alone. If AI can compress the time it takes to assemble, review, and maintain regulatory documentation, then the same pattern could apply to grid projects, transmission buildouts, and other energy infrastructure that currently moves at the speed of compliance. That is why the Microsoft-NVIDIA combination matters: Microsoft brings the workflow and cloud layer, NVIDIA brings simulation, digital-twin, and physics-heavy tooling, and both companies are betting that software can shave years off the path to electrons on the grid. (investor.nvidia.com)

A second concern is safety and validation. Even if AI only drafts documents, bad outputs can propagate errors, and errors in nuclear contexts are not cheap. The human-in-the-loop design helps, but it also means the software must be better than simply adding more staff and better process discipline.

There is also a broader market test underway. If hyperscalers continue using AI to de-risk nuclear and other firm-power technologies, then the relationship between cloud and energy will deepen from customer-supplier to co-developer. That would reshape capital allocation in both sectors and likely accelerate a wave of platform competition around regulated industrial AI. (blogs.microsoft.com)

Source: Neowin, “Microsoft and NVIDIA are solving the one bottleneck standing between us and AGI”

Overview

The current wave of AI infrastructure demand has forced a reappraisal of what counts as a strategic asset. GPUs matter, but so do land, transmission, cooling, permits, and baseload power. Microsoft’s February 2026 sustainability update made that plain by stating that the company is pursuing a broader portfolio of carbon-free energy technologies, including nuclear energy, next-generation grid infrastructure, and carbon capture, while also saying it will continue to use AI-driven tools to design, permit, and deploy new power technologies faster. (blogs.microsoft.com)

That shift did not happen in a vacuum. Microsoft has spent years building one of the largest corporate clean-energy portfolios in the world, with the company saying it has contracted 40 GW of new renewable energy supply across 26 countries since its 2020 carbon-negative announcement. The company’s logic is increasingly pragmatic: renewables remain central, but intermittent generation alone does not solve the rising demand curve created by AI data centers and industrial compute. (blogs.microsoft.com)

Why the bottleneck is no longer just compute

The new constraint is not purely chip supply or model scaling. It is the timeline for bringing new power online, and especially the time required to license, finance, and construct advanced energy assets. Microsoft’s Garage project says permitting can take more than a decade and hundreds of millions of dollars for a single nuclear plant, which is why the company frames the problem as one of document generation, review, and regulatory coordination. (microsoft.com)

That framing is powerful because it moves the debate from ideology to throughput. A process that requires thousands of engineering pages, repetitive validation, and multiple regulatory handoffs is exactly where generative AI can be most useful, if the outputs remain auditable and the humans stay in control. The industry is effectively asking whether AI can become an accelerator for bureaucracy without becoming a substitute for safety.

The clean-energy competition is accelerating

Microsoft is not alone. Google has signed agreements with Kairos Power for multiple small modular reactors, Amazon has announced nuclear-energy agreements tied to SMRs, and both companies openly position nuclear as a solution to AI-era electricity needs. That matters because corporate demand is no longer just buying power; it is helping shape the future build pipeline for advanced nuclear. (blog.google)

The implication is that AI firms are becoming infrastructure patrons. Instead of merely consuming grid capacity, they are underwriting technologies that may expand it, especially where regulators and developers need credible long-term offtake commitments. This is where Microsoft’s permitting tools and NVIDIA’s simulation tools fit the market: they are attempts to reduce friction on the supply side while data-center demand keeps rising on the demand side. (blogs.microsoft.com)

Microsoft’s Energy Strategy

Microsoft’s position has evolved from aggressive renewable procurement to a more diversified carbon-free portfolio. The company now says that achieving carbon-negative goals requires an “all-of-the-above” decarbonization strategy, and it explicitly lists nuclear energy among the technologies needed to balance reliability with emissions reduction. That is a meaningful shift because it signals that Microsoft views intermittency, not just carbon intensity, as the central problem. (blogs.microsoft.com)

The company also has skin in the game. Its announcement that it is working with Constellation Energy to restart the 835 MW Crane Clean Energy Center shows that this is not theoretical advocacy but capital-backed power procurement. At the same time, Microsoft continues to support fusion research through its Helion partnership, underscoring that it sees multiple paths to firm carbon-free power rather than betting everything on one technology. (blogs.microsoft.com)

From PPAs to firm power

For years, corporate sustainability was dominated by power purchase agreements for wind and solar. Those deals still matter, but they do not always align with around-the-clock industrial load profiles, especially for AI infrastructure that runs continuously and is growing quickly. Microsoft’s current strategy suggests that the company wants cleaner power that is also more dispatchable, more durable, and less exposed to weather-driven volatility. (blogs.microsoft.com)

That is one reason nuclear is back in the conversation. Nuclear’s appeal is not rhetorical; it is practical. It produces large quantities of steady power from a relatively small footprint, which is attractive for data-center corridors where land, transmission, and local grid capacity are all at a premium. (blog.google)

The procurement logic behind the pivot

Microsoft’s recent public statements make clear that the company expects energy policy, grid planning, and AI infrastructure to converge. It says it will continue to “design, permit and deploy new power technologies” using AI-driven tools, which implies that energy procurement is no longer being treated as a back-office utility function but as a strategic capability. (blogs.microsoft.com)

That shift also reflects a competitive reality. If one cloud provider can secure power sooner and more reliably than another, it can expand faster, sign more customers, and place more AI workloads. In that sense, the clean-energy strategy is also a business-development strategy, and Microsoft appears to understand that better than many legacy energy buyers. (blogs.microsoft.com)

NVIDIA’s Simulation Advantage

NVIDIA’s contribution is less about legal paperwork and more about the physics of building things in the real world. The company has steadily expanded Omniverse, Cosmos, and related simulation tools to support physical AI, digital twins, and synthetic-data generation across industrial use cases. In March 2025, NVIDIA said Microsoft was among the first major partners integrating Omniverse into next-generation software products and services. (investor.nvidia.com)

That matters because nuclear projects are not ordinary software deployments. They are complex systems where geometry, materials, safety cases, and operational procedures all interact. Simulation software can help teams test designs, inspect workflows, and generate more consistent digital records before a project ever reaches a regulator’s desk.

Digital twins and industrial credibility

NVIDIA’s broader Omniverse push is built on the idea that physical AI needs a digital mirror. The company says its platform enables physically accurate digital twins, large-scale synthetic data generation, and simulation environments that help developers understand and optimize the physical world. That is especially relevant to nuclear, where mistakes are costly and traceability is non-negotiable.

The industrial angle is critical. Digital twins are not just prettier dashboards; they are a way to keep engineering, operations, and documentation synchronized. In regulated sectors, that can reduce duplication, preserve institutional memory, and surface inconsistencies earlier in the process, which is where the return on investment starts to become more than technical theater. (investor.nvidia.com)

Why Omniverse matters to permitting

At first glance, simulation and permitting may seem like separate problems. In practice, they are linked, because every major nuclear license package depends on engineering assumptions that must be captured, checked, and explained. If simulation environments can generate more structured, reusable data, then AI-based permitting systems can draft better first-pass documents and reduce the amount of manual reconciliation needed later.

This is where NVIDIA’s stack becomes more than a graphics story. Omniverse, Earth-2, and PhysicsNeMo are part of a broader effort to model reality well enough that industrial AI can help manage it. In the nuclear context, that could mean faster iteration on design and better alignment between engineering artifacts and the regulatory narrative.

The Permitting Problem

If AI is the headline, permitting is the real plot. Microsoft’s Garage materials are unusually blunt: permitting is described as the “single biggest bottleneck” to clean-energy deployment, and in some cases licensing a nuclear plant can take more than a decade and cost hundreds of millions of dollars before the plant produces any power. That is the sort of obstacle that technology companies love to frame as an automation challenge. (microsoft.com)

The Idaho National Laboratory collaboration confirms the premise. INL said the Microsoft-built solution would help generate engineering and safety analysis reports, ingest and analyze documents, and automate the construction of licensing materials while leaving the actual analysis and verification to humans. That distinction is essential, because regulators are not outsourcing judgment; they are trying to reduce the clerical drag around it.

What AI can and cannot do here

AI is well suited to drafting, organizing, cross-referencing, and standardizing content pulled from large document sets. It is less suited to making safety determinations, resolving novel technical disputes, or validating reactor physics on its own. Microsoft and INL both describe the workflow as assistive rather than autonomous, which is the right framing for a high-consequence domain.

That said, even assistive systems can materially change project velocity. If the first draft of a licensing package moves from months to days, then engineers spend less time hunting citations and more time on substantive problem-solving. For an industry plagued by labor scarcity and regulatory friction, that is a real productivity gain, not just a minor convenience. (microsoft.com)
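The assistive-rather-than-autonomous split described above can be pictured as a release gate: software may draft sections, but nothing leaves the building without a named human sign-off. The following is a minimal sketch under stated assumptions, not a description of Microsoft's or INL's actual tooling. The names `DraftSection`, `sign_off`, and `release_package` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DraftSection:
    """One AI-drafted section of a hypothetical licensing package."""
    title: str
    text: str
    reviewed_by: Optional[str] = None  # human engineer who verified the section


def sign_off(section: DraftSection, reviewer: str) -> None:
    """Record that a named human has verified the section."""
    if not reviewer.strip():
        raise ValueError("a named human reviewer is required")
    section.reviewed_by = reviewer


def release_package(sections: list[DraftSection]) -> list[str]:
    """Return section titles for release, refusing if any lacks human sign-off."""
    pending = [s.title for s in sections if s.reviewed_by is None]
    if pending:
        raise RuntimeError(f"unreviewed sections: {pending}")
    return [s.title for s in sections]
```

The design choice worth noting is that the gate fails closed: a package with even one unreviewed section raises an error instead of being released with a warning.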

Why the document layer matters so much

Nuclear licensing is a paperwork-intensive discipline because every claim has to be traceable. Safety analysis reports, operating license materials, engineering justifications, and compliance mapping all need consistent language and disciplined version control. That is exactly the kind of workflow where generative AI can help assemble structure from fragmented source material, provided the human review loop remains mandatory.

The deeper insight is that bureaucracy is often a data-integration problem wearing a legal costume. Once you see it that way, the promise of AI becomes more concrete: not to relax standards, but to create a system where standards can be applied more quickly and more consistently. That is a much more defensible value proposition than “AI will replace regulators.”
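The traceability requirement described in this section lends itself to a simple illustration: treat each statement in a draft as a record that must point at one or more source documents, then flag anything that does not. This is a rough sketch assuming a toy data model. `Claim`, `untraced_claims`, and the document IDs are invented for the example and do not correspond to any real licensing system.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    """A single statement in a draft report, with the source documents it cites."""
    claim_id: str
    text: str
    source_ids: list[str] = field(default_factory=list)


def untraced_claims(claims: list[Claim], known_sources: set[str]) -> list[str]:
    """Return IDs of claims that cite nothing, or cite a document not on record."""
    flagged = []
    for claim in claims:
        missing = [s for s in claim.source_ids if s not in known_sources]
        if not claim.source_ids or missing:
            flagged.append(claim.claim_id)
    return flagged


claims = [
    Claim("C1", "Coolant flow meets the design basis.", ["CALC-001"]),
    Claim("C2", "Seismic margin is adequate."),                # no source cited
    Claim("C3", "Material spec is unchanged.", ["SPEC-999"]),  # unknown source
]
print(untraced_claims(claims, {"CALC-001", "SPEC-002"}))  # ['C2', 'C3']
```

A check like this does not judge whether a claim is true. It only surfaces where the paper trail is incomplete, which is the clerical work the article argues can be automated while review stays with humans.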

The Microsoft-NVIDIA Stack

The partnership works because each company brings a different layer of the infrastructure puzzle. Microsoft contributes Azure, enterprise governance, agent frameworks, and a path into regulated-sector workflows. NVIDIA contributes simulation engines, digital-twin tooling, and the computational backbone needed to make physically grounded models useful at scale. (investor.nvidia.com)

This is not a generic co-marketing exercise. NVIDIA’s March 2025 announcement explicitly noted that the Microsoft Azure Marketplace now features preconfigured Omniverse instances and Omniverse Kit App Streaming on NVIDIA A10 GPUs, which is a concrete sign that the partnership is already embedded in cloud distribution channels. That kind of integration is often where abstract ecosystems become real products. (investor.nvidia.com)

Azure as the control plane

Microsoft’s advantage is that Azure can serve as the governance layer for regulated workflows. Nuclear developers need access controls, audit trails, data residency options, and tight identity management, all of which align with Microsoft’s enterprise strengths. In a sector where data sensitivity matters, cloud convenience alone is not enough; trust architecture is the real product.

That is also why the Azure story extends beyond one flagship use case. Microsoft has positioned its energy and resources portfolio around modular AI scenarios, from permitting to operational knowledge reuse. The result is a platform approach rather than a one-off application, which is a more durable way to capture industrial workflows.

NVIDIA’s role in the model of the physical world

NVIDIA’s industrial strategy is to make simulation central to AI deployment. Omniverse and Cosmos are increasingly about world modeling, synthetic data, and physical reasoning, which fits nuclear better than consumer-facing AI does. A reactor project is not asking the model to write poetry; it is asking it to help represent a complex physical system accurately enough to be useful.

That matters because nuclear is a domain where bad abstractions can be expensive. By grounding the workflow in digital twins and physics-based simulation, the Microsoft-NVIDIA stack has a better chance of surviving contact with regulators and engineering teams. In a safety-critical industry, plausible is not good enough; the tools need to be operationally credible.

The Partner Ecosystem

Microsoft’s nuclear AI strategy is broader than NVIDIA. The company has been assembling a network of utilities, labs, and startups around Azure-based AI workflows, signaling that it wants to be the connective tissue for the industry rather than a single-solution vendor. That ecosystem approach is important because no one player can modernize the entire nuclear lifecycle alone.

The Idaho National Laboratory partnership is the clearest validation point, because INL sits at the center of U.S. nuclear R&D and licensing expertise. The laboratory said the solution is designed to help generate standard regulatory reports and support developers working on both new reactors and upgrades to existing plants, which gives the effort a practical, not just theoretical, footprint.

Southern Nuclear and operational knowledge reuse

Microsoft has also pointed to Southern Nuclear as an example of AI agents being deployed across a fleet to improve knowledge reuse and decision-making. That use case is quieter than permitting, but in some ways it is more telling: once a power company starts treating institutional memory as a machine-assisted asset, AI becomes part of operations rather than just experimentation.

Operational knowledge reuse is a hidden superpower in nuclear, where every procedure, maintenance lesson, and compliance record has value over decades. AI agents can help surface that knowledge faster, especially in organizations where expertise is distributed across plants, teams, and generations of workers. That reduces the risk that critical context stays trapped in inboxes or PDFs.

Startups and specialization

The inclusion of startups such as Everstar and Atomic Canyon shows that Microsoft understands the need for domain-specific tooling. Energy infrastructure is too specialized for one general-purpose model to do everything well, which is why niche vendors that encode regulatory knowledge, data curation, and workflow specificity remain essential.

This is a healthy sign for the market. It suggests the platform layer and the specialist layer can coexist, with Azure providing scale, compliance, and distribution while startups build focused nuclear applications on top. That division of labor is likely to produce better tools than any “one-size-fits-all” enterprise AI rollout.

Enterprise vs Consumer Impact

For consumers, the most visible impact is indirect: more reliable power, fewer grid bottlenecks, and possibly a slower rise in AI-related energy costs if infrastructure becomes easier to deploy. That is not a flashy benefit, but it is the kind of systemic improvement that eventually shows up in reliability metrics, price stability, and regional economic growth. (blogs.microsoft.com)

For enterprises, especially utilities and reactor developers, the impact is immediate and operational. A better permitting workflow can shorten project timelines, reduce document churn, improve traceability, and help teams standardize knowledge across large organizations. In a regulated market, those are not minor efficiencies; they can determine whether projects move at all.

Different timelines, different payoffs

Consumers will experience this through the electricity system long before they ever hear about a licensing model or simulation platform. Enterprise buyers, by contrast, can begin realizing value now through workflow compression and better internal coordination. That asymmetry is why infrastructure AI often looks boring from the outside but transformative from the inside.

The important distinction is that consumer benefit depends on successful physical deployment, while enterprise benefit can appear as soon as the software is adopted. If Microsoft and NVIDIA can make the administrative layer faster without compromising safety, the enterprise value may arrive years before the grid benefits fully materialize.

Why regulated industries are the proving ground

Nuclear is a harsh test environment for AI because the tolerance for error is extremely low. That makes it an excellent proving ground for responsible generative AI, because the systems that survive here are more likely to be trustworthy elsewhere. If AI can help in nuclear permitting, it can likely help in other document-heavy, compliance-heavy sectors too.

This is why the broader market should pay attention. The real prize is not just faster nuclear licensing, but a reusable template for regulated-industrial AI. Once a workflow is validated in one of the world’s most demanding sectors, it can be repackaged for energy, aviation, mining, manufacturing, and government.

Competitive Implications

Microsoft and NVIDIA are not just solving a bottleneck; they are defining the terms of competition for AI-era infrastructure. If they can own the workflow where energy projects are designed, modeled, documented, and licensed, they gain strategic influence over the pace of physical-world expansion. That is a subtle but powerful form of market power. (investor.nvidia.com)

Their rivals are moving in the same direction, but not identically. Google is pairing AI with nuclear developers and utilities, Amazon is tying SMRs to cloud growth, and these moves collectively suggest that hyperscalers are becoming long-term patrons of advanced energy. The competitive question is no longer who buys the most power today, but who helps create the fastest path to new power tomorrow. (blog.google)

A race to shape the regulatory stack

There is a strategic difference between financing generation and speeding approval. Financing can add certainty, but permitting determines whether certainty turns into megawatts. Microsoft’s emphasis on document automation and NVIDIA’s on physical simulation place both companies upstream of construction, which may prove more valuable than simply consuming the output once it exists.

This also creates lock-in potential. If regulators, developers, and utilities standardize around specific AI workflows and data structures, the vendor ecosystem that controls those workflows can become deeply embedded. In enterprise technology, the company that owns the process often owns the future budget.

The market signal to watch

The clearest signal is whether these tools move from pilot-stage novelty into recurring project infrastructure. If more utilities, labs, and developers adopt Microsoft’s permitting stack or NVIDIA’s simulation layer, then the partnership becomes a durable platform play. If not, it risks becoming another impressive demo that never quite escapes the conference circuit.

For now, the signal is encouraging. Microsoft is embedding the strategy in public sustainability language, NVIDIA is integrating its tools into cloud marketplaces, and the nuclear ecosystem is actively participating rather than resisting. That combination suggests that the market has moved past “Should we do this?” and into “How quickly can we industrialize it?” (blogs.microsoft.com)

Strengths and Opportunities

The biggest strength of this initiative is that it tackles a real, measurable bottleneck rather than an abstract AI fantasy. By focusing on permitting, documentation, and simulation, Microsoft and NVIDIA are aiming at the operational choke points that actually slow nuclear deployment. That makes the effort more credible than many grand claims about AI transformation. (microsoft.com)

A second strength is ecosystem depth. Microsoft has utilities, labs, and startups using Azure-based tooling, while NVIDIA is pushing Omniverse into cloud channels and industrial workflows. That combination gives the effort distribution, technical depth, and partner variety.

- Faster permitting drafts could reduce project lead times.

- Better document consistency may lower review friction.

- Digital twins can improve engineering alignment.

- Cloud governance is a strong fit for regulated industries.

- Partner diversity reduces dependence on one use case.

- Enterprise AI monetization is more durable than demo-driven adoption.

- Cross-sector reuse could extend the model beyond nuclear.

Risks and Concerns

The first risk is overclaiming. The phrase “standing between us and AGI” is catchy, but energy infrastructure will not magically produce general intelligence, and permitting automation will not dissolve the complexity of reactor construction. Real-world deployment still depends on politics, capital, labor, supply chains, and regulatory scrutiny. (microsoft.com)

A second concern is safety and validation. Even if AI only drafts documents, bad outputs can propagate errors, and errors in nuclear contexts are not cheap. The human-in-the-loop design helps, but it also raises the bar: the software must outperform the simpler alternative of adding staff and tightening process discipline.

- Model hallucinations could introduce documentation errors.

- Vendor lock-in may make utilities dependent on a small set of platforms.

- Regulatory skepticism could slow adoption.

- Security and confidentiality requirements raise deployment complexity.

- Public trust in AI-assisted nuclear workflows is still fragile.

- Project economics may still be unfavorable despite software gains.

- Infrastructure delays outside permitting can erase software benefits.

Looking Ahead

The next phase will be about proof, not promises. Watch whether Microsoft’s permitting tools show up in more formalized utility and lab deployments, whether NVIDIA’s simulation stack becomes part of standard nuclear project planning, and whether the combined workflow actually compresses project timelines in measurable ways. The difference between a compelling narrative and an industry standard is usually a few boring but crucial pilot programs.

There is also a broader market test underway. If hyperscalers continue using AI to de-risk nuclear and other firm-power technologies, then the relationship between cloud and energy will deepen from customer-supplier to co-developer. That would reshape capital allocation in both sectors and likely accelerate a wave of platform competition around regulated industrial AI. (blogs.microsoft.com)

What to watch

- New utility pilots using Microsoft’s permitting stack.

- Additional NVIDIA Omniverse integrations tied to energy infrastructure.

- Formal regulator feedback on AI-assisted licensing workflows.

- More nuclear offtake deals from hyperscale cloud providers.

- Evidence of timeline compression in document-heavy project phases.

Source: Neowin, “Microsoft and NVIDIA are solving the one bottleneck standing between us and AGI”