Microsoft’s pivot toward building and orchestrating its own foundation models, while simultaneously opening Copilot to third‑party models, has thrust enterprise customers, partners, and regulated industries into a new strategic calculus: hedge your model bets now or risk disruption later.

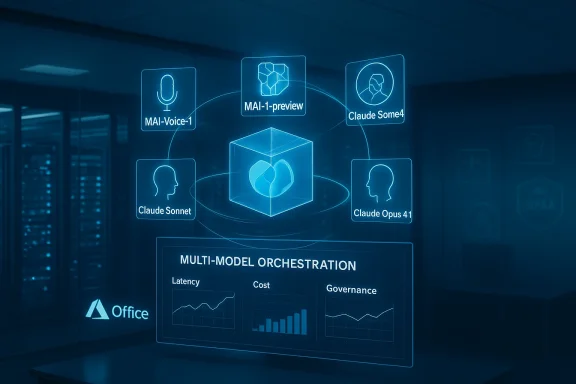

Background

Microsoft’s Copilot family has evolved from a single‑vendor integration into a multi‑model orchestration layer. That layer increasingly mixes first‑party MAI models, OpenAI’s frontier models, and third‑party offerings such as Anthropic’s Claude family. The change is both deliberate and practical: Microsoft wants product control, lower latency and cost, and the ability to optimize models tightly to its apps, while preserving customer choice through model routing.

That engineering and commercial pivot is the central theme of a recent episode of the AI Agent & Copilot Podcast. On the episode, RealActivity CEO Paul Swider urged customers, especially healthcare providers and other mission‑critical organizations, to hedge their bets, because changes in the underlying model layer will ripple up into workflows, regulatory postures, and vendor relationships.

This article unpacks the technical and business drivers behind Microsoft’s move, assesses the short‑ and medium‑term risks to customers, and offers concrete, pragmatic steps IT and procurement leaders should take now to preserve operational resilience and strategic optionality.

Why Microsoft is building its own models — and why it still buys others

Strategic motivations

Microsoft’s decision to develop in‑house models such as MAI‑Voice‑1 and MAI‑1‑preview is not an ideological pivot so much as an insurance and product strategy. Owning core models promises:

- Control over latency, cost, and feature roadmap, enabling Copilot features to be tuned tightly to Office, Windows and Azure scenarios.

- Faster product iteration and integration: voice and image models can be embedded into Copilot experiences without lengthy negotiations over third‑party roadmaps.

- Data and telemetry advantages: running more model inference on Microsoft infrastructure collects product‑level signals that can accelerate fine‑tuning for specific workloads.

Economic and geopolitical pressures

Behind the product logic sits a stack of economic pressures: compute costs, data center capacity, and franchise risk. Large models are expensive to train and serve, so owning some model capacity gives Microsoft margin and operational flexibility. At the same time, geopolitical and regulatory friction (for example, where models run and how data crosses borders) encourages hyperscalers to control more of the stack so they can deliver compliant experiences. These are precisely the dynamics Paul Swider flagged as reasons organizations should plan for upstream model changes.

What this means for customers: the practical impacts and the healthcare lens

Model churn is not an abstract problem

When the underlying model changes, several things can shift: the outputs, the latency profile, the hallucination modes, and the required prompt‑engineering practices. That holds whether Microsoft swaps in a new MAI variant or routes tasks to Anthropic Claude Opus 4.1. Such a change can be harmless for simple chat queries. It can be consequential for clinical summarization, revenue‑cycle automation, or any workflow that directly touches patient care.

Swider’s advice to healthcare organizations was blunt: audit dependencies, build testing plans, and budget for transition validation. He argues that organizations should prepare for model switches in mission‑critical paths now, because testing after the fact is costly and risky.

The promise — and the caveat — of vertical specialization

There is a plausible upside: a Microsoft that controls its own models can accelerate healthcare‑oriented capabilities. Examples include embedding stronger HIPAA controls at the model level, training on clinical corpora to improve clinical reasoning, or integrating tightly with Azure Health Data Services and FHIR APIs. Paul Swider’s healthcare‑focused analysis suggests these capabilities could materialize if Microsoft invests specifically in healthcare model tuning. He also cautions that vendor control risks narrowing competition and customer choice. We can corroborate that Microsoft’s healthcare attention is growing, and Swider’s perspective adds industry urgency.

Examples to watch

- Clinical documentation assistants and automated discharge summaries: small changes in a model’s hallucination profile or tokenization could change coding accuracy and compliance exposure.

- Billing and coding automation: model‑driven decisions that impact revenue need deterministic validation and rollback plans.

- Agentic workflows that act on systems of record (scheduling, claims submission): when agents receive new model outputs, they must be revalidated end‑to‑end — not just in the chat window.

Evidence and verification: what the public record shows

- Microsoft’s official announcement expanding model choice in Microsoft 365 Copilot confirms Anthropic models such as Claude Sonnet 4 and Claude Opus 4.1 are available to customers in scenarios like Researcher and Copilot Studio, with initial access via its Frontier program. That announcement underscores Microsoft’s multi‑model orchestration approach.

- Microsoft’s AI organization publicly introduced first‑party models: MAI‑Voice‑1 (speech) and MAI‑1‑preview (text). It described MAI‑Voice‑1 as a highly efficient speech generator and MAI‑1‑preview as an instruction‑following foundation model tested on LMArena. Microsoft’s MAI blog and multiple independent outlets reported the launches and their technical claims (e.g., MAI‑1‑preview trained using a mixture‑of‑experts approach on ~15,000 NVIDIA H100 GPUs). Those training and efficiency numbers are Microsoft’s own reported figures. Industry coverage repeats them rather than independently measuring them.

- Microsoft AI chief Mustafa Suleyman publicly warned that AI could achieve “human‑level performance on most, if not all, professional tasks” within a 12‑ to 18‑month window, comments that were widely reported following his Financial Times interview. That timeline is notably aggressive and widely discussed across major outlets; it should be treated as a forecast from Microsoft leadership rather than a settled industry fact.

- Incidents such as a recent Copilot bug that exposed confidential emails underline the operational risk of rapid feature expansion; security and privacy incidents can amplify regulatory scrutiny and customer mistrust. These real‑world failures emphasize why customers must treat AI features as production services requiring observability and incident response.

Critical analysis: strengths, risks, and structural trade‑offs

Strengths of Microsoft’s approach

- Platform coherence and integration: First‑party models let Microsoft bake AI into product UX in ways third parties cannot match. Faster iteration on product experiences is a practical benefit for end users.

- Model orchestration and choice: By integrating Anthropic and OpenAI choices into Copilot, Microsoft reduces vendor lock‑in risk for enterprise customers and enables workload‑specific optimization. That design is a structural hedge for both Microsoft and customers.

- Operational efficiency: Microsoft’s public claims about model efficiency (e.g., MAI‑Voice‑1 generating high‑quality audio with minimal GPU usage) indicate real engineering work to reduce cost and latency that can broaden product availability.

Risks and open questions

- Customer lock‑in vs. model choice paradox: Building first‑party models while integrating third‑party models creates a tension. The more Microsoft optimizes Copilot toward MAI, the less incentive third parties may have to invest in deep integrations specific to Microsoft, which could subtly erode competitive choice over time. Swider warns this could reduce competitive pressure. That risk is real and should be actively managed by customers.

- Operational instability from model churn: Frequent model updates or routing changes can break downstream workflows. For regulated industries — where auditability, reproducibility and deterministic outputs matter — this is not hypothetical. Organizations must assume model‑level churn and prepare accordingly.

- Regulatory and compliance exposure: New models may change how data is processed, where it moves, and what protections apply (for instance, data residency or HIPAA applicability). Swider highlights the importance of clarity around compliance responsibilities when models change under the vendor’s control. Until vendors publish rigorous, auditable model governance processes, customers bear compliance risk.

- Aggressive job automation forecasts may create planning noise: Mustafa Suleyman has predicted that AI will reach human‑level performance on most white‑collar tasks within 12–18 months. It is a high‑impact forecast and has been widely reported. Organizations should plan for productivity changes and reskilling pressures, but avoid single‑scenario planning based on an aggressive timeline. Use multiple scenarios and stress‑test workforce and financial models.

Practical guidance: how to hedge model‑layer risk today

Below are concrete, prioritized actions IT leaders should take over the next 90–180 days to increase resilience and preserve optionality.

1. Catalog and classify AI dependencies (30 days)

- Inventory all applications that call Copilot or other managed models (including Researcher, Copilot Studio, agentic workflows).

- Classify each dependency by impact: High (patient safety, regulatory reporting), Medium (billing, privacy‑sensitive docs), Low (internal summaries).

- Map data flows and identify where model outputs are acted upon programmatically.

This simple step exposes the surface area of risk and directs testing resources toward the highest‑impact dependencies.

2. Demand model‑change SLAs and contractual protections (60 days)

- Require vendors to include model transition clauses, backward compatibility guarantees for a defined window, and performance SLOs for mission‑critical workloads.

- Insist on auditability: model lineage, prompt versioning, and changelogs should be made available for regulated workflows.

These commercial protections convert vendor rhetoric into enforceable obligations.
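On the customer side, it helps to keep your own lineage record so you can reconcile vendor changelogs against what actually ran. The sketch below is one hypothetical shape for such a record. The field names, model identifiers (`model-a`, `model-b`) and version labels are placeholders, and nothing here reflects a vendor API.

```python
import hashlib
from dataclasses import asdict, dataclass, replace
from typing import List


@dataclass(frozen=True)
class ModelAuditRecord:
    workflow: str        # regulated workflow this record covers
    provider: str        # e.g. "MAI", "OpenAI", "Anthropic"
    model_id: str        # model name as reported by the vendor (placeholder here)
    model_version: str   # version label taken from the vendor changelog
    prompt_version: str  # your own prompt-template version tag
    prompt_sha256: str   # hash of the exact template text in use


def prompt_fingerprint(template: str) -> str:
    """SHA-256 hex digest, so any edit to a template yields a new fingerprint."""
    return hashlib.sha256(template.encode("utf-8")).hexdigest()


def changed_fields(old: ModelAuditRecord, new: ModelAuditRecord) -> List[str]:
    """Names of fields that differ between two records, in declaration order."""
    before, after = asdict(old), asdict(new)
    return [name for name in before if before[name] != after[name]]


template = "Summarize this discharge note:\n{note}"
current = ModelAuditRecord("discharge-summary", "OpenAI", "model-a",
                           "snapshot-1", "v3", prompt_fingerprint(template))
proposed = replace(current, provider="Anthropic", model_id="model-b")
print(changed_fields(current, proposed))
```

Comparing records field by field turns "the model changed" into a specific, reviewable diff. That diff can then be checked against the vendor's changelog for the same window.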

3. Build model‑agnostic integration layers (90 days)

- Implement an abstraction layer that routes prompts and receives outputs through a controlled interface (for example, a sidecar service that implements input/output validation, logging, and model selection flags).

- Use feature flags and model selectors so you can switch model backends in staging before any production cutover.

This reduces coupling and makes model swaps operationally tractable.
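Here is a minimal sketch of such an abstraction layer. It assumes each vendor is wrapped in an adapter that exposes a `complete(prompt)` method. `ModelGateway`, `StubBackend` and the flag dictionary are hypothetical names. The stub stands in for a real SDK call so the example runs without credentials.

```python
import logging
from typing import Callable, Dict, Protocol

log = logging.getLogger("model_gateway")


class ModelBackend(Protocol):
    name: str

    def complete(self, prompt: str) -> str: ...


class StubBackend:
    """Placeholder adapter; a real one would wrap a vendor SDK call here."""

    def __init__(self, name: str):
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


def non_empty(output: str) -> bool:
    return bool(output.strip())


class ModelGateway:
    """Single entry point: validate input, pick a backend by flag, log, validate output."""

    def __init__(self, backends: Dict[str, ModelBackend], flags: Dict[str, str],
                 max_prompt_chars: int = 8000):
        self.backends = backends  # backend name -> adapter
        self.flags = flags        # workload name -> backend name (the model selector)
        self.max_prompt_chars = max_prompt_chars

    def call(self, workload: str, prompt: str,
             validate: Callable[[str], bool] = non_empty) -> str:
        if not prompt.strip() or len(prompt) > self.max_prompt_chars:
            raise ValueError("prompt failed input validation")
        backend = self.backends[self.flags[workload]]
        output = backend.complete(prompt)
        log.info("workload=%s backend=%s chars_in=%d chars_out=%d",
                 workload, backend.name, len(prompt), len(output))
        if not validate(output):
            raise ValueError(f"output from {backend.name} failed validation")
        return output


gateway = ModelGateway(
    backends={"model-a": StubBackend("model-a"), "model-b": StubBackend("model-b")},
    flags={"discharge_summary": "model-a"},
)
print(gateway.call("discharge_summary", "Summarize note 123"))
gateway.flags["discharge_summary"] = "model-b"  # flip the flag in staging first
print(gateway.call("discharge_summary", "Summarize note 123"))
```

Callers only ever see `gateway.call(workload, prompt)`. Flipping a single flag value therefore moves a workload to a different backend without touching application code. In practice the flag store would live in configuration rather than in memory.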

4. Create continuous validation and monitoring (ongoing)

- Deploy automated tests that evaluate model outputs against expected behavior (accuracy, hallucination rate, latency) per workload.

- Instrument business metrics tied to model outputs (e.g., coding accuracy, reversed claims, clinical discrepancy rate).

- Establish a rollback plan if model outputs drift beyond predefined tolerances.

Think of models as software dependencies — they need CI/CD pipelines, not just vendor release notes.
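The Python sketch below encodes "drift beyond predefined tolerances" as a rollback decision. It assumes you already have a labeled test set per workload and can record, for each case, whether the output passed and how long it took. The `Tolerance` thresholds shown are illustrative placeholders, not recommended values.

```python
from dataclasses import dataclass
from statistics import quantiles
from typing import List, Tuple


@dataclass
class EvalResult:
    correct: bool      # did the output pass this case's expected-behavior check?
    latency_ms: float


@dataclass
class Tolerance:
    max_accuracy_drop: float      # e.g. 0.02 = two percentage points
    max_p95_latency_ratio: float  # e.g. 1.5 = candidate p95 may be up to 50% slower


def summarize(results: List[EvalResult]) -> Tuple[float, float]:
    """Accuracy and approximate p95 latency for one run (assumes >= 2 results)."""
    accuracy = sum(r.correct for r in results) / len(results)
    p95 = quantiles([r.latency_ms for r in results], n=20)[-1]
    return accuracy, p95


def should_roll_back(baseline: List[EvalResult], candidate: List[EvalResult],
                     tol: Tolerance) -> bool:
    """True if the candidate model drifts beyond tolerance on either metric."""
    base_acc, base_p95 = summarize(baseline)
    cand_acc, cand_p95 = summarize(candidate)
    accuracy_regressed = (base_acc - cand_acc) > tol.max_accuracy_drop
    latency_regressed = cand_p95 > base_p95 * tol.max_p95_latency_ratio
    return accuracy_regressed or latency_regressed


baseline = [EvalResult(True, 100.0) for _ in range(10)]
candidate = [EvalResult(i < 8, 110.0) for i in range(10)]  # 8 of 10 cases pass
print(should_roll_back(baseline, candidate, Tolerance(0.02, 1.5)))
```

A check like this belongs in the same CI/CD pipeline that gates application releases. That way a model update is evaluated before it reaches production, rather than discovered afterward.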

5. Maintain multi‑model proof‑of‑concepts

- Where permitted, run parallel experiments with at least two model families (MAI, OpenAI, Anthropic, or hosted alternatives) for critical tasks to compare behavior on production‑like data.

- Validate privacy, latency, and compliance posture across each provider.

Diverse pilots give you empirical grounds to pick a model rather than vendor narratives.
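Here is a small harness for the side‑by‑side pilots described above. It assumes each model family is exposed as a function from prompt to text. The backends and checks are hypothetical toy stand‑ins. A real pilot would plug in provider adapters and workload‑specific checks.

```python
from typing import Callable, Dict, List, Tuple

# A test case pairs a prompt with a check on the model's output.
Case = Tuple[str, Callable[[str], bool]]


def compare_backends(cases: List[Case], backends: Dict[str, Callable[[str], str]]):
    """Run every case through every backend; return pass rates and disagreements."""
    passes = {name: 0 for name in backends}
    disagreements = []
    for prompt, check in cases:
        verdicts = {name: check(call(prompt)) for name, call in backends.items()}
        for name, ok in verdicts.items():
            passes[name] += int(ok)
        if len(set(verdicts.values())) > 1:  # backends disagree on this case
            disagreements.append((prompt, verdicts))
    rates = {name: count / len(cases) for name, count in passes.items()}
    return rates, disagreements


# Toy stand-in backends; in a pilot these would call two different model families.
backends = {"model_a": str.upper, "model_b": lambda p: p}
cases = [
    ("flag this claim", lambda out: out.isupper()),
    ("ok", lambda out: len(out) > 0),
]
rates, disagreements = compare_backends(cases, backends)
print(rates)
print(disagreements)
```

Pass rates give the headline comparison. The disagreement list is often the more useful artifact, because those are the cases a reviewer should read before choosing a model.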

6. Operationalize governance and human oversight

- Define exception workflows and human‑in‑the‑loop checkpoints for auto‑actions that affect external stakeholders.

- Ensure audit trails and supporting documentation for inspections and compliance audits.

- Invest in reskilling programs for staff who will supervise, validate, and interpret model outputs. Swider emphasizes that organizational readiness is as much people and process as it is technology.
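Below is a sketch of a human‑in‑the‑loop checkpoint with an audit trail. It rests on two assumptions. First, every proposed auto‑action carries a confidence score produced by your own validation layer, not a vendor field. Second, actions that touch external stakeholders go to human review when that score falls below a threshold. `ProposedAction`, `Checkpoint` and the 0.9 default are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class ProposedAction:
    description: str
    affects_external_party: bool  # e.g. a claim submission or a patient-facing message
    confidence: float             # hypothetical 0..1 score from your own validator


@dataclass
class Checkpoint:
    min_confidence: float = 0.9
    review_queue: List[ProposedAction] = field(default_factory=list)
    audit_log: List[dict] = field(default_factory=list)

    def route(self, action: ProposedAction) -> str:
        """Queue low-confidence external actions for a human; log every decision."""
        needs_human = (action.affects_external_party
                       and action.confidence < self.min_confidence)
        decision = "human_review" if needs_human else "auto_execute"
        if needs_human:
            self.review_queue.append(action)
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action.description,
            "confidence": action.confidence,
            "decision": decision,
        })
        return decision


checkpoint = Checkpoint()
print(checkpoint.route(ProposedAction("Submit claim 42", True, 0.72)))
print(checkpoint.route(ProposedAction("Draft internal summary", False, 0.72)))
```

Logging every decision, including the ones that executed automatically, is what makes the trail useful in an audit. It shows both what a human reviewed and what they did not need to.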

Sector spotlight: healthcare‑specific recommendations

Healthcare systems face uniquely high stakes: patient safety, regulatory scrutiny, and complex vendor ecosystems. If your organization uses Copilot‑powered features for clinical or administrative tasks, prioritize the following:

- Treat model changes as software upgrades with a preapproved validation checklist for clinical accuracy, bias testing on representative cohorts, and impact analysis on downstream workflows.

- Negotiate explicit HIPAA and data‑processing commitments aligned to the model deployment architecture (where models run, whether PHI leaves the tenant boundary, and retention policies). If Copilot routes work to an external model, require clear documentation of that transfer.

- Establish fallback human workflows that are triggered automatically if models produce uncertain or high‑risk recommendations. Clinical staff must be able to revert to manual protocols without operational friction.
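The preapproved validation checklist mentioned above can be made mechanical. This hypothetical sketch evaluates three items against placeholder thresholds. The first is clinical accuracy. The second is the largest accuracy gap across patient cohorts, used as a simple bias proxy. The third is a downstream‑impact sign‑off. Real thresholds would come from your clinical governance process, not from this example.

```python
from typing import Dict


def cohort_gap(accuracy_by_cohort: Dict[str, float]) -> float:
    """Largest accuracy difference between any two patient cohorts."""
    values = list(accuracy_by_cohort.values())
    return max(values) - min(values)


def cutover_checklist(clinical_accuracy: float,
                      accuracy_by_cohort: Dict[str, float],
                      downstream_impact_signed_off: bool,
                      min_accuracy: float = 0.95,     # placeholder threshold
                      max_cohort_gap: float = 0.03    # placeholder threshold
                      ) -> Dict[str, bool]:
    """Evaluate each preapproved checklist item for a candidate model."""
    return {
        "clinical_accuracy": clinical_accuracy >= min_accuracy,
        "cohort_bias": cohort_gap(accuracy_by_cohort) <= max_cohort_gap,
        "downstream_impact": downstream_impact_signed_off,
    }


def approve_cutover(checklist: Dict[str, bool]) -> bool:
    """Ship the model change only if every item passes; otherwise stay put."""
    return all(checklist.values())


results = cutover_checklist(0.97, {"cohort_a": 0.97, "cohort_b": 0.90}, True)
print(results)
print(approve_cutover(results))
```

The audit trail should keep the per‑item results, not just the final boolean. That way it records which check blocked a cutover.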

The partner ecosystem and job market implications

Paul Swider and others see Microsoft’s model push creating new partner opportunities even as it disrupts older ones. Partners that can deliver model‑agnostic governance, validation tooling, and industry‑specific fine‑tuning will have demand for years to come. Microsoft’s reshaping of the AI supply chain is less a threat to the partner ecosystem than a redefinition of its value: integration, governance, and outcomes delivery matter more than point solutions.

On employment: Mustafa Suleyman’s public forecast that AI will reach human‑level performance across many white‑collar tasks in 12–18 months has already catalyzed debate. That timeline is a wake‑up call for workforce planning and reskilling investments, but organizations should build scenarios rather than rely on a single forecast. Roles will likely evolve: new jobs in agent engineering, prompt governance, monitoring, and safety will grow even as some task‑level roles decline.

A short checklist for CIOs and procurement leaders

- Audit: Identify every Copilot/agent integration and classify impact.

- Contract: Add model transition SLAs and audit clauses.

- Integration: Build a model abstraction layer and use feature flags.

- Monitoring: Automate output validation and business metric drift detection.

- Pilots: Run multi‑model A/B tests on production‑like workloads.

- People: Invest in reskilling, and define human oversight and exception workflows.

Final assessment and what to watch next

Microsoft’s layered approach is a pragmatic strategy that balances control with competitive access. It builds its own MAI models while integrating Anthropic and retaining OpenAI where appropriate. For customers, the takeaway is immediate: treat the model layer as part of your platform architecture and institutional risk register.

There are clear, positive outcomes on the horizon: better, more optimized experiences, potential vertical specialization (including healthcare), and the possibility of lower latency and cost for high‑volume scenarios. But those benefits are paired with real risks: reduced model competition, operational instability during transitions, compliance complexity, and an unsettled labor market narrative driven by aggressive automation forecasts.

Practical, actionable steps — cataloging dependencies, contractual protections, model‑agnostic integration, continuous validation, multi‑model pilots, and governance — convert anxiety into control. As Paul Swider and others argue, organizations that plan for model‑layer volatility now will be better positioned to capture the productivity gains AI promises while avoiding the painful scramble of last‑minute remediation.

The AI era in enterprise software will be defined less by single headline features and more by how reliably organizations can operate, govern, and change the models that power their workflows. In that contest, resilience, observability, and contractual clarity are the winning attributes — and they can be built, measured, and maintained long before any vendor declares “model self‑sufficiency.”

Conclusion: hedge your model bets deliberately — not as a defensive reflex, but as a foundational part of modern IT strategy.

Source: Cloud Wars AI Agent and Copilot Podcast: Microsoft AI Model 'Self Sufficiency' Requires Customers to Hedge Bets