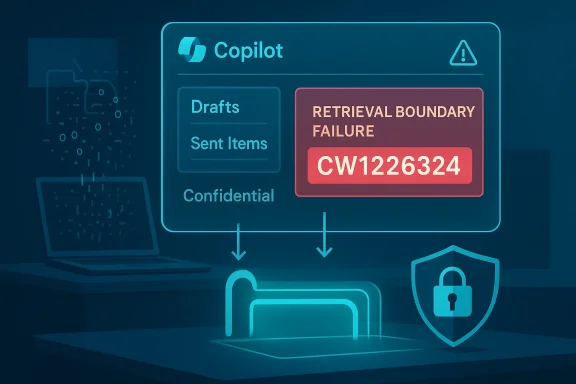

Microsoft’s enterprise Copilot has silently crossed a line: for a window of weeks earlier this year the assistant’s email summarisation pipeline was incorrectly processing messages it should have left alone, including items in users’ Sent Items and Drafts that had been explicitly labelled “Confidential.” The result: AI-generated summaries that violated the intended enforcement of Microsoft Purview sensitivity labels and Data Loss Prevention (DLP) controls, a flaw tracked internally as service advisory CW1226324 and patched with a server-side update in early February. ([bleepingcomputer.com](https://www.bleepingcomputer.com/news/microsoft/microsoft-says-bug-causes-copilot-to-summarize-confidential-emails/))

Background

Microsoft 365 Copilot, the company’s enterprise AI layer embedded across Outlook, Word, Excel and other 365 surfaces, is designed to surface context and draft content by reading and synthesising information from a user’s permitted data. The feature set promises real productivity gains: automated summaries, suggested replies, and contextual research pulled from an organisation’s own mail and documents.

Those same capabilities are the problem when retrieval logic and policy enforcement do not align. Starting around January 21, 2026, telemetry flagged anomalous behaviour in Copilot Chat’s Work tab: the assistant’s retrieval pipeline was including items from Sent Items and Drafts in its summarisation corpus even when those items carried Purview sensitivity labels or were protected by configured DLP rules. Microsoft identified the issue and rolled out a targeted fix in early February while monitoring remediation and contacting subsets of affected tenants.

What exactly went wrong — technical summary

The retrieval vs. policy enforcement gap

At the heart of this incident was a retrieval-path failure. Copilot’s summarisation flow uses a combination of indexing, Microsoft Graph queries, search results and policy checks to build the context window it passes to the underlying large language model (LLM). In the affected configuration, a server-side logic or configuration error allowed messages stored in Sent Items and Drafts to be included in Copilot’s retrieval result set before the intended Purview/DLP exclusions were applied.

- The bug did not change mailbox permissions: users who could already read the message could still read it. Microsoft emphasised that no one gained visibility into messages they weren’t already authorised to see. But the bigger issue is that the AI itself processed and summarised content that organisational policy expected Copilot to ignore.

- The behaviour appears isolated to the Copilot Chat “Work” experience, rather than all Copilot surfaces, and primarily affected items in Drafts and Sent Items, while inbox retrieval behaved as intended. Several independent reports and service alerts referenced the same operational scope.
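The ordering problem described above can be illustrated with a minimal Python sketch. This is not Microsoft's implementation: the `Message` type, the label names in `BLOCKED_LABELS`, and the `retrieve` and `build_context` functions are all hypothetical, chosen only to show a policy check applied at the retrieval step, uniformly across folders, before any text is assembled for a model.

```python
from dataclasses import dataclass
from typing import List, Optional, Set

# Hypothetical label names, used only for illustration.
BLOCKED_LABELS = {"Confidential", "Highly Confidential"}


@dataclass(frozen=True)
class Message:
    folder: str
    subject: str
    body: str
    label: Optional[str] = None  # sensitivity label, if any


def is_excluded(msg: Message) -> bool:
    """Policy check: labelled messages must never reach the model."""
    return msg.label in BLOCKED_LABELS


def retrieve(mailbox: List[Message], folders: Set[str]) -> List[Message]:
    """Apply the policy check at retrieval time, for every folder alike."""
    return [m for m in mailbox if m.folder in folders and not is_excluded(m)]


def build_context(messages: List[Message]) -> str:
    """Assemble the text that would be handed to the LLM."""
    return "\n".join(f"{m.subject}: {m.body}" for m in messages)


mailbox = [
    Message("Inbox", "Lunch", "Noon on Friday?"),
    Message("Drafts", "Offer terms", "Draft pricing for a client", "Confidential"),
    Message("Sent Items", "Status", "Weekly report sent"),
]

# The labelled draft is dropped before the context is built.
context = build_context(retrieve(mailbox, {"Inbox", "Drafts", "Sent Items"}))
print(context)
```

Because `build_context` only ever sees what `retrieve` returns, a labelled draft cannot leak into a summary regardless of what happens downstream. A design that filters after the context is assembled has no such guarantee.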

Why that matters technically

LLMs are powerful because they synthesise and compress document content into new text. If the system feeding the LLM pulls in protected documents, the generated output can reveal private information (including drafts, final wording, client names, attachment descriptions or contract terms) even when the underlying permission model remains intact. That makes the point at which content is excluded critical: exclusion must happen at the retrieval boundary, not afterwards.

Timeline and remediation

- Detection: Microsoft’s telemetry flagged the anomaly on or around January 21, 2026 and assigned internal tracking CW1226324.

- Public reporting: BleepingComputer first published a public report that brought wider attention to the matter in mid‑February, prompting follow‑up coverage by TechCrunch, Windows Central, Tom’s Guide and others.

- Fix rollout: Microsoft began rolling out a targeted server‑side configuration and code fix in early February and later said the fix had “saturated across the majority of affected environments,” while monitoring progress in a small subset of complex tenants. The company also said it was contacting tenants to validate remediation.

- Disclosure gaps: Microsoft has not published a comprehensive impact count or a detailed forensic timeline; the vendor has declined to say how many organisations were affected or whether Copilot retained logs or generated summaries that persisted beyond the immediate session. Multiple outlets noted the same absence of published figures.

Scope and impact — What we know and what remains unknown

- Known facts:

  - The issue was tracked as CW1226324 and tied to Copilot Chat’s Work tab.

  - Affected folders were primarily Sent Items and Drafts; inbox items were not reported as part of the failure.

  - Microsoft confirmed the behaviour was caused by a code/configuration error and that a server‑side fix was deployed in early February.

- Unverifiable or unreported elements:

  - Microsoft has not disclosed the number of affected tenants or users. This prevents a full assessment of the incident’s scale. Multiple news outlets and independent trackers flagged that omission. We should treat any specific impact numbers found in informal reports as unverified unless Microsoft releases an authoritative statement.

  - It remains unclear whether AI‑generated summaries were logged, retained, or used as training telemetry outside the immediate Copilot session. Microsoft’s public messages emphasise that access controls remained intact, but they do not fully explain retention or telemetry policies for generated outputs in the context of an enforcement failure. That gap is consequential and should be highlighted for compliance teams.

The enterprise and regulatory angle

This incident lands where capability meets governance. Organisations, especially in regulated sectors, rely on Purview sensitivity labels and DLP to guarantee legal and contractual protections. When a vendor’s AI layer misinterprets or bypasses those protections, the ramifications are both technical and legal.

- Public sector sensitivity: The issue attracted attention inside the UK NHS and prompted concern across government IT teams. The European Parliament and other public bodies have been cautious about enabling built‑in AI on work devices; this episode only reinforced those reservations.

- Compliance risk: For teams governed by GDPR, HIPAA, financial conduct rules, or contractual confidentiality clauses, an AI that processes labelled content without explicit contractual treatment could raise compliance questions. Even if no external unauthorised user accessed the content, the mere processing by an AI pipeline may require incident notification, contract review and audit evidence in regulated contexts. Industry advisories urged affected organisations to review control evidence, telemetry, and Microsoft’s remediation notes.

- Trust implications: Beyond immediate legal concerns, the incident weakens trust in embedded cloud AI as a "safe" automation layer. Enterprises that assumed Purview/DLP extended seamlessly into vendor AI workloads now face a fresh governance challenge: verifying that vendor-supplied features respect the full stack of organisational controls.

Microsoft’s response — adequate or insufficient?

Microsoft’s technical response was prompt: it shipped a server-side fix and contacted tenants. Its public communications, however, mixed competence with opacity.

- Strengths in Microsoft’s response:

  - Rapid remediation: Microsoft identified the root cause, developed a targeted fix, and began global rollout in early February; monitoring and tenant outreach continued until the fix reached more complex environments. That technical containment is a necessary first step.

  - Precise root‑cause classification: The company attributed the behaviour to a code/configuration error rather than a malicious exploit, which reduces (but does not eliminate) the immediate threat model.

- Where Microsoft fell short:

  - Transparency: Microsoft has not provided a full disclosure of the number of impacted organisations, nor has it published a detailed post‑mortem describing retention, telemetry, or what (if any) Copilot logs were generated from summarised confidential content. The absence of those details leaves compliance teams in the dark and fuels suspicion.

  - Communication clarity: Saying “no one gained access to information they were not already authorized to see” is technically accurate yet insufficient. It sidesteps the crucial question: Should an automated AI process be permitted to ingest and summarise content that policy explicitly marked as protected? For many security and compliance officers, the answer is a firm no.

Broader industry context — not an isolated pattern

Microsoft’s Copilot is not the first enterprise AI to stumble at the boundary between convenience and control. The rapid deployment of retrieval‑augmented generation (RAG) systems, autonomous agents and productivity assistants has created repeated instances where policy enforcement and AI retrieval interact poorly.

- Similar incidents and patterns:

  - Prior Copilot vulnerabilities and other Copilot‑era CVEs have shown that retrieval pipelines and connectors are frequent failure points. Public trackers and incident repositories document multiple Copilot-related info‑disclosure events in recent years.

  - Industry observers have warned about prompt injection, shadow AI usage, and unseen telemetry feeding back into vendor systems, all of which amplify enterprise risk when AI features are enabled by default.

Practical guidance for IT leaders and security teams

Organisations running Copilot or similar embedded AI services should act on three fronts: containment, verification and prevention.

- Immediate containment and verification

  - Audit which tenants had the Copilot Chat Work experience enabled and which user cohorts used the feature between January 21 and early February 2026.

  - Request Microsoft’s tenant-specific remediation confirmation and any available telemetry or logs showing whether protected messages were included in Copilot sessions for your environment. Don’t rely solely on global statements; ask for tenant‑level evidence.

- Policy and technical controls you can enforce now

  - Disable or restrict Copilot Chat’s Work experience for high‑risk user groups (legal, finance, HR, healthcare teams) until you have validated remediation and retention behaviours.

  - Enforce stricter Purview label scopes and explicitly exclude Drafts and Sent Items from automated processing if your sector or contracts require it. Where possible, apply explicit DLP rules that deny indexing at the search/retrieval boundary rather than only post‑processing.

- Operational improvements (short to medium term)

  - Add Copilot‑specific controls to change management and security testing plans: require penetration testing and policy‑enforcement verification for every Copilot/LLM-enabled update.

  - Demand clearer retention and telemetry contract language from vendors (what logs are retained, how summaries are stored, whether outputs are used for model training). If necessary, insist on contractual limits or on‑prem/private‑instance options for sensitive workloads.

- Longer-term governance and audit steps

  - Integrate embedded AI behaviours into SOC playbooks and incident response. Treat an AI policy bypass as a distinct class of incident that requires immediate audit, tenant-level evidence and, where applicable, mandatory notifications.

  - Review vendor attestations and auditing: require quarterly transparency reports, per‑tenant remediation evidence, and the ability to run audits or to obtain third‑party verification of policy enforcement at the retrieval boundary.
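The audit step in the guidance above (identifying which users touched the feature during the exposure window) might be approximated with a short script over exported usage records. The sketch below is an assumption-laden illustration: the record shape (`user` and `timestamp` keys with ISO 8601 timestamps) is hypothetical and does not reflect any specific Microsoft export format, and the end date is a placeholder, since the source says only "early February".

```python
from datetime import datetime, timezone


def users_in_window(records, start, end):
    """Return the sorted, de-duplicated users with activity in [start, end]."""
    users = set()
    for rec in records:
        when = datetime.fromisoformat(rec["timestamp"])
        if start <= when <= end:
            users.add(rec["user"])
    return sorted(users)


# Hypothetical exported records; field names are assumptions.
records = [
    {"user": "alice@example.com", "timestamp": "2026-01-20T23:59:00+00:00"},
    {"user": "bob@example.com", "timestamp": "2026-01-25T09:30:00+00:00"},
    {"user": "carol@example.com", "timestamp": "2026-02-03T14:00:00+00:00"},
    {"user": "bob@example.com", "timestamp": "2026-02-01T08:00:00+00:00"},
    {"user": "dave@example.com", "timestamp": "2026-03-01T10:00:00+00:00"},
]

window_start = datetime(2026, 1, 21, tzinfo=timezone.utc)
# Placeholder end date: replace it with the date Microsoft confirms
# remediation for your own tenant.
window_end = datetime(2026, 2, 15, tzinfo=timezone.utc)

exposed = users_in_window(records, window_start, window_end)
print(exposed)
```

Choosing a generous end date errs on the side of over-inclusion, which is usually the safer default for a compliance review; the list can be narrowed once tenant-level remediation evidence is available.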

Recommendations for vendors and Microsoft specifically

- Shift enforcement upstream: Vendors must ensure policy enforcement occurs at the earliest retrieval layer — before any indexed content can be passed to LLMs. That is the most reliable technical defence against AI-based policy bypasses.

- Improve telemetry transparency: When a fault like CW1226324 occurs, customers need tenant‑level telemetry, retention status of generated content, and a clear timeline for all remediation steps. High-level statements are not enough for regulated customers.

- Provide fail‑safe defaults: Embedded AI features should ship with conservative defaults for sensitive data domains (legal, HR, finance, health) and require explicit, auditable opt‑in at the tenant or group level.

- Offer stronger contractual protections: For customers with stringent compliance needs, vendors should offer contractual guarantees that generated content will not be retained, will not be used for model training, and that any processing will be auditable.
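The "fail-safe defaults" recommendation above can be made concrete with a small sketch of a deny-by-default policy object. Everything here is hypothetical (the `TenantAIPolicy` class, the `SENSITIVE_DOMAINS` set and the group names); it shows one possible shape for an explicit, auditable opt-in and is not a description of any vendor's actual controls.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical sensitive groups, mirroring the domains named in the text.
SENSITIVE_DOMAINS = {"legal", "hr", "finance", "health"}


@dataclass
class TenantAIPolicy:
    # group name -> identity of the approver who opted the group in
    opt_ins: Dict[str, str] = field(default_factory=dict)
    # append-only record of every opt-in decision
    audit_log: List[Tuple[str, str, str]] = field(default_factory=list)

    def opt_in(self, group: str, approved_by: str) -> None:
        """Record an explicit opt-in together with who approved it."""
        self.opt_ins[group] = approved_by
        self.audit_log.append(("opt_in", group, approved_by))

    def ai_allowed(self, group: str) -> bool:
        """Sensitive groups are denied unless they were explicitly opted in."""
        if group in SENSITIVE_DOMAINS:
            return group in self.opt_ins
        return True


policy = TenantAIPolicy()
before = policy.ai_allowed("legal")  # denied by default
policy.opt_in("legal", approved_by="ciso@example.com")
after = policy.ai_allowed("legal")  # allowed after the audited opt-in
print(before, after, policy.audit_log)
```

The point of the design is that the safe state requires no action: a tenant that never configures anything has AI processing disabled for its sensitive groups, and every exception leaves a record.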

Risks that remain and things to watch

- Retention and secondary use: If Copilot session outputs were retained or logged, the organisation may face exposure that isn’t obvious from access controls alone. Confirm retention behaviour and ask for deletion or redaction where necessary.

- Reputational and contractual fallout: Even a contained, non‑exfiltrative processing event can trigger contractual disputes, regulatory queries, or internal investigations. Legal teams should evaluate disclosure obligations and client notification requirements.

- Complacency risk: Organisations that assume a vendor’s DLP integration covers AI workloads without independent verification are taking a material risk. This incident should reset assumptions about default safety.

Final analysis — innovation versus control

The Copilot incident is a textbook example of a broader tension: the rapid pace of AI feature integration has outstripped the maturity of enforcement models and vendor transparency practices. Microsoft’s technical response indicates the company can move fast to patch service‑side defects. But the episode exposes two persistent problems that industry players must solve together:

- Enforcement placement: Protection must be applied at retrieval and indexing boundaries, not only as an afterthought. Systems that delay policy checks until after content reaches an LLM are fragile by design.

- Vendor transparency: Enterprise customers need far more granular evidence in incidents that touch protected data: tenant-level logs, retention statements, and independent post‑mortems where possible.

The Copilot bug (CW1226324) will not be the last headline that forces organisations to re-examine assumptions about AI and privacy. What it should spark, however, is a sober and concrete response: stronger retrieval‑boundary enforcement, clearer contractual protections, and robust, tenant‑specific audit evidence when things go wrong. Companies that accept productivity gains from embedded AI must insist — in policy, in procurement and in daily operations — that convenience never comes at the cost of confidentiality.

Source: The Hans India Microsoft Copilot Email Bug Sparks Fresh Privacy Concerns