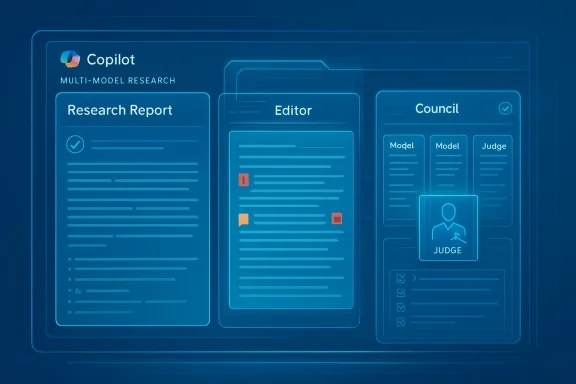

Microsoft is pushing Copilot further into multi-model AI, and that makes its new Critique and Council features more than just another product refresh. Critique is designed as a draft-and-review pipeline, where one model generates a research report and another model evaluates and refines it. Council takes a different tack, running multiple models in parallel so users can compare where they agree, where they differ, and what each adds to the final answer. Microsoft is clearly signaling that the future of Copilot is not a single chatbot brain, but a governed system of specialized reasoning steps that better match real work.

For years, the AI industry has been obsessed with one-question, one-answer interfaces. That model worked well enough for summaries, email drafts, and quick explanations, but it showed obvious limits once users began asking for research, analysis, and multi-step reasoning. Microsoft’s latest Copilot update is notable because it admits, implicitly, that high-value knowledge work is not one-shot work. It is iterative, skeptical, and often collaborative.

That is the real significance of Critique. Instead of asking a single model to generate, self-check, and polish in one pass, Microsoft separates those jobs. One model drafts the report, while another reviews the result for gaps, weak sourcing, missing angles, and uneven structure. In enterprise software terms, that is a move from fluency to process, and process is usually where trust is won or lost.

Council is the more visible expression of that idea. Instead of hiding model diversity behind the scenes, Microsoft brings it into the user experience by letting multiple models answer the same prompt independently. A judge model then compares the outputs and summarizes the differences. That makes disagreement useful instead of awkward, which is a very enterprise way to think about AI. It also exposes an important truth: for research-heavy work, a single polished answer is often less useful than a structured comparison of several plausible ones.

The timing matters too. Microsoft has spent much of 2025 and early 2026 reframing Copilot as more than an assistant that replies to prompts. In its March 9, 2026 Microsoft 365 blog post, the company said Wave 3 of Copilot brings “multi-model intelligence” into everyday workflows and emphasized that Copilot is now model diverse by design. Critique and Council fit that strategy perfectly. They are not isolated features; they are the product logic made visible.

What Critique Actually Does

Critique is the more consequential of the two features because it changes the mechanics of how a research artifact is produced. Microsoft’s description of the workflow is straightforward: one model handles planning, retrieval, and drafting, and another model reviews the output, strengthens arguments, and improves the structure. That second layer acts like an internal editor, and in practice that can be more valuable than simply making the answer longer.

Draft, then challenge

The value of this design is that it separates creative generation from critical evaluation. That is a familiar pattern wherever a draft is never the finish line. The system is essentially trying to reproduce that editorial discipline inside Copilot. For users, that means fewer weak claims, fewer missing counterpoints, and fewer polished-but-fragile reports.

Critique also makes verification a first-class feature rather than an afterthought. Microsoft says the reviewer follows a rubric-based process that checks source reliability, coverage, and evidence. That is important because the biggest problem in enterprise AI is not always the presence of hallucinations; it is the confidence with which those hallucinations can be packaged into attractive prose. A critique pass cannot eliminate that risk, but it can reduce the odds that a bad answer escapes unchallenged.

In a newsroom metaphor, the first model is the reporter, and the second is the editor. That analogy is not perfect, but it is close enough to explain why the feature matters. The system is no longer just producing an answer; it is producing one that has been argued with. That distinction could prove decisive in legal, finance, procurement, and strategy workflows where how a conclusion was reached matters almost as much as the conclusion itself.
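The draft-then-review flow described above can be illustrated with a small sketch. This is not Microsoft's implementation: the names `critique_pipeline`, `Review`, `stub_drafter`, and `stub_reviewer` are hypothetical, the models are plain functions standing in for real model calls, and feeding the reviewer's issues back to the drafter is an assumption about how refinement might work.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Review:
    """Issues a (hypothetical) reviewer found, e.g. on sources, coverage, evidence."""
    issues: List[str] = field(default_factory=list)

    @property
    def passed(self) -> bool:
        return not self.issues


Drafter = Callable[[str, List[str]], str]  # (prompt, reviewer feedback) -> draft
Reviewer = Callable[[str], Review]         # draft -> review


def critique_pipeline(prompt: str, drafter: Drafter, reviewer: Reviewer,
                      max_rounds: int = 2) -> Tuple[str, Review]:
    """Draft once, then revise until the reviewer passes or the round budget runs out."""
    draft = drafter(prompt, [])
    review = reviewer(draft)
    rounds = 0
    while not review.passed and rounds < max_rounds:
        draft = drafter(prompt, review.issues)  # assumption: feedback goes back to the drafter
        review = reviewer(draft)
        rounds += 1
    return draft, review


# Stub models so the sketch runs without any external service.
def stub_drafter(prompt: str, feedback: List[str]) -> str:
    draft = f"Report on {prompt}."
    if any(item.startswith("evidence") for item in feedback):
        draft += " [citation added]"
    return draft


def stub_reviewer(draft: str) -> Review:
    issues = []
    if "[citation added]" not in draft:
        issues.append("evidence: claims lack citations")
    return Review(issues)


final_draft, final_review = critique_pipeline("cloud spending", stub_drafter, stub_reviewer)
print(final_draft)
print("passed review:", final_review.passed)
```

The point of the design is that generation and evaluation are separate callables, so either one can be swapped for a different model without touching the loop.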

Why the reviewer matters

The reviewer is important because it introduces friction into a space that has often prized speed above all else. A single-pass model can sound persuasive even when it is incomplete. A second pass creates a chance to catch weak transitions, unsupported assertions, or shallow analysis before the user sees the result. In the enterprise, that hidden labor matters because it reduces the amount of manual checking workers still have to do themselves.

Microsoft says it tested Critique on the DRACO benchmark, which was designed for complex, real-world research tasks. The company reports gains in depth, presentation quality, and factual accuracy, and it claims the system outperformed competing approaches by about 13.88 percent. The figure is Microsoft’s own, but the direction is telling: Microsoft is not just chasing raw model capability. It is trying to optimize the workflow that turns retrieval into a report.

- Critique separates generation from evaluation.

- The reviewer checks sources, coverage, and evidence.

- The goal is not just polish, but defensible output.

- It mirrors the human editorial process more than a normal chat reply.

- It is especially useful for **research-heavy enterprise work**.

Council is easier to explain and, in some ways, easier to understand. Instead of one model drafting and another model revising, Council runs multiple models on the same prompt at the same time. Microsoft’s description indicates that Anthropic and OpenAI models can each produce a standalone report, after which a judge model compares them and summarizes where they align and diverge. That makes the model differences visible instead of hidden.
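As a rough illustration of the Council pattern, the sketch below runs several stand-in models on the same prompt in parallel and then applies a toy judge. Everything here is hypothetical: `run_council`, `judge`, `Comparison`, and the two stub models are invented for the example, and the judge simply treats each sentence as a claim, which is far cruder than a real judge model.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Dict, Set

Model = Callable[[str], str]  # prompt -> standalone report


@dataclass
class Comparison:
    agree: Set[str]              # claims every model made
    differ: Dict[str, Set[str]]  # claims unique to each model


def run_council(prompt: str, models: Dict[str, Model]) -> Dict[str, str]:
    """Send the same prompt to every model at the same time."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: fut.result() for name, fut in futures.items()}


def judge(reports: Dict[str, str]) -> Comparison:
    """Toy judge: split each report into sentences and compare them as sets."""
    claims = {
        name: {s.strip() for s in text.split(".") if s.strip()}
        for name, text in reports.items()
    }
    shared = set.intersection(*claims.values())
    return Comparison(
        agree=shared,
        differ={name: c - shared for name, c in claims.items()},
    )


# Stub models standing in for reports from two different providers.
def model_a(prompt: str) -> str:
    return "Revenue grew. Risk is low."


def model_b(prompt: str) -> str:
    return "Revenue grew. Risk is high."


reports = run_council("Assess vendor X", {"model_a": model_a, "model_b": model_b})
comparison = judge(reports)
print("agree:", comparison.agree)
print("differ:", comparison.differ)
```

In this toy run the two reports agree on one claim and split on another, which is the kind of divergence a side-by-side view is meant to surface.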

Comparison as a product feature

This is a smart move because it turns disagreement into a user-facing tool. In many workflows, disagreement is not a bug; it is a signal. If one model emphasizes caution and another emphasizes breadth, the difference can teach users something about the underlying task. Council lets users inspect those trade-offs directly instead of guessing at them.

That matters for compliance, policy, legal review, and strategy work, where users want to know what each model noticed and what each model missed. A side-by-side view can reveal where the evidence is ambiguous or where framing assumptions differ. In other words, Council turns “Which answer is correct?” into “What did each model contribute?” That is a much more mature way to present AI output in a serious business setting.

Council also reinforces Microsoft’s broader claim that Copilot is model diverse by design. Rather than binding the experience to a single AI provider, Microsoft is presenting itself as the orchestrator of a multi-model environment. That is strategically important because it protects Microsoft from overdependence on any one model family while also giving customers a sense that the platform can adapt as model quality evolves.

Why side-by-side comparison matters

The key value of Council is calibration. Workers are often too quick to treat polished AI output as authoritative. By making model variance visible, Microsoft is nudging users toward healthy skepticism. That is not a glamorous feature, but it may be one of the most useful ones Copilot has shipped for research-heavy work.

There is also a practical training effect. Teams can learn which model is better at summarization, which is more careful with citations, and which is better at synthesis. Over time, that can help organizations build internal playbooks for AI use instead of leaving employees to experiment blindly. For large companies, that alone can be worth a great deal.

- Council runs multiple models in parallel.

- A separate judge model compares the outputs.

- It surfaces consensus and disagreement.

- It is useful for model and workflow training.

- It makes AI reasoning more transparent.

Why Microsoft Is Doing This Now

The timing is not accidental. Microsoft has been gradually transforming Copilot from a productivity add-on into an enterprise AI operating layer. In March 2026, the company said Wave 3 of Microsoft 365 Copilot brings agentic capabilities directly into Word, Excel, PowerPoint, Outlook, and Copilot Chat. It also said Copilot now hosts leading models from multiple providers, including Anthropic and OpenAI, instead of forcing users into a single model stack.

From assistant to orchestration layer

That shift is crucial. Early Copilot messaging focused on helping users draft, summarize, and search faster. The newer messaging is about execution, governance, and trust. Microsoft is telling customers that AI should not just answer questions; it should help complete work inside a controlled environment. Critique and Council fit that model because they make the reasoning process itself more inspectable.

This is also why the company keeps emphasizing enterprise controls. In the March 9 blog post, Microsoft stressed that Copilot works with existing Microsoft 365 permissions, sensitivity labels, and governance frameworks. That matters because the more autonomous AI becomes, the more dangerous it is for it to operate without policy guardrails. The company is trying to make innovation feel safe enough for IT departments to approve.

There is a competitive angle here as well. OpenAI, Anthropic, Google, and others are all racing toward deeper research and agent workflows. Microsoft’s advantage is that it can combine model diversity, governance, and workflow integration in one stack. If Critique and Council work well, Microsoft can argue that Copilot is not merely another AI chatbot; it is the place where AI gets turned into usable work product.

The market context

The market has also matured enough to expose the limits of the “single-model wins everything” story. Users now know that one model may be excellent at style but weaker at structure, or strong at reasoning but too verbose for business use. Microsoft is leaning into that reality instead of pretending it does not exist. That is a subtle but important product decision.

The timing is also commercially smart because Copilot usage has grown enough that reliability now matters more than novelty. Microsoft appears to be betting that enterprise buyers care more about better outputs and fewer mistakes than about flashy demos. That is probably the right bet.

What the DRACO Benchmark Tells Us

Microsoft is using DRACO as the headline proof point for Critique, and that deserves attention. DRACO is a deep research benchmark built around real-world complexity, with tasks spanning multiple domains and a strong emphasis on factual accuracy, breadth, citation quality, and presentation. That matters because it is much closer to the kind of work Copilot Researcher is meant to support than a toy benchmark or a narrow knowledge test.

Benchmarks and reality

According to Microsoft’s claims, Critique improved results across several dimensions, including factual accuracy and presentation quality. The company also reported a roughly 13.88 percent improvement over competing systems. That number is not a guarantee, but it does suggest the architecture is doing something meaningful. The point is not just that the answer is better; it is that the system is learning how to review itself.

The benchmark is especially important because it reflects a broader shift in how AI products are evaluated. It is no longer enough to ask whether a model can produce a plausible response. Enterprises care whether the response is complete, cited, balanced, and ready to circulate. That is why the pipeline matters: it shifts the evaluation from model intelligence to system reliability.

Still, benchmark gains should be read cautiously. A reviewer model may perform well on structured deep research tasks while offering less benefit in messy, fast-moving, or ambiguous situations. That is not a weakness so much as a reminder that no benchmark proves universal superiority. Real-world usefulness will depend on how the feature behaves across different workloads and how visible the review process is.

What really changes

The most interesting thing about DRACO is that Microsoft is no longer advertising “our model is smarter.” It is effectively saying, “our system is better organized.” That is an important distinction. Enterprise buyers rarely buy AI because it feels clever. They buy it because it reduces risk, saves time, and makes workflows more repeatable.

That also helps explain why Microsoft is emphasizing model diversity. If the system can assemble a stronger answer from multiple models, then the product becomes less dependent on any single provider’s strengths or weaknesses. In a market where model quality changes quickly, that flexibility is a competitive advantage. It also makes the platform feel more future-proof.

- DRACO measures accuracy, coverage, and citation quality.

- It is closer to real enterprise research than a toy benchmark.

- Microsoft is optimizing the system, not just the model.

- Benchmark gains matter most when they translate into trust.

- The real test is everyday workflow reliability.

Enterprise Impact

For enterprises, the biggest appeal of Critique and Council is not novelty. It is control. Microsoft is trying to answer the question that every IT leader eventually asks about AI: how do we adopt it without creating new compliance, quality, or governance problems? The answer, at least in product form, is becoming more credible because Microsoft is building review and comparison into the workflow itself.

What changes for knowledge workers

For analysts, researchers, legal teams, and strategists, Critique can reduce the amount of time spent manually fact-checking first drafts. It does not eliminate review, but it changes its scale. Instead of checking everything from scratch, users can focus on the hardest judgments. That is where the real productivity gain likely sits.

Council is especially helpful for teams that need to understand uncertainty. If two models agree, confidence rises. If they diverge, that is a useful signal that more scrutiny is required. That can be more valuable than a single polished answer, especially in situations where the cost of a bad recommendation is high. Ambiguity, in this context, is information.

Microsoft’s broader Copilot direction also suggests that AI features will increasingly be embedded directly into the apps where work already happens. That lowers the friction of adoption. Users do not need to leave Word, Excel, Outlook, or Copilot Chat to benefit from more structured AI reasoning, which is exactly how enterprise software tends to win. Convenience plus governance is a powerful combination.

Why admins will care

Administrators will care because model diversity creates policy questions. If a workflow can switch between model families, the organization needs to know what data is being processed, where it goes, and which controls apply. Microsoft’s emphasis on tenant controls, permissions, sensitivity labels, and managed rollout is designed to address those concerns before they become blockers.

That matters because enterprise AI rarely fails on capability alone. It fails when governance is unclear, when output quality is inconsistent, or when departments do not trust the system. Critique and Council are Microsoft’s answer to that trust problem, and they are more convincing than simply saying a model is “better” because they embed the safeguards in the workflow.

- Better fit for regulated industries.

- Less manual verification of first drafts.

- More visible model governance.

- Easier team training and prompt calibration.

- Stronger alignment with Microsoft 365 security controls.

Consumer Impact

For ordinary users, the immediate benefit is subtler but still important. Critique can make research answers feel less brittle, while Council can make AI output easier to interpret. That may not sound dramatic, but it addresses one of the most frustrating parts of using AI tools today: the output can be polished enough to look finished while still feeling oddly uncertain.

Better answers, less blind trust

Consumer users often want speed, but they also want confidence. Critique nudges the system toward answers that are more complete and better checked, which should help users who rely on Copilot for planning, learning, and personal research. Council goes one step further by showing variation instead of hiding it, which can help users understand why AI sometimes gives different answers to similar questions.

That educational value should not be underestimated. Many people overtrust a single polished answer simply because the interface makes it look authoritative. Council may help correct that habit by exposing disagreement as a normal part of AI reasoning. In a sense, it teaches users to think more like editors and less like consumers of a finished product.

There is also a broader usability point. As Copilot becomes more model-diverse and more agentic, the software is moving away from “chat with a bot” and toward “work with a system.” That can be more powerful, but it can also be more complex. Microsoft will have to keep the experience simple enough that regular users do not need to understand the underlying machinery to benefit from it.

What users may notice first

The first thing many users will notice is likely not the model architecture but the quality of the final report. If Critique works as intended, answers should feel more structured, more complete, and less prone to obvious omissions. Council may appeal more to power users and professionals, but it could also become a useful learning tool for anyone who wants to compare perspectives on a topic.

The second thing users may notice is that Microsoft is increasingly making model choice a platform feature rather than a user burden. That is a subtle shift. Instead of forcing users to decide which model is best for each task, Microsoft is trying to assemble the right workflow on their behalf. That is more convenient, but it also makes product design and governance matter more.

- More reliable research-style outputs.

- Better visibility into model differences.

- Less dependence on a single AI answer.

- More educational value for everyday users.

- A stronger sense that Copilot is thinking through the task.

Strengths and Opportunities

Microsoft’s biggest strength here is that it is solving a real problem, not chasing a gimmick. Users do not merely need AI that sounds smart; they need AI that is less likely to embarrass them. Critique and Council both address that need by making evaluation, comparison, and review part of the product design rather than a manual afterthought.

- Critique adds a second-pass review layer.

- Council makes model disagreement visible.

- Microsoft is leaning into multi-model orchestration.

- The features fit enterprise needs for governance and trust.

- They strengthen Copilot’s position as a work platform, not just a chatbot.

- They create a path toward better research quality.

- They may help Microsoft reduce dependence on any single model supplier.

Risks and Concerns

The main risk is that Microsoft could make AI output more layered without making it more understandable. If users do not know how disagreements are resolved, or when one model’s judgment overrides another’s, the workflow could become more opaque even as it becomes more sophisticated. That would be the wrong kind of complexity for enterprise adoption.

- Reviewers can share the same blind spots as generators.

- More model layers can add latency.

- Side-by-side comparison may create analysis paralysis.

- Benchmark gains may not generalize to every real-world use case.

- Multi-model workflows raise governance and compliance questions.

- Users may still overtrust polished outputs despite added checks.

- Experimental features can behave differently across tenants and rollout stages.

Looking Ahead

The most likely next step is broader rollout, followed by tighter integration with the rest of Microsoft 365. If Microsoft can make Critique and Council feel native inside Word, Excel, Outlook, and Copilot Chat, it will have a very strong story: AI that not only drafts and summarizes, but also reviews and compares in the same place people already work. That would be a meaningful competitive advantage.

The bigger strategic question is whether this becomes the default pattern for enterprise AI. If it does, then the market may move away from debating which single model is “best” and toward asking which workflow is best at combining models. That would be a major shift in how AI products are built and sold. Microsoft seems to be betting that the winners in enterprise AI will be the companies that can orchestrate multiple models.

- Wider availability beyond early access and preview channels.

- More explicit controls for admins and compliance teams.

- Stronger reporting on how review and comparison affect output quality.

- Additional model pairings for different research workloads.

- Tighter integration between Researcher, agents, and Copilot’s core workspace.

Source: news9live.com Microsoft Copilot Critique and Council AI features explained
