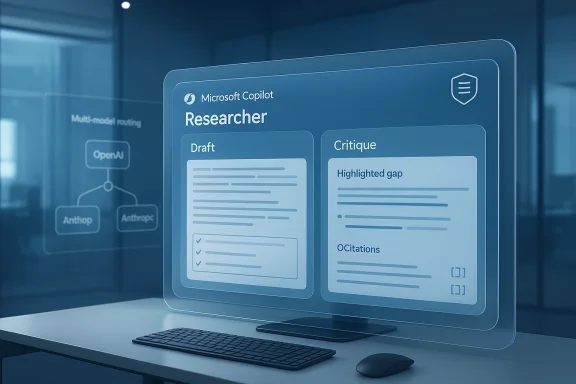

Microsoft’s latest Copilot move is less about a shiny new chatbot trick and more about a strategic reset. With Critique inside Microsoft 365 Copilot’s Researcher experience, Microsoft is effectively handing one AI model’s draft to a second model for review, then using that second pass to improve accuracy, completeness, and presentation. The company is also rolling out a Model Council view that lets users compare answers from two AI systems side by side, a subtle but important signal that Microsoft is betting on multi-model AI rather than a single-model future. That shift matters because it changes how enterprises evaluate trust, compliance, and quality in AI-assisted research.

It will also be worth watching how enterprises respond to the governance implications. Some will welcome the flexibility. Others will be cautious about adding another external model relationship to their AI stack. The winners will likely be organizations that treat model routing as a managed policy, not an ad hoc user preference.

Source: Laodong.vn, “Microsoft upgrades Copilot, increases research power by combining AI tools”

Overview

For much of the Copilot era, Microsoft’s AI story revolved around a close partnership with OpenAI. That relationship gave Microsoft a fast path into the generative AI market, but it also created a structural dependency that increasingly looked risky as the market matured. Over the last several months, Microsoft has widened the aperture, adding Anthropic models to Microsoft 365 Copilot and Copilot Studio and now using them in Researcher workflows as part of a broader move toward model choice. Microsoft’s own documentation confirms that organizations can elect to use Anthropic’s Claude models with the Researcher agent, and that doing so shifts data processing outside Microsoft-managed environments and changes the applicable compliance framework. (learn.microsoft.com)

The new Critique flow fits neatly into that broader strategy. Rather than relying on one model to both research and self-check, Microsoft is using one system to generate a response and another to critique or revise it. That mirrors a familiar professional pattern in academia, consulting, and journalism: one analyst drafts, another reviews, and the final product improves because judgment is distributed across multiple passes. The appeal is obvious. A first model may be strong at synthesis, while a second model may be better at catching omissions, awkward structure, or overconfident phrasing. In practical terms, this is Microsoft acknowledging that “good enough” AI is no longer good enough for business research.

There is also a competitive dimension. Microsoft’s move arrives amid a broader industry race to build “deep research” tools that do more than answer questions quickly. Perplexity helped define that category, but Microsoft is now trying to position Copilot Researcher as an enterprise-grade alternative with tighter integration into Microsoft 365 and stronger governance controls. Perplexity’s own DRACO benchmark work frames the market around accuracy, completeness, and objectivity, which is exactly the terrain Microsoft is now trying to occupy. Even though Microsoft’s reported claims of beating some of Perplexity’s research systems deserve caution, the direction of travel is clear: research agents are becoming a battleground for credibility, not just speed.

Microsoft’s timing is notable too. Earlier this month, the company reshaped its Copilot leadership and explicitly framed the Copilot stack as four connected pillars: experience, platform, Microsoft 365 apps, and AI models. That organizational language matters because it shows the company is treating model routing and model selection as core product architecture, not experimental garnish. In other words, Critique and Model Council are not isolated features; they are visible proof of a deeper platform strategy. (blogs.microsoft.com)

Background

Microsoft 365 Copilot began as a tightly integrated productivity assistant tied heavily to OpenAI models. That made sense when the market was still young, because Microsoft needed a credible model backbone quickly and OpenAI was the obvious option. But once Copilot moved from a branding exercise to a real workplace tool, the tradeoffs became harder to ignore. Enterprises do not buy generative AI just for clever prose; they buy it for reliable output, predictable controls, and defensible governance. A single-model stack can be elegant, but it can also be brittle.

Anthropic’s entry into Microsoft 365 Copilot changed that equation. Microsoft’s documentation now says organizations can connect Anthropic models, use them with the Researcher agent, and manage access through the Microsoft 365 admin center. It also makes clear that when Anthropic is used, the data is processed outside Microsoft-managed environments and that Microsoft’s standard customer commitments do not apply in the same way. That is not a footnote. It is a reminder that model choice has legal, operational, and procurement consequences, not just UX implications. (learn.microsoft.com)

Researcher itself was designed for heavier workloads than a standard prompt-and-answer assistant. Microsoft describes it as a tool for complex, multistep research and structured reporting, with response times that can stretch from under five minutes for simple tasks to much longer for complex ones. That makes it a natural candidate for layered evaluation, because the more steps an AI agent takes, the more opportunities there are for drift, omission, and hallucination. The more ambitious the task, the more a second-model check starts to look like a necessity rather than a luxury. (learn.microsoft.com)
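To make the “more steps, more chances to go wrong” point concrete, here is a back-of-the-envelope sketch. It assumes, purely for illustration, that every step of a research run fails independently with the same probability; real agent errors are neither independent nor uniform, and the 2% rate used below is a hypothetical figure, not a Microsoft number.

```python
def prob_at_least_one_error(per_step_error: float, steps: int) -> float:
    """Chance that at least one of `steps` independent steps goes wrong.

    Simplifying assumption: each step fails independently with the same
    probability. This only illustrates how risk compounds with task length.
    """
    if not 0.0 <= per_step_error <= 1.0:
        raise ValueError("per_step_error must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    # P(no errors) = (1 - e)^n, so P(at least one error) = 1 - (1 - e)^n
    return 1.0 - (1.0 - per_step_error) ** steps


if __name__ == "__main__":
    # Hypothetical 2% per-step error rate across short and long research runs.
    for n in (1, 5, 20, 50):
        print(f"{n:>2} steps: {prob_at_least_one_error(0.02, n):.1%}")
```

Under these toy assumptions, a 2% per-step slip rate turns into roughly a one-in-three chance of at least one error over 20 steps, which is the intuition behind adding a second-model check to longer runs.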

The timing also reflects competitive pressure outside Microsoft. Perplexity’s deep research tools, OpenAI’s browsing and reasoning systems, and emerging enterprise agent platforms have all pushed the market toward more structured, source-aware outputs. Users are less impressed by raw fluency than they were two years ago. They want citations, evidence, and a visible chain of reasoning. That is why “research” features now matter so much: they are the front line in the fight for trust. Microsoft is clearly trying to meet that demand by formalizing review and comparison inside Copilot itself.

What Critique Actually Changes

Critique matters because it moves Copilot from a single-pass generator to a reviewed workflow. The idea is not simply to produce a more eloquent answer; it is to create a feedback loop where one model checks another for gaps, weak logic, thin sourcing, or presentation flaws. Microsoft says this improves accuracy, analysis depth, and presentation quality, and that framing is consistent with how serious knowledge work is often done in practice.

That also changes user expectations. If a first draft is no longer the final output, then the system is implicitly admitting that the raw model response is only part of the product. For enterprise users, that is a healthy admission. It reduces the temptation to trust a single answer just because it sounds polished. For consumers, though, it introduces a different kind of risk: the system may feel more authoritative precisely because it looks more deliberative. That can be dangerous if users confuse layered output with guaranteed correctness.

Why a second model matters

A critique model can catch issues that the original model misses because different systems fail in different ways. One may over-explain while another omits key facts. One may be strong on structure but weak on specificity. By forcing cross-model review, Microsoft is effectively trying to exploit variance as a quality-control tool. That is a smart design choice because in generative AI, diversity of failure modes can be more useful than raw model size. (blogs.microsoft.com)

The concept is especially relevant in research workflows where the output needs to be credible, not merely helpful. If an AI can find a plausible answer, but not the best answer, the cost of error may show up later in a report, a strategy memo, or an executive presentation. Critique is Microsoft’s answer to that problem: a way to spend more compute in exchange for fewer embarrassing misses. That is expensive, but for enterprise software it may be worth it.

- Cross-checking can reduce one-model blind spots.

- Revision loops can improve structure and clarity.

- Second-pass analysis can reveal unsupported claims.

- Professional workflows benefit from visible review steps.

- Enterprise trust often depends on process, not just accuracy.
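The draft-then-review pattern described in this section can be sketched in a few lines. This is not Microsoft’s implementation: `critique_and_revise`, `toy_drafter`, and `toy_critic` are hypothetical names, and the “models” are plain Python functions so the control flow runs without any AI service. What the sketch shows is the shape of the loop: one model drafts, a second returns a list of issues, and revision continues until the critic has nothing left to flag or a round limit is reached.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical interfaces: a drafter turns (prompt, reviewer notes) into text;
# a critic turns (prompt, draft) into a list of issues (empty = no objections).
Drafter = Callable[[str, List[str]], str]
Critic = Callable[[str, str], List[str]]


@dataclass
class ReviewResult:
    final_text: str
    rounds: int
    history: List[List[str]] = field(default_factory=list)  # issues raised each round


def critique_and_revise(prompt: str, drafter: Drafter, critic: Critic,
                        max_rounds: int = 3) -> ReviewResult:
    """Draft with one model, review with another, revise until the critic is satisfied.

    If the round limit is reached, the latest revision is returned even though
    the critic has not reviewed it yet.
    """
    history: List[List[str]] = []
    draft = drafter(prompt, [])
    for round_no in range(1, max_rounds + 1):
        issues = critic(prompt, draft)
        history.append(issues)
        if not issues:  # nothing left to flag
            return ReviewResult(draft, round_no, history)
        draft = drafter(prompt, issues)  # revise using the critic's notes
    return ReviewResult(draft, max_rounds, history)


def toy_drafter(prompt: str, notes: List[str]) -> str:
    # Stand-in for the first model: only adds a citation once asked to.
    text = f"Answer to: {prompt}."
    if any("source" in note for note in notes):
        text += " [source: internal report]"
    return text


def toy_critic(prompt: str, draft: str) -> List[str]:
    # Stand-in for the second model: objects to drafts that cite nothing.
    return [] if "[source:" in draft else ["claim lacks a source"]


if __name__ == "__main__":
    result = critique_and_revise("Q3 market size", toy_drafter, toy_critic)
    print(result.rounds, result.final_text)
    # 2 Answer to: Q3 market size. [source: internal report]
```

Keeping the critic’s output as a structured list of issues, rather than a rewritten answer, is one design choice among several; it makes each review round inspectable, which echoes the point above that enterprise trust often depends on process.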

Why Anthropic Matters

Microsoft’s decision to use Anthropic in this workflow is strategically important. It signals that Copilot’s value is no longer tied to a single provider relationship, and that Microsoft is willing to route work to the model it believes fits the task best. Microsoft’s own documentation says Anthropic models are now available with Researcher, and that organizations can choose whether to connect them through the admin center. (learn.microsoft.com)

This is a major shift in the power balance. OpenAI still matters deeply to Microsoft, but the Copilot product is becoming more modular. That gives Microsoft leverage, bargaining power, and resilience. If one model family underperforms on a specific task, Microsoft can route around it. If one vendor’s pricing rises, Microsoft has more room to negotiate. And if enterprise customers want choice, Copilot can now present itself as the platform that orchestrates models instead of worshipping one.

What this says about Microsoft’s strategy

The move also reflects a broader industry realization that no single model is best at everything. Some systems excel at breadth, others at synthesis, others at structured reasoning. Microsoft is trying to turn that reality into a product advantage. Instead of asking enterprises to believe in one “best” model, it is promising the best possible composition of models. That is a more mature message, and perhaps a more believable one.

There is a catch, though. The moment Microsoft routes work outside its own environment, governance becomes more complicated. Microsoft’s documentation is explicit that Anthropic use changes the data-handling framework and affects commitments around residency, compliance, and copyright protection. That means the model-selection story is not just about performance; it is also about procurement discipline and risk management. (learn.microsoft.com)

- Model choice becomes a product feature.

- Vendor dependence becomes less absolute.

- Governance becomes more complex.

- Routing logic becomes a strategic asset.

- Compliance review becomes part of AI adoption.
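One way to picture “routing around” an underperforming or unavailable model is a routing table with fallbacks. Everything below is hypothetical: the task labels, the provider names `provider_a` and `provider_b`, and the `route` function are illustrative only and do not describe how Copilot actually selects models.

```python
from typing import Dict, List, Set

# Hypothetical preference order per task type; the first available provider wins.
ROUTING_TABLE: Dict[str, List[str]] = {
    "synthesis": ["provider_a", "provider_b"],
    "critique": ["provider_b", "provider_a"],
    "summary": ["provider_a"],
}


def route(task: str, available: Set[str],
          table: Dict[str, List[str]] = ROUTING_TABLE) -> str:
    """Return the first preferred provider for `task` that is currently available.

    `available` stands in for whatever is usable right now, for example providers
    an admin has enabled and that are meeting quality or pricing expectations.
    """
    for provider in table.get(task, []):
        if provider in available:
            return provider
    raise LookupError(f"no available provider for task {task!r}")


if __name__ == "__main__":
    print(route("critique", {"provider_a", "provider_b"}))  # provider_b
    print(route("critique", {"provider_a"}))                # provider_a (fallback)
```

Keeping preferences in data rather than code is what makes routing a strategic asset in this toy version: swapping or demoting a vendor is a table edit, not a product rewrite.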

Model Council and the Case for Comparison

Model Council is the more visibly user-facing piece of this update. Rather than hiding the competition between models, Microsoft is surfacing it: two AI systems, parallel responses, differences highlighted, similarities identified. That is a powerful product idea because it acknowledges a truth enterprise users already know: AI outputs often need interpretation, not blind acceptance. If a user can compare two answers, the system is encouraging judgment rather than compliance.

That may sound modest, but it is a meaningful shift in how AI products are designed. Many assistants try to collapse uncertainty into a single polished paragraph. Model Council does the opposite. It exposes disagreement and asks the user to notice where the models converge and diverge. That is closer to how teams actually work. It also aligns with the way analysts, lawyers, and researchers test a claim: by triangulating, not merely querying once.

Comparison as a trust mechanism

This design may become especially useful in regulated or high-stakes settings. A side-by-side comparison can make ambiguity visible without forcing a premature conclusion. It can also surface model-specific weaknesses, which helps users understand that model selection is not neutral. Different systems bring different priors, different training data, and different stylistic habits. Seeing the difference is often the first step toward better use of the tools.

Still, there is an adoption challenge. Comparison tools are only useful if users have the patience and literacy to interpret them. Many will still prefer the fastest answer. That means Microsoft may need to teach users how to think with the tool, not just how to click it. The product is smarter, but the workflow is also more demanding.

- Side-by-side answers reduce black-box feel.

- Differences and similarities support human judgment.

- Ambiguity becomes visible instead of hidden.

- High-stakes use cases benefit from triangulation.

- User literacy becomes more important than ever.
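For a rough sense of what “differences highlighted, similarities identified” involves, the sketch below splits each answer into sentences, normalizes them, and sorts them into shared and model-specific claims. It is deliberately naive (exact matching after lowercasing and whitespace cleanup), it is not how Model Council works, and `compare_answers` is a hypothetical name; a real system would need semantic matching. Landing in the shared bucket only means the two answers agree, not that the claim is true.

```python
import re
from typing import Dict, List


def _claims(answer: str) -> List[str]:
    # Naive claim extraction: split after sentence-ending punctuation, then normalize.
    parts = re.split(r"(?<=[.!?])\s+", answer.strip())
    return [re.sub(r"\s+", " ", p).strip().rstrip(".!?").lower()
            for p in parts if p.strip()]


def compare_answers(answer_a: str, answer_b: str) -> Dict[str, List[str]]:
    """Group claims into those both answers make and those unique to each one."""
    a, b = _claims(answer_a), _claims(answer_b)
    a_set, b_set = set(a), set(b)
    return {
        "shared": [c for c in a if c in b_set],  # agreement, not proof of correctness
        "only_a": [c for c in a if c not in b_set],
        "only_b": [c for c in b if c not in a_set],
    }


if __name__ == "__main__":
    result = compare_answers(
        "Revenue grew 8%. Costs were flat. Margins improved.",
        "Revenue grew 8%.  Margins improved! Headcount fell.",
    )
    for bucket, claims in result.items():
        print(bucket, claims)
```

Even this crude version shows why user literacy matters: the “only_a” and “only_b” buckets are where a reader has to decide which model to believe, and the tool cannot make that call for them.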

Competitive Pressure from Perplexity and OpenAI

The timing of Microsoft’s update makes the competitive backdrop impossible to ignore. Perplexity has made deep research one of its signature categories, and Microsoft is now clearly trying to answer that challenge inside the Copilot ecosystem. Microsoft’s reported comparison claims, including better results in accuracy, completeness, and objectivity than some newer Perplexity research systems, should be treated cautiously because such claims are inherently benchmark-sensitive. But even without accepting the ranking at face value, the product intent is obvious: Microsoft wants Copilot to be seen as a serious research environment, not just a productivity sidebar.

This is also where Microsoft’s enterprise advantage matters. Perplexity may be sleek and focused, but Microsoft has distribution, identity, admin controls, and document adjacency on its side. If a company already lives inside Microsoft 365, a better research experience embedded in the same ecosystem can be enough to shift usage. That is the classic Microsoft playbook: win by integrating the better idea into the platform people already pay for.

Why this is different from a normal feature race

The deeper contest is not about who can answer a question faster. It is about who can become the default place where work-grade research happens. In that sense, Critique and Model Council are not just AI features; they are retention mechanisms. If Microsoft can make Copilot the trusted review layer for enterprise research, it may keep users from reaching for specialist tools.

There is also a reputational angle. By using multiple frontier models, Microsoft reduces the risk that Copilot becomes identified too closely with one vendor’s failures. That matters in a market where users are increasingly aware of model drift, hallucination, and inconsistent quality. A multi-model approach gives Microsoft more room to say “we chose the right tool for the job” rather than “we used the same tool everywhere.”

- Perplexity pressure is forcing feature acceleration.

- OpenAI dependence is being strategically reduced.

- Enterprise distribution remains Microsoft’s biggest asset.

- Workflow integration can beat standalone elegance.

- Default placement is as important as raw model quality.

Enterprise Impact vs Consumer Impact

For enterprises, the most important takeaway is governance. Microsoft’s documentation makes clear that Anthropic use can change where data is processed and which contractual commitments apply. That means admin teams, compliance officers, and procurement leaders will need to understand exactly which model is being used, when, and under what terms. In a world where AI is increasingly embedded in daily work, model routing becomes policy. (learn.microsoft.com)

Enterprise users will likely appreciate the practical upside. Multi-model research reduces single-vendor risk and may improve the quality of complex outputs. It also gives IT leaders a stronger story when users ask why one assistant is not enough. The answer is becoming clearer: no single model is universally optimal, and enterprise AI should reflect that reality. Microsoft is not just selling an assistant; it is selling a controlled model ecosystem.

Consumer expectations are different

Consumers, by contrast, may mostly experience this as Copilot becoming “smarter” or more nuanced. They will care less about administrative controls and more about whether the answer feels better. That creates a perception challenge. A user who only sees a polished final answer may not realize how much orchestration went into it. The more invisible the pipeline, the more magical the product feels, and the easier it is to overtrust it.

Microsoft will need to walk a fine line. Too much transparency and the product feels complicated. Too little transparency and users won’t understand the implications of model choice. The best outcome is probably a layered experience: simple by default, but with enough visibility for power users to inspect the process. That is hard to build, but enterprise software lives or dies on that balance.

- Enterprises need auditability and compliance.

- Consumers want simplicity and speed.

- Admins need control over providers and access.

- Power users want compare-and-contrast workflows.

- Trust depends on transparency, but not overload.

Governance, Privacy, and Compliance

The biggest hidden story here is that Microsoft is normalizing a world where Copilot can route work across different model providers with different terms. That sounds flexible, but it also creates a broader surface area for governance. Microsoft’s documentation explicitly states that Anthropic processing is outside Microsoft-managed environments and audit controls, and that Microsoft’s customer agreements do not apply in the same way. That is not a minor footnote for enterprises; it is a legal and operational boundary. (learn.microsoft.com)

At the same time, Microsoft says Researcher itself stays within Microsoft 365’s commercial data boundary and inherits Microsoft 365 Copilot’s security, privacy, and compliance practices. Those two ideas can coexist, but they are not identical. The implication is that the platform can host both Microsoft-native workflows and externally hosted model workflows, depending on the configuration. That gives customers flexibility, but it also means the burden of understanding the fine print shifts to the customer.

The compliance tradeoff

This is a classic enterprise software tradeoff: more capability often means more governance complexity. Microsoft can say it is giving customers choice, which is true, but choice always comes with administration. That is especially so when AI outputs may be used in reports, presentations, or internal decision-making. If a model is used to shape a business recommendation, the data path behind that output matters almost as much as the output itself.

There is also a broader regulatory implication. As AI tools become more deeply embedded in workflows, companies will need better internal policies on model use, logging, retention, and approval. Microsoft’s multi-model strategy may actually accelerate that discipline by forcing enterprises to ask harder questions. In that sense, the product could become a catalyst for better governance, even if that was not the primary intent.

- Data routing now matters as much as model quality.

- External processing raises legal review needs.

- Admins must understand provider-specific terms.

- Retention and logging policies may need updates.

- AI governance becomes a procurement issue.
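Treating model routing as a managed policy could look something like the sketch below: an admin-maintained allowlist, a flag recording whether a provider processes data outside the Microsoft-managed environment, and an audit record for every decision. The class and field names, the `authorize` function, and the rule that external processing needs a completed legal review are all hypothetical; they are not settings in the Microsoft 365 admin center.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List


@dataclass(frozen=True)
class ProviderPolicy:
    enabled: bool              # has an admin allowed this provider at all?
    external_processing: bool  # does data leave the Microsoft-managed environment?
    legal_review_done: bool    # hypothetical sign-off required for external providers


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str
    user: str
    provider: str
    allowed: bool
    reason: str


def authorize(user: str, provider: str, policies: Dict[str, ProviderPolicy],
              audit_log: List[AuditRecord]) -> bool:
    """Decide whether `user` may send a request to `provider`, and log the decision."""
    policy = policies.get(provider)
    if policy is None or not policy.enabled:
        allowed, reason = False, "provider not enabled by admin"
    elif policy.external_processing and not policy.legal_review_done:
        allowed, reason = False, "external processing awaiting legal review"
    else:
        allowed, reason = True, "permitted by policy"
    # Every decision, allowed or not, leaves a trace for later compliance review.
    audit_log.append(AuditRecord(datetime.now(timezone.utc).isoformat(),
                                 user, provider, allowed, reason))
    return allowed


if __name__ == "__main__":
    log: List[AuditRecord] = []
    policies = {
        "in_boundary_model": ProviderPolicy(True, False, False),  # hypothetical
        "external_model": ProviderPolicy(True, True, False),      # hypothetical
    }
    print(authorize("alice", "external_model", policies, log), log[-1].reason)
```

Logging denials as well as approvals is deliberate here: the retention and logging questions raised above are easier to answer when the record shows what was blocked, not only what ran.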

Product Design and User Experience

From a design perspective, Critique and Model Council are interesting because they make the invisible visible without fully exposing the machinery. Users do not need to know every prompt or chain-of-thought step; they just need enough structure to trust the outcome. That is the right instinct for a business product. The goal is not to turn every user into an AI engineer, but to create enough friction for better decisions and enough clarity for users to spot weaknesses.

The challenge is that more intelligence often means more complexity in the interface. If Microsoft gets this wrong, the feature may feel like overkill for simple tasks. But for multi-step research, that complexity is the point. A trivial answer should be quick; a serious answer should be inspectable. Microsoft seems to be trying to separate those two modes more cleanly.

Design principles that appear to be emerging

There is a broader pattern here that Microsoft appears to be embracing across Copilot: let the user choose when they need depth, let the system show its work when the stakes are high, and let model diversity improve the odds of a good answer. That is a more sophisticated product philosophy than “just ask the chatbot.” It is also more aligned with how knowledge work actually happens inside enterprises.

- Simple tasks should stay fast.

- Complex tasks should earn more orchestration.

- Review layers should be visible when useful.

- Model disagreement should be informative, not hidden.

- Workflow depth should match task importance.

Strengths and Opportunities

Microsoft's update has several clear strengths. It improves Copilot's credibility in one of the most important emerging AI categories: deep research for knowledge work. It also gives Microsoft a stronger story for enterprise customers who want performance without surrendering control to a single model vendor. Most importantly, it helps Copilot evolve from a branded assistant into a platform layer that can coordinate intelligence from multiple sources.

The opportunity is bigger than one feature. If Microsoft can make multi-model routing feel natural, it could define the next phase of enterprise AI, in which the winner is not the company with the single best model but the company with the best orchestration stack. That would put Microsoft in a very strong position across productivity, governance, and developer tooling.

- Better research quality through cross-model validation.

- Less dependence on OpenAI as the sole model backbone.

- More flexibility for enterprise customers with different risk tolerances.

- Stronger competitive posture against Perplexity and standalone AI apps.

- A clearer platform narrative around model orchestration.

- Potentially better user trust through visible comparison.

- Improved resilience if one vendor underperforms or changes terms.

Risks and Concerns

The biggest concern is that the feature can create a false sense of certainty. If two models agree, users may assume the answer is right when both could still be wrong. Agreement is not the same as truth. Another risk is cost and latency: multi-model workflows are inherently heavier, and if Microsoft overuses them, Copilot could become slower or more expensive to operate at scale. That tradeoff may be acceptable for premium workflows, but it is not trivial.

There are also governance risks. Because Anthropic processing sits outside Microsoft-managed environments, enterprises may need fresh reviews of compliance, retention, and contractual obligations. That adds friction at exactly the point where Microsoft wants adoption to accelerate. Finally, there is a strategic risk that Copilot becomes harder to explain: the more models, providers, and modes Microsoft adds, the more the product may feel powerful to insiders and confusing to ordinary users.

- False confidence if multiple models agree on a wrong answer.

- Higher compute costs from dual-model workflows.

- Latency increases on longer research tasks.

- Compliance complexity when external model providers are involved.

- User confusion if model selection becomes too opaque.

- Benchmark claims may be hard to verify in real-world use.

- Vendor entanglement shifts, but does not disappear.

Looking Ahead

The most important thing to watch is whether Microsoft expands Critique beyond Researcher. If the company sees strong adoption, it could bring similar multi-model review patterns into other Copilot surfaces, especially where summaries, drafting, and analysis intersect. That would make the feature less like an experiment and more like a design pattern. It would also reinforce Microsoft's message that AI productivity is about systems, not single prompts.

It will also be worth watching how enterprises respond to the governance implications. Some will welcome the flexibility. Others will be cautious about adding another external model relationship to their AI stack. The winners will likely be organizations that treat model routing as a managed policy, not an ad hoc user preference.

Signals to monitor

- Expansion to more Copilot workflows beyond Researcher.

- Adoption rates among Frontier and enterprise customers.

- Changes in admin controls for provider selection and governance.

- Competitive responses from Perplexity, OpenAI, and other AI vendors.

- User education efforts around model comparison and critique.

- Any future Microsoft first-party models appearing in the routing mix.

Source: Laodong.vn, "Microsoft upgrades Copilot, increases research power by combining AI tools"