Microsoft’s latest Copilot terms have reignited a familiar but uncomfortable debate: how much should users trust generative AI at work? The short answer from Microsoft’s consumer-facing legal language is: not much. The company says Copilot is for “entertainment purposes only,” warns that it can make mistakes, and tells users not to rely on it for advice, even as Microsoft continues to market Copilot aggressively as a work tool. That tension is not unique to Microsoft, but it is especially striking because the same company is selling an AI assistant as a productivity engine while its own terms shift risk back onto the user. (microsoft.com)

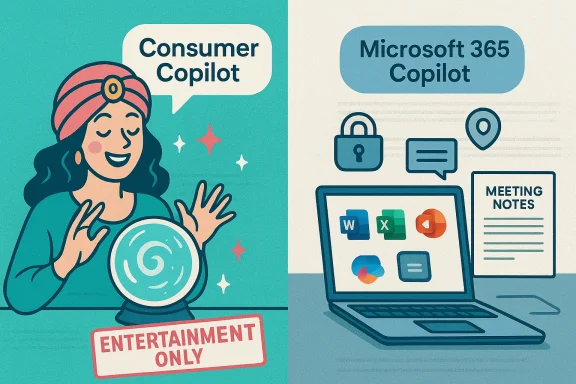

Source: TechRadar, “Even Microsoft's official terms say you shouldn't be using Copilot at work”

Overview

The controversy began with a straightforward observation: Microsoft’s consumer Copilot terms now contain unusually strong disclaimers about accuracy, reliance, and liability. The terms state that the service is for entertainment purposes only, may generate incorrect information, and should not be used for advice of any kind. They also say that users use Copilot at their own risk and must indemnify Microsoft against claims arising from use of the service. (microsoft.com)

That language sounds harsh when read beside Microsoft’s product pages for Microsoft 365 Copilot, which describe an AI system “built for work” and integrated into everyday business apps like Word, Excel, Outlook, and Teams. Microsoft’s enterprise messaging emphasizes productivity, governed access, business data, and enterprise-grade security. In other words, the company is making two very different promises depending on which Copilot product and which terms you are reading. (microsoft.com)

This is not a Microsoft-only story. OpenAI’s terms similarly warn users not to rely on outputs as a sole source of truth and require human review before sharing or acting on them. Anthropic’s commercial terms likewise say outputs are provided “as is” and may not be accurate, complete, or error-free. The broader industry pattern is clear: AI vendors want adoption, but they are also building legal cushions around hallucinations and misuse. (openai.com)

Still, Microsoft’s wording lands differently because of its scale in enterprise software. A company that sells the operating system, email suite, document tools, cloud services, and now AI assistants has extraordinary influence over workplace behavior. If its consumer terms say “do not rely” while its marketing says “boost productivity,” that contradiction is not just legal housekeeping. It is a signal that the AI market is still negotiating where useful assistance ends and dependable automation begins. (microsoft.com)

What Microsoft’s terms actually say

The most attention-grabbing phrase is the one that sounds almost sarcastic in a workplace context: Copilot is for “entertainment purposes only.” That line appears in the consumer terms, where Microsoft also says the service is not error-free, may not work as expected, and may generate incorrect information. The same section warns users not to rely on Copilot for advice of any kind. (microsoft.com)

The legal shield is doing a lot of work

The legal structure is designed to reduce Microsoft’s exposure if a user acts on bad output. The terms say Microsoft makes no guarantees that Copilot will function as intended and that users accept the service at their own risk. They also require users to indemnify Microsoft for claims, losses, and expenses tied to use of the service, including breaches of the terms or violations of law. (microsoft.com)

That is a common strategy in AI terms, but it is still important because it clarifies the intended legal relationship. Microsoft is not saying Copilot is useless; it is saying that Copilot is not a guaranteed decision engine. The company wants users to treat it as a tool whose outputs need review, not as an authority whose answers can be adopted blindly. That distinction matters in settings where an AI-generated mistake can create financial, legal, or reputational harm. (microsoft.com)

Consumer Copilot versus workplace Copilot

Microsoft’s consumer terms and its business messaging are not identical products with identical promises. The consumer Copilot terms emphasize caution and risk allocation, while Microsoft 365 Copilot is positioned as an enterprise product with security, privacy, and governance features. Microsoft says work prompts, inputs, and responses in Microsoft 365 Copilot are never used to train the models, and that enterprise users benefit from governed access and existing Microsoft 365 permissions. (microsoft.com)

That split helps explain the apparent contradiction. Microsoft is essentially saying: if you are using the consumer assistant, treat it like a general-purpose AI companion with no warranties; if you are using the enterprise product, you get a more controlled work environment. The problem is that many users still see “Copilot” as a single brand, which makes the legal nuance easy to miss. (microsoft.com)

- Consumer Copilot: broad disclaimers, no guarantee of accuracy, no advice reliance.

- Microsoft 365 Copilot: work-focused positioning, enterprise controls, governed data access.

- Shared reality: both still require human judgment. (microsoft.com)

Why this matters for workplace use

The practical issue is not whether people can use Copilot at work; of course they can, and Microsoft wants them to. The real issue is whether organizations understand that using a consumer-grade AI assistant to draft text, summarize material, or answer questions does not transfer responsibility to the vendor. Microsoft’s terms say the opposite: the user remains responsible for use and should not rely on the outputs as advice. (microsoft.com)

The risk of treating AI like a senior colleague

In many offices, AI tools quickly become informal authority figures. Workers ask a chatbot for policy interpretations, customer responses, code snippets, competitive analysis, or legal-ish phrasing, then copy the answer into a memo or email with minimal review. That workflow is efficient, but it also creates an illusion of expertise that the model does not actually possess. (microsoft.com)

Microsoft’s terms are a reminder that this habit has liability consequences. If Copilot produces an inaccurate answer and a user acts on it, Microsoft’s position is that the user should have validated it. That is not just a legal line; it is an operating principle for AI adoption in the enterprise. Human review is not optional if the output could affect contracts, customer communications, compliance decisions, or regulated advice. (microsoft.com)

The consumer app is not the enterprise stack

Microsoft 365 Copilot is built around organizational data, permissions, and compliance controls. Microsoft says it can work across Word, Excel, Outlook, Teams, and other apps, using work context and governed access to produce more relevant outputs. That is far closer to a managed enterprise service than the consumer assistant described in the terms that sparked this story. (microsoft.com)

For business customers, this distinction is crucial. A company paying for Microsoft 365 Copilot is buying into an environment where data boundaries, retention policies, and access controls are part of the promise. A casual user on the consumer Copilot interface is not getting the same legal and operational posture. The branding may be unified, but the risk profile is not. (microsoft.com)

- Work outputs still need review, especially for legal, financial, HR, and compliance tasks.

- Brand familiarity can create false confidence if users assume all Copilot products behave the same.

- Enterprise controls reduce some risk, but they do not eliminate hallucinations or bad judgment. (microsoft.com)

The industry-wide legal trend

Microsoft is not alone in pushing responsibility toward the user. OpenAI’s terms say users are responsible for content and must evaluate outputs for accuracy and appropriateness before using or sharing them. Anthropic’s commercial terms say its services and outputs are provided “as is” and that the company does not warrant accuracy, completeness, or error-free use. (openai.com)

Everyone is writing the same escape hatch

That legal convergence is not accidental. AI vendors know that hallucinations, misattributions, and harmful outputs are not edge cases anymore; they are an expected part of the product category. So the contracts increasingly describe outputs as probabilistic, advisory, and reviewable rather than authoritative. This is a structural shift in how software is sold. (openai.com)

OpenAI’s terms are particularly direct. They say users should not rely on outputs as a sole source of truth or a substitute for professional advice, and they explicitly require human review before using or sharing output. That is a meaningful point of comparison because it shows Microsoft’s cautionary language is not unprecedented, even if the wording in Copilot’s consumer terms sounds especially blunt. (openai.com)

Why Microsoft gets singled out anyway

Microsoft gets more scrutiny because it sits at the center of mainstream productivity software. When a tool is built into the same ecosystem that runs email, documents, meetings, and identity, its mistakes can feel more consequential. The company also markets Copilot as something users can rely on to transform work, which creates a sharper contrast with the legal disclaimers.

There is also a trust issue. Microsoft is simultaneously promoting enterprise-grade protections like governed access and data privacy while warning consumers not to rely on the tool for advice. That dual message is defensible, but it makes the consumer version look more fragile than the marketing suggests. In practical terms, the industry’s legal language is becoming more honest, but not necessarily more reassuring. (microsoft.com)

- OpenAI: evaluate outputs before use or sharing.

- Anthropic: outputs are not guaranteed accurate or error-free.

- Microsoft: consumer Copilot is framed as non-advisory and used at the user’s risk. (openai.com)

What Microsoft is selling to businesses

Microsoft’s enterprise narrative is still aggressive. The company says Microsoft 365 Copilot is “AI built for work,” powered by Work IQ, and designed to connect personal and organizational knowledge across work apps. Microsoft also says prompts, inputs, and responses are never used to train the models in the enterprise service. (microsoft.com)

Security and privacy are part of the value proposition

This matters because enterprise AI adoption is often blocked by data governance concerns more than by model quality. Microsoft’s pitch is that Copilot works inside the organization’s existing permissions, sensitivity labels, and retention policies, so companies can deploy AI without surrendering control over sensitive content. That is a compelling story for IT departments that need something more formal than a public chatbot. (microsoft.com)

The consumer terms, by contrast, make no such enterprise-grade promise. That difference should influence how organizations guide employees. If a worker uses the consumer version to draft work material, the company should assume a less protected environment than the Microsoft 365 version provides. Governance is not a marketing slide; it is a boundary condition for acceptable use. (microsoft.com)

Copilot as a workflow layer, not a replacement brain

Microsoft’s product pages describe Copilot as a way to automate routine work, summarize documents, generate insights, and help with tasks across apps. This is a productivity layer, not an autonomous substitute for human decision-making. The company’s own descriptions repeatedly frame the assistant as something that helps users move faster, not something that can be trusted to think for them. (microsoft.com)

That framing is strategically important. If AI is sold as a workflow accelerator, then the quality bar is about usefulness and safety under supervision. If it is sold as a decision-maker, the liability and trust expectations rise dramatically. Microsoft is clearly trying to stay on the safer side of that line, even while its branding encourages ambitious use. (microsoft.com)

- Enterprise Copilot is governed by business controls.

- Consumer Copilot is framed more like a general assistant.

- Microsoft wants users to see Copilot as a helper, not a final authority. (microsoft.com)

Enterprise versus consumer impact

For enterprises, Microsoft’s wording should be read as a policy signal as much as a legal one. It suggests that companies need usage rules, approval processes, and review workflows for anything Copilot generates. That is especially true in functions where a mistake can create contractual exposure, privacy violations, or compliance failures. (microsoft.com)

What IT departments should take from this

IT and security leaders should not interpret Microsoft’s disclaimers as an anti-Copilot message. Rather, they should read them as a warning that deployment without governance is reckless. Microsoft’s enterprise messaging around permissions, privacy, and control only works if organizations configure and monitor the tool properly. (microsoft.com)

A practical rollout policy would likely include review requirements for legal, financial, external, and customer-facing content. It should also define which Copilot surfaces are approved, which data sources are allowed, and what kinds of sensitive prompts are prohibited. The biggest mistake would be assuming all AI output is equally safe simply because it came from a trusted vendor. (microsoft.com)
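For teams that want to turn a rollout policy of this kind into something checkable, the rules can be sketched as a small gate in code. The following Python is only an illustration under stated assumptions: the surface names, content categories, data sources, and prompt tags are all hypothetical, and nothing here corresponds to a Microsoft API or an official Copilot setting.

```python
from dataclasses import dataclass, field

# Every value below is a hypothetical placeholder for an organization's own policy.
APPROVED_SURFACES = {"microsoft_365_copilot"}
ALLOWED_DATA_SOURCES = {"sharepoint_internal", "team_wiki"}
REVIEW_REQUIRED_CATEGORIES = {"legal", "financial", "external", "customer_facing"}
PROHIBITED_PROMPT_TAGS = {"customer_pii", "credentials", "unreleased_financials"}


@dataclass
class CopilotRequest:
    surface: str            # which Copilot product the employee is using
    content_category: str   # what kind of output is being drafted
    data_sources: set[str] = field(default_factory=set)  # sources the prompt draws on
    prompt_tags: set[str] = field(default_factory=set)   # sensitivity tags on the prompt


def evaluate(request: CopilotRequest) -> str:
    """Return 'blocked', 'needs_human_review', or 'allowed' for one request."""
    # Unapproved surfaces (for example, a consumer assistant) are rejected outright.
    if request.surface not in APPROVED_SURFACES:
        return "blocked"
    # Any data source outside the allow-list blocks the request.
    if not request.data_sources <= ALLOWED_DATA_SOURCES:
        return "blocked"
    # Any prohibited sensitivity tag blocks the request.
    if request.prompt_tags & PROHIBITED_PROMPT_TAGS:
        return "blocked"
    # High-stakes content categories always route to a human reviewer.
    if request.content_category in REVIEW_REQUIRED_CATEGORIES:
        return "needs_human_review"
    return "allowed"
```

In this sketch, a request from an unapproved surface is blocked before anything else is considered, which mirrors the point that the consumer and enterprise products carry different risk profiles. An "allowed" result only means no formal sign-off is required; it does not imply the output is correct.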

Consumers face a different kind of problem

For consumers, the issue is less about enterprise governance and more about overtrust. Casual users are more likely to ask for advice about health, school, money, travel, or personal decisions, then assume the answer is authoritative because it arrived in a polished interface. Microsoft’s terms explicitly warn against this mindset by saying Copilot should not be used for advice of any kind. (microsoft.com)

That warning is harsh, but it reflects the current state of consumer AI. The tools can be useful, fast, and impressively fluent, yet they still produce confident errors. The consumer lesson is simple: if the output matters, verify it elsewhere. The enterprise lesson is similar, but with higher stakes and more formal accountability. (microsoft.com)

- Enterprises need policies, not just licenses.

- Consumers need skepticism, not blind trust.

- Both groups should assume Copilot can be wrong. (microsoft.com)

The copyright and liability backdrop

One reason AI terms are getting tougher is that the copyright and liability picture remains unsettled. Microsoft’s consumer Copilot terms explicitly say the company does not warrant that material created by the service will not infringe third-party rights in subsequent use, and users agree to indemnify Microsoft for claims arising from use of the service. That is a significant load-bearing clause in a world where generative AI can echo style, structure, or fragments of protected material. (microsoft.com)

Why indemnity clauses keep appearing

Indemnity is the contract mechanism that pushes some downstream legal risk onto the user. If a user takes AI output and publishes it, distributes it, or relies on it in a way that triggers a claim, the vendor wants to limit its exposure. This is especially relevant for content generation, image creation, and work product that may later be subject to IP or defamation disputes. (microsoft.com)

Microsoft has separately announced copyright protections for paid commercial Copilot services in the past, but that does not erase the consumer terms’ broad disclaimer structure. In fact, it underscores the segmentation strategy: enterprise customers may get more protection, while general users get a more self-service, self-risk model. That is a familiar enterprise pattern in cloud software, but AI makes it more visible because the outputs themselves can be legally ambiguous.

The output problem is bigger than copyright

It would be a mistake to reduce this to copyright alone. The broader issue is whether a user can safely rely on an AI-generated answer for any consequential task. OpenAI’s terms explicitly prohibit using output as the basis for important decisions about a person, and Anthropic’s terms disclaim accuracy and completeness. Microsoft’s Copilot terms fit the same family of caution, suggesting that the industry’s legal language is converging on a basic truth: current AI is useful, but not trustworthy enough to bear sole responsibility. (openai.com)

That reality should temper the hype cycle. The next phase of AI adoption will be less about whether a model can generate convincing prose and more about whether companies can prove they controlled the risk around its use. For Microsoft, that means balancing the promise of workplace transformation with a contract structure that repeatedly reminds users to be careful. (microsoft.com)

- Indemnity shifts risk downstream.

- Copyright is only one part of the legal puzzle.

- The larger question is whether AI outputs can be treated as dependable work product. (microsoft.com)

Why the messaging feels contradictory

The contradiction is mostly rhetorical, but rhetoric matters when a company is selling trust. Microsoft’s public pages say Copilot is built for work, delivers productivity gains, and helps users move faster with enterprise-grade security. The consumer terms say it is for entertainment, can be wrong, and should not be used for advice. Both statements can be true in context, but they do not sound harmonious to the average user. (microsoft.com)

Marketing language versus legal language

Marketing exists to expand use cases. Legal language exists to constrain liability. In AI, those functions collide more sharply than they do in traditional software because the product can sound far more competent than it is. A polished chatbot can feel like a knowledgeable assistant even when the contract says otherwise. (microsoft.com)

That gap is not merely semantic. Workers may assume they can use Copilot the way they use search, a calculator, or spellcheck. Microsoft’s own consumer terms say that assumption is unsafe. The company’s enterprise pages try to restore confidence by describing safeguards and governance, but the average reader will still notice the tension. The more capable the demo, the more severe the disclaimer feels. (microsoft.com)

What users should infer

Users should not infer that Microsoft is trying to ban Copilot from work. It plainly is not; the company continues to center its product strategy on workplace adoption. What users should infer is that Microsoft is trying to separate capability from guarantee. That is a subtle but critical difference, especially for organizations eager to automate without changing their review culture. (microsoft.com)

This means the best operational approach is to define Copilot as a drafting and assistance layer rather than an authority layer. If a worker uses it to brainstorm, summarize, or translate, that fits the intended role. If a worker uses it as the final arbiter of policy, law, or finance, the company’s own terms suggest that is a misuse of the tool’s implied reliability. (microsoft.com)

- Copilot is marketed as productive, but licensed as fallible.

- Capability does not equal reliability.

- Organizations should write policies that reflect the tool’s legal limits. (microsoft.com)

Strengths and Opportunities

Microsoft’s position is stronger than the headline suggests because it has both a massive installed base and a clear enterprise path for Copilot adoption. It can bundle AI into the apps workers already use, add governance controls, and sell an integrated story rather than a standalone chatbot. That gives Microsoft a powerful distribution advantage over smaller AI vendors. The company also benefits from the fact that many organizations want AI assistance but still need compliance-friendly tooling. That is a very large market. (microsoft.com)

- Distribution advantage through Microsoft 365.

- Enterprise governance that many competitors cannot match.

- Familiar workflows reduce adoption friction.

- Data-bound positioning helps with compliance conversations.

- Brand power keeps Copilot top of mind for IT buyers.

- Cross-app integration increases daily utility.

- Opportunity to formalize AI literacy inside companies. (microsoft.com)

Risks and Concerns

The biggest risk is trust erosion. If consumers read Microsoft’s terms and conclude Copilot is too unreliable for serious use, the product could suffer reputationally even if enterprise adoption continues. A second risk is internal misuse: employees may treat consumer Copilot as if it were enterprise Copilot, especially when the branding is similar. There is also the evergreen danger of over-reliance, where users skip verification because the answer looks polished. That is how small errors become expensive incidents. (microsoft.com)

- Trust gap between marketing and legal language.

- Brand confusion across consumer and enterprise Copilot products.

- Over-reliance on unverified outputs.

- Potential copyright and IP disputes from reused content.

- Compliance exposure if AI is used without review.

- User frustration if the tool is positioned as smarter than it is.

- Legal ambiguity around liability remains unresolved. (microsoft.com)

Looking Ahead

Microsoft’s challenge is not really to explain away the disclaimer; it is to prove that the disclaimer and the product can coexist. The company appears to be betting that enterprises will accept a tool that is explicitly fallible so long as it is secure, integrated, and efficient. That may be the right bet, because most businesses do not need AI to be perfect. They need it to be useful under supervision. (microsoft.com)

For consumers, the message should probably be even simpler: use Copilot as a starting point, not an authority. That advice may sound obvious, but the terms show Microsoft thinks it needs to be said plainly. In the near term, the winning AI products are likely to be the ones that combine speed with transparency about limits, not the ones that pretend confidence is the same thing as correctness. (microsoft.com)

- Clearer product segmentation between consumer and enterprise Copilot.

- More explicit company policies on AI-generated work output.

- Better in-product disclosures about verification and uncertainty.

- Greater demand for auditability in AI-assisted workflows.

- Ongoing legal refinement as vendors continue shifting risk language. (microsoft.com)
