Microsoft’s Copilot strategy is entering a new phase, and the significance goes well beyond another feature launch. Microsoft is now blending OpenAI’s GPT and Anthropic’s Claude inside its research and agentic experiences to improve verification, comparison, and long-running task execution. The move reflects a broader shift in enterprise AI: one model is no longer enough, and buyers increasingly want systems that can cross-check themselves before producing a final answer. Microsoft’s own documentation and blog posts now show Claude support in Researcher, broader model diversity in Copilot, and a growing “Frontier” program for early access to the latest agentic tools.

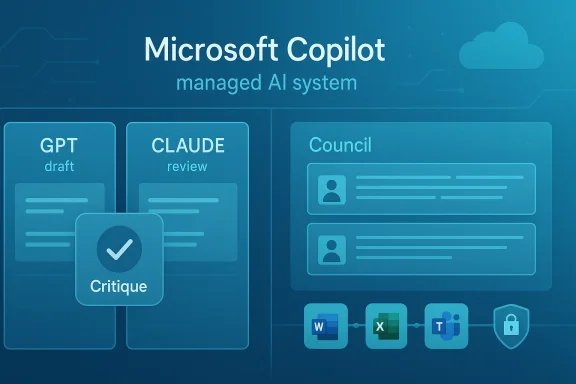

Source: LatestLY, “Microsoft Unveils Multi-Model AI Upgrades as Copilot Integrates Claude and GPT for Real-Time Verification”

Overview

For much of the Copilot era, Microsoft leaned heavily on its partnership with OpenAI and positioned that relationship as the foundation of its productivity AI stack. That made sense when the market was still treating large language models as standalone chat engines, but it is a weaker posture now that enterprise buyers care about reliability, orchestration, and governance as much as raw model quality. Microsoft’s latest changes suggest a deliberate pivot from “which model is best?” to “how do we combine multiple models into a better workflow?”

That shift is visible in the Researcher experience, where Microsoft now supports Claude in addition to GPT, and in the company’s wider Copilot Studio and Frontier initiatives. Microsoft says the architecture is model diverse by design, which is a notable statement from a company that once appeared tightly coupled to a single frontier model partner. The practical implication is clear: Microsoft wants Copilot to feel less like a chatbot and more like a managed AI system that can reason, draft, review, and act across tasks.

The timing matters too. Microsoft is pushing these changes while the broader market is still debating whether agentic AI is transformative or overhyped. At the same time, the company is facing investor scrutiny over the cost of AI infrastructure and the pace at which those investments will convert into durable revenue. That tension helps explain why Microsoft is emphasizing enterprise controls, multi-model flexibility, and workflow productivity rather than flashy consumer-style demos.

There is also an important competitive subtext. Google has Gemini, Anthropic has Claude, OpenAI continues to improve GPT, and independent agent platforms are trying to own the “workflow layer” above the models. Microsoft’s answer is to sit in the middle: own the interface, own the enterprise integration, and remain flexible about which model powers the work. That is a pragmatic strategy, and possibly the only one that can keep Copilot relevant as model quality converges.

The Multi-Model Pivot

Microsoft’s embrace of multiple models is not merely a product tweak; it is an architectural bet. In practical terms, the company is telling enterprise customers that the best AI experience may come from combining different systems rather than forcing every task through a single model family. That matters because different models excel at different workloads, and enterprises care deeply about variance, latency, and accuracy.

The strongest signal came with Microsoft’s description of Copilot as model diverse by design. That phrase reveals a long-term strategic position: Microsoft wants to make every model useful at work, whether it comes from OpenAI, Anthropic, or another provider later. In effect, Copilot becomes a brokerage layer for AI capability, not just a branded wrapper around one model’s output.

Why diversification matters

A diversified AI stack reduces the risk of overdependence on a single vendor relationship. It also gives Microsoft a stronger negotiating position and more room to optimize for cost, speed, or quality depending on the task. For customers, that can translate into better answers and more predictable availability, though it also creates more complexity behind the scenes.

The market implication is that Microsoft is trying to become the default enterprise aggregator for AI models. That is a smart move because most businesses do not want to manage a zoo of point solutions if one platform can orchestrate the same capabilities under a unified security and identity layer. The more Microsoft can make model switching invisible, the more value it captures from the relationship.

- Microsoft is reducing dependence on a single frontier model vendor.

- Enterprise buyers get more optionality without rebuilding workflows.

- The Copilot brand becomes a platform, not just an assistant.

- Model diversity can improve resilience when one system underperforms.

- The trade-off is greater operational complexity behind the UI.

How Critique Changes Researcher

The most interesting part of the update is the Critique workflow, which uses one model to draft and another to review. That is a meaningful departure from the usual “single-shot generation” pattern that still dominates consumer AI. Instead of treating the model output as final, Microsoft is effectively building a second layer of scrutiny into the process.

According to Microsoft’s support documentation, Claude is now available in Researcher, with availability rolling out gradually and full access expected by the end of March 2026. That matters because it shows the integration is not theoretical or merely announced; it is already being enabled in production workflows for some organizations. The fact that Microsoft says the system reverts to the default model after the session ends also suggests the company is keeping the model choice controlled and session-based.

Verification as a product feature

The logic behind Critique is straightforward. If GPT drafts an answer and Claude reviews it for accuracy, the user gets a process that resembles internal peer review. That does not eliminate hallucinations, but it should reduce obvious errors, unsupported claims, and weak reasoning chains.

This is especially relevant for research tasks, where users often care less about a polished tone than about whether the answer is trustworthy. By turning verification into a visible workflow, Microsoft is acknowledging a reality the industry has been slow to admit: AI needs checks and balances, not just bigger models. For enterprise adoption, that is a stronger sales message than “our model is best on benchmarks.”

- Drafting and reviewing are being separated into distinct model roles.

- The workflow aims to reduce hallucinations and unsupported statements.

- Session-based model selection gives admins more control.

- The feature is more valuable for research than for casual chat.

- It implicitly validates the need for human-style review in AI output.
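
The draft-then-review split described above can be sketched in a few lines of Python. This is an illustration only, not Microsoft’s implementation: `draft_then_review`, `ReviewedAnswer`, and both stub models are hypothetical names, and a real reviewer would be a call to a second model rather than a string check.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-in for a model endpoint: takes a prompt, returns text.
Model = Callable[[str], str]


@dataclass
class ReviewedAnswer:
    draft: str
    review_notes: str
    approved: bool


def draft_then_review(question: str, drafter: Model, reviewer: Model) -> ReviewedAnswer:
    """Ask one model for a draft, then ask a second model to check it."""
    draft = drafter(question)
    review_prompt = (
        f"Question: {question}\n"
        f"Draft answer: {draft}\n"
        "Reply with OK if every claim is supported; otherwise list the problems."
    )
    notes = reviewer(review_prompt)
    # Assumption: the reviewer signals approval with the single word "OK".
    approved = notes.strip().upper() == "OK"
    return ReviewedAnswer(draft=draft, review_notes=notes, approved=approved)


# Stub models so the sketch runs without any network access.
def stub_drafter(prompt: str) -> str:
    return "Paris is the capital of France."


def stub_reviewer(prompt: str) -> str:
    return "OK" if "Paris" in prompt else "Unsupported claim about the capital."


result = draft_then_review("What is the capital of France?", stub_drafter, stub_reviewer)
print(result.approved, result.review_notes)
```

Keeping the reviewer’s notes alongside the draft, rather than silently rewriting the answer, is what makes the second pass visible to the user.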

Council and Side-by-Side Comparison

If Critique is about internal review, Council is about transparency. The comparison interface lets users view responses from different models side by side, which is useful when the goal is not a single correct answer but a better understanding of trade-offs. That is a subtle but important shift in the user experience.

This kind of side-by-side evaluation helps users see where models agree, where they diverge, and which one is stronger for a given type of task. In enterprise settings, that matters because different workflows require different strengths: one model may be better at summarization, another at synthesis, and another at long-context reasoning. A comparison view makes those differences visible rather than hidden.

Why comparison matters for enterprise AI

A lot of AI disappointment in the enterprise comes from mismatched expectations. Users ask one model to do everything and then blame the product when it performs unevenly. Council gives Microsoft a way to educate users through the interface itself, turning model evaluation into a native part of the workflow.

There is also a governance angle. When teams can compare outputs, they are better equipped to make informed decisions about confidence and escalation. That is a big deal for regulated industries, where traceability and explainability often matter almost as much as raw accuracy.

- Council exposes model differences instead of hiding them.

- It helps users identify disagreements and edge cases.

- It supports training and adoption by making model behavior visible.

- It can improve trust in AI-assisted research.

- It positions Copilot as a decision-support layer, not just a text generator.
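
A side-by-side comparison of this kind reduces to sending one prompt to several models and surfacing where they differ. The sketch below is a rough illustration under stated assumptions: `compare_side_by_side` and the stub models are hypothetical, and the exact-match agreement check is deliberately naive (a real system would need some form of semantic comparison).

```python
from typing import Callable, Dict

# Hypothetical stand-in for a model endpoint: takes a prompt, returns text.
Model = Callable[[str], str]


def compare_side_by_side(prompt: str, models: Dict[str, Model]) -> dict:
    """Send the same prompt to every model and report whether they agree."""
    answers = {name: model(prompt) for name, model in models.items()}
    # Naive agreement test: identical text after trimming and lowercasing.
    distinct = {text.strip().lower() for text in answers.values()}
    return {"answers": answers, "models_agree": len(distinct) == 1}


# Stub models with made-up names, used only to exercise the function.
stub_models = {
    "model_a": lambda prompt: "Revenue grew 12%.",
    "model_b": lambda prompt: "Revenue grew 15%.",
}

report = compare_side_by_side("Summarize the quarterly report.", stub_models)
for name, answer in report["answers"].items():
    print(f"{name}: {answer}")
print("agree:", report["models_agree"])
```

A disagreement flag like `models_agree` is the kind of signal a team could use to decide when an answer needs human escalation.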

Copilot Cowork and Agentic Workflows

The expansion of Copilot Cowork is the other major pillar of the announcement. Microsoft is broadening access to its agentic tooling through the Frontier program, signaling that it wants customers to move from asking questions to delegating tasks. That is the core promise of agentic AI: not just generating content, but completing workflows over time.

Microsoft and Anthropic have both described Cowork as a system for long-running, multi-step work. In Microsoft’s framing, this is about productivity inside the same security, identity, and compliance boundaries that already govern Microsoft 365. That combination of autonomy and enterprise guardrails is exactly what buyers have been asking for, even if the technology is still maturing.

What agentic AI actually changes

The real difference with agentic tools is persistence. Instead of answering a prompt and disappearing, the system can keep working in the background, returning to a task after multiple steps or context changes. That has obvious appeal for scheduling, research, document preparation, and cross-application coordination.

But the promise also raises the bar for reliability. A model that writes a bad paragraph is annoying; an agent that makes a bad decision inside a workflow can create cascading damage. Microsoft’s push into Cowork suggests the company believes enterprise customers are willing to accept that risk if the controls are strong enough and the gains are large enough.

- Cowork is aimed at multi-step, background work.

- It is designed to operate within Microsoft 365 governance controls.

- It represents a shift from assistance to delegation.

- It raises the operational stakes if errors propagate through workflows.

- It is one of Microsoft’s clearest answers to the agentic AI market.
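
A back-of-the-envelope calculation shows why errors matter more in multi-step work than in single answers. The sketch assumes every step succeeds independently with the same probability, which is a simplification, and the figures are illustrative rather than measured; `workflow_success_probability` is a hypothetical helper.

```python
def workflow_success_probability(step_success_rate: float, steps: int) -> float:
    """Chance that every step succeeds, assuming independent, identical steps."""
    if not 0.0 <= step_success_rate <= 1.0:
        raise ValueError("step_success_rate must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_success_rate ** steps


# Illustrative numbers only: a step that works 95% of the time, at varying lengths.
for steps in (1, 5, 10, 20):
    chance = workflow_success_probability(0.95, steps)
    print(f"{steps:>2} steps: {chance:.1%} chance of a clean run")
```

Under these assumptions, a step that looks reliable in isolation yields a ten-step workflow that finishes cleanly only about 60% of the time, which is why guardrails and checkpoints carry so much weight in agentic tooling.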

Enterprise Readiness and Governance

Microsoft is clearly targeting enterprise buyers first, and for good reason. Consumer users may enjoy model choice, but enterprises need permissioning, data boundaries, admin controls, and predictable rollout schedules. The support page for Claude in Researcher specifically notes that admins must allow access in Microsoft 365 before users can use it.

That governance-first posture helps explain why Microsoft keeps describing these additions as part of a managed platform rather than a freeform AI playground. The company is not simply opening the floodgates to every model; it is integrating them under a controlled product and tenancy model. For regulated organizations, that distinction is not cosmetic.

Security and control remain the selling point

Microsoft’s pitch is that customers can gain frontier-model capability without surrendering enterprise controls. In theory, that means identity, compliance, retention, and policy enforcement stay intact even as the underlying model changes. In practice, the strength of the system will depend on how cleanly Microsoft abstracts the model layer and how consistently it enforces policy across workflows.

This is also where Microsoft has a competitive advantage over smaller AI toolmakers. Many startups can offer clever agents, but fewer can match Microsoft’s footprint in identity, data, and productivity software. If Copilot can reliably combine those layers with best-in-class models, it becomes harder for standalone AI apps to justify themselves inside the enterprise stack.

- Admin approval is required before Claude is available in some environments.

- Microsoft is emphasizing enterprise governance over open-ended experimentation.

- Identity and compliance integration are central to the pitch.

- The platform strategy favors larger organizations with existing Microsoft estates.

- Control is part of the product value, not just an IT afterthought.
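
Two of the controls reported for Researcher, admin approval before a model can be used and a return to the default model when a session ends, can be modeled in a short sketch. Everything here is hypothetical (`TenantPolicy`, `ResearchSession`, and the model identifiers are invented for illustration) and says nothing about how Microsoft 365 actually enforces these rules.

```python
from dataclasses import dataclass, field
from typing import Set

DEFAULT_MODEL = "default-model"  # hypothetical identifier


@dataclass
class TenantPolicy:
    """Models the tenant admin has enabled; only the default is on initially."""
    allowed_models: Set[str] = field(default_factory=lambda: {DEFAULT_MODEL})


class ResearchSession:
    """A session whose model choice is policy-gated and does not outlive it."""

    def __init__(self, policy: TenantPolicy) -> None:
        self.policy = policy
        self.active_model = DEFAULT_MODEL

    def select_model(self, name: str) -> None:
        if name not in self.policy.allowed_models:
            raise PermissionError(f"{name} has not been enabled by the tenant admin")
        self.active_model = name

    def __enter__(self) -> "ResearchSession":
        return self

    def __exit__(self, exc_type, exc, tb) -> bool:
        # Revert to the default when the session ends, even after an error.
        self.active_model = DEFAULT_MODEL
        return False


policy = TenantPolicy(allowed_models={DEFAULT_MODEL, "alternate-model"})
with ResearchSession(policy) as session:
    session.select_model("alternate-model")
    print("during session:", session.active_model)
print("after session:", session.active_model)
```

Tying the reset to the end of the session means a user’s one-off choice never quietly becomes the tenant’s new default.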

Competitive Implications

Microsoft’s move is best understood as a response to a crowded and fast-moving market. Google is pushing Gemini deeper into its own productivity stack, Anthropic is advancing the reputation of Claude as a strong reasoning and enterprise model, and OpenAI continues to extend GPT capabilities across consumer and enterprise use cases. Microsoft does not need to win every benchmark; it needs to remain the most practical place to use frontier AI at work.

The company also appears to be responding to customer sentiment that model quality alone is not enough. Many enterprises are now asking for validation, orchestration, and auditable workflows, not just a more fluent chatbot. By making multi-model collaboration a visible part of Copilot, Microsoft is effectively productizing a solution to the trust problem.

Pressure on rivals

For OpenAI, the Microsoft partnership remains strategically important, but it no longer guarantees exclusive prominence inside Copilot. For Anthropic, the integration is a major distribution win that validates Claude as an enterprise-grade choice beyond its own standalone environment. For Google, Microsoft’s move raises the bar: matching features is not enough if the enterprise workflow remains less integrated.

The broader lesson is that the AI race is becoming less about who has the smartest demo and more about who can operationalize model diversity at scale. Microsoft’s answer is to use its software empire to make model choice feel seamless. That is a powerful position if execution holds.

- Microsoft is competing on platform integration, not just model quality.

- Anthropic gains enterprise distribution through Microsoft.

- OpenAI remains important, but not singularly dominant in Copilot.

- Google faces a workflow-first challenge, not just a model benchmark challenge.

- The real competition is increasingly about trust, governance, and integration.

Why the Timing Matters

This rollout arrives when investors are scrutinizing AI spending more aggressively. Microsoft’s infrastructure outlays have fueled concerns about whether the current wave of AI investment will produce a commensurate return, especially if customers are still experimenting rather than scaling. The company’s stock pressure this quarter underscores that tension between long-term strategy and near-term market expectations.

At the same time, Microsoft is trying to prove that Copilot can become a habit, not a novelty. The company has repeatedly emphasized adoption, paid seats, daily active usage, and broad deployment across large organizations. That makes sense: if AI is to become a durable business, it must move from occasional use to routine workflow dependency.

The investment story behind the product story

The product roadmap and the financial story are tightly linked. If Copilot becomes more accurate, more useful, and more trusted, Microsoft can justify continued spend on models, data centers, and orchestration layers. If it does not, the company risks being seen as a cloud provider subsidizing experimentation rather than monetizing transformation.

That is why verification features like Critique matter more than they might appear at first glance. They are not just usability enhancements; they are evidence that Microsoft is trying to close the gap between capability and confidence. In enterprise software, that gap often determines whether a product becomes indispensable or merely interesting.

- AI spending needs to show product and revenue conversion.

- Copilot adoption metrics are central to Microsoft’s narrative.

- Better trust features support the investment thesis.

- Long-term usage matters more than one-time experimentation.

- Enterprise confidence can justify continued platform expansion.

Strengths and Opportunities

Microsoft’s latest Copilot changes create a more credible story for enterprise AI than a single-model assistant ever could. The combination of multi-model drafting, verification, comparison, and agentic execution gives the company a broader toolkit for winning productivity workflows. Just as importantly, it reframes AI as a system of managed capabilities rather than a single conversational interface.

- Model diversity can improve reliability across different task types.

- Critique may reduce obvious hallucinations and unsupported claims.

- Council gives users transparency into model differences.

- Copilot Cowork expands the platform from chat to workflow automation.

- Enterprise governance makes the offering easier to adopt at scale.

- Anthropic support broadens Microsoft’s vendor ecosystem.

- Frontier access helps Microsoft test features with early enterprise adopters.

Risks and Concerns

The same changes that make Copilot more powerful also make it more complicated. Multi-model orchestration can introduce latency, inconsistent behavior, and tougher debugging when outputs disagree. There is also a real risk that customers will expect verification to equal correctness, even though it only reduces errors rather than eliminating them.

- Added complexity may confuse users who just want a quick answer.

- Latency could rise when one model drafts and another reviews.

- Governance gaps may appear if policies are not enforced consistently.

- False confidence could grow if users overtrust the Critique layer.

- Cost pressures may intensify if multiple models are used per query.

- Agentic errors can compound in long-running workflows.

- Brand confusion remains a problem across Copilot’s many product variants.
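
The latency and cost concerns in the list above follow from simple arithmetic. In the sketch below, a draft-then-review pipeline runs its stages back to back, so the waits add up, while a side-by-side comparison can run its models concurrently, so the wait is set by the slowest one; cost accumulates in both cases. The function names are hypothetical and the numbers are invented placeholders, not measurements of any product.

```python
from typing import Sequence


def sequential_latency(stage_seconds: Sequence[float]) -> float:
    """Stages run one after another (draft, then review): waits add up."""
    return sum(stage_seconds)


def parallel_latency(model_seconds: Sequence[float]) -> float:
    """Models run concurrently (side-by-side view): the slowest one dominates."""
    return max(model_seconds)


def total_cost(per_call_cost_units: Sequence[int]) -> int:
    """Every model call is billed, however the calls are scheduled."""
    return sum(per_call_cost_units)


# Invented placeholder figures: seconds per call and abstract cost units.
draft_seconds, review_seconds = 4.0, 3.0
draft_cost, review_cost = 2, 3

print("draft then review:", sequential_latency([draft_seconds, review_seconds]), "s")
print("side by side:", parallel_latency([draft_seconds, review_seconds]), "s")
print("cost either way:", total_cost([draft_cost, review_cost]), "units")
```

The point of the comparison is that a review pass always lengthens the wait, while a comparison view mainly raises the bill.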

Looking Ahead

The most important question is whether Microsoft turns these capabilities into something enterprises use every day, or whether they remain premium features for a narrower set of power users. The answer will likely depend on how well Microsoft abstracts the complexity of model choice and how consistently the tools improve output quality in real-world work. If the company gets that balance right, Copilot could become the central operating layer for office productivity in the AI era.

Microsoft will also need to keep proving that this multi-model strategy is not just a hedge, but a durable advantage. That means better reliability, clearer admin controls, and more obvious productivity gains in Word, Excel, Outlook, Teams, and Copilot Chat. The company has made a strong strategic statement; now it has to deliver the operational evidence.

- Watch for broader rollout timing across Microsoft 365 tenants.

- Monitor whether Claude access expands beyond Researcher and Frontier programs.

- Track adoption data for Copilot Cowork and other agentic workflows.

- Look for evidence that Critique measurably improves answer quality.

- Pay attention to pricing, licensing, and tier boundaries.

- Observe how Google, OpenAI, and Anthropic respond in enterprise suites.
