Microsoft is leaning into a strategy that would have sounded improbable not long ago: using one frontier AI model to scrutinize another. The company has now moved into a multi-model Copilot era, pairing OpenAI’s GPT and Anthropic’s Claude across selected Microsoft 365 experiences, with the stated goal of reducing hallucinations, improving answer quality, and giving enterprise users more control over which model does the work. That shift matters because it signals Microsoft’s confidence that model diversity is no longer a compromise — it is becoming a product feature. It also raises a bigger question for the AI market: if the best way to trust a model is to subject it to another model’s critique, what does that say about the current state of enterprise AI?

Microsoft’s newest Copilot direction is best understood as part of a broader model-diversification strategy that has accelerated through 2025 and into 2026. Earlier Copilot generations were closely associated with OpenAI’s GPT family, and that association shaped both the product’s identity and the public’s expectations. But Microsoft has increasingly emphasized that its AI stack is not a single-model dependency; it is a platform that can route tasks to whatever model is best suited for the job. The latest wave of updates, including Claude in Researcher and Copilot Cowork, makes that philosophy concrete.

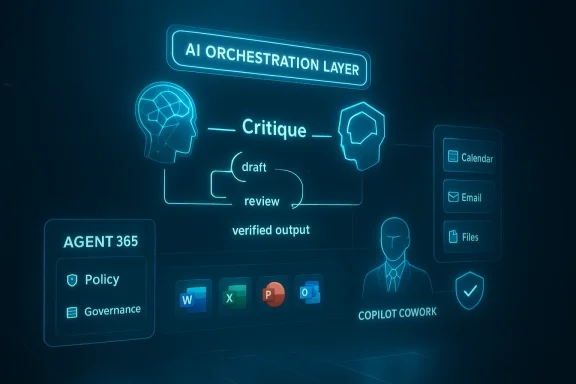

The key innovation described in the Reuters-linked report is the Critique workflow, in which GPT produces an initial draft and Claude evaluates it for factual mistakes, quality issues, and likely hallucinations. Microsoft has also reportedly introduced a model Council feature for side-by-side comparison of model outputs, which suggests the company is trying to operationalize model disagreement as a quality-control asset rather than a bug. That is a meaningful shift in how enterprise AI systems are presented: not as singular oracles, but as layered decision systems with internal checks and balances. The Reuters description aligns with Microsoft’s public posture around “intelligence + trust,” even though Microsoft’s own announcements have focused more broadly on multi-model choice than on a formal critique loop.

This is also happening against a backdrop of Microsoft’s Frontier program, which is designed to give early access to experimental Copilot capabilities. Microsoft has publicly said that Claude is now available in mainline Copilot chat through Frontier, and that Copilot Cowork is being tested with a limited set of customers before wider rollout. In other words, the company is not merely testing a new AI feature; it is testing a new operating model for AI product development, one that relies on rapid iteration, model pluralism, and enterprise governance.

Microsoft’s public documentation now shows that Claude has been introduced as a supported subprocessor in Microsoft 365 Copilot environments, and that its rollout is being handled gradually. The support page notes that Anthropic as a subprocessor is being introduced in phases and that full availability is expected by the end of March 2026. Microsoft also states that Claude can be selected in the Researcher agent during an active session, after which the system reverts to the default Microsoft 365 generative model. That points to a controlled, enterprise-first rollout rather than a blanket consumer launch.

There is also a strategic dimension here. Microsoft’s March 2026 blog posts describe Claude as part of a broader Frontier Suite, where the company wants to combine intelligence and trust in a way that supports “long-running, multi-step work” and “mainline chat” access to both Anthropic and OpenAI models. This is not just about having options. It is about building a product architecture where different models can play different roles: drafting, reasoning, checking, and coordinating.

Another important backdrop is the competitive reality inside Microsoft’s own ecosystem. Copilot has become an umbrella brand that spans chat, research, agentic workflows, and development tools. Microsoft has already been broadening model choice in Copilot Studio and other experiences, and the company has highlighted that it is increasingly using “the right model for the task” rather than insisting on one model family for everything. That messaging suggests Microsoft sees enterprise buyers as wanting risk-managed flexibility, not vendor purity.

Finally, the timing matters. By early 2026, the conversation around AI in the workplace had shifted from “can it do the task?” to “can it do the task repeatedly, safely, and at scale?” That is why a critique layer is so compelling. It reflects a maturing market in which trust is becoming a differentiator, not just model benchmark scores. That subtle change may be the biggest product story here.

This kind of arrangement has an obvious appeal in enterprise settings. A second model can catch inconsistent reasoning, missing caveats, and vague or unsupported claims before they reach a user who may act on them. It can also encourage answers that are more cautious and better structured, especially when the output will influence business decisions. The downside is that a critique system may sometimes become conservative, slower, or overly defensive if the reviewer model penalizes useful but uncertain inferences. That tradeoff is worth watching closely.

This also gives Microsoft room to optimize cost and workload routing. Not every task needs the same class of model, and not every user experience benefits from the same reasoning style. The company’s own marketing language emphasizes selecting the right model for the job, which is a practical way to reduce cost while improving fit. That can matter just as much as raw model quality in a product used by millions of knowledge workers.

In effect, Microsoft is teaching users to treat AI like a panel of advisors rather than a singular authority. That could be a healthier mental model for business work, where judgment matters and certainty is often overstated. Still, there is a danger that users will interpret disagreement between models as a sign of unreliability rather than as useful signal. The interface will matter enormously here.

That matters because the future of enterprise AI is increasingly agentic. The value proposition is no longer limited to chat. It is about delegating work that crosses files, apps, and time boundaries, while keeping the human in the loop. A tool that can research, draft, revise, and continue working is much more useful than one that only answers prompts in isolation.

A critique layer could help by checking for unsupported claims, missing qualifiers, or weak reasoning chains. But no verification system is perfect if the underlying source material is incomplete, contradictory, or stale. In enterprise contexts, the quality of grounding data and permission-aware retrieval still matter enormously. The critique model is a second line of defense, not a substitute for good data hygiene.

For OpenAI, the upside is that GPT remains deeply embedded in Microsoft’s most important productivity experiences. The downside is that GPT is no longer the only star of the show. For Anthropic, the upside is major enterprise distribution through Microsoft 365. The downside is that Claude becomes part of a broader platform strategy controlled by Microsoft, not an end-to-end consumer brand experience.

Another concern is governance fragmentation. Multi-model systems can create confusion about where data flows, which model handled what, and which policy applies at each step. Microsoft has worked to address this through its subprocessor framework and enterprise data protections, but the complexity is real, especially when different experiences use different models in different jurisdictions or tenant configurations.

It will also be worth watching how quickly Microsoft expands these capabilities beyond early-access and Researcher scenarios. The company has already suggested that Claude is available through Frontier and that Copilot Cowork is in preview, but broad adoption will depend on usability, regional readiness, and trust. The strongest AI products of 2026 may not be the ones with the flashiest demos, but the ones that quietly reduce friction and errors every day.

Source: Technobezz Microsoft pairs GPT with Claude to reduce AI hallucinations

Overview

Microsoft’s newest Copilot direction is best understood as part of a broader model-diversification strategy that has accelerated through 2025 and into 2026. Earlier Copilot generations were closely associated with OpenAI’s GPT family, and that association shaped both the product’s identity and the public’s expectations. But Microsoft has increasingly emphasized that its AI stack is not a single-model dependency; it is a platform that can route tasks to whatever model is best suited for the job. The latest wave of updates, including Claude in Researcher and Copilot Cowork, makes that philosophy concrete.

The key innovation described in the Reuters-linked report is the Critique workflow, in which GPT produces an initial draft and Claude evaluates it for factual mistakes, quality issues, and likely hallucinations. Microsoft has also reportedly introduced a model Council feature for side-by-side comparison of model outputs, which suggests the company is trying to operationalize model disagreement as a quality-control asset rather than a bug. That is a meaningful shift in how enterprise AI systems are presented: not as singular oracles, but as layered decision systems with internal checks and balances. The Reuters description aligns with Microsoft’s public posture around “intelligence + trust,” even though Microsoft’s own announcements have focused more broadly on multi-model choice than on a formal critique loop.

This is also happening against a backdrop of Microsoft’s Frontier program, which is designed to give early access to experimental Copilot capabilities. Microsoft has publicly said that Claude is now available in mainline Copilot chat through Frontier, and that Copilot Cowork is being tested with a limited set of customers before wider rollout. In other words, the company is not merely testing a new AI feature; it is testing a new operating model for AI product development, one that relies on rapid iteration, model pluralism, and enterprise governance.

Background

The story begins with a long-standing tension in AI product design: the more capable a model becomes, the more users expect it to be reliable, and the more visible its errors become when it is wrong. Hallucinations have remained one of the most stubborn shortcomings of large language models, especially in enterprise scenarios where the cost of a confident mistake can be significant. Microsoft has spent years trying to reduce that risk through grounding, retrieval, permissions-aware data access, and model tuning, but the emergence of multi-model evaluation suggests the company is also looking beyond single-model safeguards.

Microsoft’s public documentation now shows that Claude has been introduced as a supported subprocessor in Microsoft 365 Copilot environments, and that its rollout is being handled gradually. The support page notes that Anthropic as a subprocessor is being introduced in phases and that full availability is expected by the end of March 2026. Microsoft also states that Claude can be selected in the Researcher agent during an active session, after which the system reverts to the default Microsoft 365 generative model. That points to a controlled, enterprise-first rollout rather than a blanket consumer launch.
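The session-scoped model choice in Researcher behaves like a temporary override that is always undone when the session ends. The sketch below models that idea with a Python context manager. It is a hypothetical illustration, not Microsoft's code: the `Researcher` class, its `session` method, and the `DEFAULT_MODEL` label are invented placeholders.

```python
from contextlib import contextmanager
from typing import Iterator

# Hypothetical label for the tenant's default Microsoft 365 generative model.
DEFAULT_MODEL = "m365-default"


class Researcher:
    """Toy agent whose model choice lasts only for one active session."""

    def __init__(self) -> None:
        self.active_model = DEFAULT_MODEL

    @contextmanager
    def session(self, model: str = DEFAULT_MODEL) -> Iterator["Researcher"]:
        # Apply the user's selection for the duration of this session only.
        self.active_model = model
        try:
            yield self
        finally:
            # Revert to the default when the session ends, even after an error.
            self.active_model = DEFAULT_MODEL


agent = Researcher()
with agent.session("claude") as s:
    print(s.active_model)   # claude
print(agent.active_model)   # m365-default
```

The `finally` clause carries the design point: a selection cannot leak into the next session, which mirrors the revert-to-default behavior the support page describes.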

There is also a strategic dimension here. Microsoft’s March 2026 blog posts describe Claude as part of a broader Frontier Suite, where the company wants to combine intelligence and trust in a way that supports “long-running, multi-step work” and “mainline chat” access to both Anthropic and OpenAI models. This is not just about having options. It is about building a product architecture where different models can play different roles: drafting, reasoning, checking, and coordinating.

Another important backdrop is the competitive reality inside Microsoft’s own ecosystem. Copilot has become an umbrella brand that spans chat, research, agentic workflows, and development tools. Microsoft has already been broadening model choice in Copilot Studio and other experiences, and the company has highlighted that it is increasingly using “the right model for the task” rather than insisting on one model family for everything. That messaging suggests Microsoft sees enterprise buyers as wanting risk-managed flexibility, not vendor purity.

Finally, the timing matters. By early 2026, the conversation around AI in the workplace had shifted from “can it do the task?” to “can it do the task repeatedly, safely, and at scale?” That is why a critique layer is so compelling. It reflects a maturing market in which trust is becoming a differentiator, not just model benchmark scores. That subtle change may be the biggest product story here.

How the Critique Workflow Changes Copilot

The purported Critique feature is more than a cosmetic addition. If GPT drafts an answer and Claude reviews it before the user sees it, Microsoft is essentially creating an internal editorial pipeline for machine-generated work. That mirrors how good human organizations operate: draft, review, revise, and then publish. The important difference is that the review step is now automated and model-based, which could make quality control faster and more scalable.

This kind of arrangement has an obvious appeal in enterprise settings. A second model can catch inconsistent reasoning, missing caveats, and vague or unsupported claims before they reach a user who may act on them. It can also encourage answers that are more cautious and better structured, especially when the output will influence business decisions. The downside is that a critique system may sometimes become conservative, slower, or overly defensive if the reviewer model penalizes useful but uncertain inferences. That tradeoff is worth watching closely.
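The draft, review, revise loop can be sketched as a small Python pipeline. This is a hedged illustration, not Microsoft's implementation: `draft`, `critique`, and `revise` are hypothetical callables standing in for the drafting model (GPT in the reported setup) and the reviewer model (Claude), and the toy reviewer below only checks for a citation marker, which is far cruder than a model-based review.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class CritiqueResult:
    draft: str                                       # first output from the drafting model
    issues: List[str] = field(default_factory=list)  # everything the reviewer flagged
    final: str = ""                                  # text after revisions


def critique_pipeline(
    prompt: str,
    draft: Callable[[str], str],              # stand-in for the drafting model
    critique: Callable[[str], List[str]],     # stand-in for the reviewer model
    revise: Callable[[str, List[str]], str],  # applies the reviewer's feedback
    max_rounds: int = 2,
) -> CritiqueResult:
    text = draft(prompt)
    result = CritiqueResult(draft=text)
    for _ in range(max_rounds):
        issues = critique(text)
        if not issues:  # reviewer found nothing; stop early
            break
        result.issues.extend(issues)
        text = revise(text, issues)
    result.final = text
    return result


# Toy stand-ins so the sketch runs without any model API.
def toy_draft(prompt: str) -> str:
    return "Revenue grew 12% [source: Q3 report]. Margins will double next year."


def toy_critique(text: str) -> List[str]:
    # Flag sentences that cite no source and have not already been hedged.
    sentences = [s.strip() for s in text.split(".") if s.strip()]
    return [s for s in sentences if "[source:" not in s and "[unverified]" not in s]


def toy_revise(text: str, issues: List[str]) -> str:
    # Mark unsupported claims instead of silently deleting them.
    for claim in issues:
        text = text.replace(claim, claim + " [unverified]")
    return text


result = critique_pipeline("Summarize Q3", toy_draft, toy_critique, toy_revise)
print(result.final)
# Revenue grew 12% [source: Q3 report]. Margins will double next year [unverified].
```

`max_rounds` caps the extra latency of repeated review, and if the cap is reached the last revision goes out without a fresh check. That is one concrete reason a review pass acts as a filter rather than a guarantee.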

Why cross-model review matters

Cross-model review matters because different models tend to have different strengths, blind spots, and style preferences. One model may be better at generating a fluent draft, while another is better at spotting missing context or internal contradictions. In practice, the combination can improve answer quality even if neither model is perfect on its own.

- It can reduce obvious factual slips.

- It can improve answer structure and completeness.

- It can surface caveats that a single model would omit.

- It can make enterprise outputs feel more accountable.

- It can introduce latency if the review pass is heavy-handed.

Why Microsoft Is Diversifying Models

Microsoft’s embrace of Claude alongside GPT is not a rejection of OpenAI; it is a hedge against overdependence. For years, Microsoft’s AI ambitions were tightly tied to OpenAI’s frontier models, but enterprise buyers have increasingly asked for resilience, choice, and specialization. By making model choice visible and operational, Microsoft can reduce the risk that one model’s limitations become the product’s limitation.

This also gives Microsoft room to optimize cost and workload routing. Not every task needs the same class of model, and not every user experience benefits from the same reasoning style. The company’s own marketing language emphasizes selecting the right model for the job, which is a practical way to reduce cost while improving fit. That can matter just as much as raw model quality in a product used by millions of knowledge workers.
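"The right model for the job" can be sketched as a routing rule: pick the cheapest model whose capability meets the task's needs. The model names, capability tiers, relative costs, and task categories below are hypothetical placeholders, not Copilot's catalog or its actual routing logic.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ModelOption:
    name: str        # placeholder identifier, not a real product name
    capability: int  # rough tier: 1 = basic, 3 = frontier
    cost: float      # relative cost per request, illustrative units


# Hypothetical catalog and task requirements.
CATALOG: List[ModelOption] = [
    ModelOption("small-fast", capability=1, cost=1.0),
    ModelOption("general", capability=2, cost=4.0),
    ModelOption("frontier-reasoning", capability=3, cost=15.0),
]
REQUIRED_TIER: Dict[str, int] = {"summarize": 1, "draft": 2, "deep_research": 3}
DEFAULT_TIER = 2  # unknown task types get a mid-tier model


def route(task_type: str, catalog: List[ModelOption] = CATALOG) -> ModelOption:
    """Return the cheapest model whose capability meets the task's requirement."""
    need = REQUIRED_TIER.get(task_type, DEFAULT_TIER)
    eligible = [m for m in catalog if m.capability >= need]
    if not eligible:
        raise ValueError(f"no model in the catalog meets tier {need}")
    return min(eligible, key=lambda m: m.cost)


for task in ("summarize", "draft", "deep_research", "translate"):
    print(task, "->", route(task).name)
# summarize -> small-fast
# draft -> general
# deep_research -> frontier-reasoning
# translate -> general
```

Cheapest-adequate is only one possible policy; a production router could also weigh latency, data-residency rules, or quality feedback, none of which this sketch models.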

Enterprise vs. consumer implications

For enterprise customers, model diversity is attractive because it creates more levers for governance, compliance, and productivity. IT teams can think in terms of approved workloads, data boundaries, and role-specific AI behaviors. For consumers, the appeal is simpler: better answers and fewer embarrassing mistakes. The challenge is that consumer users may not see or understand the model plumbing, so Microsoft has to translate technical complexity into a straightforward experience.

- Enterprises want auditability and admin controls.

- Consumers want speed and accuracy.

- Both want fewer hallucinations.

- Both benefit when model selection is invisible unless needed.

- Both can be frustrated if choice becomes clutter.

Model Council and the Rise of Comparative AI

The reported model Council feature is especially interesting because it turns comparison into a first-class product behavior. Instead of forcing users to trust one model’s answer, Microsoft is apparently making it easier to compare outputs from multiple models side by side. That can help users spot inconsistencies, identify different reasoning paths, and choose the response that best matches the task.

In effect, Microsoft is teaching users to treat AI like a panel of advisors rather than a singular authority. That could be a healthier mental model for business work, where judgment matters and certainty is often overstated. Still, there is a danger that users will interpret disagreement between models as a sign of unreliability rather than as useful signal. The interface will matter enormously here.
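A side-by-side comparison can be sketched as sending one prompt to several models and flagging pairs whose answers diverge. This is a hypothetical illustration, not how the Council feature is reported to work: the model callables are stubs, and word-set (Jaccard) overlap is a deliberately crude stand-in for whatever semantic comparison a production system might use.

```python
from itertools import combinations
from typing import Callable, Dict, List, Tuple


def jaccard(a: str, b: str) -> float:
    """Word-set overlap: 1.0 for identical vocabularies, 0.0 for disjoint ones."""
    wa, wb = set(a.lower().split()), set(b.lower().split())
    if not wa and not wb:
        return 1.0
    return len(wa & wb) / len(wa | wb)


def council(
    prompt: str,
    models: Dict[str, Callable[[str], str]],
    threshold: float = 0.5,
) -> Tuple[Dict[str, str], List[Tuple[str, str, float]]]:
    """Ask every model the same prompt and flag pairs whose answers diverge."""
    answers = {name: ask(prompt) for name, ask in models.items()}
    flagged = []
    for x, y in combinations(sorted(answers), 2):
        score = jaccard(answers[x], answers[y])
        if score < threshold:
            flagged.append((x, y, round(score, 2)))
    return answers, flagged


# Stub "models" that return canned answers.
stub_models = {
    "model_a": lambda p: "The deadline is Friday",
    "model_b": lambda p: "The deadline is Friday",
    "model_c": lambda p: "No deadline was set",
}
answers, flagged = council("When is the deadline?", stub_models)
print(flagged)
# [('model_a', 'model_c', 0.14), ('model_b', 'model_c', 0.14)]
```

Returning every answer plus a short list of disagreements keeps a simple default (read any one answer) while still surfacing divergence, which is the interface balance the paragraph above calls for.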

Comparative interfaces and trust

A comparative interface can improve trust by exposing uncertainty, but it can also overwhelm users with too many options. If model Council becomes a dashboard of competing drafts, some users may gain confidence while others lose it. The design challenge is to make comparison informative rather than paralyzing.

- Clear differences can help users decide faster.

- Hidden model variance can reduce trust.

- Too many choices can create decision fatigue.

- Simple defaults are still important.

- Transparent labeling may be more valuable than raw model names.

Copilot Cowork and the Agentic Future

The rollout of Copilot Cowork is just as important as the model-comparison story because it shows where Microsoft thinks AI work is heading. Rather than merely generating a single response, Copilot Cowork is designed to handle long-running, multi-step tasks that unfold over time. Microsoft says it is being built in close collaboration with Anthropic and is meant to bring Claude Cowork-style capabilities into Microsoft 365 Copilot.

That matters because the future of enterprise AI is increasingly agentic. The value proposition is no longer limited to chat. It is about delegating work that crosses files, apps, and time boundaries, while keeping the human in the loop. A tool that can research, draft, revise, and continue working is much more useful than one that only answers prompts in isolation.

From prompts to delegated work

Copilot Cowork appears to reflect Microsoft’s belief that work should be broken into visible steps rather than hidden inside a single opaque response. That is a meaningful enterprise design choice. It lets users steer the work while it is happening, which should improve confidence and reduce unpleasant surprises.

- Tasks can span minutes or hours.

- Progress can be reviewed while work is underway.

- Users can intervene instead of starting over.

- Enterprise controls remain central.

- Multi-step execution becomes part of the UX.
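Step-wise execution with review points can be sketched as a loop that pauses after every step, lets the user edit or stop, and keeps finished work so nothing has to start over. This is a minimal, hypothetical illustration of the pattern described above, not Copilot Cowork's implementation; `TaskRun`, `run_with_checkpoints`, and the step names are invented.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class TaskRun:
    steps: List[str]                                    # the plan, visible up front
    completed: List[str] = field(default_factory=list)  # accepted outputs so far
    stopped: bool = False                               # True while paused by the user


def run_with_checkpoints(
    run: TaskRun,
    execute: Callable[[str], str],
    checkpoint: Callable[[str, str], Optional[str]],
) -> TaskRun:
    """Run the remaining steps one at a time, pausing for review after each.

    checkpoint(step, output) returns the output (possibly edited) to accept it,
    or None to pause; finished steps are kept and the paused step reruns on resume.
    """
    run.stopped = False                          # resuming clears a previous pause
    for step in run.steps[len(run.completed):]:  # skip work that is already done
        output = execute(step)
        reviewed = checkpoint(step, output)
        if reviewed is None:
            run.stopped = True
            break
        run.completed.append(reviewed)
    return run


def do_step(step: str) -> str:
    return f"done: {step}"           # placeholder for real agent work


def cautious_user(step: str, output: str) -> Optional[str]:
    if step == "draft memo":
        return output + " (edited)"  # user corrects the work mid-run
    if step == "format slides":
        return None                  # user pauses before the last step
    return output


run = TaskRun(["gather sources", "draft memo", "format slides"])
run = run_with_checkpoints(run, do_step, cautious_user)
print(run.completed, run.stopped)
# ['done: gather sources', 'done: draft memo (edited)'] True

run = run_with_checkpoints(run, do_step, lambda step, out: out)  # resume, approve all
print(len(run.completed), run.stopped)
# 3 False
```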

Hallucinations, Accuracy, and the Reality Check

Reducing hallucinations is the obvious headline, but the deeper issue is whether Microsoft can make AI outputs operationally trustworthy. Hallucination reduction is not just about fewer false statements. It is about ensuring that a response is appropriate, grounded, and actionable in the context of work. A model can be technically fluent and still be a poor enterprise assistant if it is too confident or too vague.

A critique layer could help by checking for unsupported claims, missing qualifiers, or weak reasoning chains. But no verification system is perfect if the underlying source material is incomplete, contradictory, or stale. In enterprise contexts, the quality of grounding data and permission-aware retrieval still matter enormously. The critique model is a second line of defense, not a substitute for good data hygiene.

The limits of model-to-model verification

Model-to-model verification can catch some mistakes, but it can also miss errors that both models share. That is especially true if the models were trained on overlapping corpora or if the critique prompt is too similar to the original task. Microsoft therefore needs to avoid presenting Critique as a magical fix. It is better understood as an incremental quality layer.

- Shared blind spots can survive review.

- Confidence can be mistaken for correctness.

- Errors in source data can still leak through.

- Domain-specific prompts may need specialized checking.

- Human oversight remains important for high-stakes tasks.
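A back-of-the-envelope model shows why shared blind spots matter. Assume, purely for illustration, that the drafting model errs with probability `p_error`, that the reviewer catches a fraction `catch_rate` of the errors it is able to see, and that a fraction `shared_fraction` of errors sit in a blind spot both models share. These parameters, and the simple formula that combines them, are assumptions introduced here, not figures from Microsoft or the report.

```python
def residual_error_rate(p_error: float, catch_rate: float, shared_fraction: float) -> float:
    """Fraction of answers still wrong after one review pass (illustrative model).

    Errors in a shared blind spot always survive; the rest survive only
    when the reviewer misses them:
        residual = p_error * (shared + (1 - shared) * (1 - catch_rate))
    """
    for name, value in (("p_error", p_error), ("catch_rate", catch_rate),
                        ("shared_fraction", shared_fraction)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return p_error * (shared_fraction + (1.0 - shared_fraction) * (1.0 - catch_rate))


# Hypothetical inputs: 10% of drafts contain an error, reviewer catches 80% of visible errors.
for shared in (0.0, 0.5):
    print(shared, round(residual_error_rate(0.10, 0.80, shared), 3))
# 0.0 0.02
# 0.5 0.06

# Even a perfect reviewer cannot go below p_error * shared_fraction.
print(round(residual_error_rate(0.10, 1.0, 0.5), 3))
# 0.05
```

The floor term `p_error * shared_fraction` is the quantitative form of "shared blind spots can survive review": improving the reviewer alone cannot remove it.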

The Competitive Landscape

Microsoft’s move puts pressure on rival AI platforms in a subtle but significant way. Rather than forcing a winner-take-all model strategy, Microsoft is turning model interoperability into a selling point. That means competitors now have to explain not only why their model is better, but why users should care if another model is available alongside it.

For OpenAI, the upside is that GPT remains deeply embedded in Microsoft’s most important productivity experiences. The downside is that GPT is no longer the only star of the show. For Anthropic, the upside is major enterprise distribution through Microsoft 365. The downside is that Claude becomes part of a broader platform strategy controlled by Microsoft, not an end-to-end consumer brand experience.

What rivals may do next

Competitors will likely respond by emphasizing specialization, deeper vertical integrations, or their own trust and safety claims. Some may lean into exclusive agents, while others may stress superior coding, research, or document workflows. The bigger market trend is that AI vendors are no longer competing just on benchmark bragging rights; they are competing on orchestration, governance, and workflow fit.

- More vendors will pitch multi-model support.

- Enterprise platforms will emphasize governance.

- Consumers may see more “best model for the task” routing.

- Quality control features will become product differentiators.

- AI trust may become as important as AI capability.

Strengths and Opportunities

Microsoft’s approach has several clear strengths. It aligns with enterprise expectations, it gives product teams more flexibility, and it reflects a more realistic understanding of AI limitations. Perhaps most importantly, it turns model diversity into a measurable product capability instead of a procurement footnote. That creates room for better workflows and, potentially, better user outcomes.

- Better accuracy through cross-model checking.

- More enterprise trust via governance-friendly design.

- Higher flexibility in routing tasks to different models.

- Improved productivity with multi-step agentic workflows.

- Stronger differentiation versus single-model AI tools.

- Reduced vendor lock-in in the product layer.

- More useful comparisons for complex tasks.

Risks and Concerns

The biggest risk is that model orchestration becomes too complex for ordinary users and too opaque for administrators. If Microsoft adds layers of drafting, critique, comparison, and agentic execution without clear controls, the experience could feel slower rather than smarter. There is also the risk that users will assume the presence of a reviewer model means answers are verified when they are only filtered.

Another concern is governance fragmentation. Multi-model systems can create confusion about where data flows, which model handled what, and which policy applies at each step. Microsoft has worked to address this through its subprocessor framework and enterprise data protections, but the complexity is real, especially when different experiences use different models in different jurisdictions or tenant configurations.
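One way to keep "which model handled what" answerable is to log a provenance record for every step of a multi-model request. The sketch below is hypothetical: the record fields (notably `region` and `policy`) and all sample values are assumptions chosen for illustration, not Microsoft's subprocessor or audit schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass(frozen=True)
class StepRecord:
    request_id: str
    step: str      # e.g. "draft", "critique", "revise"
    model: str     # placeholder model label
    provider: str  # organization whose model processed this step
    region: str    # where processing happened (assumed field)
    policy: str    # tenant policy in force for this step (assumed field)


def providers_for(request_id: str, log: List[StepRecord]) -> List[str]:
    """Every provider that touched a request, in first-seen order."""
    seen: List[str] = []
    for record in log:
        if record.request_id == request_id and record.provider not in seen:
            seen.append(record.provider)
    return seen


# Hypothetical log for one request that went through a draft-critique-revise flow.
log = [
    StepRecord("req-1", "draft", "gpt", "OpenAI", "eu", "policy-a"),
    StepRecord("req-1", "critique", "claude", "Anthropic", "us", "policy-a"),
    StepRecord("req-1", "revise", "gpt", "OpenAI", "eu", "policy-a"),
]
print(providers_for("req-1", log))
# ['OpenAI', 'Anthropic']
print(json.dumps(asdict(log[1])))
# {"request_id": "req-1", "step": "critique", "model": "claude", "provider": "Anthropic", "region": "us", "policy": "policy-a"}
```

In this made-up log the critique step runs in a different region from the draft, which is exactly the kind of cross-jurisdiction hop that makes multi-model governance harder to explain without a per-step record.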

Operational and reputational risks

The more Microsoft markets AI as trustworthy, the more visible any failure becomes. A high-profile error in a critique-validated response could undermine confidence faster than a simple chatbot mistake, because users will feel the system was supposed to catch it. That expectation gap is dangerous.

- Review layers can create false confidence.

- Latency may increase with extra checks.

- Admin policies may be difficult to explain.

- Regional compliance differences can complicate rollout.

- Model disagreement may confuse users.

- Enterprise trust can erode quickly after visible failures.

Looking Ahead

The next phase will depend on whether Microsoft can prove that multi-model quality control improves real-world outcomes. Public messaging is one thing; enterprise performance in day-to-day workflows is another. If the company can demonstrate better research, cleaner drafting, fewer factual slips, and smoother agent execution, the Critique approach could become a blueprint for the next generation of workplace AI.

It will also be worth watching how quickly Microsoft expands these capabilities beyond early-access and Researcher scenarios. The company has already suggested that Claude is available through Frontier and that Copilot Cowork is in preview, but broad adoption will depend on usability, regional readiness, and trust. The strongest AI products of 2026 may not be the ones with the flashiest demos, but the ones that quietly reduce friction and errors every day.

Key signals to watch

- Whether Critique becomes a standard Copilot feature or remains limited.

- Whether model Council improves user decision-making or adds clutter.

- How quickly Copilot Cowork expands beyond preview.

- Whether Microsoft publishes measurable accuracy or satisfaction gains.

- How enterprises respond to multi-model governance and compliance.

- Whether rivals copy the multi-model pattern or resist it.
