Microsoft’s Copilot — the assistant Microsoft bet would make the company “AI‑first” — is no longer just an engineering experiment: it is now a strategic linchpin that is showing cracks in reliability, brand clarity, and measurable enterprise adoption, and those cracks are starting to matter to customers and investors alike.

Source: Hindustan Times Microsoft’s pivotal AI product is running into big problems

Background and overview

Microsoft introduced Copilot as a family of AI assistants woven into Windows, Microsoft 365, GitHub and browser surfaces with a clear commercial objective: make AI a productivity layer that is unavoidable for knowledge workers and therefore a new, recurring revenue stream. The product family includes enterprise-facing Microsoft 365 Copilot, developer-focused GitHub Copilot, and consumer chat experiences exposed through Edge and the Copilot app. That breadth is a strategic strength (the potential at-scale integration with Office and Windows is unique), but it also produces a product portfolio that has confused customers and internal teams.

Satya Nadella positioned Copilot as a center-stage initiative in Microsoft’s AI transformation. Executives and product leads have repeatedly framed Copilot as essential to the company’s next era of growth. But an expanding list of operational incidents, user complaints, and adoption metrics suggests the program is still in the painful phase between spectacle and substance, a transition Nadella himself warned about in public remarks.

What the numbers actually say

Microsoft has disclosed several headline figures that are often repeated in investor briefings and press reports:

- Microsoft 365 has an installed base of more than 450 million commercial paid seats, and Microsoft reported 15 million paid Microsoft 365 Copilot seats sold. That translates to roughly 3% of the Microsoft 365 commercial base being on a paid Copilot seat.

- Microsoft told the market it has grown Copilot usage to 150 million monthly active users across consumer and commercial surfaces, a number the company has highlighted when discussing ecosystem momentum. That figure is a consolidation of many Copilot touchpoints rather than a clean “paid commercial users” metric.

- Public comparisons used by journalists and analysts put Copilot well behind rivals on consumer usage: Google's Gemini app has been reported at hundreds of millions of monthly users (estimates vary, with a commonly cited figure in the mid-hundreds of millions), and ChatGPT has been reported with hundreds of millions of weekly active users, depending on the metric and time window. Different outlets quote Gemini at 400 million monthly active users (MAU) in mid-2025, and ChatGPT’s weekly counts have been reported in the high hundreds of millions at various points through 2025. These are not apples-to-apples metrics: some reports use MAU, some use weekly active users (WAU), some conflate web and mobile, and the time frames differ.

- Independent market research cited in coverage indicates an erosion in the share of Copilot subscribers who prefer Copilot as their primary AI tool: one survey showed a drop from 18.8% (July) to 11.5% (late January) choosing Copilot as the primary option, while Google’s Gemini rose in the same window. This decline is a red flag for product stickiness if it holds across broader samples. The same reporting traces the survey to Recon Analytics and a large U.S. respondent pool.
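The ratios behind these figures can be checked with a few lines of arithmetic. The sketch below uses only the numbers quoted above; the variable and function names are illustrative, and because the seat base is given as "more than 450 million", the penetration it computes should be read as an upper bound.

```python
# Figures as quoted in the text; names are illustrative, not official metrics.
COMMERCIAL_SEATS = 450_000_000    # Microsoft 365 commercial paid seats (stated as "more than")
PAID_COPILOT_SEATS = 15_000_000   # paid Microsoft 365 Copilot seats


def share(part: float, whole: float) -> float:
    """Return part/whole expressed as a percentage."""
    return 100.0 * part / whole


# Paid-seat penetration of the commercial base.
seat_penetration = share(PAID_COPILOT_SEATS, COMMERCIAL_SEATS)

# Survey: share of Copilot subscribers naming Copilot as their primary AI tool.
primary_july = 18.8
primary_january = 11.5
point_drop = primary_july - primary_january      # drop in percentage points
relative_drop = share(point_drop, primary_july)  # drop relative to the July level

print(f"Paid-seat penetration: {seat_penetration:.1f}%")
print(f"Primary-use drop: {point_drop:.1f} points ({relative_drop:.1f}% relative)")
```

The distinction between the two survey measures matters: a 7.3-point drop sounds small, but relative to the July level it means well over a third of the subscribers who once preferred Copilot no longer name it first.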

Where the product is failing: interoperability, branding and UX

Microsoft’s Copilot experience suffers from three linked product problems: confusing branding across multiple Copilot products, inconsistent interoperability between surfaces, and noisy or intrusive UX patterns that create friction rather than help.

Fragmented identity and product family confusion

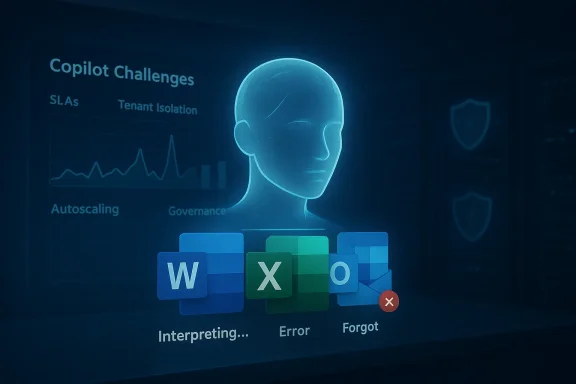

Microsoft sells multiple “Copilots” (enterprise Copilot for Microsoft 365, GitHub Copilot for coding, developer/IT Copilots, plus a consumer-facing chat product), and Microsoft’s internal structure divides ownership across teams. This fragmentation has led to inconsistent naming, differing feature sets, and unclear upgrade pathways for customers who want to move from trial to paid, or from consumer to enterprise tiers. The result is buyer confusion at scale: purchasing teams, IT administrators, and end users are often uncertain which Copilot product they are using and what privileges or limitations apply.

Interoperability and integration gaps

Even where Copilot is installed, the assistant can behave differently across apps. Customer and employee reports point to inconsistent results when Copilot is asked to take the same action in Word vs. Outlook vs. Teams; automation and agent flows can fail at integration boundaries. Those gaps weaken the primary Microsoft advantage, contextual help leveraging corporate data and identity, because a “trusted” assistant must deliver consistent behavior across the suite.

UX intrusiveness and regressions

Attempts to make Copilot visible (Copilot buttons throughout the UI, inline prompts, and aggressive placement in lightweight apps) produced backlash from users and administrators who view some UIs as clutter or hard sells. Features intended to be helpful became a source of irritation when they did not reliably add value. In several builds, Microsoft has paused or reworked certain Copilot placements after user pushback. Those product oscillations themselves erode confidence among enterprise buyers who need predictability.

Reliability and operational risk: outages and autoscaling

A telling episode came in December when Copilot experienced a regionally concentrated outage that Microsoft logged as incident CP1193544; users across the United Kingdom and parts of Europe reported failing responses and timeouts inside apps including Word, Excel and Teams. Microsoft’s incident notes cited an unexpected surge in request traffic and autoscaling / load-balancing pressures as the proximate causes. Those operational events demonstrate that making a synchronous, integrated assistant dependable at global scale is a different engineering problem than running a stand-alone chatbot.

Outages like CP1193544 matter because Copilot is embedded directly into workflows. When the assistant times out or returns a fallback “Sorry, I wasn’t able to respond” message, the user experience isn’t merely degraded: automated flows and Copilot-driven document actions can fail outright. That increases support load for IT teams and creates real business risk for teams that have already allowed Copilot to operate in production processes.
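One way an automated flow might protect itself against this failure mode is to bound every assistant call with a deadline and to treat a timeout or a fallback message as an explicit failure rather than as content to pass downstream. The following is a minimal sketch under stated assumptions: `guarded_call`, `AssistantResult`, and the stub functions are hypothetical names invented for illustration, not part of any Microsoft API, and the fallback string is simply the message quoted above.

```python
import concurrent.futures as cf
import time
from dataclasses import dataclass

# Fallback text as quoted in the article; matching on it is an assumption.
FALLBACK_TEXT = "Sorry, I wasn't able to respond"


@dataclass
class AssistantResult:
    ok: bool
    text: str


def guarded_call(fn, prompt: str, timeout_s: float) -> AssistantResult:
    """Run fn(prompt) with a deadline; report timeouts and fallbacks as failures."""
    pool = cf.ThreadPoolExecutor(max_workers=1)
    future = pool.submit(fn, prompt)
    try:
        text = future.result(timeout=timeout_s)
    except cf.TimeoutError:
        return AssistantResult(ok=False, text="timed out")
    finally:
        # Do not block the caller on a slow worker thread.
        pool.shutdown(wait=False, cancel_futures=True)
    if text.startswith(FALLBACK_TEXT):
        return AssistantResult(ok=False, text=text)
    return AssistantResult(ok=True, text=text)


# Hypothetical stand-ins for a real assistant call.
def fast_stub(prompt: str) -> str:
    return f"Summary of: {prompt}"


def slow_stub(prompt: str) -> str:
    time.sleep(0.5)
    return "late answer"


def fallback_stub(prompt: str) -> str:
    return FALLBACK_TEXT


if __name__ == "__main__":
    for stub in (fast_stub, slow_stub, fallback_stub):
        print(stub.__name__, guarded_call(stub, "Q3 report", timeout_s=0.1))
```

A caller that checks `ok` before writing to a document or triggering the next step degrades to "no action taken" instead of propagating a broken result, which is the difference between a degraded experience and an outright failed workflow.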

Trust, privacy and the Recall backlash

Copilot’s ambition reaches beyond chat: Microsoft experimented with features such as Windows Recall (a timeline of indexed on-screen content) and tighter on-device/in-cloud hybrid models. Recall and similar features raised privacy and attack-surface concerns among security researchers and enterprise customers. Even after Microsoft moved to opt-in defaults and stronger gating mechanisms, the controversy left a residue of scepticism that is difficult to repair. Microsoft’s experience underlines a simple lesson: high-visibility features that touch private or sensitive content require ironclad governance and clear default-off controls.

Internal employee frustration and public criticism amplified the signal. Engineers, admins and power users described certain Copilot placements and behaviors as intrusive or unreliable; some internal voices even characterised recent changes as “gimmicky,” reflecting a gap between the internal vision and user realities. That employee feedback is not trivial: dissatisfaction among the people building the product signals a risk to execution quality if leadership does not quickly align product goals and deliverables.

Competition and mindshare: why user preference matters

Copilot competes in two different markets at once: enterprise productivity and consumer chat/search. In consumer mindshare, ChatGPT and Google’s Gemini dominate public perception and discovery surfaces. In enterprise contexts, Microsoft’s integration advantage is meaningful; the problem is converting that advantage into routine, mission-critical usage.

A recent independent survey cited in reporting shows Copilot losing ground as a first choice among its own subscriber base while Gemini’s share rose modestly in the same period. That dynamic implies that trial and surface visibility do not automatically translate into loyalty or preference. If customers opt for alternative assistants at the point of primary use, Microsoft risks losing the downstream moments (workflows, automation, templates) that create stickiness and willingness to pay.

Financial and strategic stakes

Investors reacted to the mixed signals. Microsoft’s quarterly results and the company’s disclosure of heavy AI investment have prompted questions about near-term returns and Azure’s growth trajectory. Analysts and commentators flagged worries that Microsoft might be diverting compute from higher-margin Azure workloads to support Copilot’s performance and scaling needs, and that the payoff for Copilot could be distant given current adoption rates. Market reactions following earnings illustrate that narrative risk is real: AI enthusiasm is now being judged by concrete monetization and reliability metrics, not just product roadmaps.

The commercial math is straightforward: embedding Copilot widely increases the addressable market for paid seats and agent services, but the operational costs are large (infrastructure, GPUs, software engineering) and the conversion from free users to paid Copilot seats is currently modest. Until seat penetration increases materially or Copilot enables high-margin add-ons, Microsoft must demonstrate that its huge capital expenditures on AI infrastructure will produce durable revenue.

Technical anatomy: why Copilot is hard to ship

Delivering a reliable, integrated assistant is harder than operating a consumer chatbot because you must solve multiple engineering challenges at once:

- Identity and tenant isolation: enterprise Copilot requires strict separation of company data and identity flows so that outputs do not leak across tenants. That increases complexity in the control plane and in API design.

- Synchronous inference at scale: features like document edits or meeting summarization are synchronous and user-facing; they cannot be deferred. That forces aggressive autoscaling and operational safeguards that must work across region and edge layers. Outages due to autoscaling stress expose how brittle a synchronous architecture can be if not engineered for rare spikes.

- Model sourcing and governance: Microsoft has chosen to use the best external models available (OpenAI, Anthropic) while simultaneously investing in its own models. That hybrid approach complicates model governance, explainability, and SLAs: enterprises want auditable provenance and consistent quality guarantees that hold across model backends.

- UX automation reliability: when Copilot is asked to “do” things in the UI (change settings, update documents), it must interact reliably with a moving target that includes permissions and third-party integrations. Automation failures are highly visible and quickly erode trust.
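To make the tenant-isolation point concrete, here is a deliberately simplified sketch of a retrieval index that partitions documents by tenant, so a query issued on behalf of one tenant never scans another tenant's data. It is not a description of how Copilot is built: `Document`, `TenantScopedIndex`, and the tenant names are hypothetical, and a production system would also need authenticated identity, access control lists, and auditing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    tenant_id: str
    doc_id: str
    text: str


class TenantScopedIndex:
    """Toy in-memory index with one partition per tenant (illustrative only)."""

    def __init__(self) -> None:
        self._partitions: dict[str, list[Document]] = {}

    def add(self, doc: Document) -> None:
        self._partitions.setdefault(doc.tenant_id, []).append(doc)

    def search(self, caller_tenant: str, query: str) -> list[Document]:
        # Only the caller's own partition is ever read, so a match in
        # another tenant's data cannot leak into the results.
        needle = query.lower()
        own_docs = self._partitions.get(caller_tenant, [])
        return [d for d in own_docs if needle in d.text.lower()]


index = TenantScopedIndex()
index.add(Document("contoso", "c-1", "Contoso budget forecast"))
index.add(Document("fabrikam", "f-1", "Fabrikam budget review"))

contoso_hits = index.search("contoso", "budget")
fabrikam_hits = index.search("fabrikam", "budget")
unknown_hits = index.search("unknown-tenant", "budget")
print([d.doc_id for d in contoso_hits], [d.doc_id for d in fabrikam_hits], unknown_hits)
```

The design choice worth noting is that the tenant is a required argument of `search` rather than an optional filter: isolation that depends on callers remembering to pass a filter tends to fail at exactly the integration boundaries described above.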

What Microsoft should do — practical, tactical fixes

Microsoft has the assets to recover but must prioritize surgical work over brand amplification. The following are pragmatic and actionable.

- Fix the operational baseline first.

  - Publish clear, regionally segmented SLAs for synchronous Copilot features and make autoscaling behaviours observable to large customers.

  - Harden the control plane to reduce single-point failures and automate more of the scaling steps that were handled manually during recent incidents.

- Simplify product identity and buyer journeys.

  - Consolidate Copilot naming and provide simple, side-by-side feature comparison tables so administrators and procurement teams understand what they get at each tier. Avoid multiple, overlapping “Copilot” brands that create buyer confusion.

- Make governance and privacy airtight and user-visible.

  - Default to opt-in for high-sensitivity features (like screen indexing), publish independent audits of tenant isolation, and ship admin tooling that enables rapid attestation and testing of governance controls.

- Prioritize reliability over new features.

  - Delay glossy consumer marketing until measurable improvements in enterprise stability and primary-use preference metrics are visible. Brand campaigns are expensive; fixing the product is more valuable to adoption in the medium term.

- Offer staged enterprise adoption patterns.

  - Encourage customers to pilot Copilot in read-only advisory modes first, require human review for agent actions in high-risk areas, and provide pattern libraries that show validated, low-risk automation templates. This reduces surprise exposure and builds operational muscle in customers.
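The staged adoption pattern in the last recommendation can be expressed as a small policy gate. The sketch below is one hypothetical way a customer might encode it, not a Microsoft feature: the `Mode` values, the `HIGH_RISK_AREAS` set, and the `decide` function are all assumptions made for illustration.

```python
from enum import Enum


class Mode(Enum):
    ADVISORY = "advisory"  # read-only: the assistant may only suggest
    AGENT = "agent"        # the assistant may carry out actions


# Hypothetical examples of areas a customer might classify as high risk.
HIGH_RISK_AREAS = {"finance", "hr", "legal"}


def decide(mode: Mode, area: str, human_approved: bool = False) -> str:
    """Return what the assistant is allowed to do for a requested action."""
    if mode is Mode.ADVISORY:
        return "suggest_only"      # pilot phase: never execute
    if area in HIGH_RISK_AREAS and not human_approved:
        return "needs_review"      # agent actions in risky areas wait for a human
    return "execute"


print(decide(Mode.ADVISORY, "finance"))                   # suggest_only
print(decide(Mode.AGENT, "finance"))                      # needs_review
print(decide(Mode.AGENT, "finance", human_approved=True)) # execute
print(decide(Mode.AGENT, "marketing"))                    # execute
```

Starting every deployment in `ADVISORY` and widening to `AGENT` area by area gives administrators a record of where the assistant's suggestions were reliable before any action is automated.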

Risks Microsoft faces if it fails to course-correct

- Eroding trust: repeated outages, hallucinations, and intrusive UX risk creating a durable trust deficit that cannot be fixed by model upgrades alone. Enterprises prefer predictable, auditable tools.

- Wasted capital: Microsoft’s investment in AI infrastructure is immense; failing to convert usage into sustainable, monetizable features will pressure margins and investor patience.

- Competitive displacement: rivals that capture first-choice status with users (whether via superior UX, better free tiers, or clearer consumer value) will cost Microsoft the critical touchpoints where long-term monetization happens. Surveys showing a drop in Copilot’s share of “first choice” are an early warning sign.

- Regulatory and privacy pushback: features that index user content or act proactively will attract more regulatory scrutiny. Missteps at scale could trigger enforcement actions or procurement bans in sensitive industries.

A balanced verdict

Copilot is one of Microsoft’s most consequential bets: it leverages unrivalled distribution inside Office and Windows and has the potential to create a new monetization layer across tens of millions of seats. Those structural advantages are real and durable. Yet the product is not yet delivering consistent, sticky value at scale. Adoption metrics hide important nuance (paid seat penetration is small; active user counts aggregate heterogeneous surfaces), and independent surveys suggest preference and primary-use share are not guaranteed. Operational incidents and UX friction add a credibility tax that Microsoft must pay down with engineering rigor, clearer product design, and stronger governance.

Microsoft can still win this narrative, since it has the platform, engineering resources, and enterprise relationships, but the path ahead is a marathon of repair and incremental rebuilding rather than a sprint of flashy ads and celebrity placements. The strategic prize is large, but success will come to teams that prioritize reliability, governance, and real day-to-day utility over novelty.

In short: Copilot is strategically indispensable to Microsoft’s AI ambitions, but the product’s current state exposes a fundamental product‑management dilemma — ubiquity without predictable value becomes liability. Fix the basics, prove reliability and governance, and only then scale the spectacle. Until that sequence is executed and validated in the field, enterprises and investors will reasonably ask for proof that Copilot is a productivity multiplier rather than a costly experiment.
