Microsoft is pushing Microsoft 365 Copilot into a new phase where trust, verification, and orchestration matter as much as raw model quality. The company’s latest Wave 3 messaging adds Copilot Cowork for long-running work, expands its model-diverse strategy, and leans harder into the idea that enterprise AI should be reviewed, governed, and compared before it is trusted. That broader direction is consistent with Microsoft’s March 2026 Frontier announcements, which said Cowork would enter research preview and be made available through the Frontier program, while Microsoft also emphasized that its Copilot stack is increasingly built around model choice and enterprise controls.

Source: The Tech Portal Microsoft rolls out multi-model AI feature 'Critique' along with 'Model Council' tool to expand Copilot capabilities - The Tech Portal

Background

Microsoft’s Copilot story has evolved from a chat layer into a platform strategy. What began as a productivity add-on for Word, Excel, Outlook, Teams, and the rest of Microsoft 365 is now being recast as an agentic operating layer that can reason across apps, coordinate workflows, and, in some cases, keep working over minutes or hours rather than a single prompt-response cycle. Microsoft’s own March 9, 2026 announcements framed this as “Frontier Transformation,” with intelligence and trust as the twin pillars of the next phase of workplace AI.

That shift did not happen in isolation. Over the past year, Microsoft has steadily broadened Copilot’s model options, including public references to Anthropic in Copilot Studio and more explicit model choice in enterprise settings. Microsoft’s Copilot blog said Copilot Studio would keep OpenAI as the default for new agents while also allowing Anthropic models, and Microsoft 365 materials stressed that the company was moving toward multi-model intelligence rather than a single-vendor approach.

The same arc shows up in Microsoft’s agent tooling. At Build 2025, Microsoft announced multi-agent orchestration, bringing multiple agents together with human oversight, and positioned Copilot Studio as the place where organizations could bring their own models and manage increasingly complex workflows. That was an early signal that Microsoft was not merely adding features to Copilot; it was building a workflow platform for enterprise AI.

Against that backdrop, the Tech Portal’s description of Critique, Model Council, and Copilot Cowork reads as the logical continuation of Microsoft’s public roadmap. Even if some of the article’s feature naming appears more productized than Microsoft’s own phrasing, the underlying themes are visible in Microsoft’s official messaging: model diversity, agent governance, long-running tasks, and the need to make AI outputs more trustworthy before they are allowed into everyday work.

One of the most important context points is that enterprise AI buyers are no longer dazzled by demos alone. They want systems that are observable, auditable, and less likely to hallucinate under pressure. Microsoft’s recent announcements repeatedly return to that premise, presenting Copilot not just as a clever assistant but as a controlled environment where enterprises can review progress, govern access, and choose the right model for the job.

What Microsoft Is Really Changing

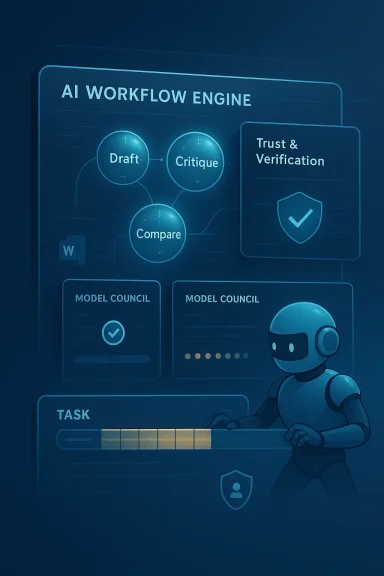

The most visible change in this wave is not any single feature. It is the move from a one-shot assistant to a multi-step orchestration layer. In practical terms, Microsoft is signaling that different AI models can play different roles inside one workflow: one model drafts, another critiques, another compares, and a separate agent may execute tasks across Microsoft 365. That is a meaningful architectural shift because it treats AI less like a chatbot and more like a managed production system.

From answer engine to workflow engine

That transition matters because the value of AI in the enterprise is no longer measured only by how fluent it sounds. It is measured by how much work it can safely absorb from a human. Microsoft’s official Frontier language explicitly says Cowork can break down complex requests, reason across tools and files, and carry work forward with visible progress and opportunities to steer. That is a far cry from a static “write me a draft” experience.

This is also why multi-model logic is becoming central. The best model for drafting is not always the best model for reviewing. The best model for planning is not always the best model for execution. Microsoft appears to be betting that the enterprise market will reward a platform that can route each job to the right system, even if the user never sees the handoff.
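The routing idea can be illustrated with a small sketch. Everything in it is hypothetical: the three model functions are stand-ins that only tag their input, and `ROUTES` and `route` are names invented for illustration, not part of any Microsoft API. The point is only that the caller names a kind of job and never sees which backend handled it.

```python
from typing import Callable, Dict

# Hypothetical stand-ins for model backends. A real system would call
# model APIs here; these only tag the prompt so the routing is visible.
def drafting_model(prompt: str) -> str:
    return f"[draft] {prompt}"

def reviewing_model(prompt: str) -> str:
    return f"[review] {prompt}"

def planning_model(prompt: str) -> str:
    return f"[plan] {prompt}"

# Assumed mapping from job type to the model considered best for it.
ROUTES: Dict[str, Callable[[str], str]] = {
    "draft": drafting_model,
    "review": reviewing_model,
    "plan": planning_model,
}

def route(task_type: str, prompt: str) -> str:
    """Send the prompt to whichever model is registered for this job type."""
    if task_type not in ROUTES:
        raise ValueError(f"no model registered for task type {task_type!r}")
    return ROUTES[task_type](prompt)

if __name__ == "__main__":
    print(route("draft", "Q3 summary"))
    print(route("review", "Q3 summary"))
```

A static lookup table is the simplest possible router. A production system would more plausibly choose a model from the content of the request, cost limits, or admin policy, none of which is modeled here.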

Why this is a platform play

The strategic upside is obvious. If Microsoft controls the orchestration layer, it can reduce dependence on any one model provider while still delivering frontier capabilities. That gives the company leverage in negotiations, product differentiation, and enterprise sales. It also gives Microsoft a cleaner story to tell to customers worried about lock-in: the platform is model-diverse by design.

But the platform story cuts both ways. The more layers Microsoft inserts between the user and the final answer, the more it has to manage latency, reliability, and governance. That is why Microsoft keeps pairing model diversity with trust language. The company wants to persuade buyers that complexity is not a bug; it is the price of building AI that can be used in real companies without creating chaos.

Critique as an AI Quality Gate

The Critique concept is arguably the most interesting part of the package because it tackles the oldest complaint in generative AI: confident answers can still be wrong. According to the Tech Portal’s description, one model generates the response while another reviews it for accuracy, logic, completeness, and quality. That is a simple idea, but in enterprise AI it matters because it formalizes the review step instead of leaving it to the human user after the fact.

Why a second model matters

The benefit of a critique pass is not that it guarantees truth. It does not. The benefit is that it creates a second probabilistic check before the answer reaches the user. That can reduce obvious errors, catch missing context, and force the system to articulate its reasoning more carefully. In knowledge work, that can save time not just by producing better drafts, but by reducing the cleanup burden that follows a bad draft.

The file set describing Critique also points to the broader enterprise problem: polished prose often hides weak substance. The better a model writes, the easier it is to trust it too much. By making critique part of the workflow, Microsoft is effectively admitting that trust cannot be assumed; it has to be engineered into the system itself.
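A generate-then-review loop of the kind described can be sketched in a few lines. This is an illustration under stated assumptions, not Microsoft’s implementation: `Review`, `generate_with_critique`, and the two toy functions are hypothetical names, and the toy critic only checks whether the draft cites a source.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

@dataclass
class Review:
    approved: bool
    issues: List[str] = field(default_factory=list)

# Assumed signatures: the generator sees prior feedback, the critic sees the draft.
Generator = Callable[[str, List[str]], str]
Critic = Callable[[str, str], Review]

def generate_with_critique(
    prompt: str, generate: Generator, critique: Critic, max_rounds: int = 2
) -> Tuple[str, Review]:
    """Draft, review, and revise until approved or the round budget runs out."""
    draft = generate(prompt, [])
    review = critique(prompt, draft)
    for _ in range(max_rounds):
        if review.approved:
            break
        draft = generate(prompt, review.issues)
        review = critique(prompt, draft)
    # The final review is returned so a human can see unresolved issues.
    return draft, review

# Toy stand-ins for the two models (hypothetical, for illustration only).
def toy_generate(prompt: str, feedback: List[str]) -> str:
    if "missing source" in feedback:
        return "Revenue grew 12% (source: Q3 report)."
    return "Revenue grew 12%."

def toy_critique(prompt: str, draft: str) -> Review:
    if "source:" in draft:
        return Review(approved=True)
    return Review(approved=False, issues=["missing source"])

if __name__ == "__main__":
    print(generate_with_critique("Summarize Q3", toy_generate, toy_critique))
```

Returning the last `Review` alongside the draft is a deliberate choice in this sketch: when the critic never approves, the caller still gets the open issues rather than a silently accepted answer.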

What Critique can and cannot do

Critique can improve the odds that an output is useful. It can also make Microsoft’s products feel more mature to enterprises that are tired of hand-waving around hallucinations. But it cannot replace human review in high-stakes settings. A second AI opinion is still a machine-generated opinion, and it can still inherit blind spots, misunderstand context, or confidently amplify a first model’s mistake.

That limitation matters because enterprises often buy AI not for novelty but for defensibility. They want outputs they can act on, cite, and justify. Critique is therefore not just a quality feature; it is a confidence feature. If Microsoft can show that it reduces rework and increases trust, the feature could become one of the quietest but most valuable upgrades in Copilot’s history.

Model Council and the Power of Comparison

If Critique is about internal review, Model Council is about external comparison. The idea of putting multiple model outputs side by side is compelling because it lets users see how different systems interpret the same prompt. That can be especially useful in research, strategy, and policy work, where a single answer can flatten nuance or miss competing interpretations. Microsoft’s broader Copilot and Copilot Studio messaging already supports this direction, with explicit references to model choice and side-by-side evaluation patterns in Microsoft Learn content.

Comparison as a trust mechanism

Comparison is an underrated trust tool. When users see multiple answers, they are more likely to notice differences in framing, completeness, or certainty. That makes it easier to spot weak reasoning than relying on one polished response that may look authoritative even when it is not. In that sense, Model Council is as much about calibrating human judgment as it is about improving machine performance.

The downside is obvious: side-by-side answers can also create decision paralysis. If every model says something slightly different, users may not know which answer to trust, or they may assume disagreement means the system is unreliable. Microsoft will need to guide users carefully so that comparison feels empowering rather than confusing.
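One way to picture side-by-side comparison, and a crude signal that could help users read disagreement, is sketched below. The names `run_council`, `agreement_share`, and `TOY_MODELS` are hypothetical, the models are canned stand-ins, and exact-match comparison after lowercasing is an assumed simplification; real answers would need a far more tolerant notion of agreement.

```python
from collections import Counter
from typing import Callable, Dict

def run_council(prompt: str, models: Dict[str, Callable[[str], str]]) -> Dict[str, str]:
    """Ask every model the same prompt and keep the answers side by side."""
    return {name: model(prompt) for name, model in models.items()}

def agreement_share(answers: Dict[str, str]) -> float:
    """Fraction of models that gave the most common answer (after normalizing)."""
    if not answers:
        raise ValueError("no answers to compare")
    counts = Counter(text.strip().lower() for text in answers.values())
    top_count = counts.most_common(1)[0][1]
    return top_count / len(answers)

# Toy stand-ins that return canned answers (hypothetical, for illustration only).
TOY_MODELS: Dict[str, Callable[[str], str]] = {
    "model_a": lambda prompt: "Yes",
    "model_b": lambda prompt: "yes ",
    "model_c": lambda prompt: "No",
}

if __name__ == "__main__":
    answers = run_council("Should we enter the market?", TOY_MODELS)
    for name, text in answers.items():
        print(f"{name}: {text.strip()}")
    print(f"agreement: {agreement_share(answers):.2f}")
```

A low share does not mean the system is unreliable. In this sketch it is only a prompt for the user to read the differing answers more closely.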

Where Model Council fits best

Model Council makes the most sense when the task is inherently ambiguous. Strategic planning, competitive analysis, and policy drafting often benefit from multiple lenses rather than one final answer. In those cases, the point is not to eliminate judgment but to surface the range of plausible interpretations faster than a human team could alone.

It also serves a commercial purpose. Microsoft can position Copilot not merely as an answer engine, but as a decision-support environment where enterprises can experiment with different model behaviors without leaving Microsoft’s security and governance stack. That is a subtle but important distinction, because it turns AI selection into an operational feature rather than a procurement headache.

Copilot Cowork and the Rise of Long-Running Work

The other major piece of the story is Copilot Cowork, which Microsoft has said is available through the Frontier program for early access customers and is designed to handle long-running, multi-step work across Microsoft 365. In Microsoft’s own words, Cowork is about allowing AI to break down complex requests, carry work forward over time, and operate inside enterprise controls rather than just producing a one-off response.

What “long-running” really means

This is a big deal because it changes the unit of AI value. Instead of asking a model to help with one paragraph or one slide, Microsoft is asking it to stay with a task until the task is finished. That might include gathering information, organizing files, revising documents, and continuing progress across sessions. In other words, AI becomes a persistent collaborator, not a disposable assistant.

Microsoft’s official descriptions emphasize visibility and control. Work can be reviewed, guided, or stopped, and the system runs inside Microsoft’s security and identity framework. That matters because enterprise AI adoption rises or falls on whether IT and security teams believe the machine is working with them rather than around them.
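The review-and-stop pattern can be sketched as a step loop with a checkpoint before each step. This is a minimal illustration with hypothetical names (`Decision`, `run_task`, `STEPS`, `stop_before_revise`); it models only the review and stop controls, and leaves out guidance, persistence across sessions, and identity checks.

```python
from enum import Enum
from typing import Callable, List, Tuple

class Decision(Enum):
    CONTINUE = "continue"
    STOP = "stop"

Step = Tuple[str, Callable[[], str]]
Progress = List[Tuple[str, str]]
# The checkpoint sees the next step's name and the progress so far.
Checkpoint = Callable[[str, Progress], Decision]

def run_task(steps: List[Step], checkpoint: Checkpoint) -> Progress:
    """Run steps in order, asking the checkpoint before each one."""
    completed: Progress = []
    for name, action in steps:
        if checkpoint(name, completed) is Decision.STOP:
            break
        completed.append((name, action()))
    return completed

# Toy steps standing in for real work (hypothetical, for illustration only).
STEPS: List[Step] = [
    ("gather", lambda: "collected 3 files"),
    ("organize", lambda: "sorted into folders"),
    ("revise", lambda: "updated the draft"),
]

def stop_before_revise(next_step: str, completed: Progress) -> Decision:
    # A reviewer policy that halts the task before any document is changed.
    return Decision.STOP if next_step == "revise" else Decision.CONTINUE

if __name__ == "__main__":
    for name, result in run_task(STEPS, stop_before_revise):
        print(f"{name}: {result}")
```

Placing the checkpoint before each step, rather than after, means a stopped task never performs the action the reviewer objected to.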

Why Anthropic matters here

Microsoft has also said that Cowork was built in close collaboration with Anthropic and that it brings Anthropic-powered agent technology into Microsoft 365 Copilot. That is a notable signal because it shows Microsoft is willing to blend model ecosystems rather than frame Copilot as purely a Microsoft-OpenAI stack. The result is a more flexible and arguably more credible enterprise story: use the best model for the job, then govern it inside Microsoft’s platform.

That said, model diversity is not a free lunch. A richer system can be harder to debug, harder to price, and harder to explain to users. Microsoft’s challenge is to make Cowork feel like one coherent experience even if multiple model families are involved behind the scenes.

Enterprise Implications

For enterprises, the most important question is not whether these features are cool. It is whether they improve throughput without increasing risk. Microsoft is clearly betting that its customers want more automation, but only if that automation is paired with review, observability, and policy controls. The company’s Frontier messaging repeatedly emphasizes exactly those themes.

Governance now matters as much as generation

This is where Microsoft separates itself from consumer-oriented AI products. Enterprises need auditability, identity controls, permission boundaries, and predictable admin workflows. Microsoft’s positioning around Agent 365, Frontier, and the Microsoft 365 security stack suggests that it wants to be the vendor that makes AI safe enough for deployment at scale, not just impressive enough for demos.

The trust angle is especially important for regulated industries. Financial services, healthcare, government, and large legal organizations are not simply looking for faster drafting. They are looking for systems that can show their work, stay within permissions, and avoid leaking sensitive context. Microsoft’s move toward critique and comparison is therefore as much a compliance story as it is a productivity story.

Procurement and ROI pressure

At the same time, enterprises will ask a blunt question: does multi-model orchestration justify the cost? More review steps and more agent logic can increase compute usage, operational complexity, and licensing headaches. Microsoft will need to prove that the net result is higher productivity, lower rework, or both. Otherwise, organizations may see the new capabilities as elegant but expensive extras.

- Better outputs can reduce human revision time.

- Side-by-side model comparison can improve decision quality.

- Long-running agents can absorb repetitive workflow tasks.

- Governance controls may reassure IT and compliance teams.

- Multi-model flexibility can reduce vendor concentration risk.

- But added complexity may increase latency and cost.

- And trust gains will still need to be proven in real deployments.

Consumer and SMB Impact

Although the headlines focus on enterprise Copilot, the long-term effects may spill over to smaller organizations and advanced consumers. Microsoft often uses enterprise features as the test bed for broader product design, and the same pattern has held with Copilot: features land first in the high-end workplace stack, and the most broadly useful ones then make their way into more accessible surfaces over time.

Why smaller teams will care

Small businesses have some of the same problems as big enterprises, just with fewer admins. They need reliable outputs, quicker drafting, and less time spent checking AI work. A critique pass and side-by-side model comparison could be especially valuable for teams without in-house analysts or editors, because those teams often rely on AI to fill expertise gaps rather than simply speed up known workflows.

But small organizations may also feel the complexity more sharply. If the UI becomes crowded with model selection, comparison panes, and workflow controls, casual users may feel overwhelmed. Microsoft will need to make these capabilities feel optional and progressively disclosed rather than mandatory from the start.

The user experience challenge

The experience question is crucial. Side-by-side model views are valuable only if they stay readable and actionable. Microsoft Learn’s recent UI guidance for Copilot surfaces emphasizes conversation-first design and side-by-side reasoning visibility, which suggests Microsoft understands that the interface must support comprehension, not just capability.

That means the best consumer-facing version of these tools may not be the most powerful one. It may be the one that hides complexity when users want simplicity and reveals it only when users want to inspect the work. That is a subtle UX problem, but it will determine whether the feature set feels premium or merely busy.

Competitive Landscape

Microsoft’s move is also a competitive message to Google, Anthropic, OpenAI, and enterprise AI startups. The company is telling the market that the next battleground is not just who has the strongest model, but who can deliver the best system around the model. That includes critique, comparison, governance, identity, workflow integration, and enterprise deployment pathways.

Why this pressures rivals

For model vendors, Microsoft’s approach is both flattering and threatening. It validates the importance of frontier models while also commoditizing them inside a larger platform. A model can win the benchmark race and still lose the customer relationship if Microsoft becomes the default place where work happens. That is why Microsoft’s orchestration strategy may be more durable than a single model win.

Google and Anthropic can still compete on raw quality, reasoning, and product design. But Microsoft has an advantage that rivals cannot easily replicate: it sits in the flow of office work. If Copilot can be the place where decisions are compared, tasks are executed, and outputs are verified, then Microsoft wins by owning the workflow surface rather than any single model headline.

The multi-model era

The broader industry is heading in this direction anyway. Microsoft’s public embrace of Anthropic models, its model-diverse Copilot story, and its focus on multi-agent orchestration all point to a market where the best AI product is not necessarily the best standalone model. It is the product that can compose multiple models into a reliable system with the fewest user-visible seams.

- Microsoft gains leverage by becoming the orchestration layer.

- Model vendors gain distribution through Microsoft’s ecosystem.

- Customers gain choice, but also more complexity.

- Product differentiation shifts from raw model performance to system reliability.

- Trust, governance, and observability become selling points.

- The real moat becomes workflow integration.

- And the market may increasingly reward composition over isolation.

Strengths and Opportunities

Microsoft’s latest Copilot direction has several clear strengths. It addresses a real enterprise pain point, expands the role of AI beyond basic drafting, and gives Microsoft a stronger answer to concerns about hallucinations and model lock-in. Just as importantly, it aligns product design with the way organizations actually buy software: for control, safety, and measurable value, not just novelty.

- Critique could reduce obvious errors before users see them.

- Model Council can improve judgment by surfacing multiple perspectives.

- Copilot Cowork moves AI from assistance to actual task execution.

- Model diversity reduces overreliance on a single vendor.

- Enterprise governance makes the stack more credible for IT buyers.

- Workflow integration strengthens Microsoft’s platform moat.

- Frontier access lets Microsoft test features before broad rollout.

- Trust-first positioning gives Microsoft a strong narrative in a crowded AI market.

Risks and Concerns

The risks are just as real. Multi-model systems can become harder to explain, more expensive to run, and more frustrating if the user interface exposes too much complexity. There is also the deeper issue that a second AI review is still not the same as independent verification, so Microsoft must avoid overselling critique as a cure for hallucinations rather than what it actually is: a mitigation layer.

- Latency may increase as more models are chained together.

- Cost may rise with extra generation and review steps.

- User confusion could grow if comparison views are not intuitive.

- False confidence remains a danger if users trust AI review too much.

- Governance gaps could appear if permissions are not airtight.

- Vendor complexity may complicate support and troubleshooting.

- Benchmark claims can be hard to verify outside vendor-controlled demos.

- Adoption friction could slow rollout if the features feel too experimental.

Looking Ahead

The next few months should reveal whether Microsoft’s new Copilot direction is a genuine product shift or just a sophisticated way of packaging the same promise. The most important test will be whether Critique, Model Council, and Cowork actually change day-to-day workflows in ways users can feel: fewer revisions, better decisions, and more tasks completed without human handoffs. If that happens, Microsoft will have a strong case that the age of single-model workplace AI is fading fast.

The second test will be administrative. Enterprises will want to know how these features behave under real permissions, real compliance rules, and real data sensitivity. Microsoft’s success will depend on whether IT teams see the new Copilot stack as controllable enough to deploy broadly, or merely impressive enough to pilot. If Microsoft can make trust visible without making the experience cumbersome, it will have something the rest of the market will struggle to match.

- Frontier availability could expand beyond early-access users.

- Microsoft may add more model routing and review options.

- Copilot Studio could absorb more of the orchestration logic.

- Enterprises will likely demand clearer audit and traceability tools.

- Competitors may respond with their own multi-model review workflows.
