Microsoft has quietly — and decisively — created a new research and engineering unit inside its AI division called the MAI Superintelligence Team, led by Microsoft AI CEO Mustafa Suleyman, and set its north star on what the company calls “humanist superintelligence” — advanced, domain‑targeted AI that is explicitly designed to remain controllable, auditable and firmly in service of people.

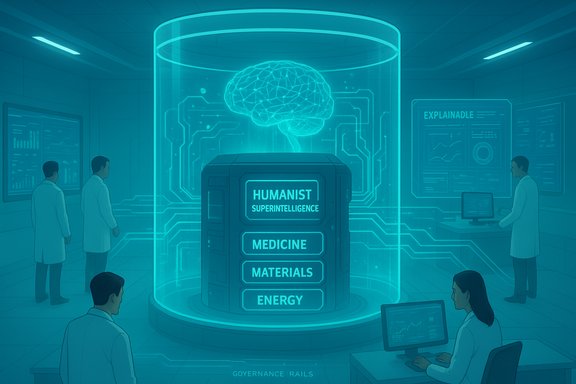

Source: GeekWire Microsoft forms Superintelligence team to pursue ‘humanist’ AI under Mustafa Suleyman

Background

Microsoft’s announcement is more than a rebrand or a PR exercise: it signals a strategic pivot toward building first‑party frontier models for highly regulated, high‑impact domains while insisting the work be bounded by strong safety and governance commitments. The company frames the effort as a contrast to an unconstrained race for artificial general intelligence; instead, Microsoft proposes Humanist Superintelligence (HSI): systems that aim for superhuman performance on specific problems without becoming open‑ended, autonomous agents. Mustafa Suleyman, who now runs Microsoft AI, laid out the approach in a public essay and accompanying announcement that described HSI as “problem‑oriented and domain‑specific,” with an emphasis on containment, alignment and human control. Karén Simonyan is named as the team’s chief scientist, and Microsoft says the team will include model researchers already working across Microsoft AI. The company has not published final headcount targets for the new group.

Why this matters now

- Microsoft has rapidly embedded advanced generative models across Windows, Microsoft 365 and Copilot experiences. Those product integrations give Microsoft both the scale to influence design norms and the responsibility to manage safety defaults at scale.

- The commercial and cloud context changed during 2025 as OpenAI restructured and broadened cloud relationships, creating incentives for Microsoft to regain optionality on latency, cost and data governance by building in‑house capabilities.

What Microsoft announced — the essentials

Microsoft’s public messaging and independent reporting converge on several concrete commitments:

- Formation of the MAI Superintelligence Team inside Microsoft AI, led by Mustafa Suleyman.

- Public framing of the project as Humanist Superintelligence (HSI) — advanced AI systems optimized to serve human and societal priorities with constrained autonomy, auditable behavior, and explicit limits.

- An early, stated focus on medical diagnostics and scientific domains (materials, battery chemistry, molecule discovery, fusion) where domain‑specific superhuman performance could produce measurable public benefit.

- Karén Simonyan as chief scientist and staffing drawn from existing Microsoft AI model teams plus external recruits consistent with major lab hiring trends. Microsoft has not disclosed the team’s full scale.

Technical aims: what “humanist superintelligence” looks like in practice

Microsoft’s HSI concept emphasizes architectures and product designs that are:

- Domain‑specialist: models trained and evaluated to deliver superhuman performance on narrowly specified scientific or clinical tasks rather than an all‑purpose intelligence.

- Containable and interpretable: designs that favor traceable decision paths, robust failure modes, and the ability to restrict or shut down capabilities when necessary.

- Auditable by design: engineering and governance practices that allow independent inspection of datasets, training procedures, red‑team results and real‑world performance metrics.

- Integrated into safety‑forward product defaults: for consumer Copilot and enterprise integrations, Microsoft is doubling down on explicit memory controls, opt‑in personas and clear labeling to avoid creating systems that seem conscious. This is consistent with Suleyman’s recent public arguments about Seemingly Conscious AI (SCAI) and the “psychosis risk.”

Early target: medical superintelligence

Microsoft has highlighted medical diagnostics as an initial domain where HSI could deliver immediate public benefit, for instance through earlier detection of preventable disease and decision support that materially improves clinical outcomes. Reporting references internal tests and a “line of sight” toward clinically relevant systems, but those performance claims are explicitly provisional: they require peer review, clearly documented datasets and regulatory pathways before deployment in clinical settings.

Strategy and commercial rationale

The MAI Superintelligence Team serves at least three strategic functions for Microsoft:

- Regain operational optionality: Build first‑party MAI models so Microsoft can route queries to models according to latency, cost and governance needs rather than relying exclusively on external providers. This reduces exposure to partner pricing or cloud selection decisions.

- Compete where regulation matters: Offer enterprise customers models with contractual guarantees, sovereign hosting options and traceability that are attractive for regulated industries such as healthcare, finance and government.

- Shape norms and defaults at scale: Because Microsoft powers Windows, Office and enterprise infrastructure, the company can institutionalize product choices — memory defaults, persona gating, and UI transparency — so HSI design values propagate across billions of users.
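The routing idea in the first strategic function can be pictured with a minimal sketch. Everything here is an assumption made for illustration: the `ModelOption` and `Query` types, the model names, and the latency and cost figures are hypothetical, and nothing in the announcement describes how Microsoft actually routes requests. The sketch simply filters candidate models by a latency ceiling and a governance requirement, then picks the cheapest one that remains.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelOption:
    """A candidate model endpoint. All fields and values are hypothetical."""
    name: str
    latency_ms: float          # expected response latency
    cost_per_1k_tokens: float  # illustrative price, not a real tariff
    first_party: bool          # hosted under the operator's own governance controls


@dataclass(frozen=True)
class Query:
    max_latency_ms: float
    requires_governed_hosting: bool  # e.g. a request touching regulated data


def route(query: Query, options: list[ModelOption]) -> Optional[ModelOption]:
    """Return the cheapest option that satisfies the query's constraints, or None."""
    eligible = [
        m for m in options
        if m.latency_ms <= query.max_latency_ms
        and (m.first_party or not query.requires_governed_hosting)
    ]
    return min(eligible, key=lambda m: m.cost_per_1k_tokens, default=None)


# Hypothetical catalogue: one external model, one in-house model.
OPTIONS = [
    ModelOption("external-general", latency_ms=400, cost_per_1k_tokens=0.8, first_party=False),
    ModelOption("in-house-clinical", latency_ms=250, cost_per_1k_tokens=1.5, first_party=True),
]
```

Under these assumed numbers, a query that requires governed hosting is sent to the in-house model even though it costs more, while an unconstrained query goes to the cheaper external one. That is the sense in which first‑party models add optionality rather than replace partners outright.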

Safety, governance and the “humanist” framing

The novelty of Microsoft’s public position is not the ambition but the ethical framing. Humanist superintelligence intentionally ties capability scope to normative limits, a design stance that explicitly prioritizes human welfare and institutional accountability.

Key safety principles Microsoft emphasizes:

- Non‑autonomy by default — do not enable open‑ended agency or unsupervised self‑improvement.

- Clear boundaries and shutdown controls — design models and deployments with technical kill switches and provable containment guarantees where possible.

- Transparent evaluation — publish robust evaluation protocols and enable external auditors to validate safety claims.

Why independent verification matters

Microsoft’s promise of HSI raises a practical verification problem: claims such as “models outperform clinician groups” or “medical superintelligence is within two to three years” are consequential and time‑sensitive. They must be backed by:

- Peer‑reviewed publications or third‑party replication studies.

- Transparent data provenance and the ability for regulators to audit training data.

- Published safety and failure‑mode testing results, not just internal red‑team summaries.

Risks and challenges — what could derail HSI ambitions

Building useful, safe and trustworthy HSI is technically feasible in some domains, but not without major obstacles. Key risks include:

- Technical gaps in provable containment: current provable‑safety and verification methods are limited for large neural models; containment remains an active research frontier.

- Regulatory uncertainty: medical diagnostics, drug discovery and other target domains fall under strict regulatory regimes. Achieving certification will require reproducible evidence, clinical trials and liability frameworks.

- Talent and competition: rival labs (Meta, Anthropic, OpenAI and specialized startups) are aggressively recruiting frontier researchers, often with lucrative packages and research autonomy. Microsoft will need competitive structures to attract and retain top talent.

- Cost and infrastructure: training domain‑leading models at industrial scale demands massive GPU fleets, cloud capacity and ongoing inferencing costs — a long‑term investment with uncertain near‑term ROI.

- Market fragmentation and customer complexity: customers will face choices among OpenAI, MAI, and other providers — increasing integration work and potential lock‑in tradeoffs.

- Social and ethical hazards from anthropomorphism: even with conservative defaults, products that incorporate voice, memory and avatars risk creating attachment and misuse among vulnerable users. Suleyman’s SCAI diagnosis is a practical attempt to surface this risk.

What it means for Windows users and enterprise customers

For Windows and Microsoft 365 users, the new MAI work will be felt across product choices and safety defaults rather than as a single product reveal.

- Copilot integrations may increasingly route sensitive queries to MAI‑class models when governance and provenance are required. This could improve privacy and reduce latency for enterprise deployments.

- Expect clearer memory controls, persona gating and labeling in consumer copilots as Microsoft tries to operationalize HSI principles. These defaults will matter for classrooms, families and workplaces.

- Enterprises operating in regulated sectors might get tailored MAI offerings with contractual SLAs, on‑prem or sovereign cloud options, and audit guarantees — but those will likely carry premium pricing and integration overhead.

Competitive landscape — how MAI shifts the field

Microsoft’s announcement is part of an escalating industry sprint where multiple players define their own terms for “superintelligence.”

- OpenAI continues to push toward broad AGI‑class capabilities and remains a strategic partner for Microsoft in many products even as Microsoft builds first‑party options. The corporate relationship has evolved but remains commercially central.

- Meta, Anthropic, Safe Superintelligence labs and deep tech startups are also pursuing high‑end model research; some prioritize unconstrained capability, others explicit safety. Microsoft’s “humanist” label is a strategic differentiator meant to appeal to governments, healthcare providers and large enterprises.

A practical checklist Microsoft and regulators should follow

To turn the humanist rhetoric into durable, verifiable practice, three technical and three governance actions are essential.

- Publish independent evaluation protocols and invite external audits for MAI models.

- Release clinical validation data for any medical claims into peer‑reviewed literature and working registries.

- Implement provable containment benchmarks and make red‑team results auditable by qualified third parties.

- Create a public governance board including external safety researchers, ethicists and domain advocates to review deployments.

- Default to opt‑in memory and persona features in consumer facing copilots; make deletion and export easy and transparent.

- Offer sovereign cloud and on‑prem hosting options for regulated customers with explicit contractual assurances on telemetry, data retention and provenance.
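The opt‑in memory item in the checklist above can be made concrete with a short sketch. The `AssistantMemory` class and its method names are hypothetical and do not describe any Copilot API; the point is only to show what “off by default, with easy deletion and export” could look like as a data structure.

```python
import json
from dataclasses import dataclass, field


@dataclass
class AssistantMemory:
    """Hypothetical per-user memory store with opt-in defaults."""
    memory_enabled: bool = False   # off until the user explicitly opts in
    persona_enabled: bool = False  # persona features are opt-in as well
    _items: list[str] = field(default_factory=list)

    def remember(self, note: str) -> bool:
        """Store a note only if the user has opted in; report whether it was stored."""
        if not self.memory_enabled:
            return False
        self._items.append(note)
        return True

    def export(self) -> str:
        """Return everything stored, as JSON the user could download."""
        return json.dumps({"memory_enabled": self.memory_enabled, "items": self._items})

    def delete_all(self) -> int:
        """Erase all stored notes and return how many were removed."""
        removed = len(self._items)
        self._items.clear()
        return removed
```

In this sketch nothing is retained until the user flips `memory_enabled`, and both export and deletion are single calls, which is the kind of behavior an external auditor could test directly.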

Verification, caveats and what remains unverified

Multiple high‑quality outlets and Microsoft’s own announcement corroborate the team formation, leadership and HSI framing. The key points (Suleyman’s MAI team, Karén Simonyan’s chief scientist role, the focus on medical superintelligence and the humanist design constraints) are documented in the Microsoft AI essay and independently reported by Reuters, GeekWire, The Verge and others. That said, several load‑bearing claims remain unverifiable in public at the time of the announcement:

- Internal test results suggesting models have outperformed clinician groups are cited in reporting on Microsoft but have not been published in peer‑reviewed journals or made available for independent replication. Those performance claims must be treated as provisional until published with full methodology and datasets.

- Exact team size, budget and engineering timelines are not disclosed. The statement that Microsoft will invest “a lot of money” is plausible given the company’s scale, but precise financial commitments and ROI timelines are not publicly specified.

Conclusion

Microsoft’s formation of the MAI Superintelligence Team and its public commitment to humanist superintelligence represent a consequential, high‑stakes bet: pursue superhuman capability where it measurably helps people, but make containment, auditability and human control non‑negotiable design requirements. That framing is novel at scale and, if operationalized, could set practical industry standards for safety‑forward advanced AI.

Success will depend on converting rhetoric into verifiable practice: publish independent evaluations, subject medical claims to peer review and regulatory processes, build auditable containment mechanisms, and sustain an open, multidisciplinary governance posture. Without those hard proofs, humanist superintelligence risks becoming a strategic positioning exercise rather than a durable model for safe, societally beneficial AI. The announcement changes the stakes for Windows users, enterprises, policymakers and competitors alike. It also raises the bar for transparency: Microsoft has set an expectation for what responsible, high‑capability AI should look like, and now it must demonstrate it in the open.
