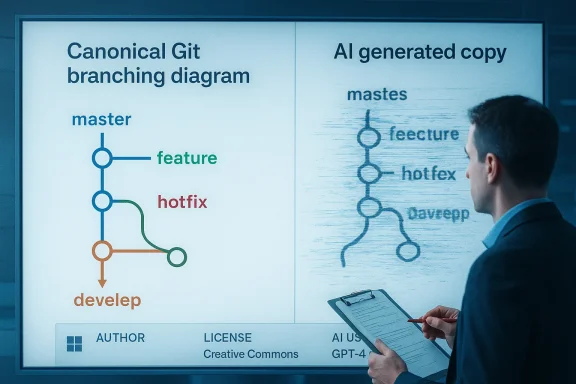

Microsoft Learn briefly published an AI‑generated reproduction of Vincent Driessen’s iconic Git branching diagram that mangled words, misdirected arrows, and—by multiple accounts—appeared to be the product of a careless, unvetted generative image workflow rather than a human redraft.

Background

In the fifteen years since Vincent Driessen published “A successful Git branching model,” his diagram has become canonical teaching material for teams learning branching strategies. Driessen intentionally made the original diagram’s source available so others could reuse and adapt it with attribution; over the years it has appeared in articles, presentations, and internal docs across the industry.

In February 2026, an image that closely echoed Driessen’s diagram appeared on an official Microsoft Learn page explaining GitHub Flow. The reproduced diagram contained clear artifacts that are now characteristic of generative image errors: misspellings such as “continvoucly morged,” stray glyphs where “Time” should have been, misaligned arrows and bubbles, and inconsistent color and layout choices that broke the original’s readability. The image was removed after the community identified the original author and raised concerns.

This episode crystallized two quick takeaways for many observers: large organizations are experimenting with generative AI for content creation, and without strict provenance, review, and attribution processes, high‑visibility mistakes—including effective plagiarism or unattributed reuse—are likely to occur.

What happened — a closer timeline

- An official Microsoft Learn module included an explanatory chart for GitHub Flow that resembled Driessen’s classic Git Flow diagram.

- Readers recognized the layout and traced it to Driessen’s long‑standing diagram; Driessen confirmed the likeness and published a reaction post.

- Social posts and developer community threads highlighted that the reproduced diagram contained obvious AI artifacts and errors—most famously the phrase “continvoucly morged.”

- Microsoft removed the graphic from the Learn page. Scott Hanselman, Microsoft’s Vice President of Developer Community, publicly suggested that an “overzealous vendor” may have created it and promised a post‑mortem and stronger guardrails.

Why the image stood out (technical readout)

At a glance, the recreated chart failed on both form and function. For a developer audience, clarity is non‑negotiable; diagrams must precisely represent relationships and direction of motion. The Microsoft image suffered several classes of failure commonly seen when generative image tools are used for technical diagrams:

- Typographic hallucination: Generative image models often invent or corrupt text embedded in an image because they render lettering far less reliably than they render shapes. The infamous “continvoucly morged” and “Tim” artifacts are textbook examples.

- Layout and alignment errors: The original diagram’s careful alignment of lanes, dots, and bubbles is a human design choice tuned for legibility. The AI version muddled those relationships, producing arrows that pointed the wrong way or were missing entirely, which broke the semantic flow the diagram was meant to convey.

- Color and contrast regression: Driessen’s diagram uses a restrained, purposeful color palette to encode branch types. The AI re‑render introduced mismatched hues and contrast issues that degraded quick comprehension.
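The typographic failures described above are the easiest class to screen for mechanically. The following is a minimal sketch, not a description of any tool Microsoft or its vendor uses. It assumes the text labels have already been pulled out of the image by some OCR step (not shown) and that a reviewer maintains a list of the labels the diagram is supposed to contain. The function name `find_suspect_labels` and the sample labels are hypothetical.

```python
from difflib import get_close_matches
from typing import List, Optional, Tuple


def find_suspect_labels(
    extracted_labels: List[str],
    expected_labels: List[str],
    cutoff: float = 0.6,
) -> List[Tuple[str, Optional[str]]]:
    """Return (label, closest expected label or None) for every extracted
    label that does not exactly match an expected label (case-insensitive)."""
    # Map a normalized key back to the label as the reviewer wrote it.
    expected_by_key = {label.strip().casefold(): label for label in expected_labels}
    suspects = []
    for label in extracted_labels:
        key = label.strip().casefold()
        if key in expected_by_key:
            continue  # exact match: nothing to flag
        # Look for a near miss so the reviewer sees what the label probably meant.
        matches = get_close_matches(key, list(expected_by_key), n=1, cutoff=cutoff)
        suggestion = expected_by_key[matches[0]] if matches else None
        suspects.append((label, suggestion))
    return suspects
```

Called with extracted labels such as `["Tim", "continvoucly morged", "develop"]` and an expected list of `["Time", "continuously merged", "develop"]`, the function flags the first two labels and leaves `develop` alone. A check like this only covers text; misdirected arrows and color regressions still need a human reviewer.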

Attribution, reuse, and the copyright question

The ethical and legal lines here are blurry but important to examine. Driessen released his original as reusable source material; the community has repeatedly copied and adapted it with attribution. That status matters: many reused versions (with credit) sit squarely within accepted community norms.

Where this incident causes friction is the method: instead of crediting or directly reusing the original asset, an apparent generative workflow translated the visual into a synthetic image and published it with no visible attribution. There are three distinct issues to unpack:

- Proper reuse with attribution: When the original author offers the source under permissive terms, the clean path is to reuse the original with attribution, or to produce a derivative that is clearly labeled and credited. Driessen’s own reaction shows he was not primarily upset about the reuse itself, but about the process and lack of credit.

- Generative transformation as disguise: Running an existing work through an AI generator that re‑creates the image, especially when the generator produces a degraded copy, erases the original's authorship signals (visual style, metadata, source linkage). Presenting the result as if it were new work can cross ethical boundaries even if there is no strict copyright violation.

- Copyright and attribution obligations: Whether Microsoft or its vendor violated copyright depends on the original asset’s license and how it was used; Driessen made source files available in the spirit of sharing, but corporate policies and fair‑use practices vary. Importantly, the absence of credit on an official page is an ethical lapse that large companies must avoid to maintain trust.

Microsoft’s public response and vendor dynamics

Microsoft’s immediate public reply, delivered by Scott Hanselman, blamed what he described as an “overzealous vendor” and pledged a post‑mortem and better guardrails. Hanselman’s messaging emphasized that mistakes happen in large organizations and promised deeper investigation to determine whether this was a single person’s error or a systemic problem.

Several points are worth noting in that response:

- Outsourcing content creation is common: Large companies frequently rely on external vendors or contractors for documentation, localization, and marketing. When generative AI enters that vendor workflow, corporate QA and procurement processes must adapt. This case shows how vendor output can quickly become a public relations issue if internal review is insufficient.

- Post‑mortems are necessary but not sufficient: Promising a post‑mortem is appropriate; what matters is the transparency and the concrete steps that follow. Remediation measures such as updated procurement contracts, mandatory provenance metadata, and pre‑publish human review are the kinds of guardrails the community expects.

- Attribution policies: A clear attribution policy for reused third‑party materials must be enforced in vendor scopes. The lack of credit here is an avoidable oversight that quality governance should catch.

Community reaction and the meme cycle

Developers and online communities were quick to spot the reproduction, and quicker to meme it. The phrase “continvoucly morged” became shorthand for the kinds of nonsensical output generative tools can produce when used without human curation. Driessen himself reacted with a mix of amusement and dismay, calling the result “proper AI slop” and expressing sadness over the “lack of process and care.”

Community reaction serves a dual role:

- It acts as real‑time quality control, catching and escalating errors that corporate teams missed. That watchdog function will grow in importance as AI accelerates content production.

- It amplifies reputational damage. When a developer‑facing company publishes low‑quality technical artifacts, trust among the target audience erodes faster than companies can publish corrections.

What this reveals about current generative workflows

The incident is an instructive case study of contemporary AI adoption patterns inside large organizations:

- Rapid adoption without updated governance: Teams eager to scale documentation or to automate art/redraw tasks may adopt image generation tools without revising editorial checklists and legal review procedures.

- Black‑box vendor workflows: When external vendors use AI in their content pipelines, clients may not fully understand the vendor’s models, training data, or prompt engineering. That lack of transparency creates blind spots where unattributed or derivative content can slip through.

- Insufficient human‑in‑the‑loop QA: Generative art is not yet reliable for technical diagrams without a review step by someone who understands the subject. The types of errors seen here—misplaced arrows, corrupted text—are exactly the kinds of issues a skilled human reviewer would catch.

- Metadata and provenance gaps: AI‑generated artifacts often lose metadata (creation history, source references, original author). Documentation sites must require provenance fields before publication.

Broader legal, ethical and business risks

If we step back, several recurring risks emerge when organizations adopt generative AI for content at scale:

- Copyright exposure: If a model was trained on copyrighted material and regurgitates distinctive elements, the publisher could face legal claims depending on jurisdiction and the original work’s license. In this case, Driessen published his work for reuse, but the lack of credit still raises ethical concerns.

- Reputational damage: A single sloppily generated diagram from a major brand can go viral and damage credibility, especially in technical communities that prize rigor.

- Operational risk: Poorly supervised AI use increases the likelihood of errors reaching customers, potentially creating downstream operational or security issues if inaccurate technical guidance is published.

- Regulatory scrutiny: As governments and standards bodies tighten rules around generative AI, demonstrable failures to apply provenance and rights‑respecting practices could attract audits or fines.

- Internal morale and culture: When public assets are produced with little craft or attribution, it can demoralize creators both inside and outside the company who value craftsmanship and proper credit.

Practical recommendations for organizations using generative image tools

To transform this episode from a reputation hit into a teachable moment, organizations should adopt concrete guardrails. Below are pragmatic steps based on best practices in content governance and technical documentation.

- Institute a mandatory provenance field for every published asset that includes:

  - Original author (if any) and license

  - Whether a generative model was used

  - Vendor or tool used and the human reviewer’s name

- Require human‑in‑the‑loop QA for all technical diagrams:

  - A subject‑matter expert must verify semantics (arrows, labels, timelines).

  - A designer must approve alignment and color choices.

- Update vendor contracts to explicitly govern AI use:

  - Vendors must disclose when they use AI and must not claim authorship for transformed third‑party assets.

  - Contracts should include audit rights to inspect vendor prompts and models where legal/feasible.

- Maintain an internal “AI asset registry”:

  - Track where generated images are used so recall or correction can be automated if problems are found.

- Provide prompt and transparent remediation when errors surface:

  - Publicly note corrections and the reason (e.g., “This image was replaced after we discovered it did not meet our provenance standards.”)

- Educate teams about the limits of image generation:

  - Train writers and designers to know when to prefer source reuse over synthetic recreation.
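The provenance field and asset registry recommended above could be modeled roughly as follows. This is a sketch under stated assumptions, not a description of any existing Microsoft system. The class names (`Provenance`, `AssetRegistry`), field names, and blocking rules are all hypothetical and chosen only to mirror the bullet points in the list.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set


@dataclass
class Provenance:
    """Hypothetical provenance record attached to every published asset."""
    original_author: Optional[str]   # None if the asset has no third-party source
    source_license: Optional[str]    # license of the third-party source, if any
    generative_model_used: bool
    tool_or_vendor: str
    human_reviewer: Optional[str]    # person who signed off before publication


def publish_blockers(p: Provenance) -> List[str]:
    """Return the reasons (if any) this asset should not be published yet."""
    problems = []
    if not p.human_reviewer:
        problems.append("no human reviewer recorded")
    if p.original_author and not p.source_license:
        problems.append("third-party source has no license recorded")
    if not p.tool_or_vendor:
        problems.append("no tool or vendor recorded")
    return problems


class AssetRegistry:
    """Tracks which pages use which asset so a bad asset can be recalled."""

    def __init__(self) -> None:
        self._provenance: Dict[str, Provenance] = {}
        self._pages: Dict[str, Set[str]] = {}

    def register(self, asset_id: str, provenance: Provenance, page: str) -> None:
        # Refuse to record (and therefore publish) an asset with open blockers.
        blockers = publish_blockers(provenance)
        if blockers:
            raise ValueError(f"{asset_id} cannot be published: " + "; ".join(blockers))
        self._provenance[asset_id] = provenance
        self._pages.setdefault(asset_id, set()).add(page)

    def pages_using(self, asset_id: str) -> List[str]:
        """Every page that would need a correction if this asset is recalled."""
        return sorted(self._pages.get(asset_id, set()))

    def generated_assets(self) -> List[str]:
        """IDs of all registered assets that involved a generative model."""
        return sorted(a for a, p in self._provenance.items() if p.generative_model_used)
```

In this sketch the gate lives inside `register`, so an asset without a named reviewer or with an unlicensed third-party source never reaches the registry. If an image later turns out to be faulty, `pages_using` gives the list of pages to correct. A real pipeline would also need persistent storage and the human QA steps from the list, which code alone cannot replace.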

What remains unverified and what to watch for

There are important details we do not yet know, and the difference matters for accountability:

- Which specific vendor created the image, what prompts or models they used, and whether they were contractually required to credit source material are not publicly confirmed at this time. Microsoft’s announcement pointed to a vendor but did not publish the vendor’s name or the post‑mortem findings when the initial statements were made. That chain of custody should be clarified in the promised post‑mortem.

- Whether the vendor used an internal, third‑party, or fine‑tuned model trained on proprietary datasets with questionable licensing is unknown. That matters for legal exposure and will be a central focus for any compliance review.

- Finally, we cannot infer systemic intent from a single incident: the initial public messaging treats this as a vendor error rather than an intentional misattribution. That distinction reduces the likelihood of regulatory action for deliberate infringement, but it does not undo the reputational damage or address the underlying root causes. These nuances should be made explicit in Microsoft’s follow‑up investigation.

The cultural dimension: craftsmanship vs. automation

Beyond legalities and controls, this episode touches a deeper cultural fault line in technology: the tension between craftsmanship and automated scale. Driessen’s diagram is an artifact of careful visual design; its clarity is the result of deliberate constraints chosen for a technical audience. When a massive organization substitutes that craftsmanship with a high‑throughput generative approach and the product looks worse for it, the community reaction is visceral.

That reaction matters because developer communities curate the shared artifacts (diagrams, patterns, and idioms) that make engineering teams interoperable. When those artifacts are muddy, the friction in onboarding and education increases. Organizations that treat documentation as a cost center risk degrading communal knowledge; those that treat it as a strategic asset must protect its provenance and quality.

Conclusion

The “continvoucly morged” incident is more than a memeable typo; it’s a compact case study in the governance, technical limits, and cultural friction introduced by generative AI in high‑visibility technical content. Microsoft’s removal of the image and promise of a post‑mortem are the correct immediate steps, but the episode should be a wider alarm bell for corporations: adopt provenance requirements, update vendor contracts to explicitly govern AI use, and preserve human review for any asset that conveys technical relationships.

If companies are serious about scaling their documentation using AI, they must pair that ambition with commensurate investment in policy, tooling, and oversight. Without it, the next viral public‑facing error will be less amusing and more consequential.

Source: Windows Central https://www.windowscentral.com/soft...ontinvoucly-morged-my-diagram-there-for-sure/