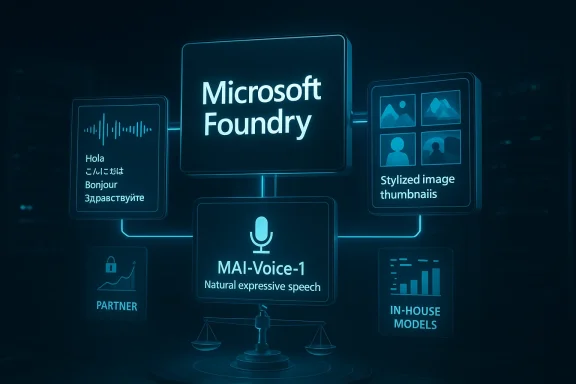

Microsoft’s launch of MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 in public preview is more than a routine model drop. It is a clear signal that Microsoft wants its Foundry stack to become the default place where developers build speech, voice, and image experiences with first-party models. The timing matters too: these models are already powering Microsoft products like Copilot and Bing, but now they are being exposed to developers as a productized platform play rather than a behind-the-scenes capability. Microsoft is also putting a strong emphasis on efficiency, latency, and cost, which suggests the company is competing not just on model quality but on operational economics.

Source: FoneArena.com Microsoft rolls out MAI-Transcribe-1, MAI-Voice-1 and MAI-Image-2 in Foundry public preview

Background

Microsoft has spent the last several years turning AI from a bundle of features into a platform strategy. What began as scattered integrations across Office, Bing, and Azure has evolved into a more unified approach centered on Microsoft Foundry, where models, APIs, and deployment tooling are meant to live under one developer umbrella. The new MAI releases fit neatly into that plan because they cover three of the most commercially important multimodal workloads: transcription, speech synthesis, and image generation.

The important strategic point is that Microsoft is not introducing these as isolated experiments. The company says the models are already used in consumer and enterprise products such as Copilot, Bing, PowerPoint, and Azure Speech, which means Microsoft has had time to battle-test the stack internally. That matters because the AI market has often rewarded vendors that can prove their models work in production-like settings, not just in benchmark demos.

At the same time, Microsoft’s move reflects a broader industry shift. Developers increasingly want model access that is tightly connected to deployment, security, governance, and billing, rather than a loose collection of APIs stitched together by the customer. Foundry is Microsoft’s answer to that demand, and the MAI family gives it a more complete in-house story.

The announcement also arrives during a period when enterprise buyers are asking harder questions about cost per minute, latency per request, and scalability under load. That makes Microsoft’s emphasis on GPU efficiency especially notable. In other words, the company is not only saying these models are good; it is arguing they are practical to run at scale.

Why this launch matters

The MAI family is important because it creates a more coherent stack for voice and visual AI. Instead of relying entirely on third-party model providers, Microsoft can offer developers a first-party path from audio input to speech output to image generation. That lowers friction for teams building agents, assistants, contact-center tools, and creative workflows.

It also strengthens Microsoft’s control over the AI value chain. When a cloud vendor owns the model, the platform, the identity layer, and the surrounding developer tools, it has more leverage over performance tuning, pricing, and enterprise compliance. That is a major competitive advantage in a market where customers want fewer moving parts and more predictable bills.

- Foundry is becoming Microsoft’s primary developer front door for AI.

- The MAI models extend Microsoft’s first-party model strategy into core multimodal tasks.

- Internal adoption inside Copilot and Bing helps validate these models operationally.

- The launch reflects a shift from “AI features” to “AI infrastructure.”

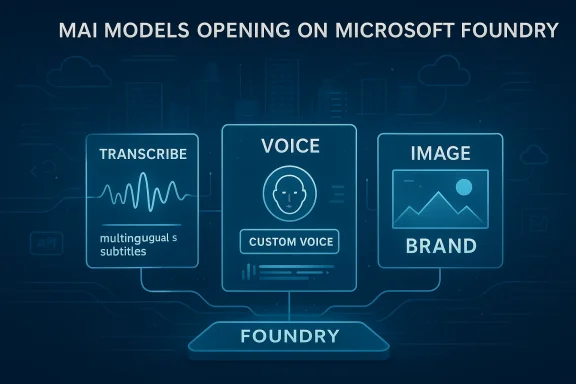

Overview

The three models each target a different part of the multimodal workflow. MAI-Transcribe-1 handles speech recognition, MAI-Voice-1 produces synthetic speech, and MAI-Image-2 generates visuals from text prompts. Together, they form what Microsoft wants developers to see as a unified creative and conversational stack.

That matters because many AI applications are no longer text-only. A support bot may need to transcribe a customer’s voice, summarize the issue, and answer in a natural voice. A marketing tool may need to generate draft copy, speak it aloud, and create supporting imagery. A modern productivity app may need all three. Microsoft is positioning Foundry to cover that whole chain.

The company’s messaging also shows a desire to differentiate from generic model marketplaces. Rather than just saying developers can “access models,” Microsoft is framing the MAI family as a first-party AI stack with cost, latency, and product integration advantages. That is a subtle but meaningful distinction.

The early public preview status is another key detail. Microsoft is giving developers access now, but it is also leaving itself room to refine the models, adjust pricing, and expand support before claiming full production maturity. That is standard for Microsoft previews, yet it also underscores that this is still the beginning of a broader rollout.

The platform angle

The launch is not only about the models themselves. It is also about where the models live, how they are consumed, and how easily they fit into enterprise workflows. By putting them in Foundry and tying voice capabilities into Azure Speech, Microsoft is effectively consolidating usage paths for builders who want a single ecosystem.

That consolidation has market implications. If Microsoft can make its own models easy to discover, cheap to test, and reliable to deploy, developers may default to the Microsoft stack rather than mixing vendors. That would deepen Foundry’s strategic importance and make Microsoft harder to displace in AI infrastructure deals.

- The release is tied to developer platform strategy, not just model R&D.

- Microsoft is aiming for workflow completeness across speech, voice, and image.

- Public preview allows iteration while still capturing developer mindshare.

- The stack is designed to reduce integration complexity for teams.

MAI-Transcribe-1: Efficiency as a differentiator

MAI-Transcribe-1 is the clearest example of Microsoft’s efficiency-first approach. The model is designed for speech recognition workloads and supports 25 languages, with a stated emphasis on handling accents and messy real-world audio. Microsoft says it is built for enterprise transcription use cases such as call centers, voice input, and audio pipelines, which are exactly the kinds of tasks where cost and speed can make or break deployment economics.

Microsoft’s own documentation says the feature is in public preview and not recommended for production workloads yet, but it also describes the model as having a dual focus on accuracy and efficiency. The Learn page lists supported languages and notes that diarization is not currently supported, which is a meaningful limitation for meetings or multi-speaker call-center scenarios. That means the model is promising, but not yet a full replacement for every transcription workflow.

Accuracy, cost, and deployment

The most eye-catching claim is the efficiency story. Microsoft says that, in benchmark comparisons, MAI-Transcribe-1 runs at roughly 50% lower GPU cost than leading alternatives. If that claim holds up in customer environments, it could be a powerful differentiator, especially for businesses processing large volumes of audio.

That cost positioning matters because transcription is often a volume game. Enterprises do not just care whether a model works; they care whether it can transcribe thousands of hours per month without ballooning cloud spend. A lower-cost model can unlock use cases that were previously marginal or too expensive.

- Supports 25 languages.

- Designed for real-world noisy audio.

- Targets enterprise transcription rather than hobbyist demos.

- Claims lower GPU cost than leading alternatives.

- Current preview limitations include no diarization.

Practical enterprise relevance

For enterprises, transcription is often the first step in a larger automation pipeline. Audio can be converted to text, analyzed for sentiment or compliance risk, summarized, routed to a CRM, or handed off to an agent. That means a more efficient transcription model can cascade into lower costs across the entire workflow.

Microsoft is clearly betting that buyers will value predictability as much as raw benchmark performance. In a large deployment, even small efficiency gains can translate into major savings. If MAI-Transcribe-1 performs well on accent-heavy, low-quality, or mixed-language audio, it could become a strong option for global customer-service operations.
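To make the "first step in a larger pipeline" idea concrete, here is a minimal Python sketch of a transcribe, summarize, and speak chain. It does not call any Microsoft API: `handle_call`, `CallResult`, and the three stage types are hypothetical names introduced for illustration, and each stage is a plain callable so that a real SDK client or a test double could be plugged in.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stage signatures; a real integration would wrap vendor SDK calls.
Transcriber = Callable[[bytes], str]   # audio bytes -> transcript text
Summarizer = Callable[[str], str]      # transcript -> short summary
Synthesizer = Callable[[str], bytes]   # reply text -> audio bytes


@dataclass
class CallResult:
    """Everything one pass through the pipeline produced."""
    transcript: str
    summary: str
    reply_audio: bytes


def handle_call(
    audio: bytes,
    transcribe: Transcriber,
    summarize: Summarizer,
    synthesize: Synthesizer,
) -> CallResult:
    """Run the three stages in order and keep the intermediate outputs."""
    transcript = transcribe(audio)
    summary = summarize(transcript)
    reply_audio = synthesize(summary)
    return CallResult(transcript=transcript, summary=summary, reply_audio=reply_audio)


if __name__ == "__main__":
    # Stand-in stages so the sketch runs without any network access.
    result = handle_call(
        b"raw-audio-bytes",
        transcribe=lambda audio: "my order arrived damaged and I want a refund",
        summarize=lambda text: "Customer requests refund for damaged order.",
        synthesize=lambda text: text.encode("utf-8"),
    )
    print(result.summary)
```

Keeping the transcript and summary alongside the audio is a deliberate choice in this sketch: the intermediate text is what downstream steps such as sentiment analysis or CRM routing would consume.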

MAI-Voice-1: Fast speech synthesis for real-time applications

MAI-Voice-1 is Microsoft’s speech generation model, and it is aimed squarely at real-time, conversational experiences. The company says it can generate up to 60 seconds of audio in under one second on a single GPU, which is an attention-grabbing claim because latency is one of the biggest barriers to natural voice agents. The lower the delay, the more human the interaction feels.

This model is clearly intended for voice assistants, interactive agents, and content-generation tools. Microsoft describes it as natural and expressive, which is important because generic synthetic voices can still sound flat, robotic, or emotionally disconnected. In consumer products, that can hurt engagement. In enterprise tools, it can reduce user trust.
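The "60 seconds of audio in under one second" claim can be read as a real-time factor (RTF), the ratio of synthesis time to audio duration. The Python sketch below works through that arithmetic; the function names and the 0.3-second turn budget are assumptions made for illustration, and the calculation ignores network, queueing, and streaming effects that a real deployment would add.

```python
def real_time_factor(synthesis_seconds: float, audio_seconds: float) -> float:
    """Synthesis time divided by audio duration; below 1.0 is faster than real time."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return synthesis_seconds / audio_seconds


def fits_turn_budget(reply_audio_seconds: float, rtf: float, budget_seconds: float) -> bool:
    """True if synthesizing the whole reply would finish within the latency budget."""
    return reply_audio_seconds * rtf <= budget_seconds


if __name__ == "__main__":
    # Upper bound implied by the claim: 1 second of compute for 60 seconds of audio.
    rtf = real_time_factor(synthesis_seconds=1.0, audio_seconds=60.0)
    print(f"RTF at the claimed bound: {rtf:.4f}")
    # A short spoken confirmation versus a full minute of narration,
    # checked against a hypothetical 0.3-second budget per conversational turn.
    print(fits_turn_budget(reply_audio_seconds=6.0, rtf=rtf, budget_seconds=0.3))
    print(fits_turn_budget(reply_audio_seconds=60.0, rtf=rtf, budget_seconds=0.3))
```

Under these assumptions, short replies fit comfortably inside a conversational turn, while long-form narration would still need streaming or a looser budget.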

Why latency is the real battleground

The voice market is becoming increasingly competitive, and latency is one of the few things users immediately notice. If a system hesitates too long after a prompt, the experience feels broken even if the underlying answer is correct. Microsoft’s focus on rapid generation suggests it wants voice to feel as immediate as chat.

That speed also opens doors for richer agent behavior. A fast model can support back-and-forth dialogue, brief confirmations, spoken summaries, and live coaching without long pauses. It can also be paired with transcription to create a complete speech loop inside a Microsoft-hosted stack.

- Designed for low-latency voice responses.

- Can generate long-form audio rapidly.

- Fits conversational agents and voice assistants.

- Useful for audio content generation and narration.

- Strengthens Microsoft’s end-to-end voice pipeline.

Consumer and enterprise implications

On the consumer side, this could improve Copilot-style voice experiences and make voice interaction more central to Windows and productivity apps over time. On the enterprise side, it matters for customer support, training, and internal knowledge systems where spoken answers can reduce friction.

It also hints at a broader platform ambition: voice should not be a special feature bolted onto an app; it should be a native interaction mode. If Microsoft can make voice generation feel cheap and instant, more developers will design for spoken interfaces from the start rather than treating them as an afterthought.

MAI-Image-2: Microsoft’s creative model gets sharper

MAI-Image-2 is Microsoft’s new text-to-image model, and the emphasis here is on visual fidelity, prompt adherence, and better text rendering inside generated images. That combination is especially relevant for enterprise users, because many business graphics fail when they need labels, charts, packaging, or layout-heavy compositions. A model that handles those better has immediate practical value.

Microsoft says the model was trained with input from designers, photographers, and visual storytellers, which suggests a more curated creative direction than a purely scale-at-all-costs approach. The company also points to strong benchmark performance, including a #3 debut on the Arena.ai leaderboard for image model families. That is not the final word on quality, but it is a useful signal that the model is competitive.

Why text rendering still matters

One of the most persistent problems in image generation is readable text. Posters, mockups, slides, and product visuals often break down because generated lettering becomes garbled or inconsistent. If MAI-Image-2 improves that area, it becomes much more useful for real work, not just eye-catching novelty.

That is where Microsoft’s enterprise audience comes in. Workers do not merely want pretty images; they want usable images. Product teams, marketers, and internal communications teams care about layout, legibility, and brand alignment as much as style.

- Focuses on photorealism and structured visuals.

- Improves text handling in generated images.

- Suited to marketing, design, and product visualization.

- Trained with creative-professional input.

- Already integrated into Microsoft products like Copilot and PowerPoint.

Competitive positioning in image AI

The image-generation market is crowded, but Microsoft’s advantage may lie in distribution rather than novelty. By embedding MAI-Image-2 into tools like PowerPoint and Bing Image Creator, Microsoft can turn casual users into active AI users without asking them to adopt a separate application. That is a classic Microsoft move: win through workflow placement.

Enterprise partner adoption, including creative workflows with WPP, also matters because it helps validate the model in professional settings. When a large agency publicly experiments with a tool, it can influence other buyers who care about production readiness and brand control.

Foundry and Azure Speech: The distribution layer matters

Microsoft is making a deliberate distinction between general developer access and speech-specific deployment. The models are available in Foundry, with additional integration for voice through Azure Speech. That dual route is important because it suggests Microsoft wants developers to choose between experimentation in Foundry and more operational speech deployments in Azure.

According to Microsoft Learn, MAI-Transcribe-1 is available in Azure Speech preview through the LLM Speech API, and the documentation explicitly says the preview comes without an SLA and is not recommended for production workloads. That kind of wording is routine, but it also tells enterprises exactly where Microsoft thinks the model is on the maturity curve. It is ready for evaluation, not yet for mission-critical dependence.

Why multiple access paths matter

Different teams build differently. Some want a playground to test prompts and workflows. Others want APIs that drop directly into production apps. Still others need regional deployment, service keys, or governance hooks tied to Azure. Microsoft is trying to satisfy all of them without fragmenting the experience too much.

That is a subtle advantage over companies that have strong models but weaker enterprise plumbing. The easier it is to move from testing to production, the more likely a developer is to stay inside the ecosystem. That is where Foundry can become sticky.

- Playground for testing and experimentation.

- APIs for application and agent development.

- Azure Speech for voice-related deployment.

- Preview status means limited production guarantees.

- Microsoft is offering a graduated adoption path.

Enterprise governance and control

For enterprises, platform coherence is not just a convenience; it is a risk-management feature. Centralized access, resource controls, and policy alignment make procurement easier and reduce the number of places where sensitive data may be exposed. Microsoft has long sold its cloud story on exactly that idea.

The MAI launch reinforces that approach. If businesses can keep transcription, speech, and image generation within Microsoft’s trust boundary, they may be less inclined to stitch together multiple vendors. That could be especially attractive for regulated industries that need tighter control over data flows and auditability.

Pricing, economics, and the real competitive fight

Microsoft’s published pricing is likely to get a lot of attention because the company is clearly trying to make the economics compelling. The announced starting points are $0.36 per hour for MAI-Transcribe-1, $22 per 1 million characters for MAI-Voice-1, and $5 per 1 million text-input tokens plus $33 per 1 million image-output tokens for MAI-Image-2. Those numbers suggest Microsoft wants to undercut or at least closely match alternatives while highlighting efficiency gains.

Pricing, however, is only part of the story. In AI, the effective cost of adoption includes integration effort, model tuning, latency, governance, and support. Microsoft appears to be betting that a tightly integrated stack will be worth more than a slightly cheaper standalone model from a competitor.

What the pricing signals

The pricing structure also reveals how Microsoft thinks about usage patterns. Transcription is metered by audio time, voice synthesis by characters, and image generation by input and output tokens. That variety reflects the different compute profiles of each workload and indicates Microsoft is aiming for a more granular, enterprise-friendly billing model.

The strategic question is whether these prices will remain attractive as usage scales. Early preview pricing is often designed to encourage experimentation, so enterprises should assume the economics may shift as the product matures. That is normal, but it means budgeting teams should watch closely.

- Transparent preview pricing encourages trial use.

- Different workload types get different billing meters.

- Efficiency claims may be used to justify long-term adoption.

- Competitive pressure will likely shape later pricing adjustments.

- Cost predictability may matter more than sticker price.
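Because each model uses a different billing meter, a rough monthly estimate has to add up three separate calculations. The Python sketch below does that with the preview prices quoted in this article; the `MonthlyUsage` fields, the function name, and the sample volumes are hypothetical, and the prices themselves may change before general availability.

```python
from dataclasses import dataclass

# Preview list prices as quoted in this article (USD); subject to change.
TRANSCRIBE_USD_PER_AUDIO_HOUR = 0.36
VOICE_USD_PER_MILLION_CHARS = 22.0
IMAGE_USD_PER_MILLION_INPUT_TOKENS = 5.0
IMAGE_USD_PER_MILLION_OUTPUT_TOKENS = 33.0


@dataclass
class MonthlyUsage:
    """Hypothetical monthly workload; field names are illustrative only."""
    audio_hours: float = 0.0
    voice_characters: int = 0
    image_input_tokens: int = 0
    image_output_tokens: int = 0


def estimate_monthly_cost(usage: MonthlyUsage) -> dict[str, float]:
    """Return a per-meter cost breakdown in USD, rounded to cents."""
    transcribe = usage.audio_hours * TRANSCRIBE_USD_PER_AUDIO_HOUR
    voice = usage.voice_characters / 1_000_000 * VOICE_USD_PER_MILLION_CHARS
    image = (
        usage.image_input_tokens / 1_000_000 * IMAGE_USD_PER_MILLION_INPUT_TOKENS
        + usage.image_output_tokens / 1_000_000 * IMAGE_USD_PER_MILLION_OUTPUT_TOKENS
    )
    breakdown = {"transcribe": transcribe, "voice": voice, "image": image}
    breakdown["total"] = sum(breakdown.values())
    return {meter: round(cost, 2) for meter, cost in breakdown.items()}


if __name__ == "__main__":
    # Made-up volumes for a mid-sized contact-center and marketing workload.
    usage = MonthlyUsage(
        audio_hours=5_000,
        voice_characters=20_000_000,
        image_input_tokens=2_000_000,
        image_output_tokens=10_000_000,
    )
    for meter, cost in estimate_monthly_cost(usage).items():
        print(f"{meter:>10}: ${cost:,.2f}")
```

An estimate like this covers only metered model usage. It leaves out the integration, governance, and support costs that the article argues are a large part of the real bill.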

Competing with the rest of the market

The deeper competition here is not just with one vendor. It is with a whole class of AI platforms that offer separate tools for transcription, voice, and images. Microsoft is trying to collapse those categories into one cohesive proposition, which can be powerful if it works well enough.

If developers believe they can get comparable quality with fewer vendors and lower total infrastructure costs, Microsoft may win even where it is not obviously the “best” single model provider. That is the kind of advantage cloud incumbents like to build: not a single brilliant feature, but a system that is good enough everywhere and excellent where integration matters most.

The broader strategic bet on first-party AI

The most important implication of the MAI rollout is that Microsoft is increasingly comfortable being both the platform owner and the model creator. That dual role gives it more control, but it also raises expectations. If Microsoft makes the models, then customers will compare them not just to the market, but to Microsoft’s own claims about performance and efficiency.

This is a strong play because it can reduce dependency on external model providers while improving product differentiation across Microsoft 365, Copilot, Bing, and Azure. It also lets Microsoft tune models for its own ecosystem in ways that third-party APIs may not allow. The company can optimize for its products first, then expose that optimization to developers.

Internal leverage becomes external value

When Microsoft says the models are already used internally, that gives the company a credibility boost. Internal usage implies the models must satisfy real product constraints: scale, reliability, moderation, and cost. That can reassure enterprise buyers who worry that preview AI models are merely academic demonstrations.

At the same time, internal usage creates a feedback loop. Microsoft can gather operational data from its own products, improve the models, and then distribute those improvements to developers. That is a classic platform advantage and one that rivals will struggle to match unless they have similarly broad product surfaces.

- Microsoft can optimize models for its own product ecosystem.

- Internal usage provides a real-world validation loop.

- Developers benefit from improvements tested at Microsoft scale.

- The company deepens its moat across cloud and productivity.

- First-party models reduce dependence on external providers.

Strengths and Opportunities

The strongest part of this launch is that it aligns product, platform, and infrastructure in one move. Microsoft is not just releasing three models; it is defining an architecture for how developers can build next-generation voice and image experiences inside the company’s stack. That creates both immediate utility and longer-term strategic leverage.

The opportunity is especially large in enterprise workflows, where cost, governance, and integration matter more than flashy demos. Microsoft can win by making these models easy to adopt, cheap enough to scale, and tightly connected to existing tools. If it does that well, Foundry could become a default destination for multimodal AI builders.

- Unified developer experience across transcription, voice, and image.

- Strong fit for enterprise automation and contact-center scenarios.

- Potential for lower operating costs through efficiency gains.

- Better workflow integration with Copilot, Bing, PowerPoint, and Azure Speech.

- First-party control over model quality and roadmap.

- Attractive for teams that want fewer vendors and simpler governance.

- Stronger positioning for real-time voice agents.

Risks and Concerns

The biggest caution is that public preview does not equal production readiness. Microsoft’s own documentation makes clear that at least some of these features are preview-only and may have limited capabilities or missing functions, such as diarization. Enterprises that rush in too early could find themselves depending on capabilities that are not yet complete enough for real workloads.

There is also the risk of overpromising on benchmarks and efficiency. Claims about cost and speed are useful, but buyers will care about actual results in their own environments. If real-world audio quality, multilingual edge cases, or creative consistency fall short, the excitement could fade quickly.

- Preview status means no SLA and limited production guarantees.

- Some workflows still lack important features like diarization.

- Efficiency claims need validation in customer environments.

- Voice and image safety concerns will remain a live issue.

- Pricing may change as preview converts to general availability.

- Competitive pressure could force Microsoft to revise positioning.

- Enterprises may hesitate until compliance and governance are clearer.

Looking Ahead

Microsoft’s next challenge is execution. The company has shown it can identify high-value AI categories and package them into a platform story, but the real test is how fast these models mature and how consistently they perform across diverse workloads. Developers will quickly move from curiosity to skepticism if the models are impressive in demos but uneven in production.

What will matter most is whether Microsoft can keep the stack coherent while improving each model’s specialization. A strong transcription model, a fast voice engine, and a capable image generator are useful on their own, but the bigger prize is a seamless multimodal pipeline that feels like one product. That is the vision Microsoft is selling, and the industry will now judge how much of that vision survives contact with real deployment.

- Watch for general availability timelines.

- Monitor whether diarization and other missing features arrive.

- Track whether pricing remains stable after preview.

- Compare real-world performance against rival speech and image models.

- Look for deeper integration into Copilot and Microsoft 365.

- Pay attention to enterprise case studies, especially in support and creative workflows.