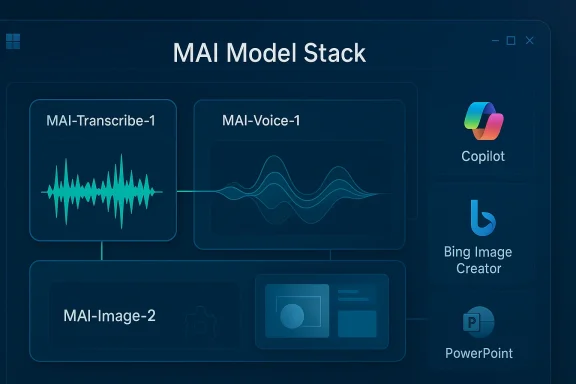

Microsoft’s latest MAI rollout is bigger than a product update, and smaller than the breathless “AI domination” framing making the rounds. What the company has actually done is introduce MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 as first-party models inside Microsoft Foundry, with immediate ties to Azure Speech, Copilot, Bing Image Creator, and PowerPoint. That matters because it marks a more aggressive vertical integration strategy: Microsoft is no longer just a platform hosting other people’s models, but a model maker pushing its own stack deeper into everyday productivity software. (techcommunity.microsoft.com)

Source: IT Voice Media Pvt. Ltd. https://www.itvoice.in/microsoft-unveils-triple-threat-new-mai-models-to-challenge-ai-dominance/

Overview

The announcement is best understood as the next step in a long transition that began when Microsoft made its AI bet not just about features, but about control. For the past two years, the company has leaned heavily on partner models while building the surrounding cloud, security, and app plumbing. That approach delivered speed, but it also left Microsoft exposed to pricing pressure, dependency risk, and the strategic reality that the most important layer of the AI stack could remain outside its direct control.

Now the company is tightening that loop. Microsoft says MAI-Transcribe-1 is built in-house by the Microsoft AI Superintelligence team, MAI-Voice-1 comes from Microsoft’s own speech foundation models, and MAI-Image-2 is a Microsoft AI model with a release date of April 2, 2026. The models are being positioned not as experimental curiosities, but as commercial tools for transcription, narration, design, accessibility, customer support, and internal productivity. (ai.azure.com)

That positioning is important because the product story is not only about benchmark bragging rights. Microsoft is also making a platform argument: if you can run the models inside the same environment that already hosts enterprise identity, compliance, storage, and workflow automation, you reduce operational friction for customers and create a more durable business moat for Microsoft. In other words, the models are part of a much larger control strategy. (techcommunity.microsoft.com)

The timing also fits Microsoft’s broader hardware-software push. In January, the company unveiled Maia 200, its first-party inference accelerator, and described it as part of a heterogeneous AI infrastructure used across Microsoft Foundry and Microsoft 365 Copilot. That suggests the MAI models are not standalone vanity projects; they sit inside a broader plan to optimize inference economics from silicon to software.

What Microsoft Actually Announced

At the center of the story is a trio of models with very different jobs. MAI-Transcribe-1 is a speech-to-text system for batch transcription and related voice workflows. MAI-Voice-1 is a neural text-to-speech model aimed at expressive, conversational audio. MAI-Image-2 is a text-to-image generator built for creative and design scenarios, with a clear emphasis on photorealism and prompt adherence. (ai.azure.com)

The company’s own descriptions make the strategic split obvious. Transcribe is about accuracy, language coverage, noise tolerance, and cost efficiency. Voice is about speed, persona consistency, and natural delivery. Image is about creative fidelity, layout awareness, and production-quality visual output. This is not a one-model-fits-all push; it is an attempt to own the most commercially valuable slices of the audio and image market. (techcommunity.microsoft.com)

A platform release, not just a feature drop

Microsoft is offering the models through Foundry and, for the audio models, through Azure Speech in public preview. The company is also inviting users into the MAI Playground and making explicit references to enterprise deployment and SDK use. That means the release is aimed at builders first and end users second, even if consumer-facing products will likely benefit quickly. (techcommunity.microsoft.com)

The practical effect is that Microsoft can test adoption in controlled enterprise environments while rapidly dogfooding the models in its own products. According to Microsoft, MAI-Voice-1 already powers Copilot Audio Expressions, while MAI-Transcribe-1 powers Copilot Voice Mode transcriptions and dictation. MAI-Image-2 is also being used in Copilot, Bing Image Creator, and PowerPoint. (techcommunity.microsoft.com)

- MAI-Transcribe-1 focuses on speech recognition across 25 languages.

- MAI-Voice-1 emphasizes expressive and conversational speech generation.

- MAI-Image-2 targets creative and design workflows with photorealistic output.

- All three are now part of Microsoft’s first-party AI product story. (techcommunity.microsoft.com)

Why This Matters Strategically

The real story is Microsoft’s desire to reduce its long-term dependency on external model providers. For a company that controls Windows, Microsoft 365, Azure, and a fast-growing enterprise AI platform, model dependence is both a cost issue and a strategic vulnerability. Owning more of the model stack gives Microsoft more leverage over pricing, roadmaps, safety policy, and product integration. (techcommunity.microsoft.com)

That is especially important in enterprise software, where customers increasingly ask how much of their AI usage is going to third parties and how much is running under the cloud vendor’s own governance. Microsoft can now answer with a much stronger “first-party” story. It can also claim tighter performance optimization, lower latency, and better alignment between the models and the Azure infrastructure that serves them. (techcommunity.microsoft.com)

Control of the stack matters

Owning the full stack lets Microsoft optimize from several angles at once. It can tune deployment economics, adjust model behavior for enterprise workflows, and integrate safety controls more tightly into the service layer. It can also use the same underlying infrastructure to serve both customer-facing AI and its own internal copilots. (techcommunity.microsoft.com)

That is a meaningful competitive shift because it reduces the ability of rivals to define the user experience. If the model, the runtime, the cloud, and the app all belong to Microsoft, then Microsoft can bundle value in ways that are difficult for independent model vendors to match. The product becomes less a marketplace of interchangeable APIs and more a managed operating environment.

- Better cost predictability for enterprise buyers

- Tighter alignment with Microsoft compliance tooling

- More room for product bundling

- Less exposure to partner roadmap changes

- Stronger differentiation in Microsoft 365 and Copilot

MAI-Transcribe-1 and the Enterprise Voice Stack

Among the three models, MAI-Transcribe-1 may have the clearest business case. Microsoft says it is designed for reliable transcription across 25 languages and is robust against accents, dialects, and noisy environments. The company also describes it as efficient, claiming competitive accuracy at nearly half the GPU cost of leading alternatives. (techcommunity.microsoft.com)

That combination matters because transcription is an infrastructure workload masquerading as a feature. Enterprises need it for meetings, compliance, call centers, accessibility, and content indexing. If Microsoft can offer lower compute cost and predictable scaling, it can turn speech recognition into a high-volume platform service rather than a commodity add-on. (techcommunity.microsoft.com)
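To make the “nearly half the GPU cost” claim concrete, here is a back-of-the-envelope sketch. Every input in it (the monthly audio volume, GPU-seconds per audio hour, and price per GPU-hour) is a hypothetical placeholder rather than a Microsoft figure, and `monthly_gpu_cost` is an illustrative helper, not part of any SDK. Only the 0.5 ratio echoes the company’s claim, and since the claim says “nearly” half, the real ratio is presumably a little above 0.5.

```python
# Back-of-the-envelope GPU cost model for a batch transcription workload.
# All inputs below are hypothetical placeholders, not published figures.

def monthly_gpu_cost(audio_hours: float,
                     gpu_seconds_per_audio_hour: float,
                     gpu_price_per_hour: float) -> float:
    """Return the GPU spend (in the currency of gpu_price_per_hour)
    needed to transcribe `audio_hours` of audio in a month."""
    gpu_hours = audio_hours * gpu_seconds_per_audio_hour / 3600.0
    return gpu_hours * gpu_price_per_hour


# Hypothetical enterprise workload: 100,000 hours of calls and meetings a month.
AUDIO_HOURS = 100_000
BASELINE_GPU_SECONDS = 36.0   # assumed GPU time per audio hour for a baseline model
GPU_PRICE = 2.0               # assumed price per GPU-hour
COST_RATIO = 0.5              # "nearly half" the GPU cost, taken at face value

baseline = monthly_gpu_cost(AUDIO_HOURS, BASELINE_GPU_SECONDS, GPU_PRICE)
candidate = monthly_gpu_cost(AUDIO_HOURS, BASELINE_GPU_SECONDS * COST_RATIO, GPU_PRICE)

print(f"baseline:  {baseline:,.2f}")
print(f"candidate: {candidate:,.2f}")
print(f"savings:   {baseline - candidate:,.2f}")
```

Because cost scales linearly with audio volume, the absolute saving grows with the workload, which is why a per-hour efficiency gain matters most to call centers and meeting-heavy organizations.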

Productivity is the first wedge

Microsoft is already tying transcription to familiar scenarios: video captions, meeting transcription, accessibility tools, call analysis, content creation, and voice agents. That means the model is not just for new AI-native apps; it can also slot into existing workflows that enterprises already pay to support. The adoption path is likely to be much smoother than with experimental standalone AI tools. (ai.azure.com)

The model’s broad language support is another important signal. Microsoft’s published list includes English, French, German, Italian, Spanish, Hindi, Portuguese, Czech, Danish, Finnish, Hungarian, Dutch, Polish, Romanian, Swedish, Japanese, Korean, Chinese, Arabic, Indonesian, Russian, Thai, Turkish, and Vietnamese. That multilingual coverage makes it especially relevant for global firms that cannot rely on a single-language transcription pipeline. (ai.azure.com)

- Meeting notes and action-item generation

- Call-center QA and analytics

- Accessibility captions and transcripts

- Media archiving and search

- Dictation and voice-agent backends
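For a global pipeline, the practical question is whether a recording’s language is on the supported list before it is sent to the model. The sketch below assumes the language names Microsoft lists for MAI-Transcribe-1 in the paragraph above; `route_transcription`, `SUPPORTED_LANGUAGES`, and the `fallback-transcriber` name are hypothetical, and a real deployment would key on locale codes from the service documentation rather than English language names.

```python
# Route recordings to MAI-Transcribe-1 only when their language is on the
# supported list. Language names are used for readability; a production
# system would use locale codes from the service documentation instead.

SUPPORTED_LANGUAGES = frozenset({
    "English", "French", "German", "Italian", "Spanish", "Hindi",
    "Portuguese", "Czech", "Danish", "Finnish", "Hungarian", "Dutch",
    "Polish", "Romanian", "Swedish", "Japanese", "Korean", "Chinese",
    "Arabic", "Indonesian", "Russian", "Thai", "Turkish", "Vietnamese",
})

PRIMARY_MODEL = "MAI-Transcribe-1"
FALLBACK_MODEL = "fallback-transcriber"  # hypothetical placeholder


def route_transcription(language: str) -> str:
    """Return the model name a hypothetical pipeline would use for `language`."""
    # Normalize whitespace and capitalization so "french" and "French" match.
    if language.strip().title() in SUPPORTED_LANGUAGES:
        return PRIMARY_MODEL
    return FALLBACK_MODEL


for lang in ["French", "vietnamese", "Swahili"]:
    print(lang, "->", route_transcription(lang))
```

Keeping the list as data rather than scattered conditionals makes it easy to update as coverage changes during the preview.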

MAI-Voice-1 and the New Audio Economy

MAI-Voice-1 is the flashier product, but its significance is more subtle than “human-sounding voice.” Microsoft says it can generate 60 seconds of expressive audio in under one second on a single GPU, and its Learn documentation describes it as a neural text-to-speech model with stable voice persona quality and style controls via SSML. That is the kind of capability that can quietly reshape customer support, narration, learning content, and conversational agents. (techcommunity.microsoft.com)

The model is especially interesting because Microsoft is not merely seeking naturalness; it is seeking consistency. A branded voice that can speak warmly and adapt emotion without drifting in identity is far more useful to enterprise buyers than a generic “realistic” voice. Consistency is what lets a company deploy voice at scale across products, regions, and use cases without making the experience feel fragmented. (learn.microsoft.com)
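The speed claim is easier to compare across vendors when expressed as a real-time factor (RTF): processing time divided by the duration of audio produced, where values below 1 mean faster than playback. The sketch applies that standard definition to Microsoft’s stated figure of 60 seconds of audio in under one second. Because the claim is a bound (“under one second”), the results are bounds too, and nothing here says how throughput holds up under concurrent load on the same GPU.

```python
# Real-time factor (RTF) = processing time / duration of audio produced.
# RTF < 1 means audio is generated faster than it plays back.

def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


# Microsoft's stated figure: 60 s of audio in under 1 s on a single GPU.
# Plugging in 1.0 s gives an upper bound on RTF and a lower bound on speed-up.
rtf_bound = real_time_factor(processing_seconds=1.0, audio_seconds=60.0)
speedup_bound = 1.0 / rtf_bound

print(f"RTF < {rtf_bound:.4f}")
print(f"faster than real time by more than {speedup_bound:.0f}x")
```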

From narration to interaction

Microsoft says MAI-Voice-1 is optimized for conversational, expressive, and long-form scenarios, and it supports six prebuilt English voices. That is a narrower language scope than transcription, but the quality target is different: it is designed to sound polished, controlled, and emotionally adaptable rather than merely intelligible. (learn.microsoft.com)

The enterprise implications are obvious. Companies can use it for product explainers, e-learning modules, accessibility narration, virtual assistants, and brand-specific voice experiences. In the consumer layer, the model can make Copilot feel less like a chatbot reading from a script and more like a polished assistant that has a recognizable personality. (techcommunity.microsoft.com)

- More natural customer-service automation

- Faster creation of narrated training content

- Better accessibility for visually impaired users

- Branded voice consistency across touchpoints

- Lower production overhead than studio voice work
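Microsoft’s documentation says MAI-Voice-1 exposes style controls via SSML. The sketch below builds a minimal SSML document with Python’s standard library; the `speak` root, namespace, and `xml:lang` attribute follow the W3C SSML 1.0 format that Azure Speech accepts, but the voice name is a hypothetical placeholder (the six prebuilt voice identifiers are not given here), and using `<prosody>` as the style control is an assumption. Check the MAI-Voice-1 documentation for which elements and style values it actually honors.

```python
import xml.etree.ElementTree as ET

SSML_NS = "http://www.w3.org/2001/10/synthesis"
XML_LANG = "{http://www.w3.org/XML/1998/namespace}lang"

# Hypothetical placeholder: substitute one of the documented MAI-Voice-1 voices.
VOICE_NAME = "en-US-ExampleMAIVoice"

# Serialize SSML elements without a prefix (default namespace).
# Note: this updates ElementTree's global namespace registry.
ET.register_namespace("", SSML_NS)


def build_ssml(text: str, voice: str = VOICE_NAME, rate: str = "medium",
               pitch: str = "medium", lang: str = "en-US") -> str:
    """Return an SSML string wrapping `text` in voice and prosody elements.

    ElementTree escapes characters such as '&' and '<' in `text`,
    so user-supplied narration cannot break the markup.
    """
    speak = ET.Element(f"{{{SSML_NS}}}speak", {"version": "1.0", XML_LANG: lang})
    voice_el = ET.SubElement(speak, f"{{{SSML_NS}}}voice", {"name": voice})
    prosody = ET.SubElement(voice_el, f"{{{SSML_NS}}}prosody",
                            {"rate": rate, "pitch": pitch})
    prosody.text = text
    return ET.tostring(speak, encoding="unicode")


print(build_ssml("Welcome to Q3 onboarding & training.", rate="slow"))
```

Generating SSML through an XML library rather than string formatting is the design choice worth keeping: it guarantees well-formed markup even when narration text comes from documents or user input.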

MAI-Image-2 and the Visual Productivity Push

MAI-Image-2 is Microsoft’s most obviously competitive creative model, and it arrives in a crowded field where image quality alone is no longer enough. Microsoft says it is optimized for high-quality, visually rich images from text prompts, with particular strength in photorealistic synthesis, visual diversity, and professional-grade output. The company also claims it debuted as a top-three text-to-image model family on the Arena.ai leaderboard. (ai.azure.com)

That matters because Microsoft is clearly trying to move image generation from novelty toward workflow utility. Strong prompt adherence, better text rendering inside images, and more coherent layouts can make the model useful for marketing, product design, internal communications, and rapid concept visualization. Those are enterprise tasks, not just consumer amusements. (techcommunity.microsoft.com)

Creative workflows are the real battleground

Microsoft says MAI-Image-2 is designed for creative ideation, enterprise communications, and UX/product concept visualization. That speaks directly to the white-collar workplace, where teams often need fast visual drafts more than final art. If the model shortens the path from idea to presentation slide, it has immediate value across Microsoft 365. (techcommunity.microsoft.com)

The company’s WPP partnership is a notable signal because it shows Microsoft trying to prove the model in real production environments, not just controlled demos. That is important in image generation, where the gap between benchmark output and campaign-ready output can be enormous. Professional usefulness is the harder prize. (techcommunity.microsoft.com)

- Concept art and mood boards

- Internal branding and executive presentations

- Product mockups and interface ideation

- Marketing assets and campaign drafts

- Visual explainers and training content

Microsoft’s Competitive Position Against OpenAI and Google

The headline comparison is inevitable, but it should be handled carefully. Microsoft is not really trying to out-OpenAI OpenAI in the abstract, nor out-Google Google in the broadest sense. It is trying to make its own ecosystem harder to leave by embedding first-party models into the places where work already happens. (techcommunity.microsoft.com)

That is a more pragmatic competitive strategy than a pure “best model wins” contest. OpenAI still has the brand advantage in frontier AI mindshare, and Google remains deeply entrenched in search, cloud, and multimodal research. Microsoft’s advantage is distribution: if MAI models become the default inside Copilot, Foundry, Windows-adjacent workflows, and Office apps, then model quality matters, but ecosystem convenience matters too.

A fragmentation story, not a winner-take-all story

The AI market is starting to look less like a single race and more like a set of specialized lanes. Microsoft’s new models reinforce that direction because they are differentiated by use case rather than built as one universal umbrella model. That can be a strength, because enterprises often want reliable task-specific systems more than one gigantic general-purpose engine. (ai.azure.com)

It also means Microsoft can avoid some of the “all or nothing” pressure that comes with frontier-model prestige battles. If MAI-Transcribe-1 is the best choice for transcription and MAI-Voice-1 is the best choice for branded speech, Microsoft can win on workflow value even if it is not always the most talked-about lab in the room. (ai.azure.com)

- Competition is shifting from single-model supremacy to workflow specialization

- Distribution inside Microsoft products remains a major moat

- Enterprise buyers will care more about cost and governance than hype

- OpenAI and Google still set the pace on visibility

- Microsoft is trying to win through integration, not spectacle
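The “specialized lanes” idea maps naturally onto a routing layer that sends each request to a task-specific model instead of one general-purpose engine. A minimal sketch follows; the three model names come from the announcement, but the `Task` enum, the dispatch table, `pick_model`, and `other-image-model` are hypothetical illustrations, not a Microsoft API.

```python
from enum import Enum
from typing import Mapping, Optional


class Task(Enum):
    TRANSCRIBE = "speech-to-text"
    NARRATE = "text-to-speech"
    GENERATE_IMAGE = "text-to-image"


# Hypothetical dispatch table: one specialized model per workload lane.
MODEL_FOR_TASK = {
    Task.TRANSCRIBE: "MAI-Transcribe-1",
    Task.NARRATE: "MAI-Voice-1",
    Task.GENERATE_IMAGE: "MAI-Image-2",
}


def pick_model(task: Task, overrides: Optional[Mapping[Task, str]] = None) -> str:
    """Return the model for `task`, letting a caller override any lane.

    Overrides cover the case where an organization keeps a different vendor
    for one workload while standardizing the rest. The default table is
    copied, never mutated.
    """
    table = {**MODEL_FOR_TASK, **(overrides or {})}
    return table[task]


print(pick_model(Task.TRANSCRIBE))
print(pick_model(Task.GENERATE_IMAGE, overrides={Task.GENERATE_IMAGE: "other-image-model"}))
```

The override hook is the part worth noticing: it is where the marketplace of interchangeable APIs survives inside a platform that otherwise nudges each lane toward a single vendor.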

Consumer Impact vs Enterprise Impact

For consumers, the most immediate effect will probably be experience quality. If Copilot sounds better, captions become more accurate, and image generation becomes more useful inside apps people already use, the value shows up as convenience rather than a headline feature. Most users will not care which model is under the hood if the output is better and the workflow feels smoother. (techcommunity.microsoft.com)

For enterprises, the implications are larger and more structural. A first-party model stack can reduce procurement complexity, improve security narratives, and make it easier to standardize AI across departments. That is especially attractive for organizations that have been hesitant to lean too heavily on external APIs with unclear long-term economics. (techcommunity.microsoft.com)

Different buyers, different metrics

Consumers will judge these models by usefulness, speed, and how often they quietly disappear into normal workflows. Enterprises will judge them by compliance, cost, multilingual support, reliability, and whether Microsoft can integrate them into existing administrative controls. Those are not the same market tests, and Microsoft appears to understand that. (learn.microsoft.com)

That split also explains why Microsoft is making such a point of mentioning Azure Speech, Foundry, and preview access. It wants developers and IT leaders to view MAI as a managed service layer, not a consumer gimmick. That distinction will shape adoption. (techcommunity.microsoft.com)

- Consumers get better in-app AI experiences

- Enterprises get more control and standardization

- IT teams get a clearer governance story

- Developers get a Microsoft-native model choice

- Procurement teams may get simpler vendor consolidation

The Role of Azure, Foundry, and Microsoft 365

The platform angle is impossible to miss. Microsoft Foundry is where developers evaluate and build with the models, while Azure Speech handles delivery for the voice stack. Meanwhile, Microsoft says the models are already being used inside Copilot, Bing Image Creator, and PowerPoint, which means Microsoft 365 is the main distribution engine for mainstream awareness. (techcommunity.microsoft.com)

That creates a flywheel. Developers adopt the models through Foundry, enterprise teams standardize on Azure, and consumers encounter the output inside Microsoft products they already trust. Each layer reinforces the other, and Microsoft can optimize feedback loops across all three. (techcommunity.microsoft.com)

Why integration matters more than raw model claims

In AI, the most technically impressive model is not always the most commercially successful. The winning product is often the one that integrates best with identity, storage, permissions, and workflow tools. Microsoft’s big advantage is that it already owns those adjacent layers at scale.

That makes MAI less of a standalone bet and more of a packaging strategy. If the same enterprise can use the model in a Microsoft-managed environment, deploy it through familiar APIs, and see it appear in Copilot workflows, switching costs rise quickly. That is not an accident; it is the business model. (techcommunity.microsoft.com)

- Foundry gives Microsoft developer reach

- Azure Speech gives the models enterprise infrastructure

- Microsoft 365 gives everyday visibility

- Copilot gives immediate productization

- The stack creates strong switching costs

Strengths and Opportunities

Microsoft’s MAI release has real strategic upside because it combines model ownership, distribution, and infrastructure in a way few competitors can match. The company is not just shipping models; it is shaping the default AI experience for a huge installed base of business and consumer users. That creates room for monetization, stickiness, and product differentiation across the broader Microsoft ecosystem. It is a classic platform play, but with stronger control than before.

- First-party control over key AI workloads

- Tighter enterprise integration with Azure and Microsoft 365

- Lower latency potential through in-house optimization

- Better cost predictability for transcription and voice workloads

- Broader multilingual utility for global organizations

- Stronger brand differentiation versus generic partner-model usage

- Immediate product surface area across Copilot, PowerPoint, and Bing Image Creator

Risks and Concerns

The launch is impressive, but it also opens Microsoft to a new set of expectations. Customers will now compare its models not just against partner systems, but against the best available alternatives in transcription, speech synthesis, and image generation. That raises the bar on reliability, safety, and consistency, especially once public preview usage turns into production dependence. The hype cycle is not the problem; the operational burden is.

- Preview-status limitations could frustrate production buyers

- Safety and misuse risks are significant for voice and image generation

- Language and regional coverage may lag for some markets

- Benchmark claims may not translate cleanly into real-world deployment

- Brand risk grows if outputs are biased, inaccurate, or inconsistent

- Voice cloning concerns could trigger policy scrutiny

- Competitive response from OpenAI, Google, and others may blunt the advantage

Looking Ahead

The next phase will be about adoption, not announcement. Microsoft has made a credible case that its MAI models can serve real enterprise and consumer workflows, but the decisive test is whether customers actually move workloads onto them. The company will also need to prove that these models can scale globally without compromising safety, latency, or support quality. (techcommunity.microsoft.com)

The other key question is how quickly Microsoft expands beyond the initial preview footprint. If MAI-Image-2, MAI-Voice-1, and MAI-Transcribe-1 become more deeply embedded in Copilot, Office, and Azure services, this launch will look less like a single release and more like the opening chapter of Microsoft’s independent model era. That would be a meaningful shift in the AI market’s balance of power.

- Wider rollout beyond select enterprise and preview users

- More language support and lower-latency deployment options

- Deeper Copilot integration across Microsoft 365

- Additional MAI model families beyond audio and image

- Competitive responses from OpenAI, Google, and cloud rivals
