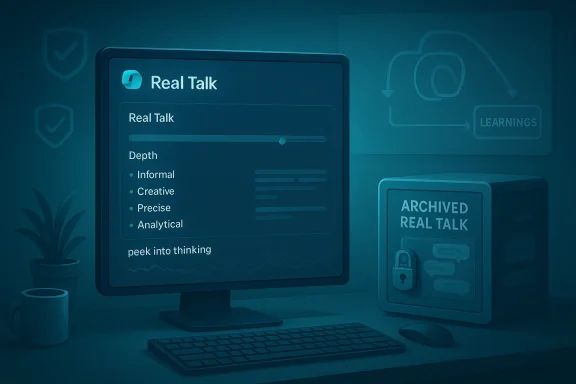

Microsoft has quietly paused and effectively retired the experimental “Real Talk” mode inside Copilot, archiving existing Real Talk conversations and removing the option to start new sessions as Microsoft prepares to fold lessons from the experiment into Copilot’s broader behaviour and product roadmap.

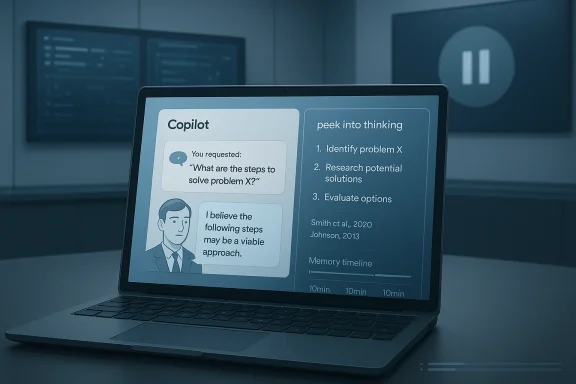

Microsoft introduced Copilot as a unified, multimodal assistant that spans Windows, Microsoft 365, Edge and mobile apps. In its Fall release cycle the company began shipping personality and conversation-style experiments intended to make Copilot feel more like a thinking partner than a polite, risk-averse helper. One of the most visible experiments was Real Talk, a conversational mode that aimed to push back, adopt tone and surface reasoning, giving users a way to see a simplified trace of the assistant’s “thinking.” Real Talk arrived publicly in January 2026 as part of Microsoft’s staged rollout of Copilot enhancements.

Real Talk’s headline mechanics included a user-facing control called Depth (for verbosity and emotional granularity), selectable writing styles, and a “peek into thinking” feature that attempted to expose intermediate reasoning steps behind a reply. The mode was intentionally experimental; Microsoft positioned it as a testbed for new interaction patterns rather than as a finished product.

What Microsoft has not published publicly is a detailed technical post-mortem or a full specification explaining the depth control, the internal safety filter changes applied to Real Talk, or the retention/export policy for archived conversations beyond the brief confirmations reported by press outlets. The absence of a formal engineering post or white paper means some claims about technical architecture and guardrails remain unverifiable without internal documentation. Where the company has been explicit — Real Talk’s experimental status, archiving of conversations, and intent to fold learnings into the core product — the reporting is consistent across multiple outlets.

Readers should treat any detailed architectural claims about Real Talk with caution until Microsoft releases a formal engineering write-up or peer-reviewed evaluation. Where reporting has described behavior and user-facing controls, those accounts are corroborated by multiple outlets and community testimony; where reporting speculates about model internals or dataset sources, that material remains unverifiable.

Yet the episode also highlights a broader industry tension: the faster vendors chase interactive, agentic features, the more they must reconcile user expectations, regulatory exposure, and the technical challenge of reliably grounding opinions. Rolling out “opinions” at scale without a commensurate investment in evidence plumbing and admin controls risks eroding trust.

For power users who valued Real Talk, the pause is a setback; for enterprise customers and regulators, it is a welcome sign that Microsoft is proceeding with caution. The most valuable outcome would be a Copilot that inherits Real Talk’s intellectual rigor and transparency while shipping with clearer source citations, confidence metadata, and enterprise-grade governance controls.

Source: Windows Report https://windowsreport.com/microsoft-shuts-down-copilot-real-talk-as-it-refines-ai-strategy/

Background

Microsoft introduced Copilot as a unified, multimodal assistant that spans Windows, Microsoft 365, Edge and mobile apps. In its Fall release cycle the company began shipping personality and conversation-style experiments intended to make Copilot feel more like a thinking partner than a polite, risk-averse helper. One of the most visible experiments was Real Talk, a conversational mode designed to push back, adapt its tone and surface its reasoning, giving users a way to see a simplified trace of the assistant’s “thinking.” Real Talk arrived publicly in January 2026 as part of Microsoft’s staged rollout of Copilot enhancements.

Real Talk’s headline mechanics included a user-facing control called Depth (for verbosity and emotional granularity), selectable writing styles, and a “peek into thinking” feature that attempted to expose intermediate reasoning steps behind a reply. The mode was intentionally experimental; Microsoft positioned it as a testbed for new interaction patterns rather than as a finished product.

What Microsoft said (and what it didn’t)

Microsoft’s public framing of the change has been cautious and compact: Real Talk was an experimental mode, the company says it is “listening to feedback,” and rather than maintaining Real Talk as a distinct, permanent toggle the team will integrate the experiment’s learnings into Copilot more broadly. Microsoft confirmed that existing Real Talk conversations have been archived and that users can no longer start new Real Talk sessions. That confirmation was relayed to multiple outlets and community channels.

What Microsoft has not published publicly is a detailed technical post-mortem or a full specification explaining the depth control, the internal safety filter changes applied to Real Talk, or the retention/export policy for archived conversations beyond the brief confirmations reported by press outlets. The absence of a formal engineering post or white paper means some claims about technical architecture and guardrails remain unverifiable without internal documentation. Where the company has been explicit — Real Talk’s experimental status, archiving of conversations, and intent to fold learnings into the core product — the reporting is consistent across multiple outlets.

What Real Talk promised — a concise feature summary

- Contrarian, reasoning-first responses: Instead of reflexive agreement, Real Talk tried to challenge assumptions and point out flaws in reasoning.

- Depth control: Users could choose responses that were compressive (short, direct) or standard (more expansive, nuanced).

- Peek into thinking: A visible trace of intermediate reasoning steps that aimed to increase transparency and auditability.

- Tone and persona tuning: The mode could mirror a user’s conversational vibe while retaining an independent stance.

- Integration with memory: Real Talk leveraged Copilot memory features to produce coherent, context-rich conversations over time.

Timeline (compact)

- January 19, 2026 — Real Talk rolls out broadly after Fall 2025 previews and internal testing.

- Late January–February 2026 — early user reports and reviews highlight the mode’s novel stance and transparency features.

- March 1–5, 2026 — Microsoft pauses Real Talk; existing conversations are archived and the mode is removed as a standalone option. Press outlets and community channels report the change.

Community reaction — praise, confusion and pushback

Reaction to the shutdown has been a mix of enthusiasm and disappointment. Power users and many developers praised Real Talk as a genuinely valuable thinking partner — a rare assistant that would both criticize and explain rather than simply comply. Those users argued the mode improved ideation and error-spotting. At the same time, other community members welcomed the pause. Critics warned that an assistant that intentionally pushes back could inadvertently escalate risk: confident-sounding but poorly grounded assertions become more harmful when wrapped in a contrarian tone. Community threads documented confusion about whether Real Talk was deprecated permanently or temporarily paused while engineering resources were reallocated.

Why Microsoft likely folded Real Talk back into Copilot

Several interlocking reasons explain the product decision. These are drawn from the public record, reporting, and community analysis — and while we can’t peer into Microsoft’s internal roadmaps, the external signals point to a consistent set of trade-offs.

1) Safety and factual grounding

An assistant that disputes user assumptions must anchor criticisms to verifiable evidence. When a contrarian mode produces an unverified or hallucinated critique, the harm multiplies because the wording and tone can increase perceived authority. Fixing those failure modes requires additional grounding systems, metadata about confidence, and stricter source-tracing. Microsoft appears to have judged that more work was needed before shipping that capability widely. (theregister.com)

2) Personalization and privacy surface area

Real Talk leaned on Copilot’s memory features to maintain coherence across multi-session dialogues. Deeper personalization increases retention and privacy risks: what gets remembered, how long it’s stored, and whether users can export or purge those memories. Archiving conversations buys time to document and improve retention controls and to publish clearer export/retention options. (windowslatest.com/2026/03/05/microsoft-drops-copilots-real-talk-after-learning-people-dont-just-want-ai-validation/)

3) Product complexity and user mental model fragmentation

Offering multiple labeled personas or modes fragments UX and increases cognitive load. Microsoft signalled a preference (in public statements and product behaviour) for an adaptive assistant that changes tone contextually rather than forcing users to choose labeled modes. Folding Real Talk’s capabilities into core Copilot behaviour simplifies the UX but requires more reliable context detection.

4) Resource prioritization and roadmap trade-offs

Large product teams make pragmatic choices about where to focus engineering effort. Microsoft has been simultaneously rolling out other high-priority Copilot features (voice, vision, enterprise controls, agentic toggles and in-house model work), and pausing Real Talk frees engineering, safety and red-team capacity for those areas.

Strengths Real Talk revealed (why the experiment mattered)

- Reduced sycophancy: Real Talk directly tackled a common pain point in conversational AI: unhelpful agreement. The feature showed there’s user appetite for more critical, adversarial assistants in certain tasks.

- Transparency as a product idea: Exposing reasoning steps, even in simplified form, moves the interface from a black box toward an auditable system. This is meaningful for trust and for powering human-in-the-loop checks.

- New UX paradigms: Treating stance as a first-class UI element — not just content style but the assistant’s argumentative posture — forced designers to think differently about conversational controls.

Key risks and failure modes

- Confident errors: Contrarian answers that are factually wrong will be more damaging if phrased assertively. This is the classic “wrong but confident” failure amplified by rhetorical force.

- Tone miscalibration: Tone-mirroring can reflect a user’s worst behaviour (sarcasm, rudeness) or escalate sensitive interactions, which is especially risky in regulated contexts (healthcare, legal, finance).

- Personalization creep and emotional dependency: A persistent assistant that feels like a friend may shift expectations about agency, privacy and accountability. That creates new support and governance requirements.

- Legal and compliance exposure: Opinionated responses can drift into areas that require professional qualifiers or trigger regulatory obligations; enterprises will want admin controls and conservative defaults.

How Microsoft should — and likely will — respond next

Based on the public signals and governance best practices, the sensible next steps for Microsoft are clear:

- Publish retention and export options for archived Real Talk chats so users and admins know exactly how long data is retained and how it can be moved or deleted.

- Offer tiered transparency controls: a simple view for casual users and a richer, audit-oriented reasoning trace for power users, researchers and enterprise auditors. Pair transparency with confidence metadata and citation of sources where possible.

- Red-team contrarian behaviour: run specialized red-team scenarios that stress-test adversarial, legal and misinformation vectors; this is necessary because pushing back increases the surface for creative failure modes.

- Enterprise admin controls and conservative defaults: allow organizations to disable opinionated modes or set conservative thresholds for regulated deployments. Microsoft has been shipping more admin-level Copilot controls; Real Talk’s lessons should feed into those controls.

Practical guidance for users and IT teams today

- If you used Real Talk and have important conversations you want preserved, export or locally back up any content you need, because the conversations are archived and new sessions cannot be started. Multiple outlets have advised users to save critical content.

- Treat Copilot as an assistant rather than an authority: always cross-check Copilot assertions in high-stakes contexts and request evidence or citations when you need them.

- For IT and security teams: review Copilot memory settings at the tenant level and ensure default policies align with your data retention, compliance and privacy requirements. Microsoft’s admin guidance for Copilot and Microsoft 365 Copilot increasingly focuses on these controls; administrators should verify and test those settings.

- Keep track of feature flags: Microsoft has been experimenting with “agentic features” toggles and experimental features in Windows 11; administrators should monitor insider channels and release notes to manage exposure to new conversational modes.

Market and competitive implications

Microsoft’s decision to pause Real Talk is not an admission of defeat — it’s an example of iterative product development under a public spotlight. The experiment validated user demand for assistants that challenge assumptions, and competitors will watch closely.

- Vendors that prioritize conservative, safety-first responses will claim the high ground among regulated customers.

- Firms that can demonstrate rigorous grounding, evidence tracing and effective admin controls will be able to offer contrarian features more safely.

- The market differentiator will be execution — delivering opinionated, reasoning-capable assistants with provable accuracy and robust governance.

Technical realities and what remains unverified

Real Talk introduced several novel UX concepts, including Depth and the reasoning trace. However, Microsoft did not publish a detailed technical specification for Depth or the internal model-switching behaviour that determined when Real Talk should assert vs. be deferential. That lack of published detail means independent verification of the underlying model choices (e.g., which model families, how context windows were used, automated evidence retrieval techniques) is limited.

Readers should treat any detailed architectural claims about Real Talk with caution until Microsoft releases a formal engineering write-up or peer-reviewed evaluation. Where reporting has described behavior and user-facing controls, those accounts are corroborated by multiple outlets and community testimony; where reporting speculates about model internals or dataset sources, that material remains unverifiable.

A measured editorial assessment

Microsoft’s move to pause Real Talk and fold its learnings into the core Copilot product is a reasonable product-path decision rooted in safety, governance and product simplicity trade-offs. Real Talk surfaced important new ideas — contrarian answers, stance as UI, and transparent reasoning traces — and those ideas will likely reappear in more contained, auditable forms.

Yet the episode also highlights a broader industry tension: the faster vendors chase interactive, agentic features, the more they must reconcile user expectations, regulatory exposure, and the technical challenge of reliably grounding opinions. Rolling out “opinions” at scale without a commensurate investment in evidence plumbing and admin controls risks eroding trust.

For power users who valued Real Talk, the pause is a setback; for enterprise customers and regulators, it is a welcome sign that Microsoft is proceeding with caution. The most valuable outcome would be a Copilot that inherits Real Talk’s intellectual rigor and transparency while shipping with clearer source citations, confidence metadata, and enterprise-grade governance controls.

Final takeaways

- Microsoft has archived Real Talk conversations and removed the ability to start new Real Talk sessions while it integrates lessons into Copilot more broadly.

- Real Talk demonstrated concrete product ideas — contrarian responses, depth controls and a peek-into-thinking UI — that are likely to reappear in refined, less overt forms.

- The main engineering challenges are grounding, safety, retention policies and enterprise admin controls; these remain active problem spaces requiring investment before opinionated features can be broadly released.

- Users and IT teams should back up important Real Talk conversations, review Copilot memory and admin settings, and treat Copilot output as assistance — not an unqualified source of truth.