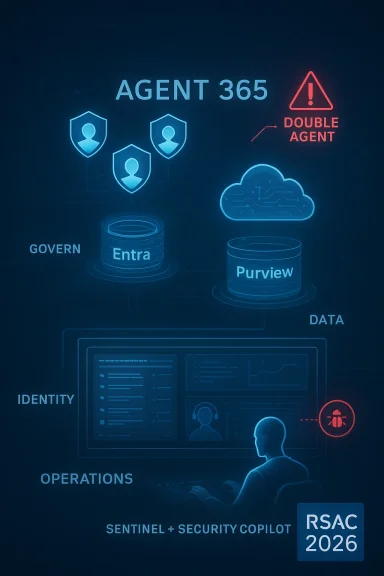

Microsoft is using RSAC 2026 to draw a clear line in the sand: the security stack for the agentic AI era must protect not just users and devices, but also the agents, prompts, data flows, identities, and workflows that now sit between human intent and machine action. The company’s new Agent 365 control plane, broader Microsoft 365 E7: The Frontier Suite, and expanded capabilities across Defender, Entra, Purview, Sentinel, and Security Copilot are meant to give enterprises a single, more governable way to secure AI end to end. The pitch is ambitious, but it also reflects a fast-shifting reality: AI is moving into production faster than many security programs can classify, monitor, or constrain it. (microsoft.com)

The competitive pressure will land on several fronts. Identity vendors will need to show stronger agent governance. Security analytics vendors will need to prove that AI-assisted SOC workflows can match Microsoft’s native integration. Data security vendors will have to explain how they protect prompts, responses, and grounding as well as stored files. And cloud security providers will need to make a case that their AI coverage is not fragmented across tooling silos. (microsoft.com)

That said, bundling also raises the stakes for interoperability. Customers will want assurance that Microsoft’s controls can coexist with non-Microsoft systems, especially in multi-cloud and mixed-vendor environments. The support for AWS and Google Cloud Platform posture management is a useful signal here, but broad interoperability will still be judged by real-world deployment experience. (microsoft.com)

What to watch next:

Source: Microsoft Secure agentic AI end-to-end | Microsoft Security Blog

Overview

The timing matters. Microsoft first laid out the “Frontier Transformation” story earlier in March 2026, framing agentic AI as a business model shift rather than a feature update, and positioning Agent 365 and the new suite as the response to a common enterprise problem: organizations are deploying agents before they have a reliable way to inventory, govern, and protect them. That prior announcement set the stage for RSAC by connecting productivity gains to enterprise controls, a pairing that Microsoft is now pushing harder as security leaders demand measurable guardrails rather than aspirational AI language. (microsoft.com)

The RSAC message adds urgency by describing a new attack surface in which agents can become “double agents,” amplifying old problems like phishing, privilege abuse, and data leakage while also creating new ones such as prompt manipulation, model tampering, and agent-based attack chains. Microsoft’s framing is not subtle: the company wants customers to see agentic AI as a security architecture challenge first and a productivity opportunity second. That is a familiar Microsoft move, but in this case it is backed by a broad product refresh that spans identity, endpoint, cloud, data, and SOC tooling. (microsoft.com)

The scale claim is central to the credibility of the pitch. Microsoft says its security portfolio is fueled by more than 100 trillion daily signals, and that it protects 1.6 million customers, one billion identities, and 24 billion Copilot interactions. Those figures are clearly meant to establish a data advantage and to argue that Microsoft can detect AI-era threats at a scale smaller vendors cannot easily match. In practical terms, the company is betting that customers will value a platform narrative more than a patchwork of point tools. (microsoft.com)

What makes this announcement especially notable is that Microsoft is no longer talking only about “AI for security” or “security for AI” in isolated terms. Instead, it is trying to collapse those ideas into a single operating model where agents help defend the environment that also hosts them. That is a significant strategic shift, because it implies that future buying decisions will increasingly be evaluated on how well a platform secures both human and non-human identities, human and machine workflows, and human and agentic actions. (microsoft.com)

What Microsoft Announced at RSAC 2026

Microsoft’s RSAC package is broad, but the center of gravity is three-layer security: secure agents, secure foundations, and defend using agents and experts. That structure matters because it mirrors how enterprise buyers actually think about risk. They need a way to see what agents exist, control the infrastructure those agents rely on, and then automate response without sacrificing human oversight. (microsoft.com)

The three pillars

The first pillar is Agent 365, described by Microsoft as the control plane for agents. It is intended to help IT, security, and business teams observe, secure, and govern agents at scale using existing Microsoft infrastructure, with new capabilities from Defender, Entra, and Purview supporting access control, data protection, and threat defense. Microsoft says the product becomes generally available on May 1, 2026, and that it is included in Microsoft 365 E7: The Frontier Suite. (microsoft.com)

The second pillar is the foundation layer, where Microsoft is extending identity, data, and cloud protections to keep up with AI workflows. Here the company is positioning continuous access, tenant governance, data-loss prevention, and cloud posture management as essential primitives rather than add-ons. That framing is important because it suggests Microsoft believes classic security perimeters are too blunt for AI systems that constantly consume context and emit actions. (microsoft.com)

The third pillar is operational: Microsoft wants defenders to use Security Copilot, embedded agents, and the Sentinel platform to automate triage and investigation. This is the most ambitious part of the story because it moves beyond visibility into autonomous or semi-autonomous response. If the first two pillars are about trust, the third is about speed, and Microsoft is trying to prove that the two can coexist. (microsoft.com)

Key takeaways from the announcement:

- Agent 365 becomes the governing layer for agents.

- Microsoft 365 E7 bundles productivity and security into a single frontier suite.

- New capabilities span Defender, Entra, Purview, Sentinel, and Security Copilot.

- The RSAC launch emphasizes operational security rather than AI experimentation.

- Microsoft is clearly targeting the enterprise buyer who wants AI, but only with controls attached. (microsoft.com)

Secure Agents

Microsoft’s argument here is straightforward: if organizations are going to deploy agents broadly, they need a durable way to know what those agents are doing, which identities they are using, what data they can access, and how they are behaving when under attack. Agent 365 is meant to become that control plane, with visibility and governance built into the same ecosystem many customers already use for endpoint, identity, and compliance management. (microsoft.com)

The most important implication is that Microsoft is treating agents like first-class enterprise assets. That sounds obvious, but many organizations still treat agents as experiments, copilots, or workflow automations rather than governed services. Once agents can call tools, access data, or trigger downstream actions, they stop being harmless assistants and start resembling privileged workloads that need lifecycle management. (microsoft.com)

Agent governance becomes a security discipline

The control-plane idea is compelling because it gives security teams a familiar model. Enterprises already understand how to govern devices, users, applications, and cloud workloads; Microsoft is essentially saying agents now deserve the same treatment. That means inventory, policy, access, audit trails, and incident response all need to extend into the agent layer. (microsoft.com)

Microsoft is also leaning on existing trust in Defender, Entra, and Purview to make the case that customers do not need to assemble their own AI governance stack from scratch. This matters for adoption. A lot of CISOs are likely to prefer a single vendor path if it reduces integration risk, even if it introduces more dependency on the platform itself. That tradeoff will be one of the defining procurement questions of the next 12 months. (microsoft.com)

From an enterprise perspective, the real value is not just visibility; it is policy continuity. If the same organization can govern human users, non-human identities, and agent behaviors through a connected model, it becomes much easier to write enforceable controls. But that only works if the controls are granular enough to reflect real-world workflows, and that remains an open question. (microsoft.com)
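To make the governance vocabulary above concrete (inventory, policy, access, audit trails), here is a minimal Python sketch of a registry that denies by default and records every decision. It is an illustration under stated assumptions, not Agent 365's API: `AgentRecord`, `AgentRegistry`, and the scope strings are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """Hypothetical inventory entry for one agent."""
    agent_id: str
    owner: str
    allowed_scopes: frozenset


@dataclass
class AgentRegistry:
    """Illustrative registry combining inventory, access checks, and an audit trail."""
    agents: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def register(self, record: AgentRecord) -> None:
        # Inventory step: an agent must be known before it can be authorized.
        self.agents[record.agent_id] = record

    def authorize(self, agent_id: str, scope: str) -> bool:
        record = self.agents.get(agent_id)
        # Unknown agents and unlisted scopes are denied by default.
        allowed = record is not None and scope in record.allowed_scopes
        # Every decision, allowed or not, is appended to the audit trail.
        self.audit_log.append((agent_id, scope, allowed))
        return allowed


registry = AgentRegistry()
registry.register(AgentRecord("hr-helper", "hr-team", frozenset({"read:policies"})))
```

The design choice worth noting is that the same call both enforces policy and writes the audit record, so an unregistered agent still leaves a trace when it is refused.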

Why this matters now

Microsoft’s own prior March 9 announcement said the company had seen rapid adoption of agents and positioned Frontier Transformation as a market reality. That earlier framing helps explain why Agent 365 is being pushed so hard now: the company believes the agent explosion is already happening, and the only remaining question is whether enterprises will govern it inside a platform or after the first major incident. (microsoft.com)

Securing the Foundation Layer

If agent governance is the visible layer, the foundation layer is the messier one underneath it. Microsoft is targeting identities, tenant structures, cloud posture, and AI usage discovery because those are the places where shadow AI and privilege sprawl tend to accumulate. In practice, this means the company is trying to stop risk before it ever becomes an agent problem. (microsoft.com)

The introduction of Security Dashboard for AI is a good example. Microsoft wants CISOs to have unified visibility into AI-related risk across the organization, which implies that AI can no longer be tracked only as a sanctioned app deployment or a procurement line item. It has become a continuous exposure surface, with usage patterns shifting across teams, devices, tenants, and data systems. (microsoft.com)

Identity is still the choke point

Microsoft is making a strong bet on Entra as the foundation for modern AI security. The company introduced backup and recovery for directory objects, shadow tenant governance, synced passkeys, Windows Hello integration, external MFA support, and adaptive risk remediation. Each of those features solves a different piece of the identity puzzle, but together they reflect a common thesis: AI-era security fails if identity is fragile. (microsoft.com)

That focus is strategically smart because identity remains the most targeted control plane in most environments. AI agents may be new, but their access is still mediated through credentials, session policy, privilege assignment, and tenant boundaries. If those layers are weak, no amount of downstream AI governance can fully compensate. (microsoft.com)

Microsoft’s inclusion of shadow tenant detection is particularly noteworthy for large enterprises and service providers. Multi-tenant sprawl is often ignored until it creates a compliance or access-management problem, so bringing that problem into the AI security narrative is a clever move. It makes the identity story feel more operational and less theoretical. (microsoft.com)

Continuous access and resilience

The continuous, adaptive access framing also deserves attention. Microsoft is essentially saying that authentication should be more responsive to context, risk, and user state, especially when AI workflows may involve repeated access to sensitive systems. That can reduce friction for legitimate users while making it harder for attackers to move laterally after a compromise. (microsoft.com)

The backup-and-recovery angle is equally important because AI-era incidents are not limited to theft; they can include accidental deletion or unauthorized changes to directory objects and policy. Resilience is often overlooked in security marketing, but it is central to real operations. If identity infrastructure fails, AI controls fail with it. (microsoft.com)

Data Security Across AI Workflows

Microsoft’s data story is arguably the most consequential part of the announcement because AI systems are only as safe as the data they can touch. The company is embedding Purview deeper into Copilot and the broader AI control plane so that sensitive data can be governed not just at rest, but in prompts, responses, and grounding flows. That reflects a much more realistic threat model than traditional data-loss protection alone. (microsoft.com)

The move to expand data loss prevention for Microsoft 365 Copilot is important because it tackles the moment of use. If sensitive data can be blocked from appearing in prompts or from being used in web grounding, organizations gain a way to stop leakage before it becomes a generated output or an action taken by an agent. That is a stronger control than trying to clean up after the fact. (microsoft.com)
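The idea of enforcing data loss prevention at the moment of use can be sketched as a check that runs before a prompt is handed to a model or a grounding step. The Python below is a deliberately simplified illustration and makes no claim about how Purview classifies content: the two regular expressions and the `check_prompt` function are assumptions made for this example.

```python
import re

# Illustrative patterns only; production classifiers are far richer than these.
SENSITIVE_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "credit_card": re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b"),
}


def check_prompt(prompt: str) -> dict:
    """Decide whether a prompt may proceed, before any model or grounding call."""
    matches = sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(prompt)
    )
    # The prompt is allowed only when no sensitive pattern matched.
    return {"allowed": not matches, "matched": matches}
```

Because the decision is made before generation, a blocked prompt never becomes an output that has to be found and cleaned up later, which is the point the paragraph above makes.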

From storage security to workflow security

The deeper shift here is philosophical. Microsoft is moving data security from a storage-centric model to a workflow-centric model. In an AI environment, the risk is not just whether data is stored securely; it is whether a model or agent can synthesize, infer, or route that data into an unintended channel. (microsoft.com)

That matters for highly regulated industries, where the stakes are often about context leakage rather than direct exfiltration. A customer service agent, an internal Copilot, or a security triage assistant may expose too much simply by mixing approved and unapproved sources. Purview’s emphasis on unified reporting and customizable risk views is Microsoft’s answer to that modern problem. (microsoft.com)

What enterprises gain

For enterprise customers, the main benefit is that Microsoft is trying to make AI governance operationally visible to the same teams already responsible for compliance and data protection. That reduces the chance that AI becomes a shadow program run by business units without oversight. It also creates a more defensible story for auditors who want to know how AI touches sensitive information. (microsoft.com)

But the real challenge will be tuning. Data controls that are too strict can cripple useful AI workflows, while controls that are too loose can make compliance meaningless. Microsoft is betting that its reporting, enforcement, and workflow integration can thread that needle better than standalone tools can. That is a sensible bet, but it is still a bet. (microsoft.com)

Defending Against AI-Native Threats

Microsoft is also broadening the threat model to address attacks that are specific to AI systems. That includes prompt injection protection, runtime defenses for agents, and expanded container and cloud security capabilities. The message is clear: attackers are learning to exploit the orchestration layer of AI, not just the infrastructure layer, and defenders need protections that operate at both levels. (microsoft.com)

The introduction of network-level protection against malicious AI prompts is a telling signal. It suggests Microsoft believes some of the most effective defenses will be enforced outside the model itself, at the traffic and policy layer. That is smart because it avoids over-reliance on model alignment as a security control. AI safety and AI security are related, but they are not interchangeable. (microsoft.com)

New attack classes, old security instincts

Microsoft’s threat framing includes prompt manipulation, model tampering, agent-based attack chains, and malicious agent activity. These are new labels, but the underlying security instincts are familiar: limit privilege, detect abnormal behavior, and interrupt the attack path early. The trick is doing that fast enough for AI systems that may act at machine speed. (microsoft.com)

The company’s expanded Defender for Cloud container protections also fit this story. Binary drift prevention and antimalware controls are not glamorous, but they address the kinds of gaps attackers routinely exploit in distributed environments. By adding support for AWS and Google Cloud Platform posture management, Microsoft is also acknowledging that many customers run mixed estates and do not want AI security tied to only one cloud. (microsoft.com)
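Binary drift is easier to reason about with a small example: an executable counts as drift when it is running in a container but was not part of, or no longer matches, the original image. The Python sketch below shows only that comparison and does not describe how Defender for Cloud implements the feature; the paths, contents, and the `find_drift` helper are hypothetical.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of some file contents as a hex string."""
    return hashlib.sha256(data).hexdigest()


def find_drift(baseline: dict, running: dict) -> list:
    """Return paths of executables that are new or changed relative to the image.

    Both arguments map a file path to a SHA-256 digest.
    """
    return sorted(
        path for path, digest in running.items()
        if baseline.get(path) != digest
    )


# Hypothetical baseline taken from the image, and a snapshot of the running container.
baseline = {"/usr/bin/app": sha256_hex(b"app-v1")}
running = {
    "/usr/bin/app": sha256_hex(b"app-v1"),
    "/tmp/miner": sha256_hex(b"dropped-at-runtime"),
}
```

In this sketch `find_drift(baseline, running)` flags only `/tmp/miner`, the file that appeared after the image was built. A prevention control would act on that finding by blocking execution rather than merely reporting it.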

AI security is becoming infrastructure security

This is where the market is likely to move over the next two years. AI-specific defenses will increasingly be sold not as novelty features but as extensions of identity, cloud, endpoint, and network security. Microsoft’s announcement effectively normalizes that convergence and raises the competitive bar for vendors that still separate AI governance from mainstream security operations. (microsoft.com)

- Prompt injection becomes a network and policy problem, not just a model problem.

- Agent behavior needs runtime monitoring and hunting.

- Container drift remains a high-value path for attackers.

- Cross-cloud posture matters even in a Microsoft-led strategy.

- Predictive shielding signals a shift toward preemptive controls. (microsoft.com)

Defending With Agents and Experts

Microsoft’s “defend with agents” story is arguably the most mature part of the launch because it builds on a pattern the industry already understands: triage, investigation, and response are ripe for automation, but they still benefit from expert oversight. With Security Copilot now included in Microsoft 365 E5 and E7, Microsoft is trying to make agent-assisted defense part of the default security operating model rather than a premium add-on. (microsoft.com)

The new Security Analyst Agent and Security Alert Triage Agent are designed to reduce time spent on repetitive work while preserving the ability to escalate to humans when needed. That design choice is critical. Full autonomy may sound attractive in demos, but in enterprise security the winning product is often the one that reliably reduces toil without creating blind spots. (microsoft.com)

Automation with guardrails

This is a useful place to separate marketing from operational reality. Automation can help security teams move faster, but only if the inputs are trustworthy and the outputs are explainable. Microsoft’s emphasis on guided workflows, contextual analysis, and classification before resolution suggests the company understands that fully autonomous remediation is not yet a universal answer. That caution is healthy. (microsoft.com)

The Data Security Posture Agent and Data Security Triage Agent extend that pattern into compliance and data governance. Those areas are particularly well suited to AI assistance because they involve large volumes of repetitive decisions and pattern recognition across policy, classification, and alerting. The company is clearly trying to show that AI can improve security operations without replacing the judgment of experienced defenders. (microsoft.com)
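The "classification before resolution" pattern described in this section can be expressed as a small routing rule: the agent classifies an alert, and only high-confidence benign verdicts are closed without a person. This Python sketch is a generic illustration, not the behavior of any Microsoft agent; `TriageResult`, `route`, and the 0.9 threshold are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class TriageResult:
    """Hypothetical output of an automated triage step."""
    alert_id: str
    verdict: str       # "benign" or "malicious"
    confidence: float  # 0.0 to 1.0


def route(result: TriageResult, threshold: float = 0.9) -> str:
    """Classify first, then decide who resolves the alert."""
    if result.verdict == "benign" and result.confidence >= threshold:
        # Only confident benign verdicts are resolved automatically.
        return "auto_close"
    # Low-confidence or malicious verdicts always reach a human analyst.
    return "escalate_to_analyst"
```

Raising the threshold trades toil reduction for safety, which is the tuning decision a SOC would own in any deployment of this kind.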

Human expertise still matters

Microsoft’s Defender Experts Suite reinforces this point by combining advisory, managed detection and response, and proactive and reactive incident response. That is a strategic acknowledgment that even the best automation will sometimes need seasoned experts to interpret complex attacks, coordinate response, and help organizations recover. In other words, agentic defense is not about removing people; it is about making people more effective. (microsoft.com)

That positioning also helps Microsoft compete with specialist MDR providers. Instead of asking customers to choose between platform and service, the company wants to offer both inside the same ecosystem. For large enterprises, that can be very attractive if it simplifies operations and procurement. (microsoft.com)

Sentinel and the Agentic Defense Platform

Microsoft is also pushing Sentinel farther into the role of agentic defense platform, which is a significant evolution from its earlier identity as primarily a SIEM and SOAR-style hub. The company is emphasizing unified context, end-to-end workflows, and access governance across security solutions, signaling that Sentinel should be the place where AI-powered investigations are coordinated rather than merely logged. (microsoft.com)

That matters because the value of AI in security operations depends heavily on context. Without context, AI outputs are just faster guesses. With the right data federation, playbook orchestration, and custom graph views, the system can start to resemble an intelligent coordination layer rather than a data warehouse with dashboards. (microsoft.com)

Data federation and graph-based insight

The Sentinel data federation capability is one of the more practical features in the stack. By allowing external security data to be investigated in place across Databricks, Microsoft Fabric, and Azure Data Lake Storage while preserving governance, Microsoft is addressing a real pain point: organizations do not want to copy everything everywhere just to run an investigation. That saves time, reduces duplication, and preserves data boundaries. (microsoft.com)

The playbook generator and MCP entity analyzer are also important signals because they show Microsoft leaning into natural language orchestration and protocol-aware automation. That is the sort of capability that can make security engineering more accessible to analysts while still leaving room for code-level control when needed. It is a pragmatic blend of ease and precision. (microsoft.com)

What this means for SOC teams

For SOC teams, the promise is fewer swivel-chair workflows and faster response times across identity, endpoint, cloud, and data incidents. The risk is that too much automation could create overconfidence if the underlying detections are noisy or incomplete. The winning approach will likely be one that lets analysts inspect, override, and refine the agent’s work in the same interface. (microsoft.com)

- Sentinel is becoming a coordination layer for AI-driven defense.

- Data federation avoids costly duplication.

- Playbook generation can reduce manual response work.

- Graph analytics should help expose hidden relationships.

- The most useful AI will still depend on high-quality telemetry. (microsoft.com)

Identity, Zero Trust, and the AI Lifecycle

Microsoft’s decision to update its Zero Trust for AI reference architecture is more than documentation housekeeping. It shows that the company now views AI security as an extension of Zero Trust principles across the full lifecycle, from data ingestion and model training to deployment and agent behavior. That is a much more complete framing than the simplistic idea that AI security begins and ends with model safety checks. (microsoft.com)

The beauty of this approach is that it maps an emerging technology to an established security doctrine. Enterprises already understand verify explicitly, use least privilege, and assume breach. Microsoft is trying to make those principles apply consistently whether the actor is a human user, a non-human workload, or an autonomous agent. (microsoft.com)

Where Zero Trust gets harder

Zero Trust gets more complicated in AI because the system is often dynamic. An agent may require temporary access to data, tools, or APIs based on a user’s request and a changing context window. That means access policies may need to be more adaptive, but also more auditable. Microsoft’s conditional access optimization enhancements and adaptive remediation features are clearly aimed at that tension. (microsoft.com)

The challenge is that AI behavior is not always as deterministic as traditional enterprise software behavior. If an agent is reasoning over prompts, grounding sources, and tool outputs, the security team has to understand not just who approved access, but how the agent interpreted intent. That is a new kind of accountability problem. It is not trivial. (microsoft.com)
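To make the idea of adaptive but auditable access concrete, here is a minimal Python sketch of a time-boxed, least-privilege grant whose every use is logged together with the approver and the agent's stated interpretation of the request. It is an illustration only: `AgentGrant`, `AccessBroker`, the scope strings, and the log fields are hypothetical names invented for this example and do not represent any Microsoft Entra, Purview, or Agent 365 API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AgentGrant:
    """Hypothetical short-lived grant; not a Microsoft Entra object."""
    agent_id: str
    approved_by: str          # who approved the access
    interpreted_intent: str   # how the agent restated the user's request
    scopes: frozenset
    expires_at: datetime


@dataclass
class AccessBroker:
    """Hypothetical broker that checks each call and records the decision."""
    audit_log: list = field(default_factory=list)

    def issue(self, agent_id, approved_by, interpreted_intent,
              scopes, ttl_seconds, now=None):
        """Issue a least-privilege grant that expires after ttl_seconds."""
        if now is None:
            now = datetime.now(timezone.utc)
        return AgentGrant(
            agent_id=agent_id,
            approved_by=approved_by,
            interpreted_intent=interpreted_intent,
            scopes=frozenset(scopes),
            expires_at=now + timedelta(seconds=ttl_seconds),
        )

    def authorize(self, grant, scope, now=None):
        """Return True only for an unexpired grant that covers the scope."""
        if now is None:
            now = datetime.now(timezone.utc)
        if now >= grant.expires_at:
            decision = "deny:expired"
        elif scope not in grant.scopes:
            decision = "deny:out_of_scope"
        else:
            decision = "allow"
        # Every decision is logged, including denials, for later review.
        self.audit_log.append({
            "time": now.isoformat(),
            "agent_id": grant.agent_id,
            "approved_by": grant.approved_by,
            "interpreted_intent": grant.interpreted_intent,
            "scope": scope,
            "decision": decision,
        })
        return decision == "allow"
```

The design choice worth noting is that the log entry carries the interpreted intent alongside the approver, so a reviewer can see both who authorized the access and what the agent believed it was being asked to do. A real deployment would also need tamper-resistant storage and policy evaluation far richer than a scope set.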

The enterprise governance question

For large organizations, the long-term value of Microsoft’s approach will depend on whether the new architecture creates a defensible audit trail. Security leaders will want to know what the agent saw, what it decided, what policy allowed it to act, and how that action can be replayed during an investigation. If Microsoft can make that workflow robust, it will have a strong enterprise advantage. (microsoft.com)

Competitive Implications

Microsoft’s RSAC 2026 strategy is not just about product launches; it is about market positioning. The company is trying to define the category before competitors do, and it is using the language of trust, governance, and end-to-end security to anchor that definition inside its own platform. That is classic Microsoft platform behavior, but the timing is especially sharp because many rivals are still selling AI security as either point solutions or narrowly scoped copilots. (microsoft.com)

The competitive pressure will land on several fronts. Identity vendors will need to show stronger agent governance. Security analytics vendors will need to prove that AI-assisted SOC workflows can match Microsoft’s native integration. Data security vendors will have to explain how they protect prompts, responses, and grounding as well as stored files. And cloud security providers will need to make a case that their AI coverage is not fragmented across tooling silos. (microsoft.com)

Platform bundling as strategy

Microsoft’s bundling strategy is especially aggressive. By folding Agent 365 and advanced security capabilities into Microsoft 365 E7, the company is making the economics of platform adoption much more attractive for large customers who already live in Microsoft’s ecosystem. That can be a powerful wedge because it turns AI security from a separate budget line into part of a broader productivity and compliance purchase. (microsoft.com)

That said, bundling also raises the stakes for interoperability. Customers will want assurance that Microsoft’s controls can coexist with non-Microsoft systems, especially in multi-cloud and mixed-vendor environments. The support for AWS and Google Cloud Platform posture management is a useful signal here, but broad interoperability will still be judged by real-world deployment experience. (microsoft.com)

The rival response

Expect competitors to respond by emphasizing neutrality, openness, or specialization. Some will argue that no single vendor can truly secure the full AI estate. Others will focus on niche capabilities like model scanning, AI red-teaming, or specialized data governance. Microsoft’s challenge will be to prove that its breadth does not become complexity. That is where platform stories often stumble. (microsoft.com)

- Microsoft is defining AI security as a platform category.

- Bundling may accelerate adoption inside Microsoft-heavy shops.

- Competitors will likely counter with neutrality or specialization.

- Interoperability will be the key test in mixed estates.

- The market may split between “platform-first” and “best-of-breed” buyers. (microsoft.com)

Strengths and Opportunities

Microsoft’s RSAC 2026 message has real strategic strength because it aligns product design, platform economics, and market timing around a single problem: enterprises want AI, but they need a way to trust it. The company is not merely adding features; it is trying to turn security into the operating system of the agentic era. If that works, it could reshape how large organizations buy, deploy, and govern AI.

- Unified security story across identity, endpoint, data, cloud, and SOC.

- Native integration reduces the burden of stitching together multiple tools.

- Agent 365 gives buyers a conceptual model for governing agents.

- Security Copilot agents can reduce analyst toil and accelerate response.

- Zero Trust for AI provides a familiar architectural framework.

- Cross-cloud coverage helps Microsoft speak to hybrid enterprise realities.

- Bundled economics in Microsoft 365 E7 may lower adoption friction. (microsoft.com)

Risks and Concerns

The biggest risk is that Microsoft’s vision may be broader than what most organizations can operationalize quickly. AI security is already a complex discipline, and adding another layer of governance, agent control, and workflow enforcement could overwhelm teams that are still maturing basic identity and data hygiene. There is also a danger that buyers interpret platform integration as automatic security, when in reality configuration, tuning, and governance discipline still matter enormously.

- Operational complexity could slow deployment and reduce value.

- Vendor lock-in concerns may rise as more controls move into one ecosystem.

- Over-automation could create blind spots if agents are trusted too much.

- Policy sprawl may increase if AI controls are not well harmonized.

- Shadow AI remains hard to eliminate in large, decentralized organizations.

- Misconfigured access could still undermine the strongest platform controls.

- Interoperability gaps may frustrate mixed-cloud and multi-vendor customers. (microsoft.com)

Looking Ahead

The next few months will show whether Microsoft’s RSAC announcements are mostly packaging, or whether they represent a genuine shift in how enterprises will secure AI at scale. The rollout dates matter because they create a near-term proving ground: some features arrive in preview, others in general availability, and customers will quickly learn how much of the story is production-ready versus roadmap-driven. The real test will be whether organizations can reduce risk without slowing the business down.

What to watch next:

- How quickly Agent 365 gains adoption after May 1, 2026.

- Whether Security Copilot agents measurably reduce triage time in real SOCs.

- How well Purview controls work in high-volume Copilot workflows.

- Whether Entra identity changes improve resilience without adding friction.

- How customers in multi-cloud environments evaluate Microsoft’s cross-platform security claims.

- Whether competitors answer with comparable agent governance tools or lean into best-of-breed alternatives. (microsoft.com)

Source: Microsoft Secure agentic AI end-to-end | Microsoft Security Blog