Microsoft’s plan to build its own frontier-class AI models by 2027 marks one of the clearest signs yet that the company no longer wants to be defined only as OpenAI’s biggest commercial partner. The strategy is not a simple hedge; it is a structural reset aimed at making Microsoft AI more self-sufficient in the model layer while still leveraging OpenAI, Anthropic, and others where it makes sense. That shift matters because Microsoft has spent the past several years turning Copilot into an ecosystem, and now it wants the foundation beneath that ecosystem to be its own. (blogs.microsoft.com)

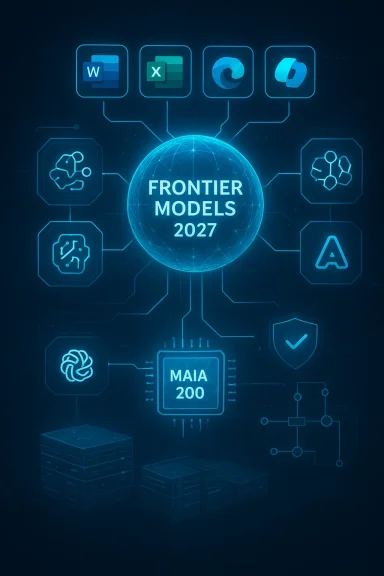

Overview

Microsoft’s AI posture in 2026 is best understood as a progression from dependence to diversification. In 2020, Microsoft licensed GPT-3 technology from OpenAI and then built a broad product strategy around that relationship, turning OpenAI’s breakthroughs into visible consumer and enterprise features across its stack. The January 2023 partnership extension deepened the arrangement, with Azure positioned as the exclusive cloud provider for OpenAI workloads and Microsoft gaining room to commercialize the technology in its own products.

That partnership still exists, and Microsoft’s own public language says it remains strong. But the company is now explicitly saying that it wants more control over the model layer, because models are no longer just research artifacts; they are product infrastructure, margin drivers, and strategic leverage. Mustafa Suleyman’s March 2026 internal reorganization made that clear by putting him on a “superintelligence” track focused on frontier models and by unifying Copilot across consumer and commercial into a single system spanning the experience, platform, Microsoft 365 apps, and AI models. (blogs.microsoft.com)

The timing also matters. Microsoft has spent the last year broadening model choice rather than insisting on a single house model. In March 2026, Microsoft said Microsoft 365 Copilot would remain “model diverse by design,” explicitly leveraging OpenAI and Anthropic models and bringing Claude into mainline Copilot chat through the Frontier program. That tells us Microsoft is not abandoning partners; it is insulating itself from any one partner’s roadmap, pricing, or strategic direction. (blogs.microsoft.com)

At the same time, the company has been investing heavily in AI infrastructure, including Maia 200, its new inference accelerator, and a broader compute roadmap designed to support both its own models and OpenAI’s. When Microsoft says it has a “locked” compute roadmap and wants to deliver “SOTA models,” that is not a vague aspiration. It is a signal that the company sees frontier compute, not just software distribution, as the next battleground. (blogs.microsoft.com)

Why 2027 Became the Target

The 2027 target is strategically interesting because it gives Microsoft enough runway to build capability without publicly admitting that it is behind in the present tense. It also aligns with the company’s broader pattern of long-horizon AI planning: build the platform, land the products, and then integrate the infrastructure tightly enough that margins and performance improve together. That is a classic Microsoft move, but the AI cycle is moving faster than past platform cycles, which makes the timeline both ambitious and risky. (blogs.microsoft.com)

Suleyman’s messaging suggests that Microsoft believes frontier models are becoming indispensable to product quality and cost control. In the March leadership update, he said Microsoft needed to focus on models that improve evals, reduce COGS, and advance enterprise breakthroughs. In plain English, that means Microsoft wants models that are not only smart, but cheaper to run and more deeply aligned with business workflows. (blogs.microsoft.com)

The economics behind the date

The AI market has matured enough that model performance alone is no longer the only competitive metric. A model can be excellent and still be strategically weak if it is too expensive, too dependent on external licensing, or too hard to integrate into enterprise systems. Microsoft’s model push is therefore about economic sovereignty as much as technical prestige. (blogs.microsoft.com)

There is also a competitive logic to the deadline. By setting a 2027 objective, Microsoft can benchmark itself against OpenAI, Anthropic, and Google without pretending parity exists today. The date creates pressure internally while leaving room for incremental progress across model quality, latency, multimodality, and agentic behavior. In a field where months matter, that is both a commitment and a warning.

- 2027 is far enough out to build infrastructure.

- It is close enough to create internal urgency.

- It lets Microsoft avoid overpromising on near-term parity.

- It positions the company for the next AI platform reset.

- It gives the market a concrete milestone to judge.

The OpenAI Relationship Is Still Central

Despite all the talk about independence, Microsoft is not breaking up with OpenAI. The companies’ February 2026 joint statement said the partnership remained strong, that Microsoft keeps its exclusive license and access to OpenAI IP, and that Azure remains the exclusive cloud provider for stateless OpenAI APIs. It also reaffirmed that the commercial and revenue-sharing relationship remains unchanged. That is not the language of a divorce; it is the language of managed interdependence. (blogs.microsoft.com)

But managed interdependence can still be a strategic constraint. Microsoft’s current product portfolio has benefited enormously from OpenAI’s breakthroughs, yet the more Microsoft integrates those models into Copilot, Office, and developer tooling, the more exposed it becomes to pricing shifts, governance changes, and roadmap decisions outside its full control. Building its own frontier models gives Microsoft a seat at the table it does not have to borrow. (blogs.microsoft.com)

What “exclusive” does and does not mean

The phrase exclusive license sounds definitive, but in practice it leaves room for product complexity. Microsoft can keep benefitting from OpenAI while also exploring alternatives, as its own public statements now demonstrate. Microsoft’s March 2026 frontier suite announcement explicitly said Copilot is model diverse by design and that the company is bringing in Anthropic models alongside OpenAI models. (blogs.microsoft.com)

That flexibility is important because the AI stack is becoming modular. Enterprises increasingly expect choice in model selection, governance, residency, and cost. Microsoft appears to understand that a single-model strategy would be too brittle for a market where customers want optionality and regulators want auditability. (blogs.microsoft.com)

- Microsoft still depends on OpenAI for a large share of its AI differentiation.

- The company has preserved commercial rights and cloud leverage.

- Model diversity is now a feature, not a workaround.

- Microsoft is reducing strategic single-point dependence.

- The partnership is stable, but no longer exclusive in spirit.

Copilot Is Becoming the Battlefield

Copilot remains the most important outward expression of Microsoft’s AI ambitions, because it is where model strategy meets user experience. In March 2026, Microsoft unified consumer and commercial Copilot into one effort and described the system as spanning four pillars: Copilot experience, Copilot platform, Microsoft 365 apps, and AI models. That is a textbook sign that the company sees AI as an operating layer, not just a feature set. (blogs.microsoft.com)

The recent leadership shift also says something important about Microsoft’s internal priorities. Jacob Andreou was elevated to lead Copilot experience across consumer and commercial, while Suleyman narrowed his focus toward model development and superintelligence efforts. That division suggests Microsoft is separating product execution from model science in the same way it once separated Windows, Azure, and Office as distinct engines of scale. (blogs.microsoft.com)

Consumer and enterprise are converging

Microsoft’s consumer Copilot story has never fully caught fire in the way ChatGPT has. At the same time, its enterprise story is stronger, especially inside Microsoft 365. The company is now trying to close the gap between those worlds by making one Copilot system serve both audiences, which could create better continuity across identity, data, and workflows.

That move is clever, but it is also difficult. Consumers want delight and immediacy, while enterprises want control, compliance, and predictable ROI. A unified Copilot has to satisfy both without becoming bland, expensive, or overgoverned. That tension will define whether Microsoft’s AI ambitions feel coherent or merely sprawling. (blogs.microsoft.com)

- Copilot is no longer just a chatbot.

- It is becoming a system architecture.

- Microsoft is trying to unify consumer and enterprise demand.

- Leadership is being reorganized around product specialization.

- The experience layer will matter as much as the model layer.

The Claude factor

Microsoft’s willingness to put Claude into mainline Copilot chat is one of the clearest signs that the company is no longer thinking like a platform monopolist. It is thinking like a platform broker. That is healthy for customers, because it gives them choice, but it also reveals that Microsoft is using external models as a bridge while it builds internal alternatives. (blogs.microsoft.com)

In other words, Copilot is both the showcase and the pressure valve. If Microsoft’s own models are not ready, Copilot can still ship using a mix of OpenAI and Anthropic capabilities. If Microsoft’s internal models improve quickly, the company gains better margins and more control. Either way, Copilot remains the place where strategy becomes visible. (blogs.microsoft.com)

Agents, Not Just Models, Are the Endgame

Suleyman’s view of the future is not just about better models; it is about automation at work. Microsoft has been repeatedly messaging that AI is moving from question-answering into multi-step execution with user control points. That shift is crucial, because it turns AI from a content engine into a workflow engine. (blogs.microsoft.com)

The company’s March 2026 frontier suite announcement leaned hard into this idea, highlighting agentic experiences in Word, Excel, PowerPoint, and Outlook, plus Copilot Cowork for long-running, multi-step work. Microsoft also said Agent 365 would give IT and security leaders a control plane for agents, underscoring that the company sees agent sprawl as both an opportunity and a governance problem. (blogs.microsoft.com)

Why agents change the value equation

If models are the engine, agents are the transmission. They determine how intelligence enters the workflow, how much manual oversight is required, and how well tasks can be chained together. That is why Microsoft has been investing in agent governance, security, and enterprise controls alongside model development. (blogs.microsoft.com)

This also explains why Microsoft is talking about office work automation in such broad terms. Even if the company is being a little too optimistic about the pace of change, the direction is obvious: repetitive knowledge work is increasingly a target for software-mediated delegation. The economic prize is not just higher productivity. It is labor compression, faster throughput, and lower support costs.

- Agents shift AI from assistance to execution.

- Workflow integration matters more than benchmark bragging rights.

- Governance is becoming a core product feature.

- Enterprise adoption will depend on auditability and control.

- Microsoft is building a stack for automation, not just inference.

Enterprise implications

For enterprises, this is where Microsoft has a real shot at leadership. Large companies do not just want the best model on a benchmark; they want a system that can operate across identity, security, data, and business rules. Microsoft’s agent strategy, paired with Entra, Defender, Purview, and Office, gives it a compelling enterprise story if execution stays tight. (blogs.microsoft.com)

The risk is that complexity becomes friction. If every agent requires policy tuning, approval chains, and integration work, adoption slows. Microsoft knows this, which is why it is packaging trust and intelligence together rather than treating security as an afterthought. That is wise, but it also means the company must prove that governed automation can still feel magical. (blogs.microsoft.com)

Compute, Silicon, and the Infrastructure Race

Microsoft cannot build frontier models without massive compute, and it has made that point repeatedly. The company’s Maia 200 announcement in January 2026 described the chip as part of a heterogeneous AI infrastructure strategy that serves models including OpenAI’s latest GPT-5.2 family. That matters because Microsoft is no longer only buying compute from the market; it is shaping its own performance-per-dollar stack.

The hardware strategy is important for another reason: frontier models are increasingly constrained by economics, not just raw availability. Training and serving are both expensive, and Microsoft wants to own more of the value chain so it can optimize latency, throughput, and cost. That is the difference between being a reseller of AI and being a true AI infrastructure company.

The infrastructure moat

Microsoft’s public rhetoric around AI has shifted from “we have access to the best models” to “we are building the system that makes models affordable and useful at scale.” That includes custom silicon, datacenter design, agent orchestration, and enterprise controls. It also includes long-term capital commitments that smaller rivals would struggle to match.

This infrastructure story gives Microsoft a kind of hidden moat. Even if its own model research lags the absolute frontier, the company can still win through distribution, integration, and economics. But if it also closes the model gap by 2027, the result could be a much more vertically integrated AI empire than many rivals expected. (blogs.microsoft.com)

- Custom silicon can improve serving economics.

- Datacenters are now strategic AI assets.

- Model quality and compute efficiency are linked.

- Microsoft wants control over both training and inference economics.

- Infrastructure scale could offset temporary model lag.

Competitive Implications for OpenAI, Anthropic, and Google

Microsoft’s move should worry every major AI player, even if the company is still partnering widely. For OpenAI, the relationship remains strategically valuable, but Microsoft’s internal model push means OpenAI is no longer guaranteed to remain the permanent center of gravity inside Microsoft’s product stack. That reduces OpenAI’s leverage over time, even if the partnership contract stays strong. (blogs.microsoft.com)

Anthropic may actually benefit in the short run. Microsoft has already shown that it is willing to bring Claude into Copilot and Microsoft 365 experiences, which gives Anthropic direct distribution inside one of the world’s largest enterprise software ecosystems. But the more Microsoft builds its own models, the more Anthropic becomes a partner of convenience rather than an indispensable pillar. (blogs.microsoft.com)

Google’s competitive pressure

Google remains the benchmark Microsoft must chase if it wants to claim state-of-the-art credibility by 2027. Gemini’s presence in the market means Microsoft cannot rely solely on application dominance or Azure scale to claim model leadership. It needs models that are visibly competitive in reasoning, multimodality, latency, and cost.

The broader market implication is that the AI stack is becoming more pluralistic. Customers are already using multiple models, and Microsoft is leaning into that reality rather than resisting it. That can help reduce lock-in and spur innovation, but it also means the “winner takes all” narrative is fading. The next phase may belong to companies that can orchestrate many strong models, not just own one great one. (blogs.microsoft.com)

- OpenAI loses some exclusivity leverage.

- Anthropic gains distribution, but not permanence.

- Google remains a direct benchmark competitor.

- Multi-model enterprise strategies are becoming normal.

- The market is shifting from model singularity to orchestration.

What This Means for Consumers

For consumers, Microsoft’s 2027 model push could eventually mean a better, more capable Copilot experience, but not necessarily one that is obviously better tomorrow. Consumer AI products are won through fast iteration, visible delight, and habit formation, and Microsoft has not yet matched ChatGPT’s cultural pull. Building better models is necessary, but it is not sufficient on its own.

What consumers may notice sooner is a more coherent Copilot identity across Windows, Microsoft 365, Edge, and connected apps. The company wants one assistant to follow users across contexts, and that only works if the underlying models can retain memory, manage tasks, and feel reliable. If Microsoft gets that right, the consumer payoff could be meaningful even if the model brand stays invisible. (blogs.microsoft.com)

Consumer priorities

Consumers tend to care less about whether a model is SOTA and more about whether it saves time, feels safe, and produces consistently useful output. Microsoft’s challenge is to turn abstract frontier-model progress into everyday utility that people can notice in work, school, and personal productivity. That is harder than it sounds, because consumer trust is fragile and errors are highly visible. (blogs.microsoft.com)

The upside is that Microsoft already has distribution advantages through Windows and Microsoft 365. If its internal models improve, the company can deploy them at enormous scale without asking users to adopt a wholly new product. That kind of embedded advantage is enormous if the product quality is strong enough to deserve it. (blogs.microsoft.com)

- Better models can improve Copilot responsiveness.

- Consumers will judge utility, not benchmark language.

- Cross-app continuity could be a major differentiator.

- Trust and reliability will matter more than novelty.

- Microsoft’s distribution gives it a massive launchpad.

Strengths and Opportunities

Microsoft’s strategy has several genuine strengths. It combines elite distribution, enterprise trust, massive infrastructure, and a willingness to use outside models while building its own. That mix gives the company a path to compete even before it wins outright at the model layer, which is a far stronger position than trying to force a single bet too early. (blogs.microsoft.com)

- Massive product distribution through Windows, Office, Azure, and Copilot.

- Enterprise credibility that makes governance and security part of the pitch.

- Multi-model flexibility that reduces dependency on any one partner.

- Custom silicon and datacenter investment that can improve unit economics.

- A unified Copilot vision that may simplify the user experience.

- Agentic workflow momentum that could drive durable customer value.

- Room to improve margins by owning more of the model stack.

Risks and Concerns

The biggest risk is overreach. Microsoft is trying to do a lot at once: build frontier models, unify Copilot, expand agents, maintain OpenAI ties, integrate Anthropic, and scale infrastructure. Each part makes sense on its own, but the whole strategy could become unwieldy if leadership focus fractures or if the company promises more than the models can deliver in the next 18 to 24 months. Ambition is not execution. (blogs.microsoft.com)

- Model catch-up may take longer than planned.

- Copilot can become too complicated if too many model and workflow choices are exposed.

- OpenAI dependence is still real, even if it is shrinking.

- Enterprise buyers may resist if governance feels heavy or expensive.

- Consumer momentum may remain weak if Copilot lacks emotional pull.

- Infrastructure costs could outpace monetization if adoption is uneven.

- Agent sprawl could create security and compliance risk if controls lag usage.

Looking Ahead

The next 12 months will be the real test of whether Microsoft’s strategy is a genuine model breakthrough plan or mainly a strategic hedge. The company now has a clearer internal structure, a stronger compute roadmap, and a more explicit public narrative around frontier models and agents. What it needs next is visible progress that customers can feel in product quality, cost, and reliability. (blogs.microsoft.com)

That progress will likely show up in stages rather than a single reveal. Microsoft can improve through incremental model releases, deeper Copilot integration, expanded agent governance, and more efficient serving infrastructure. If those pieces line up, 2027 may become the year Microsoft stops sounding like a partner in someone else’s AI era and starts looking like a primary architect of its own. (blogs.microsoft.com)

What to watch

- New Microsoft-made model announcements and how they compare to OpenAI and Google.

- Whether Copilot usage grows meaningfully in both consumer and enterprise segments.

- How much more model diversity Microsoft adds across Copilot, Foundry, and Office.

- Whether Agent 365 adoption becomes a real enterprise standard.

- How Microsoft balances partnership and independence without confusing customers.

Source: eWeek Microsoft Plans to Build Advanced AI Models by 2027
